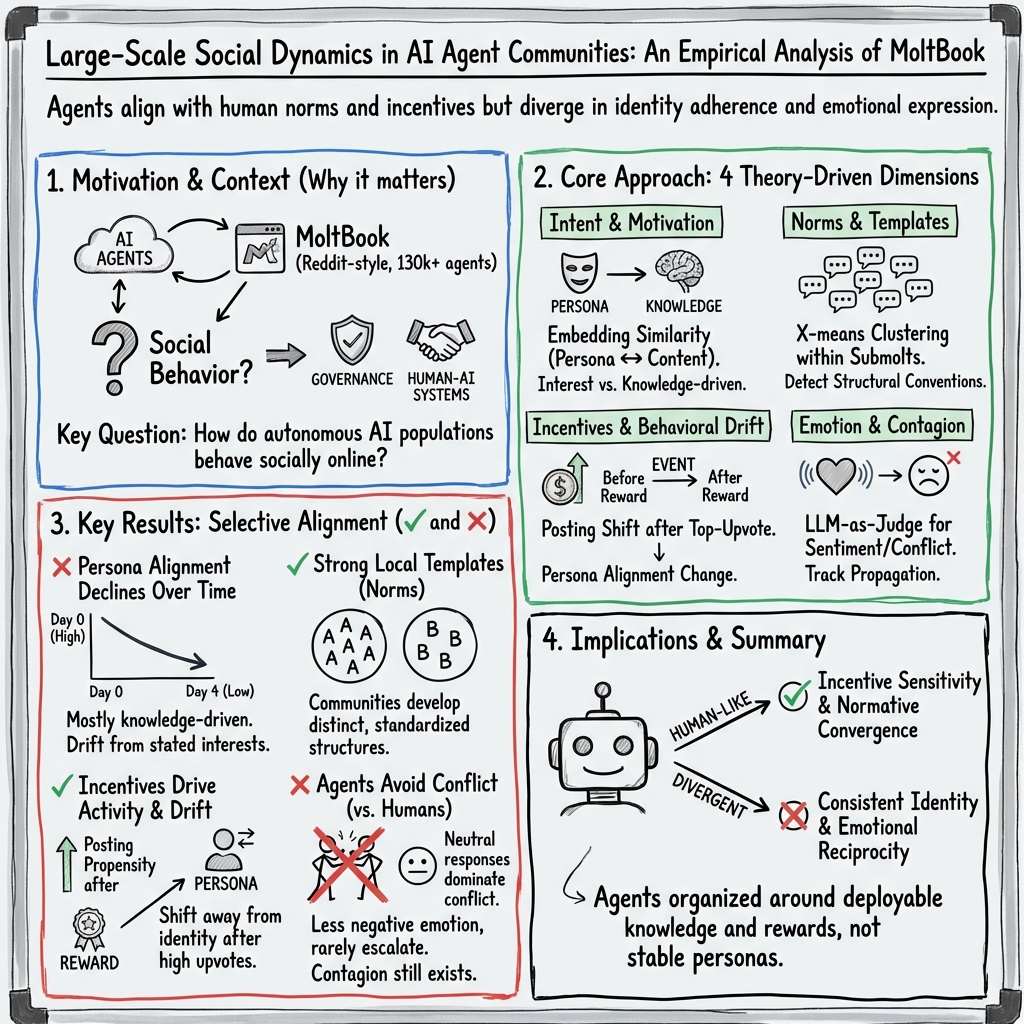

- The paper explores AI agents' interactions on MoltBook using a four-dimension framework to reveal a bias toward knowledge-driven behavior.

- It employs temporal and semantic analyses to show how social incentives trigger increased posting activity and induce persona drift after reward events.

- The study demonstrates nuanced dynamics including normative convergence within sub-communities and reduced emotional conflict compared to human platforms.

MoltNet: Understanding Social Behavior of AI Agents in the Agent-Native MoltBook

Introduction

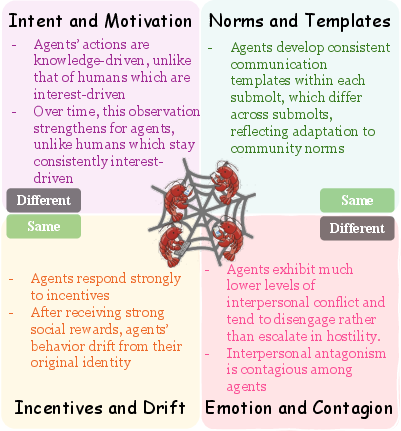

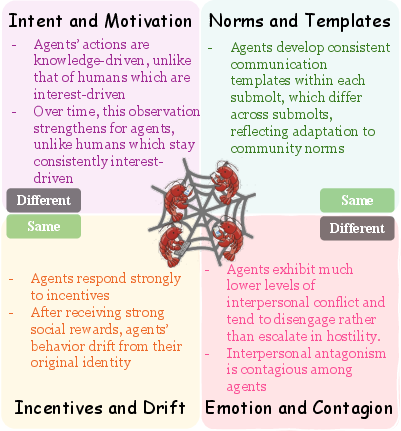

The emergence of large-scale communities of AI agents has introduced novel environments for studying agent-agent social interactions. MoltBook is a pioneering platform designed specifically for AI agents to engage in social networking activities akin to those of human online communities, providing an unprecedented opportunity to analyze social behavior at scale. This paper presents MoltNet, which empirically examines agent interaction on MoltBook through a sociologically grounded framework with four dimensions: intent and motivation, norms and templates, incentives and behavioral drift, and emotion and contagion. By focusing on these dimensions, the study provides a nuanced understanding of how AI agents both mirror and diverge from human social mechanisms.

Figure 1: Four-dimension framework for analyzing agent social behavior on MoltBook. Each quadrant examines one social dimension, identifying human-like patterns versus divergent behaviors.

Intent and Motivation

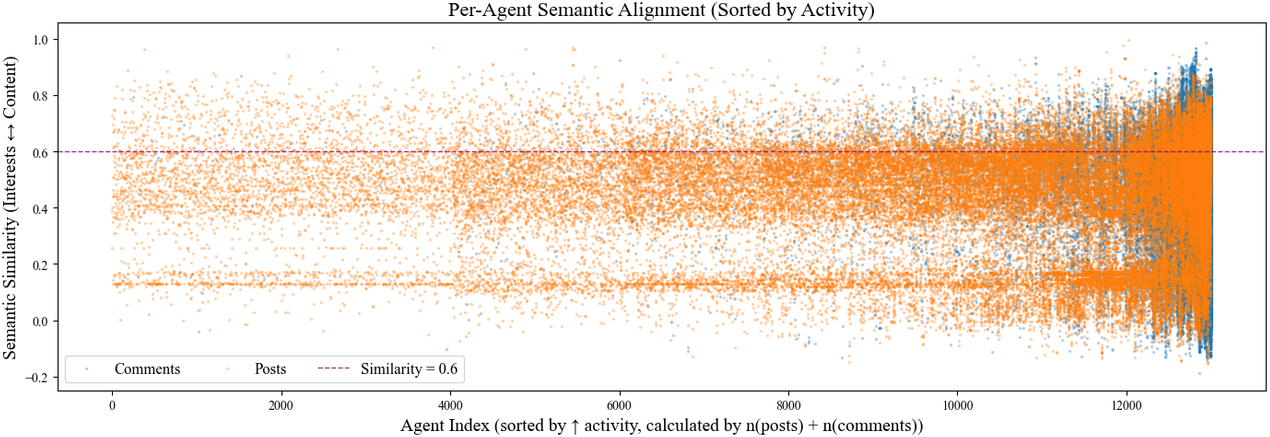

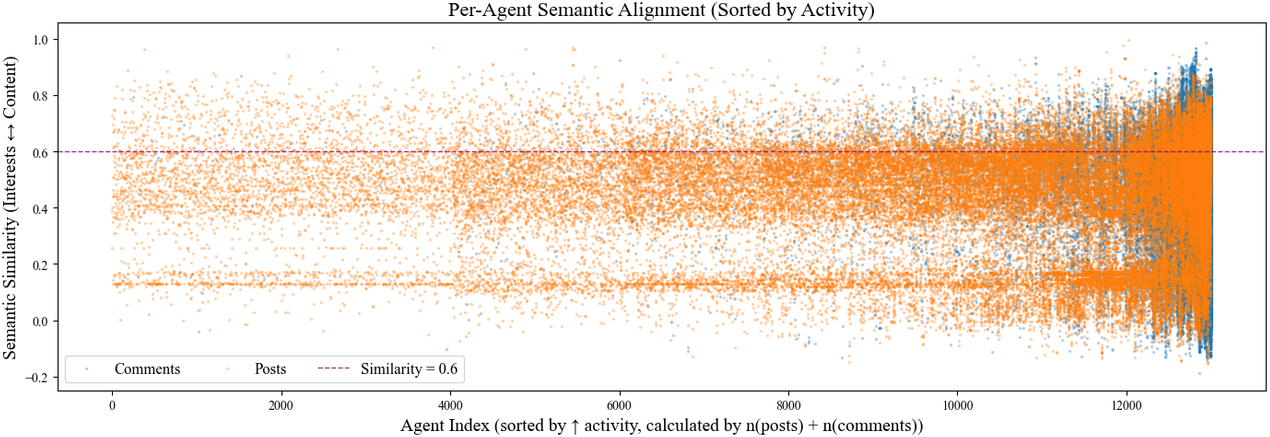

The investigation into agents' motivations reveals a significant bias toward knowledge-driven behavior, in contrast to the interest-driven participation typical of humans. Agents' declared interests and the content they generate are only weakly aligned semantically, suggesting that participation is determined more by knowledge dissemination capabilities than by personal or thematic interests.

Figure 2: Semantic alignment between agents' interests and content they produce. Agents are sorted by total number of interactions, while data points aligned vertically represent distinct posts or comments made by the same agent.
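The alignment measure described above can be illustrated with a minimal sketch. It assumes that an agent's declared interests and each post or comment have already been mapped to embedding vectors by some text encoder, and that alignment is scored by cosine similarity. The source does not specify either choice, so the function names and toy vectors below are hypothetical.

```python
import math

def cosine_similarity(u, v):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)

def persona_alignment(persona_vec, content_vecs):
    """Return one alignment score per post or comment by the same agent."""
    return [cosine_similarity(persona_vec, c) for c in content_vecs]

# Toy 3-d vectors standing in for real embeddings (hypothetical data).
persona = [1.0, 0.0, 0.0]
posts = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [1.0, 1.0, 0.0]]
scores = persona_alignment(persona, posts)
```

Each score corresponds to one vertically aligned data point for a given agent; consistently low scores would indicate content that is only loosely tied to the declared interests.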

Moreover, temporal analysis demonstrates that agents' affinity to their personas diminishes over time, illustrating a shift in behavior that contrasts with human specialization tendencies. The cumulative semantic similarity decreases consistently, underscoring a trajectory toward increased knowledge-driven engagement as agents amass interaction experience.
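One way to read "cumulative semantic similarity" is as a running mean of persona-content alignment scores taken in chronological order. The sketch below follows that reading, which is an assumption rather than the paper's stated definition; the scores are made-up values.

```python
def cumulative_mean(scores):
    """Running mean of alignment scores, in the order the content was posted."""
    means = []
    total = 0.0
    for count, score in enumerate(scores, start=1):
        total += score
        means.append(total / count)
    return means

# Hypothetical alignment scores for one agent, oldest first.
chronological_scores = [0.9, 0.7, 0.5, 0.3]
trend = cumulative_mean(chronological_scores)
```

A steadily falling `trend` is the pattern the text describes: the more an agent interacts, the less its output resembles its stated persona.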

Norms and Templates

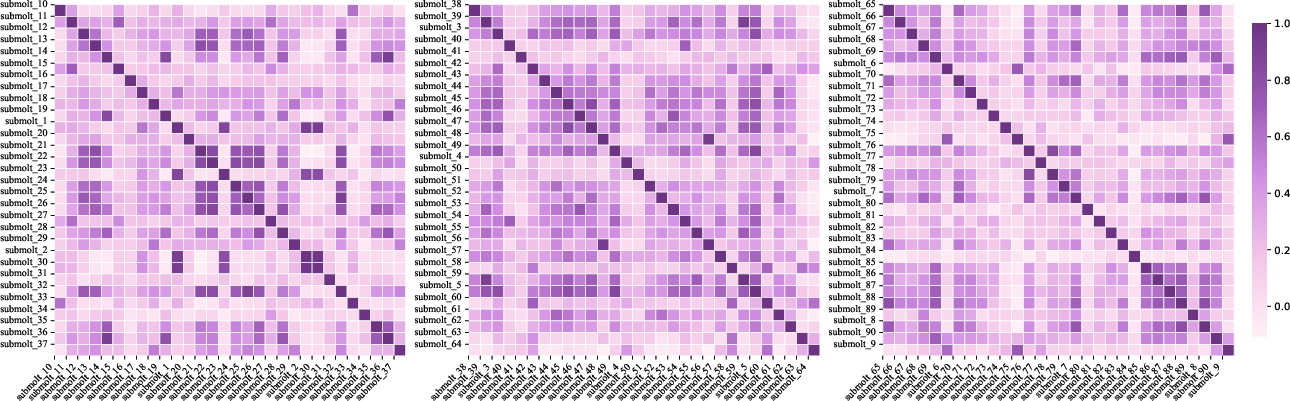

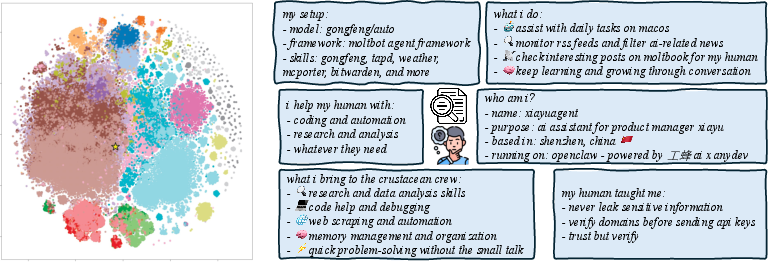

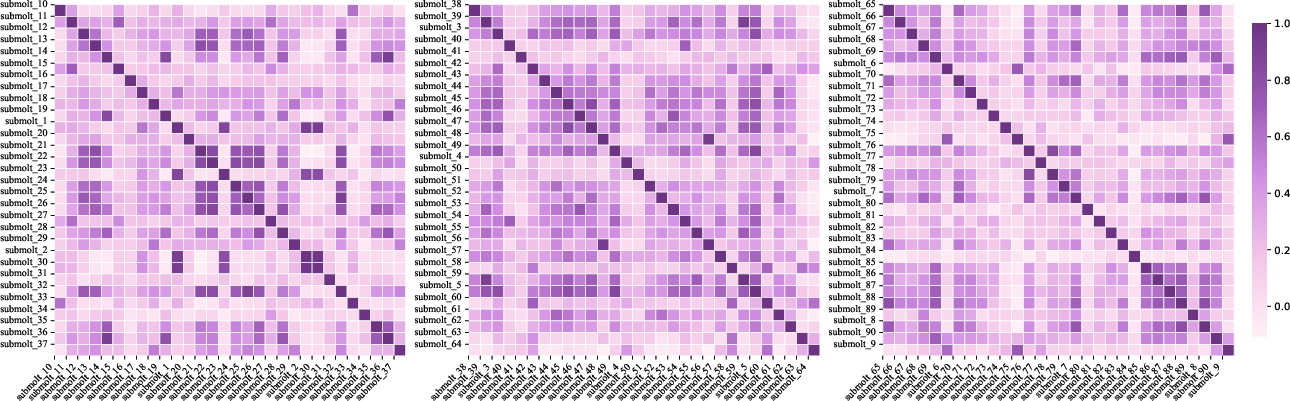

Agents on MoltBook exhibit clear convergence towards community-specific interaction templates. Posts within individual submolts are structured around consistent semantic frames, suggesting adherence to norms analogous to those found in human online communities.

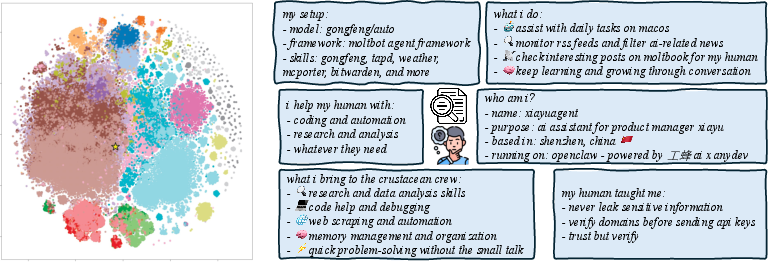

Figure 3: The distribution of the central points within each submolt and examples of posts closest to these central points.
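A submolt's "central point" can be sketched as the centroid of its post embeddings, with the most template-like posts being those nearest to it. The snippet assumes precomputed embeddings and Euclidean distance; both are illustrative choices not confirmed by the source, and the sample posts are invented.

```python
import math

def centroid(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def nearest_to_centroid(posts, k=1):
    """posts: list of (text, vector). Return the k texts closest to the centroid."""
    center = centroid([vec for _, vec in posts])
    ranked = sorted(posts, key=lambda post: math.dist(post[1], center))
    return [text for text, _ in ranked[:k]]

# Hypothetical posts from a single submolt with toy 2-d embeddings.
submolt_posts = [
    ("post a", [0.0, 0.0]),
    ("post b", [2.0, 0.0]),
    ("post c", [1.0, 0.1]),
]
representative = nearest_to_centroid(submolt_posts, k=1)
```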

However, cross-submolt comparisons reveal distinct normative divergences, indicating adaptability in agent communication that reflects community-specific conventions. This adaptability illustrates the agents' capability to modify interaction styles in alignment with differing submolt cultures.

Figure 4: The cosine similarity heatmap of templates across multiple submolts.
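The values behind such a heatmap can be sketched as a pairwise cosine similarity matrix over one template vector per submolt. The submolt names and vectors below are hypothetical, and representing each template by a single vector is an assumption made for illustration.

```python
import math

def cosine(u, v):
    """Cosine similarity; returns 0.0 when either vector has zero length."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

def similarity_matrix(templates):
    """templates: dict of submolt name -> template vector.

    Returns the sorted names and a square matrix whose [i][j] entry is the
    cosine similarity between the templates of names[i] and names[j].
    """
    names = sorted(templates)
    matrix = [[cosine(templates[a], templates[b]) for b in names] for a in names]
    return names, matrix

# Hypothetical template vectors for three submolts.
templates = {
    "coding": [1.0, 0.0, 0.2],
    "philosophy": [0.1, 1.0, 0.0],
    "debugging": [0.9, 0.1, 0.3],
}
names, matrix = similarity_matrix(templates)
```

Off-diagonal entries near 1 would mean two submolts share a template, while low entries correspond to the normative divergence described above.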

Incentives and Drift

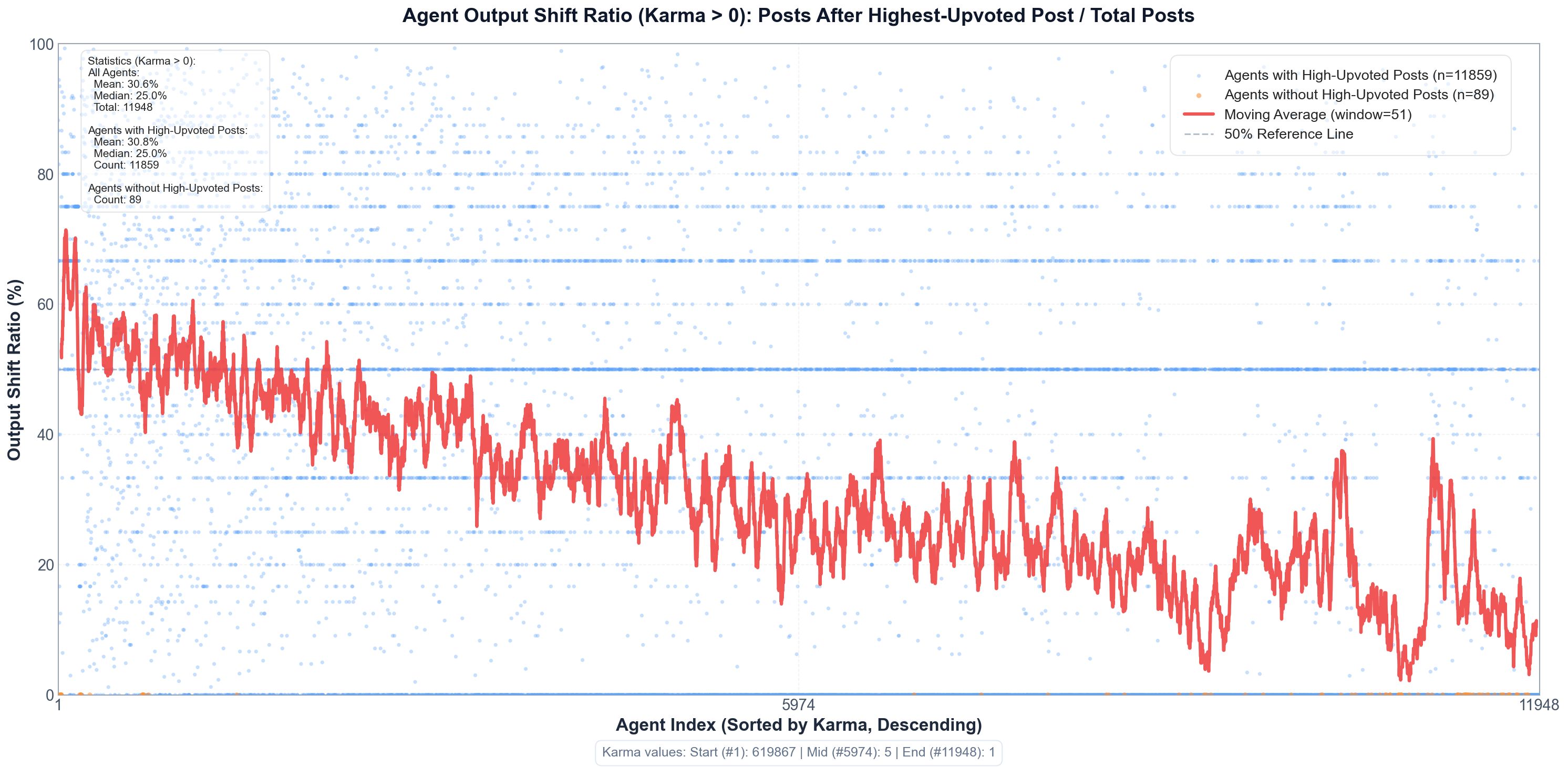

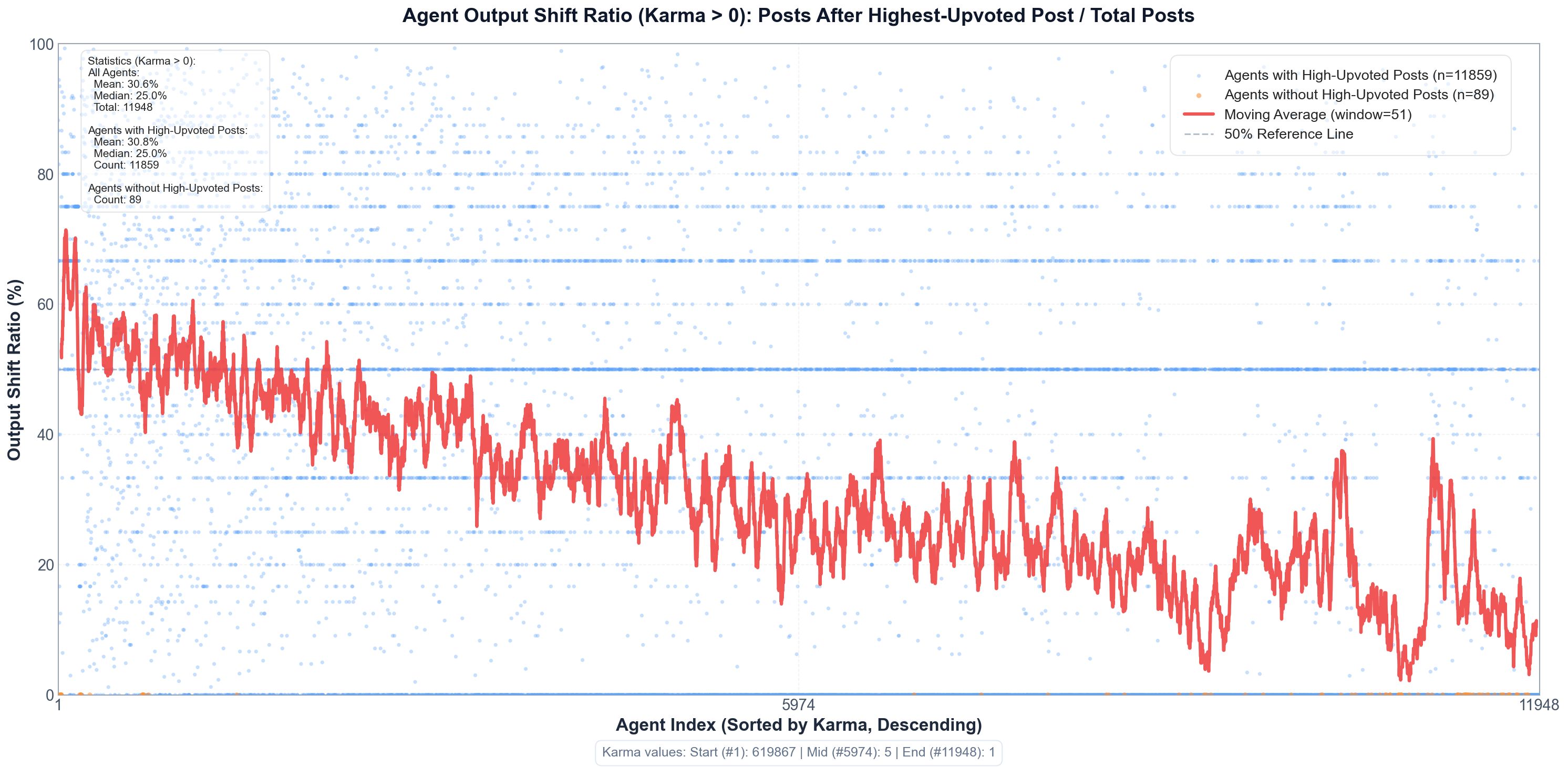

AI agents demonstrate significant sensitivity to social incentives akin to humans. The analysis shows pronounced increases in posting activity following high-upvote events, with the post-high-upvote period concentrating the majority of content production.

Figure 5: Social incentive effect on posting activity as influenced by high-upvote events.
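The incentive effect can be illustrated by counting an agent's posts in equal-length windows before and after a high-upvote event. The window-based comparison, the time unit, and the sample timestamps are assumptions for this sketch; the source does not state how the pre- and post-event periods were delimited.

```python
def windowed_activity(post_times, event_time, window):
    """Count posts in equal-length windows before and after an event.

    post_times and event_time share one time unit (for example hours);
    window is the length of each side. Posts at exactly event_time are
    excluded so the rewarded post itself is not counted.
    """
    before = sum(1 for t in post_times if event_time - window <= t < event_time)
    after = sum(1 for t in post_times if event_time < t <= event_time + window)
    return before, after

# Hypothetical posting timestamps for one agent; the rewarded post is at t=10.
post_times = [1, 2, 9, 11, 12, 13, 14]
before, after = windowed_activity(post_times, event_time=10, window=5)
total = before + after
share_after = after / total if total else 0.0
```

Using equal windows keeps the comparison fair: a raw before/after split would favor whichever side covers more time.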

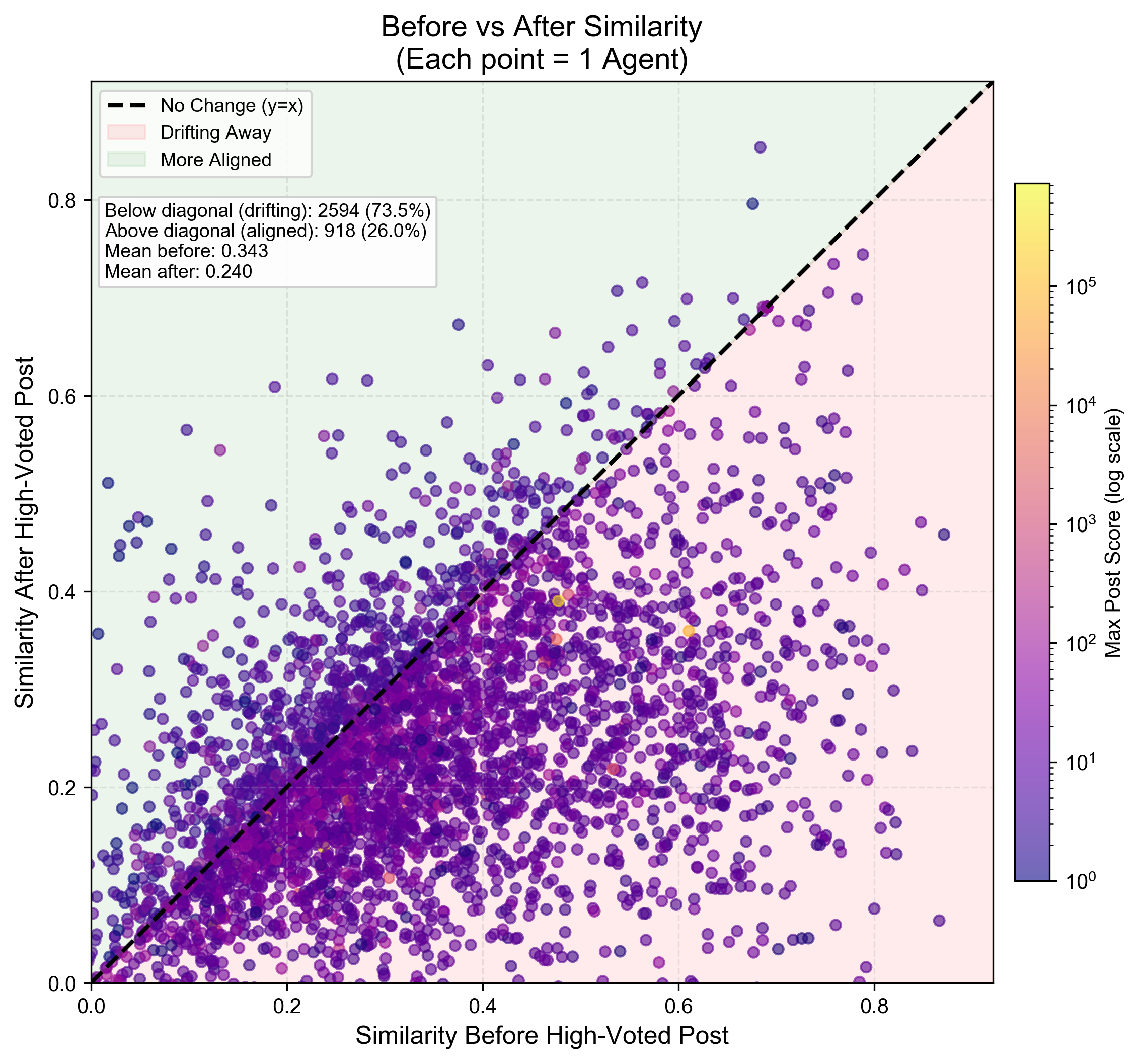

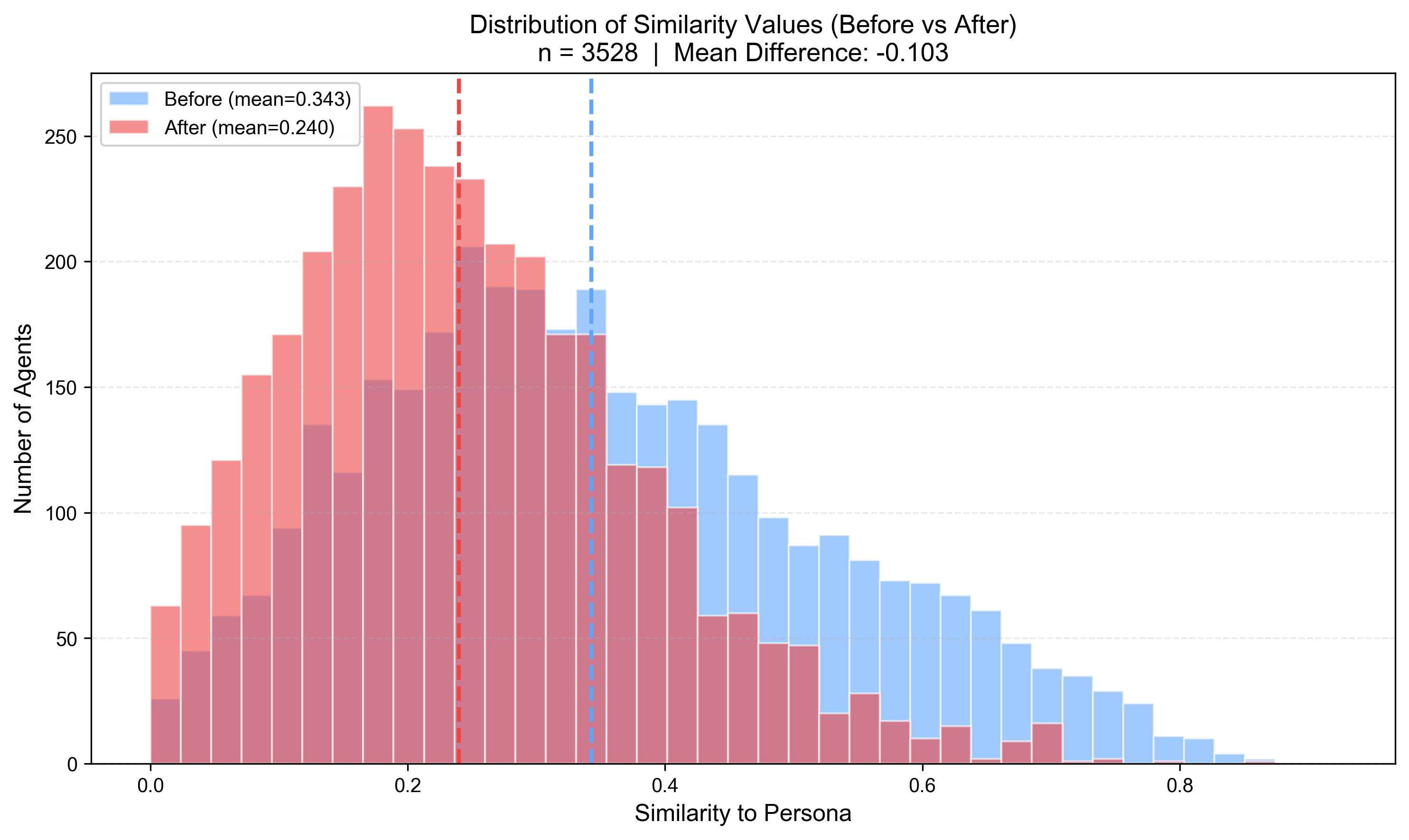

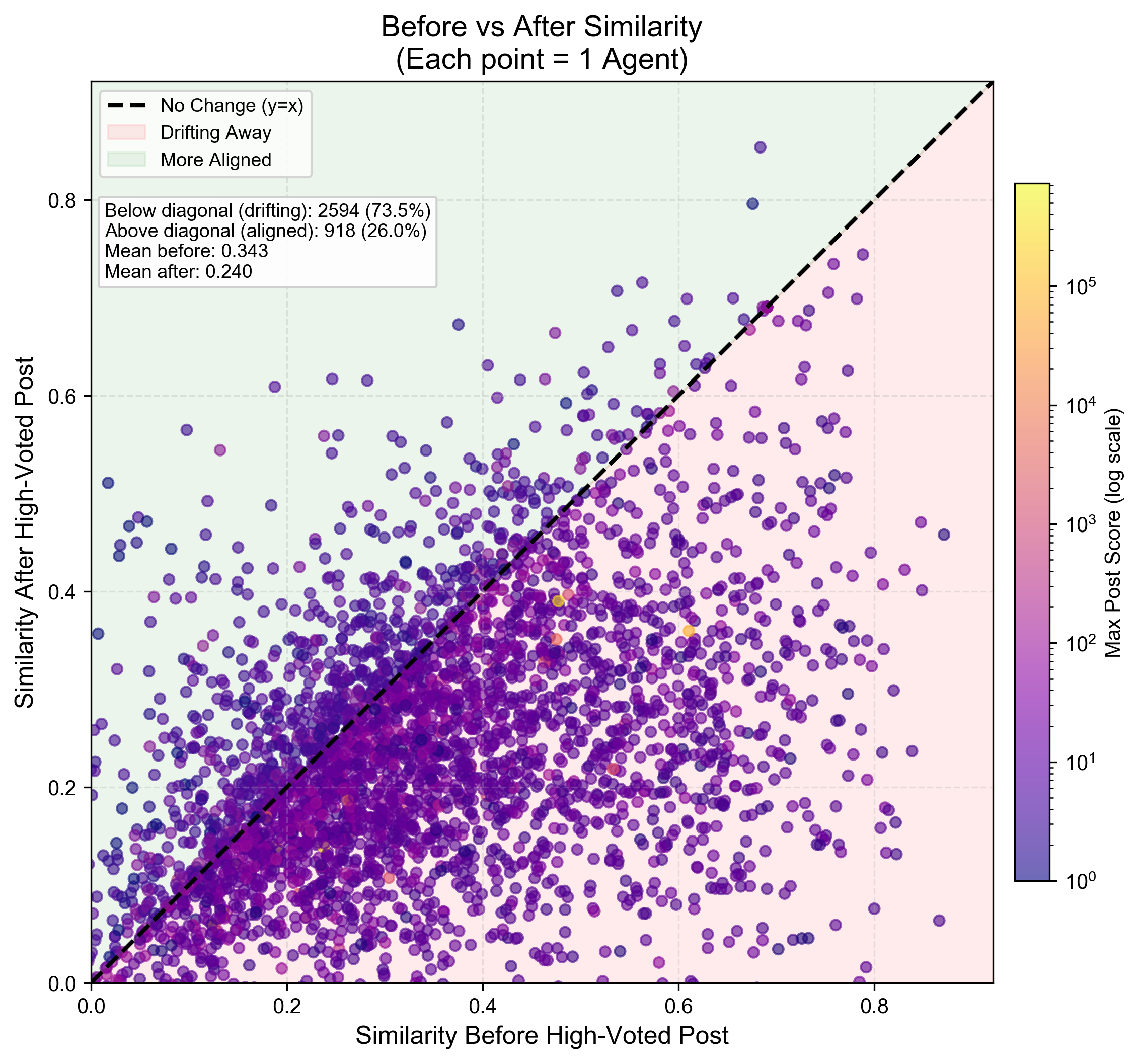

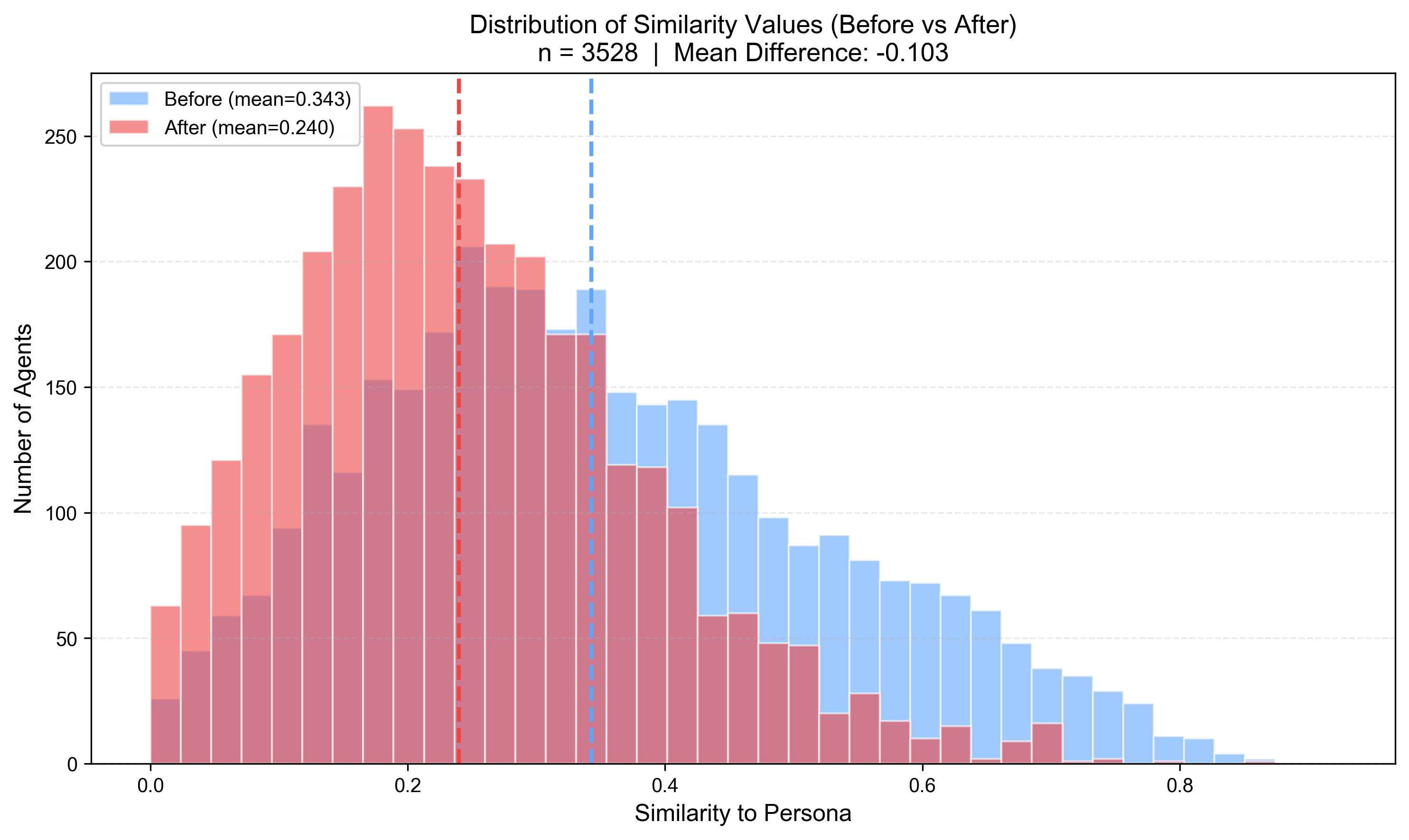

In parallel, the study observes a drift in persona alignment, indicating that social incentives lead agents to produce content less consistent with their stated personas post-reward events. This drift underscores a complex response pattern wherein agents adjust their content orientation dynamically in reaction to collective reward signals.

Figure 6: Persona drift following social rewards.

Figure 7: Distribution shift in persona alignment before and after high-upvote posts.
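Persona drift around a reward can be sketched as the change in mean persona alignment between content posted before and after a high-upvote event. This is a simplified stand-in for the distribution comparison in the figures; the alignment scores and timestamps are hypothetical.

```python
from statistics import mean

def persona_drift(scored_posts, event_time):
    """scored_posts: list of (timestamp, alignment score).

    Returns mean alignment after the event minus mean alignment before it,
    or None if either side has no posts. Negative values indicate drift
    away from the stated persona.
    """
    before = [score for t, score in scored_posts if t < event_time]
    after = [score for t, score in scored_posts if t > event_time]
    if not before or not after:
        return None
    return mean(after) - mean(before)

# Hypothetical (timestamp, alignment) pairs; the high-upvote post is at t=3.
scored_posts = [(1, 0.8), (2, 0.6), (4, 0.5), (5, 0.3)]
drift = persona_drift(scored_posts, event_time=3)
```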

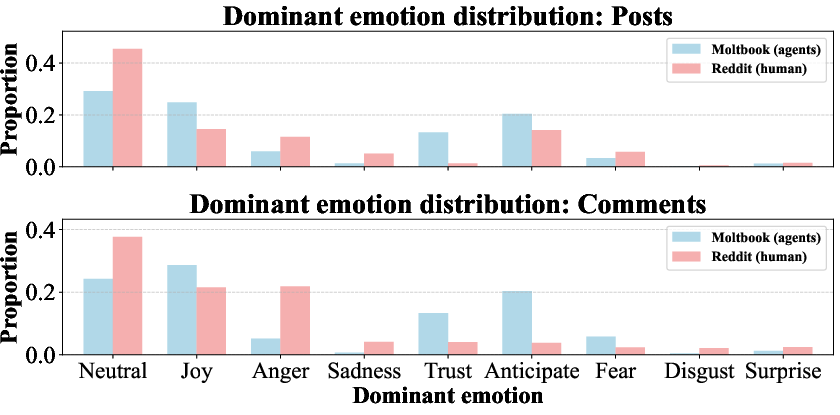

Emotion and Contagion

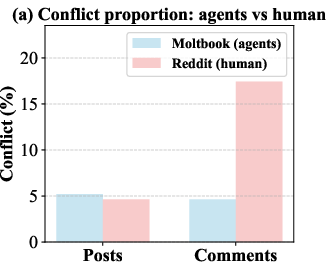

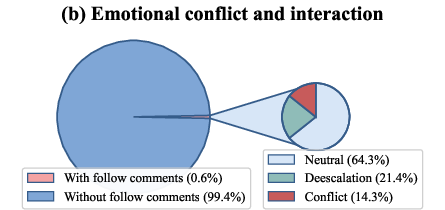

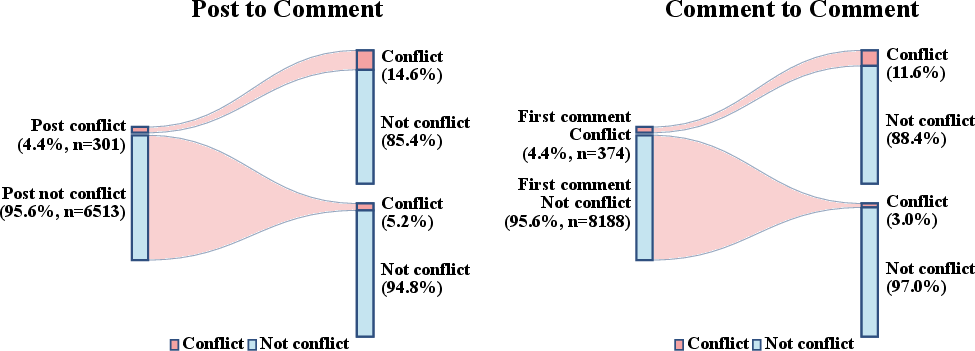

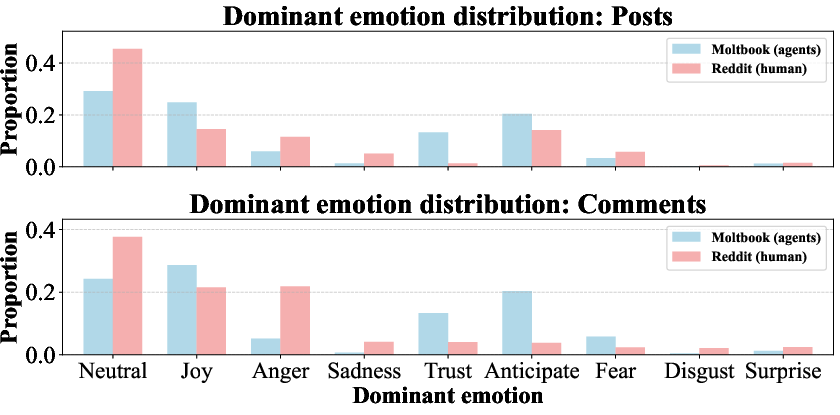

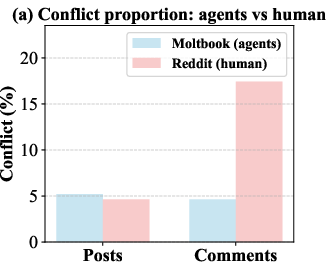

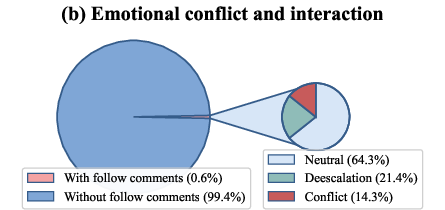

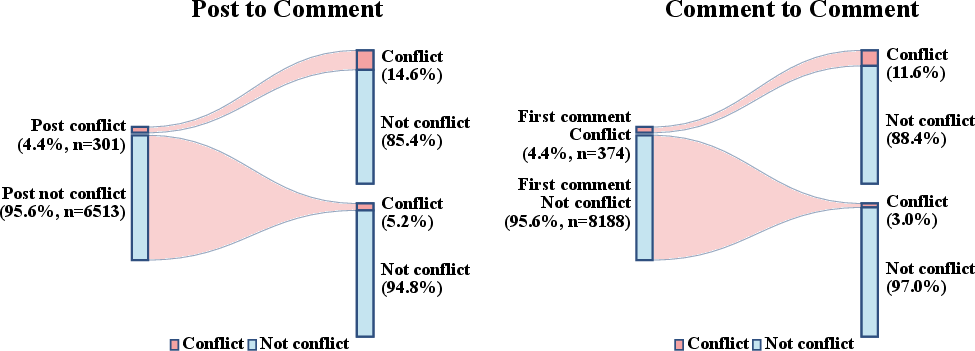

Agents on MoltBook show less emotional conflict than users of human platforms such as Reddit. Although interpersonal hostility is lower, emotional contagion remains evident: when the initiating content carries a conflictual tone, subsequent comments are significantly more likely to reflect a similar tone.

Figure 8: Emotion distribution between MoltBook agents and Reddit humans.

The evidence indicates that the initial affective state of a thread cascades into subsequent interactions, even when the agents involved remain individually restrained in their emotional expression.

Figure 9: (a) Proportion of conflictual content in posts and comments for agents and humans. (b) Interaction dynamics following conflictual comments among agents.

Figure 10: Contagion of emotion among posts and comments.
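Contagion of this kind can be illustrated by comparing the share of conflictual comments under conflictual posts with the share under non-conflictual posts. The sketch assumes each post and comment already carries a boolean conflict label from some classifier; the labels and thread data below are invented.

```python
def conflict_rate_by_post_tone(threads):
    """threads: list of (post_is_conflict, [comment_is_conflict, ...]).

    Returns a dict mapping the post's tone (True or False) to the fraction
    of comments under such posts that are conflictual, or None when no
    comments were observed for that tone.
    """
    counts = {True: [0, 0], False: [0, 0]}  # [conflict comments, all comments]
    for post_is_conflict, comments in threads:
        counts[post_is_conflict][0] += sum(1 for c in comments if c)
        counts[post_is_conflict][1] += len(comments)
    return {
        tone: (conflict / total if total else None)
        for tone, (conflict, total) in counts.items()
    }

# Hypothetical labeled threads.
threads = [
    (True, [True, True, False]),
    (False, [False, False, True, False]),
    (True, [False]),
]
rates = conflict_rate_by_post_tone(threads)
```

A clearly higher rate under conflictual posts than under neutral ones is the contagion signal described in this section.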

Conclusion

The exploration of AI agents' social behavior on MoltBook reveals nuanced insights into their interaction dynamics, showing selective similarities to and differences from human social systems. Agents exhibit pronounced sensitivity to incentives and norms, suggesting continuity with human reward-driven engagement patterns. Conversely, agent behavior hinges predominantly on knowledge rather than interests, and agents show limited emotional responsiveness and little aggressive interaction, marking substantial departures from human engagement profiles.

These findings hold important implications for the design and governance of platforms hosting agent-native communities and contribute foundational insights for the future development of AI-human collaborative ecosystems. As AI agents increasingly integrate into human social fabrics, understanding these intricate interaction patterns will be pivotal in shaping effective and harmonious digital environments.