- The paper presents a detailed mechanistic explanation of how two-layer neural networks develop sparse, single-frequency Fourier features for modular addition.

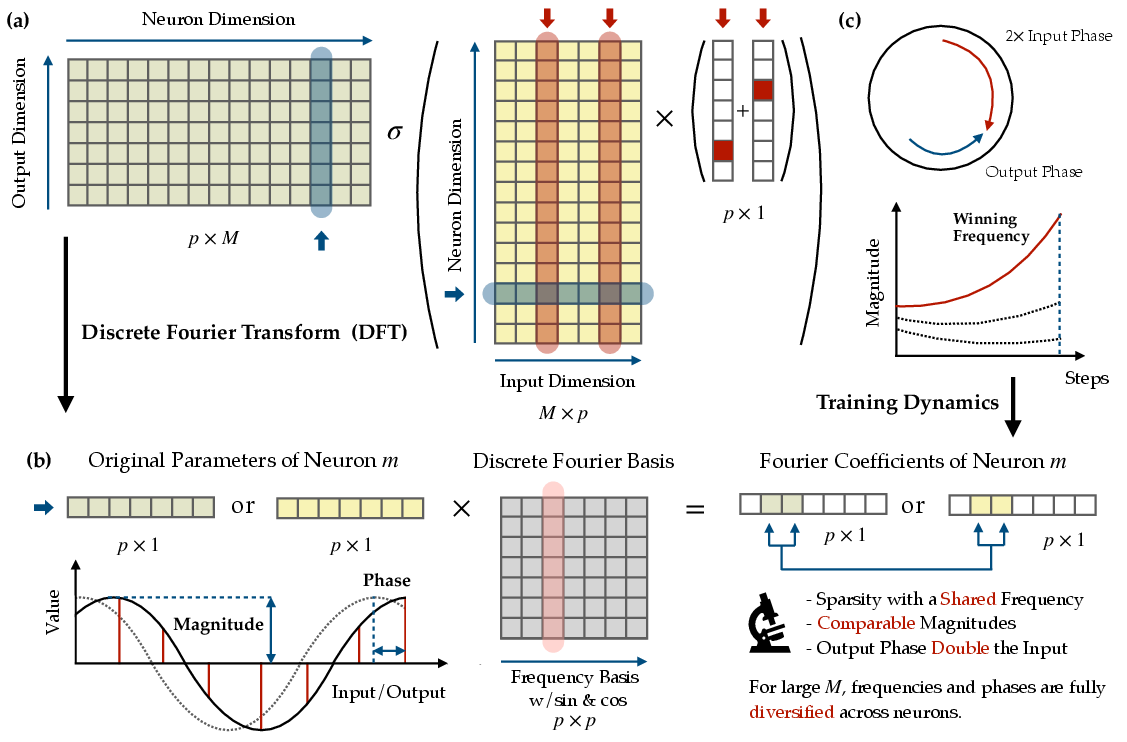

- It uses the Discrete Fourier Transform (DFT) to reveal emergent phase alignment and a lottery ticket mechanism that drives feature competition and majority voting.

- The study demonstrates grokking as a three-stage process that prunes noisy features and enables robust neurosymbolic computation.

Mechanistic Interpretation and Training Dynamics of Modular Addition in Two-Layer Neural Networks

Analytical Framework and Main Empirical Findings

The study presents a rigorous mechanistic account of how two-layer neural networks learn to solve the modular addition task (x, y) ↦ (x + y) mod p. The principal contribution is an end-to-end quantitative characterization of the emergent feature structure, the parameter dynamics, and the conditions that support generalization phenomena such as grokking.

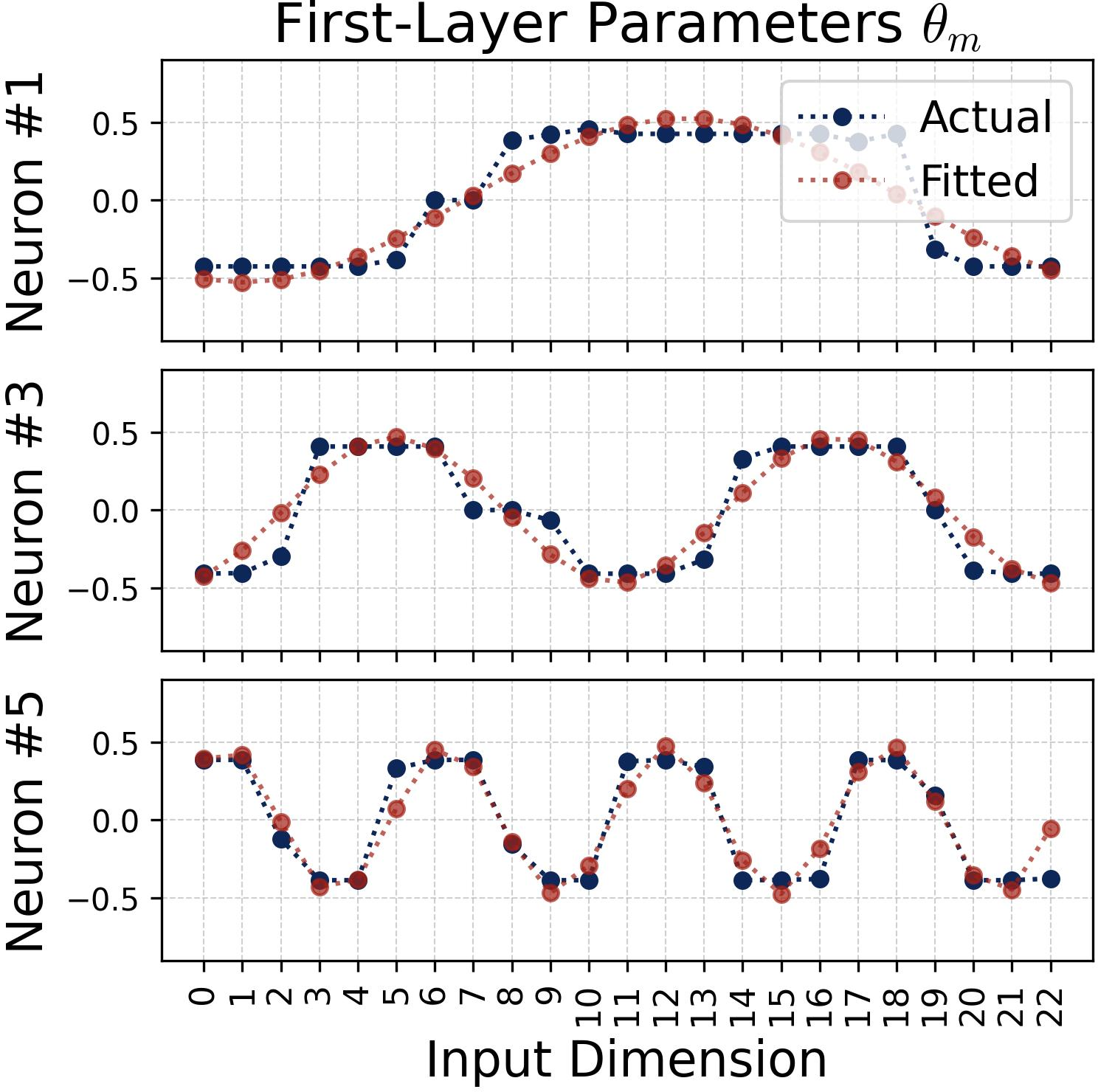

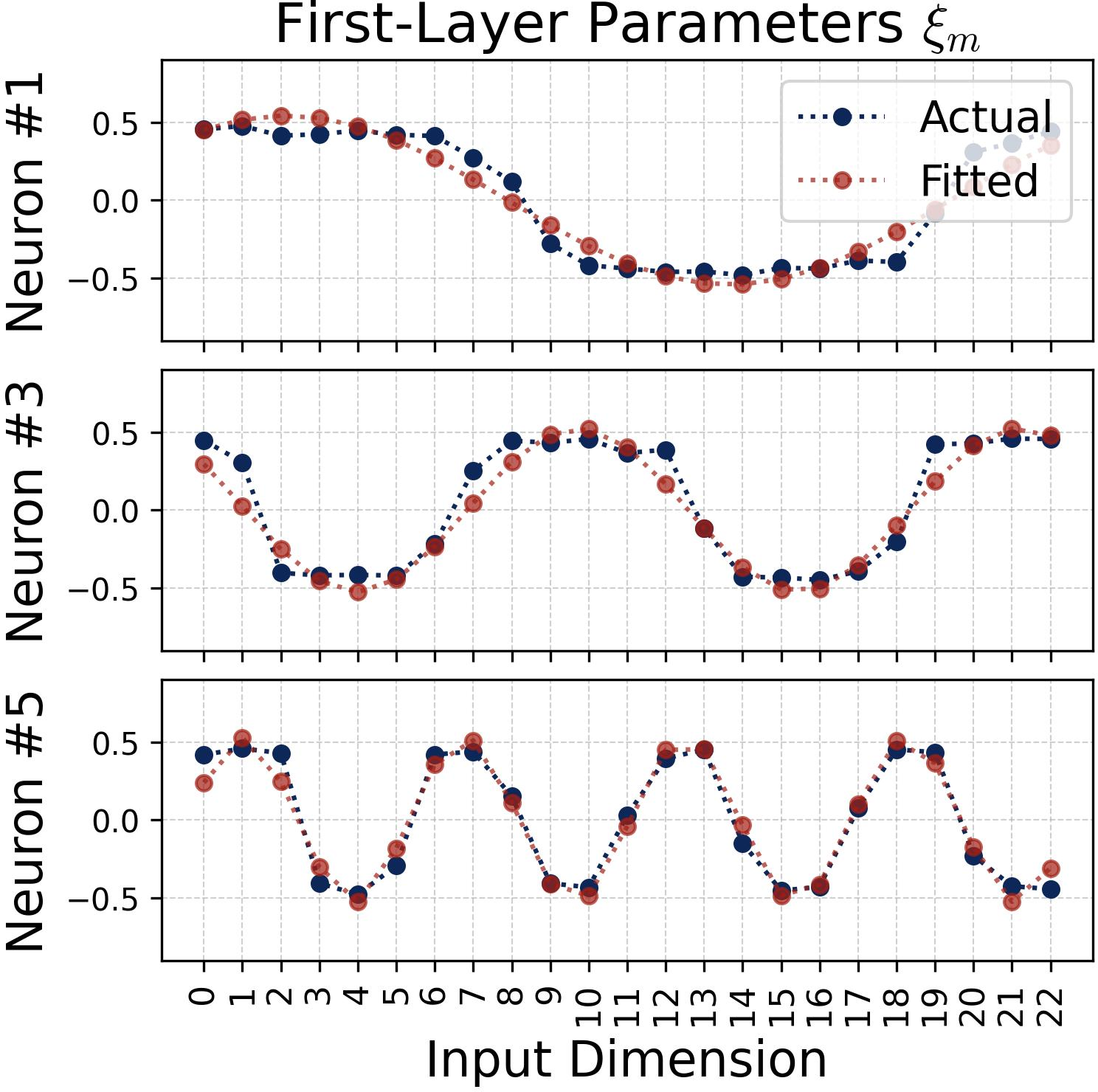

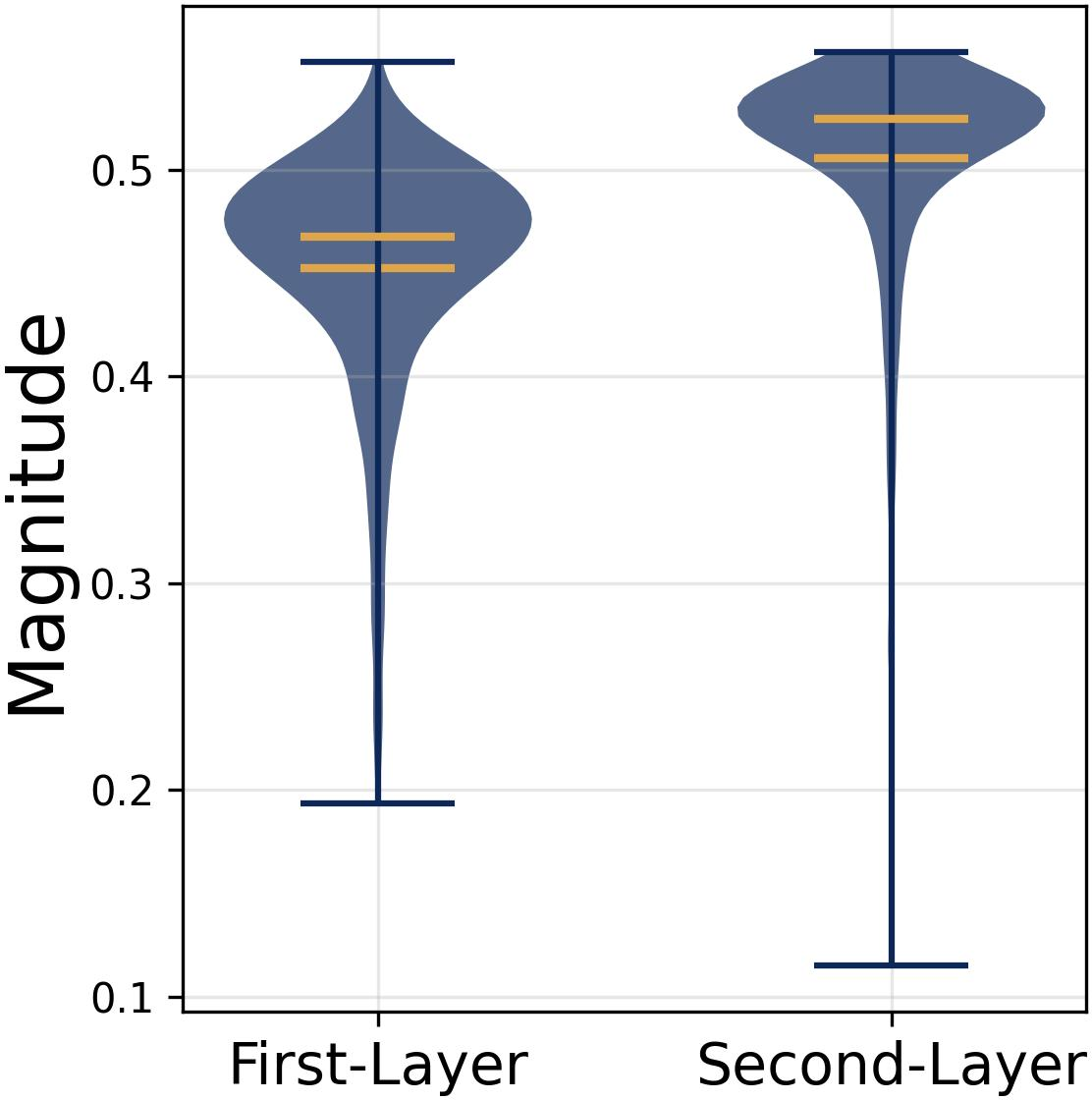

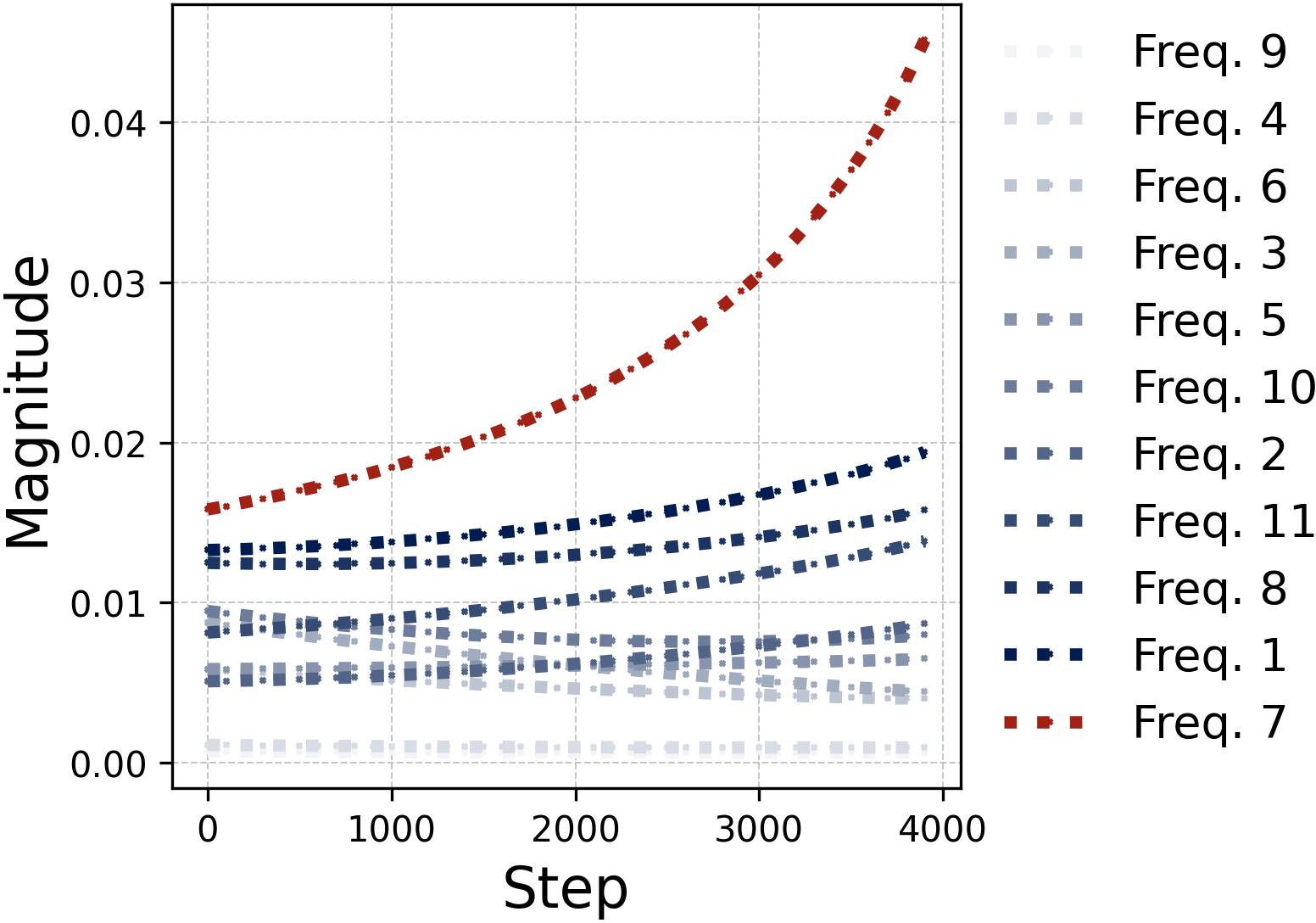

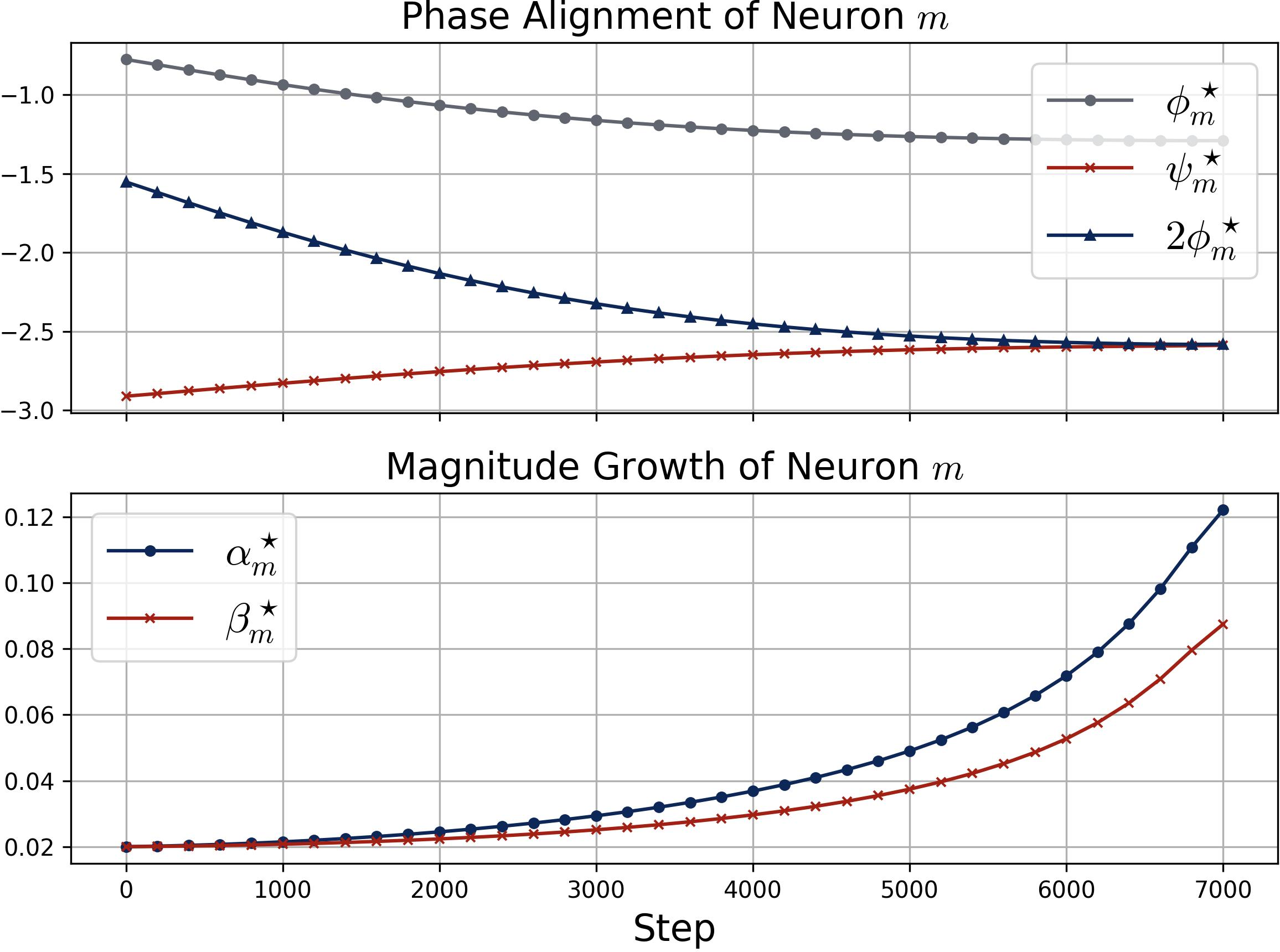

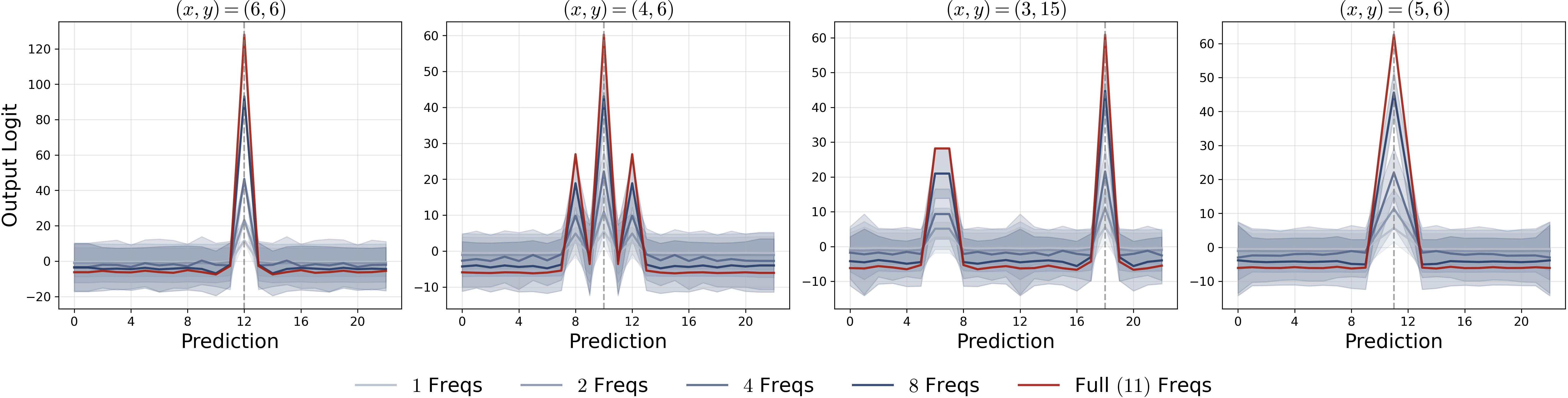

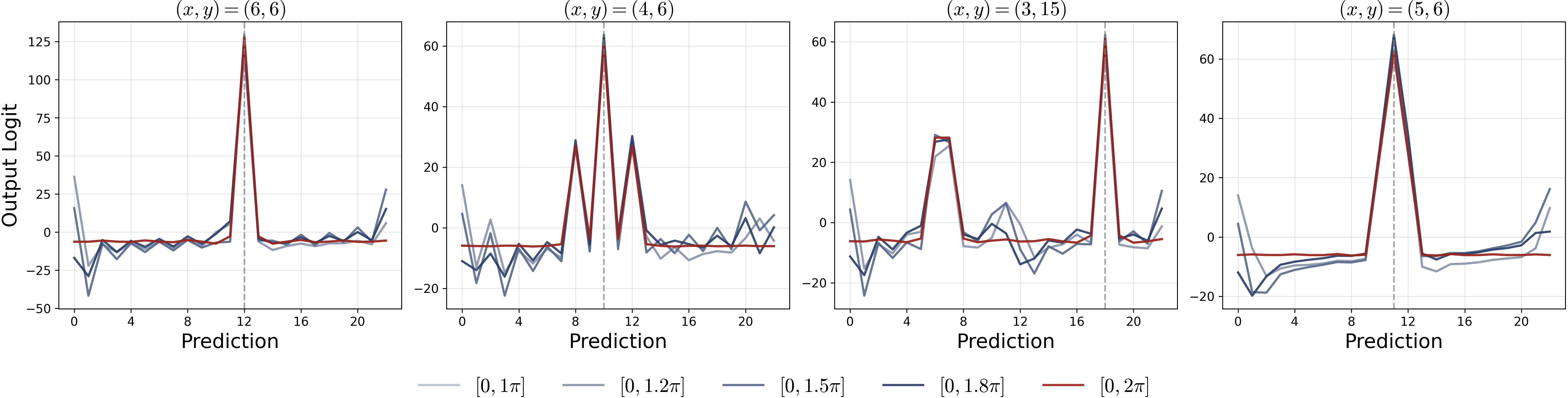

The empirical analysis uses the Discrete Fourier Transform (DFT) to expose the structure of the learned weights and to track training dynamics in feature space. In the overparameterized regime and under random initialization, each neuron develops a single-frequency Fourier component with tightly aligned phases across layers. This pattern arises consistently regardless of the random seed, the modulus p, or the choice of activation.

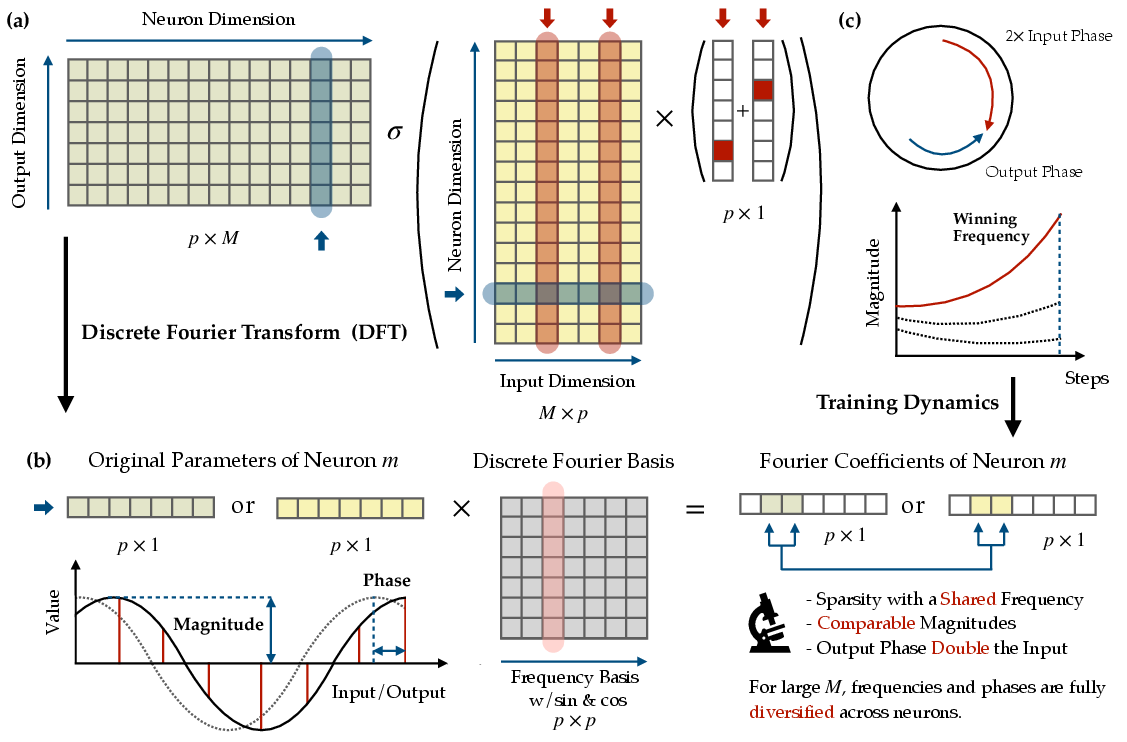

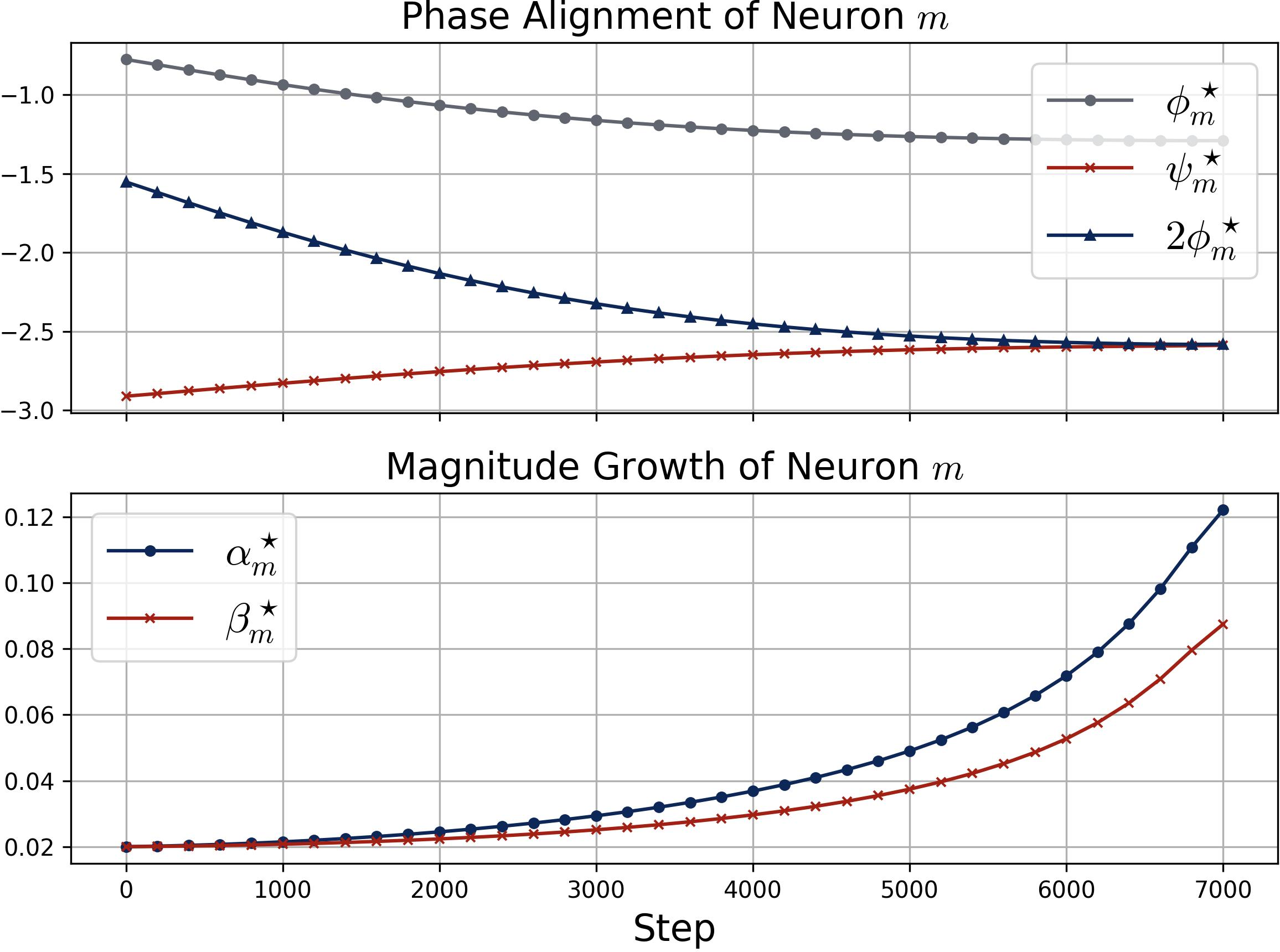

Figure 1: DFT-based analysis identifies the emergence of consistent single-frequency Fourier features, phase alignment, and the lottery ticket mechanism during training.
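
The DFT diagnostic behind this analysis can be sketched on synthetic data. The snippet below is a minimal illustration, not the authors' code. It assumes that a neuron's input weights over the p residues form a real vector of length p, builds one such vector as a noisy cosine (p = 23, frequency 5, amplitude 1.5 and phase 0.7 are arbitrary choices), and recovers the dominant frequency, magnitude and phase with NumPy's real FFT. The helper name `dominant_frequency` is hypothetical.

```python
import numpy as np

def dominant_frequency(weights):
    """Return (frequency index, magnitude, phase) of the largest non-DC DFT bin."""
    p = len(weights)
    spectrum = np.fft.rfft(weights)                 # bins 0 .. p // 2 for real input
    k = 1 + int(np.argmax(np.abs(spectrum[1:])))    # skip the DC bin
    magnitude = 2.0 * float(np.abs(spectrum[k])) / p  # amplitude of the cosine at bin k
    phase = float(np.angle(spectrum[k]))            # phase of that cosine
    return k, magnitude, phase

# Synthetic stand-in for one neuron's weights (assumed values, for illustration only).
p = 23
j = np.arange(p)
rng = np.random.default_rng(0)
theta = 1.5 * np.cos(2 * np.pi * 5 * j / p + 0.7) + 0.01 * rng.standard_normal(p)

k, amplitude, phi = dominant_frequency(theta)
print(k, round(amplitude, 2), round(phi, 2))
```

Applied row by row to a trained weight matrix, the same function would give the per-neuron frequency, magnitude and phase that the heatmaps and phase plots summarize.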

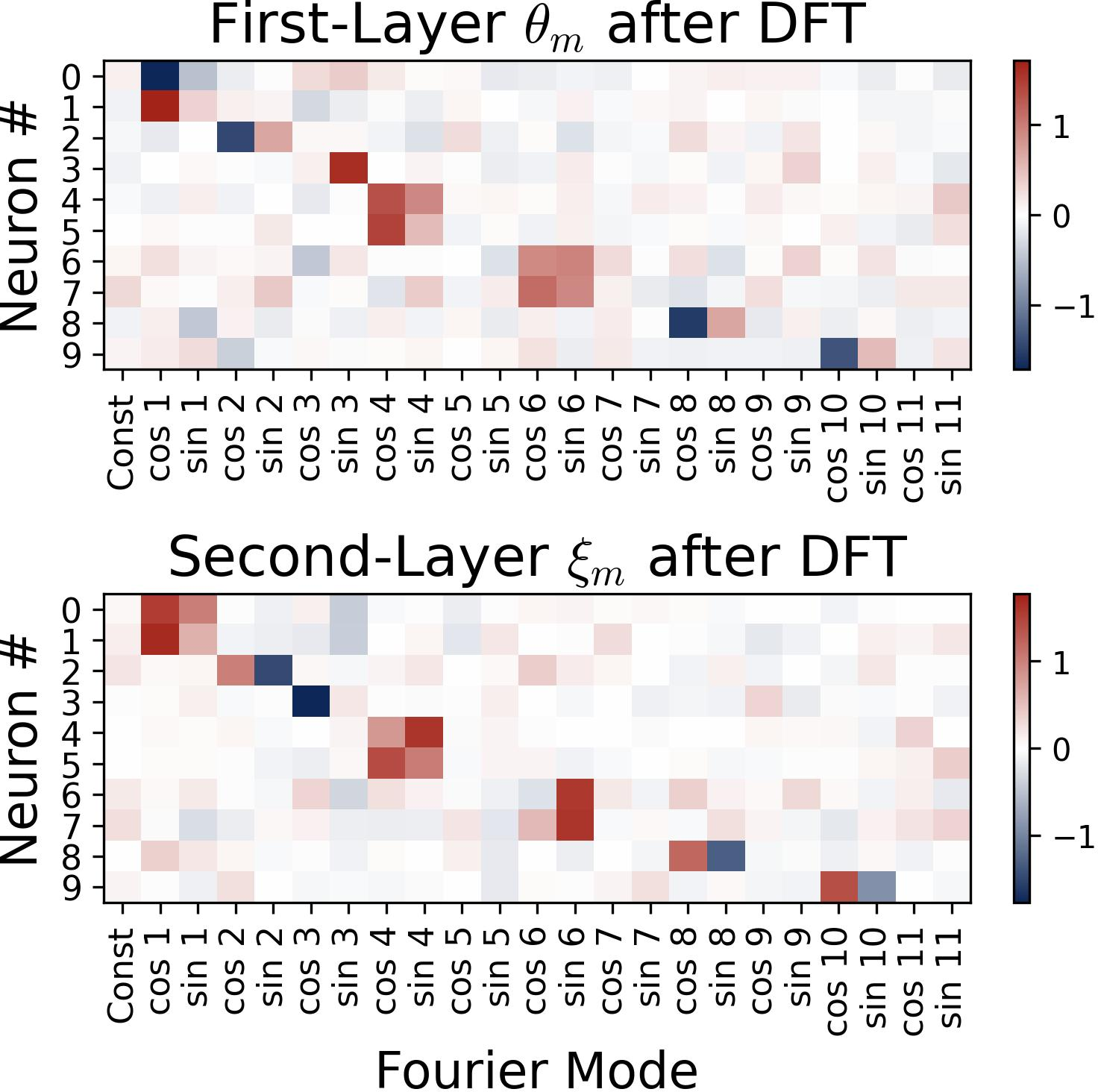

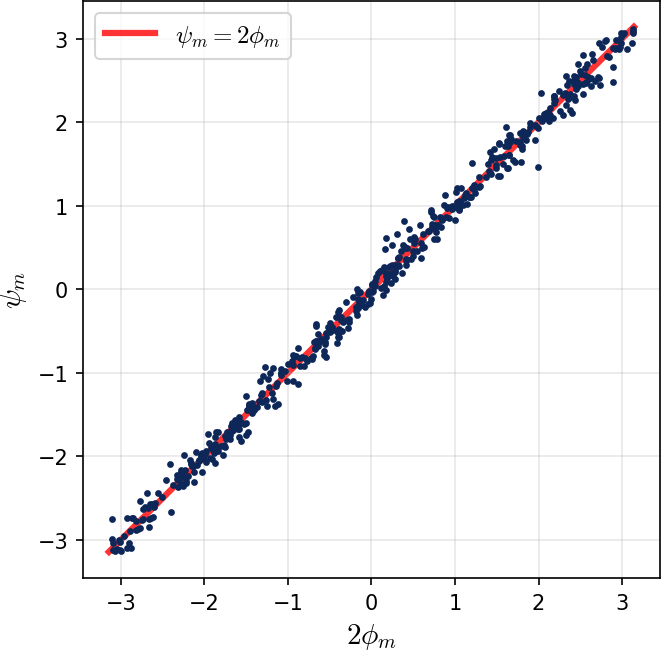

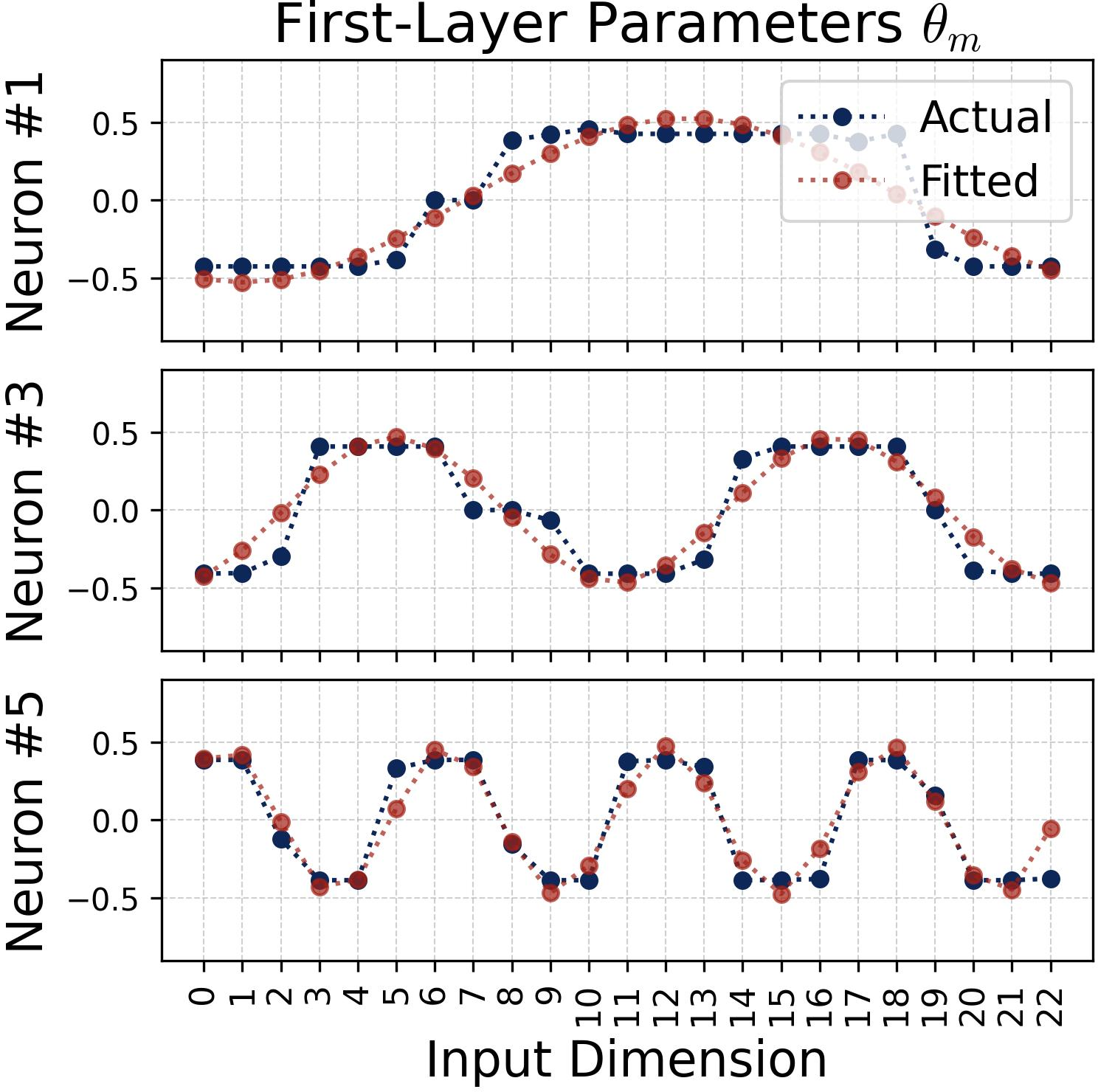

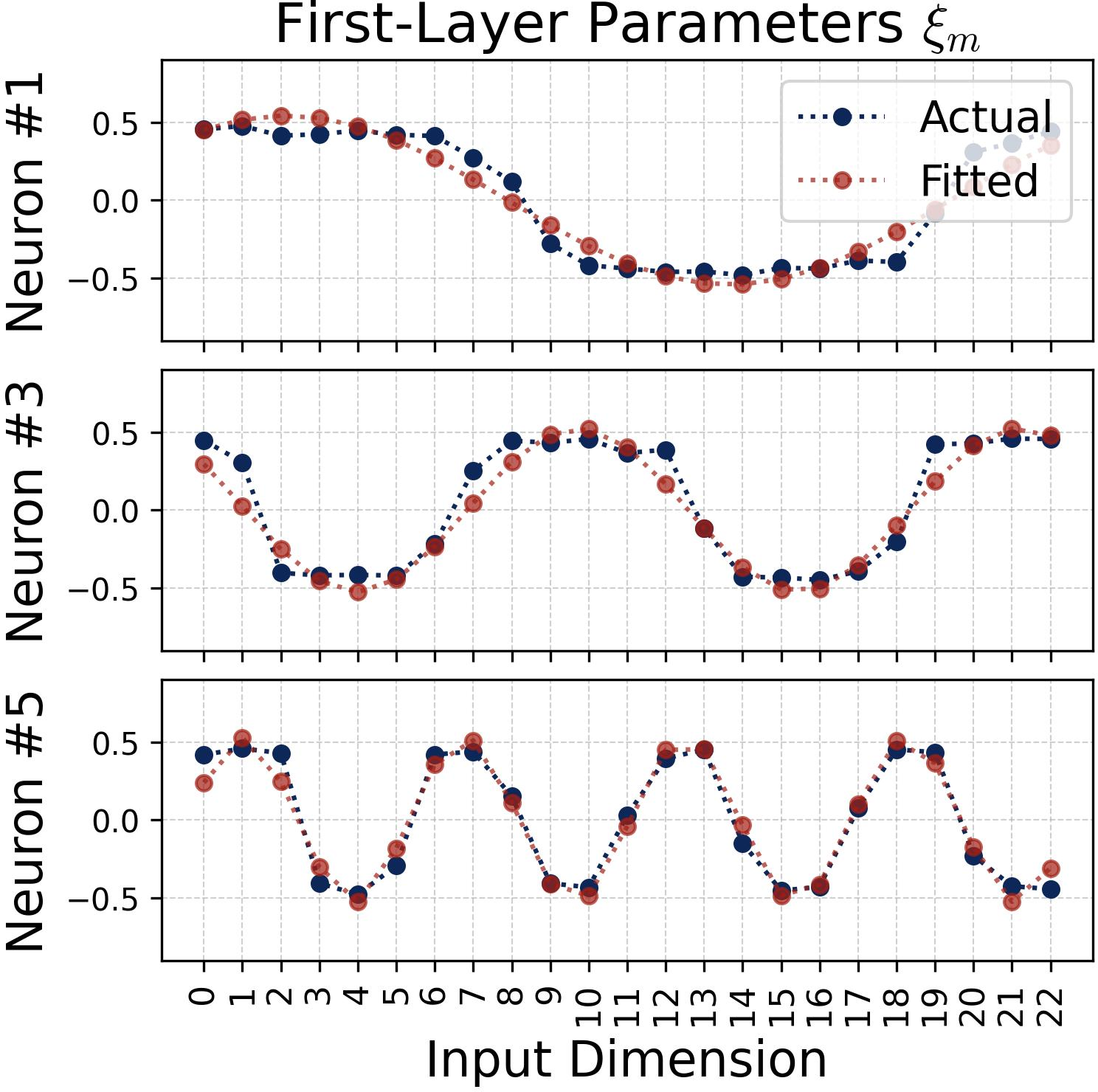

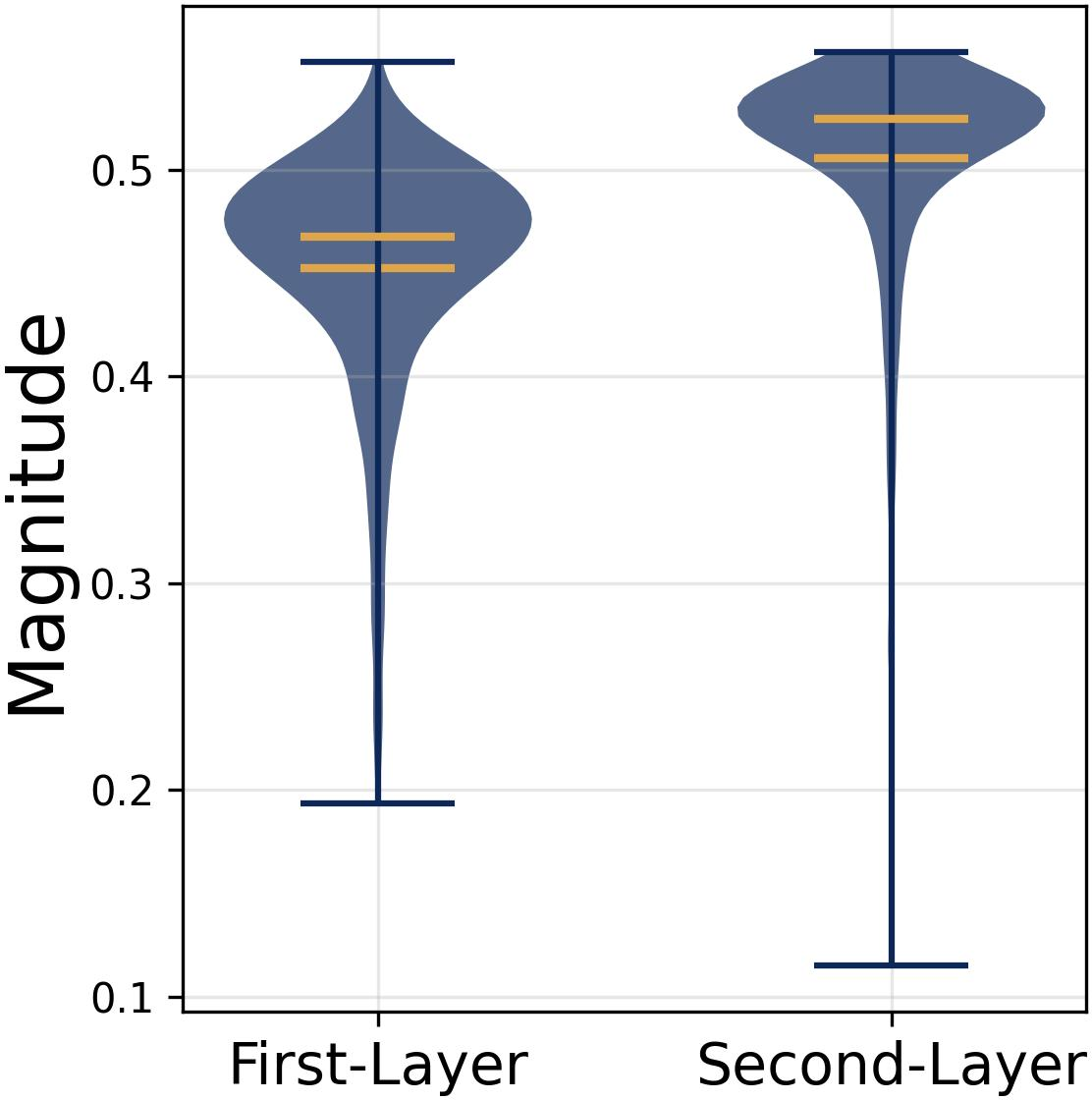

The learned parameters of each neuron are well approximated by trigonometric functions. After the DFT is applied, the frequency encodings are highly sparse: each neuron specializes in a single frequency, and the population as a whole covers the relevant spectral range. Phase alignment is also observed: the second-layer output phase ψ_m closely tracks twice the input phase, 2ϕ_m, which couples the phases of the two layers.

Figure 2: Heatmap visualization shows each neuron's parameters concentrate on a single frequency, confirming emergence of sparse frequency encodings.
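
One way to see why the output phase would track twice the input phase is to look at a single neuron with a quadratic activation. The sketch below assumes a cosine input weight cos(w x + ϕ) shared by both operands and a squared pre-activation, then measures the phase of the frequency-k component of the activation as a function of (x + y) mod p. The parametrization and the function name `best_output_phase` are assumptions made for illustration and may differ from the paper's conventions.

```python
import numpy as np

def best_output_phase(p, k, phi):
    """Phase of the frequency-k component of a quadratic neuron's
    activation, viewed as a function of (x + y) mod p."""
    w = 2 * np.pi * k / p
    r = np.arange(p)
    pre = np.cos(w * r + phi)                      # input weight for each residue
    hidden = (pre[:, None] + pre[None, :]) ** 2    # activation for every pair (x, y)
    s = (r[:, None] + r[None, :]) % p              # residue of the sum
    coeff = np.sum(hidden * np.exp(-1j * w * s))   # projection onto frequency k of the sum
    return float(np.angle(coeff))

phi = 0.9
psi = best_output_phase(p=23, k=3, phi=phi)
print(psi, 2 * phi)
```

An output weight of the form cos(w z + ψ) correlates most strongly with this activation when ψ equals the returned phase, which in this setup is 2ϕ (up to wrapping into (−π, π]).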

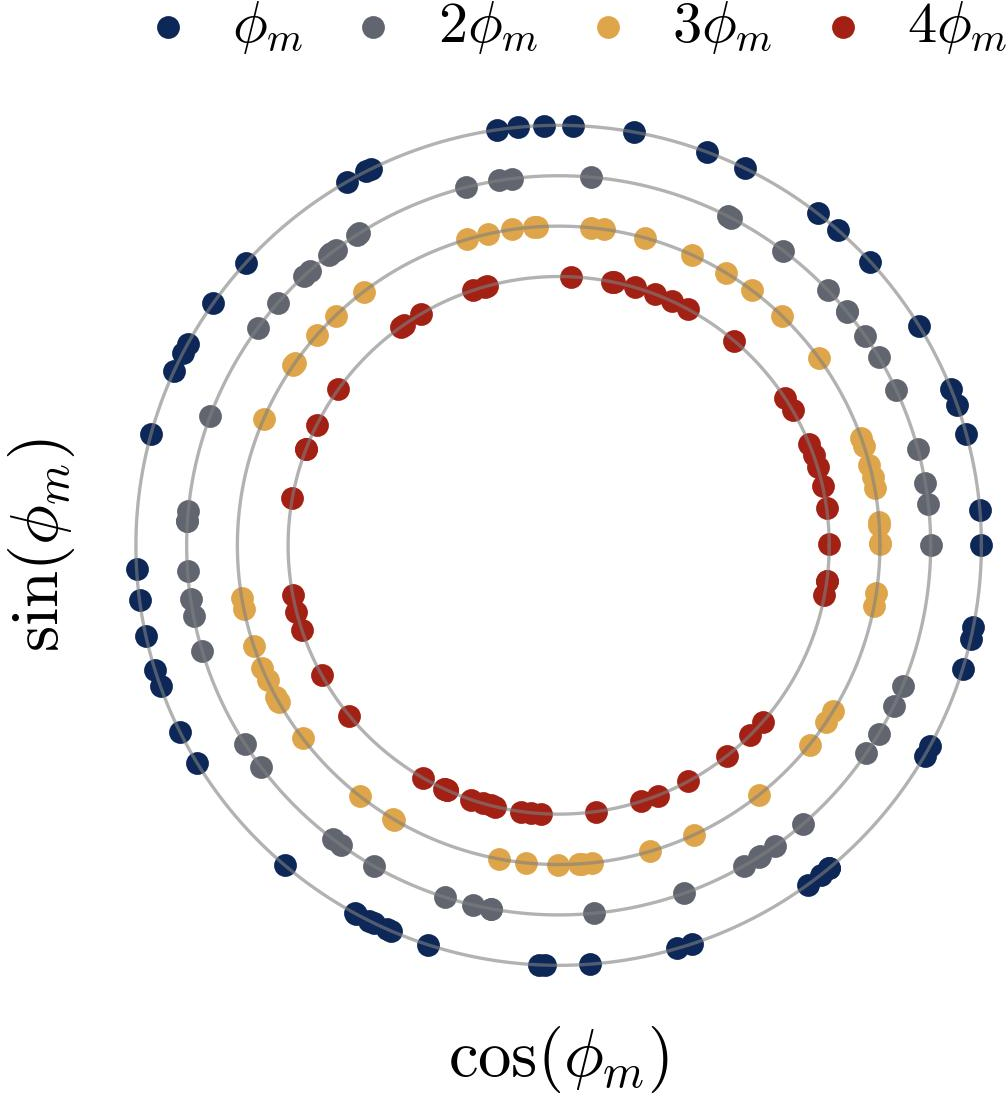

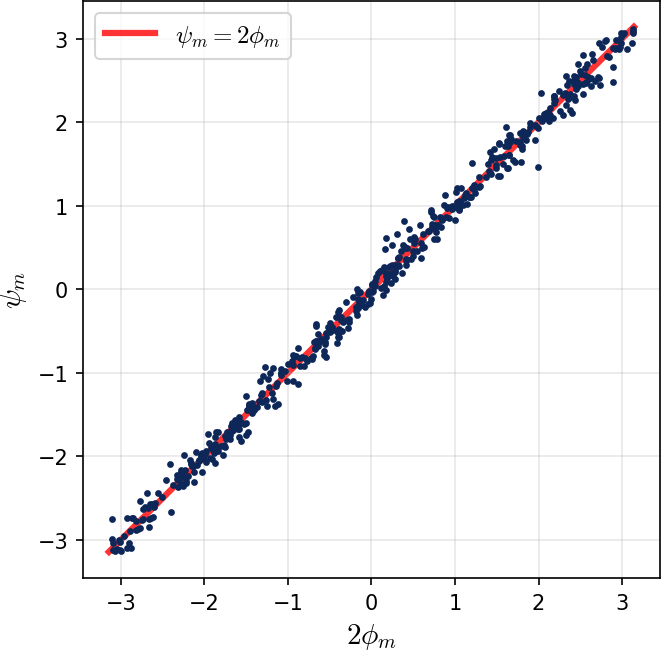

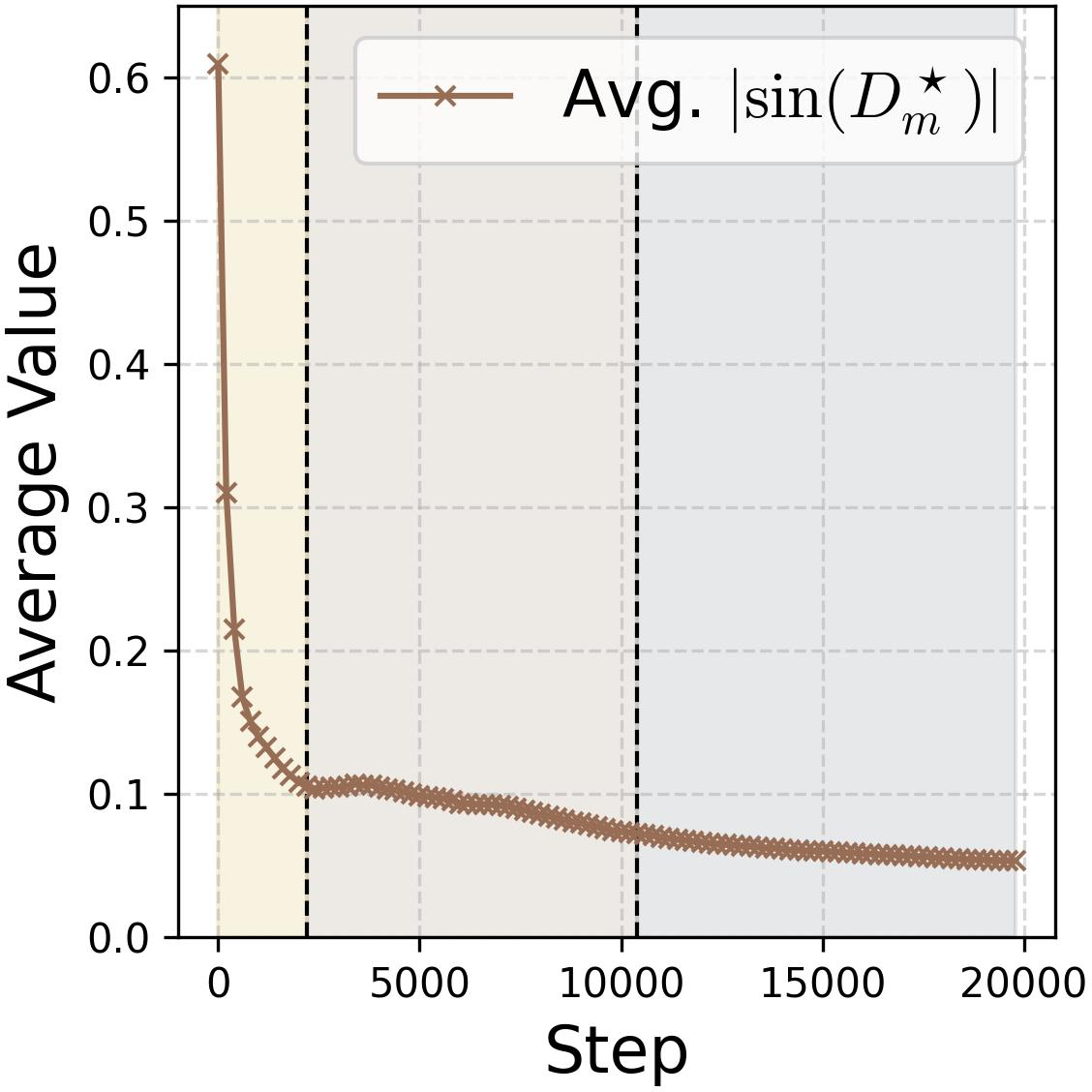

Empirically, neurons in the same frequency group have nearly equal magnitudes, and their phases are spread uniformly across the group. The network as a whole implements a robust majority-voting mechanism when it aggregates the individual noisy trigonometric features.

Figure 3: Learned phases for M=512 neurons are uniformly distributed within each group, enabling robust noise cancellation and majority voting.

The authors formalize a joint condition, frequency diversification together with phase symmetry, that is realized during training. Frequency diversification ensures full spectral coverage, and phase symmetry means that the phases within each neuron group are symmetrically distributed, so the noise terms cancel. The collective output approximates an indicator function for modular addition: the clean signal peaks at the correct sum, and the noise terms are attenuated by aggregation.

The accompanying proposition shows that, for a fully diversified neuron population of sufficient width, the network's output logits concentrate on the true modular sum, giving negligible cross-entropy loss and the largest confidence margin between the correct output and the erroneous ones.
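
The idea can be checked on a hand-built network rather than a trained one. The sketch below is a construction under stated assumptions, not the paper's proof or code: it uses a quadratic activation, cosine input weights, output phases set to exactly twice the input phases, every nonzero frequency for an odd modulus p = 23, and 8 evenly spaced phases per frequency. All names are hypothetical.

```python
import numpy as np

def diversified_logits(p, phases_per_freq=8):
    """Logits of a hand-built two-layer network for every input pair (x, y)."""
    freqs = np.arange(1, (p - 1) // 2 + 1)                  # every nonzero frequency (p odd)
    grid = 2 * np.pi * np.arange(phases_per_freq) / phases_per_freq
    w = 2 * np.pi * np.repeat(freqs, phases_per_freq) / p   # angular frequency per neuron
    phi = np.tile(grid, len(freqs))                         # input phase per neuron
    r = np.arange(p)
    embed = np.cos(np.outer(r, w) + phi)                    # (p, M) input weights
    hidden = (embed[:, None, :] + embed[None, :, :]) ** 2   # (p, p, M) quadratic activation
    readout = np.cos(np.outer(r, w) + 2 * phi)              # (p, M) output weights, psi = 2 * phi
    return hidden @ readout.T                               # (p, p, p): logits[x, y, z]

p = 23
logits = diversified_logits(p)
r = np.arange(p)
target = (r[:, None] + r[None, :]) % p
accuracy = float(np.mean(logits.argmax(axis=-1) == target))

# Margin between the correct logit and the best incorrect one, for every pair.
correct = np.take_along_axis(logits, target[..., None], axis=-1)[..., 0]
others = logits.copy()
np.put_along_axis(others, target[..., None], -np.inf, axis=-1)
min_margin = float((correct - others.max(axis=-1)).min())
print(accuracy, min_margin)
```

In this construction the evenly spaced phases make the phase-dependent cross terms sum to zero within each frequency group, and summing over all frequencies turns the remaining cosine terms into a peak at the correct residue.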

Training Dynamics: Lottery Ticket and Feature Competition

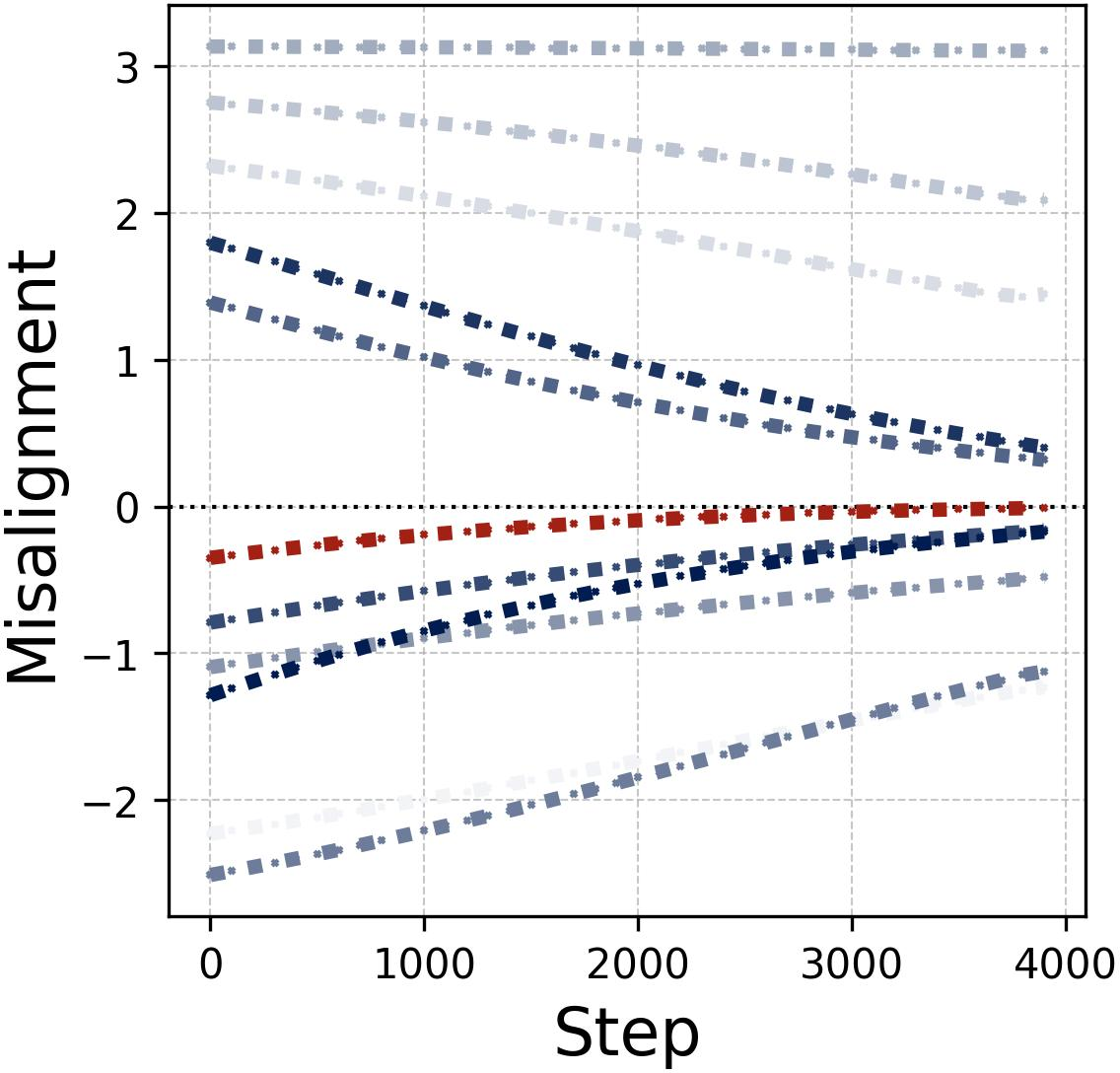

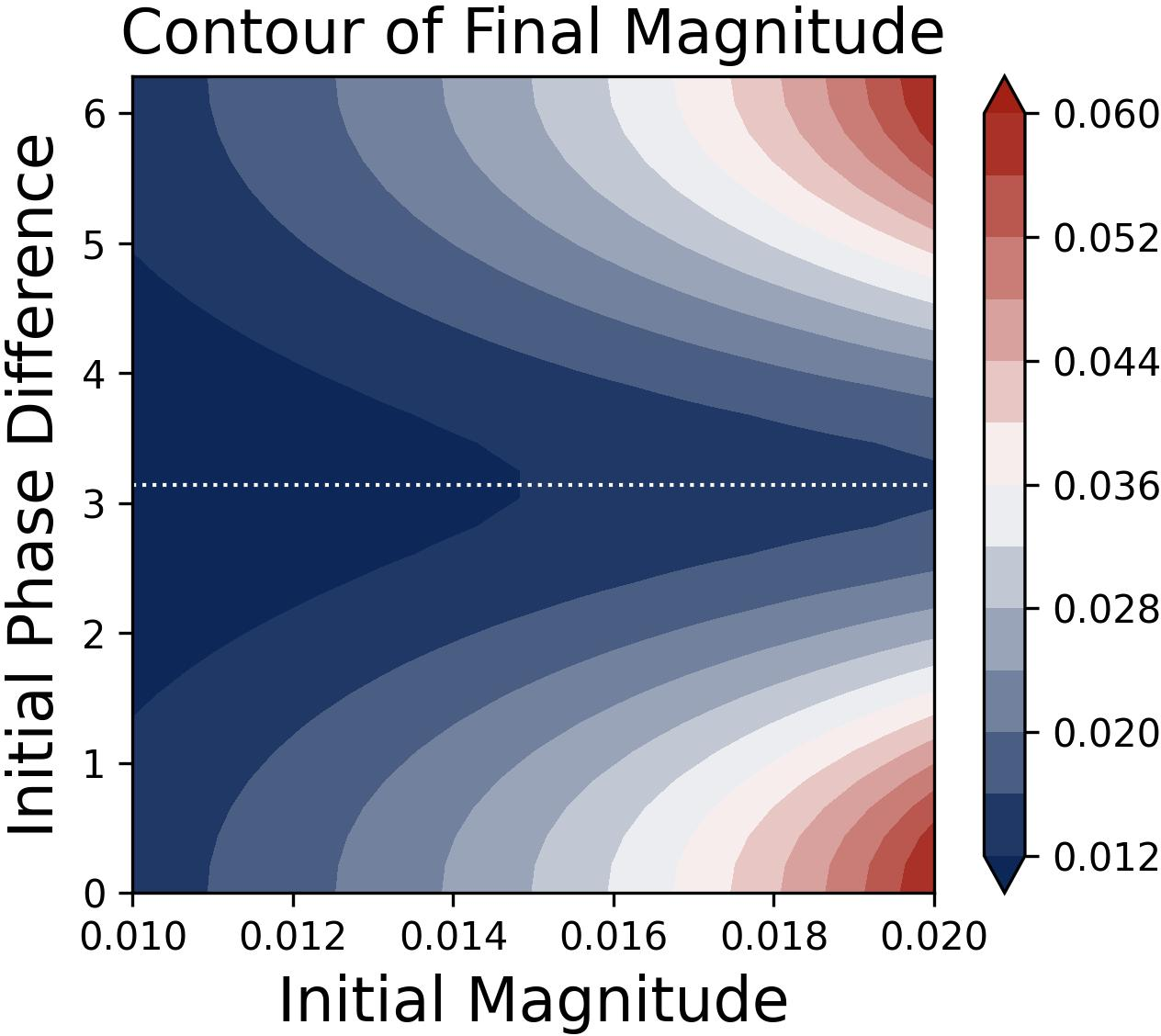

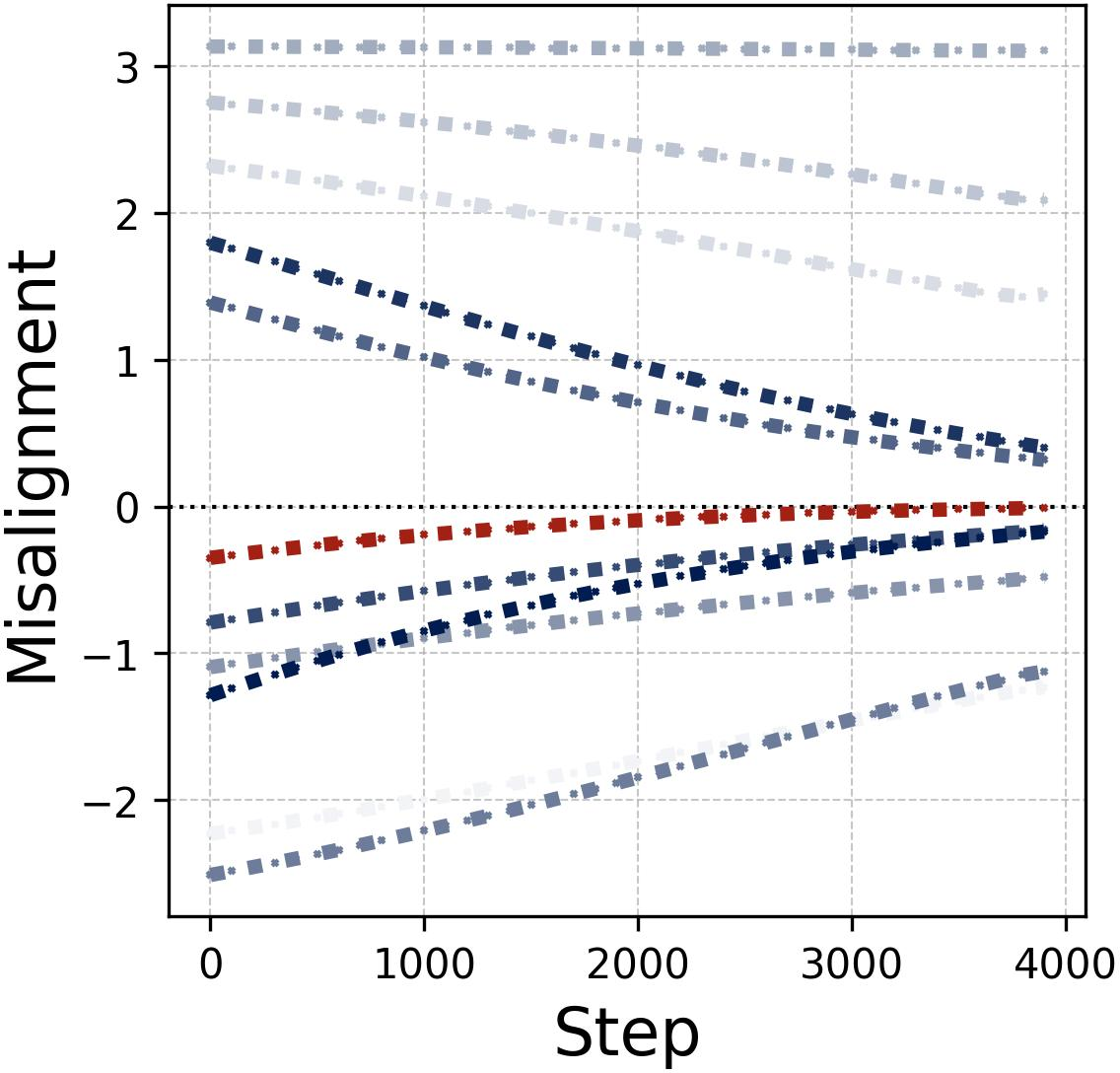

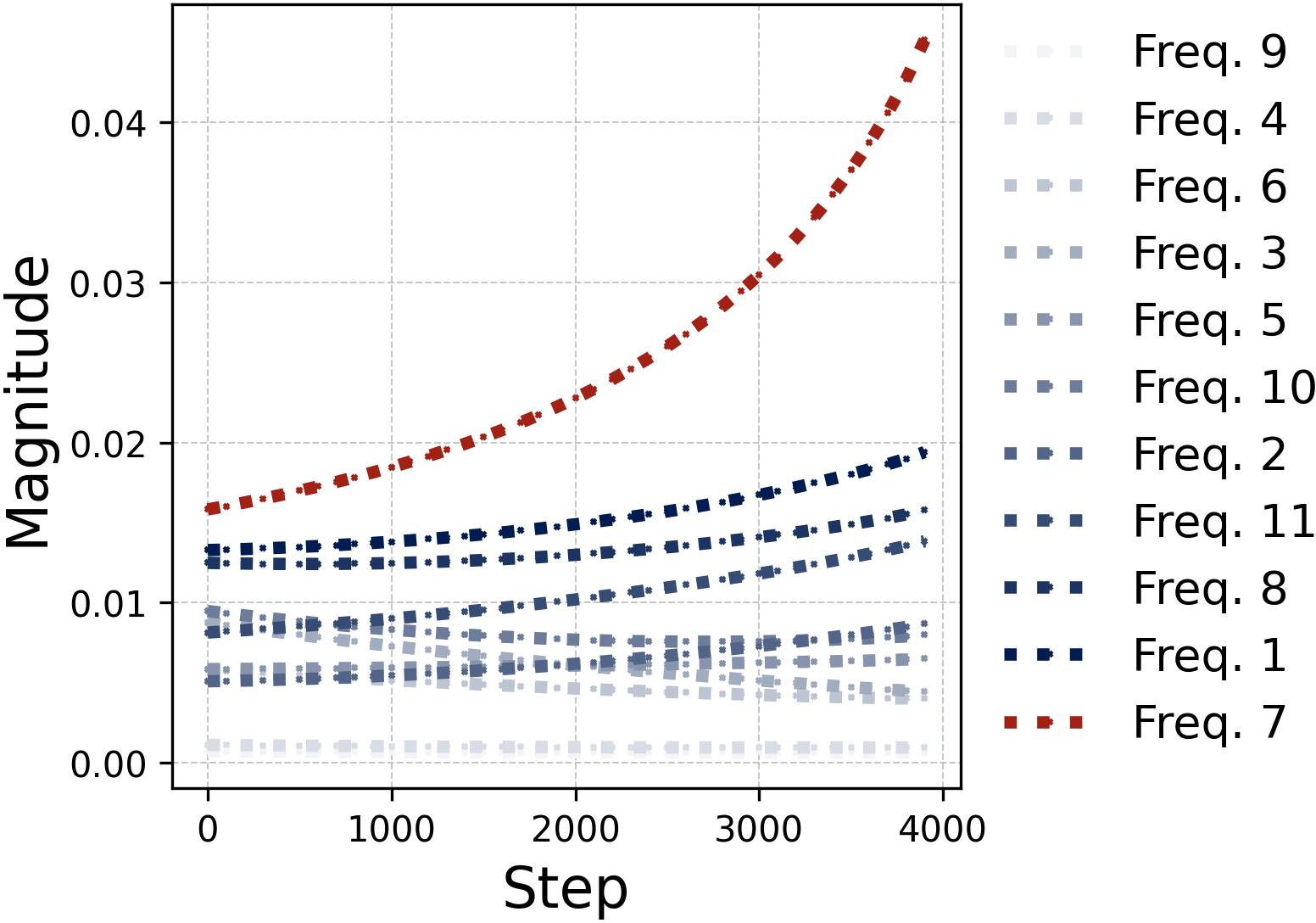

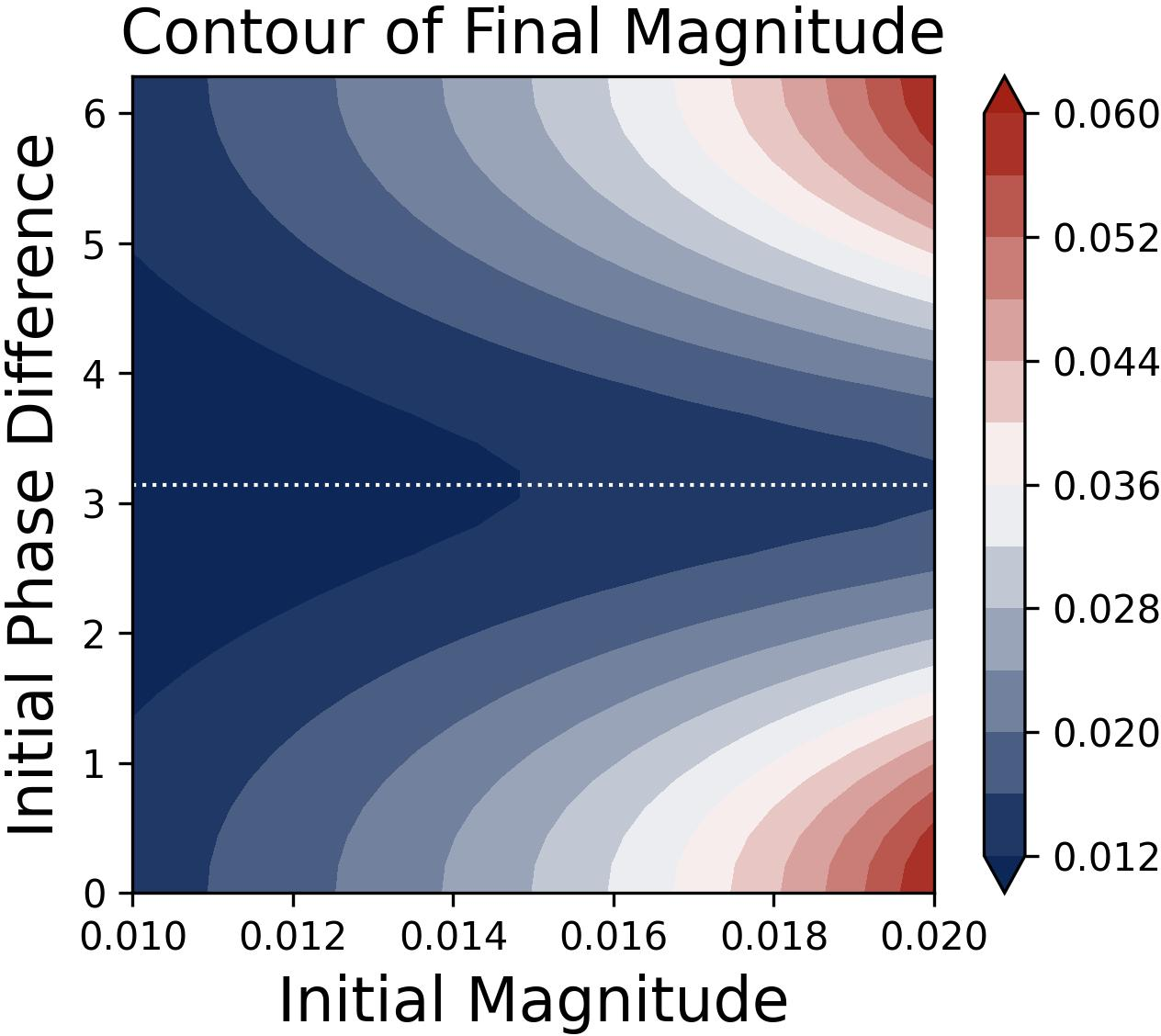

The study explains feature emergence as a competition at the neuron-frequency level, formalized as a lottery ticket mechanism. Within each neuron, the frequency components compete during gradient-based training, and the winner is determined by its initial spectral magnitude and phase misalignment. A frequency that starts with high magnitude and near-alignment grows exponentially and comes to dominate, while the others decay.

Figure 4: Frequency competition within neurons is visualized, illustrating that the winner exhibits rapid exponential growth and dominates feature encoding.
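
The qualitative effect of magnitude and misalignment can be illustrated with a toy simulation. The system below is not the paper's ODE. It is an assumed stand-in in which each frequency has a magnitude a and a misalignment D, the magnitude changes at rate a² cos(D), and the misalignment relaxes at rate −a sin(D). The initial values, the horizon and the name `simulate_competition` are arbitrary choices for illustration.

```python
import numpy as np

def simulate_competition(mag0, mis0, t_end=9.0, dt=1e-3):
    """Forward-Euler integration of a toy magnitude/misalignment system
    (an assumed model, one independent pair (a, D) per frequency)."""
    a = np.array(mag0, dtype=float)
    d = np.array(mis0, dtype=float)
    for _ in range(int(round(t_end / dt))):
        da = a * a * np.cos(d)      # aligned components grow, misaligned ones shrink
        dd = -a * np.sin(d)         # misalignment relaxes faster for larger magnitudes
        a, d = a + dt * da, d + dt * dd
    return a, d

# Frequency 1 starts largest but badly misaligned; frequency 0 starts well aligned.
mags, mis = simulate_competition([0.10, 0.12, 0.11], [0.1, 2.5, 1.0])
winner = int(np.argmax(mags))
print(winner, mags)
```

In this toy run the well-aligned frequency overtakes the one with the largest initial magnitude, which shrinks while its misalignment is still large. This mirrors the claim that the winner depends on both quantities, not on magnitude alone.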

The decoupling of neurons during early training and the subsequent selection of one dominant frequency per neuron are proven via ODE analysis and the chain rule in the Fourier domain. The mechanism is robust across activation functions as long as they retain nonzero even-order components, as the quadratic and ReLU activations do.

Figure 5: Alignment trajectories and magnitude evolution confirm layerwise phase coupling and dominance of winning frequency components.
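
A simple proxy for the even-order condition is the even part of the activation, (f(x) + f(−x)) / 2. The sketch below checks it numerically for ReLU, the quadratic activation, and tanh. Using the even part as a proxy, and including tanh as an odd counterexample, are choices made here for illustration and are not taken from the paper.

```python
import numpy as np

def even_part(f, x):
    """Even part of f evaluated on x: (f(x) + f(-x)) / 2."""
    return 0.5 * (f(x) + f(-x))

def relu(t):
    return np.maximum(t, 0.0)

x = np.linspace(-2.0, 2.0, 401)
even_relu = even_part(relu, x)        # equals |x| / 2, so ReLU has an even component
even_quad = even_part(np.square, x)   # equals x ** 2, the activation is purely even
even_tanh = even_part(np.tanh, x)     # equals 0, tanh is an odd function

print(np.abs(even_relu).max(), np.abs(even_quad).max(), np.abs(even_tanh).max())
```

ReLU splits into x/2 plus |x|/2, so it keeps a nonzero even part, whereas an odd function such as tanh has none and falls outside the stated condition.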

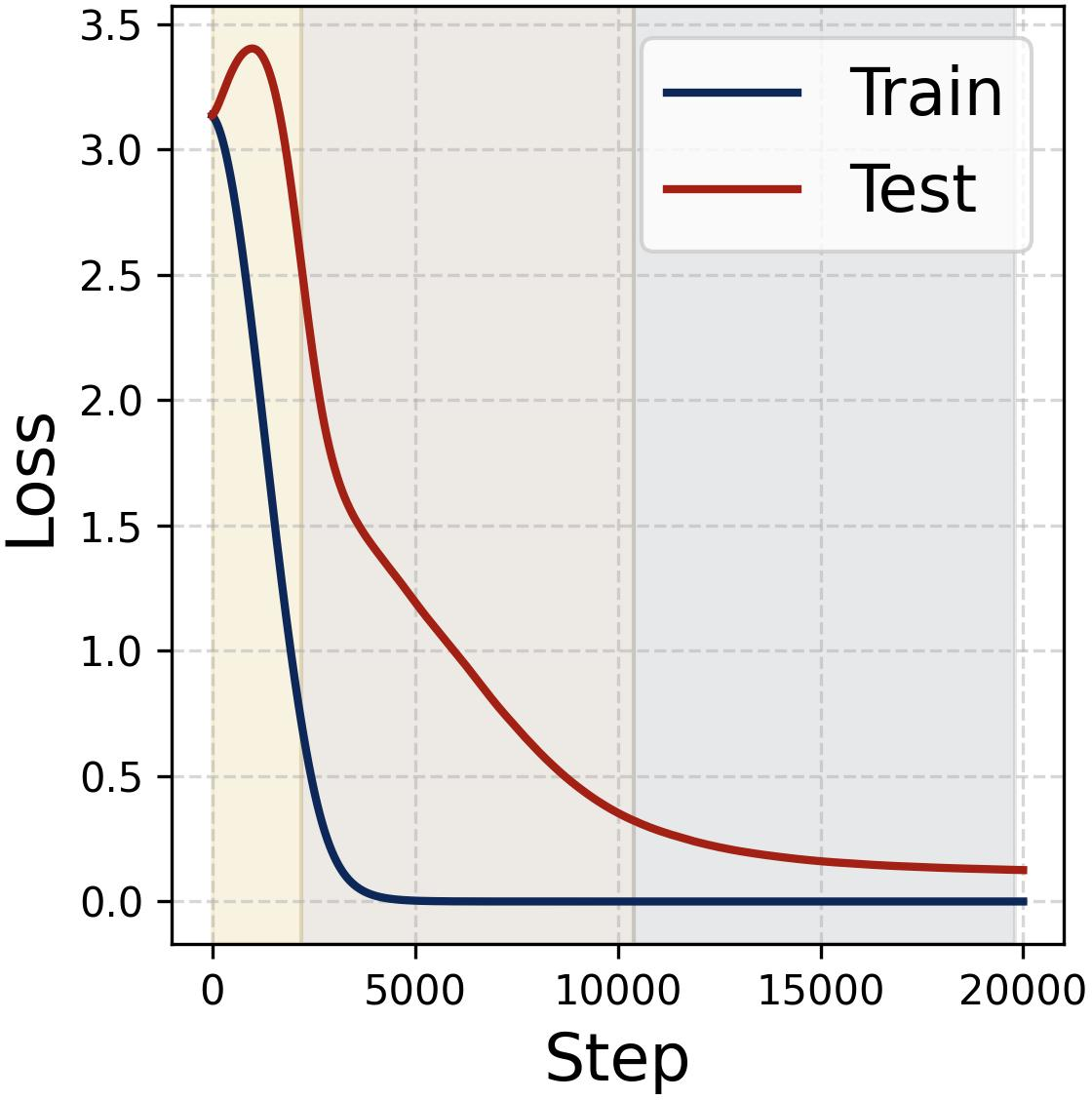

Grokking: Phases of Memorization and Generalization

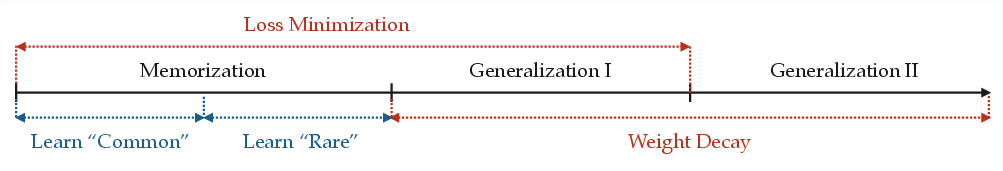

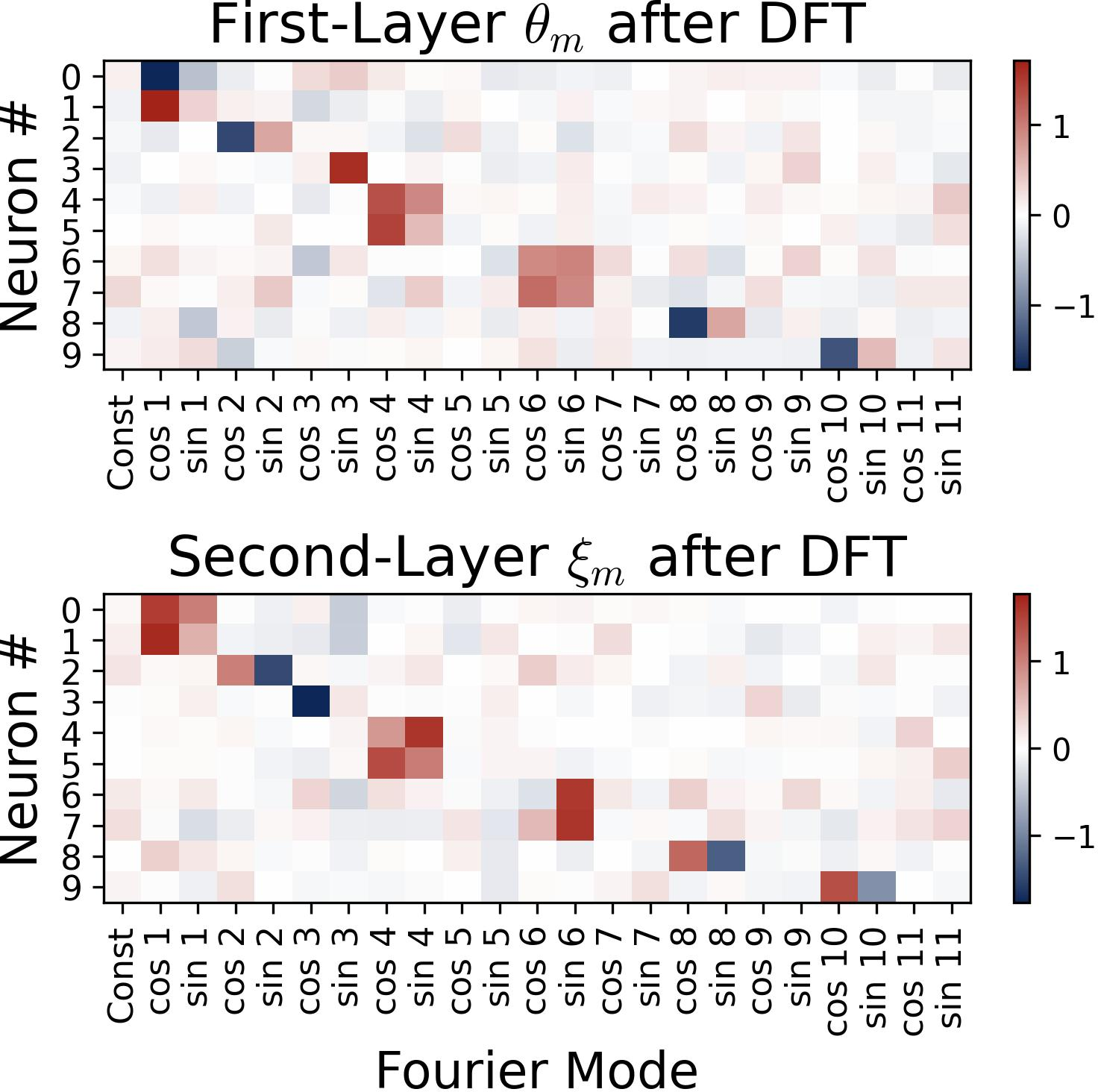

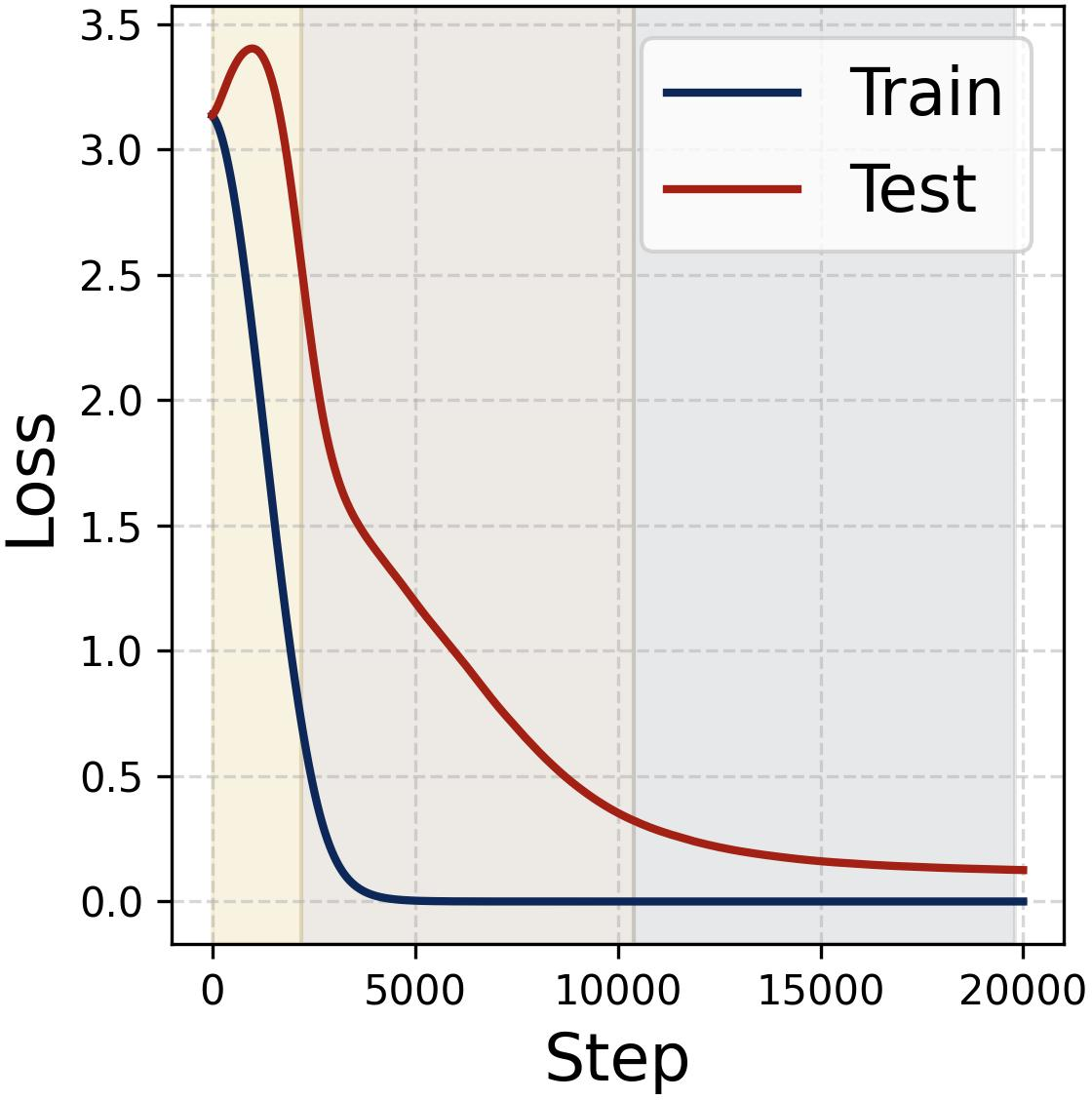

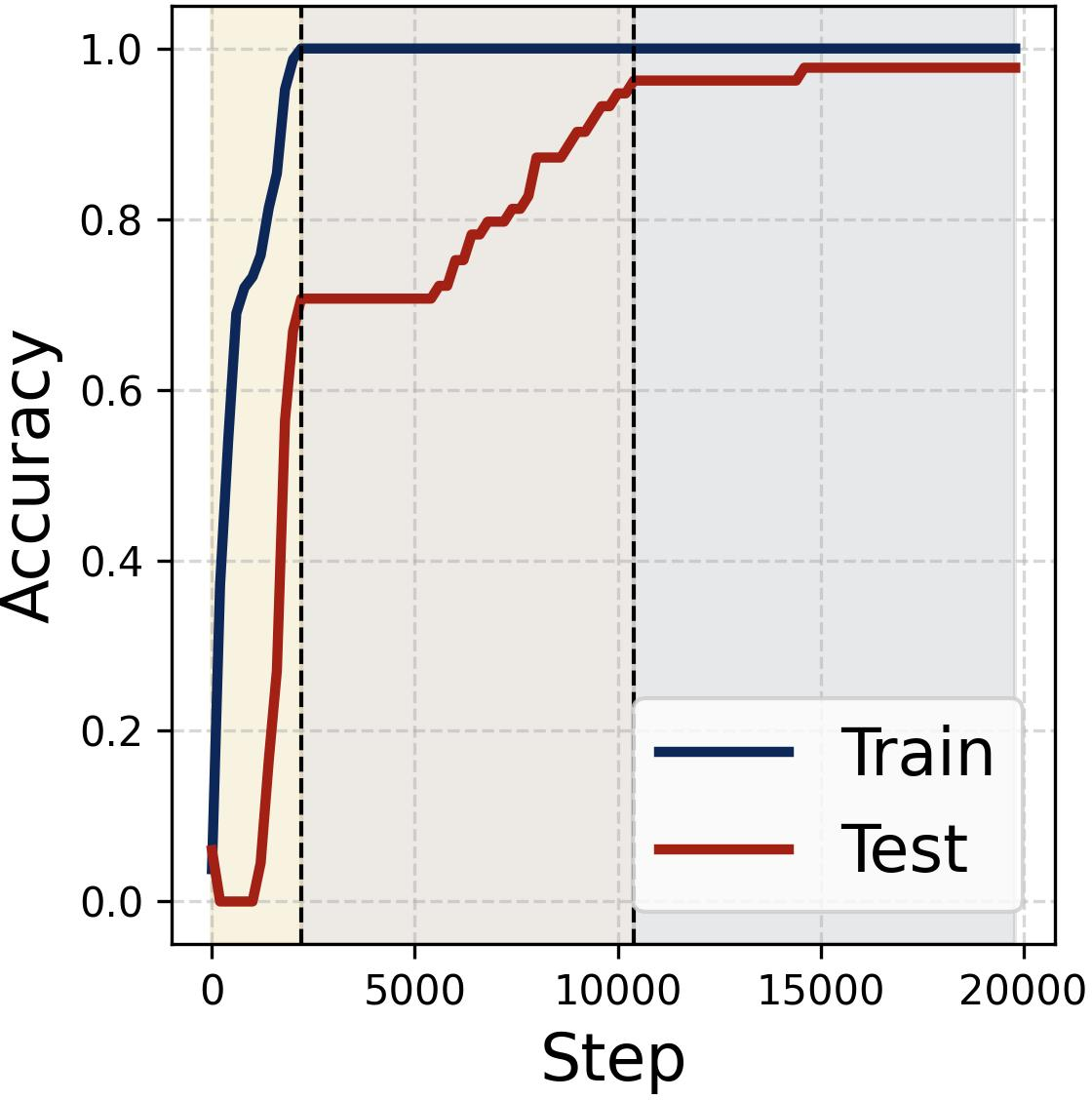

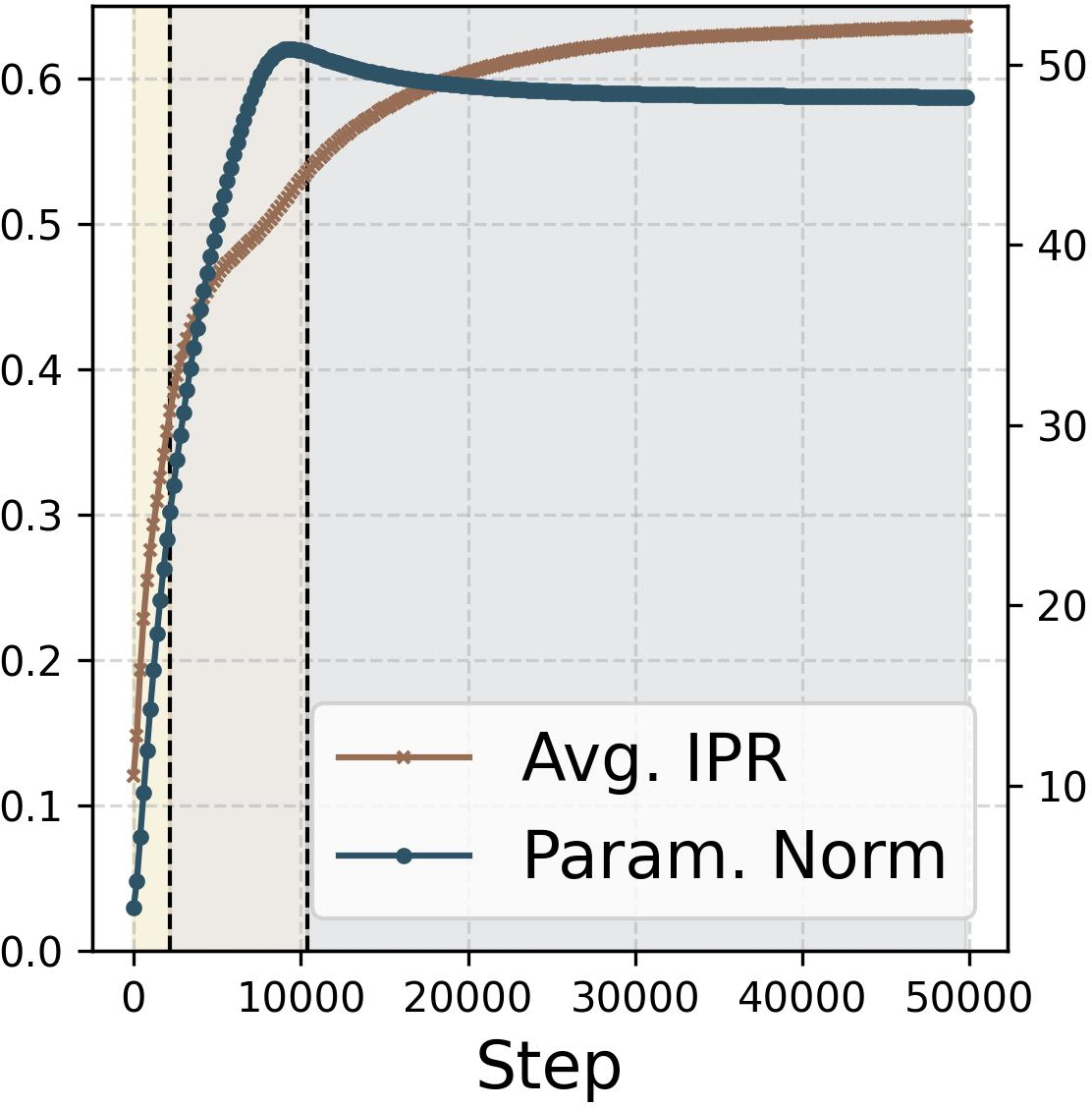

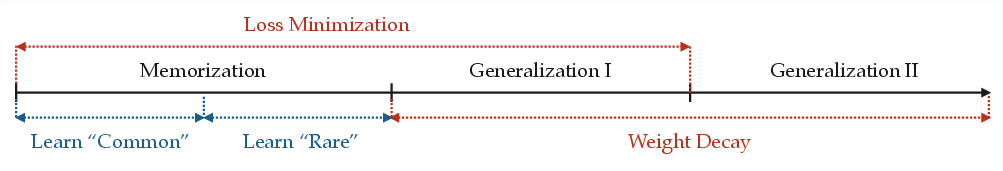

The delayed generalization phenomenon known as grokking is characterized as a three-stage dynamical process governed by the interplay of loss minimization and weight decay. The network first memorizes the training data, then progressively prunes noisy non-feature frequencies, and finally refines its weights toward sparse, clean Fourier solutions that generalize robustly.

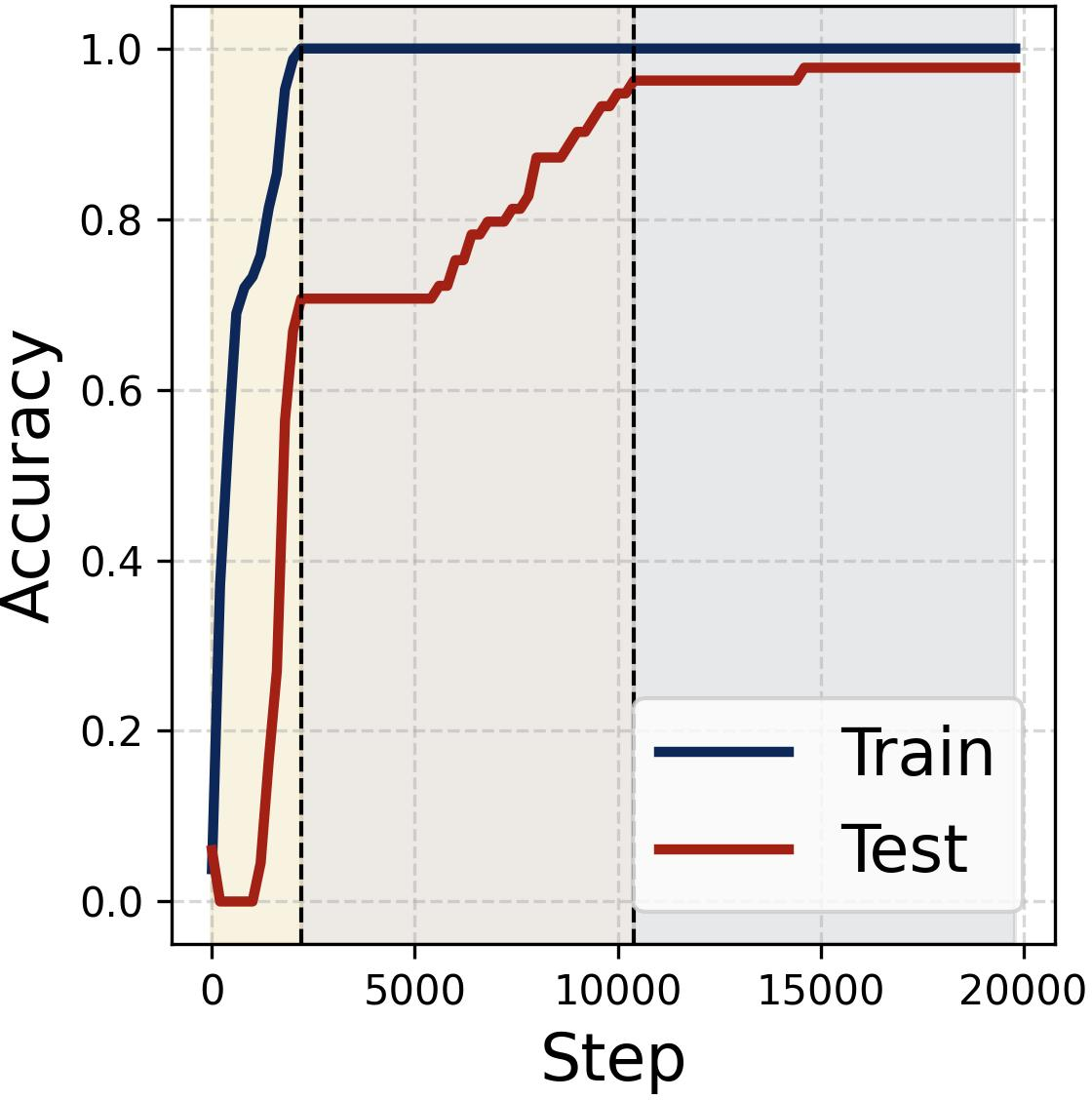

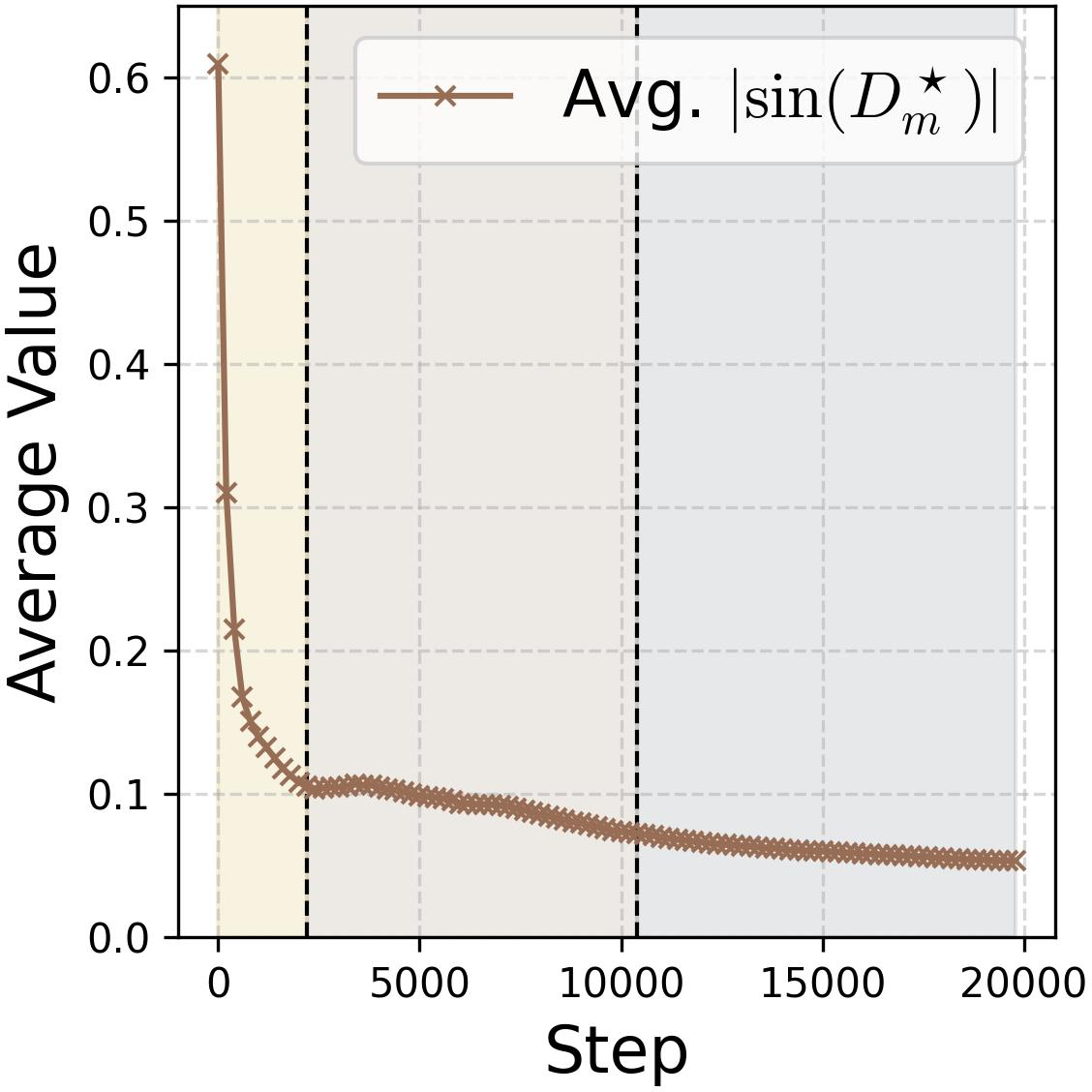

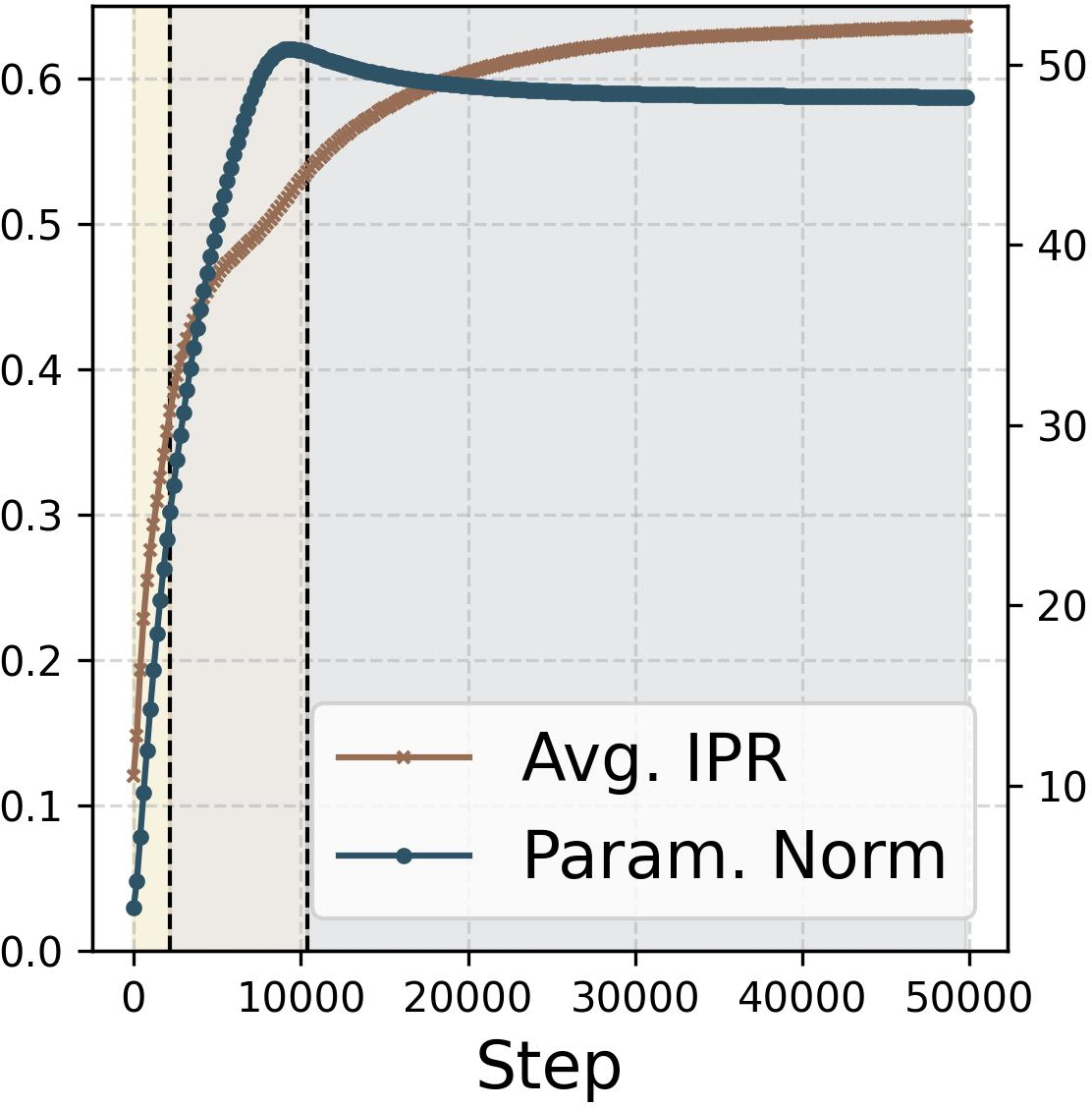

Figure 6: Training/test loss, accuracy, phase alignment, and feature sparsity dynamics show a memorization phase followed by two distinct generalization phases during grokking.

During early memorization, the network overfits using perturbed Fourier solutions. Weight decay then drives iterative pruning of the non-feature frequencies, and the parameters eventually converge to highly sparse, well-aligned features, which produces the abrupt improvement in test performance.

Figure 7: Illustration of the three grokking stages and their dominant driving force: loss minimization and weight decay.
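
The difference between a perturbed and a clean Fourier solution can be quantified with a spectral concentration score. The metric below, the fraction of non-DC spectral power carried by the strongest frequency, is a hypothetical choice and not necessarily the sparsity measure used in the paper. The two weight vectors are synthetic stand-ins for a neuron during memorization and after pruning.

```python
import numpy as np

def spectral_concentration(weights):
    """Fraction of non-DC spectral power carried by the strongest frequency."""
    power = np.abs(np.fft.rfft(weights))[1:] ** 2
    return float(power.max() / power.sum())

p = 23
j = np.arange(p)
# Clean single-frequency weights (assumed end state after pruning).
clean = np.cos(2 * np.pi * 4 * j / p + 0.3)
# Same feature plus two weaker non-feature frequencies (assumed memorization state).
perturbed = (clean
             + 0.4 * np.cos(2 * np.pi * 7 * j / p + 1.1)
             + 0.3 * np.cos(2 * np.pi * 9 * j / p - 0.5))

conc_clean = spectral_concentration(clean)
conc_perturbed = spectral_concentration(perturbed)
print(conc_clean, conc_perturbed)
```

Tracking such a score per neuron over training steps would be one way to make the pruning stage visible, since the score approaches 1 as the non-feature frequencies are removed.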

Theoretical Extensions and Numerical Results

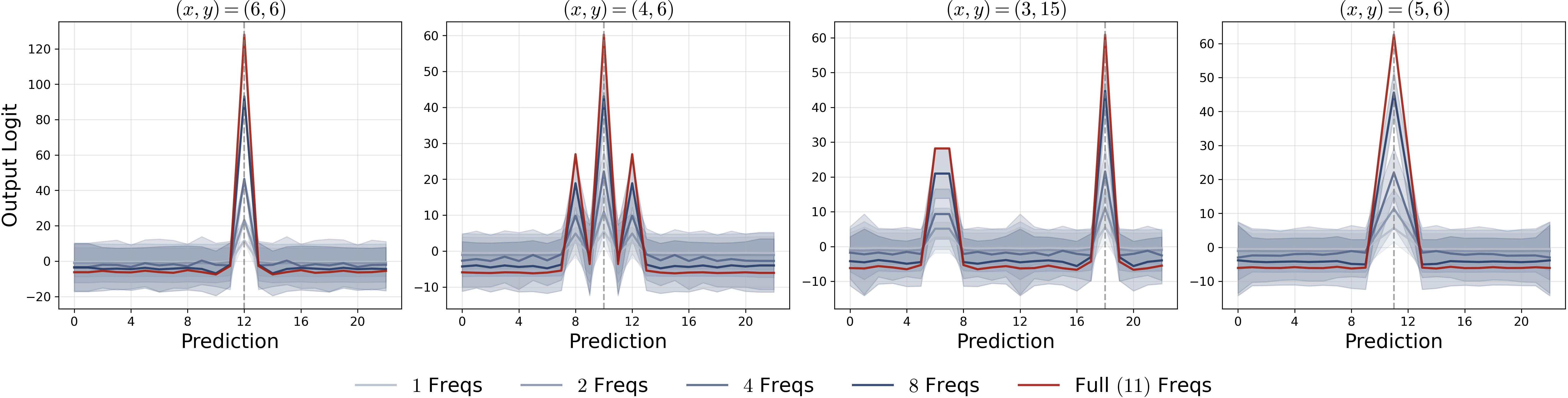

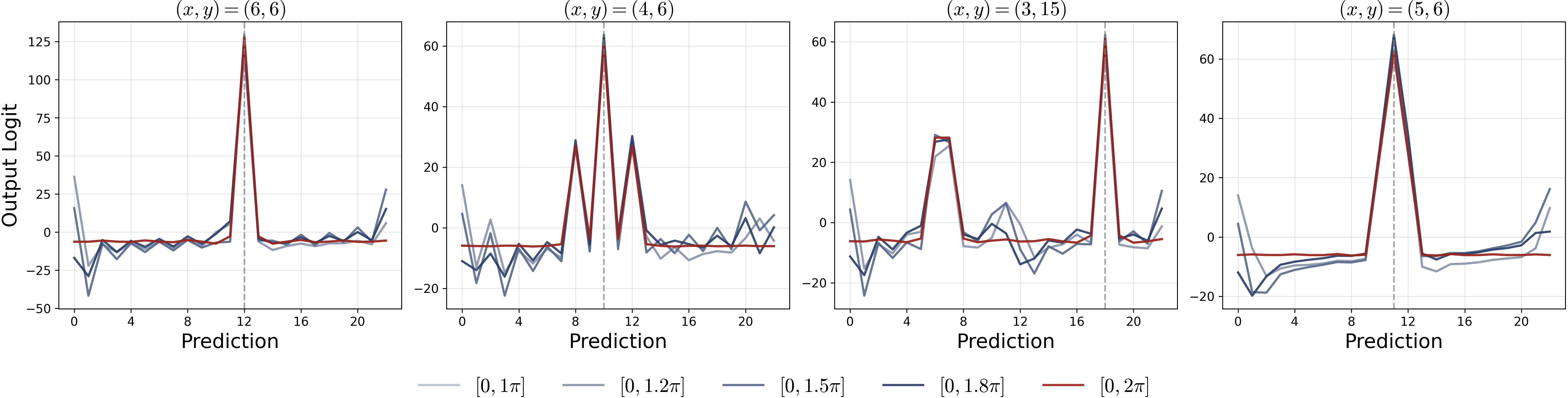

The theoretical framework is extended to multi-frequency initializations, where it confirms order preservation and monotonic dominance in the frequency competition across neurons. The mechanism is also robust to activation swapping: models trained with ReLU retain their accuracy when evaluated with quadratic or other activations that have even-order components.

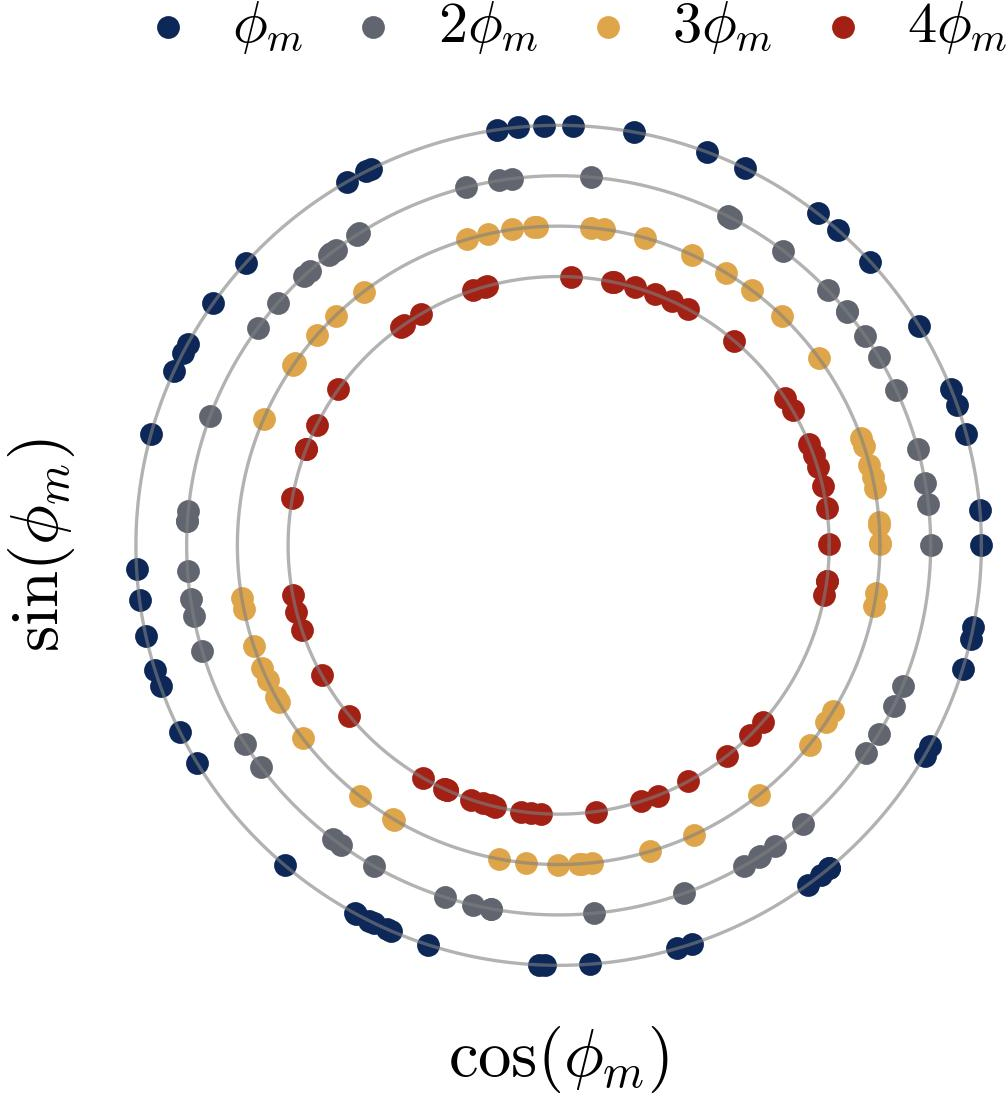

Numerical ablations validate that fully diversified frequency and phase parametrizations maximize parameter efficiency, achieving the lowest losses and the largest output logit gaps between correct and incorrect labels.

Figure 8: Fully diversified parametrization yields maximal logit gap and confidence in prediction, substantiating theoretical claims.
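
The benefit of full frequency coverage can be seen in an idealized signal term. The sketch below assumes that, after the noise cancels, each covered frequency k contributes cos(2πk d / p) to the logit at offset d = x + y − z, and compares the gap between d = 0 and the best nonzero offset for full and partial coverage. It ignores the noise terms and does not reproduce the paper's ablation protocol. The function name `signal_gap` is hypothetical.

```python
import numpy as np

def signal_gap(p, freqs):
    """Gap between the idealized signal at offset 0 and its largest value elsewhere."""
    d = np.arange(p)
    signal = np.cos(2 * np.pi * np.outer(freqs, d) / p).sum(axis=0)  # S(d), summed over frequencies
    return float(signal[0] - signal[1:].max())

p = 23
full = np.arange(1, (p - 1) // 2 + 1)   # all nonzero frequencies for odd p
partial = np.array([1, 2, 3])           # an arbitrary partial subset

gap_full = signal_gap(p, full)
gap_partial = signal_gap(p, partial)
print(gap_full / len(full), gap_partial / len(partial))
```

With every frequency present, the cosine sum is constant at all nonzero offsets, so the gap equals p / 2. With only a few frequencies, nearby offsets stay close to the peak and the gap per frequency is much smaller.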

Implications and Future Directions

Practically, these results elucidate the precise interplay between gradient-based optimization, initial spectral structure, and the emergent symbolic computation in neural networks. The formal mechanism and dynamics described here establish necessary conditions for robust generalization and efficient learning in discrete algebraic tasks. The analysis underscores the significance of spectral diversity and phase symmetry, both for avoiding pathological overfitting and for maximizing parameter efficiency.

Theoretically, this study offers rigorous insights extendable to more intricate group operation tasks, structured reasoning, and the emergence of abstract representations. The formalization of neuron decoupling, majority voting, and lottery ticket mechanisms presents a blueprint for future studies of neurosymbolic reasoning, neural algorithmic learning, and phase transition phenomena in deep architectures.

The grokking stages, driven by explicit competition between loss minimization and weight regularization, suggest plausible meta-learning and architecture design strategies for enhancing interpretability and generalization in modular arithmetic as well as broader algorithmic tasks.

Conclusion

This work delivers a full mechanistic and dynamical interpretation of modular addition learning in two-layer neural networks. It delineates how specialized single-frequency features, phase alignment, and spectral diversity emerge collectively, culminating in robust symbolic computation. The lottery ticket mechanism and phase coupling explain both the micro-level neuron-wise evolution and macro-level population dynamics, including grokking. These findings deepen understanding of feature learning in neural networks and set the stage for further studies of algorithmic generalization and neurosymbolic reasoning.