- The paper benchmarks the impact of 6D object pose estimation and 3D mesh reconstruction errors on robotic grasping success using a physics-based simulation framework.

- The paper utilizes detailed quantitative metrics, such as ADD and translation error, to correlate perception inaccuracies with decreased feasibility in grasp planning.

- The paper demonstrates that while accurate pose estimation can mitigate moderate mesh defects, severe geometric flaws still critically hinder robotic grasp execution.

Functional Benchmarking of 3D Pose Estimation and Reconstruction Error on Robotic Grasping Success

Introduction

The paper "Benchmarking the Effects of Object Pose Estimation and Reconstruction on Robotic Grasping Success" (2602.17101) examines the interplay between perception errors—specifically those arising from 6D object pose estimation and 3D mesh reconstruction—and the downstream efficacy of robotic grasping. Despite progress in geometric metrics for evaluating perception modules, the functional impact of these errors on manipulation tasks remains underexplored. The work proposes a benchmarking pipeline that directly measures how errors propagate from perception to physical action, offering a quantitative link between mesh fidelity, pose accuracy, and the probability of successful grasp execution.

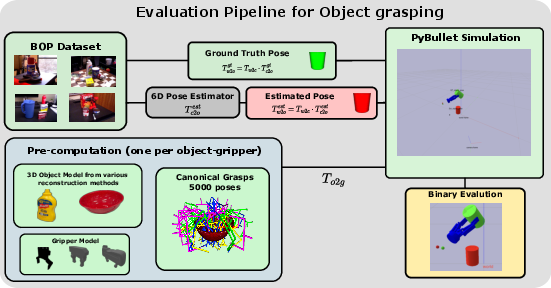

Figure 1: Overview of the evaluation pipeline, mapping canonical grasp libraries and estimated object pose to physical grasp execution outcomes and functional success metrics.

Methodological Framework

The presented framework incorporates a physics-based simulation environment (PyBullet) and a diverse set of realistic robotic grippers to systematically quantify three key factors:

- The accuracy of object pose estimation using state-of-the-art methods (e.g., FoundationPose, MegaPose)

- The geometric fidelity of 3D object reconstructions drawn from a spectrum of neural and photogrammetric techniques

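- The resulting grasp-planning efficacy and the grasp success achieved when executing on ground-truth objects in simulation

Transformation chains bridge estimated and true object poses, simulating realistic operating conditions in which a robot must act under perceptual uncertainty: a grasp defined in the object's canonical frame is placed in the world using the estimated pose, then executed against the object at its true pose.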
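As a rough illustration of such a transformation chain, the sketch below composes homogeneous transforms to find where a gripper lands relative to the true object when it is commanded using an estimated pose. The function names, frame conventions, and numeric values are illustrative assumptions, not taken from the paper.

```python
import numpy as np


def make_pose(rotation_z_rad, translation):
    """Build a 4x4 homogeneous transform: rotation about z, then translation."""
    c, s = np.cos(rotation_z_rad), np.sin(rotation_z_rad)
    T = np.eye(4)
    T[:3, :3] = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    T[:3, 3] = translation
    return T


def executed_grasp_in_true_object_frame(T_world_obj_true, T_world_obj_est, T_obj_grasp):
    """Gripper pose actually reached, expressed relative to the true object."""
    # The robot believes the object sits at the estimated pose,
    # so the canonical grasp is placed in the world using that estimate.
    T_world_grasp = T_world_obj_est @ T_obj_grasp
    # Re-express the commanded gripper pose in the true object's frame.
    return np.linalg.inv(T_world_obj_true) @ T_world_grasp


# Hypothetical canonical grasp: 10 cm above the object origin.
T_obj_grasp = make_pose(0.0, [0.0, 0.0, 0.10])
T_true = make_pose(0.3, [0.5, 0.2, 0.0])
T_est = make_pose(0.3, [0.5, 0.2, 0.02])  # 2 cm translation error along z

T_actual = executed_grasp_in_true_object_frame(T_true, T_est, T_obj_grasp)
offset = T_actual[:3, 3] - T_obj_grasp[:3, 3]
print("gripper offset relative to intended grasp (m):", offset)
```

With a perfect estimate the chain collapses to the canonical grasp itself, so any deviation in the result is attributable to pose error alone.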

Evaluation is partitioned across three experimental conditions reflecting compounded and isolated effects of pose and reconstruction errors:

- Idealized (Ground-Truth) models for both pose and grasping

- Reconstructed models used only for pose estimation, with grasps planned on ground-truth models

- Reconstructed models for both pose estimation and grasp sampling (realistic compounded error scenario)

Two principal metrics are used:

- Sgen: Grasp Generation Success Rate—fraction of viable grasps generated by a model

- Sest: Estimated Success Rate—fraction of canonical viable grasps (with perfect pose) that also succeed with estimated pose
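One way to read the two definitions above is as simple ratios over per-grasp simulation outcomes. The following is a minimal sketch under that reading; the `GraspTrial` record and its field names are hypothetical and not part of the paper's code.

```python
from dataclasses import dataclass


@dataclass
class GraspTrial:
    """Outcome of one candidate grasp (hypothetical record layout)."""
    succeeds_with_true_pose: bool
    succeeds_with_estimated_pose: bool


def grasp_generation_success_rate(trials):
    """Sgen: fraction of generated grasps that are viable under perfect pose."""
    if not trials:
        return 0.0
    return sum(t.succeeds_with_true_pose for t in trials) / len(trials)


def estimated_success_rate(trials):
    """Sest: among grasps viable under perfect pose, fraction that still
    succeed when executed with the estimated pose."""
    viable = [t for t in trials if t.succeeds_with_true_pose]
    if not viable:
        return 0.0
    return sum(t.succeeds_with_estimated_pose for t in viable) / len(viable)


trials = [
    GraspTrial(True, True),
    GraspTrial(True, False),   # viable grasp lost to pose error
    GraspTrial(False, False),  # never viable, so excluded from Sest
    GraspTrial(True, True),
]
s_gen = grasp_generation_success_rate(trials)
s_est = estimated_success_rate(trials)
print(f"Sgen = {s_gen:.2f}, Sest = {s_est:.2f}")
```

Conditioning Sest on grasps that are already viable keeps the two effects separate: Sgen reflects the model used for grasp generation, while Sest reflects pose error.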

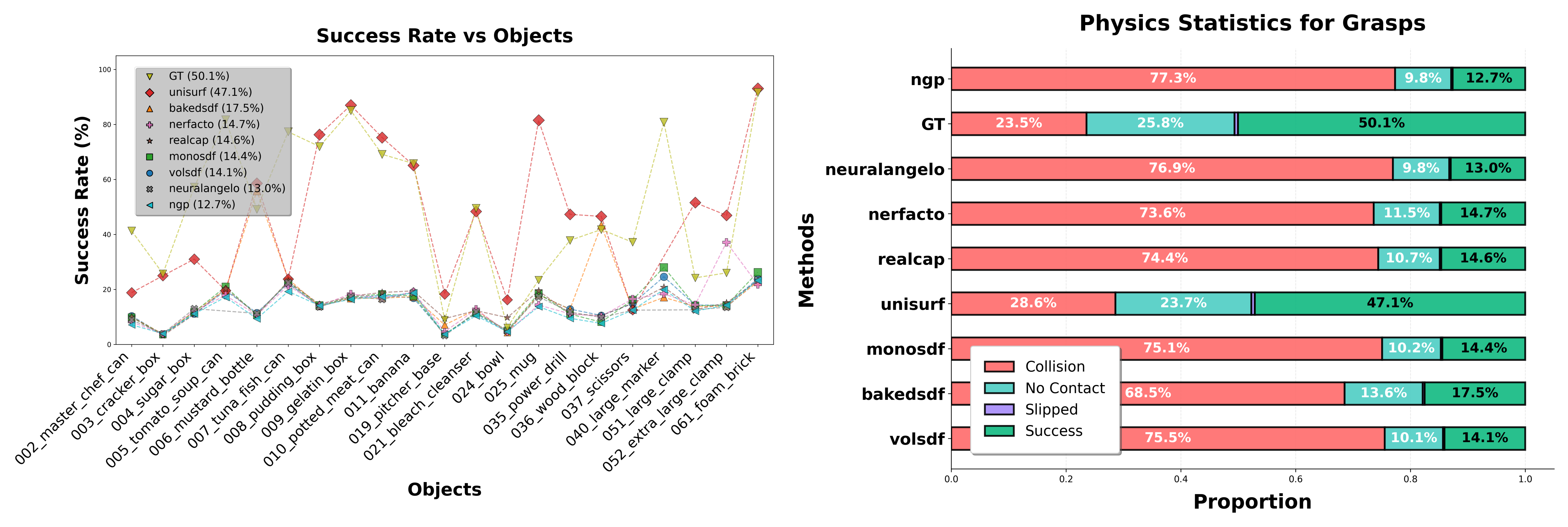

Failures are decomposed into three diagnostic categories: slip, no contact, and collision.

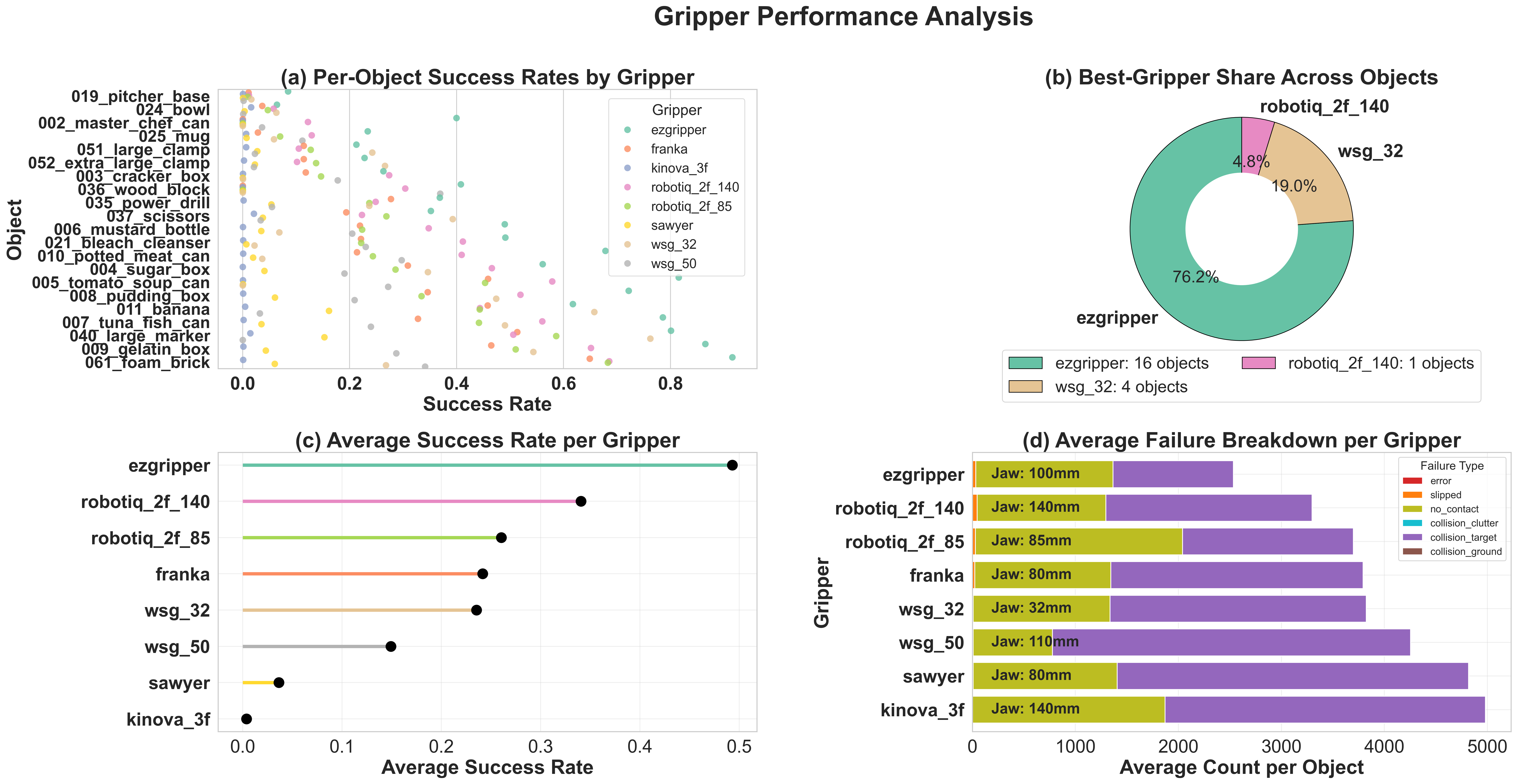

Baseline Analysis: Gripper Suitability and Physical Constraints

A large-scale baseline (over 5,000 antipodal grasps per object-gripper pair) establishes the effect of end-effector geometry on Sgen. The results show that gripper selection sets the upper bound on achievable grasp success for a given object, which motivates algorithm-agnostic aggregation of results over multiple gripper types rather than reporting for a single end-effector.
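For readers unfamiliar with the term, an antipodal grasp is commonly defined as a pair of contacts whose connecting line lies inside both friction cones. The sketch below implements that textbook check together with a maximum-opening constraint, to illustrate how gripper geometry can rule out candidates. The paper's actual sampler is not described here, so the function name, friction coefficient, and opening width are all assumptions.

```python
import numpy as np


def is_antipodal(p1, n1, p2, n2, friction_coeff=0.5, max_opening=0.08):
    """Textbook antipodal test for a two-finger grasp.

    p1, p2: contact points (m); n1, n2: outward surface normals.
    friction_coeff and max_opening are illustrative defaults.
    """
    p1, n1, p2, n2 = (np.asarray(v, dtype=float) for v in (p1, n1, p2, n2))
    axis = p2 - p1
    width = np.linalg.norm(axis)
    # The gripper must be able to span the two contacts.
    if width == 0.0 or width > max_opening:
        return False
    axis = axis / width
    # Half-angle of the friction cone is arctan(mu).
    cos_limit = np.cos(np.arctan(friction_coeff))
    # Each finger pushes along the grasp axis toward the other contact;
    # that direction must lie within the cone around the inward normal.
    in_cone_1 = np.dot(axis, -n1 / np.linalg.norm(n1)) >= cos_limit
    in_cone_2 = np.dot(-axis, -n2 / np.linalg.norm(n2)) >= cos_limit
    return bool(in_cone_1 and in_cone_2)


# Opposite faces of a 4 cm wide box: a valid antipodal pair.
print(is_antipodal([-0.02, 0, 0], [-1, 0, 0], [0.02, 0, 0], [1, 0, 0]))
# Same contacts, but a gripper that only opens 3 cm cannot reach them.
print(is_antipodal([-0.02, 0, 0], [-1, 0, 0], [0.02, 0, 0], [1, 0, 0], max_opening=0.03))
```

The second call shows the kind of physical boundary the baseline measures: the contacts are geometrically sound, yet a narrower gripper cannot use them.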

Figure 2: Baseline gripper performance showing how different gripper-object pairings affect the Grasp Generation Success Rate, highlighting that gripper design imposes physical performance boundaries.

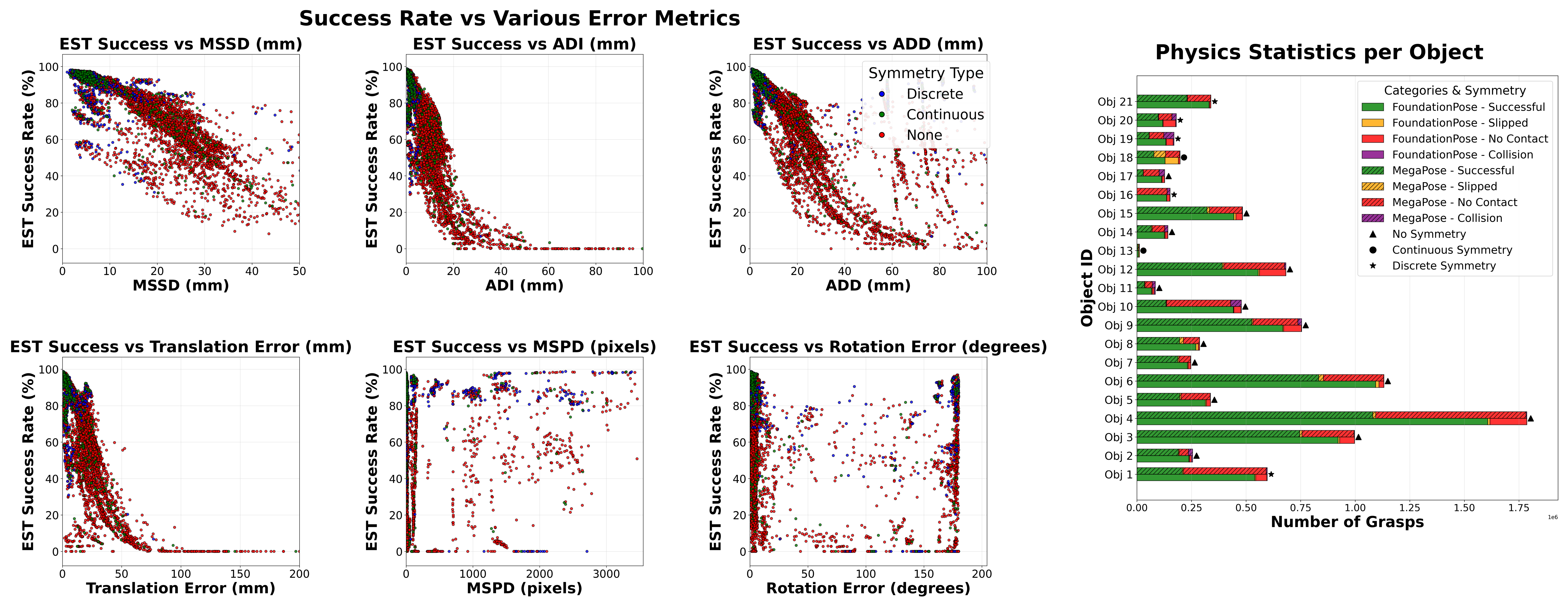

Quantifying the Impact of 6D Pose Estimation Errors

Functional grasp success is strongly correlated with spatial (3D) pose estimation error. Minor translation errors are often tolerated, but there is a critical error threshold beyond which Sest declines abruptly. Notably, metrics such as projected 2D pixel error or rotation-only error are poor predictors of manipulation outcomes, whereas 3D metrics such as ADD, MSSD, and translation error are reliable proxies for functional success.
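For reference, the three spatial metrics named above can be sketched in a few lines. The definitions follow their commonly used forms (ADD as the mean distance between corresponding model points under the two poses; MSSD as the maximum such distance, minimized over the object's symmetry transforms); the function names and the toy point set are assumptions for illustration and are not the paper's evaluation code.

```python
import numpy as np


def transform(points, R, t):
    """Apply a rigid transform to an (N, 3) array of points."""
    return points @ np.asarray(R).T + np.asarray(t)


def add_error(points, R_gt, t_gt, R_est, t_est):
    """ADD: mean distance between model points under true and estimated pose."""
    diff = transform(points, R_gt, t_gt) - transform(points, R_est, t_est)
    return float(np.linalg.norm(diff, axis=1).mean())


def translation_error(t_gt, t_est):
    """Euclidean distance between true and estimated translations."""
    return float(np.linalg.norm(np.asarray(t_gt) - np.asarray(t_est)))


def mssd_error(points, R_gt, t_gt, R_est, t_est, symmetries):
    """MSSD: max point distance, minimized over symmetry transforms (R_sym, t_sym)."""
    est = transform(points, R_est, t_est)
    errors = []
    for R_sym, t_sym in symmetries:
        gt = transform(transform(points, R_sym, t_sym), R_gt, t_gt)
        errors.append(np.linalg.norm(est - gt, axis=1).max())
    return float(min(errors))


# Toy model: four points, symmetric under a 180 degree rotation about z.
points = np.array([[0.05, 0, 0], [-0.05, 0, 0], [0, 0.05, 0], [0, -0.05, 0]])
I, zero = np.eye(3), np.zeros(3)
Rz180 = np.diag([-1.0, -1.0, 1.0])

# Case 1: pure 1 cm translation error.
add_shift = add_error(points, I, zero, I, [0.01, 0, 0])
t_err = translation_error(zero, [0.01, 0, 0])

# Case 2: estimate is flipped by 180 degrees, which is a symmetry of the object.
add_sym = add_error(points, I, zero, Rz180, zero)
mssd_sym = mssd_error(points, I, zero, Rz180, zero, [(I, zero), (Rz180, zero)])
print(add_shift, t_err, add_sym, mssd_sym)
```

The second case shows why a symmetry-aware metric matters: ADD penalizes the flipped estimate even though the object occupies the same space, while MSSD does not.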

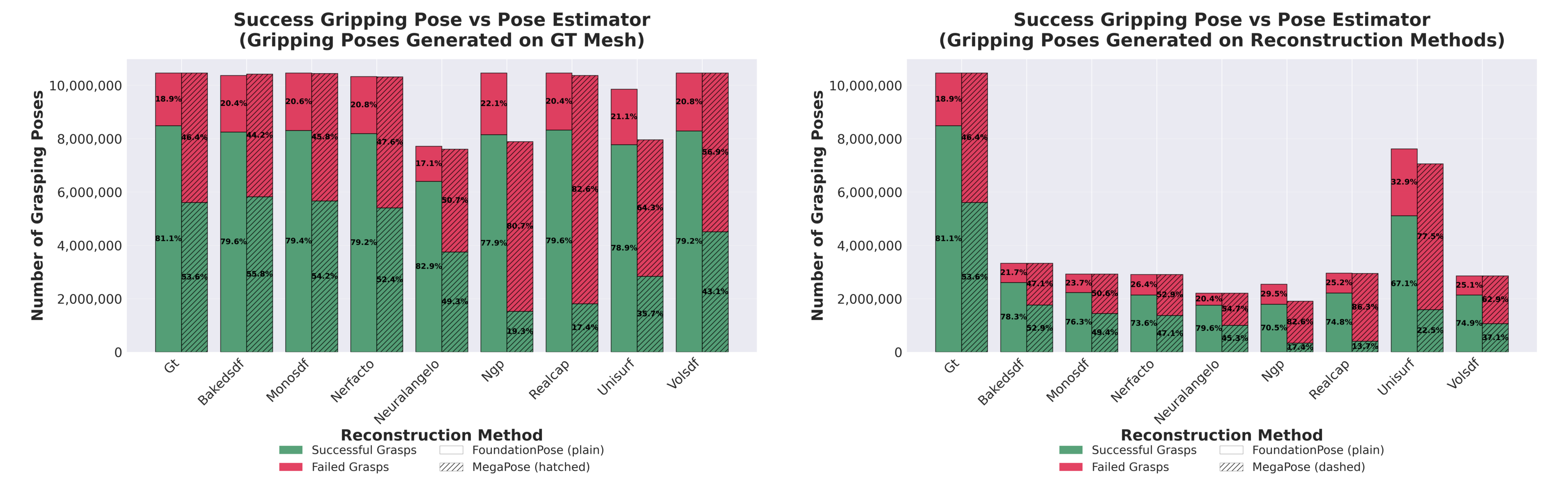

FoundationPose achieves a higher average success rate (Sest: 89.9%) than MegaPose (Sest: 59.4%); MegaPose incurs substantially more 'No Contact' failures due to its larger pose deviations.

Figure 3: Relationship between pose estimation error metrics and estimated grasp success rate—spatial errors sharply predict task failure, while 2D errors are only weakly informative.

The Role of Mesh Fidelity in Grasp Candidate Generation

Assessment of a suite of modern mesh reconstruction methods reveals that geometric artifacts in reconstructed meshes, ranging from noise to oversmoothing, can sharply reduce the Grasp Generation Success Rate, Sgen, independent of pose error. Put simply, models with more geometric imperfections produce more collision-induced grasp failures when the planned grasps are executed on ground-truth object instances, making many candidate grasps physically infeasible.

Interestingly, reconstructions like Unisurf, despite some loss of geometric detail, preserve a higher rate of functionally valid grasps than reconstructions afflicted by mesh defects, suggesting that a bias toward smoothness may be preferable to intricate but noisy geometry for manipulation-centric applications.

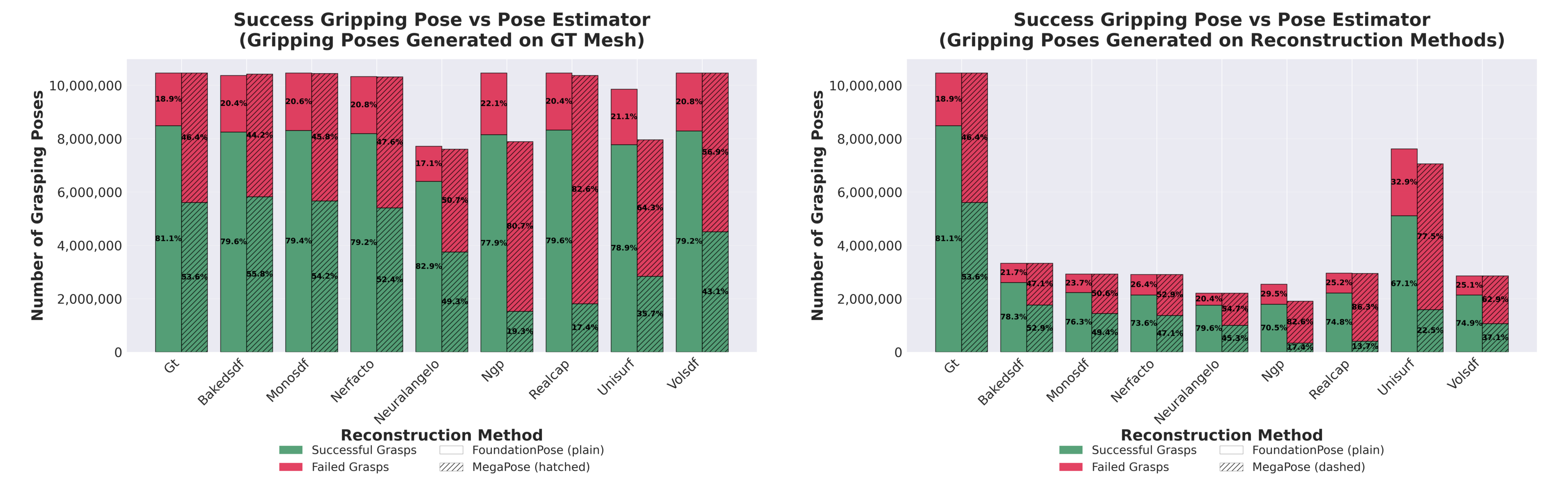

Figure 4: Impact of 3D model fidelity on viable grasp candidate density; lower-quality reconstructions induce more collisions, reducing usable grasp options.

Compounded Effects: Integrated Pose and Geometry Errors

End-to-end simulation of perception-to-grasp pipelines with compounded errors (an imperfect reference mesh used for both pose estimation and grasp planning) yields two key findings. First, geometric flaws in the mesh drastically reduce the pool of available grasp candidates. Second, the final grasping success rate for state-of-the-art pose estimators is only slightly diminished, provided that sufficient grasp candidates survive. This suggests that, for symmetric or moderate-complexity objects, high pose accuracy can partially compensate for mesh imperfections up to a certain degradation level. However, no degree of pose accuracy can counteract catastrophic grasp sampling failures induced by severe mesh artifacts.

Figure 5: Comparative grasping success rates under isolated and compounded perception errors; ultimate task success is robust to moderate mesh errors if pose estimation remains accurate and enough grasp candidates exist.

Implications and Future Directions

These findings prompt several operational and theoretical implications:

- Functional benchmarks like this one should supplement traditional geometric metrics to anticipate downstream manipulation failure modes in robotics pipelines.

- Reconstruction methods for manipulation should prioritize geometric features critical for contact and grasp stability (e.g., surface continuity, avoidance of collision-prone artifacts) over holistic geometric accuracy.

- Robustness to perception error may depend on object symmetry: symmetric objects tolerate larger pose and mesh errors without a catastrophic decline in Sest, which matters when choosing gripper-object pairings for a specific application.

Practically, this work provides a protocol for diagnosing, quantifying, and isolating where perceptual flaws become bottlenecks for manipulation, informing the design of both pose estimators and reconstruction algorithms for deployment in robotic manipulation settings. Extending the benchmark to high-precision placement, assembly, or more complex manipulation primitives will be key for mapping the full landscape of perception-action coupling.

Conclusion

This paper establishes a rigorous, simulation-driven standard for linking 3D perception errors to robotic manipulation outcomes. It identifies spatial accuracy of the pose estimate as the dominant determinant of grasping success when candidate grasps are available, but clarifies that mesh artifacts can fundamentally constrain the feasible action space. Future work should adapt this framework for closed-loop strategies, real-world validation, and manipulation domains beyond grasping to holistically optimize perception systems for embodied action in robotics.