- The paper introduces a formal semantic framework using layered knowledge graphs to integrate diverse IIoT data streams.

- It implements dynamic, context-aware RBAC with fine-grained access control, and its stream operators run at sub-millisecond latency.

- Experimental evaluations demonstrate scalable, low-latency stream processing and real-time semantic querying in industrial environments.

Context-Aware Semantic Platform for Stream Processing in Industrial IoT

Motivation and Research Objectives

Industrial IoT (IIoT) and Internet of Everything (IoE) deployments face significant data integration problems because devices, communication protocols, and schema conventions are heterogeneous. Industry 5.0 principles additionally call for dynamic, human-centric processes with adaptable access control, which purely syntactic integration approaches cannot provide. The paper "A Context-Aware Knowledge Graph Platform for Stream Processing in Industrial IoT" (2602.19990) establishes a formal framework for semantic data stream management via Knowledge Graphs (KGs) and reasoning, supporting interoperability, real-time stream processing, and context-sensitive authorization across diverse industrial ecosystems.

The platform's abstractions are grounded in uniform stream modeling: every device or agent is formalized as a stream source, each stream is uniquely identified, and transformations are modular operators composed in DAGs. Access control employs fine-grained RBAC, dynamically extended via contextual delegation relationships. Formal definitions encompass stream sources (typed, localized, schema-aware), streams (temporal sequences), operators (functions over streams), pipelines (DAGs of operators), agents (with context), and collaboration/delegation (contextual rights transfer). Access predicates are defined to enforce context-aware and role-based security on streams and pipelines.
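The definitions above can be made concrete with a small data model. The following is a minimal sketch, not the paper's formalization: the class names, fields, and the `can_access` predicate are illustrative assumptions, and context is reduced to a single location string.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, Tuple


@dataclass(frozen=True)
class StreamSource:
    source_id: str
    source_type: str            # e.g. "TorqueSensor" (hypothetical type name)
    location: str               # where the device is installed
    schema: Tuple[str, ...]     # field names carried by each record


@dataclass(frozen=True)
class Stream:
    stream_id: str              # unique stream identifier
    source: StreamSource


@dataclass(frozen=True)
class Agent:
    agent_id: str
    roles: FrozenSet[str]
    location: str               # current context of the agent


@dataclass(frozen=True)
class Delegation:
    grantor: str
    grantee: str
    stream_id: str
    location: str               # context in which the delegation holds


def can_access(agent: Agent, stream: Stream,
               role_rights: Dict[str, FrozenSet[str]],
               delegations: Iterable[Delegation]) -> bool:
    """Assumed access predicate: a role right OR a delegation valid in the agent's context."""
    has_role_right = any(
        stream.stream_id in role_rights.get(role, frozenset())
        for role in agent.roles
    )
    if has_role_right:
        return True
    return any(
        d.grantee == agent.agent_id
        and d.stream_id == stream.stream_id
        and d.location == agent.location
        for d in delegations
    )


# Illustrative data (all identifiers are made up for this example).
arm_torque = Stream("s-torque",
                    StreamSource("arm1-torque", "TorqueSensor", "cell-1", ("ts", "nm")))
role_rights = {"operator": frozenset({"s-torque"})}
operator = Agent("alice", frozenset({"operator"}), "cell-1")
technician = Agent("bob", frozenset({"technician"}), "cell-1")
delegations = [Delegation("alice", "bob", "s-torque", "cell-1")]
```

In this sketch the technician gains access only through the delegation, and only while located in the cell where the delegation was granted; a real deployment would evaluate the same predicate against the KG rather than in-memory objects.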

Knowledge Graph Representation

Ontological Design

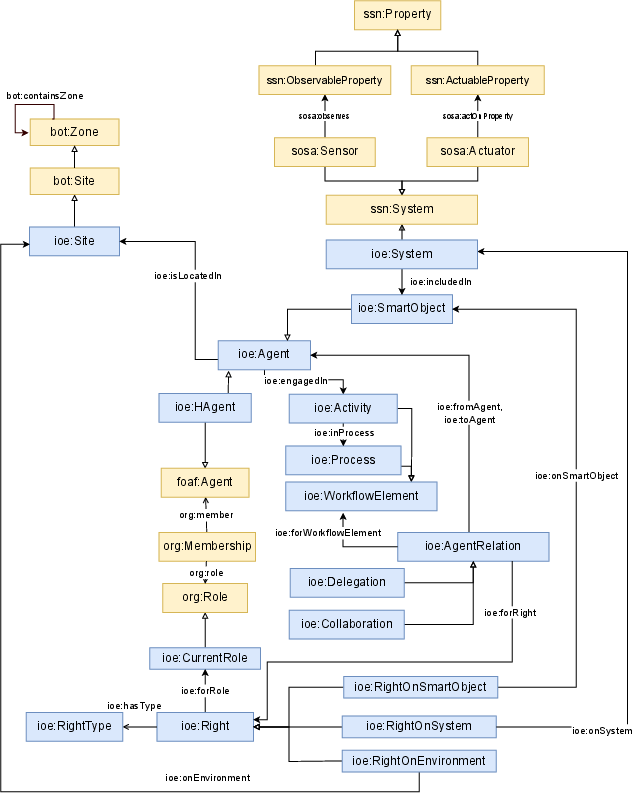

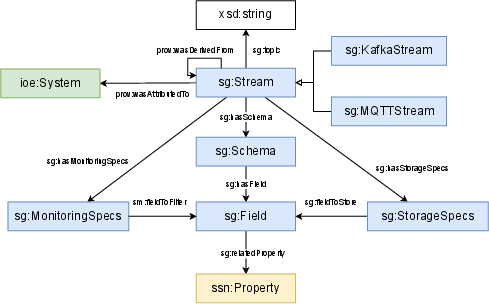

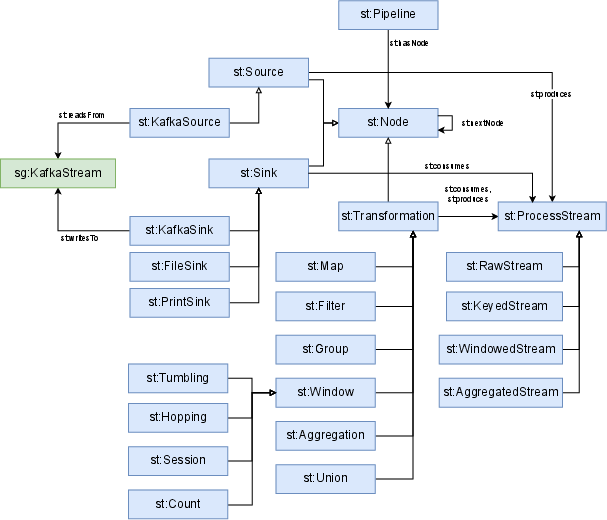

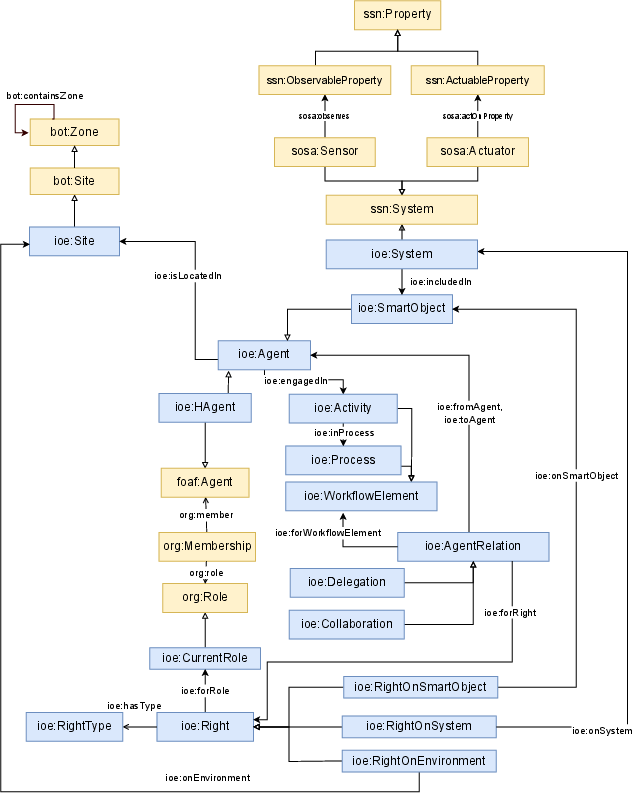

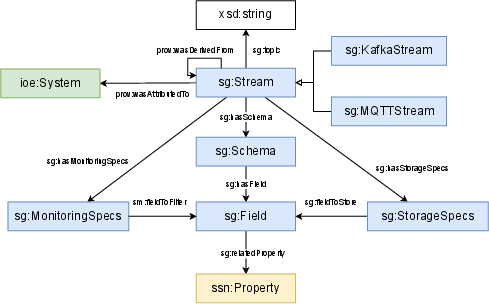

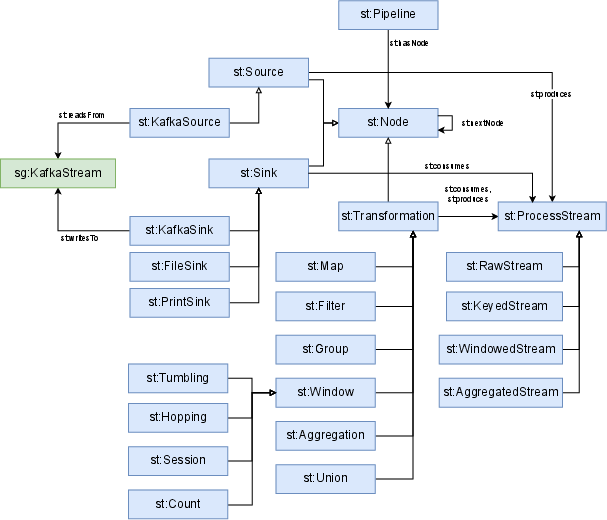

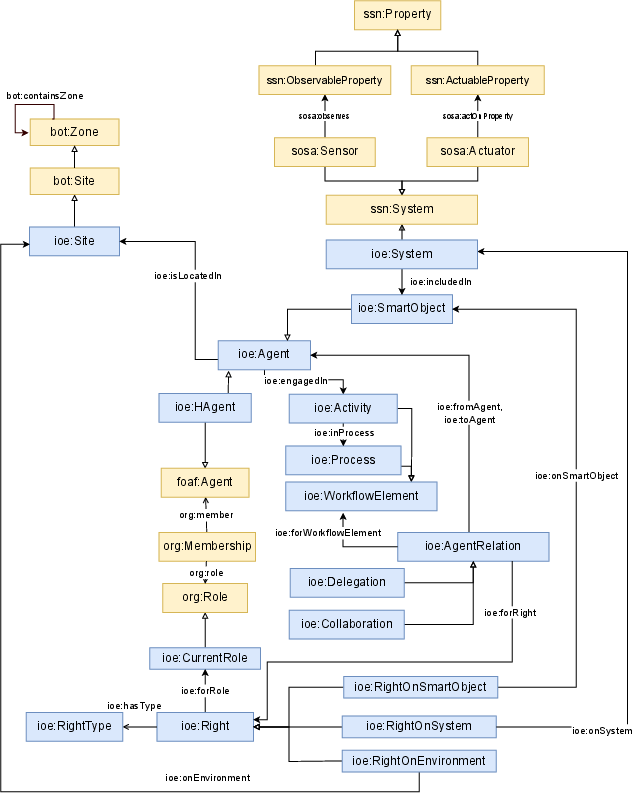

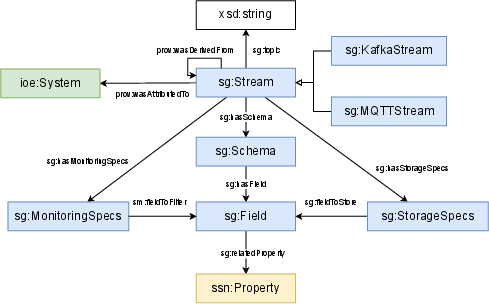

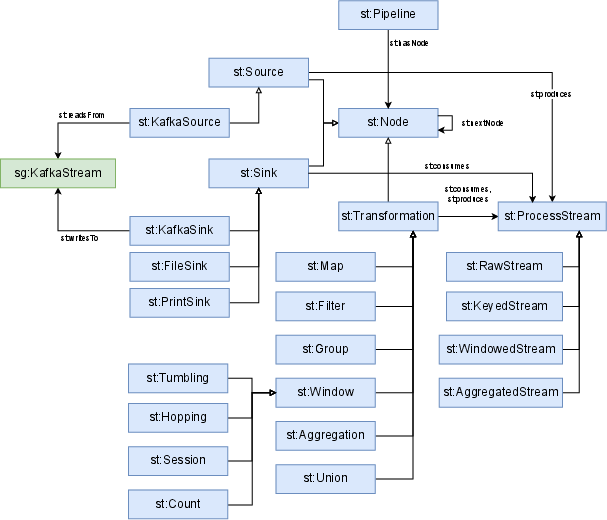

The KG infrastructure is modular and layered. Three interlinked graphs structure semantic metadata: (1) the Domain graph uses the SemIoE ontology, extending SSN, BOT, and ORG for devices, agents, locations, roles, rights, and processes; (2) the Stream gathering graph formalizes operational stream metadata such as protocol, topic, schema, and monitoring/storage specs; (3) the Stream transformation graph models composable pipelines as DAGs, supporting source, transformation, and sink nodes with explicit windowing and data typing.

Figure 1: Semantic infrastructure of the SemIoE ontology module, detailing integration points and external classes.

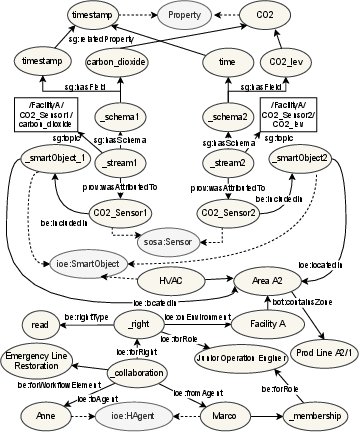

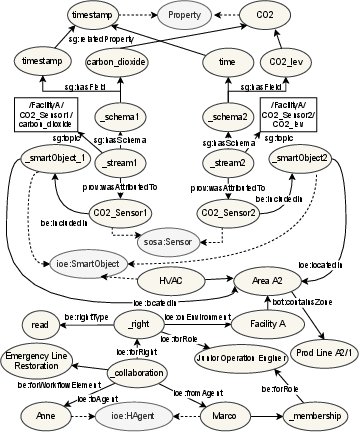

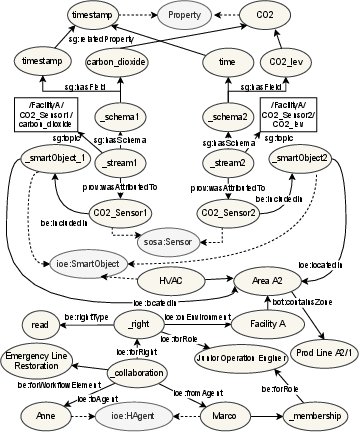

Real-world device and user context, processing logic, and rights relationships are encoded in these graphs, facilitating precise reasoning and discovery.

Figure 2: Domain and stream gathering KG fragment illustrating contextualized sensor, agent, and rights relationships.

Figure 3: Stream gathering ontological module, connecting heterogeneous protocols and schemas to semantic device properties.

Figure 4: Stream transformation module schema, representing pipeline structure and operator chaining within the KG.

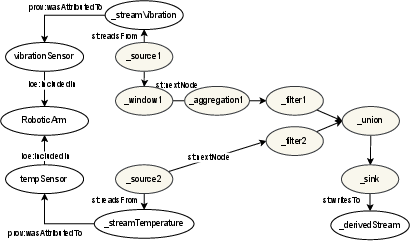

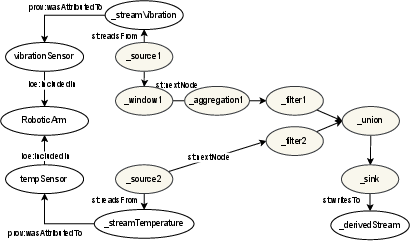

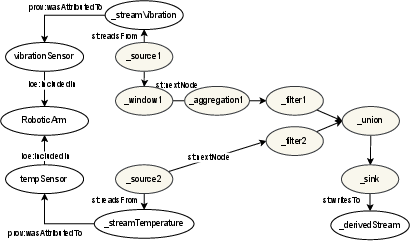

Pipeline and stream instances formalize operational transformation logic; e.g., anomaly detection jobs consuming high-frequency torque and low-frequency thermal data on collaborative robotic arms.

Figure 5: Stream transformation KG fragment illustrating composite pipeline formation and cross-graph instance linkage.
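To illustrate the DAG structure of such a pipeline, the sketch below encodes a hypothetical anomaly detection job as a mapping from each node to the upstream nodes it consumes. The node names are assumptions made for illustration; the ordering and cycle check rely on Python's standard `graphlib` module, not on anything the platform itself is stated to use.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Set

# node -> set of upstream nodes whose output it consumes (hypothetical names)
anomaly_pipeline: Dict[str, Set[str]] = {
    "torque_source": set(),
    "thermal_source": set(),
    "torque_window": {"torque_source"},     # windowing over high-frequency torque
    "thermal_window": {"thermal_source"},   # windowing over low-frequency thermal data
    "anomaly_detector": {"torque_window", "thermal_window"},
    "alert_sink": {"anomaly_detector"},
}


def execution_order(dag: Dict[str, Set[str]]) -> List[str]:
    """Return the nodes so that every operator appears after all of its inputs."""
    return list(TopologicalSorter(dag).static_order())


def is_valid_pipeline(dag: Dict[str, Set[str]]) -> bool:
    """A pipeline is structurally valid here if its operator graph is acyclic."""
    try:
        execution_order(dag)
        return True
    except CycleError:
        return False


order = execution_order(anomaly_pipeline)
```

Any order returned places both window operators before `anomaly_detector` and the detector before `alert_sink`; a graph with a cycle is rejected by `is_valid_pipeline`.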

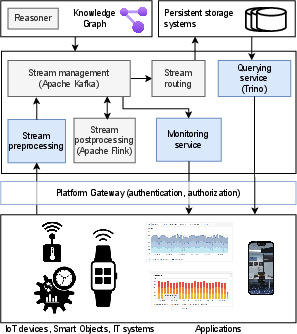

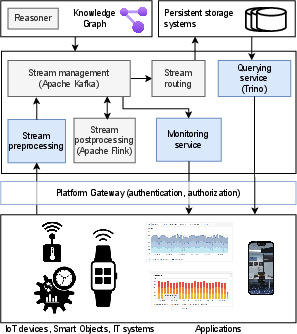

The multi-layered platform comprises microservices for data gathering, transformation, monitoring, querying, authentication, and authorization. Apache Kafka serves as the backbone for real-time stream acquisition and dissemination; Flink runs persistent and on-demand pipelines with event-time semantics.

Figure 6: Platform architecture diagram showing layered integration, microservices, and KG-driven orchestration.

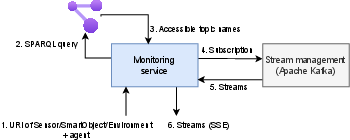

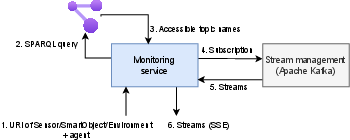

Semantic monitoring abstracts data subscription via conjunctive constraint resolution over the KG, bypassing rigid topic hierarchies. Contextual queries and authorization checks leverage SPARQL and SWRL reasoning to dynamically expose only authorized and context-relevant streams.

Figure 7: Interaction workflow of the monitoring service, illustrating dynamic topic subscription and context-based filtering.
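The idea of resolving a subscription from semantic constraints instead of a fixed topic path can be sketched in a few lines. This is a simplified illustration over an in-memory set of triples, not the platform's SPARQL-based implementation; the predicate names (`observes`, `locatedIn`, `publishesTo`) and topic strings are assumptions.

```python
from typing import List, Set, Tuple

Triple = Tuple[str, str, str]

# Toy KG fragment: (subject, predicate, object). All names are hypothetical.
KG: Set[Triple] = {
    ("sensor:t1", "observes", "Torque"),
    ("sensor:t1", "locatedIn", "cell-1"),
    ("sensor:t1", "publishesTo", "plant/a/arm1/torque"),
    ("sensor:th1", "observes", "Temperature"),
    ("sensor:th1", "locatedIn", "cell-1"),
    ("sensor:th1", "publishesTo", "plant/a/arm1/temperature"),
    ("sensor:t2", "observes", "Torque"),
    ("sensor:t2", "locatedIn", "cell-2"),
    ("sensor:t2", "publishesTo", "plant/a/arm2/torque"),
}


def resolve_topics(kg: Set[Triple], constraints: List[Tuple[str, str]]) -> List[str]:
    """Return the topics of all subjects that satisfy every (predicate, object) constraint."""
    subjects = {s for s, _, _ in kg}
    matching = {
        s for s in subjects
        if all((s, p, o) in kg for p, o in constraints)   # conjunction of constraints
    }
    return sorted(o for s, p, o in kg if p == "publishesTo" and s in matching)


torque_in_cell_1 = resolve_topics(KG, [("observes", "Torque"), ("locatedIn", "cell-1")])
```

The caller states what it wants (torque readings in a given cell) and the KG determines which topics to subscribe to, so renaming or restructuring topics does not affect the subscriber.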

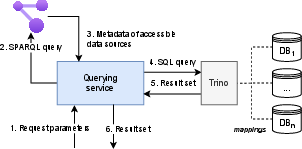

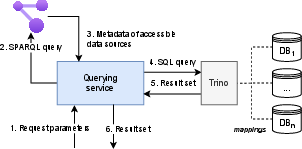

Persistent storage spans relational and NoSQL DBMSs; federated querying uses semantic metadata for unified retrieval, orchestrated via Trino.

Figure 8: Querying service workflow integrating semantic interpretation, multi-source subquery composition, and federated execution.

Reasoning Services and Authorization

Reasoning is divided into static inference (via OWL/SWRL) and run-time query execution (SPARQL). Inferred rights and containment relations provide efficient closure-based accessibility calculations, while runtime queries enforce universal quantification and closed-world constraints critical for RBAC and delegation. This enables:

- Rapid sensor/stream accessibility checks under current roles.

- Pipeline output accessibility validation based on input stream rights.

- Contextual discovery of co-located devices and streams for agents.

Rules and queries cover both static rights and dynamic collaborations, aligning with formal accessibility predicates defined in the model.
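The split between closure-based inference and universally quantified runtime checks can be illustrated as follows. This is a minimal sketch under stated assumptions: rights are granted per location, containment is a plain set of pairs, and the function names are invented for this example rather than taken from the paper's rules or queries.

```python
from typing import Dict, Iterable, Set, Tuple

Edge = Tuple[str, str]


def transitive_closure(edges: Set[Edge]) -> Set[Edge]:
    """Static step: derive all implied containment pairs (a contained in d)."""
    closure = set(edges)
    changed = True
    while changed:
        changed = False
        for a, b in list(closure):
            for c, d in list(closure):
                if b == c and (a, d) not in closure:
                    closure.add((a, d))
                    changed = True
    return closure


# Hypothetical containment facts and rights.
CONTAINED_IN: Set[Edge] = {("arm1", "cell-1"), ("cell-1", "plant-a")}
CLOSURE = transitive_closure(CONTAINED_IN)
STREAM_LOCATION: Dict[str, str] = {"torque": "arm1", "thermal": "arm1", "badge": "office"}
ROLE_RIGHTS: Dict[str, Set[str]] = {"operator": {"cell-1"}}


def stream_accessible(role: str, stream: str) -> bool:
    """A stream is accessible if its location equals, or is contained in, a granted location."""
    location = STREAM_LOCATION[stream]
    return any(
        location == granted or (location, granted) in CLOSURE
        for granted in ROLE_RIGHTS.get(role, set())
    )


def pipeline_output_accessible(role: str, inputs: Iterable[str]) -> bool:
    """Runtime step: universal quantification, every input stream must be accessible."""
    inputs = list(inputs)
    # An empty input list is rejected here to avoid a vacuously true result.
    return bool(inputs) and all(stream_accessible(role, s) for s in inputs)
```

The closure is computed once and reused, while the `all(...)` check is evaluated per request; under a closed-world reading, a stream with no matching right is simply inaccessible.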

Experimental Evaluation

SPARQL query performance across graphs from 8k to 5.1M triples shows sub-10ms latency for frequent monitoring queries, with complex role-based accessibility checks scaling to ~1.4s in the largest scenario. The frequently issued queries therefore fit real-time industrial use, while the most complex accessibility checks take about 1.4s at the largest KG size.

Figure 1: Execution time for representative queries across KG sizes (log scale).

Monitoring and Querying Latency

Monitoring service end-to-end latency remains below 0.5s under all tested KG sizes, with KG query overhead consistently <5% of total latency. Querying service achieves <185ms latency for selection and <85ms for aggregation across datasets up to 36M records, with KG overhead ~10ms.

Figure 2: Monitoring service latency breakdown, showing stable KG query contribution across KG sizes.

Figure 3: Query service latency, including KG and DB query overhead for various query patterns and data volumes.

Stream Processing Latency

Flink pipelines yield sub-ms computational operator latencies (filter/map) even at 1000 msg/s, with total end-to-end platform latency dominated by ingestion and republishing overhead (~10–40 ms). No significant throughput-induced latency escalation is observed, which is consistent with horizontal scalability and suitability for high-frequency, fault-tolerant operation.

Figure 4: Internal Flink pipeline latency decomposition at varied throughput and durations.

Figure 5: End-to-end latency segmented by pipeline stage, demonstrating stable performance across message rates.

Implications and Future Directions

The proposed context-aware KG platform enables composable, scalable, and semantically interoperable stream processing workflows in Industry 5.0, addressing integration, security, and maintainability deficits of legacy approaches. The integration of formal semantics and context-sensitive reasoning supports dynamic authorization, federated querying, and pipeline orchestration, all of which are critical in highly collaborative, dynamic industrial environments.

Practical implications include robust real-time monitoring, flexible pipeline configuration, and auditable access control. Theoretical contributions include universal-accessibility predicates, compositional operator semantics, and context propagation over dynamic agent roles.

Future work will focus on formal pipeline validation, automated semantic relationship extraction to reduce manual configuration, and deeper integration with adaptive ML workflows. Machine learning models will leverage KG metadata for context-sensitive stream optimization and reconfiguration. The extension into natural language querying via LLMs and RAG reflects the trend towards user-centric, explainable systems that interface seamlessly with structured semantic platforms [10.1145/3762669].

Conclusion

This work specifies a scalable, ontology-driven KG platform for context-aware stream processing in IIoT, combining semantic interoperability, real-time reasoning, and dynamic access control. Experimental results confirm efficient, low-latency operation, validating the architecture for practical Industry 5.0 deployments. The framework sets the stage for further development in pipeline validation, automated semantic enrichment, and integrated ML systems, aligning with the demands for adaptability, transparency, and contextual intelligence in next-generation industrial environments.