Fast Spectrogram Event Extraction via Offline Self-Supervised Learning: From Fusion Diagnostics to Bioacoustics

Abstract: Next-generation fusion facilities like ITER face a "data deluge," generating petabytes of multi-diagnostic signals daily that challenge manual analysis. We present a "signals-first" self-supervised framework for the automated extraction of coherent and transient modes from high-noise time-frequency data. We also develop a general-purpose method and tool for extracting coherent, quasi-coherent, and transient modes for fluctuation measurements in tokamaks by employing non-linear optimal techniques in multichannel signal processing with a fast neural network surrogate on fast magnetics, electron cyclotron emission, CO2 interferometers, and beam emission spectroscopy measurements from DIII-D. Results are tested on data from DIII-D, TJ-II, and non-fusion spectrograms. With an inference latency of 0.5 seconds, this framework enables real-time mode identification and large-scale automated database generation for advanced plasma control. The code repository is available at https://github.com/PlasmaControl/TokEye.

Explain it Like I'm 14

What is this paper about?

This paper tackles a big problem in fusion energy research: modern fusion machines (like tokamaks) produce huge amounts of data every day, and it’s hard and slow for humans to find important events in that data. The authors built an AI-based method that automatically finds and highlights meaningful patterns in “spectrograms” (pictures that show how signal energy changes across time and frequency). Their system works fast, across different kinds of sensors, and even transfers to other fields like animal sound analysis.

What questions did the researchers ask?

Put simply, they asked:

- Can we automatically and quickly find important signal events (like steady tones, chirps, and short bursts) in very noisy time–frequency data?

- Can one general method work across many different fusion sensors (magnetics, ECE, CO₂ interferometers, BES) and on other devices (like TJ-II) without hand-tuning?

- Can we do this in a way that doesn’t rely on lots of human labels, but still builds a useful database and runs fast enough for real-time monitoring?

How did they do it?

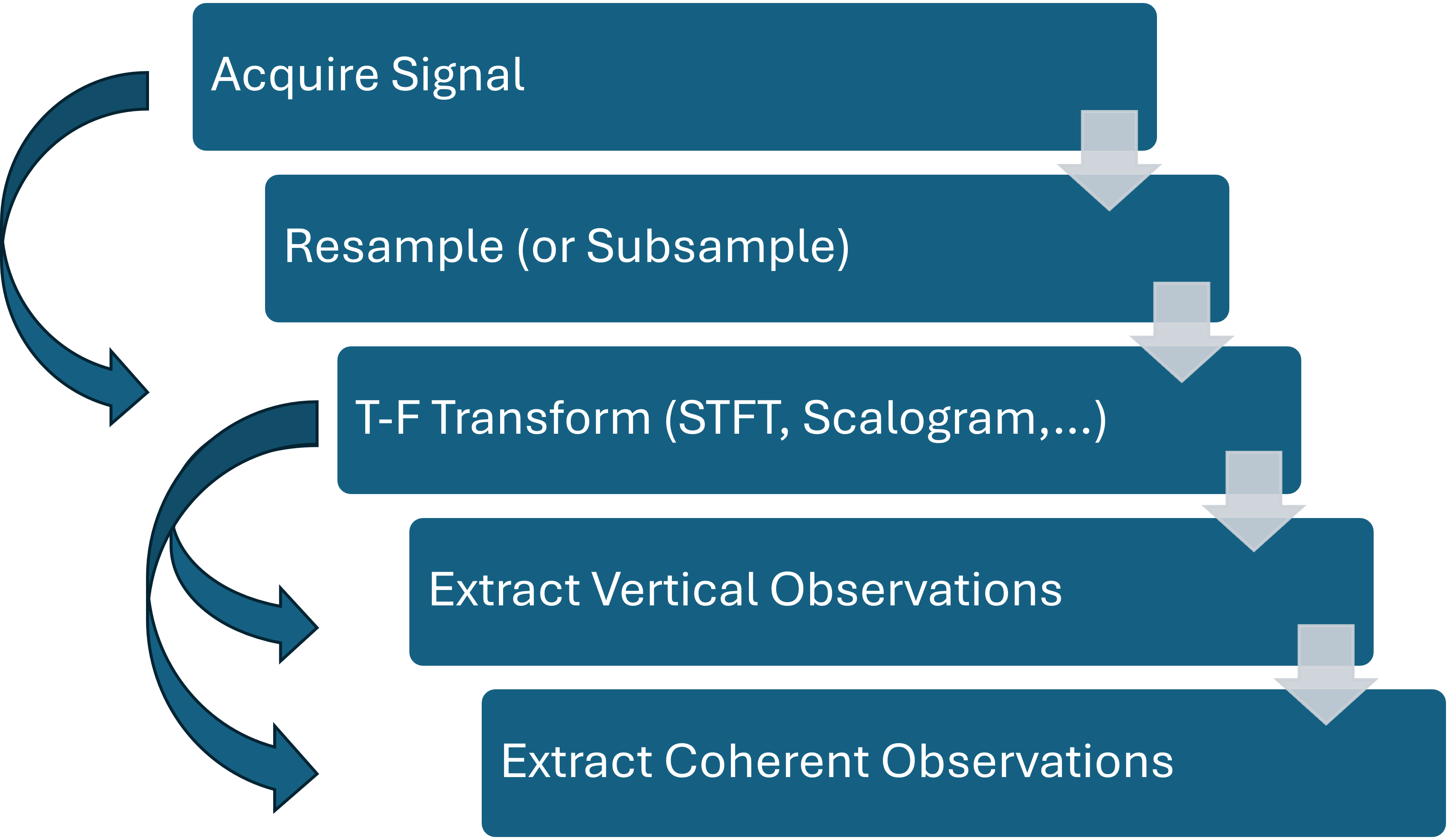

They designed a “signals-first” pipeline: start from the raw signals and systematically separate useful patterns from the background. Here are the main ideas in everyday terms.

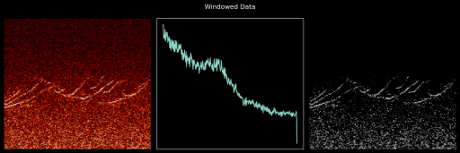

Turning signals into pictures (spectrograms)

- A spectrogram is like a piano-roll view of sound: it shows which “notes” (frequencies) are active at each moment.

- They used a standard tool called the Short-Time Fourier Transform (STFT). Think of sliding a short window over the signal and, at each step, listing which frequencies are present.

- This creates a time–frequency image where bright spots or lines can indicate interesting events.
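The STFT step above can be sketched with SciPy. This is a minimal illustration, not the authors' code: the window length (1024), the 87.5% overlap, and the 500 kHz sample rate are the settings this summary attributes to the paper, while the function name `spectrogram_db`, the 80 kHz test tone, and the noise level are assumptions made for the demo.

```python
import numpy as np
from scipy import signal


def spectrogram_db(x, fs, nperseg=1024, overlap=0.875):
    """Return frequencies, frame times, and STFT magnitude in dB (Hann window)."""
    noverlap = int(nperseg * overlap)  # 896 samples of overlap for 87.5%
    f, t, Z = signal.stft(x, fs=fs, window="hann",
                          nperseg=nperseg, noverlap=noverlap)
    magnitude_db = 20.0 * np.log10(np.abs(Z) + 1e-12)  # small offset avoids log(0)
    return f, t, magnitude_db


# Synthetic test signal (hypothetical): an 80 kHz tone buried in white noise.
fs = 500_000
time = np.arange(0, 0.05, 1 / fs)
rng = np.random.default_rng(0)
x = np.sin(2 * np.pi * 80_000 * time) + rng.normal(scale=1.0, size=time.size)

f, frames, S = spectrogram_db(x, fs)
# Averaging over time makes the steady tone stand out as the brightest row.
peak_hz = f[np.argmax(S.mean(axis=1))]
```

Each column of `S` is one "moment" of the piano roll and each row is one frequency bin, so a steady tone appears as a horizontal line.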

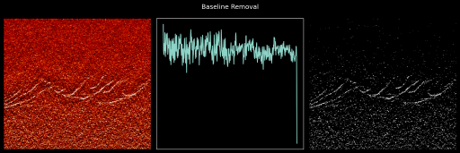

Separating the background “hum” (baseline removal)

- Many fusion signals have a strong low-frequency “slope” or rumbling background (like wind noise in a microphone).

- The authors estimate this smooth background (“baseline”) and subtract it, which “whitens” the spectrogram. That makes faint, narrow patterns stand out more clearly without throwing away important low-frequency details.

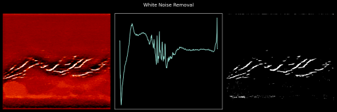

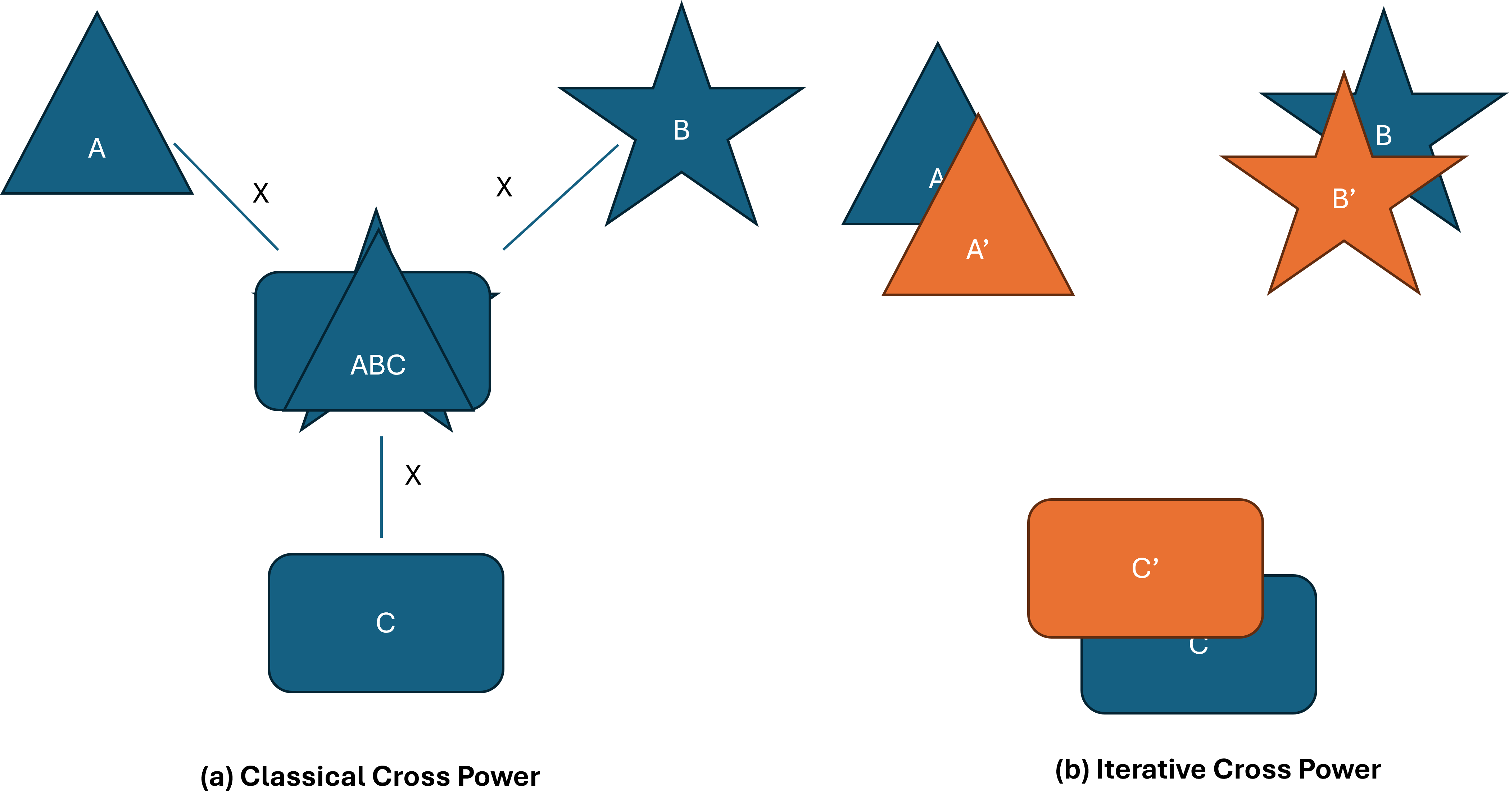

Using many sensors together (multichannel denoising)

- Fusion machines use many sensors at once—like having many microphones around a stage.

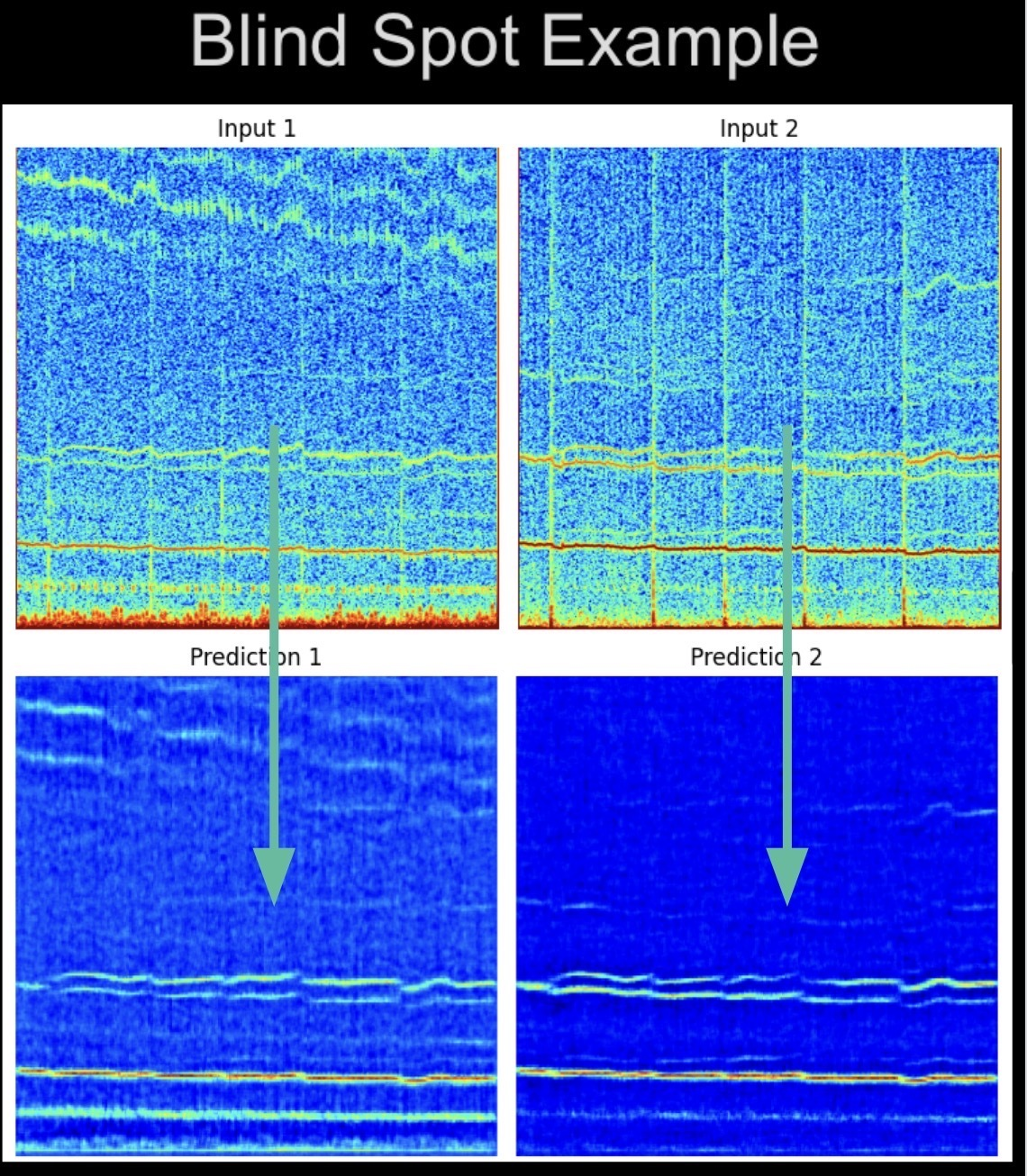

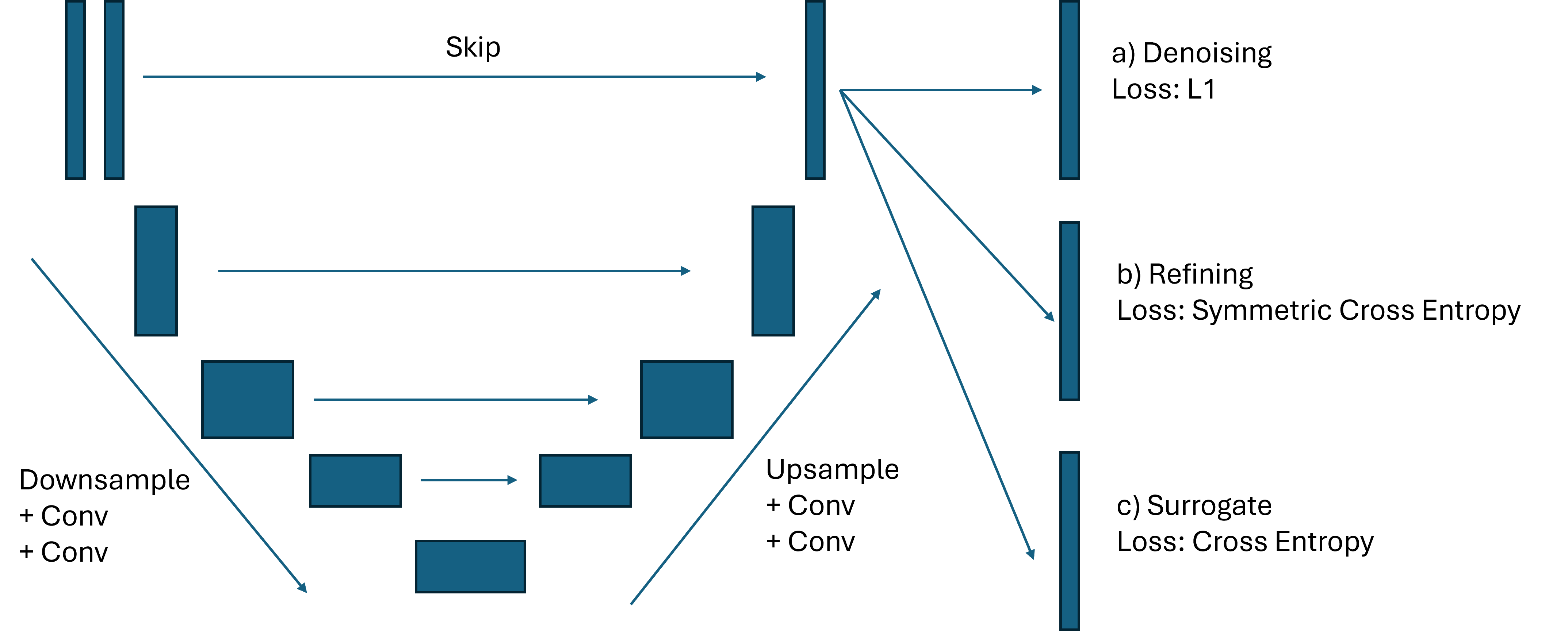

- Instead of simply averaging (which can erase rare events), they train a neural network (a U-Net) that learns how signals relate across channels. It uses information from all the other channels to predict and clean up each target channel.

- This self-supervised approach works without clean labels: it learns to keep consistent, shared patterns and suppress random noise.
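The principle behind predicting each channel from the others can be shown without a neural network. In the sketch below a plain linear least-squares fit stands in for the paper's U-Net, and the data are synthetic real-valued signals rather than STFT coefficients, so it illustrates only the statistical idea: noise that is independent across channels cannot be predicted from the other channels, so only the shared component survives. All names here are hypothetical.

```python
import numpy as np


def leave_one_out_denoise(X):
    """Predict every channel from all the other channels.

    X has shape (channels, samples). A linear least-squares predictor is
    used here in place of the U-Net described in the paper.
    """
    n_channels, _ = X.shape
    out = np.empty_like(X)
    for c in range(n_channels):
        others = np.delete(X, c, axis=0).T              # (samples, channels - 1)
        coef, *_ = np.linalg.lstsq(others, X[c], rcond=None)
        out[c] = others @ coef                          # target's own noise is never seen as input
    return out


# Eight sensors observing the same oscillation with independent unit-variance noise.
rng = np.random.default_rng(0)
n = 4000
shared = np.sin(2 * np.pi * 0.01 * np.arange(n))
X = np.stack([shared + rng.normal(scale=1.0, size=n) for _ in range(8)])

denoised = leave_one_out_denoise(X)
err_before = np.mean((X[0] - shared) ** 2)
err_after = np.mean((denoised[0] - shared) ** 2)
```

The noisy target is the only training signal, yet `err_after` comes out well below `err_before`, which is the sense in which the approach needs no clean labels.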

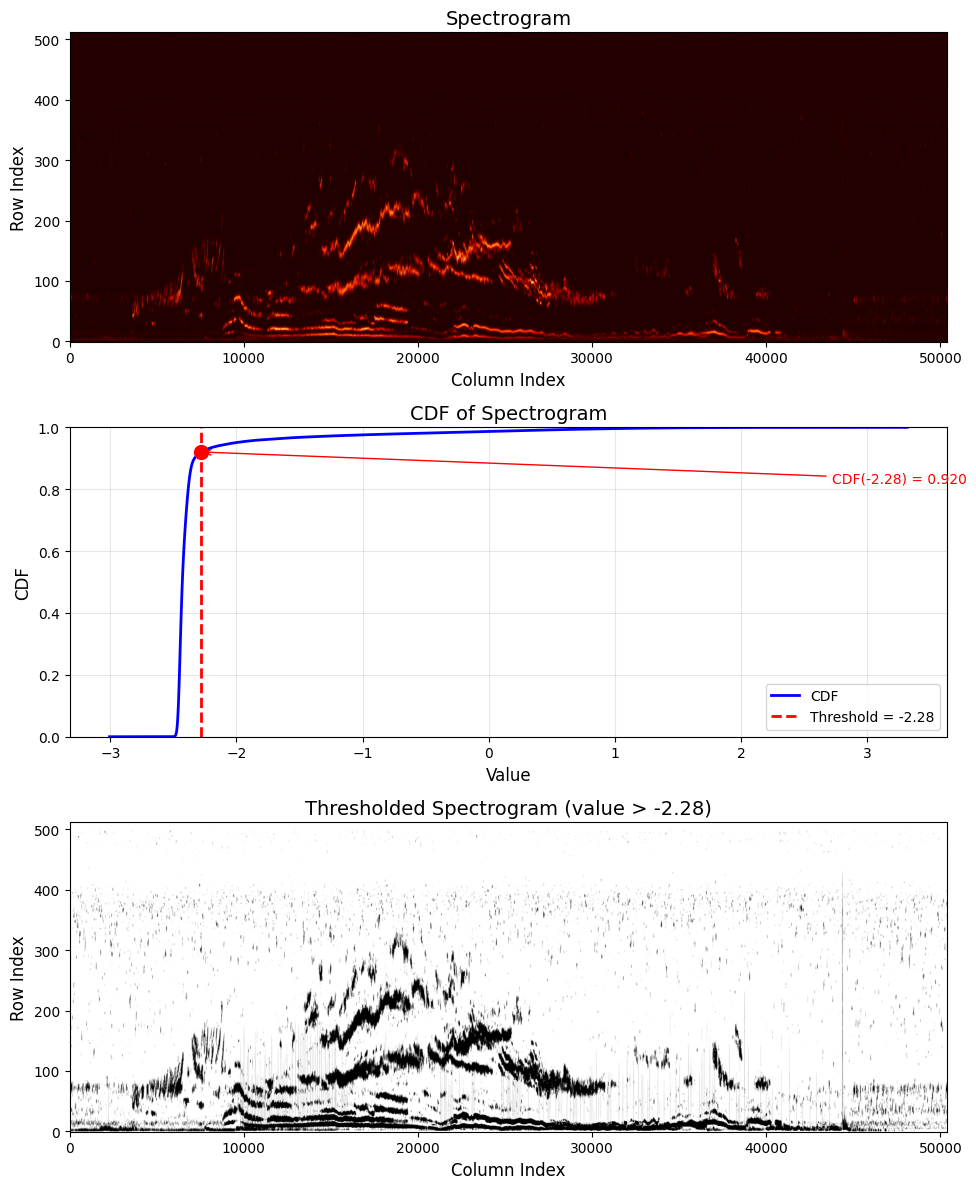

Picking out the events (smart thresholding)

- After cleaning, they need to decide which pixels in the spectrogram belong to real events.

- Rather than using a fixed cutoff, they look at the overall intensity distribution and find the “knee” point (the place where the curve bends). It’s like choosing a sensible boundary between background and meaningful signal automatically.

- This selects bright, sparse events (steady tones and short bursts) while avoiding over-selection.
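One common way to locate a knee is to take the point on the curve farthest from the straight line joining its endpoints. The sketch below applies that rule to the empirical CDF of pixel intensities. The paper's exact knee definition is not given in this summary, so this is an assumed variant, and the function name and synthetic pixel mixture are hypothetical.

```python
import numpy as np


def knee_threshold(values, n_grid=512):
    """Intensity at the knee of the empirical CDF (max distance from the chord)."""
    v = np.sort(np.asarray(values, dtype=float).ravel())
    grid = np.linspace(v[0], v[-1], n_grid)
    cdf = np.searchsorted(v, grid, side="right") / v.size
    x = (grid - grid[0]) / (grid[-1] - grid[0])   # intensity axis rescaled to [0, 1]
    # The chord runs (approximately) from (0, 0) to (1, 1), so the distance
    # from it is proportional to cdf - x.
    return grid[np.argmax(cdf - x)]


# Synthetic "denoised spectrogram": 98% background pixels, 2% bright event pixels.
rng = np.random.default_rng(0)
background = rng.normal(0.0, 1.0, size=98_000)
events = rng.normal(8.0, 1.0, size=2_000)
pixels = np.concatenate([background, events])

thr = knee_threshold(pixels)
event_recall = np.mean(events > thr)        # fraction of event pixels kept
background_kept = np.mean(background > thr)  # fraction of background pixels kept
```

No cutoff is supplied by hand: the threshold follows from the shape of the distribution, which is why the method fails when a spectrogram contains no events at all.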

Training a fast helper model (surrogate)

- They use the cleaned and auto-labeled data to train a final “surrogate” U-Net that can quickly spot events on new spectrograms.

- This model then runs in about half a second per shot on a GPU, enabling near real-time detection and building large databases automatically.

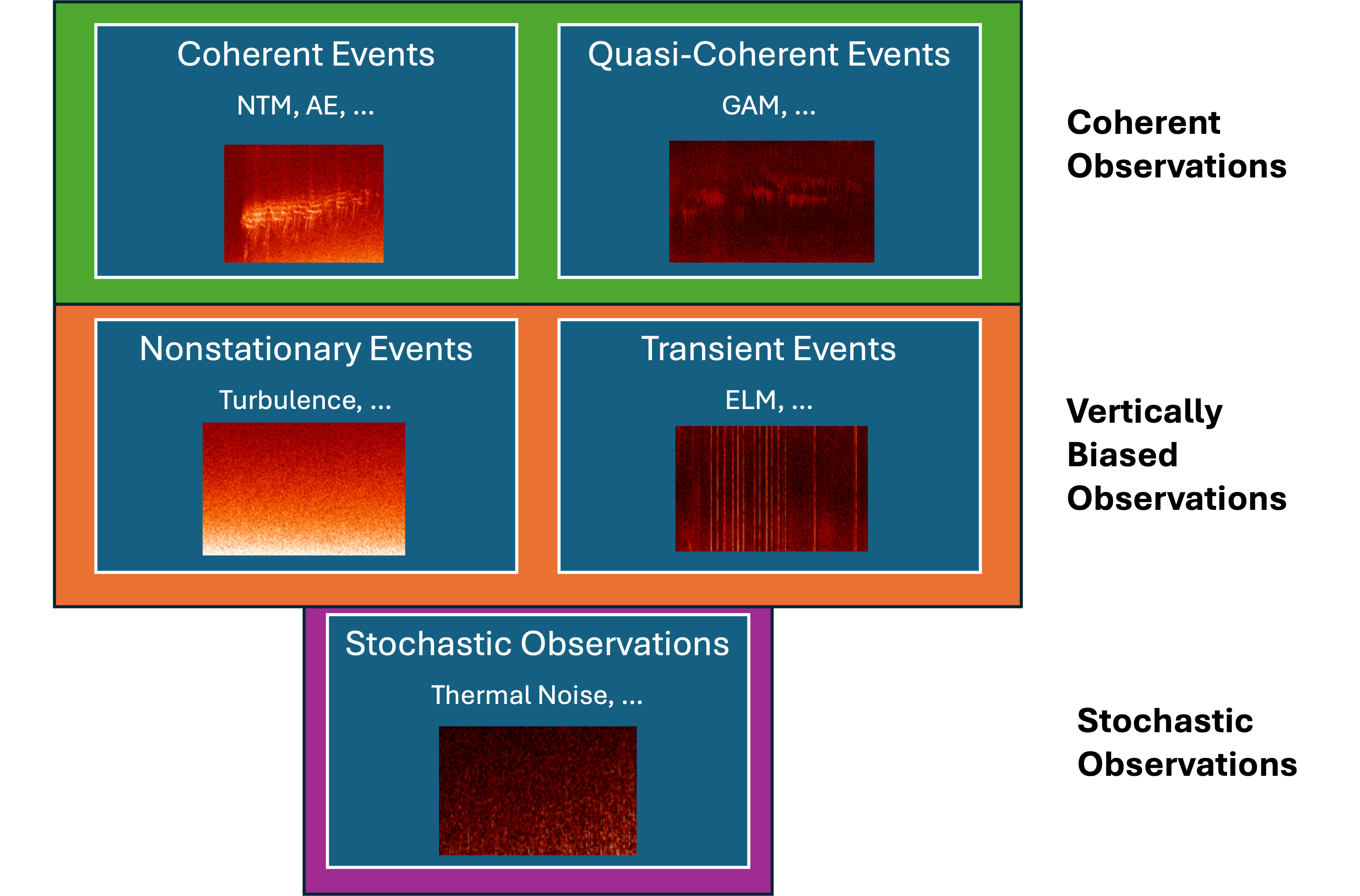

Before doing all this, they also define a simple taxonomy (categories) of what they’re looking for:

- Coherent (steady or slowly changing tones)

- Quasi-coherent (mostly steady but a bit fuzzy)

- Transient (short spikes, like claps)

- Broad (wide-band background or drifts, like a steady rumble)

- Stochastic (random noise)

What did they find?

Here are the main results, explained in plain language:

- The pipeline can reliably separate faint, steady patterns (like Alfvén modes) from heavy background and noise across multiple sensor types (magnetics, ECE, CO₂ interferometers, BES) on the DIII-D tokamak.

- It uncovers interesting physics automatically—for example, changes in high-frequency activity during tearing mode suppression (an important control topic in fusion).

- The surrogate model generalizes well:

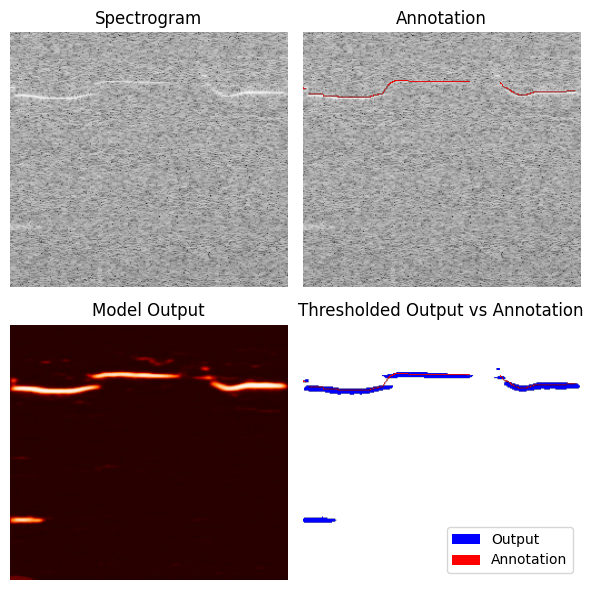

- On the TJ-II stellarator’s data, it matched expert labels strongly without retraining.

- On marine bioacoustics (dolphin calls), it detected vocalizations in a zero-shot setting (no retraining), showing the method works beyond fusion.

- It’s fast: around 0.5 seconds per shot on a GPU, which is suitable for real-time or inter-shot analysis. A CPU run is slower (about 5–10 seconds), but still practical.

- It created a large, auto-labeled database (tens of thousands of spectrogram snippets), which helps future AI training and science studies.

Why is this important?

- Fusion devices are moving toward “burning plasma” conditions and will produce enormous data (petabytes per day in future facilities). Manually scanning that data isn’t feasible.

- Automatic, reliable detection of events helps scientists understand and control the plasma more quickly, potentially preventing damaging disruptions.

- A single general method reduces the need to handcraft different tools for different sensors, saving time and reducing mistakes.

- The approach builds high-quality training data without heavy human labeling, which is crucial for modern AI systems.

- Because it generalizes to other machines and even other fields (like bioacoustics), it can accelerate scientific discovery across domains.

Takeaway

The authors created a practical, fast, and general AI pipeline that turns messy, noisy spectrograms into clear maps of important events—without relying on lots of human labels. It works across different fusion diagnostics, transfers to other devices and domains, and runs quickly enough to help with real-time plasma monitoring and control. This kind of tool can make a big difference as fusion research moves into data-heavy, high-performance operation.

Knowledge Gaps

Below is a consolidated list of the paper’s unresolved knowledge gaps, limitations, and open questions, framed to be actionable for future research.

- Formal validation of the proposed signal taxonomy: No quantitative or expert-reviewed assessment of the five classes (coherent, quasi-coherent, transient, broad, stochastic) across devices, diagnostics, and operating regimes; need inter-rater agreement studies and confusion analyses between “broad” and deterministic non-periodic phenomena.

- Separation of broad vs transient events: The paper suggests impulse-based heuristics but does not implement or evaluate a robust transient detector (e.g., ELM/sawtooth/pellet), nor quantify false separations of broad drifts vs turbulence.

- Baseline removal parameters and generality: Fixed asymmetric least-squares settings (p=0.001, λ=10⁶, pre-emphasis α=1) lack adaptive selection, calibration, or sensitivity analyses across sensors and shots; edge effects (up to 4 kHz) are noted but not systematically mitigated or quantified.

- Objective evaluation of “whitening”: The claim that baseline removal whitens the residual is not validated with spectral whiteness tests (e.g., Ljung–Box, autocorrelation decay) or color assessment across frequencies and diagnostics.

- STFT design choices: The selection of window size (N=1024), overlap (87.5%), and resampling to 500 kHz is not justified via ablations; effects on time-frequency resolution, aliasing (especially for CO2 signals originally at 1 MHz), and sensitivity to parameter changes remain unquantified.

- Alternative T-F representations: The paper avoids wavelets/multitaper/synchrosqueezing for interpretability but does not benchmark them empirically against the proposed pipeline to quantify trade-offs (e.g., faint mode retention vs baseline suppression).

- Complex-valued denoising fidelity: Operating on real/imag components lacks constraints to enforce STFT phase consistency; no evaluation of phase errors, spectral leakage, or artifact formation; open question whether complex losses (e.g., magnitude–phase) improve reconstruction.

- Assumption of zero-mean, independent noise: The self-supervised denoising relies on noise independence across channels, which may be violated (electronics, environment); needs measurements of inter-channel noise correlation and robustness to colored residuals post-baseline removal.

- Single-channel diagnostics: The multichannel predictor cannot recover information unique to a single channel; performance on single-channel sensors (or sparsely instrumented systems) is underexplored, and no alternative denoisers are tested.

- TV-based early stopping: Total-variation criterion is proposed to avoid learning noise, but no thresholds, stopping rules, or correlation with noise content are reported; open whether TV is both necessary and sufficient across diverse noise types.

- CDF knee-point thresholding stability: While parameter-free, behavior in pure-noise spectra (no meaningful modes), multi-modal histograms, and time-varying noise floors is not quantified; need robust fallback thresholds and local adaptivity tests.

- Post-threshold morphological processing: The pipeline does not evaluate whether skeletonization, contour smoothing, or connectivity constraints improve precision without compromising recall, especially for narrow-band AE chirps and dolphin calls.

- Detection refinement details: “High-entropy measurements” inclusion is not formalized; criteria, thresholds, and impact on label quality (false positives/negatives) are missing; risk of confirmation bias in self-generated masks remains unaddressed.

- Label noise and dataset quality: The 40,000-fragment database has no human labels, only masks generated by the self-supervised pipeline; there is no systematic QA/QC protocol, human-in-the-loop validation, or error auditing to estimate label noise and its downstream effect on surrogate training.

- Surrogate evaluation metrics: Performance is reported primarily via recall on TJ-II and DCLDE; precision, IoU, F1, and calibration curves (e.g., probability-threshold sweeps) are missing; device-specific differences and thresholds (50%) are not stress-tested.

- BES results absent: Although BES is listed, no figures or quantitative metrics are reported; the pipeline’s robustness to BES-specific artifacts and lower SNR regimes remains untested.

- Physics validation and interpretability: Mode-number (n,m) inference, radial localization, and cross-diagnostic coherence checks are not demonstrated; need end-to-end validation linking segmentations to known physics events (ELMs, NTMs, AEs) across many shots.

- Integration with real-time control: Claims of suitability for advanced plasma control lack a demonstration of closed-loop integration (latency budgets, streaming architecture, trigger reliability, false-alarm rates) and risk analyses for control actions.

- Cross-diagnostic fusion: The current approach reconstructs one view at a time; joint multi-diagnostic fusion, alignment across sampling rates, time synchronization, and conflict resolution (inconsistent detections) remain an open design and evaluation space.

- Mode classification vs binary segmentation: The surrogate produces binary masks of “coherent/transient” regions; a classifier to map detections into specific physics classes (e.g., AE, NTM, GAM, ELM, sawtooth) is absent and needed for science and control use.

- Robustness to device/domain shifts: Generalization beyond DIII-D and TJ-II (e.g., JET, KSTAR, EAST, NSTX-U, ITER-like diagnostics) is untested; domain adaptation strategies and failure modes under changed noise characteristics and sampling are unknown.

- Quantitative SNR improvements: No rigorous SNR/PSNR/SSIM or physics-specific metrics show how much denoising and baseline removal improve detectability of faint modes; sensitivity curves (minimum detectable amplitude/chirp rate) are missing.

- Threshold sensitivity and uncertainty: No uncertainty quantification (e.g., predictive confidence maps, calibrated probabilities) accompanies detections; threshold selection procedures and their stability across diagnostics are not documented.

- CPU deployment constraints: Inference on CPUs (5–10 s/shot) is slow for variable-size inputs; quantization/pruning is proposed but untested; memory footprint, throughput scaling, and energy costs for plant deployment remain open.

- Scalability with channel count: The multichannel U-Net's memory scales with its 2k input channels (presumably the real and imaginary parts of each of k sensor channels); no profiling for tens/hundreds of channels (e.g., MHR arrays); need architectures that scale (blind-spot, attention, low-rank fusion) with demonstrated speed/memory trade-offs.

- Choice of loss function: MAE on real/imag may not be optimal; investigation of complex-domain losses, magnitude–phase decompositions, or perceptual T-F losses tailored to mode detection is missing.

- Pre-emphasis effects: The α=1 pre-emphasis may bias high-frequency regions; its impact on faint low-frequency NTMs and near-DC coherent modes is not studied; adaptive pre-emphasis or frequency-dependent regularization could be evaluated.

- Baseline vs HPS vs morphological filtering: The paper motivates baseline removal via spectroscopy analogs but does not benchmark against harmonic–percussive separation or morphological filters on T-F planes; comparative studies are needed.

- Data and code reproducibility: The repository link is provided, but details on datasets (availability, licenses), exact preprocessing pipelines, seeds, and environment (versions) required for deterministic reproduction are not documented.

- Annotation mismatch in DCLDE: Precision is low due to annotation width differences; boundary-aware metrics (e.g., Hausdorff distance, boundary IoU) or label dilation studies are not conducted to fairly evaluate segmentation performance.

- Handling coherent non-physical artifacts: Antenna scans and instrument-induced coherent signatures are acknowledged but not explicitly detected/filtered; a method to distinguish physical modes from coherent sensor artifacts is needed.

- Streaming and online operation: The pipeline is offline; a streaming variant (sliding STFT windows, continuous baseline tracking, online thresholds) with bounded latency and drift correction is not developed or benchmarked.

- Parameter auto-tuning: The pipeline uses several fixed parameters (STFT, ALS, TV thresholds, augmentation configs); automated per-shot/dataset tuning (e.g., via Bayesian optimization) and its stability across diagnostics are unstudied.

- Data normalization/calibration: Diagnostic-specific normalization (cumulative mean/std, clipping at 0.1/99.9 percentile) may hinder cross-diagnostic amplitude comparability; mapping to physical units and cross-shot calibration procedures are not provided.

- Evaluation on edge frequency bins: The approach acknowledges low-frequency edge effects but does not quantify detection reliability near DC or at the highest frequency bins; needs boundary-aware analyses and mitigation strategies.
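Several of the gaps above concern how segmentation masks are scored (missing precision, IoU, and F1, and the annotation-width mismatch on DCLDE). The sketch below shows one way such metrics could be computed, with an optional pixel tolerance implemented by dilating the masks. It is an illustration of the suggested evaluation, not something the paper reports; the function name and the toy masks are hypothetical.

```python
import numpy as np
from scipy import ndimage


def mask_metrics(pred, truth, tolerance=0):
    """Precision, recall, F1, and IoU for binary masks.

    With tolerance > 0, a predicted pixel counts as correct if it lies within
    `tolerance` pixels of a labeled pixel (and vice versa for recall).
    IoU is always computed strictly.
    """
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    if tolerance > 0:
        struct = ndimage.generate_binary_structure(pred.ndim, pred.ndim)
        truth_near = ndimage.binary_dilation(truth, structure=struct, iterations=tolerance)
        pred_near = ndimage.binary_dilation(pred, structure=struct, iterations=tolerance)
    else:
        truth_near, pred_near = truth, pred
    precision = (pred & truth_near).sum() / max(pred.sum(), 1)
    recall = (truth & pred_near).sum() / max(truth.sum(), 1)
    f1 = 2 * precision * recall / max(precision + recall, 1e-12)
    iou = (pred & truth).sum() / max((pred | truth).sum(), 1)
    return {"precision": float(precision), "recall": float(recall),
            "f1": float(f1), "iou": float(iou)}


# A one-pixel-wide annotation versus a three-pixel-wide prediction of the same call.
truth = np.zeros((32, 32), dtype=bool)
truth[10, 5:25] = True
pred = np.zeros((32, 32), dtype=bool)
pred[9:12, 5:25] = True

strict = mask_metrics(pred, truth)
tolerant = mask_metrics(pred, truth, tolerance=1)
```

In this toy case the strict precision is 1/3 purely because the annotation is thinner than the prediction, while a one-pixel tolerance raises it to 1.0.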

Practical Applications

Immediate Applications

Below are deployable use cases that can be adopted now using the paper’s released codebase (TokEye) and described methods, with sector links, potential tools/workflows, and key dependencies.

- Automated inter-shot mode cataloging in fusion experiments

  - Sectors: Energy (fusion), Software/AI

  - Tools/Workflows: Batch processing of STFT spectrograms from ECE, CO2 interferometers, magnetics, BES; event masks stored in a searchable database; amplitude/chirp summaries auto-generated per shot

  - Assumptions/Dependencies: Access to raw multi-diagnostic data; STFT preprocessing compatible with local pipelines; compute availability (GPU for ~0.5 s/shot or CPU for ~5–10 s/shot)

- Real-time operator dashboards with mode overlays

  - Sectors: Energy (fusion), HMI/Visualization

  - Tools/Workflows: Live spectrograms with coherent/transient segmentation overlays; alarm thresholds for AE/ELM-like detections; integration with control room UIs

  - Assumptions/Dependencies: Low-latency data stream; GPU in the loop for sub-second inference; stable STFT parameters; signal contains detectable features (knee-threshold requires signal presence)

- Cross-diagnostic event alignment and quick-look physics analysis

  - Sectors: Energy (fusion), Research/Academia

  - Tools/Workflows: Synchronized event masks across ECE/CO2/MHD/BES channels; rapid identification of onset times, chirps, and amplitude envelopes; export to MDSplus-compatible tags

  - Assumptions/Dependencies: Time synchronization across diagnostics; sufficient channel count for multichannel denoising (best performance with ≥2 correlated channels)

- Rapid auto-label generation to train/benchmark ML for fusion control

  - Sectors: Energy (fusion), Software/AI

  - Tools/Workflows: Surrogate event segmentations used as labels for disruption predictors, NTM/AE classifiers, and anomaly detectors; dataset curation pipelines via FAITH + TokEye

  - Assumptions/Dependencies: Agreement on label schemas within a group; acceptance of self-supervised labels for bootstrapping (with optional human QA)

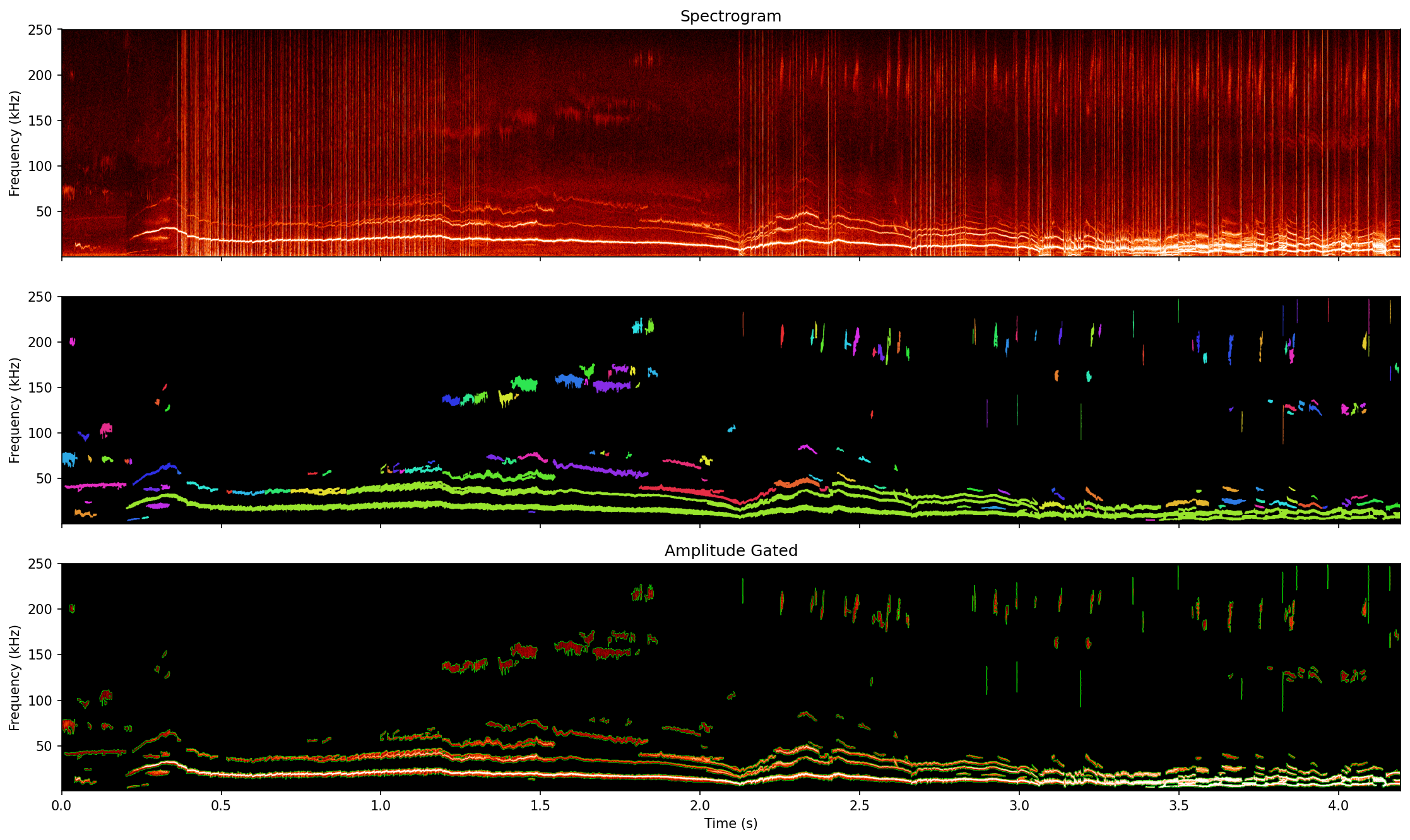

- Post-shot mode amplitude gating and chirp tracking

  - Sectors: Energy (fusion)

  - Tools/Workflows: Thresholded event masks gating amplitude and frequency bands; per-event statistics (fmin/fmax, duration, amplitude); CSV/JSON exports for analysis notebooks

  - Assumptions/Dependencies: Stable thresholding; correct handling of low-frequency baseline bias (pre-emphasis and baseline removal tuned per diagnostic)

- Diagnostic commissioning and health monitoring

  - Sectors: Energy (fusion), Test & Measurement

  - Tools/Workflows: Baseline removal to quantify noise floors, 1/f drifts, and edge artifacts; trend monitoring of sensor health across campaigns

  - Assumptions/Dependencies: Regular calibration data; consistent acquisition settings to track drifts

- Zero-shot bioacoustic call triage (marine mammal surveys)

  - Sectors: Environment, Bioacoustics, Industry (offshore energy, shipping)

  - Tools/Workflows: Batch segmentation of dolphin calls in passive acoustic datasets; pre-screening to reduce human annotation burden; triage for shrimp noise clutter

  - Assumptions/Dependencies: Spectrograms computed with similar parameter ranges; acceptance that precision may be lower without fine-tuning; post-processing to match narrow annotation conventions

- General-purpose spectrogram preprocessing library for labs

  - Sectors: Academia, Software/AI

  - Tools/Workflows: Drop-in Python modules for baseline removal (asymmetric least squares + TV), multichannel self-supervised denoising (Noise2Noise-inspired), knee-CDF thresholding

  - Assumptions/Dependencies: Access to STFTs or compatible T–F transforms; zero-mean independent noise for best denoising performance

- Pilot predictive maintenance on vibration/acoustic spectrograms

  - Sectors: Manufacturing, Robotics

  - Tools/Workflows: Apply baseline separation + denoising to motor/gearbox audio or accelerometer spectrograms; flag quasi-coherent harmonics and transients as early warnings

  - Assumptions/Dependencies: Minimal fine-tuning likely needed; domain validation against labeled fault data; multichannel sensors improve robustness

- Seismology and geophysics quick screening of tremor bursts

  - Sectors: Earth Sciences

  - Tools/Workflows: Rapid segmentation of tremor-like time–frequency patches in regional networks; triage for analyst review

  - Assumptions/Dependencies: Parameter tuning for domain-specific frequency bands; validation on representative datasets

- Teaching modules for time–frequency event extraction

  - Sectors: Education, Academia

  - Tools/Workflows: Open-source examples using TokEye/FAITH; lab assignments on baseline removal, denoising, and segmentation; reproducible pipelines

  - Assumptions/Dependencies: Basic Python environment; spectrogram-ready datasets for classroom use
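As an illustration of the per-event statistics mentioned under post-shot analysis above (fmin/fmax, duration, JSON export), the sketch below turns a binary event mask into a small table using connected-component labeling. The function name `event_table`, the field names, and the toy mask are hypothetical and not taken from the TokEye codebase.

```python
import json

import numpy as np
from scipy import ndimage


def event_table(mask, freqs, times):
    """List one record per connected region of a (frequency, time) mask."""
    labels, _n_events = ndimage.label(mask)           # 4-connected regions by default
    rows = []
    for f_sl, t_sl in ndimage.find_objects(labels):   # bounding box of each region
        rows.append({
            "fmin": float(freqs[f_sl.start]),
            "fmax": float(freqs[f_sl.stop - 1]),
            "t_start": float(times[t_sl.start]),
            # Duration measured between the first and last frame centers.
            "duration": float(times[t_sl.stop - 1] - times[t_sl.start]),
        })
    return rows


# Toy mask with two events: rows are frequency bins, columns are time frames.
mask = np.zeros((6, 10), dtype=bool)
mask[1:3, 0:4] = True
mask[4, 6:9] = True
freqs_khz = np.arange(6) * 10.0
times_ms = np.arange(10) * 1.0

events = event_table(mask, freqs_khz, times_ms)
as_json = json.dumps(events, indent=2)  # ready to write to a file or database
```

An amplitude column could be added in the same loop by indexing the spectrogram with each bounding box and mask.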

Long-Term Applications

These applications will benefit from further research, scaling, integration, or validation before widespread deployment.

- Closed-loop plasma control (in-shot) for mode suppression/avoidance

  - Sectors: Energy (fusion), Control Systems

  - Tools/Workflows: Integration with PCS for ECCD targeting (NTM), fast AE/ELM triggers; quantized/pruned models on real-time controllers; sub-100 ms end-to-end latency

  - Assumptions/Dependencies: Deterministic latency guarantees; hardware acceleration on PCS; rigorous safety and reliability testing

- ITER/SPARC-scale automated labeling and data governance

  - Sectors: Energy (fusion), Data Infrastructure, Policy

  - Tools/Workflows: Petabyte/day pipelines to auto-label spectrograms; standardized event schemas; metadata catalogs and FAIR-compliant archives

  - Assumptions/Dependencies: HPC resources; consensus on annotation standards; data access and retention policies across facilities

- Joint cross-diagnostic inference (multi-view fusion)

  - Sectors: Energy (fusion), Software/AI

  - Tools/Workflows: Models that learn inter-sensor correlations (phase, turbulence, multi-physics) in one pass; improved sensitivity to faint modes

  - Assumptions/Dependencies: Synchronized multi-sensor datasets; architectural advances to avoid memory/compute blowup with many channels

- Explainable mode discovery and cross-device meta-analysis

  - Sectors: Academia, Energy (fusion)

  - Tools/Workflows: Large, curated event catalogs enabling statistical discovery of new mode families and operating envelopes; explainability overlays (e.g., saliency on T–F)

  - Assumptions/Dependencies: Harmonized metadata across devices; reproducible pipelines; community tools for cross-lab analysis

- Clinical EEG/ECG event detection and triage

  - Sectors: Healthcare

  - Tools/Workflows: Self-supervised denoising + segmentation for seizure/spike detection or arrhythmia bursts in T–F space; clinician triage dashboards

  - Assumptions/Dependencies: Extensive clinical validation; regulatory approvals; strong privacy/security safeguards; domain-specific thresholds

- Edge bioacoustic mitigation for marine operations

  - Sectors: Environment, Maritime, Offshore Energy

  - Tools/Workflows: On-buoy/on-vessel embedded inference for marine mammal detection; real-time alerts for ship-strike avoidance or survey shut-downs

  - Assumptions/Dependencies: Efficient, low-power hardware; model compression; robust performance under variable ambient noise; acceptance by regulators and other stakeholders

- Embedded anomaly detection in robotics/manufacturing assets

  - Sectors: Robotics, Manufacturing, Industrial IoT

  - Tools/Workflows: PLC/edge-device deployment for continuous vibration/acoustic monitoring; predictive maintenance scheduling

  - Assumptions/Dependencies: Integration with existing PLCs/SCADA; domain adaptation; labeled failure data for benchmarking

- Defense/sonar/radar signal extraction in cluttered environments

  - Sectors: Defense, Aerospace

  - Tools/Workflows: Self-supervised multichannel denoising and event segmentation for weak targets amidst broadband clutter

  - Assumptions/Dependencies: Access to representative datasets; security/ITAR compliance; real-time processing constraints

- Astronomy and remote sensing event detection (e.g., gravitational-wave glitches, radio bursts)

  - Sectors: Space/Astronomy, Earth Observation

  - Tools/Workflows: Automated T–F event extraction to triage large-scale spectrogram archives; anomaly discovery workflows

  - Assumptions/Dependencies: Domain-specific preprocessing (e.g., whitening pipelines); validation against existing pipelines

- Smart-home and on-device audio event detection with privacy

  - Sectors: Consumer Electronics, Privacy Tech

  - Tools/Workflows: Robust noise-aware event detectors (e.g., glass break, alarms) running locally; reduced false alarms in nonstationary noise

  - Assumptions/Dependencies: Model compression for embedded hardware; curated datasets; privacy-by-design constraints

- “Spectrogram Event Extractor” platform and standards

  - Sectors: Software/AI, Policy, Standards

  - Tools/Workflows: Productized APIs and plugins (MDSplus/EPICS/CODAC); streaming microservices (Kafka/GStreamer) for T–F analytics; open annotation standards

  - Assumptions/Dependencies: Community buy-in for standards; sustainable maintenance; interoperability with legacy systems

- Procurement and regulatory guidance for data-ready instrumentation

  - Sectors: Policy, Energy (fusion), Environment

  - Tools/Workflows: Guidelines to ensure future diagnostics or monitoring arrays produce AI-ready, synchronized, multi-channel T–F data; compliance checklists for environmental monitoring

  - Assumptions/Dependencies: Coordination across labs/agencies; balancing data volume with cost; clear governance for data sharing

Key Cross-Cutting Assumptions and Dependencies

- Noise model: Best denoising performance assumes zero-mean, independent noise across channels; performance may degrade otherwise.

- Broadband baseline: Background must exhibit 1/f-like structure for baseline removal to be effective; parameters may require tuning per sensor.

- Channel availability: Multichannel inputs improve denoising; single-channel operation is supported but less robust (mitigated by refinement).

- Spectrogram configuration: STFT windowing and resampling must match the signal’s bandwidth and dynamics; multi-scale training helps but is not a panacea.

- Latency and compute: GPU accelerates to ~0.5 s per shot; CPU-only deployments may need quantization/pruning for tighter latency.

- Thresholding: Knee-CDF thresholding requires presence of events; purely quiescent spectra may yield spurious sparse detections and need rule-based gating.

- Domain transfer: Zero-shot generalization is promising (TJ-II, DCLDE) but fine-tuning typically improves precision and alignment with domain-specific annotation practices.
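The broadband-baseline assumption above (a 1/f-like background) can be checked on a given sensor by fitting the slope of its power spectrum on log-log axes. The sketch below does this for synthetic Brownian noise, whose exponent should come out near 2. The function name, the fit band, and the Welch settings are assumptions chosen for the demo, not values from the paper.

```python
import numpy as np
from scipy import signal


def spectral_exponent(freqs, psd, fmin, fmax):
    """Fit PSD ~ 1/f**chi between fmin and fmax and return chi."""
    sel = (freqs >= fmin) & (freqs <= fmax) & (psd > 0)
    slope, _intercept = np.polyfit(np.log10(freqs[sel]), np.log10(psd[sel]), 1)
    return -slope


# Brownian noise: the running sum of white noise has a roughly 1/f^2 spectrum
# at frequencies well below the sample rate.
rng = np.random.default_rng(0)
brown = np.cumsum(rng.normal(size=2**18))
freqs, psd = signal.welch(brown, fs=1.0, window="hann", nperseg=4096)

chi = spectral_exponent(freqs, psd, fmin=0.005, fmax=0.05)
```

An exponent near zero would indicate an already flat background, in which case baseline removal has little to subtract.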

Glossary

- 1/f noise: A type of signal noise where power spectral density scales inversely with frequency (often denoted 1/f^χ). "it is observed to follow a dropoff"

- Alfvén eigenmodes (AE): Magnetically driven plasma oscillations associated with Alfvén waves in tokamaks/stellarators. "including coherent modes such as magnetohydrodynamic (MHD) instabilities and Alfvén eigenmodes (AE),"

- AP-BSN: A blind-spot denoising network variant that masks target pixels to avoid identity mapping and learn noise statistics. "Methods such as AP-BSN, which uses variable kernel sizes has been tested,"

- asymmetric least squares: A baseline estimation method that gives points above the fitted curve far less weight than points below it, so the smooth estimate follows the background beneath the peaks. "We use the standard value of for asymmetric least squares, which balances fitting fidelity with smoothness constraints"

- AT-BSN: A blind-spot denoising model variant optimized for efficiency relative to AP-BSN. "Blind-spot denoising example with AT-BSN, a more efficient form of AP-BSN."

- beam emission spectroscopy (BES): A diagnostic measuring plasma density fluctuations via light from injected neutral beams. "and beam emission spectroscopy measurements from DIII-D."

- BF-16 (bfloat16) precision: A 16-bit floating-point format with 8-bit exponent for efficient deep learning training/inference. "Training was done using BF-16 precision."

- BM3D: A state-of-the-art image denoising algorithm based on block matching and collaborative filtering in 3D transform domains. "Linear filters (e.g., Gaussian/Wiener filters, BM3D) tend to average out the faint, transient, and non-stationary signals"

- Brownian noise: Integrated (1/f²) stochastic noise with power increasing at lower frequencies. "also known as Brownian noise,"

- Chebyshev Type I decimation: Downsampling preceded by an IIR Chebyshev Type I low-pass filter to control aliasing. "8th-order Chebyshev Type I decimation with phase preservation"

- CO2 interferometer: A density diagnostic using CO2 laser interferometry to measure line-integrated electron density. "CO2 interferometers,"

- correlation electron cyclotron emission (CECE): A dual-channel ECE technique that exploits correlated fluctuations to improve SNR. "such as correlation electron cyclotron emission (CECE) which effectively provides two measures of the same phenomena"

- cross-power spectrum (CPS): The frequency-domain product of one signal and the complex conjugate of another, used to reveal coherent components. "similar to the classical cross-power spectrum (CPS)"

- cumulative distribution function (CDF): The integral distribution function used here to locate a knee point for spectrogram thresholding. "the knee point of the spectrograms cumulative distribution function (CDF)"

- curvelet transform: A multiscale directional transform suited for sparsely representing edges/curves in images and spectrograms. "Conversely, sparse transforms (e.g., wavelet, curvelet) rely on strong priors"

- DCLDE 2011 dataset: A benchmark bioacoustics dataset for detection/classification/localization of marine mammals. "we deploy it on a well known DCLDE 2011 Odontocete dataset"

- electron cyclotron current drive (ECCD): A technique that drives plasma current using electron cyclotron waves to control instabilities. "199607 is electron cyclotron current drive (ECCD) suppressed,"

- electron cyclotron emission (ECE): Radiation emitted by electrons gyrating in magnetic fields, used to infer electron temperature/fluctuations. "We also check ECE."

- electroencephalography (EEG): A neurophysiological measurement of brain electrical activity; used here for analogies to spectrogram event analysis. "which is widely used in electroencephalography (EEG) signal processing"

- edge localized modes (ELM): Bursty instabilities at the plasma edge causing transient transport events. "and transient modes such as edge localized modes (ELM) and neutral particle spikes,"

- empirical mode decomposition (EMD): A data-driven method that decomposes signals into intrinsic mode functions for nonstationary analysis. "[e.g., empirical mode decomposition (EMD), variational mode decomposition (VMD)]"

- event-related spectral perturbation (ERSP): A measure of spectral changes time-locked to events; adapted here for baseline removal. "This is based on event-related spectral perturbation (ERSP) which is widely used in electroencephalography (EEG) signal processing"

- FAITH (Fusion Artificial Intelligence and Toolkit Hub): A Python toolkit and data hub for high-performance ML on fusion data. "Data is processed via Fusion Artificial Intelligence and Toolkit Hub (FAITH), a Python package and fusion database"

- geodesic acoustic modes (GAM): Axisymmetric oscillations in toroidal plasmas linked to geodesic curvature and zonal flows. "These can include neoclassical tearing modes (NTM), geodesic acoustic modes (GAM), TM, AE, and kinks."

- Hann window: A tapering function used in STFT to reduce spectral leakage. "processed using a Hann window (, overlap=87.5%)"

- harmonic-percussive separation (HPS): A technique separating horizontal (harmonic) and vertical (percussive) components in time-frequency data. "This problem resembles that of harmonic-percussive separation (HPS)"

- HDF5: A hierarchical data format for large, complex datasets commonly used in scientific computing. "With the given starting HDF5 Dataset format,"

- ITER: An international tokamak project aiming to demonstrate net energy gain from fusion. "Next-generation fusion facilities like ITER face a "data deluge,""

- Kolmogorov-type cascades: Energy transfer across scales in turbulence following Kolmogorov’s theory, affecting spectral slopes. "roughly follows Kolmogorov-type cascades"

- magnetohydrodynamic (MHD): The macroscopic theory of conducting fluids (like plasmas) in magnetic fields; describes many plasma instabilities. "including coherent modes such as magnetohydrodynamic (MHD) instabilities"

- Magnetics High Resolution (MHR): A high-resolution magnetic diagnostic used for fluctuation measurements. "This is demonstrated on four representative case studies: ECE, CO2, MHR, and BES"

- mean absolute error (MAE): A regression loss measuring average absolute deviation, used for robust training. "The network is optimized by minimizing the mean absolute error (MAE)"

- MODESPEC: A fusion workflow/tool for spectral analysis referenced for compatibility with the STFT pipeline. "compatibility with existing fusion workflows e.g., MODESPEC"

- MUSIC (Multiple Signal Classification): A high-resolution subspace method for frequency and direction-of-arrival (DOA) estimation. "such as singular spectrum analysis (SSA) or MUSIC scale exponentially with channel count,"

- neoclassical tearing modes (NTM): Magnetic islands driven by neoclassical effects, a key class of MHD instabilities. "These can include neoclassical tearing modes (NTM), geodesic acoustic modes (GAM), TM, AE, and kinks."

- Noise2Noise: A self-supervised denoising paradigm that trains a network to map one noisy observation to another noisy observation of the same signal, so no clean target is needed. "we can combine this with a scheme closer to Self-inspired Noise2Noise for full coverage"

- Otsu (thresholding method): An automatic global thresholding technique assuming a bimodal histogram; ill-suited for sparse spectrograms. "Standard image thresholding methods such as Otsu are designed for bimodal distributions"

- pre-emphasis filter: A frequency pre-weighting to counteract low-frequency dominance or spectral color, often of the form f^α. "a pre-emphasis filter which follows the equation"

- short-time Fourier transform (STFT): A time-frequency transform applying the Fourier transform on windowed signal segments. "Therefore we utilize the short-time Fourier transform (STFT) for its computational efficiency"

- singular spectrum analysis (SSA): A decomposition method using trajectory matrices and SVD for trend/noise separation. "such as singular spectrum analysis (SSA) or MUSIC scale exponentially with channel count,"

- Slepian-based multitaper methods: Spectral estimation using multiple orthogonal tapers (Slepian sequences) to reduce variance/leakage. "While wavelet decomposition and Slepian-based multitaper methods offer theoretical advantages,"

- SpecAug (SpecAugment): Data augmentation on spectrograms (time/frequency masking) to improve model robustness. "specifically SpecAug"

- stellarator: A toroidal magnetic confinement device shaped to confine plasma without large plasma currents. "from the TJ-II stellarator in Spain."

- tearing modes (TM): MHD instabilities where magnetic reconnection forms islands, degrading confinement. "These can include neoclassical tearing modes (NTM), geodesic acoustic modes (GAM), TM, AE, and kinks."

- tokamak: A toroidal magnetic confinement device using strong plasma current for fusion research. "Understanding the complex internal state of a tokamak relies on interpreting a vast array of diagnostic signals."

- total variation (TV): A regularization term or metric equal to the summed magnitude of a signal's or image's gradient, used here as a stopping criterion. "we employed a total variation (TV) stopping criterion"

- U-Net: An encoder–decoder convolutional neural network with skip connections for segmentation/denoising. "In this paper, we have used a U-Net three separate times."

- variational mode decomposition (VMD): A decomposition method that extracts band-limited intrinsic modes via variational optimization. "[e.g., empirical mode decomposition (EMD), variational mode decomposition (VMD)]"

- Welch periodogram: A spectral estimator averaging periodograms over segments to reduce variance. "Welch periodogram, which is the time averaged spectrogram."
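
Several of the terms above (Hann window, STFT, Welch periodogram) fit together in one short computation. The following is a minimal sketch, not the paper's implementation: it builds a power spectrogram from Hann-windowed segments with 87.5% overlap and then averages it over time to get a Welch-style estimate. The function names, the segment length of 256 samples, the 1 kHz sampling rate, and the synthetic test tone are all assumptions made for illustration; the quoted text does not give the window length. The spectrum is left unnormalized, and `np.hanning` returns the symmetric form of the window.

```python
import numpy as np


def hann_spectrogram(x, nperseg=256, overlap=0.875):
    """Power spectrogram from a Hann-windowed STFT (rows: frequency, columns: time).

    nperseg=256 is an illustrative choice, not a value taken from the paper.
    """
    hop = int(round(nperseg * (1.0 - overlap)))  # 87.5% overlap -> hop = nperseg / 8
    window = np.hanning(nperseg)                 # symmetric Hann taper
    n_frames = 1 + (len(x) - nperseg) // hop
    frames = np.stack([x[i * hop : i * hop + nperseg] for i in range(n_frames)])
    spectrum = np.fft.rfft(frames * window, axis=1)
    return (np.abs(spectrum) ** 2).T


def welch_periodogram(x, nperseg=256, overlap=0.875):
    """Welch-style estimate: the spectrogram averaged along its time axis."""
    return hann_spectrogram(x, nperseg, overlap).mean(axis=1)


# Synthetic example (assumed values): a 125 Hz tone in white noise, sampled at 1 kHz.
fs = 1000.0
t = np.arange(4096) / fs
rng = np.random.default_rng(0)
x = np.sin(2 * np.pi * 125.0 * t) + 0.5 * rng.standard_normal(t.size)

psd = welch_periodogram(x)
freqs = np.fft.rfftfreq(256, d=1.0 / fs)
peak_hz = freqs[np.argmax(psd)]  # frequency bin with the largest averaged power
```

Averaging over time trades temporal resolution for lower variance, which is why a steady tone stands out clearly in `psd` even when individual spectrogram columns are noisy.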
