- The paper introduces an agentic dual-agent framework (Researcher and Presenter) that autonomously curates content and designs adaptive presentations.

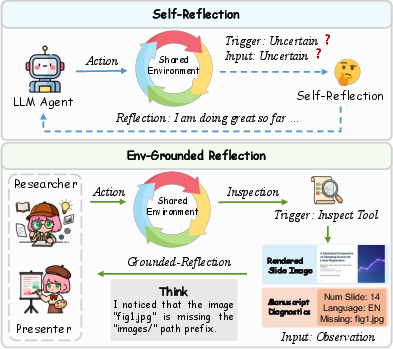

- It employs environment-grounded reflection by inspecting perceptual artifacts to iteratively correct visual and textual defects.

- Experiments show higher content quality and visual diversity than baseline systems, at competitive cost.

DeepPresenter: Environment-Grounded Reflection for Agentic Presentation Generation

Overview

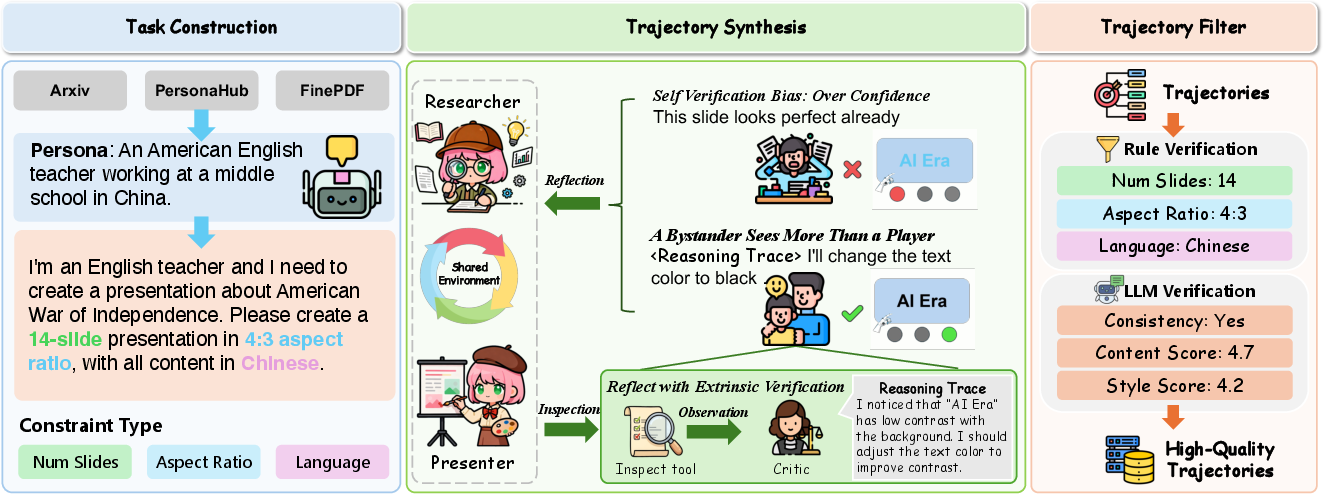

The paper "DeepPresenter: Environment-Grounded Reflection for Agentic Presentation Generation" (2602.22839) introduces DeepPresenter, an agentic framework for automated presentation generation. Unlike existing systems built on rigid templates and introspective self-reflection, DeepPresenter uses a dual-agent architecture (Researcher and Presenter) with environment-grounded reflection. This enables autonomous content research, adaptive visual design, and iterative refinement of artifacts based on observations of their rendered state.

Figure 1: DeepPresenter’s dual-agent system collaboratively generates manuscripts and slides, leveraging perceptual artifacts for environment-grounded reflection.

Framework Architecture and Methodology

Presentation generation is modeled as a sequential multi-step agentic task operating within an interactive environment. DeepPresenter decomposes execution into Researcher and Presenter phases:

- Researcher Agent: Conducts adaptive information retrieval and synthesis, generating a structured manuscript aligned with the user's intent. This agent invokes retrieval, analysis, and file manipulation tools, so that research depth scales with the intent and complexity of the task.

- Presenter Agent: Transforms the manuscript into visually coherent HTML/CSS slides, driven by content rather than by templates. The Presenter develops a global design plan with topic-aligned themes and generates each slide as a fixed-layout artifact.

This decomposition ensures both deep content curation and topic-aware visual realization.
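The two-phase decomposition above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not the paper's implementation: the `Manuscript` and `Slide` types, the function names, the placeholder section titles, and the theme color are all hypothetical, and the real agents call LLM-driven tools where this sketch uses fixed strings.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Manuscript:
    """Structured output of the research phase (hypothetical schema)."""
    topic: str
    sections: List[str] = field(default_factory=list)


@dataclass
class Slide:
    """One fixed-layout slide stored as an HTML string."""
    html: str


def run_researcher(request: str) -> Manuscript:
    # Stand-in for retrieval, analysis, and file tools: we only derive
    # two placeholder section titles from the request.
    sections = [f"Background on {request}", f"Key findings on {request}"]
    return Manuscript(topic=request, sections=sections)


def run_presenter(manuscript: Manuscript) -> List[Slide]:
    # A global design decision (here just a theme color) is made once,
    # then each slide is generated as a fixed-size HTML artifact.
    theme_color = "#1f3a5f"  # assumed value, for illustration only
    slides = []
    for section in manuscript.sections:
        html = (
            f'<section style="width:1280px;height:720px;color:{theme_color}">'
            f"<h1>{section}</h1></section>"
        )
        slides.append(Slide(html=html))
    return slides


def generate_presentation(request: str) -> List[Slide]:
    # Researcher phase first, Presenter phase second.
    manuscript = run_researcher(request)
    return run_presenter(manuscript)
```

Keeping the manuscript as an explicit intermediate artifact is what lets the two roles be specialized and inspected separately.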

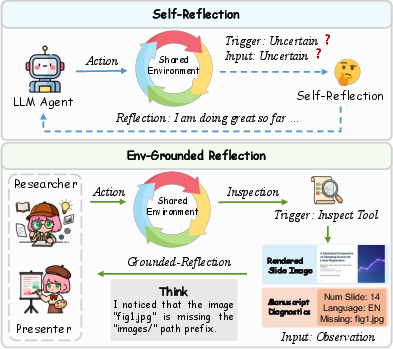

Environment-Grounded Reflection

DeepPresenter does not rely on introspective reasoning traces or intermediate code-level self-reflection. Instead, it grounds revision in perceptual artifact states—specifically, rendered manuscripts and slides exposed via the inspect tool:

Figure 2: Contrasting self-reflection with environment-grounded reflection; DeepPresenter leverages environmental observations for artifact inspection and targeted correction.

Agents inspect rendered artifacts, identify post-render defects (e.g., low contrast, layout overflow, broken images), and revise intermediate representations via the think tool. This observe–reflect–revise loop tightly aligns artifact inspection with end-user perception, effectively mitigating defects that introspection cannot capture.
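A minimal sketch of the observe, reflect, revise loop follows. It assumes a toy slide represented as a dictionary and two hypothetical helpers, `inspect_slide` and `revise`, standing in for the inspect tool and the agent's revision step; the defect checks are simplified stand-ins for what a rendered screenshot would reveal.

```python
from typing import Dict, List, Tuple


def inspect_slide(slide: Dict[str, object]) -> List[str]:
    """Hypothetical stand-in for the inspect tool: report post-render defects."""
    defects = []
    if len(slide["text"]) > slide["max_chars"]:
        defects.append("layout overflow")
    if slide["fg"] == slide["bg"]:
        defects.append("low contrast")
    return defects


def revise(slide: Dict[str, object], defects: List[str]) -> Dict[str, object]:
    """Apply one targeted fix per observed defect (toy revision policy)."""
    fixed = dict(slide)
    if "layout overflow" in defects:
        fixed["text"] = fixed["text"][: fixed["max_chars"]]
    if "low contrast" in defects:
        fixed["fg"] = "#000000" if fixed["bg"] != "#000000" else "#ffffff"
    return fixed


def reflect_loop(
    slide: Dict[str, object], max_rounds: int = 3
) -> Tuple[Dict[str, object], List[List[str]]]:
    history = []
    for _ in range(max_rounds):
        defects = inspect_slide(slide)   # observe the rendered state
        history.append(defects)
        if not defects:                  # nothing left to fix
            break
        slide = revise(slide, defects)   # revise based on what was observed
    return slide, history
```

The point of the sketch is that revision is triggered by the observed artifact state, not by the agent rereading its own reasoning or code.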

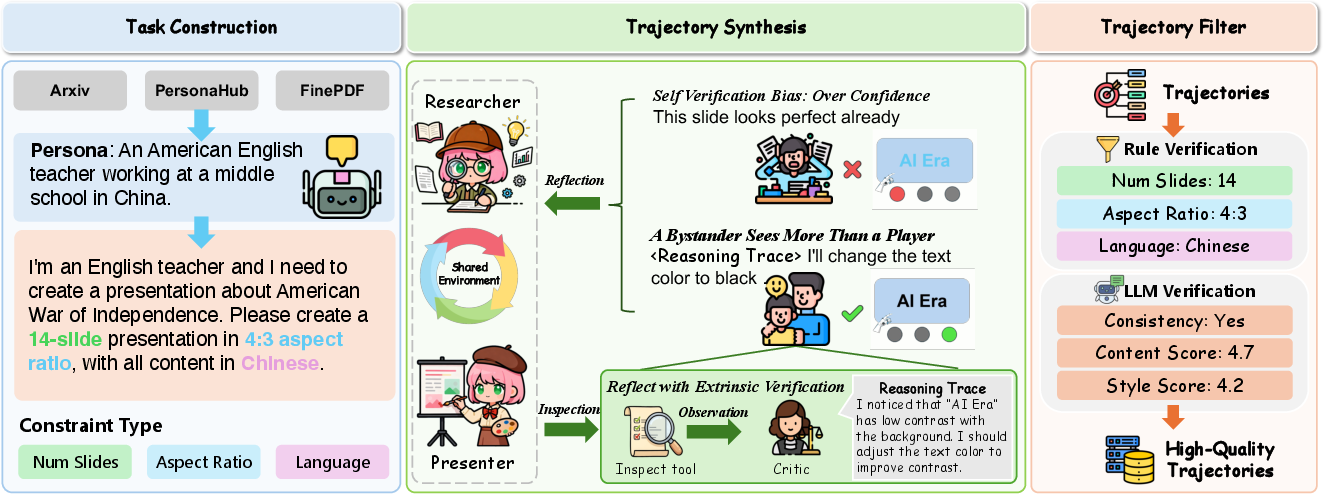

Data Synthesis and Extrinsic Verification

To enable robust fine-tuning of DeepPresenter-9B, a high-quality ultra-present dataset of agentic trajectories is synthesized using a three-stage pipeline:

Figure 3: Data synthesis pipeline integrating query construction, extrinsic verification via critic reasoning traces, and multi-step trajectory filtering for quality assurance.

Extrinsic verification leverages independent critics to review artifact states and prescribe defect-triggered corrective actions. This strategy decouples verification from the originating agent trajectory, mitigating self-verification bias and driving higher-quality reflective behaviors during agent rollouts.
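The idea of decoupling verification from the acting agent can be illustrated with a toy filter. This is a sketch under assumptions: the `critic` function, the artifact fields `overflow_px` and `broken_images`, and the rule "keep a trajectory only if its final artifact is defect-free" are hypothetical and are not taken from the paper's pipeline.

```python
from typing import Dict, List

Artifact = Dict[str, int]


def critic(artifact: Artifact) -> List[str]:
    """Independent check that sees only the artifact state, never the
    acting agent's reasoning, so it cannot inherit its rationalizations."""
    defects = []
    if artifact.get("overflow_px", 0) > 0:
        defects.append("layout overflow")
    if artifact.get("broken_images", 0) > 0:
        defects.append("broken image")
    return defects


def annotate(trajectory: List[Artifact]) -> List[dict]:
    """Attach critic findings to every step as a correction signal."""
    return [{"artifact": a, "defects": critic(a)} for a in trajectory]


def filter_trajectories(trajectories: List[List[Artifact]]) -> List[List[dict]]:
    """Keep trajectories whose final artifact passes the critic (assumed rule)."""
    kept = []
    for trajectory in trajectories:
        annotated = annotate(trajectory)
        if annotated and not annotated[-1]["defects"]:
            kept.append(annotated)
    return kept
```

For example, a trajectory that starts with an overflowing slide and ends with a clean one is kept, with the first step labeled "layout overflow", while a trajectory that ends with a broken image is dropped.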

Experimental Evaluation

Quantitative Results

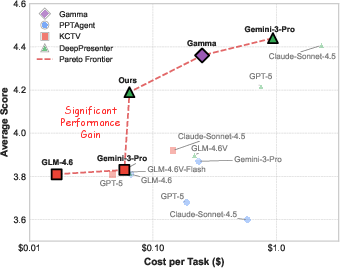

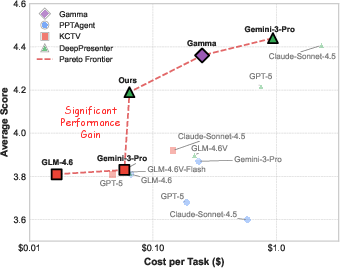

DeepPresenter is evaluated on 128 diverse presentation-generation tasks with metrics for constraint satisfaction, content quality, visual style, and diversity. The framework achieves the strongest results against both commercial and academic baselines:

- Gemini-3-Pro backbone: Avg. score 4.44, surpassing Gamma (4.36) and all open-source systems.

- Visual diversity: DeepPresenter achieves a Vendi Score of 0.79, substantially exceeding template-based baselines (≤0.35).

- DeepPresenter-9B: The compact model scores 4.19, outperforming all open-source systems and coming close to GPT-5 (4.22) at reduced cost.
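For context on the diversity metric, the Vendi Score in its standard form is the exponential of the Shannon entropy of the eigenvalues of a normalized similarity matrix. In the formula below, $K$ is an $n \times n$ positive semidefinite similarity matrix over the generated samples with $K_{ii} = 1$, and $0 \log 0$ is taken as $0$. In this form the score ranges from 1 to $n$, so the reported values of 0.79 and ≤0.35 presumably come from a normalized or rescaled variant; the exact normalization and the similarity function used are not stated in this summary.

```latex
\mathrm{VS}(K) = \exp\!\Big(-\sum_{i=1}^{n} \lambda_i \log \lambda_i\Big),
\qquad \lambda_1, \dots, \lambda_n \ \text{the eigenvalues of } K/n
```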

Ablation studies validate the critical contributions of environment-grounded reflection, dual-agent decomposition, and rigorous trajectory filtering:

- Removing environment-grounded reflection degrades performance (Gemini-3-Pro: 4.32 vs. 4.44; DeepPresenter-9B: 3.82 vs. 4.19).

- Removing the dual-agent design yields larger drops (Gemini-3-Pro: 4.04; DeepPresenter-9B: 3.23).
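The size of each ablation drop can be read off directly from the scores above. The short snippet below only restates that arithmetic; the dictionary and function names are illustrative.

```python
# Average scores quoted in the ablation results above.
full = {"Gemini-3-Pro": 4.44, "DeepPresenter-9B": 4.19}
no_reflection = {"Gemini-3-Pro": 4.32, "DeepPresenter-9B": 3.82}
no_dual_agent = {"Gemini-3-Pro": 4.04, "DeepPresenter-9B": 3.23}


def drops(ablated: dict) -> dict:
    """Score lost relative to the full system, rounded to two decimals."""
    return {model: round(full[model] - ablated[model], 2) for model in full}


print(drops(no_reflection))  # {'Gemini-3-Pro': 0.12, 'DeepPresenter-9B': 0.37}
print(drops(no_dual_agent))  # {'Gemini-3-Pro': 0.4, 'DeepPresenter-9B': 0.96}
```

In both ablations the compact DeepPresenter-9B loses more than the Gemini-3-Pro backbone, which suggests the smaller model leans more heavily on both components.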

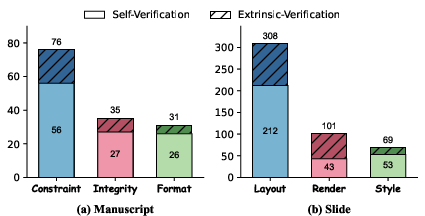

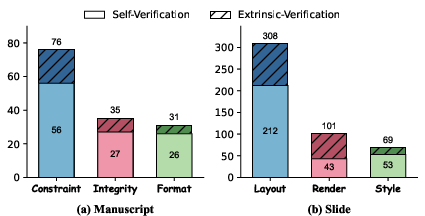

Extrinsic Verification Analysis

Extrinsic verification significantly strengthens defect identification and revision signals, particularly for slide-level failures:

Figure 4: Defect distribution comparison—extrinsic verification leads to broader and deeper detection of manuscript and slide errors.

Trajectory synthesis without extrinsic verification exhibits self-justification bias, with agents rationalizing defects and missing substantial post-render issues.

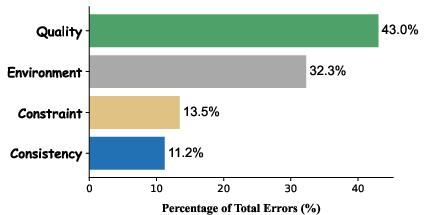

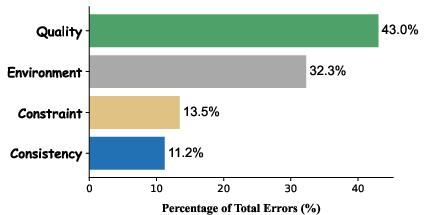

Failure and Efficiency Studies

Trajectory failure analysis indicates that quality errors dominate long-horizon generation (43.0%), followed by environment/interruption failures (32.3%). Together these two categories account for 75.3% of failures; constraint and consistency violations are less prevalent.

Figure 5: Failure distribution in agentic rollouts—quality and environment errors are primary concerns.

Efficiency evaluations show that DeepPresenter-9B improves the cost-quality Pareto frontier, delivering high quality at comparable or lower inference cost.

Figure 6: Cost-performance trade-off; DeepPresenter-9B outperforms prior open-source frontiers at similar price points.
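To make the notion of a Pareto-optimal trade-off concrete, the sketch below computes which systems are not dominated, where a system is dominated if another one is at least as cheap and at least as good, and strictly better on one of the two axes. The function name and the example numbers are placeholders for illustration and are not data from the paper.

```python
from typing import List, Tuple

System = Tuple[str, float, float]  # (name, cost, quality)


def pareto_front(systems: List[System]) -> List[str]:
    """Return names of systems that no other system dominates."""
    front = []
    for name, cost, quality in systems:
        dominated = any(
            (c <= cost and q >= quality) and (c < cost or q > quality)
            for _, c, q in systems
        )
        if not dominated:
            front.append(name)
    return front


# Placeholder numbers, NOT results from the paper.
example = [
    ("A", 1.0, 3.5),
    ("B", 1.0, 4.0),
    ("C", 3.0, 4.2),
    ("D", 4.0, 4.1),
]
print(pareto_front(example))  # ['B', 'C']
```

Here A is dominated by B (same cost, lower quality) and D is dominated by C (higher cost, lower quality), so only B and C sit on the frontier.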

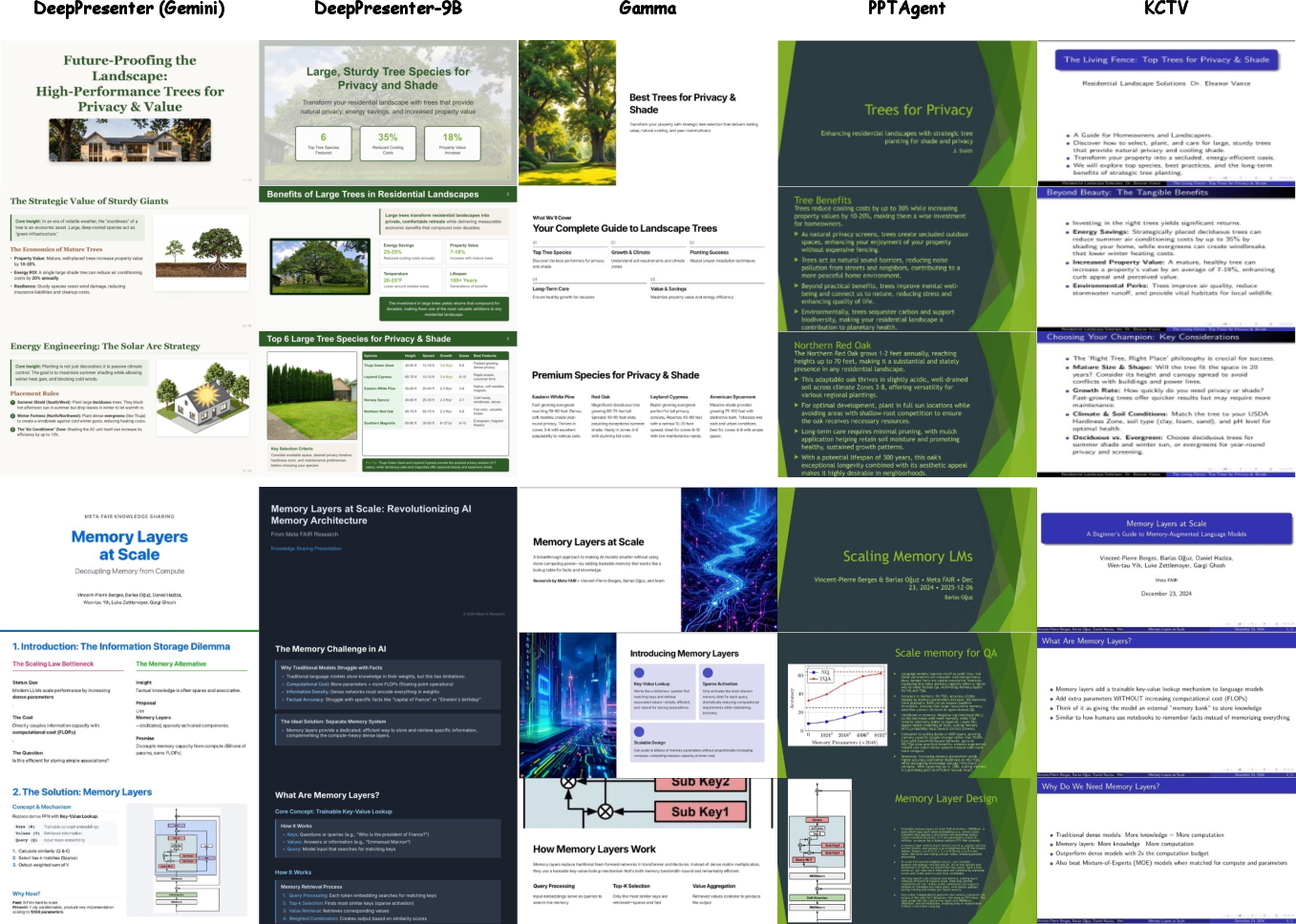

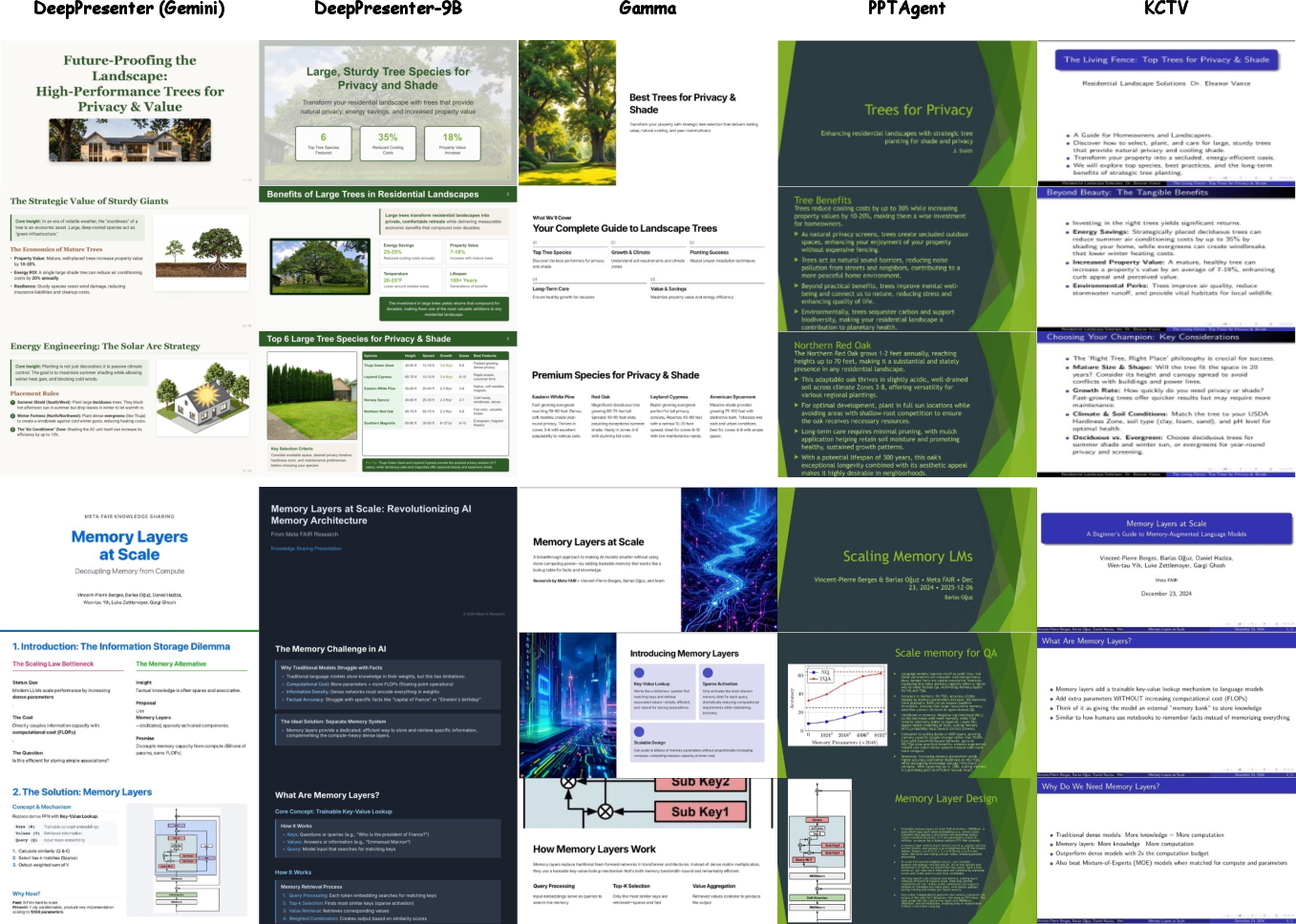

Qualitative Assessment

Case studies highlight DeepPresenter’s ability to generate visually thematic, asset-rich slides, while baselines produce text-heavy, template-constrained outputs with frequent misalignment of visual and narrative elements.

Figure 7: Qualitative comparison—DeepPresenter produces topic-resonant, visually coherent presentations absent in baseline methods.

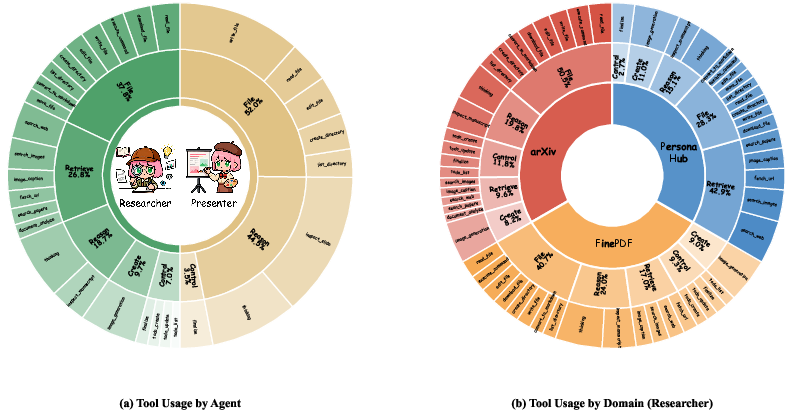

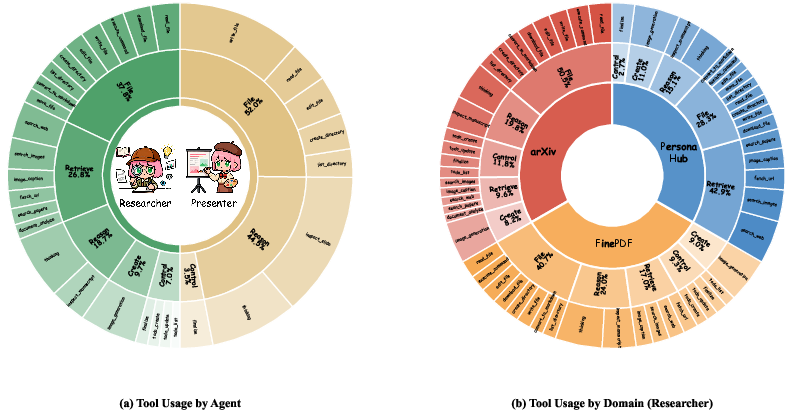

Tool Usage and Domain Analysis

Agent tool invocation patterns further support role specialization. The Researcher predominantly uses retrieval tools in persona-driven scenarios that require knowledge search; the Presenter focuses on file and reasoning tools for artifact manipulation and reflection.

Figure 8: Tool usage patterns—specialized agent roles adapt tool selection based on task domain characteristics.

Domain breakdowns demonstrate DeepPresenter’s strong performance across instructional, academic, and persona-driven presentations, with domain-specific trade-offs between constraint compliance and visual style.

Implications and Future Directions

The environment-grounded reflection, dual-agent specialization, and extrinsic verification mechanisms established in DeepPresenter represent a significant architectural departure from template-based, introspective systems. Practically, this enables highly adaptive, perceptually aligned artifact generation suitable for real-world educational, business, and research applications. Theoretically, it augments agentic LLM frameworks with embodied environmental feedback loops beyond internal token-based reasoning.

Future work may address inference-time integration of extrinsic verification to further mitigate self-verification bias, explore greater robustness in multi-step tool-using rollouts, and generalize environment-grounded reflection for other multimodal artifact generation domains.

Conclusion

DeepPresenter establishes an agentic paradigm for presentation generation, coupling autonomous research and design specialization with post-render environment-grounded reflection. Strong empirical results corroborate the efficacy of grounding artifact revision in perceptual environmental states and decoupling verification signals via extrinsic critics. The compact DeepPresenter-9B highlights the viability of distilling competitive agentic behaviors at reduced cost through high-quality trajectory synthesis. This framework paves the way for more adaptive, perceptually aligned, and efficient multimodal artifact generation in future AI systems.