Large-scale portfolio optimization on a trapped-ion quantum computer

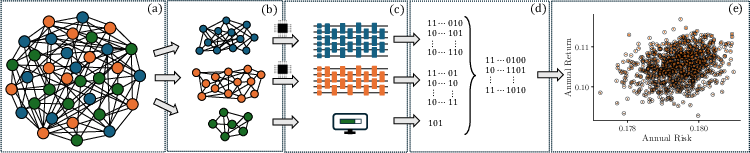

Abstract: We present an end-to-end pipeline for large-scale portfolio selection with cardinality constraints and experimentally demonstrate it on trapped-ion quantum processors using hardware-aware decomposition. Building on RMT-based correlation-matrix denoising and community detection, we identify correlated asset groups and introduce a correlation-guided greedy splitting scheme that caps each cluster by the executable qubit budget. Each cluster defines a hardware-embeddable QUBO subproblem that we solve using bias-field digitized counterdiabatic quantum optimization (BF-DCQO), a non-variational method that avoids classical parameter-training loops. We recombine low-energy candidates into global portfolios and enforce feasibility with a two-stage post-processing routine: fast repair followed by a cardinality-preserving swap local search. We benchmark the workflow on a 250-asset universe taken from the S&P 500 and execute subproblems on a 64-qubit Barium development system similar to the forthcoming IonQ Tempo line. We observe that larger executable subproblem sizes reduce decomposition error and systematically improve final objective values and risk-return trade-offs relative to randomized baselines under identical post-processing. Overall, the results establish a hardware-tested route for scaling financial optimization problems, defined by a trade space in which executable problem size and circuit cost are balanced against the resulting solution quality.

Explain it Like I'm 14

What is this paper about?

This paper shows a practical way to use a quantum computer to help pick a stock portfolio. The goal is to choose exactly K stocks (for example, 125 out of 250) that balance earning money (return) and avoiding big ups and downs (risk). Because today’s quantum computers are still limited in size, the authors break the big problem into smaller parts that fit the machine, solve those parts with a quantum algorithm, and then stitch the answers back together. They test the full “end-to-end” process on real trapped-ion quantum hardware.

What questions did the researchers ask?

- Can we design a full workflow that turns real market data into a portfolio and runs on today’s quantum computers?

- If we break a big portfolio problem into smaller chunks (so they fit on the device), does solving bigger chunks give better final portfolios?

- Can a special quantum method (called BF-DCQO) find good answers without lots of trial-and-error tuning?

- How do these quantum-guided solutions compare to simple classical baselines when we use the same final “fix-up” steps?

How did they do it?

To make this understandable, think of the portfolio problem as building a sports team with exactly K players: you want strong players (high return), but not all from the same risky position (control risk). The team size K is a hard rule.

Step 1: Clean the data (reduce noise)

They start with real stock data from the S&P 500 (250 stocks, daily prices from 2020 to mid-2025). Stocks often move together because of market “noise” (like static on a radio) and big market-wide waves. The team uses a mathematical filter (from random matrix theory) to:

- Separate random noise

- Separate the overall market move (the “global mode”)

- Keep the useful structure that shows which stocks truly move together

This makes the relationship map between stocks more reliable.
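
A minimal sketch of this kind of filter, assuming standardized returns and the textbook Marchenko-Pastur upper edge. The function name `rmt_denoise` and the choice to drop only the single largest eigenvalue as the global mode are illustrative assumptions, not the authors' implementation:

```python
import numpy as np

def rmt_denoise(returns):
    """Filter a correlation matrix with a Marchenko-Pastur cutoff.

    returns: array of shape (T, N), T observations of N assets.
    Assumption: eigenvalues at or below the MP upper edge are noise, the
    largest eigenvalue is the market-wide ("global") mode, and whatever
    lies in between is the group structure we keep.
    """
    T, N = returns.shape
    corr = np.corrcoef(returns, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(corr)        # ascending order
    mp_edge = (1.0 + np.sqrt(N / T)) ** 2          # MP upper edge for unit variance
    keep = eigvals > mp_edge                       # above the noise band
    keep[-1] = False                               # drop the global mode
    vecs = eigvecs[:, keep]
    structured = (vecs * eigvals[keep]) @ vecs.T   # sum of lambda_k v_k v_k^T
    return structured, mp_edge
```

The returned matrix is what a community-detection step would consume; with T = 500 observations of N = 20 assets, the cutoff is (1 + sqrt(20/500))^2 = 1.44.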

Step 2: Group related stocks into clusters that fit the quantum computer

Imagine grouping players who play well together. They find “communities” of stocks that are strongly related. Some groups are too big to fit on the quantum computer, which, like a bus with limited seats, has a fixed capacity (the “qubit budget”). So they split big groups greedily: start from a stock with many strong connections, add its closest “neighbors” until the size limit is reached, then repeat with the stocks that are left. Every stock ends up in some cluster that fits the device.
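
The greedy split can be sketched as follows. This is an illustration under assumptions: the seed is taken to be the stock with the largest total absolute correlation to the remaining stocks, and "closest neighbors" means largest absolute correlation to that seed. The name `greedy_split` is hypothetical.

```python
import numpy as np

def greedy_split(corr, members, max_size):
    """Split one community into clusters of at most max_size assets.

    corr: (N, N) correlation matrix; members: list of asset indices.
    """
    remaining = list(members)
    clusters = []
    while remaining:
        sub = np.abs(corr[np.ix_(remaining, remaining)])
        np.fill_diagonal(sub, 0.0)                  # ignore self-correlation
        seed = int(np.argmax(sub.sum(axis=1)))      # most strongly connected stock
        order = np.argsort(-sub[seed])              # neighbors, strongest first
        picks = [seed] + [p for p in order if p != seed][: max_size - 1]
        cluster = [remaining[p] for p in picks]
        clusters.append(cluster)
        remaining = [a for a in remaining if a not in cluster]
    return clusters
```

Calling it with `max_size` equal to the qubit budget guarantees that every resulting cluster fits on the device, at the cost of cutting the correlations between clusters.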

Step 3: Turn each cluster into a simple yes/no puzzle

Each stock becomes a yes/no decision: 1 means “include”, 0 means “exclude”. The math that scores a portfolio is a QUBO (Quadratic Unconstrained Binary Optimization), which you can think of as a score that depends on individual picks and how pairs of picks interact. For quantum hardware, they transform this into an “Ising model,” which is like a grid of tiny magnets that want to align or oppose each other; the best solution is the lowest-energy arrangement.
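
As a concrete illustration of the QUBO-to-Ising step, the sketch below uses the substitution x_i = (1 + s_i)/2 with spins s_i in {-1, +1}. The sign convention and the function name `qubo_to_ising` are assumptions; the paper may use the opposite convention.

```python
import numpy as np

def qubo_to_ising(Q):
    """Map E(x) = x^T Q x, x in {0,1}^n, to h.s + s^T J s + offset.

    Uses x = (1 + s) / 2 (assumed convention). Linear QUBO terms live on
    the diagonal of Q because x_i^2 = x_i for binary variables.
    """
    Q = np.asarray(Q, dtype=float)
    Q = 0.5 * (Q + Q.T)                       # symmetrize without changing E(x)
    h = 0.5 * Q.sum(axis=1)                   # local fields
    J = 0.25 * Q.copy()
    np.fill_diagonal(J, 0.0)                  # s_i^2 = 1 goes into the offset
    offset = 0.25 * Q.sum() + 0.25 * np.trace(Q)
    return h, J, offset

def ising_energy(h, J, offset, s):
    """Energy of a spin configuration s in {-1,+1}^n."""
    return float(h @ s + s @ J @ s + offset)
```

The two energies agree for every bit string, so the lowest-energy spin arrangement corresponds to the best-scoring yes/no selection.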

Step 4: Solve each cluster with a quantum algorithm (BF-DCQO)

BF-DCQO is a method that guides the quantum system toward good solutions quickly, using “shortcuts” to avoid getting stuck. In simple terms:

- Start from a gentle setup that nudges qubits toward certain directions (bias fields).

- Add counterdiabatic steps (shortcuts) so you can reach good solutions without waiting too long (important because quantum states don’t last forever).

- Digitize the steps into a circuit and prune tiny rotations (remove tiny tweaks) to keep the circuit simpler.

- Repeat in cycles: after each run, use the best samples to update the bias so the next run focuses on promising regions.
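
The classical bookkeeping between cycles can be sketched as below. Both helpers are hypothetical illustrations: the bias is assumed to be the average spin over the lowest-energy fraction of samples, and pruning is assumed to drop rotations whose angle magnitude falls below a threshold. The summary does not specify the exact update rule, sign, scaling, or threshold used in the paper.

```python
import numpy as np

def update_bias(samples, energies, top_fraction=0.1):
    """Assumed rule: bias_i = mean spin of qubit i over the best samples.

    samples: (n_shots, n_qubits) array of +/-1 spins.
    energies: (n_shots,) Ising energies of those samples.
    """
    samples = np.asarray(samples, dtype=float)
    energies = np.asarray(energies, dtype=float)
    n_keep = max(1, int(np.ceil(top_fraction * len(energies))))
    best = np.argsort(energies)[:n_keep]      # lowest energies first
    return samples[best].mean(axis=0)

def prune_mask(angles, threshold):
    """Assumed rule: keep a gate only if |angle| >= threshold."""
    return np.abs(np.asarray(angles, dtype=float)) >= threshold
```

A bias near +1 or -1 means the best samples agree on that qubit, so the next cycle starts closer to that choice; a bias near 0 leaves the qubit undecided.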

They run this on trapped-ion quantum computers (IonQ devices). In these machines, individual ions (charged atoms) act like qubits and are controlled by lasers. Trapped-ion systems are good for problems with lots of connections because they can make many qubits interact without needing lots of extra “wiring.”

Step 5: Recombine and fix the global portfolio

After solving each cluster, they build full portfolios by stitching cluster decisions into one long yes/no list for all 250 stocks. Then they do two quick classical fix-up steps:

- Repair the team size: make sure there are exactly K stocks by dropping the least helpful picks when there are too many, or adding the most helpful missing ones when there are too few (ranked using the gradient of the math score).

- Swap search: try swapping one included stock with one excluded stock to see if the score improves, keeping the total number included equal to K.

These lightweight tweaks often turn a good quantum-guided guess into a better final portfolio.
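
The two fix-up steps can be sketched as follows, assuming the score is f(x) = x^T Q x with symmetric Q and that lower is better. The function names and the exact ranking rule are illustrative assumptions rather than the authors' code.

```python
import numpy as np

def objective(Q, x):
    return float(x @ Q @ x)

def flip_gain(Q, x):
    """g[i] = change in f when x[i] goes 0 -> 1 (and -g[i] for 1 -> 0)."""
    d = np.diag(Q)
    return d + 2.0 * (Q @ x - d * x)

def repair(Q, x, K):
    """Greedily switch assets off/on until exactly K are selected."""
    x = x.copy()
    while x.sum() != K:
        g = flip_gain(Q, x)
        if x.sum() > K:
            on = np.flatnonzero(x == 1)
            x[on[np.argmax(g[on])]] = 0       # removal that lowers f the most
        else:
            off = np.flatnonzero(x == 0)
            x[off[np.argmin(g[off])]] = 1     # addition that raises f the least
    return x

def swap_search(Q, x, max_passes=100):
    """First-improvement 1-1 swaps; the number of selected assets is fixed."""
    x = x.copy()
    best = objective(Q, x)
    for _ in range(max_passes):
        improved = False
        for i in np.flatnonzero(x == 1):
            for j in np.flatnonzero(x == 0):
                x[i], x[j] = 0, 1             # try the swap
                val = objective(Q, x)
                if val < best - 1e-12:
                    best, improved = val, True
                    break                     # accept the first strict improvement
                x[i], x[j] = 1, 0             # undo
            if improved:
                break
        if not improved:
            break                             # local optimum reached
    return x, best
```

Because every accepted move swaps one included stock for one excluded stock, the portfolio stays at exactly K names throughout the search.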

What did they find, and why is it important?

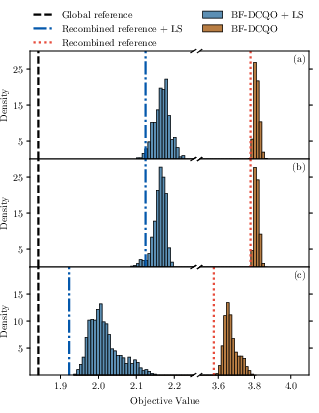

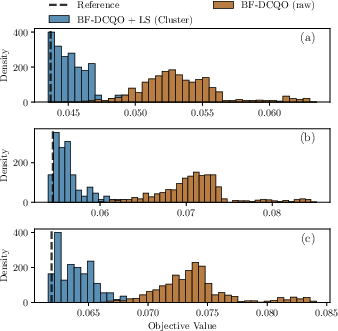

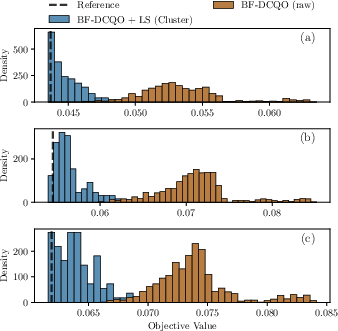

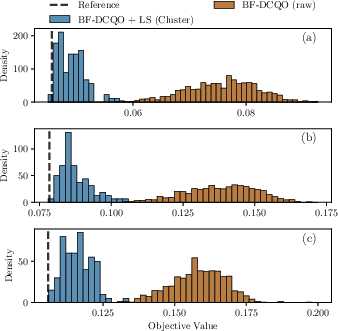

- Bigger chunks help: When the device could handle larger clusters (64-qubit runs vs 36-qubit runs), the final portfolios got better. This is because fewer cross-cluster relationships were cut off during splitting, so the optimization saw more of the true structure.

- The quantum method produces strong candidates: After the simple fix-up steps, many quantum-guided portfolios had better risk–return trade-offs than randomized baselines treated with the same fix-ups.

- Pruning didn’t hurt much: Cutting small gates from the circuits (to reduce noise and runtime) did not significantly worsen final performance, showing a practical trade-off that favors simpler circuits on noisy hardware.

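- Post-processing matters: Even if each cluster’s “best” solution is good, simply gluing those best solutions together is not always the best global portfolio. Combining cluster candidates and then running the swap search can beat that straightforward recombination. In short, the final classical tweak is crucial.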

- There’s still a gap to the true global best: The results improve as cluster size grows, but they don’t fully reach the global optimum—mostly because splitting the big problem into clusters ignores some links between clusters. More qubits and better hardware should shrink this gap.

Why it matters: This paper demonstrates a working path to tackle realistic financial optimization on current quantum machines, showing clear improvements as hardware scales. It’s a practical blueprint rather than a toy demo.

What’s the potential impact?

- Near-term practicality: You can solve parts of big finance problems today on real quantum hardware by respecting device limits and designing the workflow around them.

- Hardware-aware design scales: As quantum computers get more qubits and cleaner operations, the same method can use larger clusters, cut fewer links, and deliver better portfolios.

- Hybrid is the sweet spot: Quantum steps find promising regions; small classical fix-ups polish the solutions. This teamwork is likely to stay important for a while.

- Parallelization: Since clusters are independent, future setups could solve many subproblems at the same time across multiple devices, speeding up the whole pipeline.

- Beyond portfolios: The approach (clean data, cluster by structure, solve with device-aware quantum circuits, then smartly recombine and refine) can apply to many other large optimization problems in logistics, scheduling, or network design.

In short, this research shows a practical, tested route to using quantum computers in finance today: break the big problem into hardware-sized pieces, solve them with a fast quantum method, and then carefully put the puzzle back together. As quantum machines grow, this route gets better.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list captures concrete gaps, limitations, and unresolved questions left by the paper that future work could address:

- Quantify decomposition error: Derive and empirically validate bounds that relate omitted inter-cluster couplings to suboptimality gaps in the global objective as a function of cluster sizes, coupling strengths, and .

- Cross-cluster coordination: Develop methods (e.g., Lagrangian relaxation, message passing, Benders-like coordination, or iterative reweighting) that incorporate cross-cluster couplings during subproblem solves or recombination, rather than relying solely on post-processing.

- Subproblem cardinality allocation: Replace the ad hoc per-cluster cardinality choice with an optimized allocation of the global K across clusters (e.g., via a master problem) to reduce repair burden and improve recombined feasibility/quality.

- Sensitivity to decomposition hyperparameters: Systematically study the impact of RMT denoising thresholds, eigenmode selection, community-detection algorithm/parameters, and the greedy splitting rule on solution quality and cluster topology.

- Use of denoising in the objective: Evaluate whether applying shrinkage/denoising to the covariance used in the QUBO (and robust estimation for ) improves optimization robustness relative to denoising used only for clustering.

- Penalty design and scaling: Analyze the conditioning, dynamic range, and hardware implementability of the penalty coefficient (including its effect on , magnitudes), and investigate rescaling or alternative constraint encodings to stabilize sampling.

- Constraint-preserving encodings: Explore K-hot/weight-parity/LHZ encodings or subspace sampling that natively enforce cardinality, reducing reliance on penalty terms and post hoc repair.

- Recombination beyond concatenation: Design recombination schemes that account for inter-cluster interactions (e.g., overlapping clusters, consensus optimization, stitching via shared boundary variables) and maintain feasibility throughout.

- Post-processing neighborhoods: Compare the proposed gradient repair + first-improvement 1–1 swap with richer neighborhoods (k-flip, ejection chains), tabu/ILS/SA variants, and adaptive budgets to quantify marginal gains and runtime trade-offs.

- BF-DCQO hyperparameter robustness: Perform ablations on schedule choice, number of Trotter steps, , pruning thresholds, iterations, and bias-update sample count; propose auto-tuning or learning-to-tune strategies.

- Gate pruning methodology: Provide principled criteria (e.g., error bounds on expected cost or unitary distance) for selecting the pruning threshold, and quantify the fidelity–depth–performance trade-off under different pruning regimes.

- Comparative quantum baselines: Benchmark BF-DCQO against QAOA, quantum annealing, and other digitized CD variants on identical subproblems/hardware to assess algorithmic robustness and resource–quality trade-offs.

- Classical baseline rigor: Include strong end-to-end classical baselines (e.g., state-of-the-art metaheuristics on full 250-asset problem and cluster-decomposed variants) and time-limited MIQP solvers to isolate the quantum contribution beyond randomized baselines.

- Cost-to-solution accounting: Report circuit depths, two-qubit gate counts, wall-clock runtimes, queueing times, and total shots per subproblem; compare overall time/energy/cost against classical solvers for fair evaluation.

- Scalability characterization: Systematically vary , , and cluster counts/densities to model scaling of solution quality and runtime; explore parallel execution across multiple QPUs and its throughput limits.

- Generalization and robustness: Test across multiple universes, rolling windows, and market regimes; evaluate out-of-sample performance, turnover stability, and robustness to input noise (with uncertainty quantification).

- Financial realism: Incorporate sector/industry caps, turnover/transaction costs, minimum lot sizes, and continuous weights (mixed-integer formulations); assess how the pipeline adapts to more realistic constraints.

- Cardinality and preference parameters: Vary (away from ) and to map performance across risk-return trade-offs and different sparsity regimes; study how decomposition quality depends on these parameters.

- Bias-update policy: Investigate bias-update schedules (e.g., momentum, damping, entropy constraints) and stopping criteria to avoid mode collapse or excessive variance in larger systems.

- Error mitigation: Evaluate modern error-mitigation techniques (e.g., zero-noise extrapolation, symmetry verification, PEC) and their impact on achieved energies and feasibility rates.

- Measurement and diversity strategies: Analyze candidate-pool generation (size, selection of top- per iteration, resampling) and adopt diversity-promoting samplers (e.g., determinantal/thompson sampling, cross-entropy) to balance quality and coverage.

- Break-even analysis vs classical: Determine subproblem sizes/densities and hardware conditions under which quantum execution outperforms classical exact/heuristic methods in quality or time; current results still show Gurobi can solve the full 250-asset instance.

- Hardware generality: Assess portability to other architectures (superconducting, neutral atoms) with limited connectivity, including embedding/compilation constraints within the decomposition (e.g., topology-aware clustering).

- Circuit resource disclosure: Provide detailed per-subproblem resource profiles (depth, native gate counts by type) and their variance across runs to enable reproducibility and hardware-aware planning.

- Handling tiny clusters: Define specialized treatments for size-1/2 clusters (e.g., exact enumeration, analytic solutions) and policies for when to skip quantum execution to conserve QPU budget.

- Data and code availability: Release code, parameter settings, and datasets (or generators) to enable independent replication and extension of the workflow.

Practical Applications

Overview

The paper introduces a hardware-aware, end-to-end, hybrid quantum–classical workflow for fixed-cardinality portfolio optimization, combining: (i) RMT-based correlation denoising and community detection; (ii) correlation-guided greedy splitting to cap cluster size by a qubit budget; (iii) solving cluster-level QUBOs/Ising models with Bias-Field Digitized Counterdiabatic Quantum Optimization (BF-DCQO), a non-variational algorithm with circuit pruning; and (iv) a two-stage post-processing routine (cardinality repair + swap-based local search). The approach is demonstrated on trapped-ion hardware (36 and 64 qubits), showing that larger executable subproblems yield better global portfolios after recombination and post-processing.

Below are practical applications derived from the findings, grouped by time horizon, with sector links, potential tools/products/workflows, and feasibility assumptions.

Immediate Applications

These can be deployed or prototyped today on classical infrastructure with optional QPU calls via cloud access.

- Finance (asset management, ETFs, wealth, trading)

  - Hybrid decision-support for cardinality-constrained portfolio construction (e.g., K-names, minimum lot sizes)

    - Workflow: RMT denoising → community detection → qubit/compute-capped clustering → cluster-level solve (classical exact/heuristic or BF-DCQO on QPU) → recombination → cardinality repair + swap local search.

    - Use cases: index/ETF composition, thematic baskets, smart-beta screens, constrained rebalancing, pre-trade what-if analysis across risk–return settings.

    - Tools/products: Python library providing GetClusters, QUBO/Ising mapping, BF-DCQO wrappers (Qiskit + IonQ backend), and the two-phase post-processor; integration into existing portfolio construction pipelines and OMS/EMS tooling.

    - Dependencies/assumptions: access to historical returns for correlation estimation; enough observations for RMT separation; cloud QPU credits optional; regulatory approval for decision-support (not necessarily full automation).

  - Improved pre-processing and heuristics in classical optimizers

    - Replace/noise-suppress correlation matrices via RMT; use community detection to reduce dimensionality; apply gradient-based cardinality repair and swap local search to any candidate set (even without QPU).

    - Dependencies/assumptions: none beyond standard data and compute; immediate drop-in for existing optimizers (e.g., Gurobi, CPLEX).

  - Candidate generation for downstream solvers

    - Generate diverse, low-energy candidate portfolios via cluster-level solving (quantum or classical), then refine with existing mixed-integer frameworks.

    - Dependencies/assumptions: existing MIP/MINLP solvers; batch compute.

- Cross-industry optimization with K-budget or selection constraints (QUBO/Ising)

  - Machine learning: feature subset selection; experimental design with budget K.

  - Supply chain/logistics: supplier selection, facility location with fixed number of sites, sensor placement, team selection.

  - Marketing/Networks: influence maximization with limited seed set.

  - Energy: selecting K generators or assets for contingency analysis (simplified variants).

  - Tools/workflows: adopt the same decomposition-and-recombination template; swap local search at fixed Hamming weight.

  - Dependencies/assumptions: ability to express problem as QUBO/Ising; domain-specific mapping and penalty selection.

- Software and developer tooling

  - Hardware-aware QUBO decomposition toolkit

    - “GetClusters” module (RMT denoising + community detection + greedy splitting), circuit-pruning compiler pass, sample debiasing, and cardinality-preserving local search as reusable components.

    - Integrations: Qiskit, Gurobi, PyTorch/Scikit (for feature selection), D-Wave/QAOA alternatives.

    - Dependencies/assumptions: availability of Python environment and open-source solvers/toolkits.

- Academia and education

  - Benchmarking platform for dense Ising/QUBO under hardware constraints

    - Teaching labs comparing BF-DCQO vs QAOA/annealing on clusterized instances; studying the trade-off between pruning, qubit budget, and solution quality.

    - Dependencies/assumptions: access to public market data or synthetic datasets; simulator or limited QPU time.

- Policy and governance (foundational steps)

  - Establish evaluation sandboxes for quantum-assisted decision-support in finance

    - Create protocols for documenting hybrid workflows, decomposition choices, and post-processing for auditability.

    - Dependencies/assumptions: regulator–industry collaboration; adherence to model risk frameworks (e.g., SR 11-7).

Long-Term Applications

These depend on larger qubit counts, better fidelities, faster execution, or further algorithmic development (e.g., cross-cluster coupling handling).

- Finance (production-scale quantum-accelerated optimization)

  - Full-scale, multi-constraint portfolio optimization at hundreds–thousands of assets with real-time or frequent rebalancing

    - Extensions: explicit transaction costs/turnover, sector/ESG/lot constraints, robust optimization, dynamic scenarios, multi-period models.

    - Products: “Quantum Optimization as a Service” integrated into buy-side stacks; quantum-enhanced robo-advisors for personalization at scale.

    - Dependencies/assumptions: ≥O(10^2–10^3) high-fidelity qubits, improved coherence, orchestration across multiple QPUs, error mitigation; integration with risk platforms; regulatory approval for production use.

  - Cross-cluster coupling integration and better global refinement

    - Use Lagrangian/ADMM-style coordination, cutting planes, or learned surrogates to reincorporate omitted inter-cluster interactions during recombination.

    - Dependencies/assumptions: algorithmic advances; more shots/iterations; tighter hardware–software co-design.

- Energy and infrastructure

  - Larger-scale unit commitment, network reconfiguration, and maintenance planning via clusterized QUBOs

    - Map grid regions or asset sets to clusters; use quantum subproblem solvers with improved fidelity to approach near real-time operations.

    - Dependencies/assumptions: faithful QUBO formulations, stronger devices, domain-specific constraints (ramping, reserves) embedded.

- Healthcare and life sciences

  - Biomarker/gene-set selection and cohort selection under budget constraints

    - Use correlation or mutual-information graphs to cluster features; apply the hybrid pipeline to select K features/samples.

    - Dependencies/assumptions: validated mappings and regulatory oversight; privacy-preserving workflows.

- Telecom/transport/logistics

  - Network design and augmentation (adding K links), facility placement at city scale, routing with selection constraints

    - Dependencies/assumptions: problem-specific encodings; larger quantum resources; integration with digital twins.

- Advanced tooling and orchestration

  - Automated decomposition engine and QPU orchestration

    - Parallel execution of clusters across heterogeneous QPUs; auto-tuning of pruning thresholds; adaptive bias updates; error mitigation pipelines.

    - Dependencies/assumptions: cloud multi-QPU schedulers; stable APIs, cost-effective access.

- Policy and standards

  - Model risk, auditability, and fairness standards for quantum-assisted decision systems

    - Traceability across decomposition choices, pruning levels, and post-processing steps; standardized reporting for backtesting and stress scenarios.

    - Dependencies/assumptions: cross-industry consortia; alignment with existing AI/ML governance.

- Daily life (consumer finance)

  - Quantum-enhanced personalized portfolio recommendations in retail apps

    - Tailored K-name portfolios balancing risk/return and preferences, re-optimized on schedule.

    - Dependencies/assumptions: mature, affordable quantum backends; clear explainability; compliance and consumer protection.

Notes on Key Assumptions and Dependencies (common across applications)

- Data: Sufficient time series length for RMT denoising and stable correlation estimates; stationarity assumptions may need stress testing.

- Hardware: Trapped-ion or equivalent gate-model devices with near all-to-all connectivity; improved fidelities and throughput for frequent runs.

- Algorithms: Robust penalty tuning for QUBO constraints; effective post-processing remains essential; cross-cluster interactions currently approximate.

- Integration: Secure, auditable pipelines; compatibility with enterprise risk/IT stacks; cost and latency acceptable for business SLAs.

Glossary

- Acousto-optic deflectors (AODs): Optical devices that steer laser beams to address individual ions in trapped-ion systems, improving control and alignment. "These QPUs leverage advanced optical control systems based on acousto-optic deflectors (AODs) to enable independent beam steering to individual ions,"

- Adiabatic path: A slowly varying interpolation between an initial and target Hamiltonian used to guide quantum evolution toward optimal solutions. "BF-DCQO builds up on an adiabatic path from a biased initial Hamiltonian to the target Hamiltonian ,"

- All-to-all two-qubit interactions: The ability (or near-ability) for any pair of qubits to interact directly, enabling dense coupling graphs without SWAP overhead. "near–all-to-all two-qubit interactions mediated by collective motional modes."

- Bias-field digitized counterdiabatic quantum optimization (BF-DCQO): A non-variational quantum optimization algorithm that augments digitized counterdiabatic evolution with adaptive bias fields to improve sampling of low-energy solutions. "Bias-field digitized counterdiabatic quantum optimization (BF-DCQO) is a recent algorithm proposed to tackle optimization problems encoded as Ising problems"

- Cardinality constraint: A restriction requiring exactly K variables (e.g., assets) to be active, often enforced via penalties or specialized search. "portfolio selection with cardinality constraints is a prototypical challenging problem"

- Cardinality-preserving swap local search: A local optimization method that improves solutions while maintaining a fixed number of selected items by swapping active/inactive variables. "fast repair followed by a cardinality-preserving swap local search."

- Community detection: Algorithms that partition a graph or correlation network into clusters (communities) of strongly related nodes, aiding decomposition. "A community detection method is applied to , producing an initial hard partition of assets."

- Counterdiabatic (CD) protocol: A technique that modifies the Hamiltonian during evolution to suppress diabatic transitions, enabling faster adiabatic-like processes. "Then, a counterdiabatic (CD) protocol is needed to overcome this issue,"

- Digitized counterdiabatic quantum optimization (DCQO): A gate-based implementation of counterdiabatic evolution where the dominant CD term is digitally simulated to target low-energy states. "Doing this effective time evolution in a digital quantum computer is known as digitized counterdiabatic quantum optimization (DCQO) in the impulse regime"

- Entangling ZZ gates: Two-qubit operations implementing a ZZ interaction, foundational for constructing Ising-like couplings in trapped-ion circuits. "enabling a gate set composed of arbitrary single-qubit rotations and entangling gates."

- First-improvement rule: A heuristic in local search that accepts the first move yielding a strict improvement, reducing search cost while ensuring progress. "The first swap that yields a strict improvement is accepted immediately (first-improvement rule),"

- Hamming weight: The number of bits set to 1 in a binary string; here, the number of selected assets. "Let denote the Hamming weight of ,"

- Hardware-aware decomposition: A strategy that explicitly tailors problem partitioning to the practical limits (e.g., qubit count) of the target quantum hardware. "experimentally demonstrate it on trapped-ion quantum processors using hardware-aware decomposition."

- Hyperfine ground states: Atomic energy levels used to encode qubits in trapped-ion systems, offering long coherence times. "36 qubits encoded in the hyperfine ground states of trapped ions,"

- Ising Hamiltonian: A spin-based energy function with local fields and pairwise couplings; its ground state encodes optimal solutions to mapped combinatorial problems. "Substitution yields an Ising Hamiltonian of the generic form"

- Leakage checks: Procedures that identify and discard runs where qubits leave the computational subspace, improving data quality. "the 64-Qubit Barium development system incorporates leakage checks to discard samples where quantum states have interacted with the environment."

- Louvain method: A modularity-based community detection algorithm used to partition correlation networks efficiently. "Apply a community-detection method (e.g.\ Louvain) to to obtain initial communities "

- Marchenko-Pastur spectral separation: An RMT-based technique that separates noise and structure in correlation matrices by analyzing eigenvalue spectra. "Marchenko-Pastur spectral separation"

- Mean-variance framework of Markowitz: A foundational portfolio theory balancing expected return and risk using covariance, often leading to quadratic objectives. "building upon the classical mean-variance framework of Markowitz"

- Nested commutator expansion: A series expansion using commutators to approximate counterdiabatic terms for accelerated adiabatic evolution. "We choose as the first order nested commutator expansion"

- Pauli interactions: Operations based on Pauli matrices (X, Y, Z) that define native gates and Hamiltonians in many quantum devices. "For quantum hardware that natively implements Pauli interactions, it is convenient to convert binary variables to Ising spins"

- Pauli product: A tensor product of Pauli operators used to decompose Hamiltonians into implementable gate sequences. "Decompose , with a Pauli product"

- Pauli-Z operator: The Z Pauli matrix acting on a qubit; in Ising mappings, it represents spin variables. "with the Pauli-Z operator for the -th spin."

- Quantum annealing: A quantum optimization approach that slowly varies a Hamiltonian to reach low-energy configurations, often in analog devices. "quantum annealing \cite{rosenberg2015solving, albash2018}"

- Quantum approximate optimization algorithm (QAOA): A variational gate-based algorithm alternating between cost and mixer operators to approximate optimal solutions. "the quantum approximate optimization algorithm (QAOA)~\cite{farhi2014},"

- Quantum processing unit (QPU): A quantum compute device analogous to a CPU/GPU, hosting qubits and implementing gates. "Forte and Forte Enterprise quantum processing units (QPUs)~\cite{Chen2024-co},"

- Random matrix theory (RMT): A statistical framework for modeling eigenvalue distributions, used here to denoise correlations. "Building on RMT-based correlation-matrix denoising and community detection,"

- Randomized benchmarking fidelities: Metrics assessing average gate performance by random sequences, characterizing noise and error rates. "demonstrated median direct randomized benchmarking fidelities for two-qubit gates of and ,"

- Surface linear Paul traps: Microfabricated ion traps using oscillating electric fields to confine ions in linear chains for quantum operations. "surface linear Paul traps."

- Trace norm: A matrix norm equal to the sum of singular values, used to quantify operator magnitudes in CD constructions. "Throughout, $\|\cdot\|$ denotes the trace norm."

- Trotterization: A method to approximate time evolution by splitting Hamiltonians into sequential exponentials of simpler terms. "The digitization of the time evolution operator can be done through Trotterization, yielding"

- Trotterized step: A single discrete approximation step in a Trotterized time evolution sequence. "Compute the Trotterized step"

- Two-photon Raman transitions: Laser-driven processes that implement qubit rotations and entangling interactions in trapped-ion systems. "Universal control is implemented through two-photon Raman transitions driven by 355 nm laser pulses"
