- The paper introduces UD-SfPNet, a unified framework that integrates polarization physics with deep learning to mitigate scattering effects in underwater imaging.

- It employs a three-module architecture, comprising a Polarization Parameter Network (PPN), a Descattering Network (DN), and a Normal Estimation Network (NEN), to jointly optimize descattering and surface normal estimation, improving reconstruction accuracy.

- Comprehensive evaluations show lower mean angular error in surface normal estimation and improved geometric fidelity compared with state-of-the-art baselines.

Structured Learning for Underwater 3D Reconstruction: UD-SfPNet

Introduction and Motivation

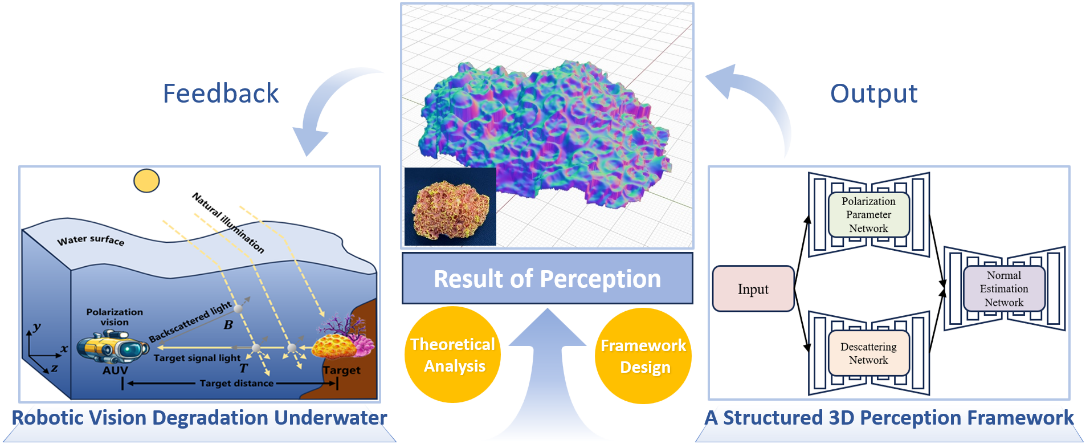

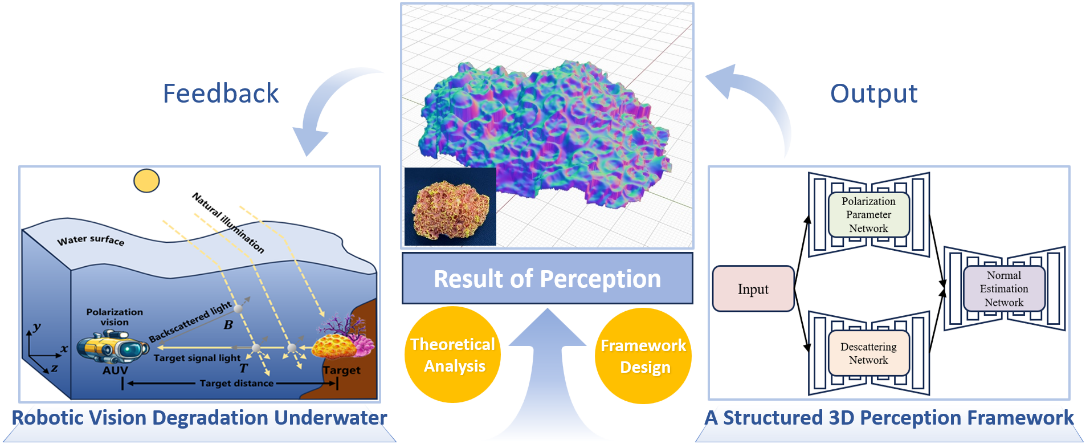

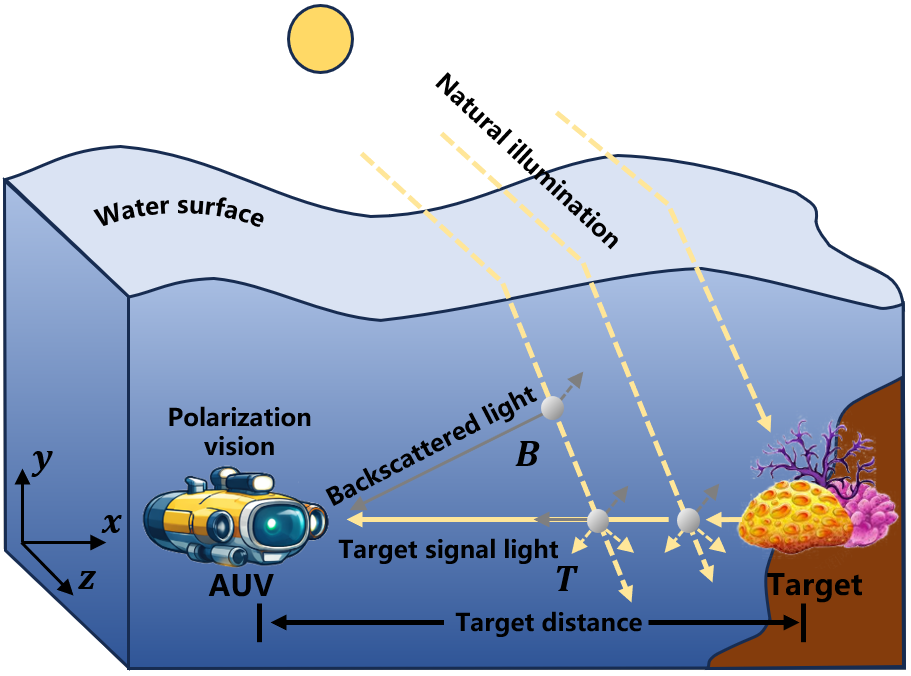

Scattering-induced degradation in underwater optical imaging is a significant obstacle to precise 3D geometric perception, particularly in robotic and submersible applications. Standard opto-electronic pipelines suffer from blurred textures, limited observable range, and increased noise caused by Mie scattering. Polarization imaging offers two advantages: it facilitates descattering, and it enables shape-from-polarization (SfP) reconstruction of 3D surface normals. This paper introduces UD-SfPNet, a unified framework that combines physical polarization cues with deep learning to optimize the entire descattering and reconstruction pipeline end to end, mitigating the error accumulation typical of sequential cascades.

Figure 1: Schematic of the proposed underwater-robot polarization 3D visual perception pipeline.

Polarization Principles and Deep Learning Integration

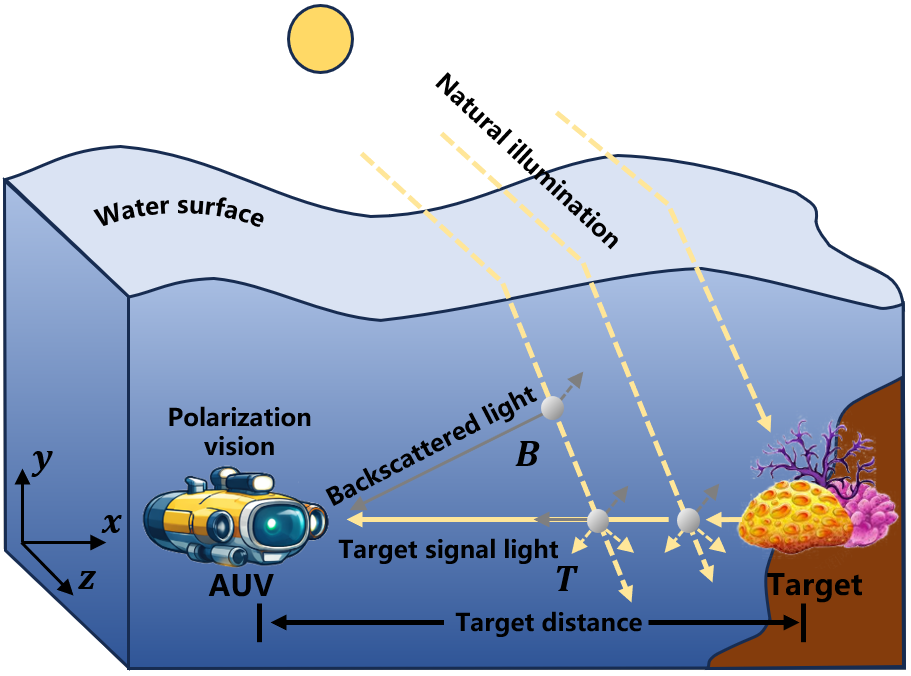

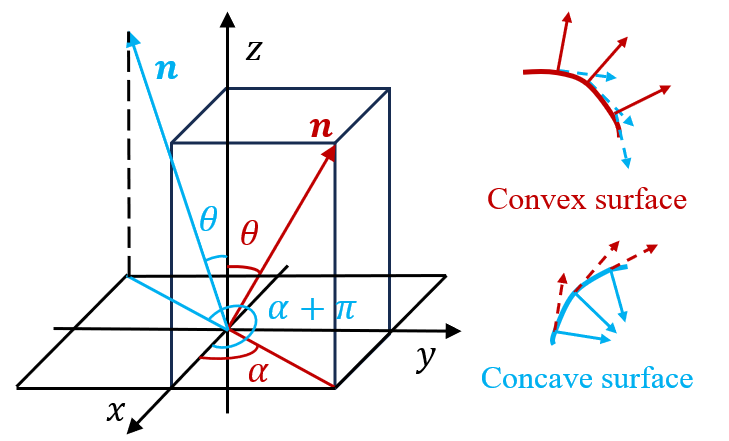

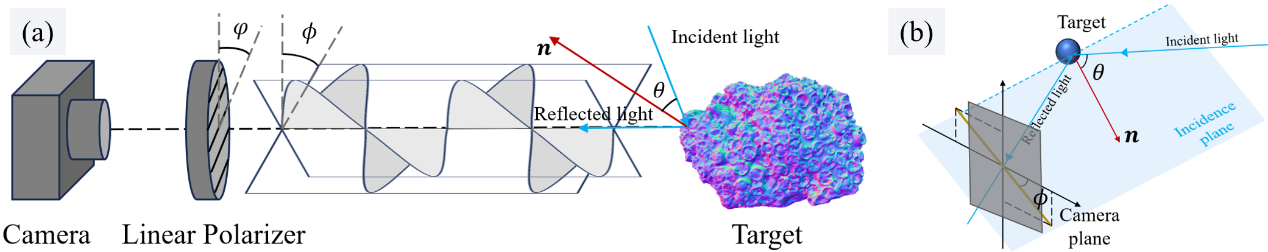

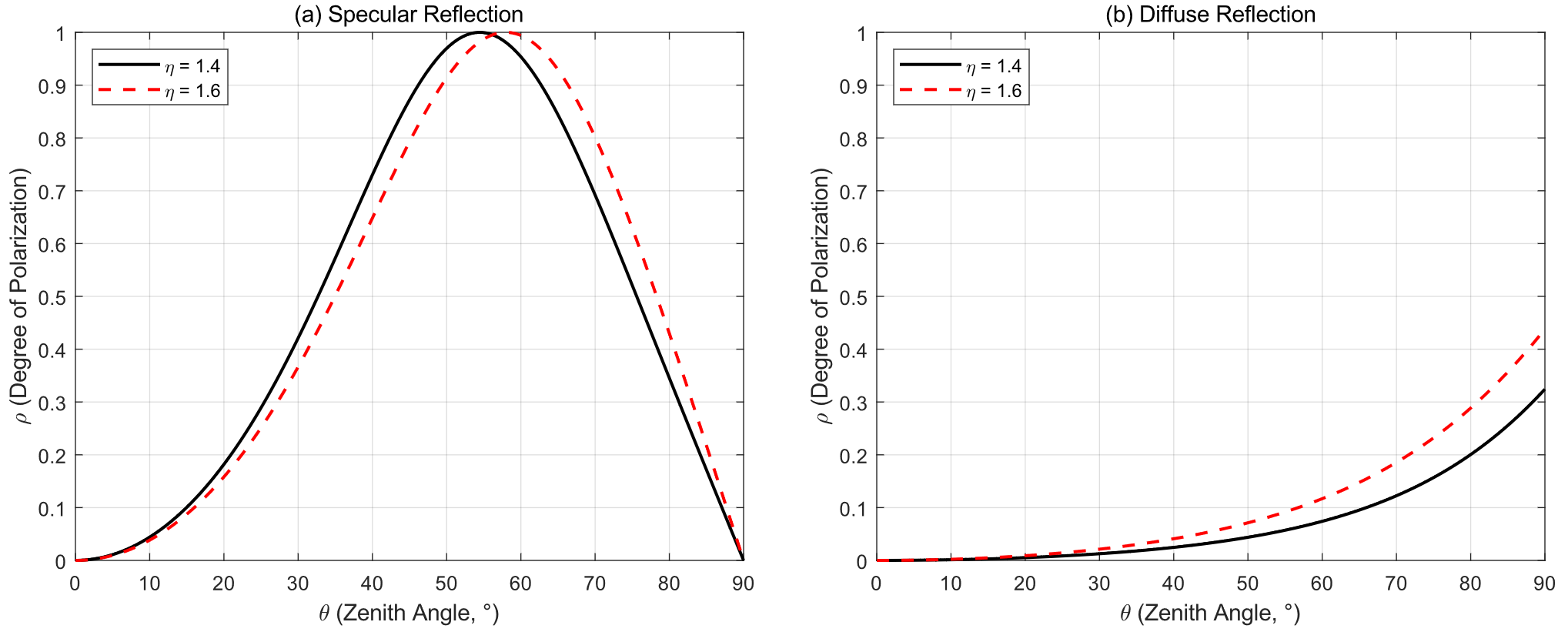

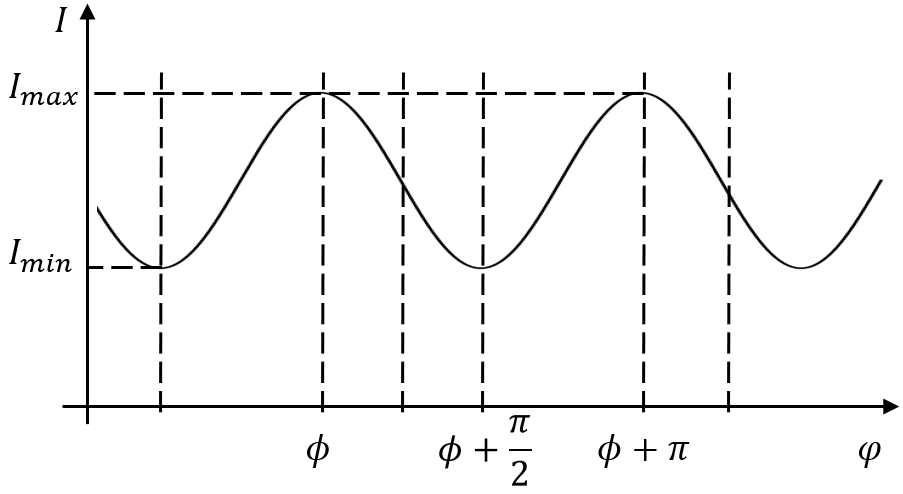

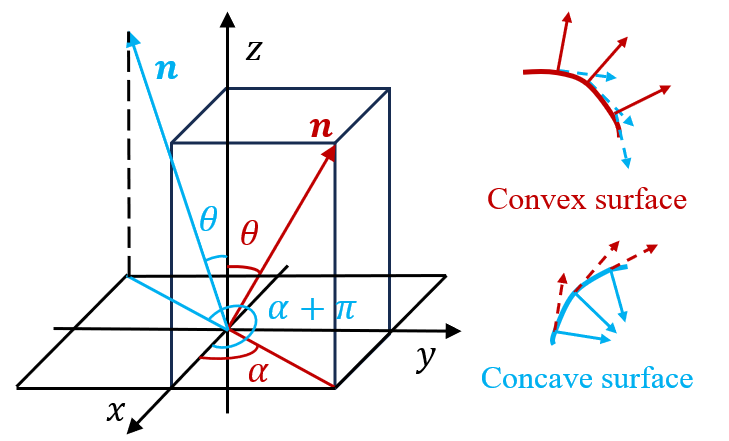

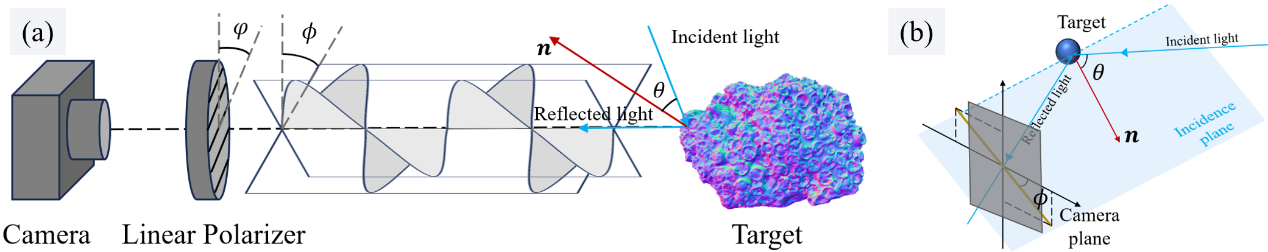

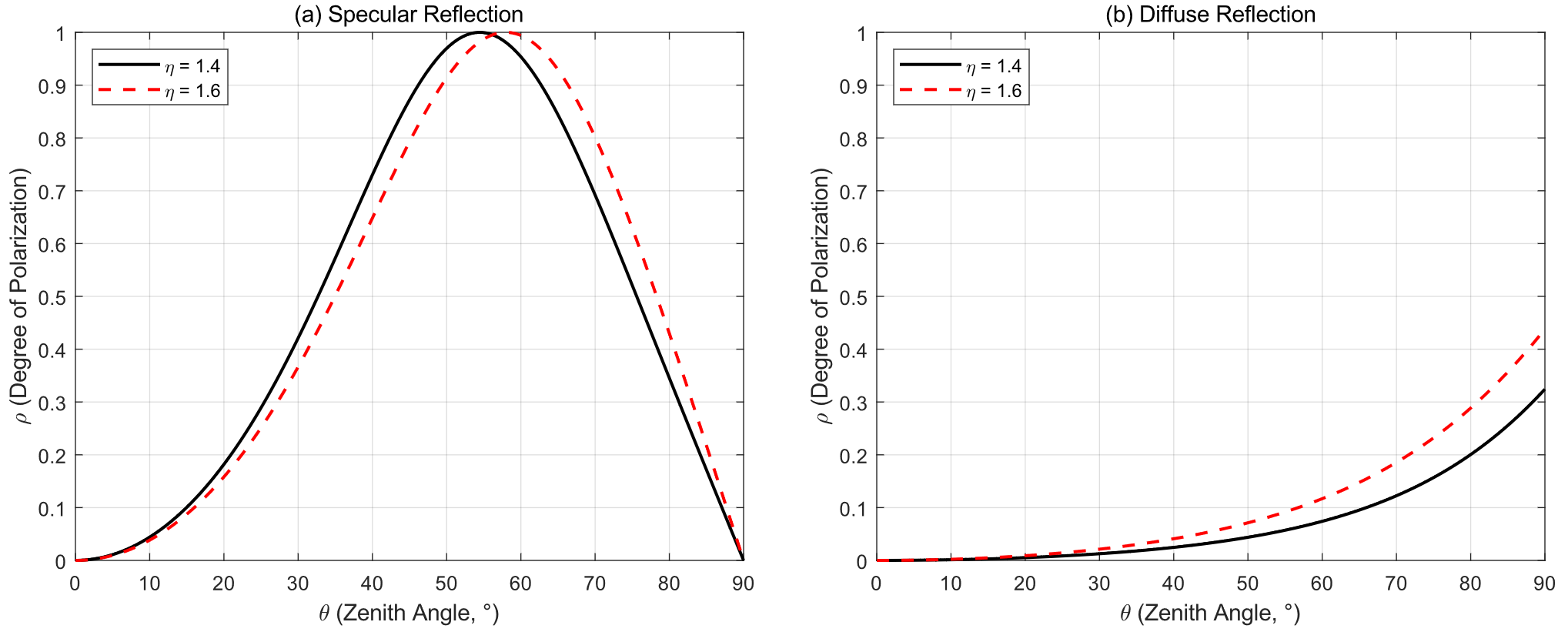

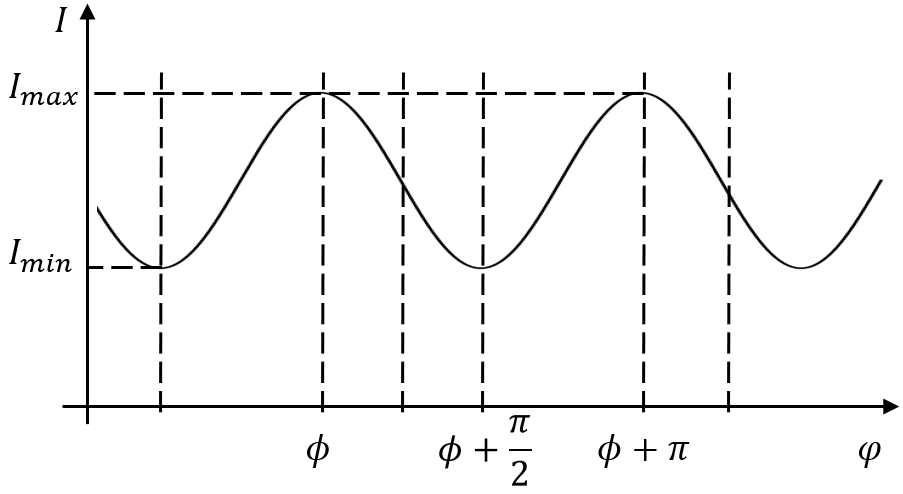

The fundamental principle underlying UD-SfPNet is that scattered light and light reflected by the target differ in their polarization states, so polarization can discriminate between them. Division-of-focal-plane polarization cameras capture intensity images at multiple polarizer angles in a single shot. These images are then converted into Stokes vectors that encode the polarization information. Surface normals are recovered from the degree of polarization (DoP) ρ and the angle of polarization (AoP) ϕ, which relate nonlinearly to the normal's zenith angle θ and azimuth angle α (Figures 2–6).

Figure 2: Underwater polarization imaging model.

Figure 3: Schematic of a minimal surface element in the microfacet model, illustrating local surface-normal ambiguity.

Figure 4: Physical model of polarization-based 3D reconstruction, showing the interplay between the global and camera views.

Figure 5: Relationship between ρ and θ for specular and diffuse reflection, the basis for ambiguity resolution.

Figure 6: Dependence of captured intensity I on polarizer rotation angle φ, critical for estimating AoP.
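The Stokes step can be sketched under a few assumptions: the common 0°, 45°, 90°, 135° micro-polarizer layout, ideal polarizers, and already demosaiced channels. Real cameras also need calibration, which this sketch omits. The helper `malus_intensity` models the sinusoidal dependence on polarizer angle φ shown in Figure 6, I(φ) = ½(S₀ + S₁ cos 2φ + S₂ sin 2φ). This is equivalent to a cosine of period π peaking at φ = ϕ. All function names are illustrative and do not come from the paper.

```python
import numpy as np

def stokes_from_dofp(i0, i45, i90, i135):
    """Linear Stokes components from images behind 0/45/90/135-degree polarizers."""
    s0 = 0.5 * (i0 + i45 + i90 + i135)  # total intensity (each orthogonal pair sums to S0)
    s1 = i0 - i90                        # horizontal vs. vertical preference
    s2 = i45 - i135                      # +45 vs. -45 degree preference
    return s0, s1, s2

def dop_aop(s0, s1, s2, eps=1e-8):
    """Degree of linear polarization rho and angle of polarization phi (radians, [0, pi))."""
    rho = np.sqrt(s1**2 + s2**2) / np.maximum(s0, eps)
    phi = np.mod(0.5 * np.arctan2(s2, s1), np.pi)
    return rho, phi

def malus_intensity(s0, s1, s2, polarizer_angle):
    """Intensity behind an ideal linear polarizer at polarizer_angle (radians)."""
    return 0.5 * (s0 + s1 * np.cos(2 * polarizer_angle) + s2 * np.sin(2 * polarizer_angle))

# Usage: synthesize the four channels for a known polarization state, then recover it.
s0_true, rho_true, phi_true = 1.0, 0.3, 0.6
s1_true = rho_true * s0_true * np.cos(2 * phi_true)
s2_true = rho_true * s0_true * np.sin(2 * phi_true)
frames = [malus_intensity(s0_true, s1_true, s2_true, np.deg2rad(a)) for a in (0, 45, 90, 135)]
rho_est, phi_est = dop_aop(*stokes_from_dofp(*frames))
print(round(float(rho_est), 6), round(float(phi_est), 6))  # 0.3 0.6
```

The same functions work unchanged on full (H, W) image arrays, since every operation is elementwise.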

Unlike classical SfP, which relies on strict material priors and auxiliary sensors, deep learning can learn these polarization relationships implicitly, improving robustness to ambiguities and material variability.
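The classical route can be sketched for comparison with the widely cited Fresnel-based closed forms for diffuse and specular DoP, which produce the curves of Figure 5. The refractive index n = 1.5 is exactly the kind of material prior mentioned above and is only a placeholder here. Underwater, the relevant value would be the object's index relative to water. The diffuse curve rises monotonically, so θ can be read off by table lookup, but the AoP fixes the azimuth only up to α = ϕ or ϕ + π. For specular reflection, the azimuth is offset by π/2 and ρ is not monotonic in θ, which gives two zenith candidates.

```python
import numpy as np

def dop_diffuse(theta, n=1.5):
    """Degree of polarization of diffusely reflected light vs. zenith angle theta."""
    s2 = np.sin(theta) ** 2
    num = (n - 1.0 / n) ** 2 * s2
    den = 2 + 2 * n**2 - (n + 1.0 / n) ** 2 * s2 + 4 * np.cos(theta) * np.sqrt(n**2 - s2)
    return num / den

def dop_specular(theta, n=1.5):
    """Degree of polarization of specular reflection (reaches 1 at Brewster's angle)."""
    s2 = np.sin(theta) ** 2
    num = 2 * s2 * np.cos(theta) * np.sqrt(n**2 - s2)
    den = n**2 - s2 - n**2 * s2 + 2 * s2**2
    return num / den

def zenith_from_diffuse_dop(rho, n=1.5, samples=2048):
    """Invert the monotonically increasing diffuse curve by table lookup."""
    thetas = np.linspace(0.0, np.pi / 2, samples)
    return np.interp(rho, dop_diffuse(thetas, n), thetas)

def diffuse_normal_candidates(theta, phi):
    """Both normals consistent with a diffuse AoP: azimuth alpha = phi or phi + pi."""
    candidates = []
    for alpha in (phi, phi + np.pi):
        candidates.append(np.stack([np.sin(theta) * np.cos(alpha),
                                    np.sin(theta) * np.sin(alpha),
                                    np.cos(theta)], axis=-1))
    return candidates

# Usage: forward-model a 40-degree zenith angle, invert it, and list both normals.
theta_true = np.deg2rad(40.0)
theta_est = zenith_from_diffuse_dop(dop_diffuse(theta_true))
n_a, n_b = diffuse_normal_candidates(theta_est, phi=0.6)
print(np.rad2deg(theta_est))  # approximately 40
```

The two returned normals differ only in the sign of their x and y components. Choosing between them requires extra cues, and this is the ambiguity that a learned model is expected to resolve implicitly.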

Network Architecture and Module Design

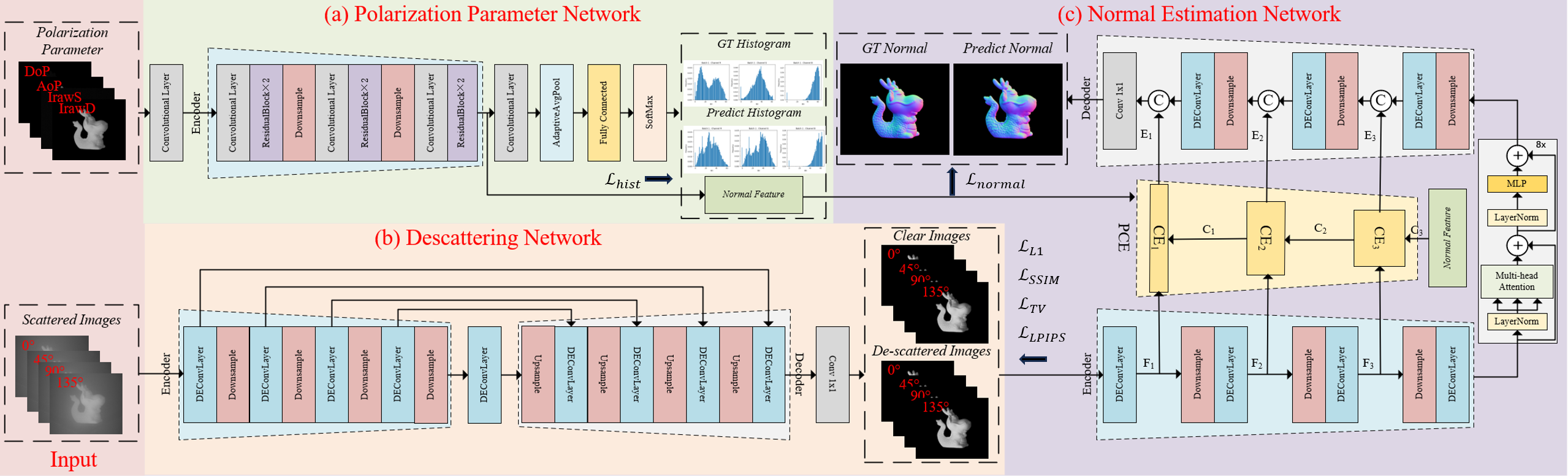

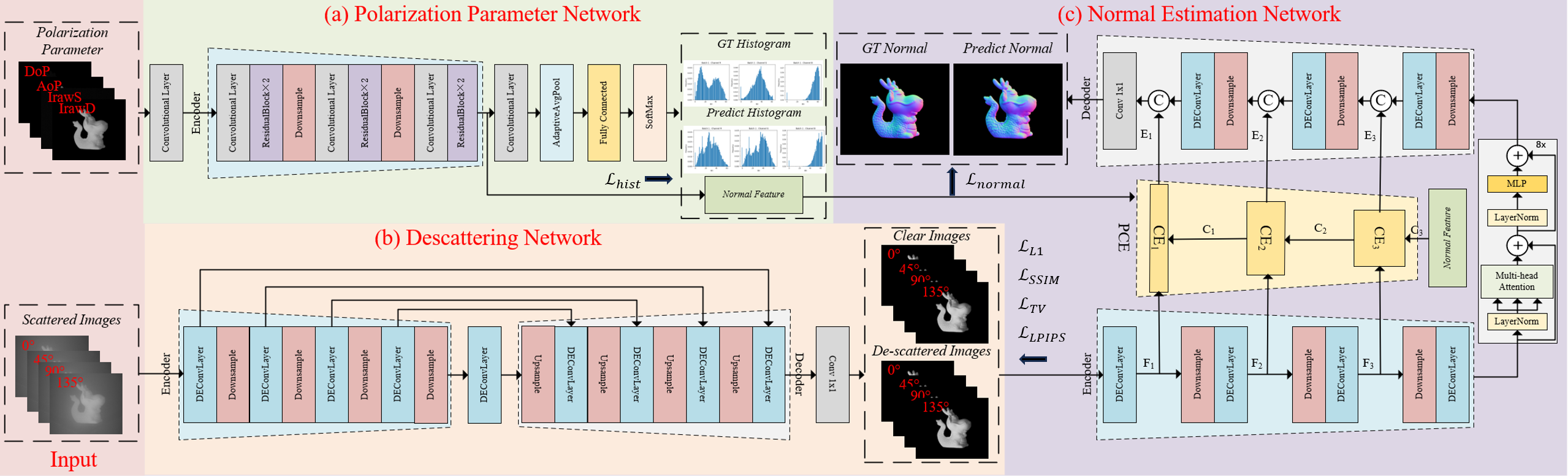

UD-SfPNet comprises three tightly integrated components: the Polarization Parameter Network (PPN), Descattering Network (DN), and Normal Estimation Network (NEN), collectively enabling end-to-end optimization from raw polarization images to surface normal maps.

Figure 7: Overview architecture of UD-SfPNet for underwater polarization-based 3D reconstruction.
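The summary does not say how raw polarization cues are packed before they reach the PPN. The sketch below shows one common choice, purely as an assumption: stack normalized intensity, DoP, and the AoP encoded as (cos 2ϕ, sin 2ϕ). AoP is π-periodic, so feeding ϕ directly would place nearly identical orientations (ϕ ≈ 0 and ϕ ≈ π) at opposite ends of the input range. The function name is hypothetical.

```python
import numpy as np

def encode_polarization_cues(s0, rho, phi, eps=1e-8):
    """Stack hypothetical network input channels from per-pixel polarization cues.

    s0, rho, phi: (H, W) arrays of intensity, DoP and AoP (radians).
    Returns a (4, H, W) array: [normalized intensity, DoP, cos 2phi, sin 2phi].
    """
    intensity = s0 / max(float(s0.max()), eps)  # scale intensity into [0, 1]
    dop = np.clip(rho, 0.0, 1.0)                 # noise can push measured DoP above 1
    # AoP is pi-periodic: map 2*phi onto the unit circle so phi ~ 0 and phi ~ pi agree.
    return np.stack([intensity, dop, np.cos(2 * phi), np.sin(2 * phi)])

# Usage: two pixels with almost the same orientation but AoP values near 0 and near pi.
s0 = np.array([[1.0, 2.0]])
rho = np.array([[0.2, 0.2]])
phi = np.array([[0.01, np.pi - 0.01]])
feats = encode_polarization_cues(s0, rho, phi)
print(feats.shape)  # (4, 1, 2)
```

In this encoding, the two pixels differ by about 0.04 in the angle channels, whereas their raw ϕ values differ by about 3.12.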

- PPN encodes polarization features (DoP, AoP, specular/diffuse components) into a global representation of surface normal distributions.

- DN uses a U-Net architecture for descattering. It is supervised by pixel-wise, structural (SSIM), total variation, and LPIPS losses, which enhance contrast and sharpen polarization images degraded by scattering noise.

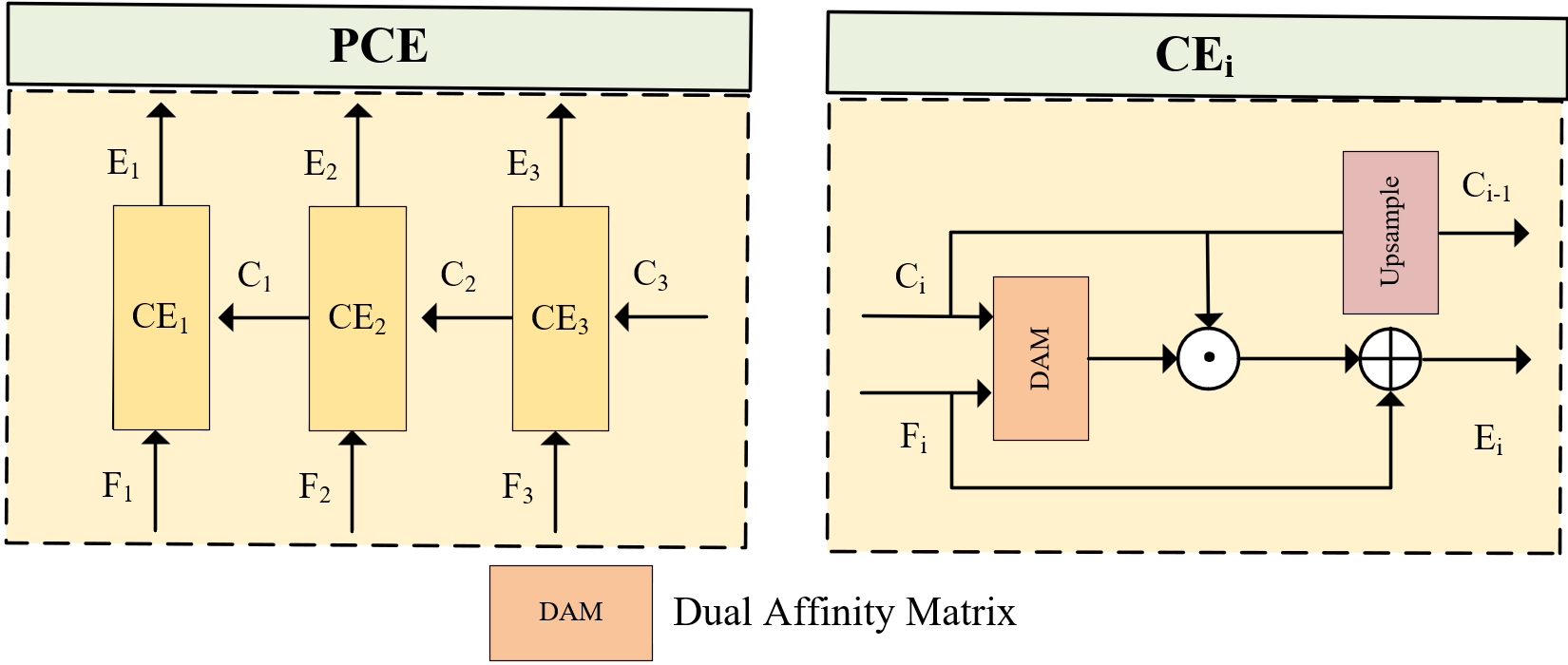

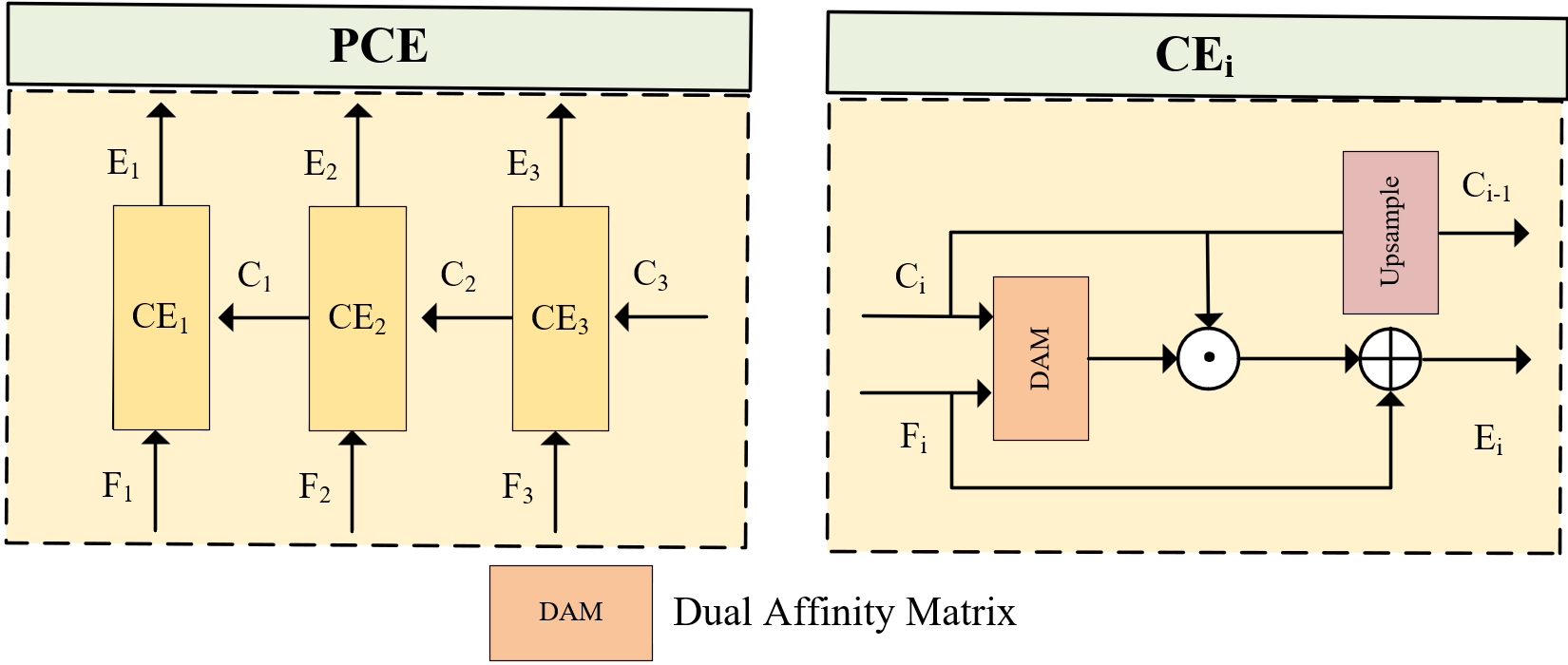

- NEN fuses the outputs of PPN and DN, using a multi-head attention mechanism and a pyramid color embedding (PCE) module to recover high-fidelity surface normals.
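The DN objective can be sketched as a weighted sum of the four losses named above. This illustration makes three simplifications. SSIM is computed over a single global window rather than the usual sliding Gaussian window. LPIPS requires a pretrained network, so it is passed in as a precomputed value. The weights in `w` are hypothetical, not the paper's.

```python
import numpy as np

def global_ssim(x, y, data_range=1.0):
    """Single-window SSIM over the whole image (a simplification of windowed SSIM)."""
    c1, c2 = (0.01 * data_range) ** 2, (0.03 * data_range) ** 2
    mx, my = x.mean(), y.mean()
    vx, vy = x.var(), y.var()
    cov = ((x - mx) * (y - my)).mean()
    return ((2 * mx * my + c1) * (2 * cov + c2)) / ((mx**2 + my**2 + c1) * (vx + vy + c2))

def total_variation(x):
    """Anisotropic TV: mean absolute difference between vertical and horizontal neighbors."""
    return np.abs(np.diff(x, axis=0)).mean() + np.abs(np.diff(x, axis=1)).mean()

def descattering_loss(pred, target, lpips_value=0.0, w=(1.0, 0.5, 0.01, 0.1)):
    """Pixel (L1) + structural (1 - SSIM) + TV + perceptual terms; weights are hypothetical."""
    w_pix, w_ssim, w_tv, w_lpips = w
    l_pix = np.abs(pred - target).mean()
    l_ssim = 1.0 - global_ssim(pred, target)
    l_tv = total_variation(pred)  # TV regularizes the prediction only
    return w_pix * l_pix + w_ssim * l_ssim + w_tv * l_tv + w_lpips * lpips_value

# Usage: a noisy estimate scores worse than the clean target itself.
rng = np.random.default_rng(0)
target = rng.random((32, 32))
noisy = np.clip(target + 0.2 * rng.standard_normal(target.shape), 0.0, 1.0)
print(descattering_loss(target, target) < descattering_loss(noisy, target))  # True
```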

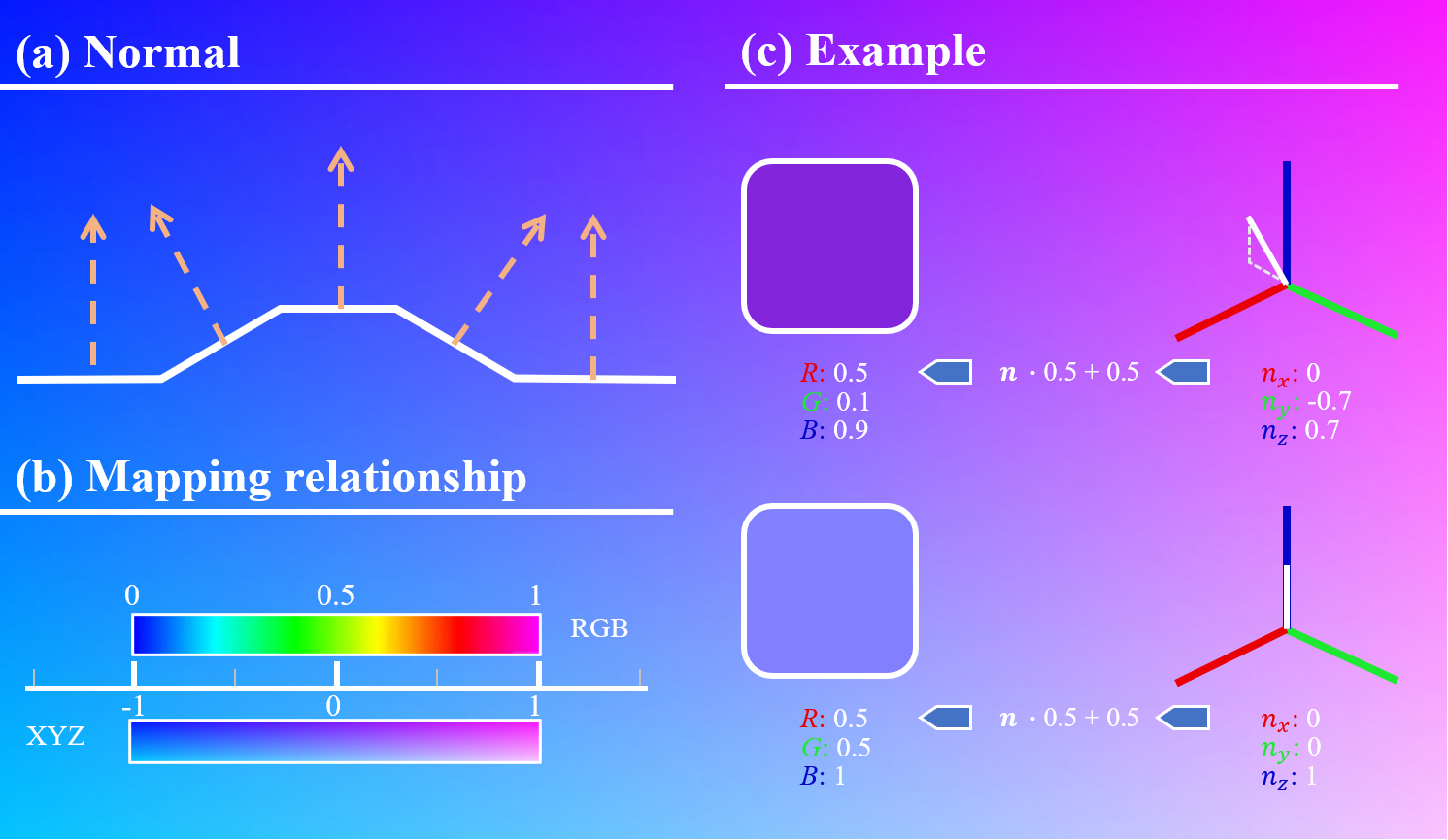

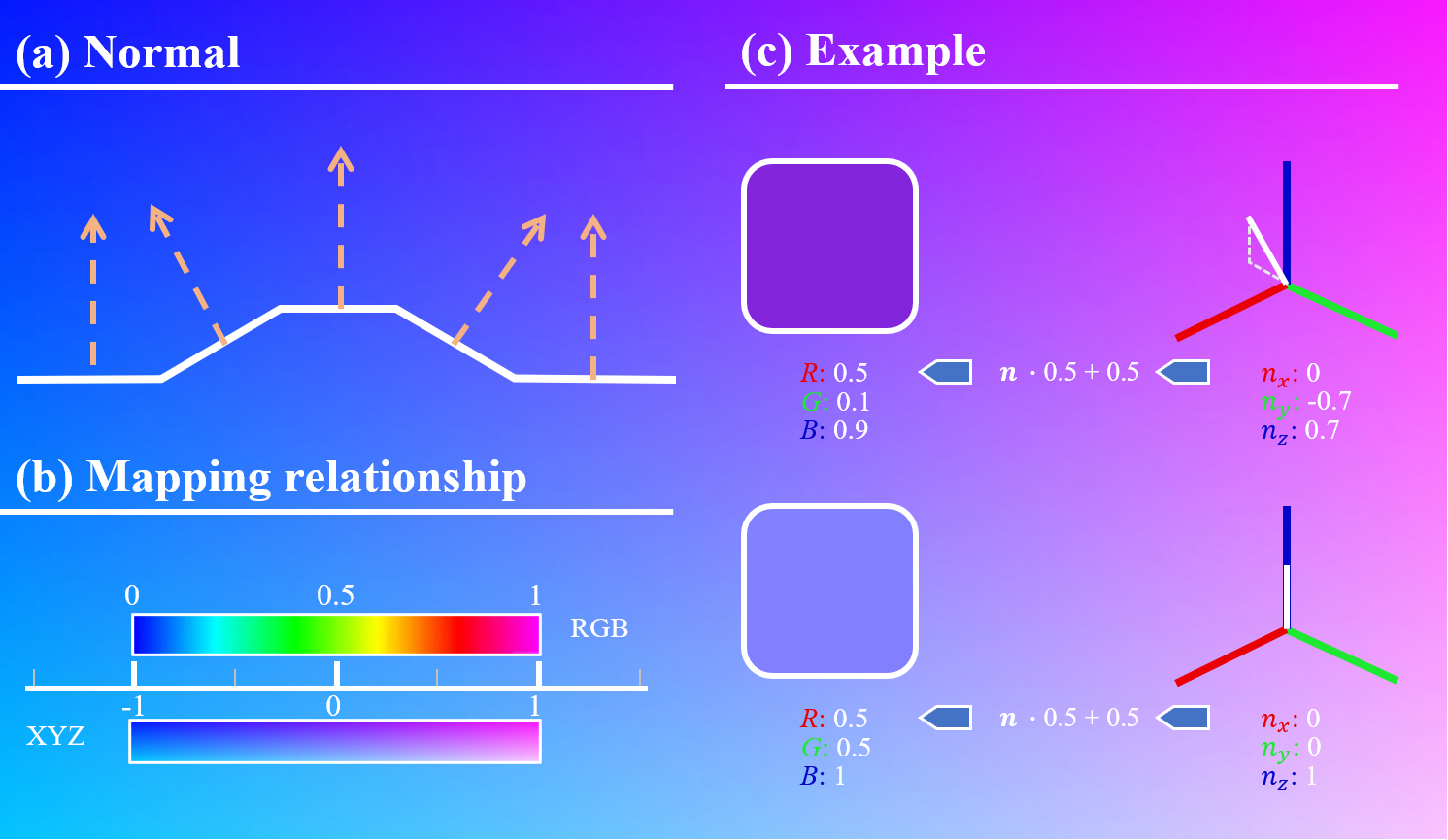

The color embedding mechanism exploits the standard RGB encoding of normal vectors in baked 2D normal maps, where each color channel corresponds to one normal component. This one-to-one color-geometry correspondence promotes feature consistency and geometric stability (Figure 8, Figure 9).

Figure 8: RGB color encoding mechanism of baked normal maps, illustrating geometry-to-color mapping.

Figure 9: Adaptation of color embedding module for normal map consistency.
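The RGB encoding behind Figure 8 usually maps each normal component from [−1, 1] to a color value in [0, 255] with a per-channel affine map. The minimal sketch below assumes that convention, with +z pointing toward the viewer, so a camera-facing surface becomes the familiar (128, 128, 255) lavender. The paper's exact convention is not stated here.

```python
import numpy as np

def normals_to_rgb(normals):
    """Encode unit normals (..., 3) in [-1, 1] as 8-bit RGB: c = round((n + 1) / 2 * 255)."""
    return np.round((np.asarray(normals, dtype=np.float64) + 1.0) * 0.5 * 255.0).astype(np.uint8)

def rgb_to_normals(rgb):
    """Decode 8-bit RGB back to unit normals, renormalizing to undo quantization drift."""
    n = np.asarray(rgb, dtype=np.float64) / 255.0 * 2.0 - 1.0
    return n / np.linalg.norm(n, axis=-1, keepdims=True)

# Usage: a camera-facing normal maps to the familiar lavender of baked normal maps.
print(normals_to_rgb([0.0, 0.0, 1.0]))  # [128 128 255]
```

Because the map is affine per channel, the decode step only needs a renormalization to absorb the 8-bit quantization error. Neighboring colors therefore correspond to neighboring normal directions.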

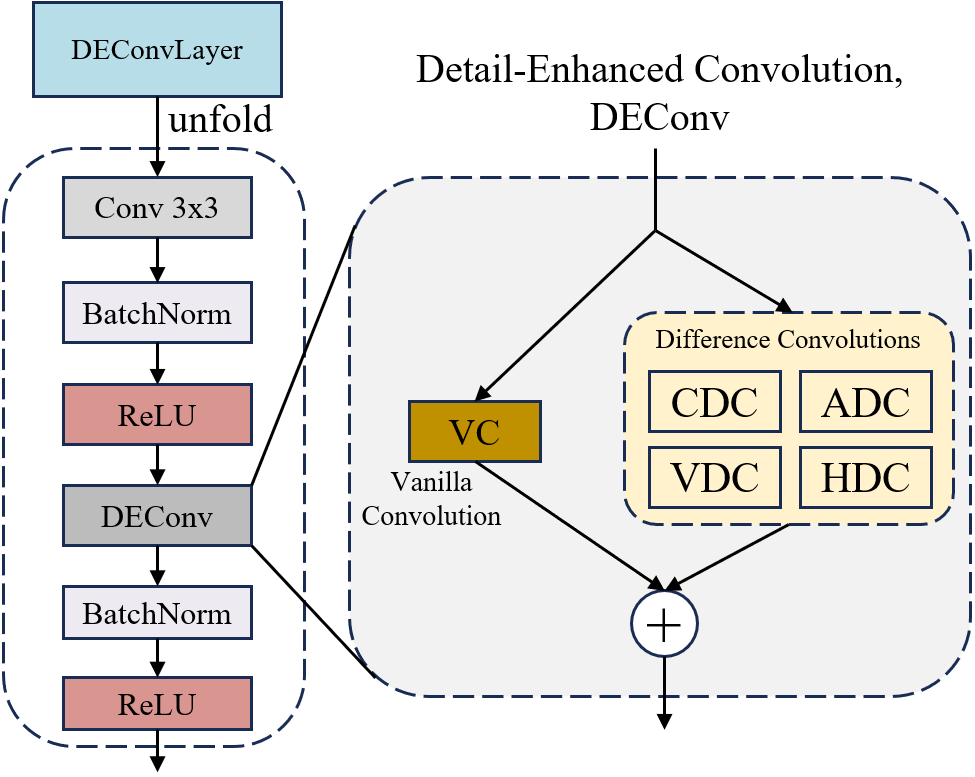

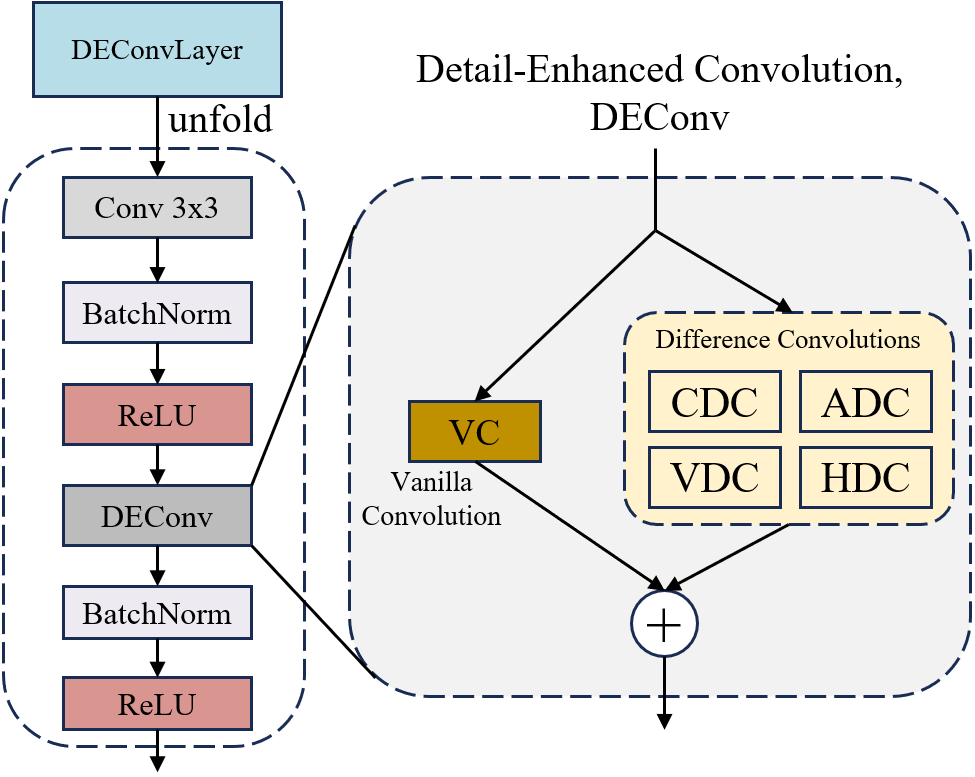

Furthermore, the DEConv module (Figure 10) introduces directional difference convolutions that explicitly model the high-frequency geometric details critical for accurate normal recovery in regions with pronounced local surface variation. Figure 11 shows comparative error heatmaps that highlight its contribution.

Figure 10: Schematic illustration of the DEConv module: explicit modeling of local pixel differences and directional changes.

Figure 11: Comparative error heatmaps highlighting the significance of detail-enhanced convolution in geometric reconstructions.
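The re-parameterization idea common to difference-convolution designs can be shown in a few lines, assuming DEConv follows that pattern. The exact branch set is not given here. A central difference branch computes Σ wᵢ(xᵢ − x_c) over each 3×3 neighborhood. This equals an ordinary convolution whose center tap is reduced by the kernel sum. Because convolution is linear, parallel vanilla and difference branches can then be merged into one kernel at inference. The helper names and the horizontal kernel are hypothetical stand-ins, not the paper's definitions.

```python
import numpy as np

def conv3x3(x, k):
    """'Same'-size 3x3 cross-correlation with zero padding, as in CNN conv layers."""
    xp = np.pad(x, 1)
    h, w = x.shape
    out = np.zeros_like(x, dtype=np.float64)
    for di in range(3):
        for dj in range(3):
            out += k[di, dj] * xp[di:di + h, dj:dj + w]
    return out

def central_difference_conv(x, k):
    """Explicit form: sum_i k_i * (x_i - x_center) over each 3x3 neighborhood."""
    xp = np.pad(x, 1)
    h, w = x.shape
    out = np.zeros_like(x, dtype=np.float64)
    for di in range(3):
        for dj in range(3):
            out += k[di, dj] * (xp[di:di + h, dj:dj + w] - x)
    return out

def cdc_kernel(k):
    """Equivalent vanilla kernel for central difference: subtract the kernel sum at the center."""
    k2 = np.asarray(k, dtype=np.float64).copy()
    k2[1, 1] -= k2.sum()
    return k2

def horizontal_difference_kernel(col_w):
    """Hypothetical directional kernel: weighted left-column minus right-column difference."""
    k = np.zeros((3, 3))
    k[:, 0] = col_w
    k[:, 2] = -np.asarray(col_w)
    return k

# Usage: parallel branches (training-time form) equal one merged kernel (inference-time form).
rng = np.random.default_rng(0)
x = rng.random((16, 16))
k_vanilla, k_cd = rng.standard_normal((3, 3)), rng.standard_normal((3, 3))
k_hd = horizontal_difference_kernel(rng.standard_normal(3))
y_branches = conv3x3(x, k_vanilla) + central_difference_conv(x, k_cd) + conv3x3(x, k_hd)
y_merged = conv3x3(x, k_vanilla + cdc_kernel(k_cd) + k_hd)
print(np.allclose(y_branches, y_merged))  # True
```

On a constant image, both difference branches output zero away from the border. This is why they respond only to local variation, the high-frequency detail the module is meant to capture.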

Joint Optimization and Descattering Efficacy

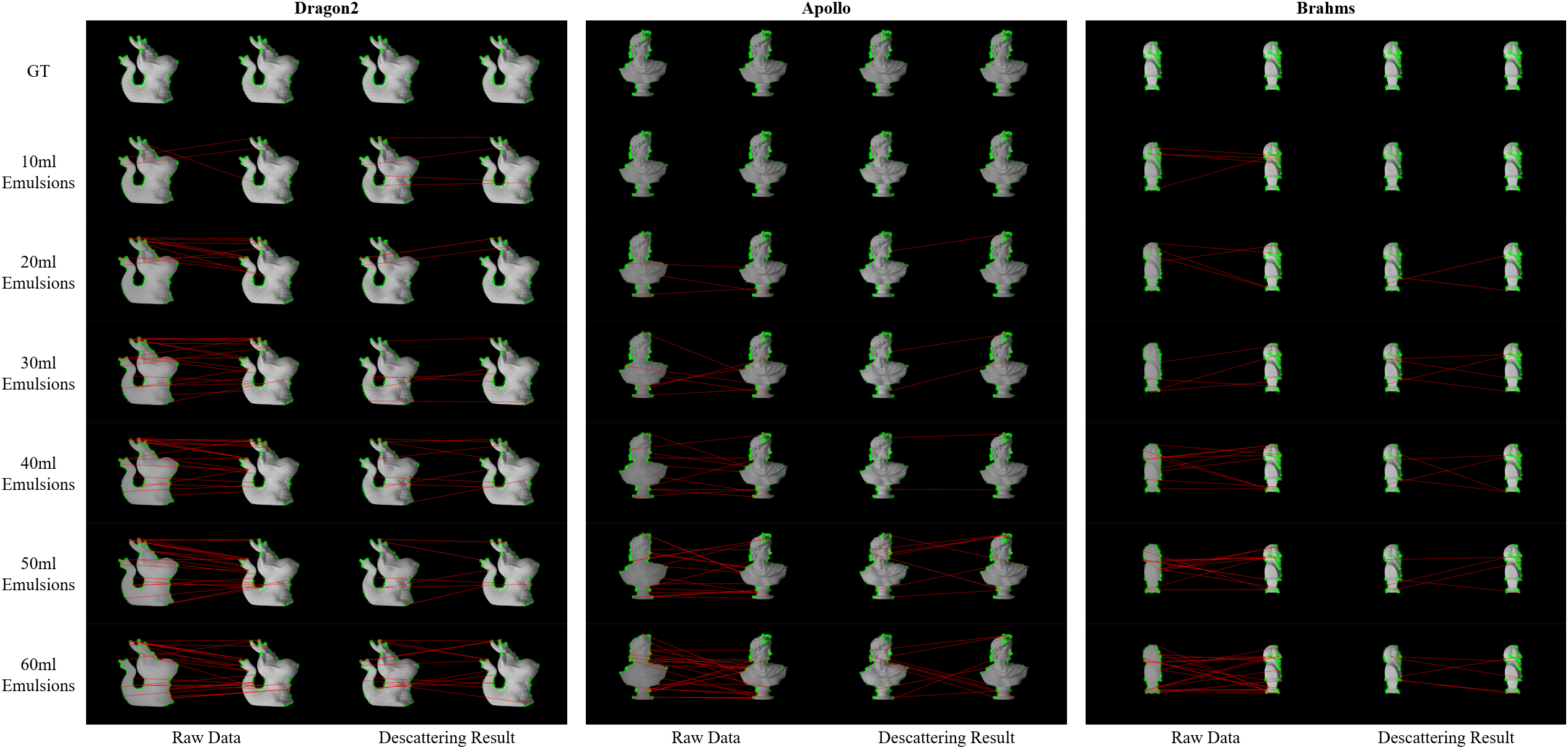

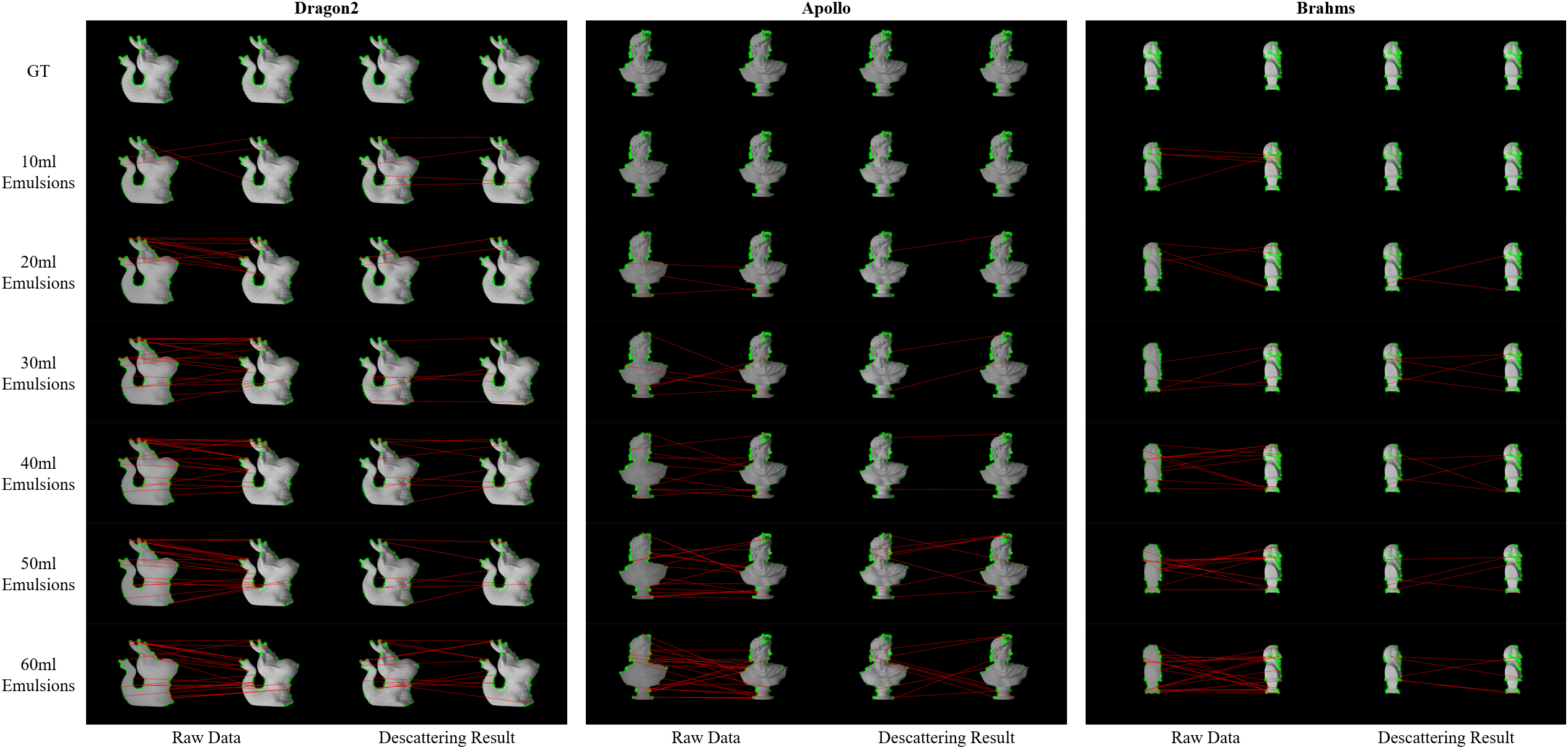

End-to-end training of UD-SfPNet avoids the error accumulation inherent in cascaded pipelines and aligns low-level descattering with high-level geometric inference. Descattering performance, evaluated with ORB-based false match rates (Figure 12), shows a marked reduction in incorrect keypoint correspondences after descattering. Quantitative image-quality metrics (higher PSNR and SSIM, lower LPIPS) improve substantially when descattering is explicitly modeled, confirming enhanced visual quality in the target regions.

Figure 12: Descattering evaluation via ORB false match rates; reduction in incorrect matches validates effectiveness.
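The false match rate is not defined precisely in this summary, so the sketch below assumes one plausible definition. Matches would typically come from ORB, for example OpenCV's `cv2.ORB_create` with a Hamming-distance `cv2.BFMatcher`. A match counts as false when its source keypoint, mapped through a known ground-truth homography, lands more than a pixel tolerance (`tol_px`, a hypothetical parameter) from its matched point. PSNR, one of the image-quality metrics above, is included using its standard definition.

```python
import numpy as np

def psnr(pred, target, data_range=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(MAX^2 / MSE)."""
    mse = np.mean((np.asarray(pred, float) - np.asarray(target, float)) ** 2)
    return float("inf") if mse == 0 else float(10.0 * np.log10(data_range**2 / mse))

def false_match_rate(src_pts, dst_pts, H, tol_px=3.0):
    """Fraction of matches whose source point, mapped by ground-truth homography H,
    lands more than tol_px pixels from its matched destination point."""
    src_h = np.hstack([src_pts, np.ones((len(src_pts), 1))])  # homogeneous coordinates
    proj = src_h @ H.T
    proj = proj[:, :2] / proj[:, 2:3]                          # back to pixel coordinates
    err = np.linalg.norm(proj - dst_pts, axis=1)
    return float(np.mean(err > tol_px))

# Usage: ground truth is a pure 5-pixel shift along x; one of four matches is wrong.
H = np.array([[1.0, 0.0, 5.0],
              [0.0, 1.0, 0.0],
              [0.0, 0.0, 1.0]])
src = np.array([[0.0, 0.0], [10.0, 10.0], [20.0, 5.0], [7.0, 7.0]])
dst = src + np.array([5.0, 0.0])
dst[3] = [40.0, 2.0]  # an incorrect correspondence
print(false_match_rate(src, dst, H))  # 0.25
print(psnr(np.full((4, 4), 0.1), np.zeros((4, 4))))  # about 20 dB
```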

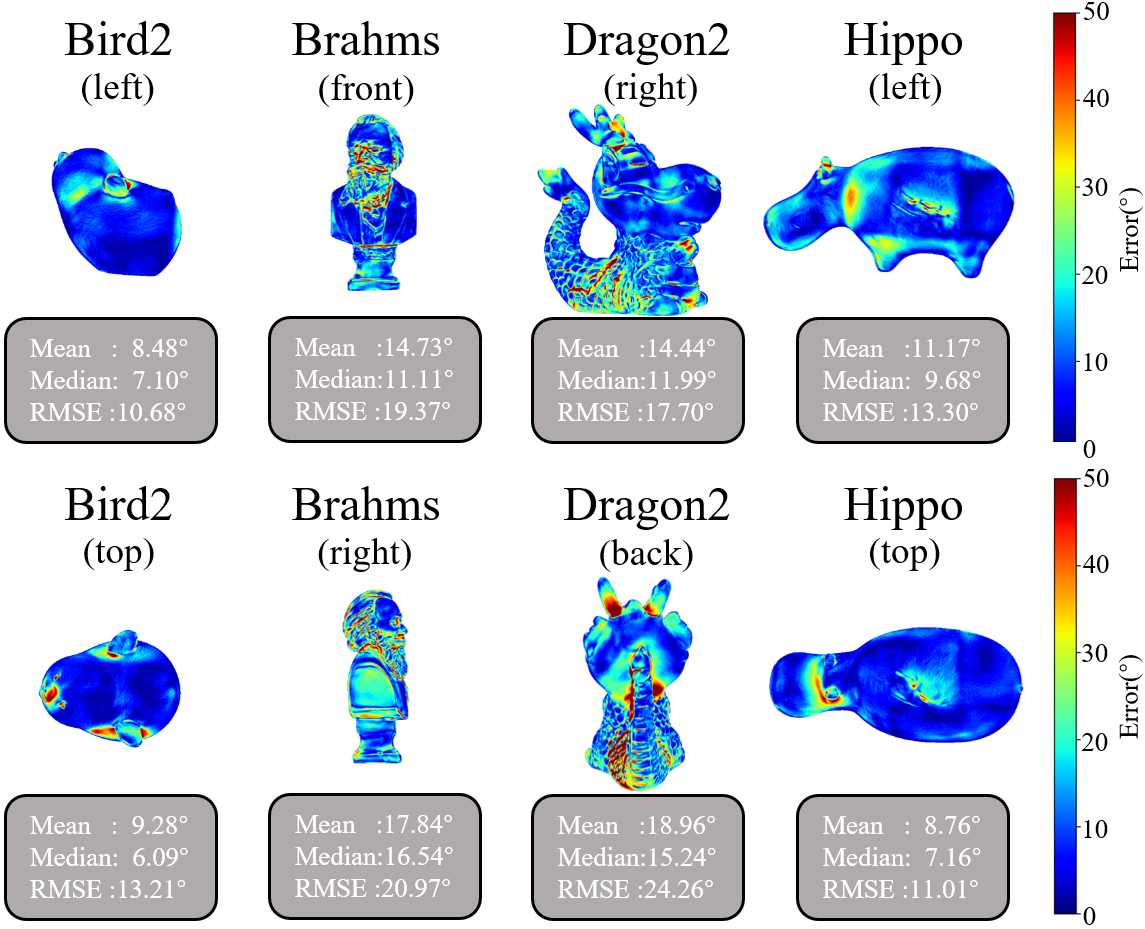

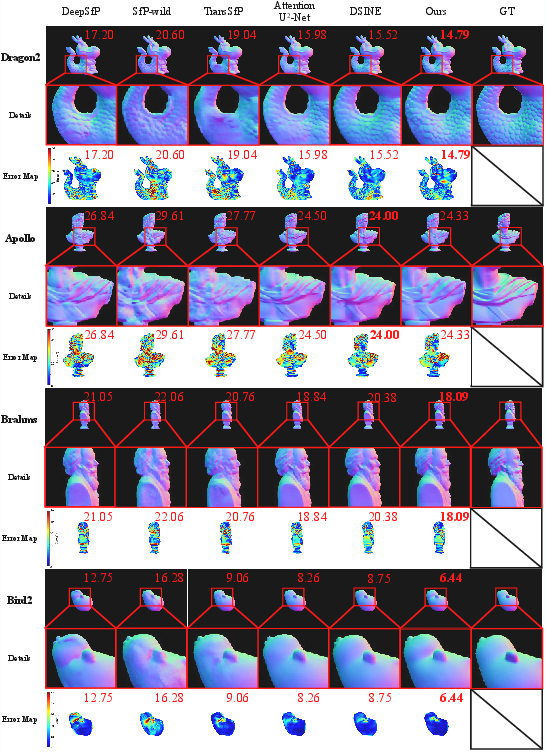

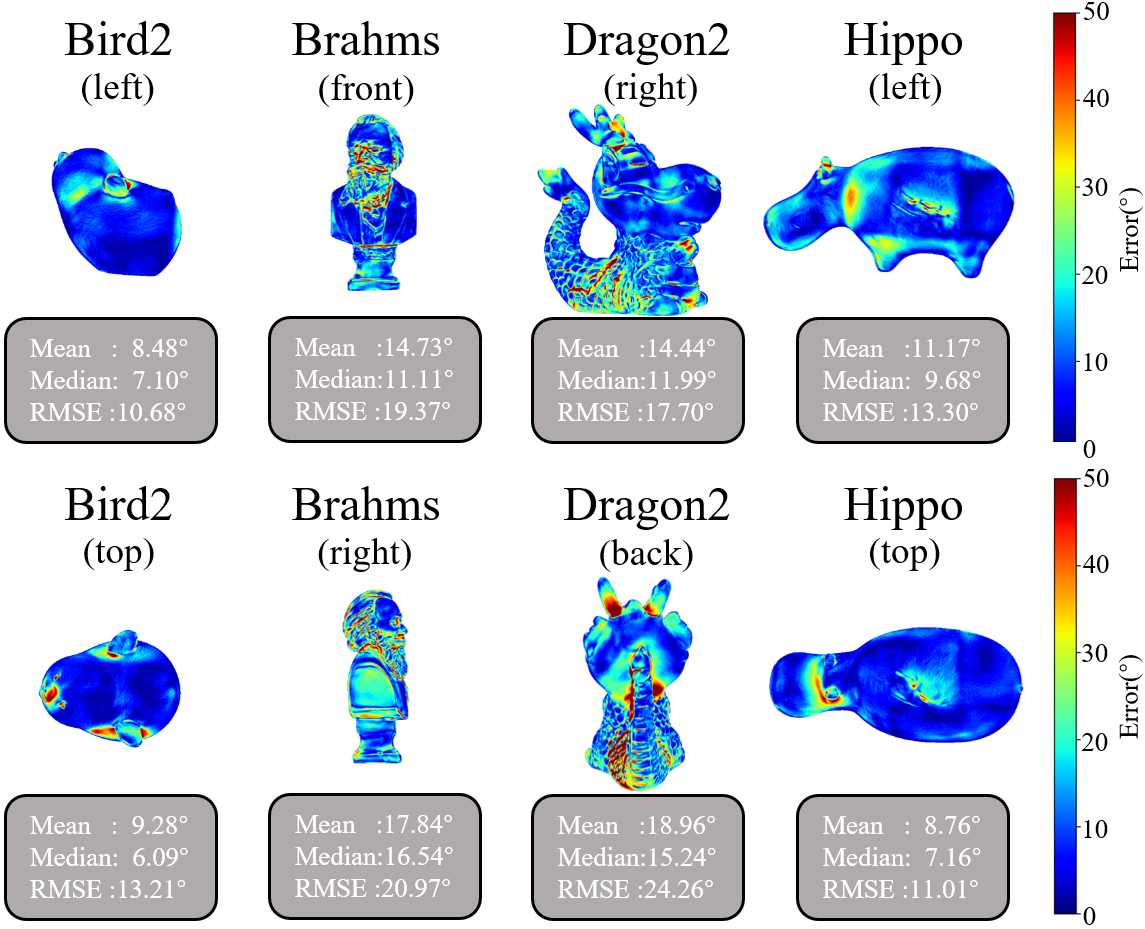

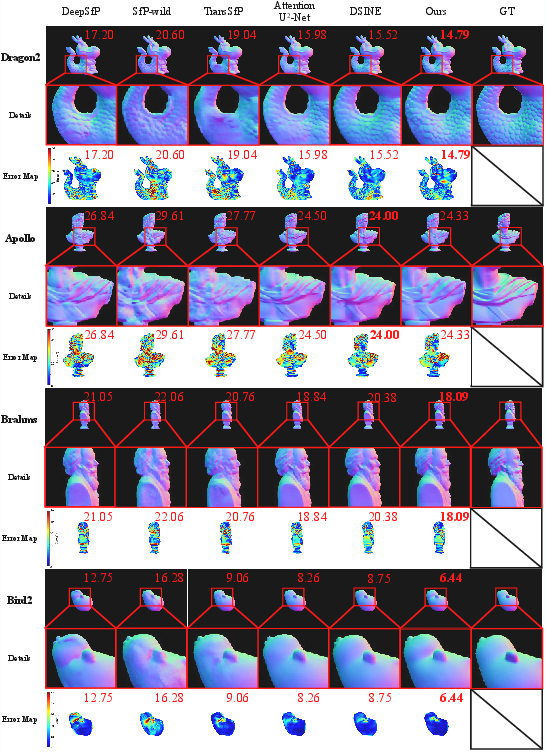

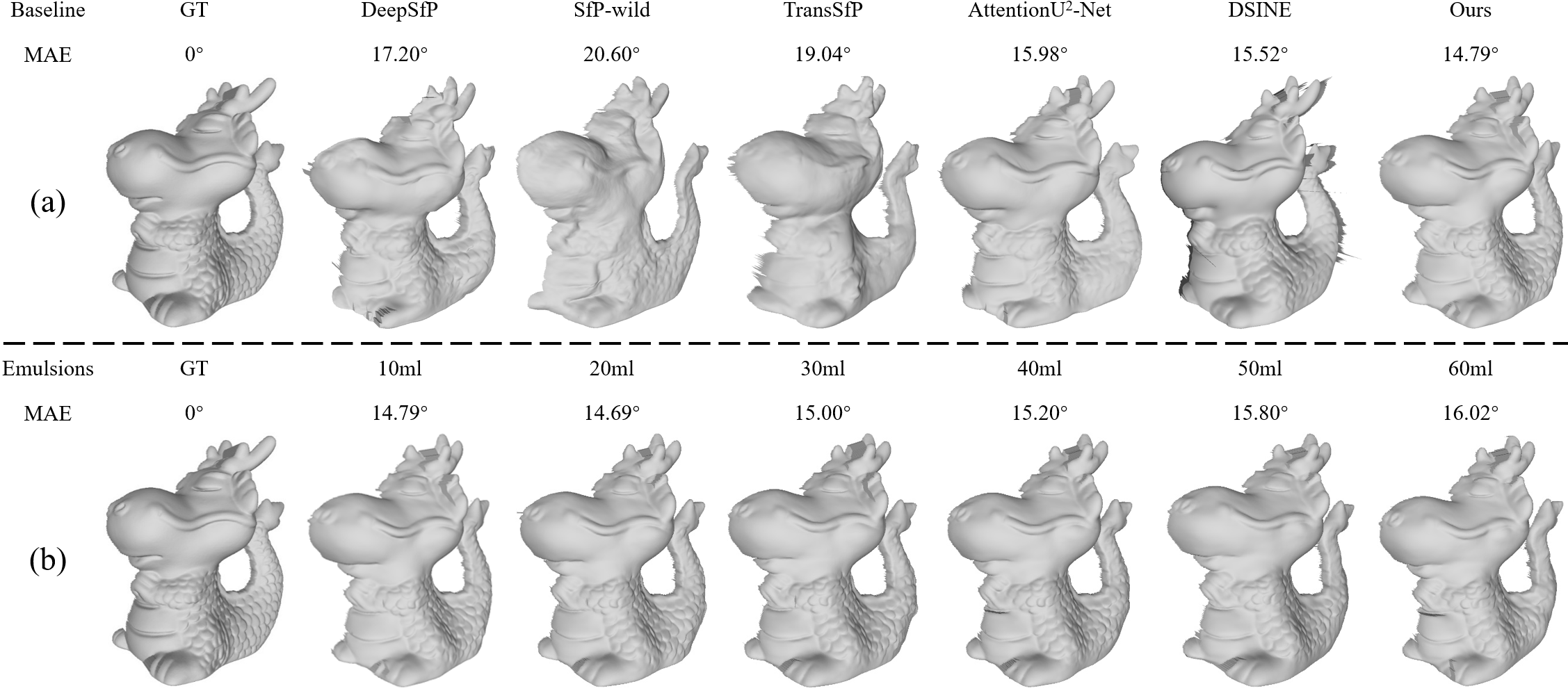

Joint learning in UD-SfPNet achieves superior surface normal estimation, with lower mean angular error (MAE) than state-of-the-art baselines including DeepSfP, SfP-wild, TransSfP, AttentionU2-Net, and DSINE. Error heatmaps and qualitative comparisons (Figures 13–14) show that UD-SfPNet produces more stable and precise normal predictions, especially in regions with high-frequency texture or strong curvature.

Figure 13: Visual comparison of MAE across baselines on the Dragon2 sample; UD-SfPNet yields the lowest error.

Figure 14: Comparative normal estimation under uniform turbidity; UD-SfPNet preserves local details and reduces error concentration.
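Mean angular error is the average angle between predicted and ground-truth normals. The per-pixel angle is what error heatmaps such as those in Figures 13–14 typically visualize. The minimal sketch below follows that definition. Whether the paper masks background pixels is an assumption left to the optional `mask` argument.

```python
import numpy as np

def angular_error_map(pred, gt):
    """Per-pixel angle (degrees) between predicted and ground-truth normals, shape (..., 3)."""
    p = pred / np.linalg.norm(pred, axis=-1, keepdims=True)
    g = gt / np.linalg.norm(gt, axis=-1, keepdims=True)
    cos = np.clip(np.sum(p * g, axis=-1), -1.0, 1.0)  # clip guards arccos against rounding
    return np.degrees(np.arccos(cos))

def mean_angular_error(pred, gt, mask=None):
    """Mean angular error in degrees, optionally restricted to a boolean foreground mask."""
    err = angular_error_map(pred, gt)
    return float(err[mask].mean() if mask is not None else err.mean())

# Usage: half of a 2x2 map is exact, half is tilted 30 degrees, so the MAE is 15.
gt = np.tile([0.0, 0.0, 1.0], (2, 2, 1))
pred = gt.copy()
tilt = np.deg2rad(30.0)
pred[0] = [np.sin(tilt), 0.0, np.cos(tilt)]
print(round(mean_angular_error(pred, gt), 6))  # 15.0
```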

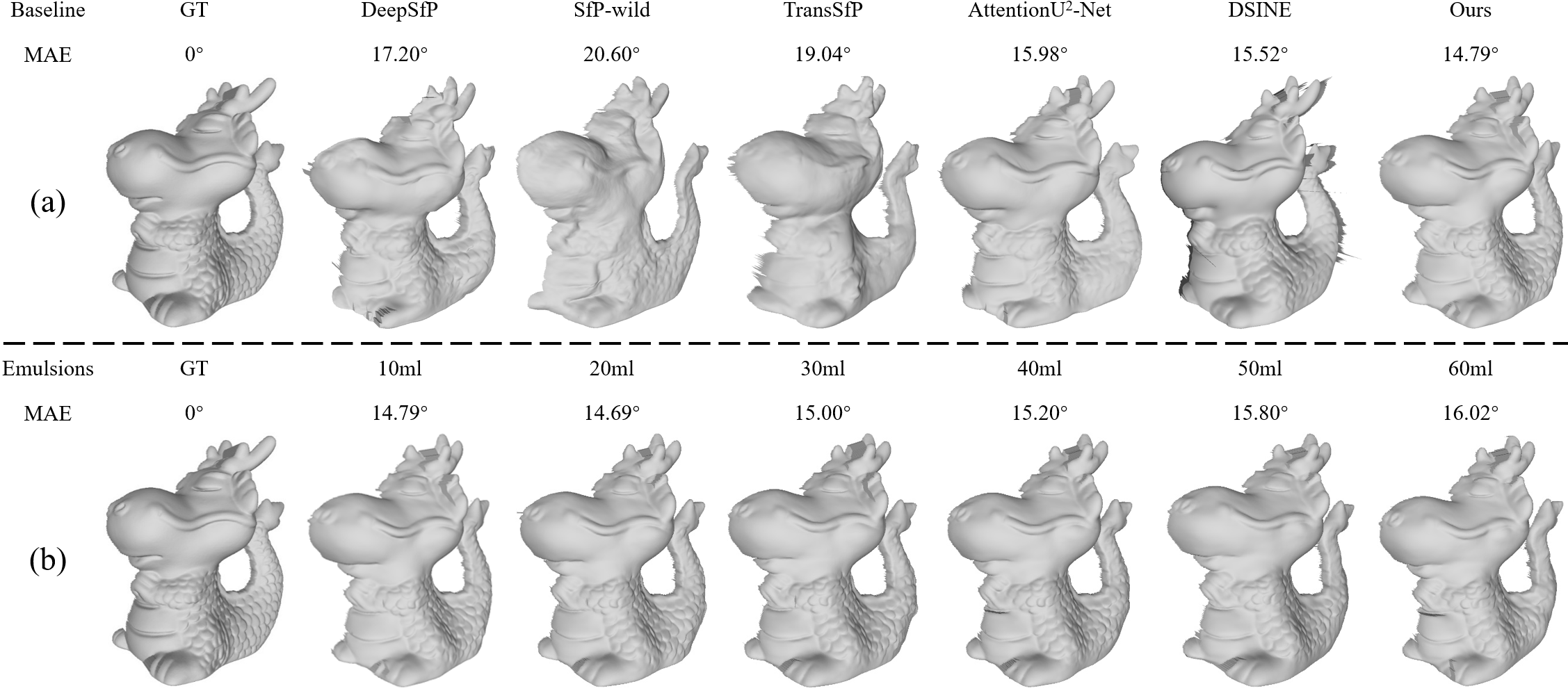

Integrating the predicted normal maps into 3D shapes further demonstrates the robustness of UD-SfPNet across varying scattering conditions, yielding richer, more continuous surface geometry with fewer integration errors (Figure 15).

Figure 15: Integrated 3D shapes from predicted normals; increased robustness and detail preservation with UD-SfPNet.
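The summary does not say which integration method produced Figure 15. As a stand-in, the sketch uses the classic Frankot–Chellappa method, which projects the gradient field implied by the normals onto the nearest integrable surface in the Fourier domain. It assumes that x runs along columns, y runs along rows, and boundaries are periodic. Real normal maps usually need padding, or a method that handles boundaries and depth discontinuities.

```python
import numpy as np

def normals_to_gradients(normals, eps=1e-6):
    """p = dz/dx = -nx/nz, q = dz/dy = -ny/nz; nz is clipped to avoid division by zero."""
    nz = np.clip(normals[..., 2], eps, None)
    return -normals[..., 0] / nz, -normals[..., 1] / nz

def frankot_chellappa(p, q, dx=1.0, dy=1.0):
    """Least-squares integrable height map from gradients via FFT (periodic boundaries)."""
    h, w = p.shape
    wx = 2 * np.pi * np.fft.fftfreq(w, d=dx)  # angular frequencies along columns (x)
    wy = 2 * np.pi * np.fft.fftfreq(h, d=dy)  # angular frequencies along rows (y)
    WX, WY = np.meshgrid(wx, wy)              # both (h, w); WX varies along columns
    P, Q = np.fft.fft2(p), np.fft.fft2(q)
    denom = WX**2 + WY**2
    denom[0, 0] = 1.0                         # avoid 0/0 at DC
    Z = (-1j * WX * P - 1j * WY * Q) / denom
    Z[0, 0] = 0.0                             # the mean height is unrecoverable from gradients
    return np.real(np.fft.ifft2(Z))

# Usage: recover a periodic test surface from its normals (up to an additive constant).
N = 64
coords = np.arange(N) * 2 * np.pi / N
X, Y = np.meshgrid(coords, coords)
z = np.sin(X) + 0.5 * np.cos(2 * Y)
p, q = np.cos(X), -np.sin(2 * Y)              # exact gradients of z
normals = np.stack([-p, -q, np.ones_like(p)], axis=-1)
normals /= np.linalg.norm(normals, axis=-1, keepdims=True)
z_rec = frankot_chellappa(*normals_to_gradients(normals), dx=2 * np.pi / N, dy=2 * np.pi / N)
print(np.abs(z_rec - (z - z.mean())).max() < 1e-8)  # True
```

Any error in the predicted normals makes the gradient field non-integrable. The least-squares projection then spreads that error across the surface, which is one reason lower normal error tends to yield cleaner integrated shapes.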

Ablation Study and Component Effectiveness

Comprehensive ablation experiments isolate the contribution of each core module. Removing PPN or DN causes moderate error increases, confirming that both global polarization priors and local descattering are needed. Excluding CE (color embedding) or DEConv (detail-enhanced convolution) degrades performance substantially, with DEConv removal causing the most severe drop. These results underscore the synergy between physics-informed feature design and deep learning architectures.

Implications and Future Directions

The unified pipeline of UD-SfPNet addresses a key limitation of prior underwater SfP approaches: error propagation from sequential descattering and reconstruction. Integrating physics-inspired modules (color embedding, directional convolutions) into a deep learning framework yields substantial accuracy gains and operational robustness. In practice, this paradigm is relevant to robotic vision for marine surveying, environmental monitoring, and autonomous underwater vehicle navigation, where optical degradation is the norm.

Theoretically, UD-SfPNet demonstrates the value of full-chain optimization and of fusing domain knowledge with learnable representations. Future research directions include refining the DEConv mechanism, incorporating Transformer-based models for greater representational power, and adapting the framework for real-time use on embedded systems. Enforcing integrability constraints in single-view settings remains a challenge, particularly at occlusion boundaries and depth discontinuities.

Conclusion

UD-SfPNet establishes a new benchmark for underwater 3D imaging by fusing polarization-guided descattering and deep learning-based shape-from-polarization normal estimation within a globally optimized pipeline. The color embedding and detail-enhanced convolution modules contribute strongly to geometric consistency and high-frequency detail preservation. Experimental results support both the theoretical and practical benefits, showing substantial performance gains and improved robustness under diverse scattering conditions. The framework exemplifies how physically grounded, task-specific modules can strengthen deep learning-based optical imaging and advance 3D perception in adverse underwater environments.