- The paper introduces a unified online framework that decouples high-fidelity local detail extraction from stable global memory to mitigate cumulative drift.

- The method employs a two-stage recurrent pipeline with a Relative Geometry Extractor and an Anchor State Director, achieving precise geometry and real-time performance.

- The approach demonstrates state-of-the-art performance in novel view synthesis, camera pose estimation, and open-vocabulary semantic segmentation across challenging benchmarks.

OnlineX: A Unified Framework for Online 3D Reconstruction and Semantic Understanding

Introduction

OnlineX introduces a unified feed-forward framework for continuous online 3D scene reconstruction and semantic understanding from streaming RGB images, targeting real-time robotics, AR/VR, and general visual perception scenarios. The approach is grounded in generalizable 3D Gaussian Splatting (3DGS), enhanced with a novel active-to-stable state evolution paradigm that decouples high-fidelity local detail extraction from the maintenance of a consistent global structural memory. This mitigates cumulative long-term drift—a critical failure mode in prior online feed-forward architectures relying on a single bottlenecked hidden state. Simultaneously, OnlineX unifies appearance reconstruction and language field modeling and introduces an implicit Gaussian fusion module for compact, globally consistent scene parameterization.

Figure 1: Continuous and progressive 3D reconstruction from streaming images, with decoupling of high-fidelity active processing from the stable global structure.

Methodology

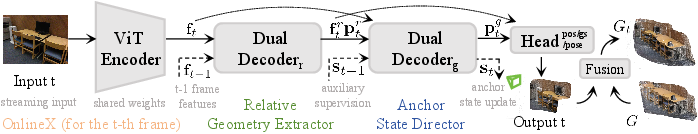

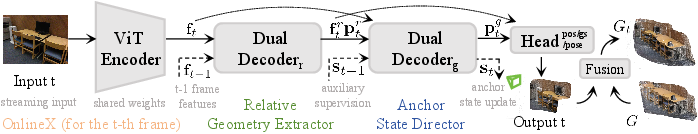

OnlineX's architecture features a two-stage, recurrent active-to-stable pipeline. Incoming streaming RGB frames are sequentially processed without dependence on pre-computed camera poses. The first stage, the Relative Geometry Extractor, computes frame-to-frame geometry and Gaussian attributes via a dual-view ViT-based architecture, extracting dense local relative information. This stage outputs per-pixel features, relative pose embeddings, and joint Gaussian predictions, offering strong intermediate supervision signals.

The second stage, the Anchor State Director, maintains a learnable stable global memory. At each timestep, compact descriptors (global pools of local features concatenated with pose embeddings) are integrated into the anchor state via a transformer decoder, yielding a globally consistent feature. Fusion of local and global features occurs through cross-attention, enabling the model to implicitly align local geometry with the evolving global context in the latent space, rather than via explicit pose composition. This design suppresses the representational bottleneck of memories seen in single-state online 3DGS approaches.

Figure 2: The active-to-stable pipeline, showing the recurrent flow of information and the decoupling of high-frequency local detail from stable global memory updating.

An implicit Gaussian fusion module further processes the 3DGS output. Within each voxel, newly predicted Gaussians are adaptively merged with nearby active Gaussians based on confidence weighting, with latent attributes fused via an MLP. This avoids the redundancy and instability issues of previous opacity-based pruning schemes and yields superior spatial and feature compactness.

Critically, OnlineX jointly supervises both appearance (RGB field) and language field modeling. Each Gaussian encodes visual, geometric, and low-dimensional language features, with rendered outputs for both image and semantic fields. The language field enables open-vocabulary semantic tasks.

The composite training objective supervises both the intermediate (relative stage) and final (global stage) outputs. It combines pose, appearance rendering (MSE and LPIPS), and language field (negative cosine similarity) losses, promoting convergence stability and fine local feature extraction.

Experimental Analysis

Novel View Synthesis

OnlineX is evaluated on RE10K and ScanNet, using online sequential streaming-input protocols against state-of-the-art (SOTA) offline and online baselines, including MVSplat, NoPoSplat, FLARE, Spann3R, and CUT3R. For few-view scenarios, performance matches or slightly surpasses offline baselines. As the view count increases, OnlineX demonstrates robust stability: unlike competing online models (which degrade due to drift or memory inefficiency), OnlineX maintains high PSNR, SSIM, and low LPIPS, significantly outperforming SOTA.

Figure 3: Qualitative comparison for novel view synthesis on RE10K and ScanNet, highlighting OnlineX’s ability to preserve accurate geometry, fine details, and suppress Gaussian artifacts even with larger sequential input.

Camera Pose Estimation

On the ScanNet benchmark, OnlineX provides the lowest absolute and relative translation and rotation errors among SOTA online pose-free 3D scene models, underscoring the utility of the decoupled two-stage representation for global structure inference and drift suppression.

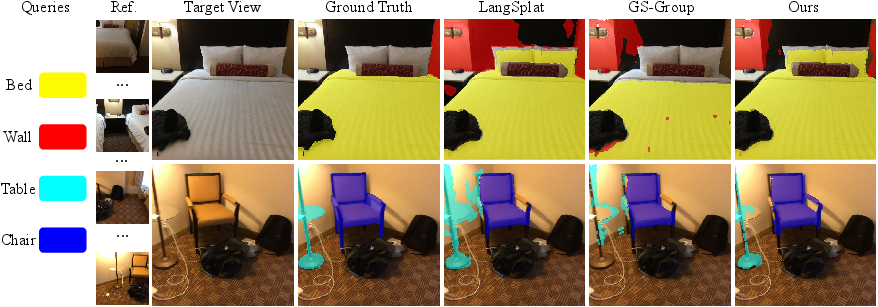

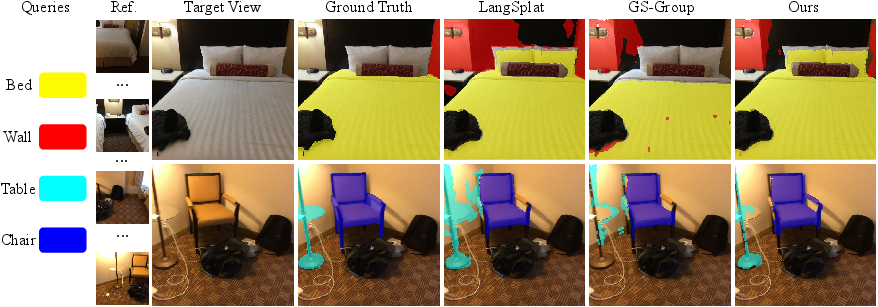

Open-Vocabulary Semantic Segmentation

Via the joint language field, OnlineX outperforms per-scene-optimized methods such as LangSplat and Gaussian Grouping in mIoU and mAcc. The method segments objects with highly detailed boundaries and produces more complete regions than ground-truth masks.

Figure 4: Semantic segmentation results on ScanNet; OnlineX’s segmentation masks yield greater semantic region completeness than previous methods and ground truth.

Zero-Shot and Cross-Dataset Generalization

Cross-dataset evaluation demonstrates that models trained on RE10K generalize robustly to unseen (DL3DV) datasets without any adaptation, attesting to the generality conferred by the unified online modeling paradigm and the embedding-level representation.

Figure 5: Zero-shot generalization on DL3DV, demonstrating OnlineX’s out-of-distribution transfer with accurate geometry and appearance fidelity.

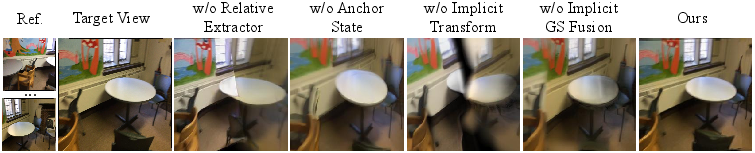

Runtime and Ablation

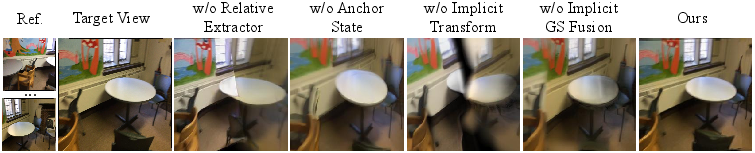

OnlineX operates at real-time framerates (23 FPS on a 256×256 input, single RTX A6000 GPU) with modest memory requirements, supporting practical deployments. Ablation studies illustrate that each architectural element—the Relative Geometry Extractor, Anchor State, Implicit Pose Transformation, and Implicit GS Fusion—is essential for achieving state-of-the-art NVS performance and qualitative scene quality.

Figure 6: Qualitative ablation study (full versus various module removals): the full model avoids drift, excessive blurring, misalignments, and redundant Gaussians.

Implications and Future Directions

The OnlineX framework demonstrates that memory state decoupling within recurrent online 3DGS architectures can robustly reconcile the need for active local detail and persistent global structure. The scheme substantially mitigates long-term drift, a recurring bottleneck in online perception and reconstruction. Further, by integrating language field modeling in a single-pass, joint optimization, OnlineX enables a unified approach to reconstruction and scene understanding, facilitating open-vocabulary, multi-modal downstream tasks on evolving 3D streams.

Future work may extend the paradigm towards multi-agent cooperative perception, lifelong mapping in robotics, or continual joint 3D reconstruction and instance-level semantic tracking in more dynamic, open-world settings. Additionally, interplay with foundation models and scaling towards outdoor, large-scale, or cross-modal (e.g., depth, audio-guided spatial fields) settings remains a promising avenue, particularly as implicit fusion and state decoupling show clear performance and stability advantages.

Conclusion

OnlineX provides a rigorous, unified approach for feed-forward online 3D reconstruction and semantic understanding from streaming RGB, utilizing a decoupled active-to-stable recurrent state evolution to maintain both local fidelity and global structural consistency. With integrated language field modeling and implicit Gaussian fusion, OnlineX surpasses state-of-the-art methods in reconstruction, semantic segmentation, generalization, and real-time performance, making it a robust baseline for progressive 3D scene processing and online multi-modal perception.

(2603.02134)