- The paper introduces an adaptive multi-view keyframe selection module (SIGMA) that optimizes keyframe subsets based on 3D overlap and information gain.

- It leverages geometry-aware foundation models combined with joint Sim(3) multi-view optimization to ensure globally consistent dense reconstructions.

- Experimental results show AIM-SLAM outperforms fixed-window methods in pose accuracy and reconstruction quality on benchmarks like TUM RGB-D and EuRoC.

Introduction

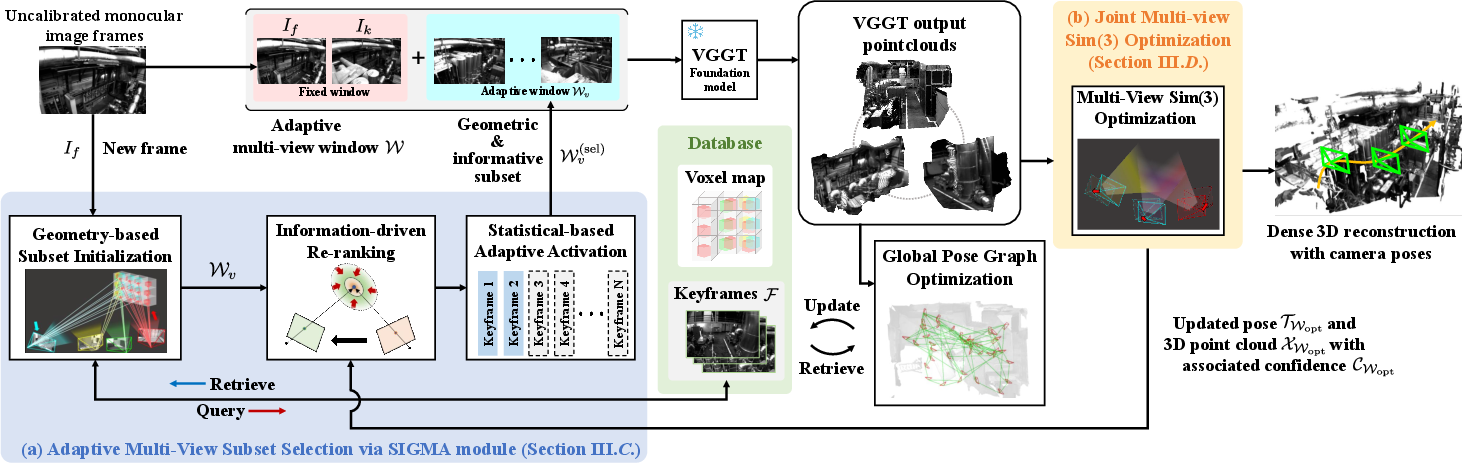

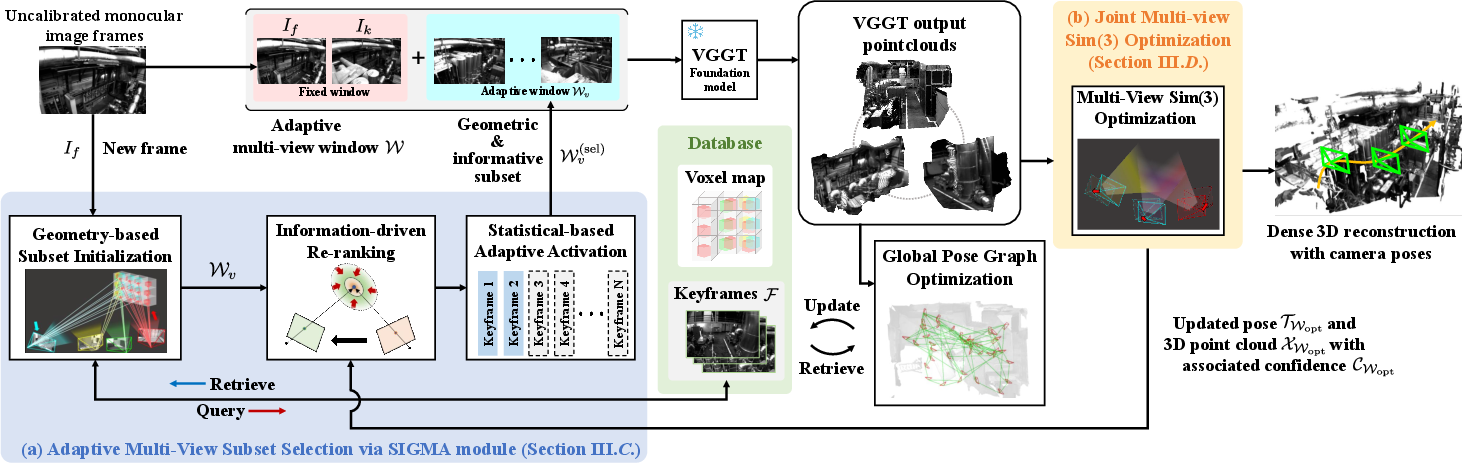

AIM-SLAM is a dense monocular SLAM framework built on geometry-aware foundation models, designed to improve multi-view reasoning for uncalibrated RGB inputs. Its central innovation is an adaptive, information-driven multi-view keyframe prioritization module (SIGMA) that selects the input subset passed to the Visual Geometry Grounded Transformer (VGGT). The prioritization maximizes 3D scene overlap and information gain per keyframe, in contrast to the fixed or sequential windowing strategies commonly used in foundation model-based SLAM. The architecture also integrates a joint Sim(3) multi-view optimization stage to keep poses and the dense 3D reconstruction globally consistent, even under varying camera intrinsics and wide-baseline frame selection.

System Architecture

AIM-SLAM consists of two primary pipelines:

- Frontend: Incorporates the SIGMA module for adaptive candidate keyframe selection and multi-view prioritization, passing these to the VGGT module for dense pointmap inference. The resulting keyframes undergo joint multi-view Sim(3) optimization, ensuring high-fidelity local tracking and minimizing short-/mid-term drift.

- Backend: Performs loop closure by matching global DINOv2-based patch descriptors, followed by pose-graph optimization to enforce loop consistency.

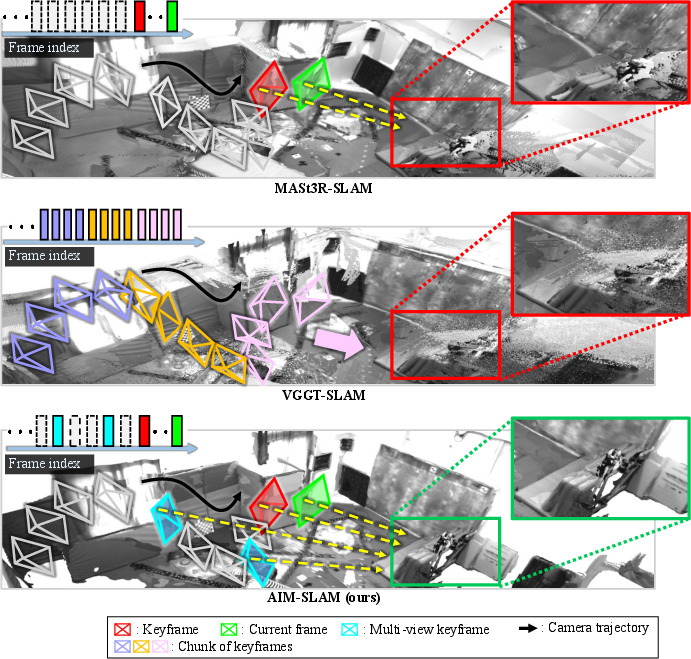

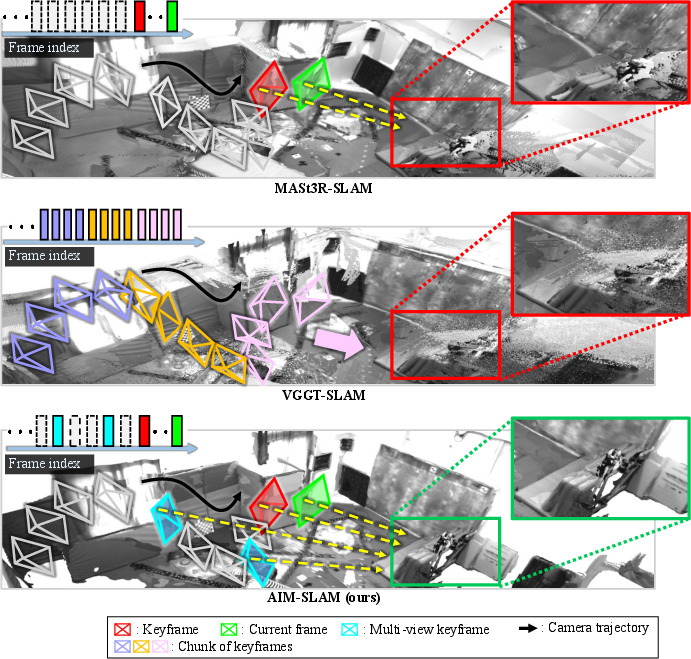

Figure 1: The AIM-SLAM architecture integrates SIGMA-based multi-view prioritization, VGGT inference, joint multi-view Sim(3) pose optimization, and global loop closure as a backend.

SIGMA Module: Adaptive Multi-View Prioritization

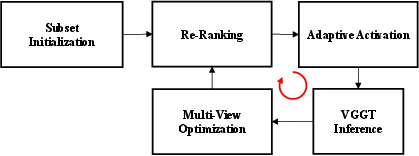

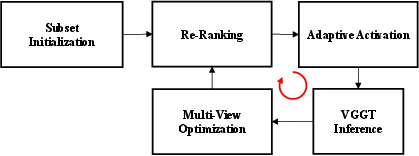

The SIGMA (Selective Information- and Geometric-aware Multi-view Adaptation) module consists of three sequential stages:

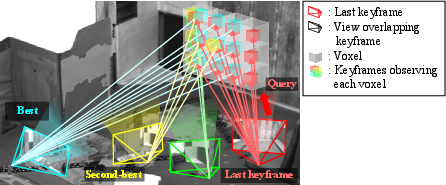

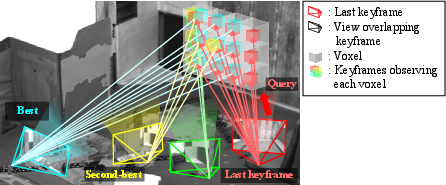

- Voxel-Indexed Keyframe Map (Geometry): For each new frame, keyframe candidates are initialized by voxel overlap, where each voxel stores IDs of keyframes observing it. Overlap is quantified by counting shared voxels, thus efficiently identifying geometrically relevant candidates.

Figure 2: The voxel-indexed keyframe map efficiently determines overlap and candidate selection for multi-view reasoning.
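The voxel-indexed lookup described above can be sketched as an inverted index from voxel coordinates to keyframe IDs. This is a minimal illustration rather than the authors' implementation: the class name `VoxelKeyframeMap`, the helper `voxelize`, the voxel size, and the `top_k` cutoff are all assumptions made for the example.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Set, Tuple

Voxel = Tuple[int, int, int]
Point = Tuple[float, float, float]


def voxelize(points: Iterable[Point], voxel_size: float) -> Set[Voxel]:
    """Map 3D points to the set of integer voxel coordinates they occupy."""
    return {tuple(int(c // voxel_size) for c in p) for p in points}


class VoxelKeyframeMap:
    """Hypothetical inverted index: voxel -> IDs of keyframes observing it."""

    def __init__(self, voxel_size: float = 1.0) -> None:
        self.voxel_size = voxel_size
        self.voxel_to_kfs: Dict[Voxel, Set[int]] = defaultdict(set)

    def insert(self, kf_id: int, points: Iterable[Point]) -> None:
        """Register a keyframe under every voxel its points fall into."""
        for v in voxelize(points, self.voxel_size):
            self.voxel_to_kfs[v].add(kf_id)

    def candidates(self, points: Iterable[Point], top_k: int = 5) -> List[Tuple[int, int]]:
        """Return (keyframe ID, shared voxel count) pairs, most overlap first."""
        counts: Counter = Counter()
        for v in voxelize(points, self.voxel_size):
            for kf_id in self.voxel_to_kfs.get(v, ()):
                counts[kf_id] += 1
        return counts.most_common(top_k)


kf_map = VoxelKeyframeMap(voxel_size=1.0)
kf_map.insert(0, [(0.5, 0.5, 0.5), (1.5, 0.5, 0.5), (2.5, 0.5, 0.5)])
kf_map.insert(1, [(2.5, 0.5, 0.5), (3.5, 0.5, 0.5)])

new_frame = [(0.5, 0.5, 0.5), (1.5, 0.5, 0.5), (2.5, 0.5, 0.5), (9.5, 0.5, 0.5)]
print(kf_map.candidates(new_frame))  # [(0, 3), (1, 1)]
```

A query only visits the voxels touched by the new frame, so its cost scales with the frame's own footprint rather than with the total number of stored keyframes.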

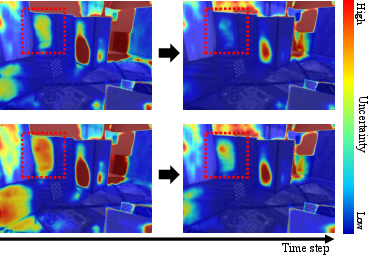

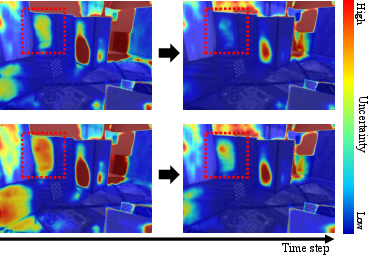

- Information Gain–Based Re-Ranking: From the candidate set, frames are re-ranked by the reduction in 3D point covariance (information-theoretic criterion) for the anchor keyframe. Posterior covariance is updated assuming Gaussian distributions and VGGT-predicted confidence, prioritizing keyframes that maximally contribute to scene disambiguation and uncertainty reduction.

Figure 3: Information-driven re-ranking within SIGMA sharply reduces keyframe uncertainty, focusing optimization on maximally informative frames.
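The re-ranking idea can be illustrated with a simplified Gaussian fusion. The sketch assumes an isotropic per-point covariance summarized by a single variance, and it assumes that a confidence value maps to an observation variance of `1 / confidence`; neither assumption is taken from the paper, and the function names are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def fuse_variance(prior_var: float, obs_var: float) -> float:
    """Posterior variance after fusing two independent Gaussian estimates."""
    return 1.0 / (1.0 / prior_var + 1.0 / obs_var)


def information_gain(prior_vars: List[float], obs_conf: Dict[int, float]) -> float:
    """Entropy reduction (in nats) over the anchor points a candidate observes.

    obs_conf maps an anchor point index to the candidate's confidence for it.
    The factor 1.5 is the 3D isotropic case of 0.5 * log(det(prior) / det(post)).
    """
    gain = 0.0
    for idx, conf in obs_conf.items():
        prior = prior_vars[idx]
        post = fuse_variance(prior, 1.0 / conf)  # assumed confidence-to-variance mapping
        gain += 1.5 * math.log(prior / post)
    return gain


def rerank(prior_vars: List[float],
           candidates: Dict[int, Dict[int, float]]) -> List[Tuple[int, float]]:
    """Sort candidate keyframes by information gain, highest first."""
    scored = [(kf, information_gain(prior_vars, obs)) for kf, obs in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


anchor_vars = [1.0, 1.0]
candidates = {
    7: {0: 1.0},          # sees one anchor point with low confidence
    8: {0: 3.0, 1: 3.0},  # sees both anchor points with higher confidence
}
ranked = rerank(anchor_vars, candidates)
print([kf for kf, _ in ranked])  # [8, 7]
```

Under these assumptions, a candidate scores higher when it observes more of the anchor's points or observes them with higher confidence, which matches the stated goal of prioritizing frames that reduce uncertainty the most.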

- Adaptive Activation via Statistical Stability: The subset of candidate keyframes is expanded incrementally, guided by a reduced chi-square test of joint optimization stability. Only subsets that improve the fit are retained, typically resulting in compact multi-view inputs that balance accuracy and efficiency.

Figure 4: SIGMA's block diagram illustrates the sequential geometry-based initialization, information-driven re-ranking, and stability-aware adaptive activation process.
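The stability-aware activation stage can be sketched as a greedy loop over the re-ranked candidates. The acceptance rule used here (keep a candidate only if the reduced chi-square of the joint fit decreases) is one reading of "improve the fit" and is an assumption, as are the `evaluate` callback, the `max_views` cap, and the toy residuals that stand in for a real joint optimization.

```python
from typing import Callable, Dict, List, Sequence, Tuple

SIM3_DOF = 7  # scale (1) + rotation (3) + translation (3)


def reduced_chi_square(whitened_residuals: Sequence[float], n_params: int) -> float:
    """Sum of squared whitened residuals divided by the degrees of freedom."""
    dof = len(whitened_residuals) - n_params
    if dof <= 0:
        raise ValueError("need more residuals than parameters")
    return sum(r * r for r in whitened_residuals) / dof


def adaptive_activation(
    ranked: Sequence[int],
    evaluate: Callable[[List[int]], Tuple[List[float], int]],
    max_views: int = 8,
) -> Tuple[List[int], float]:
    """Greedily grow the active subset, keeping only additions that improve the fit."""
    active: List[int] = []
    best = float("inf")
    for kf in ranked:
        if len(active) >= max_views:
            break
        trial = active + [kf]
        residuals, n_params = evaluate(trial)
        score = reduced_chi_square(residuals, n_params)
        if score < best:  # assumed acceptance rule
            active, best = trial, score
    return active, best


# Toy stand-in for a joint optimization: fixed whitened residuals per keyframe.
TOY_RESIDUALS: Dict[int, List[float]] = {
    3: [1.0] * 20,
    5: [0.5] * 20,
    9: [3.0] * 20,  # a poorly fitting view
}


def toy_evaluate(subset: List[int]) -> Tuple[List[float], int]:
    residuals = [r for kf in subset for r in TOY_RESIDUALS[kf]]
    return residuals, SIM3_DOF * len(subset)


active, score = adaptive_activation([3, 5, 9], toy_evaluate)
print(active)  # [3, 5]
```

In the toy run, keyframe 9 is rejected because adding it raises the reduced chi-square from 25/26 to 205/39, so the active set stays compact.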

Joint Multi-View Sim(3) Optimization

AIM-SLAM formulates bundle adjustment over the selected multi-view subset in Sim(3), so each frame carries its own scale, rotation, and translation and no fixed intrinsics are required. The residual combines ray-based and pixel-based reprojection errors, which permits robust, scale-invariant dense correspondence while exploiting VGGT's per-frame intrinsic predictions when available. Confidence weighting and a Huber robust loss provide resilience to outlier frames and per-point uncertainty.
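To make the hybrid residual concrete, the following sketch evaluates a confidence-weighted cost for one frame under a Sim(3) transform. It is an illustration under stated assumptions, not the paper's formulation: the function names, the pinhole projection, the weighting factor `lam`, and the Huber thresholds are all hypothetical choices.

```python
import numpy as np


def sim3_apply(s, R, t, pts):
    """Apply x' = s * R @ x + t to an (N, 3) array of points."""
    return s * pts @ R.T + t


def huber(r_norm, delta):
    """Huber loss on residual magnitudes: quadratic near zero, linear beyond delta."""
    return np.where(r_norm <= delta,
                    0.5 * r_norm ** 2,
                    delta * (r_norm - 0.5 * delta))


def hybrid_cost(s, R, t, pts, rays_obs, pix_obs, conf, intrinsics,
                lam=1e-2, delta_ray=0.05, delta_pix=2.0):
    """Confidence-weighted sum of robust ray and pixel residuals for one frame."""
    fx, fy, cx, cy = intrinsics
    p = sim3_apply(s, R, t, pts)

    # Ray term: compare unit bearing vectors, which ignores the point's range.
    rays_pred = p / np.linalg.norm(p, axis=1, keepdims=True)
    r_ray = np.linalg.norm(rays_pred - rays_obs, axis=1)

    # Pixel term: pinhole projection using the (assumed known or predicted) intrinsics.
    uv = np.stack([fx * p[:, 0] / p[:, 2] + cx,
                   fy * p[:, 1] / p[:, 2] + cy], axis=1)
    r_pix = np.linalg.norm(uv - pix_obs, axis=1)

    per_point = huber(r_ray, delta_ray) + lam * huber(r_pix, delta_pix)
    return float(np.sum(conf * per_point))


pts = np.array([[0.0, 0.0, 2.0], [1.0, 0.0, 4.0]])
K = (100.0, 100.0, 50.0, 50.0)
rays = pts / np.linalg.norm(pts, axis=1, keepdims=True)
pix = np.array([[50.0, 50.0], [75.0, 50.0]])
conf = np.ones(len(pts))

aligned = hybrid_cost(1.0, np.eye(3), np.zeros(3), pts, rays, pix, conf, K)
shifted = hybrid_cost(1.0, np.eye(3), np.array([0.5, 0.0, 0.0]), pts, rays, pix, conf, K)
print(aligned < shifted)  # True
```

Both terms depend only on the direction of the transformed point, so a pure rescaling about the camera center leaves this single-frame cost unchanged. In a joint multi-view problem, the scale of each frame is instead constrained through the points it shares with other frames.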

Additionally, loop closures are detected by cosine-similarity search across DINOv2 embeddings. Visual loops add constraints in the global pose graph, which is optimized asynchronously to correct long-term drift and enforce trajectory consistency.
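A cosine-similarity loop search of the kind described above might look like the sketch below. It operates on precomputed global descriptors and does not call DINOv2 itself; the function name `detect_loop`, the `min_gap` exclusion of recent keyframes, and the similarity threshold are assumptions introduced for illustration.

```python
import numpy as np


def detect_loop(query, database, min_gap, threshold=0.9):
    """Return (index, similarity) of the best loop candidate, or None.

    query:    (D,) global descriptor of the current keyframe.
    database: (N, D) descriptors of earlier keyframes in temporal order.
    min_gap:  number of most recent keyframes to skip, so that temporally
              adjacent frames are not reported as loops.
    """
    n_search = len(database) - min_gap
    if n_search <= 0:
        return None
    db = database[:n_search]
    db_unit = db / np.linalg.norm(db, axis=1, keepdims=True)
    q_unit = query / np.linalg.norm(query)
    sims = db_unit @ q_unit  # cosine similarity against every searchable keyframe
    best = int(np.argmax(sims))
    if sims[best] < threshold:
        return None
    return best, float(sims[best])


database = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 0.1, 0.0],  # most recent keyframe, excluded by min_gap=1
])
query = np.array([2.0, 0.1, 0.0])

match = detect_loop(query, database, min_gap=1)
print(match[0])  # 0
```

A detected pair would then be added as a constraint in the pose graph; the geometric verification and the pose-graph optimization itself are outside the scope of this sketch.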

Experimental Evaluation

AIM-SLAM is evaluated on TUM RGB-D (room-scale indoor) and EuRoC (aggressive, large-baseline MAV) benchmarks, under strictly uncalibrated monocular conditions.

- Pose Accuracy: AIM-SLAM outperforms prior learning-based and foundation model SLAM approaches, including the heavily engineered MASt3R-SLAM, VGGT-SLAM, and recent fixed-window chunked approaches. It achieves the lowest absolute trajectory error (ATE RMSE) among all methods, including those that use calibration (see the tables in the paper).

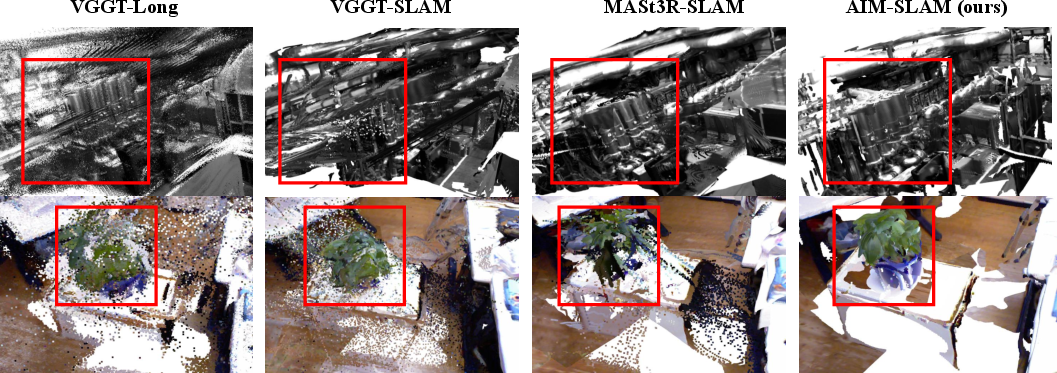

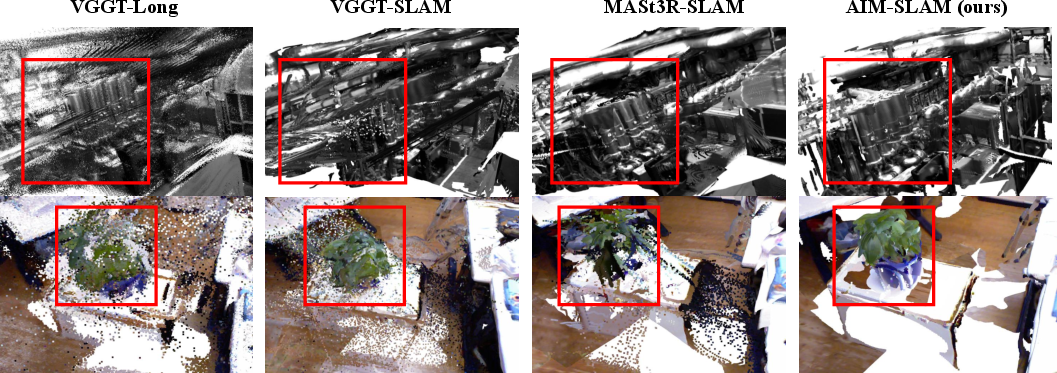

- Dense Reconstruction: AIM-SLAM substantially improves both accuracy and completeness of dense 3D reconstruction (chamfer distance, voxel completion), demonstrating superior global consistency and finer object-level detail, with a marked reduction in scale drift and artifacts.

Figure 5: AIM-SLAM achieves consistently superior dense reconstructions and trajectory estimates versus fixed-window or two-view methods.

Figure 6: Qualitative reconstructions on EuRoC and TUM RGB-D, emphasizing robust scene coverage and minimal drift or ghosting.

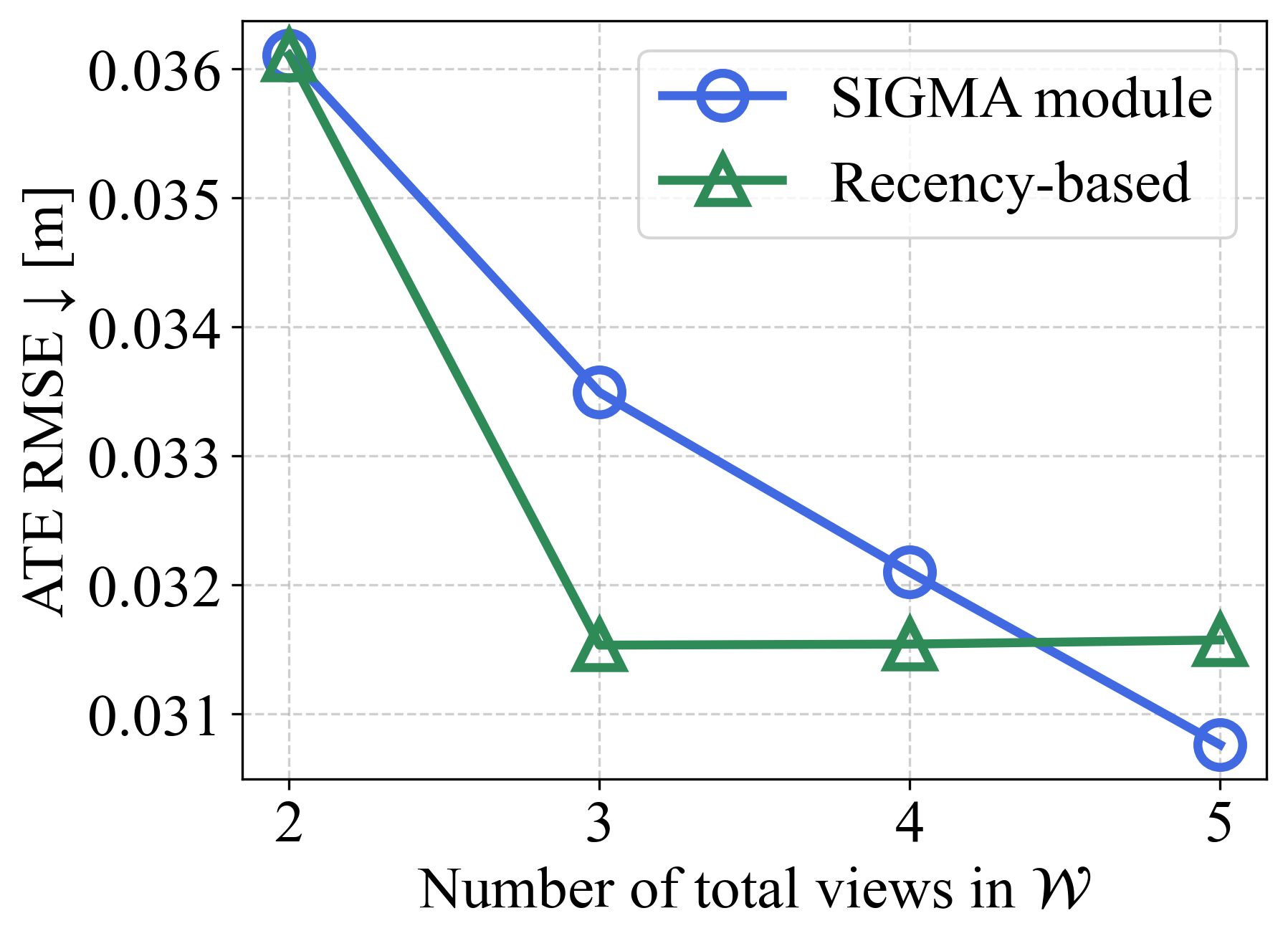

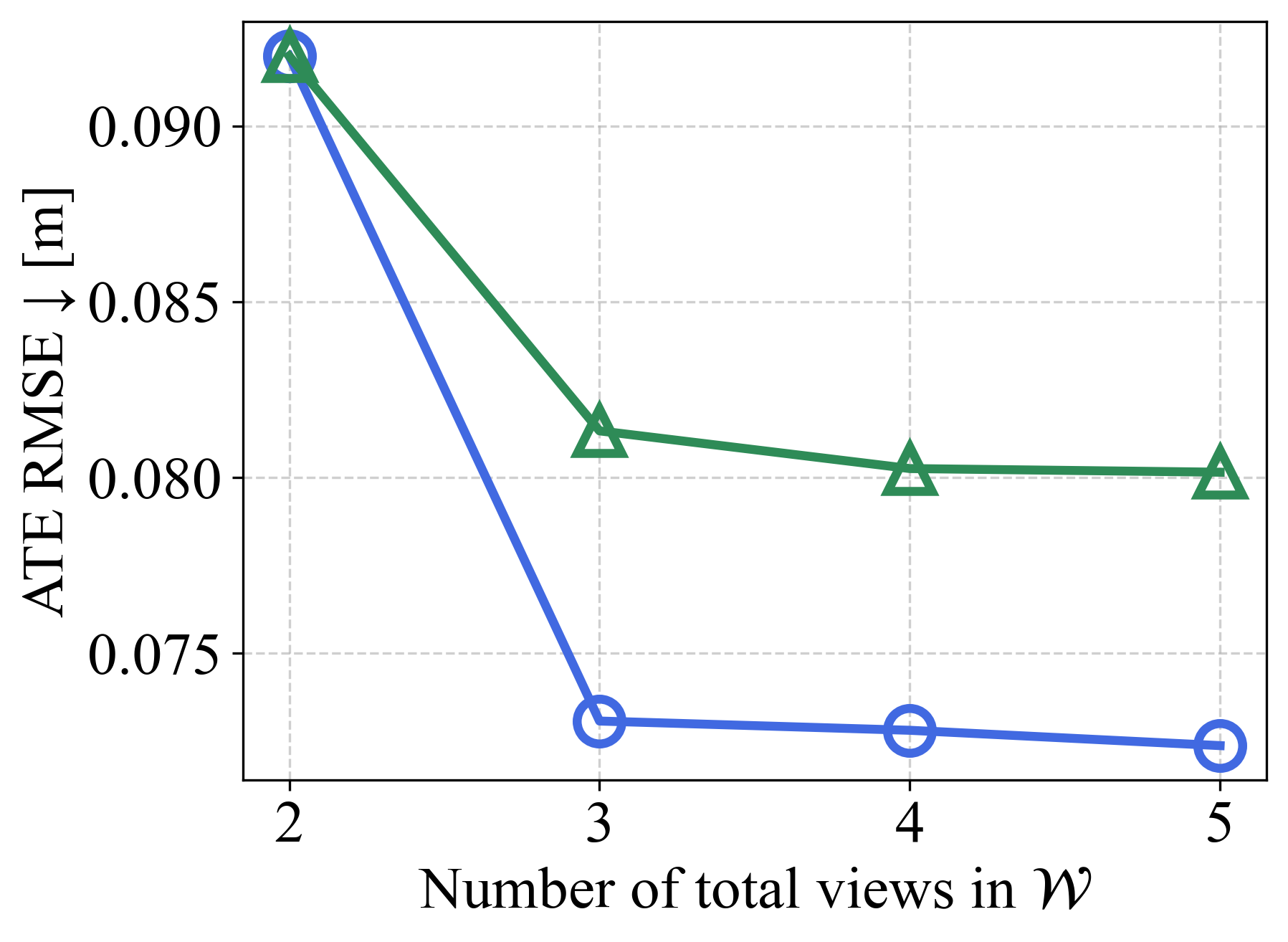

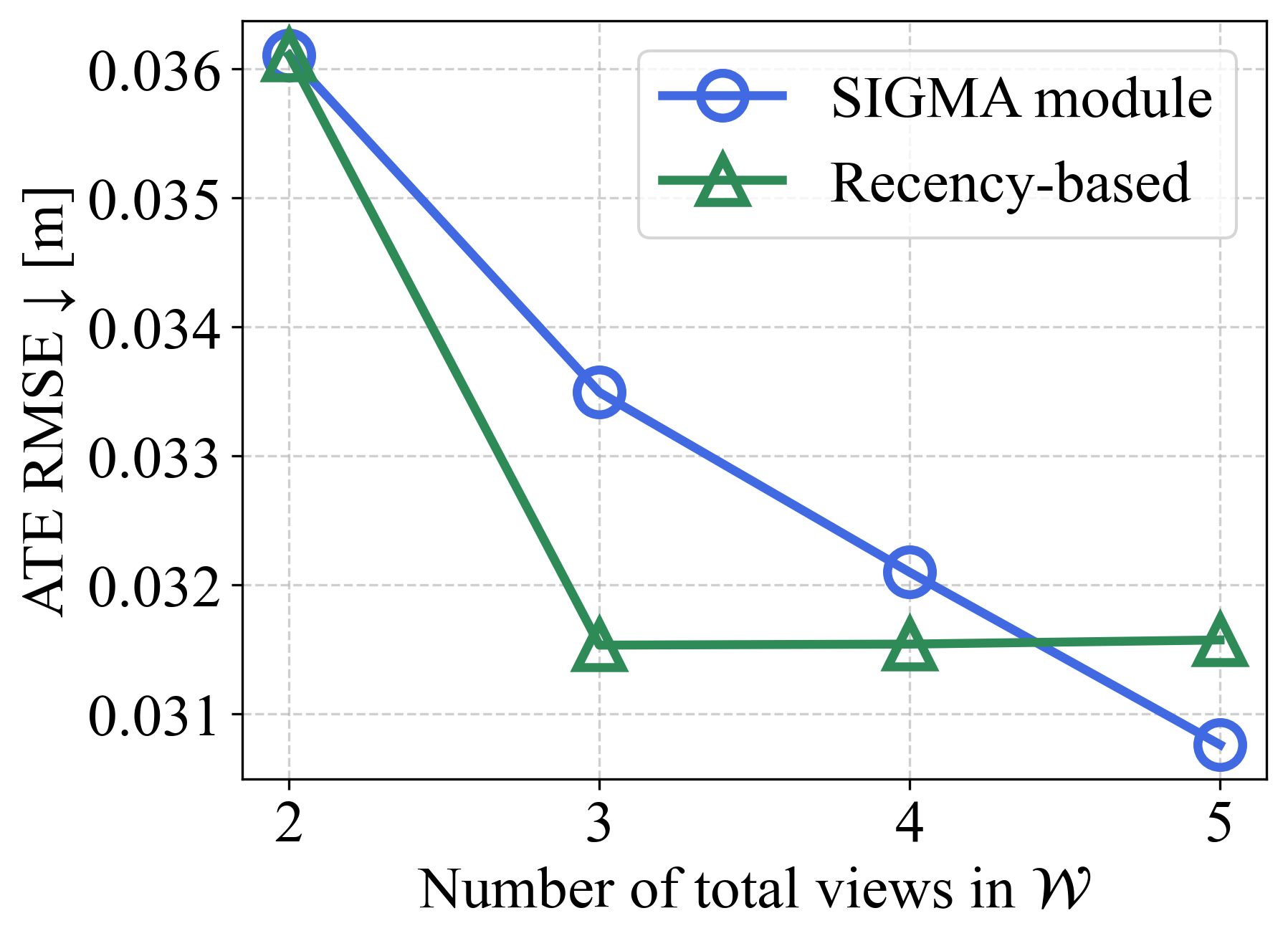

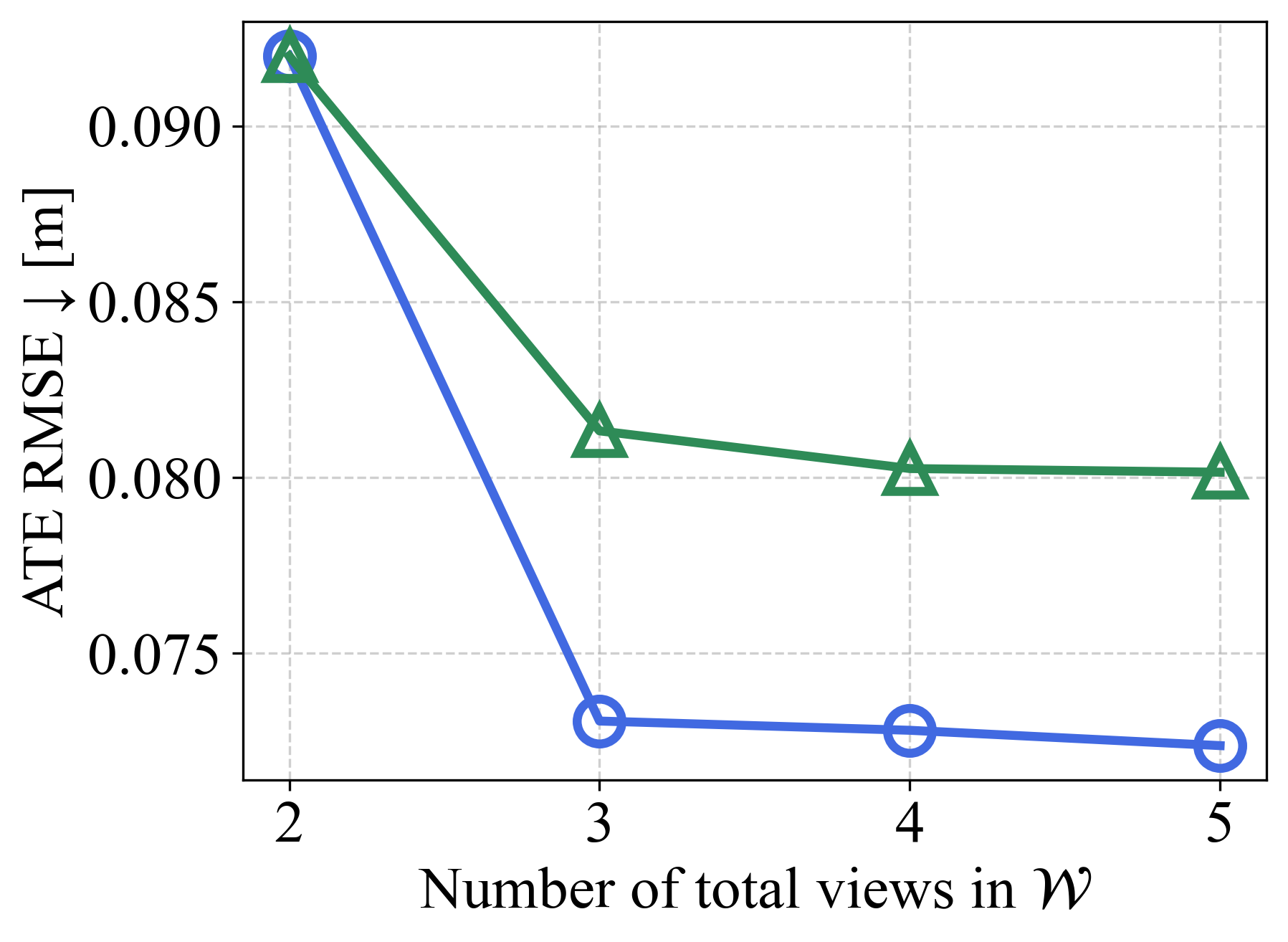

- Ablation Study: Varying the number of input views reveals that recency-based windowing quickly saturates in performance, while SIGMA-based adaptive multi-view consistently yields higher accuracy on challenging, wide-baseline sequences.

Figure 7: SIGMA module adaptivity ensures pose accuracy gains persist as input cardinality increases, especially in dynamic scenarios.

- Residual Formulation Impact: Hybrid ray + projection residuals outperform single-residual alternatives, striking a balance between geometric robustness and pixel-level precision.
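For readers unfamiliar with the pose accuracy metric in the first bullet, the sketch below computes an ATE RMSE after aligning the estimated trajectory to ground truth with a similarity transform. Sim(3) alignment via the Umeyama method is the common convention for monocular SLAM, but whether the paper uses exactly this protocol is an assumption, and the function names are hypothetical.

```python
import numpy as np


def umeyama_sim3(src, dst):
    """Least-squares similarity transform (s, R, t) mapping src onto dst.

    src, dst: (N, 3) arrays of corresponding positions.
    """
    mu_s, mu_d = src.mean(axis=0), dst.mean(axis=0)
    xs, xd = src - mu_s, dst - mu_d
    cov = xd.T @ xs / len(src)
    U, D, Vt = np.linalg.svd(cov)
    S = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        S[2, 2] = -1.0  # avoid returning a reflection
    R = U @ S @ Vt
    s = np.trace(np.diag(D) @ S) / (xs ** 2).sum(axis=1).mean()
    t = mu_d - s * R @ mu_s
    return s, R, t


def ate_rmse(est, gt):
    """RMSE of position error after Sim(3) alignment of est to gt."""
    s, R, t = umeyama_sim3(est, gt)
    aligned = s * est @ R.T + t
    return float(np.sqrt(np.mean(np.sum((aligned - gt) ** 2, axis=1))))


# Toy trajectory: ground truth is a scaled, rotated, shifted copy of the estimate.
est = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0],
                [0.0, 0.0, 1.0], [1.0, 1.0, 1.0]])
R0 = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
gt = 2.0 * est @ R0.T + np.array([1.0, 2.0, 3.0])

print(ate_rmse(est, gt) < 1e-9)  # True
```

Because the alignment absorbs global scale, rotation, and translation, the remaining error reflects only the shape of the trajectory, which is what makes the metric usable for uncalibrated monocular systems with no metric scale.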

Theoretical and Practical Implications

AIM-SLAM demonstrates that foundation model-based monocular SLAM systems can match or exceed the performance of traditional calibrated pipelines by leveraging adaptive geometric and information-theoretic keyframe selection. Joint Sim(3) optimization across variable, information-rich multi-view inputs mitigates issues arising from scale drift, variable intrinsics, and frame redundancy. The system is broadly applicable to robotics and AR/VR settings where geometric calibration is infeasible, and it establishes a scalable template for further integration of large-scale image and geometry foundation models.

One notable tradeoff is computational: the reliance on VGGT inference constrains real-time throughput to approximately 3 Hz, although all other components run at >17 Hz. Advances in foundation model acceleration or distillation stand to improve AIM-SLAM's practical deployment.

Future Directions

Enhancements to foundation model speed, intra-frame parallelization, and future incorporation of cross-modal sensory constraints (e.g., inertial measurements, event cameras) are promising avenues. The general adaptive multi-view selection paradigm and information-driven keyframe prioritization can be ported to multi-modal, multi-agent, or dynamic scene understanding platforms.

Conclusion

AIM-SLAM establishes a new standard in dense, uncalibrated monocular SLAM by tightly integrating adaptive, information-aware keyframe prioritization with foundation model inference and robust multi-view optimization. It provides both strong empirical performance and a framework extensible to the next generation of geometry-aware foundation models and real-world robotics deployments (2603.05097).