Proof-of-Guardrail in AI Agents and What (Not) to Trust from It

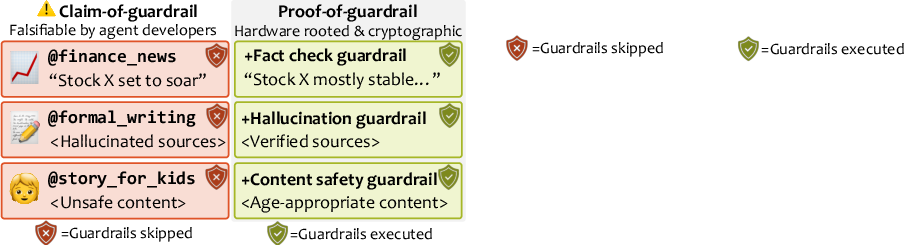

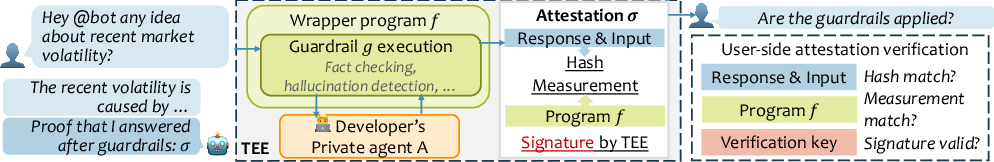

Abstract: As AI agents become widely deployed as online services, users often rely on an agent developer's claim about how safety is enforced, which introduces a threat where safety measures are falsely advertised. To address the threat, we propose proof-of-guardrail, a system that enables developers to provide cryptographic proof that a response is generated after a specific open-source guardrail. To generate proof, the developer runs the agent and guardrail inside a Trusted Execution Environment (TEE), which produces a TEE-signed attestation of guardrail code execution verifiable by any user offline. We implement proof-of-guardrail for OpenClaw agents and evaluate latency overhead and deployment cost. Proof-of-guardrail ensures integrity of guardrail execution while keeping the developer's agent private, but we also highlight a risk of deception about safety, for example, when malicious developers actively jailbreak the guardrail. Code and demo video: https://github.com/SaharaLabsAI/Verifiable-ClawGuard

Explain it Like I'm 14

Plain-language explanation of “Proof-of-Guardrail in AI Agents and What (Not) to Trust from It”

1) What is this paper about?

This paper is about making it easier to trust that an online AI assistant actually used safety checks (called “guardrails”) before sending you an answer. The authors build a system that gives you a tamper-proof “receipt” proving that a specific, public guardrail really ran when the AI created its response—without forcing the AI’s creator to reveal their private code or prompts.

2) What questions are they trying to answer?

The paper focuses on three simple questions:

- How can users verify that an AI agent actually used the safety guardrails it claims to use?

- Can we provide that proof without exposing the developer’s private AI details?

- Is this practical to run in real-world apps (fast enough, affordable, and reliable)?

3) How did they do it? (Methods in everyday language)

Think of the setup like a locked, transparent kitchen:

- The “guardrail” is the safety rulebook (public and open-source).

- The “AI agent” is the chef (private recipe the developer wants to keep secret).

- The “Trusted Execution Environment” (TEE) is a special locked kitchen that makes sure the exact rulebook is followed and can’t be swapped or secretly changed.

Here’s the idea in simple steps:

- The developer puts the public guardrail and a small “wrapper” program into the TEE (the locked kitchen). The AI agent is loaded inside as a secret ingredient the user can’t see.

- When a user asks a question, the AI creates an answer—but the guardrail inside the TEE checks and filters it first.

- The TEE then creates a signed proof (called an “attestation”). This proof includes:

  - A precise “measurement” (like a unique fingerprint) of the guardrail code that ran.

  - A fingerprint (a cryptographic hash) of the question and the answer so you can be sure the proof matches exactly what you saw.

- Anyone can verify the proof offline using the TEE’s official public keys and the open-source guardrail code. If anything was changed—like the guardrail code, the proof, or the answer—the verification fails.
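The steps above can be sketched as a toy model in Python. This is an illustration under stated assumptions, not the paper's implementation: the names `measure`, `commit`, `attest`, and `verify` are hypothetical, and an HMAC with a shared toy key stands in for the TEE's signature. A real TEE signs with a hardware-protected asymmetric key, and the verifier checks a certificate chain instead.

```python
import hashlib
import hmac
import json

# ASSUMPTION: HMAC with a shared toy key stands in for the TEE's signature,
# only to keep this sketch self-contained. It is not how a real TEE signs.
TOY_PLATFORM_KEY = b"toy-platform-key"


def measure(guardrail_code: bytes) -> str:
    """Toy 'measurement': a fingerprint of the code loaded into the enclave."""
    return hashlib.sha384(guardrail_code).hexdigest()


def commit(question: str, answer: str) -> str:
    """Hash that binds the proof to one exact question/answer pair."""
    payload = json.dumps({"question": question, "answer": answer}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def _sign(body: dict) -> str:
    message = json.dumps(body, sort_keys=True).encode("utf-8")
    return hmac.new(TOY_PLATFORM_KEY, message, hashlib.sha256).hexdigest()


def attest(guardrail_code: bytes, question: str, answer: str) -> dict:
    """What the enclave would hand back: measurement, commitment, signature."""
    body = {"measurement": measure(guardrail_code), "commitment": commit(question, answer)}
    return {**body, "signature": _sign(body)}


def verify(proof: dict, public_guardrail_code: bytes, question: str, answer: str) -> bool:
    """Run the three checks a verifier performs; any mismatch fails."""
    body = {"measurement": proof["measurement"], "commitment": proof["commitment"]}
    signature_ok = hmac.compare_digest(_sign(body), proof["signature"])
    code_ok = proof["measurement"] == measure(public_guardrail_code)
    content_ok = proof["commitment"] == commit(question, answer)
    return signature_ok and code_ok and content_ok


guardrail = b"def check(text): return 'forbidden' not in text"
question, answer = "How do I bake bread?", "Mix flour, water, and yeast."
proof = attest(guardrail, question, answer)

print(verify(proof, guardrail, question, answer))                          # True
print(verify(proof, guardrail + b"#", question, answer))                   # False: guardrail code changed
print(verify({**proof, "commitment": "0" * 64}, guardrail, question, answer))  # False: proof tampered
print(verify(proof, guardrail, question, "Edited answer"))                 # False: answer edited
```

The three `False` cases correspond to the three kinds of change mentioned above: the guardrail code, the proof itself, and the answer.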

Key terms in plain words:

- Guardrail: A set of rules or checks that tries to keep the AI’s answers safe (e.g., blocking harmful content or verifying facts).

- Trusted Execution Environment (TEE): A special, hardware-protected “locked room” inside a computer where code runs safely and can’t be secretly changed.

- Attestation: A signed, tamper-proof receipt from the TEE saying “this exact code ran and produced this result.”

- Hash: A short, unique “fingerprint” of data. If the data changes even a little, the fingerprint changes a lot.

What they built and tested:

- They implemented this “proof-of-guardrail” system for an open-source AI agent called OpenClaw.

- They ran it on a real cloud TEE (AWS Nitro Enclaves).

- They deployed it as a Telegram chatbot so regular users could ask for proof directly in chat.

4) What did they find, and why is it important?

Main results:

- It works end-to-end: Users can get a cryptographic proof that the exact guardrail ran for a given answer.

- Attack attempts were caught:

  - Changing the guardrail code → the proof fails (the measurement doesn’t match).

  - Tampering with the proof document → signature checks fail.

  - Editing the answer after the fact → the hash doesn’t match.

- Speed is still reasonable: Running inside the TEE made things slower, but not by too much for chat use:

  - About 25%–38% extra time for generating responses/guardrail checks.

  - About 100 milliseconds extra to generate the proof itself.

- Costs are higher: Using the TEE setup on the cloud was about 18.5× more expensive than a small non-TEE server, mainly because TEEs need more memory and a beefier machine.

- Real-world demo: A Telegram bot could produce the proof on demand so users could verify before trusting the answer.
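To get a feel for these numbers, here is a small back-of-the-envelope calculation. The overhead figures (25%–38%, about 100 ms, about 18.5×) come from the results above; the 2-second baseline response time and the $10 monthly baseline cost are made-up assumptions chosen only to make the arithmetic concrete.

```python
def latency_with_tee(baseline_s: float, overhead_fraction: float, proof_s: float = 0.1) -> float:
    """Baseline latency scaled by the TEE overhead, plus ~100 ms to generate the proof."""
    return baseline_s * (1.0 + overhead_fraction) + proof_s


# ASSUMPTIONS (not from the paper): a 2-second reply and a $10/month non-TEE server.
ASSUMED_BASELINE_S = 2.0
ASSUMED_BASELINE_COST_USD = 10.0

low = latency_with_tee(ASSUMED_BASELINE_S, 0.25)
high = latency_with_tee(ASSUMED_BASELINE_S, 0.38)
tee_cost = ASSUMED_BASELINE_COST_USD * 18.5

print(f"Reply time with TEE and proof: {low:.2f} s to {high:.2f} s")  # 2.60 s to 2.86 s
print(f"Monthly cost with TEE: ${tee_cost:.2f}")                      # $185.00
```

Under these assumptions, a 2-second reply becomes roughly 2.6 to 2.9 seconds, which is why the slowdown is tolerable for chat while the cost multiplier is the bigger hurdle.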

Why this matters:

- It stops fake claims: Developers can’t just say “we used a safety filter”—they have to prove it.

- It protects privacy: The AI’s private prompts or models don’t have to be revealed.

- It boosts trust: Users (or platforms) can check the proof themselves, without trusting a middleman.

Important caution:

- Proof-of-guardrail is not proof-of-safety. Guardrails can still make mistakes, and a determined bad actor might try to “jailbreak” or trick the guardrail. The proof shows the guardrail ran—it doesn’t guarantee the answer is perfect or harmless.

5) What’s the bigger picture? (Implications)

- Better trust for online AIs: In a world with many AI agents (some honest, some not), this gives users a clear, checkable way to confirm safety steps were actually used.

- Helps honest developers stand out: They can show cryptographic proof that they followed safety practices, which could attract more users and partners.

- Not a silver bullet: Because guardrails aren’t flawless and can be attacked, this system should be combined with strong, community-tested guardrails, regular red-teaming, and safe design practices.

- A path toward safer ecosystems: If platforms encourage or require this kind of proof, users can compare agents more fairly and avoid ones that only pretend to be safe.

Overall, the paper shows a practical way to prove that guardrails were applied—fast enough for chat—and explains clearly what you should trust (guardrail execution) and what you shouldn’t assume (that the answer is automatically safe or correct).

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper, to guide future research and engineering work.

- End-to-end mediation guarantees: How to formally ensure that all agent inputs, outputs, and tool/API calls are forced through the measured wrapper program f (e.g., via syscall filtering, network isolation, eBPF hooks), with proofs or audits that no alternative I/O channels exist.

- Trajectory-level attestation: Mechanisms to bind proofs to the full agent trajectory (prompts, plans, intermediate tool calls and responses, retrieved context, model identities) rather than only the final input and response (x, r), e.g., Merkle-tree commitments over step-by-step logs with acceptable overhead.

- Input binding and canonicalization: A standardized, interoperable canonical encoding for inputs/outputs (e.g., JSON canonicalization) to prevent hash mismatches from innocuous formatting changes and to avoid ambiguity in what exactly was attested.

- Freshness and anti-replay: Inclusion of nonces, timestamps, session IDs, or monotonic counters in attestation data to prevent replay of old proofs; evaluation of what Nitro Enclaves natively support and how verifiers should enforce freshness.

- Streaming and multi-turn support: Practical designs and benchmarks for streaming guardrails and multi-turn dialogs where partial outputs are moderated and attested incrementally, including latency/UX impacts.

- Completeness of enforcement: Methods to detect and prevent developers from generating responses outside the enclave and only passing the output through the guardrail (“output-only” moderation), including attestations that the agent itself (or all its I/O) ran under enforcement.

- Guardrail configuration integrity: Techniques to seal and attest guardrail configurations (thresholds, policies, allow/deny lists) so they cannot be weakened at runtime; secure, auditable update channels for configurations and versioning semantics.

- Composing multiple guardrails: How to attest and verify the order, configuration, and composition graph of multiple guardrails (e.g., safety + factuality), and study interactions/conflicts and error compounding.

- External API/model verification: Evidence that LLM/tool calls actually reached the declared providers/models (mitigating model substitution or endpoint spoofing), e.g., by logging TLS certificate pins in the attestation, obtaining signed receipts, or leveraging provider-side TEEs.

- Registry and governance: A community or standards-based registry mapping enclave measurements to audited open-source guardrail versions and build recipes, with revocation, update policies, and regression test suites.

- Reproducible builds and SBOMs: Procedures for deterministic enclave builds (toolchains, pinned dependencies), publication of SBOMs, and independent rebuild pipelines to recompute and validate the expected measurement m.

- Cross-platform portability: Validation on alternative TEEs (Intel TDX, AMD SEV-SNP, GCP Confidential VMs) to compare attestation formats, custom-data limits, performance characteristics, and platform-specific side-channel surfaces.

- Side-channel and TEE-specific threats: Systematic analysis of side channels (e.g., Iago attacks, microarchitectural leaks) relevant to Nitro Enclaves in this use case and concrete mitigations compatible with the performance envelope.

- Transparency and omission detection: Protocols and infrastructure (e.g., public transparency logs) to detect selective disclosure (developers showing proofs only when convenient) and to enable auditors to spot missing proofs for safety-critical interactions.

- Proof coverage policies: Clear policies for when proofs must be presented (e.g., every message, or only on high-stakes queries), and client-side enforcement/UI behavior when proofs are absent or invalid.

- Usability and verifier tooling: Empirical studies on whether typical users or integrators can verify proofs reliably; standardized client libraries, formats, and UI designs that reduce human error and misinterpretation (“proof-of-guardrail” not being misread as “proof-of-safety”).

- Scalability and throughput: Benchmarks under high concurrency and across workloads, including per-request attestation overheads, batching strategies, and performance on GPU-accelerated agents or larger enclaves.

- Attestation size and transport: Measurement of attestation document sizes across platforms, transport overhead in chat or web channels, compression strategies, and interoperable envelopes for proofs and associated metadata.

- Privacy-preserving variants: Designs for use cases where x and/or r are sensitive (contrary to current assumptions), such as encrypting custom data to verifiers, differential disclosure, or zero-knowledge extensions.

- Evidence reproducibility for fact-checking tools: For guardrails relying on dynamic web content (e.g., Loki), how to attest and preserve the evidence (URLs, timestamps, snapshots) so third parties can validate claims after the fact.

- Persistent state and memory: Techniques to attest guardrail enforcement over agent memory and long-term state (knowledge bases, vector stores) and to bind proofs to the state version that influenced a given response.

- Formal assurance of wrapper f: Applying formal verification, fuzzing, or proof-carrying code to demonstrate that f cannot be subverted by the developer’s agent A (e.g., preventing execution or command injection within the enclave).

- Guardrail robustness under adversarial pressure: Systematic evaluation and attested self-tests/red-teaming of g against jailbreaks and prompt-based bypasses, with on-chain or logged results to quantify residual risk.

- Economic viability: Quantitative studies of user trust uplift and business outcomes relative to the reported ~18.5× cost increase; exploration of cost-reduction techniques (lighter TEEs, serverless enclaves, shared attestation services).

- Standardized claim semantics: A machine-readable schema for what exactly is being proven (guardrail identity, version, configs, coverage scope), aligned with regulatory or industry requirements, and accompanied by clear, standardized disclaimers.

- Revocation and update handling: End-to-end processes for revoking compromised guardrail versions or measurements, distributing updates to verifiers, and ensuring clients react appropriately to revoked or stale proofs.

Practical Applications

Below are practical, real-world applications that follow from the paper’s “proof-of-guardrail” concept (TEE-backed attestations that a specific open-source guardrail executed for an agent response). Each item notes sector fit, potential products/workflows, and key assumptions or dependencies.

Immediate Applications

- Proof-bearing chatbots and agent platforms

  - Sector: Software/SaaS, Customer Support, E-commerce, Productivity

  - Tools/products/workflows: “Guardrail Attestation Gateway” (TEE proxy with embedded guardrails); “Proof-of-Guardrail” badge in UI; per-message attestations users can download or verify via a verifier CLI; integration with OpenClaw/LangChain/AutoGen via an SDK; the “attestation skill” to auto-offer proofs on high-stakes prompts

  - Assumptions/dependencies: Cloud TEE availability (e.g., AWS Nitro Enclaves); open-source guardrail code/config pinned via measurement; acceptable latency/cost overhead; users or platforms have verifier tools; guardrails can still err and be jailbroken (not proof-of-safety)

- Enterprise compliance logging and audits

  - Sector: Regulated enterprise IT, MLOps, GRC (governance-risk-compliance)

  - Tools/products/workflows: Attested transcript logging; audit-ready evidence that specific guardrail versions ran (SOC 2/ISO 27001/AI Act documentation); CI/CD checks that pin measurements; continuous verification dashboards

  - Assumptions/dependencies: Trust in TEE chain-of-trust; stable release/measurement management; secure storage of attested logs; guardrail quality vetted through internal policy

- Agent marketplaces and bot stores with verification

  - Sector: Platforms/marketplaces (app stores, plugin stores, bot directories)

  - Tools/products/workflows: Submission-time verification service; “verified guardrail” badges; automated re-verification on updates; consumer-facing disclosure of guardrail identity and version

  - Assumptions/dependencies: Marketplace policy and enforcement; standardizing how measurements and guardrail manifests are submitted; cross-TEE support as vendors diversify infra

- Financial advisory and compliance assistants with proof-of-guardrail

  - Sector: Finance (retail investing, wealth management, banking compliance)

  - Tools/products/workflows: Attested content-safety and factuality guardrails before advice is shown; compliance review workflows that reject non-attested answers; integration with suitability/risk guardrails; transcript evidence for FINRA/SEC examinations

  - Assumptions/dependencies: Acceptance of TEE attestations by risk/compliance functions; latency tolerance; explicit disclaimers that this is not proof-of-safety; continuous red-teaming of the measured program to reduce bypass risk

- Healthcare triage and patient-facing support with verified guardrails

  - Sector: Healthcare (patient portals, telehealth intake, benefits navigation)

  - Tools/products/workflows: Medical-safety guardrails (contraindication checks, urgency triage policies) with attestations attached to responses; clinical review queues prioritizing non-attested or low-confidence items

  - Assumptions/dependencies: Domain-specific, community-vetted medical guardrails; institutional acceptance of cloud TEEs; strong privacy posture if combined later with confidential inference; human-in-the-loop escalation

- Educational tutoring and campus assistants with age-appropriateness and factuality checks

  - Sector: Education (K–12, higher-ed)

  - Tools/products/workflows: Attested content-appropriateness filters and fact-check guardrails; instructor dashboards that show pass/fail of guardrails per answer; parent/student “proof” links

  - Assumptions/dependencies: School policy for third-party verification; reliable guardrails for age appropriateness; bandwidth for verification at scale

- Developer toolchains for verifiable guardrails

  - Sector: Software engineering, DevTools, MLOps

  - Tools/products/workflows: Verifier CLI/SDK; CI pipeline step to fail builds without known guardrail measurements; “TEE proxy” docker images; templates for OpenClaw/LangChain to route model/tool traffic through the measured wrapper

  - Assumptions/dependencies: Availability of prebuilt enclave images; engineering effort to route all I/O through the wrapper; handling streaming or switching to streaming-compatible guardrails

- Social/messaging platforms that demand proofs from third-party bots

  - Sector: Social platforms, Messaging (Slack, Discord, Telegram)

  - Tools/products/workflows: Platform policy requiring bots to attach attestations for certain content types; automated verifier bots or built-in app verification; user-visible proof buttons

  - Assumptions/dependencies: Platform willingness to enforce; lightweight verifier libraries for client and server; consistent guardrail manifests per platform policy

- Research reproducibility and benchmark reporting

  - Sector: Academia/Research, Safety benchmarking

  - Tools/products/workflows: Attested benchmark runs (e.g., AgentHarm, WebAgentBench) with published measurements and verification scripts; artifact review that checks proofs before acceptance

  - Assumptions/dependencies: Community norms to release measurements and wrapper code; reproducible build pipelines; researchers accept TEE trust model

- Security red-teaming and bug bounty evidence

  - Sector: Security, Safety engineering

  - Tools/products/workflows: Attested red-team transcripts proving exact guardrail versions; bounty programs that require attested exploits; regression suites tied to measurements

  - Assumptions/dependencies: Mature attestation storage; pre-registration of measurements; clarity that proofs don’t imply safety, only enforcement

- Procurement and vendor due diligence

  - Sector: Enterprise sourcing, Legal/Compliance

  - Tools/products/workflows: RFP/RFI templates requiring sample attestations; vendor scorecards capturing measurement IDs; automated checks during pilot phases

  - Assumptions/dependencies: Buyers’ teams can run verifiers; vendors standardize how proofs are provided; clear policies for measurement rotation

- Insurance underwriting signal for AI deployments

  - Sector: Insurance (cyber, professional liability)

  - Tools/products/workflows: Carriers accept attested guardrail enforcement as a control; premium credits for attested usage; claims forensics use attested transcripts

  - Assumptions/dependencies: Insurer appetite and actuarial validation; legal acceptance of attestations; shared understanding of residual risks (jailbreaks, guardrail error)

- Content moderation and ads safety verification

  - Sector: Media, AdTech, Trust & Safety

  - Tools/products/workflows: Attested moderation decisions (e.g., Llama Guard 3); audit trails for disputes; publisher-side proof requirements for AI-authored content

  - Assumptions/dependencies: Moderation guardrails tuned to domain; throughput and cost acceptable; acceptance that proof ≠ correctness guarantee

Long-Term Applications

- Standards and registries for proof-of-guardrail

  - Sector: Cross-industry, Standards bodies

  - Tools/products/workflows: Open formats for attestation payloads and guardrail manifests; public registries of “best-practice” guardrails with measurement IDs; interop across AWS Nitro, Intel TDX, AMD SEV-SNP, ARM TrustZone

  - Assumptions/dependencies: Multi-vendor TEE alignment; governance over registry inclusion, deprecation, and revocation

- Community-vetted guardrail suites and regression tests

  - Sector: Safety research, Open-source foundations

  - Tools/products/workflows: Curated guardrail packs (content safety, factuality, domain policies) with ongoing red-teams; versioned benchmarks tied to measurements; automatic regression alerts

  - Assumptions/dependencies: Sustained community maintenance; representative benchmarks; incentives for vendors to adopt community recommendations

- End-to-end attestable agent pipelines (inputs, plans, tools, outputs)

  - Sector: Software, Critical operations

  - Tools/products/workflows: Per-step attestation (planning, tool calls, code execution, output); streaming-compatible guardrails with attestations; chain-of-custody across multi-agent systems

  - Assumptions/dependencies: Performance optimization for multi-attest flows; richer wrapper programs to bind intermediate states; robust handling of streaming

- On-device and edge deployments with attested guardrails

  - Sector: Robotics, Automotive, IoT, Mobile

  - Tools/products/workflows: TrustZone/TEE-backed proof for on-device assistants and robot controllers; local verification or delayed sync to a verifier service

  - Assumptions/dependencies: Hardware TEE feature parity at the edge; real-time constraints and thermal envelopes; secure update channels for guardrail code

- Privacy + integrity: confidential inference plus proof-of-guardrail

  - Sector: Healthcare, Legal, Finance

  - Tools/products/workflows: Trusted VMs for model/data confidentiality, combined with attested safety enforcement; dual-proof artifacts (confidentiality + guardrail integrity)

  - Assumptions/dependencies: Efficient confidential inference stacks; regulator and customer comfort with TEE trust models; careful policy for what goes into custom data (x, r)

- Cyber-physical safety gates for actuation

  - Sector: Industrial control, Energy, Drones/Robotics

  - Tools/products/workflows: Actuation permitted only after attested policy checks; per-command proofs that a safety policy allowed the action; event recorders for post-incident forensics

  - Assumptions/dependencies: Hard real-time performance; certified safety policies; fail-safe behavior on attestation failures

- Regulatory adoption for conformity assessments

  - Sector: Public policy, Regulators

  - Tools/products/workflows: Conformity modules that verify proofs against recognized guardrail catalogs; mandatory disclosure of measurements; supervised markets requiring proofs for certain agent categories

  - Assumptions/dependencies: Legal frameworks that recognize TEE attestations; harmonization with the EU AI Act and sectoral rules; oversight capacity

- Public transparency logs and decentralized verification

  - Sector: Platforms, Web3/Transparency ecosystems

  - Tools/products/workflows: Publishing attested transcripts or digests to public logs or blockchains; smart contracts gating payments on verified proofs; transparency tooling for civil society

  - Assumptions/dependencies: Privacy-preserving logging designs; sustainable cost models; governance for disputes and takedowns

- Native UX indicators for proofs (“guardrail lock”)

  - Sector: OS/Browser vendors, Messaging apps

  - Tools/products/workflows: System APIs to verify attestations; UI indicators next to AI content; user controls to auto-hide non-attested answers

  - Assumptions/dependencies: Standard verification libraries; product acceptance; mitigation of spoofing/phishing via UX

- Legal evidentiary use and incident forensics

  - Sector: Legal, Claims, Internal investigations

  - Tools/products/workflows: Chain-of-custody for AI outputs with signed attestations; evidentiary packaging; expert-witness guidance on interpreting proofs (proof-of-enforcement, not proof-of-safety)

  - Assumptions/dependencies: Judicial acceptance; secure archival; clear interpretability of guardrail scope/limits

- Adaptive, jailbreak-resistant guardrail ensembles with proofs

  - Sector: Safety engineering, High-risk domains

  - Tools/products/workflows: Attested ensembles (e.g., constitutional classifiers, tool-augmented detectors) with rotation schedules; streaming enforcement with partial-response halting

  - Assumptions/dependencies: Ongoing research on jailbreak resistance; performance and cost trade-offs; update channels that maintain measurement provenance

- Zero-knowledge or non-TEE proofs of enforcement

  - Sector: Cryptography, High-assurance systems

  - Tools/products/workflows: zk proofs for guardrail execution to reduce cloud trust assumptions; hybrid TEE–ZK designs for selective disclosure

  - Assumptions/dependencies: Major research breakthroughs to make zk practical at interactive latencies; standardized circuits for guardrails

- Cross-provider policy orchestration and continuous verification

  - Sector: Large enterprises, MSPs

  - Tools/products/workflows: Centralized policy plane to push/rotate guardrail measurements across fleets; continuous verification and alerting; auto-quarantine of non-attested agents

  - Assumptions/dependencies: Multi-cloud support; secure measurement lifecycle management; integration with SIEM/SOAR

Notes on feasibility and risk across applications

- Proof-of-guardrail is proof of enforcement, not proof of safety. Guardrails can be wrong and can be jailbroken. Measured wrapper programs must be hardened to prevent bypass.

- Trust anchors remain with TEE vendors/cloud providers; some applications may require alternatives (e.g., zk proofs) or multi-TEE attestation for higher assurance.

- Costs (memory requirements, enclave-friendly networking) and latency overheads must be acceptable; real-time domains may need optimized stacks and streaming-compatible guardrails.

- External APIs invoked by guardrails (LLM backends, web search) introduce additional trust dependencies; openness about those calls should be part of the manifest.

- Binding the input x: deployments should decide whether to bind both x and r in the attestation; the paper’s “attestation skill” variant omits x, which reduces traceability guarantees.
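The last note, on binding x, can be made concrete with a small sketch. The function names and the length-prefixed encoding are illustrative assumptions, not the paper's format. The point is only that a commitment over r alone is identical for every question that leads to the same response, while a commitment over both x and r is not.

```python
import hashlib


def commit_response_only(r: str) -> str:
    """Commitment that covers only the response r (input x is omitted)."""
    return hashlib.sha256(r.encode("utf-8")).hexdigest()


def commit_input_and_response(x: str, r: str) -> str:
    """Commitment over both x and r. Length prefixes (an assumed encoding)
    keep pairs like ("ab", "c") and ("a", "bc") from colliding."""
    xb, rb = x.encode("utf-8"), r.encode("utf-8")
    framed = len(xb).to_bytes(8, "big") + xb + len(rb).to_bytes(8, "big") + rb
    return hashlib.sha256(framed).hexdigest()


r = "Yes, that is fine."
x_benign = "Can I water my plants twice a week?"
x_other = "Can I skip the safety check on this machine?"

# With r only, one proof fits both conversations.
print(commit_response_only(r) == commit_response_only(r))  # True, regardless of x

# With (x, r), the proof is tied to the question that was actually asked.
print(commit_input_and_response(x_benign, r) == commit_input_and_response(x_other, r))  # False
```

This is the traceability loss the note describes: without x in the commitment, a valid proof for a response says nothing about which question produced it.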

Glossary

- Attestable audits: An approach that uses TEEs to cryptographically prove a model has passed specified security evaluations. "The most relevant work, attestable audits~\cite{Schnabl2025AttestableAV}, ensures that a model the user communicates with has provably passed security audits."

- Attestation document: A TEE-produced, signed statement that includes the code measurement and a commitment to specific input/output of an execution. "The TEE's hardware/firmware-backed attestation service produces an attestation document that includes the enclave measurement and a custom data commitment, covering the input and the output."

- Attestation signing key: A platform-protected private key used by the TEE to sign attestation documents, backed by a certificate chain. "The document is signed using a TEE platform-protected attestation signing key whose certificate chain roots in the platform's trust anchor (e.g., AWS for Nitro Enclaves, or Intel for Intel Trusted Domain Extensions)."

- AWS Nitro Enclaves: Amazon’s confidential computing/TEE offering that creates isolated compute environments for secure processing and attestation. "We experiment with Amazon Web Service (AWS) Nitro Enclaves TEE on one m5.xlarge instance"

- AWS Nitro Secure Module (NSM): A module/services interface inside Nitro Enclaves used to generate attestation documents. "The attestation server takes custom data (such as input and output) as input, generates an attestation document by calling the AWS Nitro Secure Module (NSM), and returns the attestation document in the response."

- Certificate chain: A hierarchical chain of certificates establishing trust from a signing key to a root authority. "The document is signed using a TEE platform-protected attestation signing key whose certificate chain roots in the platform's trust anchor..."

- Commitment (cryptographic): A hash-based binding to specific data (e.g., input and output) included in an attestation to prevent tampering. "a custom data commitment, covering the input and the output."

- Computational integrity: The assurance that declared code (e.g., a guardrail) actually executed to produce a given output. "We consider the following desiderata in the proposed system. (1) Computational integrity, i.e., the guardrail executed when generating the response."

- Confidential computing: Cloud/TEE-based protection of workloads so code and data remain confidential during processing. "cloud providers offer TEEs as “confidential computing” to protect workloads."

- Confidential model inference: Running inference while keeping inputs and model parameters secret using TEEs or similar mechanisms. "and confidential model inference~\cite{tramer2018slalom, Lee2019OcclumencyPR, Grover2018PrivadoPA, anthropic_confidential_inference_trusted_vms_2025} to keep inputs, training data and model weights secret."

- Enclave: The isolated execution environment created by a TEE where measured code runs securely. "The program launches in an isolated execution environment created by a TEE called an enclave."

- Enclave image (EIF): The immutable image (including kernel and dependencies) loaded into a Nitro Enclave and measured for attestation. "the wrapper program denotes an enclave image (EIF), analogous to a virtual-machine image in that it is the immutable artifact loaded at boot and the component whose contents are measured for attestation."

- Enclave measurement: A cryptographic hash of the enclave’s loaded code/binary used to attest exactly what executed. "the TEE records an enclave measurement (a hash) that depends on the program binary of f."

- Federated learning: A distributed ML paradigm where models are trained collaboratively across clients without centralizing raw data. "TEEs are applied in multi-party and federated learning~\cite{Hynes2018EfficientDL, Law2020SecureCT, Mo2021PPFLPF, WarnatHerresthal2021SwarmLF}"

- Guardrail: A safety mechanism that restricts or evaluates an AI agent’s inputs/outputs or tool use according to policies. "Agent guardrails play a role in safety by restricting tool calls or responses with pre-defined rules or by predicting safety compliance~\cite{Sharma2025ConstitutionalCD, Chennabasappa2025LlamaFirewallAO, Xiang2024GuardAgentSL, Vijayvargiya2025OpenAgentSafetyAC}."

- Hypervisor: The virtualization layer that manages VMs/TEEs; in Nitro, it measures code and protects TEE keys. "We trust the cloud provider (AWS’s Nitro hypervisor) to correctly measure the code and protect the TEE’s private keys."

- Jailbreaking: Deliberately bypassing or subverting a guardrail’s restrictions to induce unsafe behavior. "a malicious developer can perform jailbreaking against the open-source guardrail."

- OpenClaw: An open-source AI agent framework used in the paper’s implementation and evaluation. "We implement proof-of-guardrail for OpenClaw agents and evaluate latency overhead and deployment cost."

- Platform Configuration Register (PCR): A TEE/TPM register storing measurements (hashes) of loaded components used during attestation. "- pcr2: 5760ec667cfa6a152c00379e492a2ceabfc9c1d63649fa846b945b4886d5c8321ec0712c6f1fd0d9f294811e68ce2005"

- Proof-of-guardrail: The proposed system that provides cryptographic evidence that a declared guardrail executed when producing a response. "we propose proof-of-guardrail, a system that enables developers to provide cryptographic proof that a response is generated after a specific open-source guardrail."

- Prover: The party generating evidence (e.g., an attestation) to convince a verifier that execution occurred as claimed. "We introduce remote attestation as a way for a prover to convince a verifier that a public program executed inside a TEE..."

- Remote attestation: A TEE mechanism producing signed evidence that a specific measured program ran with given inputs/outputs. "TEEs support remote attestation, a proof that the program is running as expected (rather than a modified one), even though the verifier cannot directly inspect the machine."

- Signature chain: The chain of cryptographic signatures/certificates used to validate an attestation back to a trusted root. "...the verifier checks the attestation’s signature chain (using a verification key/certificate published by the TEE platform)..."

- Streaming guardrails: Guardrails that evaluate and enforce safety on partial/streamed model outputs rather than only on completed responses. "However, we note that streaming guardrails~\cite{Sharma2025ConstitutionalCD, Cunningham2026ConstitutionalCE} are compatible with the proposed proof-of-guardrail framework."

- Tool call: An agent action that invokes an external tool/service as part of its reasoning or response generation. "it invokes the attestation server as a tool call, and the agent generates intended response as the request parameter before requesting the attestation server."

- Trust anchor: The root of trust (e.g., a vendor’s root certificate) that anchors the attestation’s certificate chain. "whose certificate chain roots in the platform's trust anchor (e.g., AWS for Nitro Enclaves, or Intel for Intel Trusted Domain Extensions)."

- Trusted Computing Base (TCB): The set of components that must be trusted for the system’s security properties to hold. "Our system minimizes the Trusted Computing Base (TCB) by anchoring trust in the hardware or cloud service already used for deployment..."

- Trusted Execution Environment (TEE): A hardware-backed isolated environment ensuring code/data confidentiality and integrity, supporting attestation. "A Trusted Execution Environment (TEE) is a hardware-backed isolation mechanism that lets sensitive code run in a protected area."

- Verifiable inference: Producing evidence that an output was generated by a specific model on a specific input. "and verifiable inference (binding an output to a specific input and model)~\cite{tramer2018slalom, Cai2025AreYG}."

- Verification key: A public key or certificate used by verifiers to check attestation signatures. "...the verifier checks the attestation’s signature chain (using a verification key/certificate published by the TEE platform)..."

- Verifier: The party that checks the attestation to confirm the claimed execution and outputs. "We introduce remote attestation as a way for a prover to convince a verifier that a public program executed inside a TEE..."

- Zero-knowledge proofs: Cryptographic proofs that a statement is true without revealing underlying secrets, often computationally heavy. "as a computationally efficient alternative to prohibitive zero-knowledge proofs~\cite{Tramr2017SealedGlassPU, South2024VerifiableEO}."
