GhanaNLP Parallel Corpora: Comprehensive Multilingual Resources for Low-Resource Ghanaian Languages

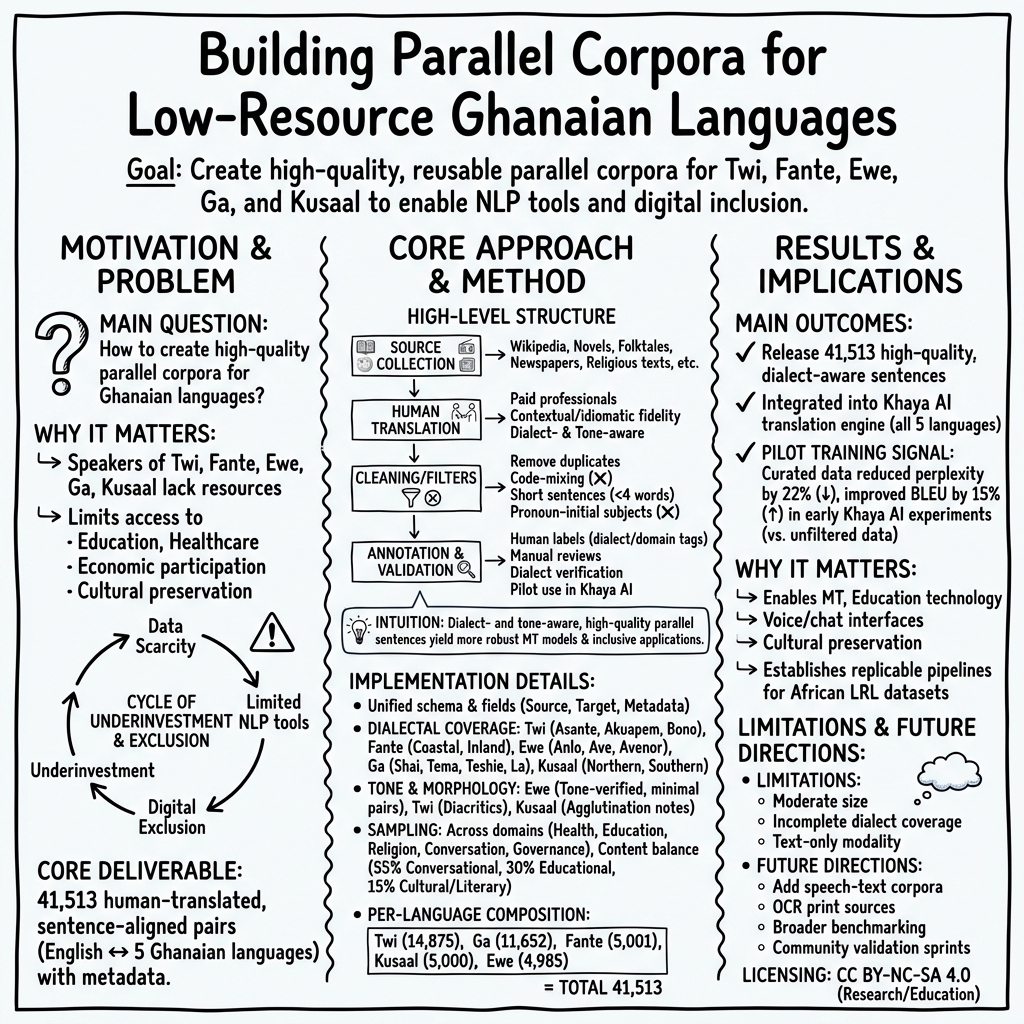

Abstract: Low-resource languages present unique challenges for natural language processing due to the limited availability of digitized, well-structured linguistic data. To address this gap, the GhanaNLP initiative has developed and curated 41,513 parallel sentence pairs for Twi, Fante, Ewe, Ga, and Kusaal, languages that are widely spoken across Ghana yet remain underrepresented in digital spaces. Each dataset consists of carefully aligned sentence pairs between a local language and English. The data were collected, translated, and annotated by human professionals and enriched with standard structural metadata to ensure consistency and usability. These corpora are designed to support research, educational, and commercial applications, including machine translation, speech technologies, and language preservation. This paper documents the dataset creation methodology, structure, intended use cases, and evaluation, as well as the corpora's deployment in real-world applications such as the Khaya AI translation engine. Overall, this work contributes to broader efforts to democratize AI by enabling inclusive and accessible language technologies for African languages.

Explain it Like I'm 14

What is this paper about?

This paper is about building high‑quality language data for five widely spoken Ghanaian languages—Twi, Fante, Ewe, Ga, and Kusaal—so computers can understand and translate them better. The team created 41,513 pairs of matching sentences: each sentence in a Ghanaian language sits side by side with its English translation. This kind of collection is called a “parallel corpus,” and it’s the basic ingredient needed to train translation apps, voice assistants, and other language tools.

What questions were the researchers trying to answer?

In simple terms, they wanted to know:

- How can we create trustworthy, well‑organized bilingual data for Ghanaian languages that don’t have much digital text yet?

- How do we include different dialects (like accents and regional ways of speaking) so tools work for many speakers, not just some?

- What rules and checks make the data clean, accurate, and useful for real apps?

- Can these datasets actually improve translation systems people use?

How did they do it?

Think of this like building a very careful two‑column book: left side in a Ghanaian language, right side in English, with each line meaning the same thing. To build it, they:

- Collected sentences: They gathered sentences from places like Wikipedia, storybooks (e.g., Ananse tales), the Bolingo cultural archive, and public documents.

- Translated by humans: Professional translators who speak both languages translated the sentences into English, paying attention to meaning, tone, idioms, and dialects.

- Cleaned the text: They removed very short or messy lines, such as sentences under four words, sentences that begin with a pronoun, duplicates, and code‑mixed lines that blend English and a local language.

- Added notes (“metadata”): For each sentence pair, they recorded details like which language and dialect it came from and a unique ID, so others can reuse and check the data easily.

- Checked quality: Native speakers reviewed samples, verified dialects and tones (important in languages like Ewe), and fixed issues. They tried out the data in a real translation system to see if it helped.
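The cleaning and metadata steps above can be pictured as a small filter pass over raw sentence pairs. This is a minimal sketch, not the GhanaNLP pipeline: the pronoun list (a few Twi forms, not exhaustive), the English "marker words" used as a crude code‑mixing cue, the `SentencePair` fields, and the ID format are all hypothetical stand‑ins chosen for illustration.

```python
from collections.abc import Iterable
from dataclasses import dataclass

# Hypothetical stand-ins: the project's real pronoun list and code-mixing rules are not published.
PRONOUNS = {"me", "wo", "ɔno", "yɛn", "mo", "wɔn"}         # a few Twi pronoun forms, illustrative only
ENGLISH_MARKERS = {"the", "and", "is", "because", "but"}    # crude cue that a line is code-mixed


@dataclass(frozen=True)
class SentencePair:
    pair_id: str        # hypothetical ID format, e.g. "tw-000001"
    language: str
    dialect: str
    local_text: str
    english_text: str


def keep(local_text: str, seen: set[str]) -> bool:
    """Apply the cleaning rules to one local-language sentence; records kept sentences in `seen`."""
    tokens = local_text.lower().split()
    if len(tokens) < 4:                                   # fewer than four words
        return False
    if tokens[0] in PRONOUNS:                             # pronoun-initial
        return False
    if any(t in ENGLISH_MARKERS for t in tokens):         # likely code-mixed
        return False
    key = " ".join(tokens)
    if key in seen:                                       # exact duplicate of a kept sentence
        return False
    seen.add(key)
    return True


def build_corpus(rows: Iterable[tuple[str, str]], language: str, dialect: str) -> list[SentencePair]:
    """Filter (local, english) rows and attach metadata with sequential IDs."""
    seen: set[str] = set()
    pairs: list[SentencePair] = []
    for local, english in rows:
        if keep(local, seen):
            pair_id = f"{language}-{len(pairs) + 1:06d}"
            pairs.append(SentencePair(pair_id, language, dialect, local, english))
    return pairs
```

Real filtering would also need punctuation handling and a proper language‑identification step instead of a stop‑word list; the point here is only the order of checks and where the metadata gets attached.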

Key ideas explained:

- Parallel corpus: A set of sentence pairs in two languages that say the same thing—like subtitles with two languages lined up.

- Dialect: A regional way of speaking the same language (like US vs UK English).

- Tone: In some languages, how your voice goes up or down can change a word’s meaning.

- Metadata: Helpful labels and notes about the data (who, what, where) so it’s organized and reusable.

- Low‑resource language: A language with very little digital text available for computers to learn from.

What did they find or make?

They produced five bilingual datasets with careful dialect coverage and cultural expressions (like proverbs), all aligned with English:

- Twi–English: 14,875 sentence pairs (Asante, Akuapem, Bono dialects)

- Ga–English: 11,652 pairs (urban and formal Ga, cleaned of code‑mixing)

- Fante–English: 5,001 pairs (Coastal, Inland, and other Fante varieties)

- Kusaal–English: 5,000 pairs (Eastern and Western Kusaal)

- Ewe–English: 4,985 pairs (including Anlo and Avenor variants, with careful tone checks)
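As a quick consistency check, the per‑language counts above add up to the 41,513 total stated in the abstract. The snippet below also prints each language's share of the whole, which makes the skew toward Twi and Ga easy to see.

```python
# Sentence-pair counts per language, as reported above.
COUNTS = {
    "Twi": 14_875,
    "Ga": 11_652,
    "Fante": 5_001,
    "Kusaal": 5_000,
    "Ewe": 4_985,
}

total = sum(COUNTS.values())
print(f"Total: {total:,} pairs")

# Largest corpus first, with each language's share of the total.
for lang, n in sorted(COUNTS.items(), key=lambda kv: -kv[1]):
    print(f"{lang:<7} {n:>6,}  {n / total:6.1%}")
```

Roughly 36% of the pairs are Twi–English and 28% Ga–English, while Fante, Kusaal, and Ewe each contribute about 12%.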

Why this matters:

- The data is clean and trustworthy. For example, reviewers agreed on 98.4% of the borderline cases they double‑checked, and the team removed many low‑quality lines so what’s left is stronger.

- It already helps real tools. When they used the data to train Khaya AI (a translation tool focused on African languages), the models got noticeably better, showing a 22% drop in perplexity (a measure of confusion) and a 15% improvement in BLEU score (a common translation quality score) compared to training with unfiltered data.

- It reflects real life. The sentences include everyday talk, school topics, civic information, and traditional sayings, so tools trained on this data are more likely to be useful in real situations.
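Both Khaya AI figures are relative changes. The sketch below shows what perplexity measures (the exponential of the average negative log‑probability a model assigns to each token) and how a relative change is computed. The numbers in it are hypothetical and only illustrate the arithmetic; they are not results from the paper.

```python
import math


def perplexity(token_logprobs: list[float]) -> float:
    """Perplexity = exp of the average negative log-likelihood (natural log)."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(nll)


def relative_change(before: float, after: float) -> float:
    """Signed relative change; -0.22 means a 22% drop."""
    return (after - before) / before


# Hypothetical values for illustration only (not from the paper).
uniform = [math.log(1 / 4)] * 10     # model spreads probability evenly over 4 choices
print(perplexity(uniform))           # about 4.0: as "confused" as a 4-way guess
print(relative_change(50.0, 39.0))   # a perplexity of 50 falling to 39 is a 22% reduction
```

Lower perplexity means the model is less surprised by held‑out text; a 22% drop means the new value is about 0.78 times the old one.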

Licensing in brief:

- Free to use for research and education under CC BY‑NC‑SA 4.0 (give credit, non‑commercial, share alike).

- Commercial use needs permission; military uses are not allowed.

Why does this matter?

- Better access: People who speak Ghanaian languages can get translations, information, and services in languages they actually use every day—not just English.

- Fairer technology: Most AI tools are great for big languages; this work helps close the gap for African languages that have been left out.

- Education and public services: Schools, health clinics, and government platforms can localize materials, making services clearer and more effective.

- Culture and preservation: Including proverbs, idioms, and dialects helps keep languages alive and respected in the digital world.

- A foundation for future work: Researchers and developers now have a solid starting point to build translation systems, voice assistants, and learning apps. The clear documentation and metadata make it easier for others to add more languages or improve the datasets over time.

In short, the team didn’t just write about language technology—they built the core resources needed to make it work for Ghanaian languages and showed that these resources already improve real translation tools. This is a big step toward more inclusive, useful AI for everyone.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of unresolved issues that future work could address to strengthen the dataset, its documentation, and its downstream utility.

- No publicly released train/dev/test splits or standardized evaluation protocol per language to enable fair comparison and replication.

- Absence of baseline results (e.g., BLEU, chrF, COMET) reported per language/dialect with clear test sets; current Khaya AI claims are not reproducible (missing model details, data splits, metrics).

- No human evaluation of translation quality (adequacy/fluency, cultural fidelity) or detailed error analysis by language, domain, or dialect.

- Per-instance provenance is missing (“source for each data point not highlighted individually”), hindering legal compliance, deduplication, and trust.

- Copyright status of underlying texts (e.g., novels, Bible, court records) and compatibility with the dataset’s CC BY-NC-SA license are not clarified.

- Use of court records introduces potential privacy risks; no documented de-identification policy, consent procedures, or PII audit.

- Claimed metadata (dialect tags, tonal annotations, morpheme boundaries) conflicts with the published file schema of four columns; unclear what metadata is actually released.

- Tonal annotation practices are underspecified: coverage rates, marking conventions, Unicode normalization (NFC/NFKC), and inter-annotator consistency are not documented.

- Orthographic standardization across dialects is not described (rules, references, normalization), risking inconsistencies and noise.

- Exclusion criteria (≥4 words, subject–verb–complement, no pronoun-initial sentences) introduce selection bias; impact on downstream performance is unmeasured.

- Inconsistent handling of code-mixing (e.g., retained in Fante, filtered in Ga) without a principled modeling/evaluation strategy for code-switching scenarios common in Ghana.

- Actual domain distribution per language is not reported or labeled at the sentence level; claims (e.g., 55/30/15 conversational/educational/cultural) cannot be verified or subsetted by users.

- Dataset sizes remain small for modern MT; no data augmentation baselines, transfer learning strategies, or scaling plan are presented.

- Inter-annotator agreement is only reported as 98.4% for “borderline cases” without task definition, sample size, or methodology; full QA protocol is missing.

- No alignment confidence scores or validation procedures to quantify parallelism quality and detect misalignments.

- Dialectal balance effects are not evaluated (e.g., per-dialect performance, domain-by-dialect coverage, reliability of dialect labels).

- Tokenization/segmentation guidelines and tools (especially for agglutinative languages like Kusaal) are not released; claims of morpheme annotations are not evidenced in the files.

- Distribution in .xls only; lack of standardized, machine-friendly formats (TSV/JSONL), loaders, and Unicode normalization guarantees.

- No overlap/deduplication analysis within the corpora or against existing Ghanaian/African datasets; risk of test leakage and inflated benchmarks.

- No released scraping/cleaning/transformation code, translator/annotator guidelines, or data collection scripts; limits transparency and reproducibility.

- No links to actual data cards or full metadata dumps; claimed adherence to Hugging Face data card standards is not externally verifiable.

- No robustness evaluations (noisy input, code-mixed input, OOV handling) or generalization tests to user-generated content and informal registers.

- Ethical impact and community engagement processes (consent, feedback, benefit sharing, harm mitigation) are not documented beyond licensing statements.

- Licensing adds extra prohibitions (e.g., “military not permitted,” “removal of cultural or dialectal references prohibited”) that may be incompatible with CC BY-NC-SA and difficult to enforce; legal clarity is needed.

- Governance for commercial access (criteria, transparency, appeal), sustainability plans, maintenance schedule, versioning, and changelogs are unspecified.

- No cross-Ghanaian language parallel alignments (e.g., Twi–Ewe) or pivot strategies, limiting multilingual transfer beyond English-centric pairs.

- Speech use-cases are mentioned without releasing any aligned speech–text data or a concrete roadmap for multimodal expansion and QC.

- Cultural content (proverbs/idioms) lacks specialized validation or annotation for figurative language, making it hard to evaluate cultural fidelity.

- Selection of legal/civic texts (e.g., court/administrative documents) may bias registers; representativeness and potential skew are not analyzed.

- Coverage and consistency of diacritics and tone marks across dialects are not quantified; input method and normalization issues remain unresolved.

- Fairness and inclusion are not measured (e.g., performance by region, dialect, gender-coded language), nor are mitigation strategies proposed.

- Open question: How to reintroduce and evaluate short and pronoun-initial utterances that are prevalent in conversational systems.

- Open question: How to structure active learning, community feedback loops, and error reporting pipelines to iteratively improve data quality.

- Open question: How to harmonize orthographic differences across institutions (e.g., Bureau of Ghana Languages) and community norms for consistent, longitudinal datasets.

- Open question: How to interoperate with Masakhane/MAFAND-MT and avoid duplication while enabling joint benchmarks and dataset linkage.
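Several of the gaps above (no published splits, no overlap analysis, unspecified Unicode normalization) can be narrowed with very little code. The sketch below is one common approach, not something the dataset ships: it NFC‑normalizes text so composed and decomposed tone marks compare equal, then assigns each sentence to a split by hashing its normalized form, so re‑runs are reproducible and exact duplicates can never straddle train and test. The 5%/5% dev/test fractions and all function names are assumptions.

```python
import hashlib
import unicodedata


def normalize(text: str) -> str:
    """NFC-normalize, lowercase, and collapse whitespace for comparison purposes."""
    return " ".join(unicodedata.normalize("NFC", text).lower().split())


def assign_split(local_text: str, dev: float = 0.05, test: float = 0.05) -> str:
    """Deterministically map a sentence to "train", "dev", or "test" by hashing it.

    Identical (normalized) sentences always land in the same split,
    which prevents exact-duplicate leakage between train and test.
    """
    digest = hashlib.sha256(normalize(local_text).encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64    # roughly uniform in [0, 1)
    if bucket < test:
        return "test"
    if bucket < test + dev:
        return "dev"
    return "train"


def leaked(train: list[str], test: list[str]) -> set[str]:
    """Return normalized test sentences that also occur in the training set."""
    train_keys = {normalize(s) for s in train}
    return {normalize(s) for s in test} & train_keys
```

Hashing only catches exact duplicates after normalization; near‑duplicates (a changed word, different punctuation) would need fuzzy matching, and the same `leaked` check could be run against external corpora such as MAFAND‑MT.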

Practical Applications

Immediate Applications

Below is a concise set of deployable, real-world uses that can be implemented with the current GhanaNLP Parallel Corpora and associated workflows.

- Machine translation and localization for apps and websites

- Sector: Software, media, e-commerce, government

- Tools/products/workflows: Khaya AI engine/APIs, browser extension, document translation pipelines, CMS i18n workflows for Twi, Fante, Ewe, Ga, Kusaal

- Dependencies/assumptions: Commercial use requires a separate paid license on top of the CC BY-NC-SA 4.0 base license; the domain mix (conversational/educational/cultural) is not labeled per sentence, so coverage for a given product may vary; dialect selection and style guides are needed per locale; XLS ingestion and dataset splits are needed for training/QA

- Multilingual customer support and chatbots

- Sector: Telecom, finance, utilities, logistics

- Tools/products/workflows: NMT-powered chatbots/IVR, agent-assist translation, language identification (e.g., AfroLID) + MT routing, fallback to English

- Dependencies/assumptions: Because pronoun-initial and very short sentences were removed, conversational coverage is limited and supplementary dialog data may be required; human-in-the-loop review for edge cases; commercial license if monetized

- Public service localization and civic messaging

- Sector: Government, NGOs, healthcare

- Tools/products/workflows: Batch translation of public notices, health advisories, election materials, tax and civic portals; templated message banks in local languages

- Dependencies/assumptions: Government/NGO public-benefit use permitted by base license; set up native-speaker QA; tone-sensitive terms (esp. Ewe) require careful review

- Bilingual education materials and literacy tools

- Sector: Education, edtech, publishing

- Tools/products/workflows: Creation of bilingual readers, glossaries, flashcards, quizzes; localization of LMS content; teacher handouts in Twi/Fante/Ewe/Ga/Kusaal

- Dependencies/assumptions: Alignment with national curricula and dialect preferences; add domain-specific terminology for STEM/technical content

- Research benchmarks and reproducible experiments

- Sector: Academia, AI R&D

- Tools/products/workflows: Baselines for Ghanaian-language NMT; reproducible datacard-based benchmarking; cross-dialect evaluation; error analysis using curated test sets

- Dependencies/assumptions: Parse XLS to standard training formats; clear train/dev/test splits; maintain metadata for dialect/domain tags

- Cultural preservation and digital archives

- Sector: Cultural heritage, libraries, museums

- Tools/products/workflows: Digitized bilingual collections of proverbs/folktales; lexicon-building and dictionary extraction; searchable online cultural repositories

- Dependencies/assumptions: Non-commercial distribution fits the license; respect cultural sensitivities and protected expressions; because source provenance is not recorded per sentence (a noted limitation), additional curation may be required

- Government forms and e-services in local languages

- Sector: Public administration, justice

- Tools/products/workflows: Translation/localization of standard forms, permits, court notices, municipal websites; workflow for periodic updates

- Dependencies/assumptions: Official review/approval (e.g., Bureau of Ghana Languages); establish terminology glossaries for legal/civic terms

- Healthcare patient-facing materials

- Sector: Healthcare

- Tools/products/workflows: Translation of consent forms, triage instructions, clinic signage; SMS health campaigns in local languages

- Dependencies/assumptions: Domain adaptation for medical terminology is needed; human validation mandatory to reduce risk in safety-critical contexts

- Quality assurance (QA) for Ghanaian-language MT

- Sector: Software/AI product teams

- Tools/products/workflows: Assemble regression test suites per dialect/domain; CI pipelines tracking BLEU/TER and human ratings; error-bucket analysis

- Dependencies/assumptions: Hold-out test sets and human raters; periodic refresh to cover new domains (e.g., finance, agriculture)

- Translator training and LSP (language service provider) workflows

- Sector: Language services

- Tools/products/workflows: Use corpora as gold references for translator onboarding, QA rubrics, and style guides per dialect

- Dependencies/assumptions: Establish accepted orthography and tone marking conventions; ensure licensing compliance for training materials
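Several of the workflows above begin by converting the released .xls files into machine‑friendly training formats. Assuming the spreadsheet rows have already been read into tuples (for example with a spreadsheet library), a minimal conversion to JSONL and TSV needs only the standard library. The field names below are hypothetical and should be mapped to the actual columns of the released files.

```python
import csv
import io
import json

# Hypothetical field names; map them to the columns of the released spreadsheets.
FIELDS = ("id", "language", "local_text", "english_text")


def to_jsonl(rows) -> str:
    """Serialize rows as JSON Lines, keeping non-ASCII characters (e.g. tone marks) readable."""
    lines = [json.dumps(dict(zip(FIELDS, row)), ensure_ascii=False) for row in rows]
    return "\n".join(lines) + "\n"


def to_tsv(rows) -> str:
    """Serialize rows as TSV with a header line; csv quoting protects embedded tabs."""
    buf = io.StringIO()
    writer = csv.writer(buf, delimiter="\t", lineterminator="\n")
    writer.writerow(FIELDS)
    writer.writerows(rows)
    return buf.getvalue()
```

`ensure_ascii=False` keeps diacritics as real characters instead of `\u` escapes; files written from these strings should be opened with `encoding="utf-8"`.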

Long-Term Applications

The following opportunities require additional research, scaling, or complementary datasets (e.g., speech), but are directly enabled or de-risked by the GhanaNLP corpora’s foundations.

- Speech technologies: ASR, TTS, and voice assistants in Ghanaian languages

- Sector: Telecom, healthcare, finance, robotics

- Tools/products/workflows: Voice IVR, on-device assistants, voice-enabled USSD; pronunciation lexicons and grapheme-to-phoneme rules that respect tone

- Dependencies/assumptions: Need aligned speech corpora and lexicons; robust tone modeling; data collection, funding, and compute resources

- Multilingual LLMs and NLU for Ghanaian languages

- Sector: Software, education, productivity

- Tools/products/workflows: Instruction-tuned LLMs for Q&A, summarization, drafting; multilingual retrieval and knowledge assistants

- Dependencies/assumptions: Much larger monolingual and parallel corpora beyond 41k pairs; compute capacity; safety/guardrail evaluation; commercial licensing for deployment

- Domain-specialized MT (health, agriculture, legal)

- Sector: Healthcare, agriculture, justice

- Tools/products/workflows: Fine-tuned models with domain glossaries; translation memory systems; risk-managed human-in-the-loop pipelines

- Dependencies/assumptions: Curate domain-specific parallel data; establish terminology standards; clinical/legal review processes

- Cross-dialect normalization and intra-language translation

- Sector: Media, education, publishing

- Tools/products/workflows: Asante↔Akuapem Twi, Coastal↔Inland Fante normalization; dialect-aware content distribution

- Dependencies/assumptions: Additional intra-language parallel datasets; agreed-upon normalization conventions; community input

- Cross-border translation for ECOWAS trade and tourism

- Sector: Trade, tourism, regional development

- Tools/products/workflows: Twi↔French, Ewe↔French, Ga↔French models (direct or pivot via English); multilingual guides and signage

- Dependencies/assumptions: New parallel corpora for Ghanaian↔French; evaluation against regional dialects; customs/tourism domain data

- Assistive technologies and accessibility

- Sector: Accessibility, social impact

- Tools/products/workflows: Screen readers and AAC devices in local languages; voice interfaces for low-literate users

- Dependencies/assumptions: ASR/TTS maturity; device-side optimization; user-centered design and field testing

- Media subtitling and dubbing automation

- Sector: Entertainment, broadcasting, education

- Tools/products/workflows: Auto-subtitling and machine dubbing for TV, radio, and online content; alignment and timing tools

- Dependencies/assumptions: High-quality ASR, forced alignment, and TTS; rights management and QC processes

- Smart agriculture and climate communication

- Sector: Agriculture, disaster management

- Tools/products/workflows: NLG-driven advisories (weather, pests) in local languages; two-way farmer Q&A via chat/voice

- Dependencies/assumptions: Agriculture/climate domain corpora; robust NLU for local idioms; connectivity and device access

- Financial inclusion: conversational banking and KYC in local languages

- Sector: Finance

- Tools/products/workflows: Local-language virtual agents, onboarding flows, fraud-triage conversations; agent-assist translation for compliance

- Dependencies/assumptions: Reliable NLU, privacy/compliance alignment, escalation workflows for low-confidence outputs

- Policy dashboards and language equity planning

- Sector: Public policy, development

- Tools/products/workflows: Dashboards tracking service availability by language/dialect; translation workload management across ministries

- Dependencies/assumptions: Inter-agency data integrations; governance for terminology and quality standards

- Lexicography and orthography standardization tooling

- Sector: Linguistics, publishing

- Tools/products/workflows: Semi-automated dictionary extraction and sense disambiguation; tone/orthography normalization tools

- Dependencies/assumptions: Ongoing linguist participation; community governance on spelling and tone conventions

- Safety-critical translation QA frameworks

- Sector: Healthcare, legal, public safety

- Tools/products/workflows: Auditable, human-in-the-loop MT systems with confidence scoring, error taxonomies, and incident response

- Dependencies/assumptions: Formal risk management, gold-standard test suites by domain, trained reviewers
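The "pivot via English" option mentioned for Ghanaian↔French translation can be pictured as composing two English‑centric systems. The sketch treats each system as a plain string‑to‑string function; `pivot` and `make_lookup` are hypothetical helpers, and the word‑lookup "models" merely stand in for real trained translators.

```python
from typing import Callable

Translator = Callable[[str], str]


def pivot(src_to_en: Translator, en_to_tgt: Translator) -> Translator:
    """Compose two systems into a cascade: source -> English -> target."""
    def translate(text: str) -> str:
        return en_to_tgt(src_to_en(text))
    return translate


def make_lookup(table: dict[str, str]) -> Translator:
    """Toy word-for-word translator (hypothetical stand-in for a trained model)."""
    return lambda text: " ".join(table.get(word, word) for word in text.split())
```

In a cascade, errors from the first hop feed directly into the second, which is one reason direct Ghanaian↔French data is listed as a dependency above.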

Cross-cutting assumptions and dependencies

- Licensing: The datasets are CC BY-NC-SA 4.0 for research/education; commercial use requires a separate paid license; military applications prohibited.

- Data scope and bias: The corpora exclude pronoun-initial and very short sentences and filter out heavy code-mixing (handled inconsistently: filtered in Ga, partly retained in Fante); additional data may be needed for highly colloquial chat and social media use.

- Dialect coverage: Multiple dialects are included but not exhaustive; product teams should select/validate dialects for target audiences.

- Tone and orthography: Tone is preserved “where critical”; speech and pronunciation-dependent applications require additional tone-robust resources and conventions.

- Provenance granularity: Per-entry source provenance is not always individually tracked; audit trails for high-stakes uses should add manual provenance.

- Compute and expertise: Advanced applications (LLMs, speech) need significant compute, specialized expertise, and new data collection.

- Human oversight: For safety-critical deployments (health/legal/finance), human review and domain glossaries are essential to ensure accuracy and accountability.

Glossary

- Agglutination: A morphological process that builds words by concatenating morphemes, each carrying a distinct meaning. "Agglutination: Annotated morpheme boundaries (e.g., ninkãm "my head" -> ni-n-kãm)."

- AfroLID: A neural language identification system tailored for African languages. "AfroLID, developed by Adebara et al. (2022), is a robust neural language identification system covering over 500 African languages."

- Algorithmic labels: Automatically generated identifiers or metadata for dataset entries. "Algorithmic labels, including sentence IDs and structural metadata, were generated automatically."

- Baseline model: A reference model used for comparison during evaluation or development. "Khaya AI baseline model for Twi-English"

- BLEU score: A metric for evaluating machine translation quality based on n-gram overlap with reference translations. "achieving a BLEU score of 0.81 and outperforming Google Translate by 7% on their test set."

- CC BY-NC-SA 4.0: A Creative Commons license allowing sharing and adaptation with attribution, non-commercial use, and share-alike. "a base license (CC BY-NC-SA 4.0)"

- Cascading system: A translation setup that uses an intermediate (pivot) language between source and target languages. "cascading systems using English as a pivot"

- Code-mixing: The blending of words or phrases from multiple languages within a single utterance. "Focus on clean separation of code-mixed sentences"

- Crowdsourcing: Collecting data or annotations from a distributed group of contributors, often via online platforms. "created through crowdsourcing, professional translation, and alignment using tools like Sketch Engine"

- Data card (Hugging Face datacard): A standardized documentation format for datasets covering purpose, composition, and usage. "a standardized schema inspired by Hugging Face's datacard guidelines."

- Data provenance: Documentation of the origins and history of data, ensuring transparency and traceability. "they explicitly state license terms, data provenance, and intended use cases."

- Dialectal variation: Systematic differences in language features across regional or social dialects. "significant dialectal variations within a single language"

- Downstream tasks: Practical applications or evaluations that use a dataset or model, such as translation or summarization. "utility in downstream tasks such as real-time translation"

- Gur languages: A branch of the Niger-Congo language family spoken in parts of West Africa. "shares roots with other Gur languages like Dagbani and Mampruli."

- Inter-annotator agreement: A measure of consistency among different annotators on the same data. "achieving an inter-annotator agreement rate of 98.4%."

- Interoperability: The ability of systems or datasets to work together seamlessly through shared formats or standards. "each corpus follows a standardized structure to ensure interoperability"

- Language identification: Automatically determining the language of a given text or speech input. "a robust neural language identification system covering over 500 African languages."

- Low-resource languages (LRLs): Languages with limited digital data and tools for NLP and machine learning. "those classified as low-resource languages (LRLs)."

- Morphological segmentation: The process of splitting words into their constituent morphemes. "Evaluation of morphological segmentation tools"

- Named entity recognition (NER): Identifying and classifying proper names (e.g., people, locations) in text. "introduced named entity recognition datasets for 10 African languages"

- Neural machine translation (NMT): Machine translation models based on neural networks, typically sequence-to-sequence architectures. "neural machine translation (NMT) for low-resource African languages"

- Parallel corpora: Collections of texts in two or more languages with aligned units (e.g., sentences) conveying the same content. "five parallel corpora designed specifically for Ghanaian languages"

- Perplexity: A metric measuring how well a language model predicts a sample; lower values indicate better performance. "a 22% reduction in perplexity"

- Pivot language: An intermediate language used to bridge translation between a source and a target language. "using English as a pivot"

- Post-editing: Human correction of translated text to improve grammar, style, or accuracy. "Minor post-editing was done for grammar and clarity in English translations"

- Postpositional structures: Grammatical constructions where relational words (postpositions) follow their complements. "Reflects agglutinative morphology and postpositional structures"

- Purposive sampling: A non-random sampling strategy that selects data points based on relevance to specific goals. "Purposive sampling: 90,000 entries reviewed, 14,875 selected"

- Sentence alignment: The process of pairing corresponding sentences across languages in a parallel corpus. "sentence-aligned pairs between the local language and English."

- Sketch Engine: A corpus management and text analysis tool used for alignment and linguistic exploration. "alignment using tools like Sketch Engine"

- Subject-verb-complement structure: A syntactic pattern ensuring sentences have a subject, a verb, and a complement. "subject-verb-complement structures"

- Syntactic validation: Checking and confirming the grammatical correctness of sentence structures. "systematic syntactic validation to confirm the presence of essential sentence components such as a subject, verb, and complement."

- Tonal marking: Notation indicating pitch variations (tones) that distinguish meaning in tonal languages. "Tonal Marking: High/low tones annotated via diacritics where critical for meaning."

- Tonal minimal pairs: Word pairs differing only by tone, leading to distinct meanings. "Tonal Minimal Pairs: 200+ entries disambiguate meaning via tone (e.g., fé "love" vs. fè "want")."

- Vowel harmony: A phonological process where vowels within a word agree in certain features (e.g., front/back, roundedness). "complex tone, vowel harmony, and agglutinative features."

- Web scraping: Automated extraction of content from web sources for data collection. "web-scraped data"
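The BLEU entry above describes n‑gram overlap with reference translations. A compact, unsmoothed corpus‑level version is sketched below for intuition only: it assumes whitespace tokenization and a single reference per sentence and returns a value between 0 and 1, so its scores will not match standard toolkits such as sacreBLEU, which apply their own tokenization and report on a 0 to 100 scale.

```python
import math
from collections import Counter


def ngrams(tokens: list[str], n: int) -> Counter:
    """Count all n-grams of length n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(hypotheses: list[str], references: list[str], max_n: int = 4) -> float:
    """Corpus-level BLEU in [0, 1]: single reference, whitespace tokens, no smoothing."""
    if len(hypotheses) != len(references):
        raise ValueError("need exactly one reference per hypothesis")
    matches = [0] * max_n
    totals = [0] * max_n
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        h, r = hyp.split(), ref.split()
        hyp_len += len(h)
        ref_len += len(r)
        for n in range(1, max_n + 1):
            h_counts, r_counts = ngrams(h, n), ngrams(r, n)
            # Clip each hypothesis n-gram count by its count in the reference.
            matches[n - 1] += sum(min(c, r_counts[g]) for g, c in h_counts.items())
            totals[n - 1] += max(len(h) - n + 1, 0)
    if hyp_len == 0 or min(matches) == 0:
        return 0.0
    # Geometric mean of the modified n-gram precisions.
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # Brevity penalty: penalize hypotheses shorter than the references.
    brevity = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return brevity * math.exp(log_precision)
```

For example, a hypothesis that drops the last word of a six‑word reference but is otherwise exact keeps all its n‑gram precisions at 1 and is scored only by the brevity penalty, exp(1 − 6/5) ≈ 0.82. For reported results, a standard implementation is preferable to this sketch.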
