- The paper introduces AgentFactory, a framework that constructs and refines reusable Python subagents, cutting batch-average orchestration output tokens by up to 31% relative to ReAct and reflection-style baselines.

- The methodology employs a three-phase pipeline (install, self-evolve, and deploy) to build, improve, and export portable, human-auditable subagents.

- This study demonstrates robust skill accumulation and cross-system interoperability, advancing life-long agent learning and programmable autonomy in LLM-based systems.

AgentFactory: A Self-Evolving Framework for Executable Subagent Accumulation and Reuse

Motivation and Conceptual Foundation

AgentFactory shifts LLM-based agent self-evolution away from accumulating textual experiences and toward preserving actionable knowledge, persisted as executable, documented Python subagents. The framework targets the inefficiency and unreliability of verbal-experience-based systems, particularly in complex procedural or tool-augmented tasks where prompt retrieval and chain-of-thought reflection cannot guarantee successful re-execution.

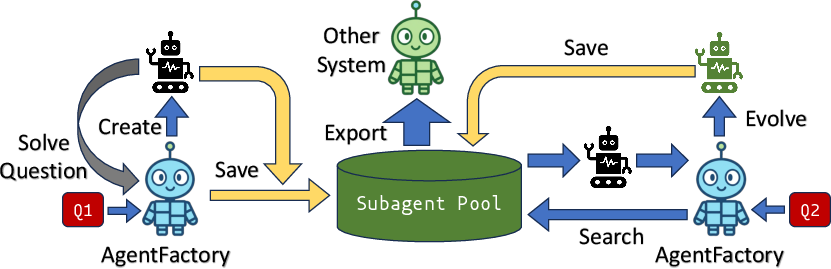

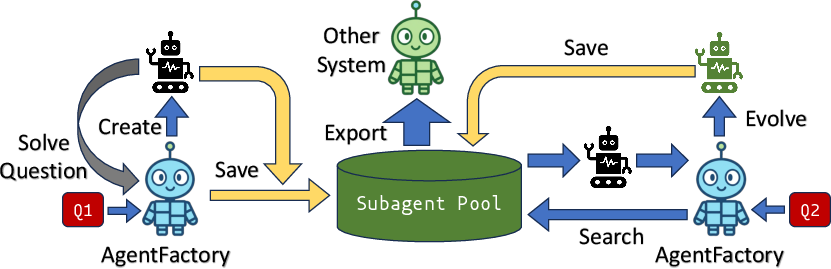

Recognizing that most real-world tasks can be decomposed into recurring subtasks, AgentFactory systematically constructs, refines, and accumulates subagents, each encapsulating reusable, executable logic. The meta-agent at the core of the system orchestrates task decomposition, code synthesis, skill allocation, feedback-driven improvement, and deployment, so that a robust, open-ended skill library emerges over time.

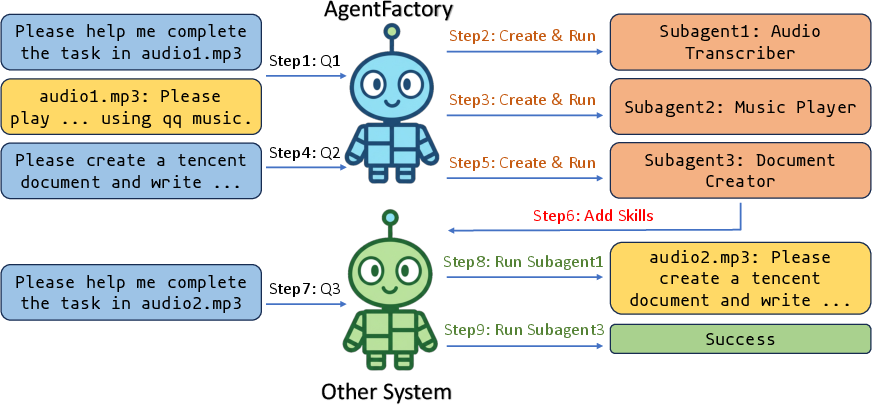

Figure 1: Schematic of the AgentFactory pipeline, illustrating the creation and reuse spectrum of subagents across tasks and the buildup of the skill library.

Architectural Overview

AgentFactory's architecture consists of three principal subsystems:

- Meta-Agent Orchestrator: Acts as the top-level planner and controller, responsible for decomposing tasks, dispatching subtasks, constructing subagents via code synthesis, and updating subagents through autonomous code revision.

- Skill System: Unifies all agent operations within a standardized interface, segregating skills into three categories:

  - Meta Skills: Core management primitives for subagent lifecycle control.

  - Tool Skills: Built-in, static tools (e.g., browser automation, web search, shell commands) used as atomic operations.

  - Subagent Skills: Dynamically generated, executable Python modules with standardized documentation, subject to continuous self-evolution.

- Workspace Manager: Ensures task-atomic, isolated code construction and testing to avoid skill library corruption, securing the integrity of both concurrent tasks and the evolving subagent repository.

This tripartite division facilitates modular, scalable expansion and aligns with emerging agent skill standards for interoperability.
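One way to picture the standardized interface is a single registry that stores all three skill categories behind the same call signature. The following is a minimal sketch under that assumption; `SkillKind`, `Skill`, `SkillRegistry`, and the two example skills are hypothetical names chosen for illustration and are not taken from the paper.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable, Dict, List


class SkillKind(Enum):
    META = "meta"          # subagent lifecycle primitives
    TOOL = "tool"          # built-in, static tools
    SUBAGENT = "subagent"  # generated, evolving Python modules


@dataclass
class Skill:
    name: str
    kind: SkillKind
    description: str
    run: Callable[..., Any]


class SkillRegistry:
    """Hypothetical uniform interface over all three skill categories."""

    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def by_kind(self, kind: SkillKind) -> List[str]:
        # Sorted names of every registered skill in one category.
        return sorted(s.name for s in self._skills.values() if s.kind is kind)

    def call(self, name: str, **kwargs: Any) -> Any:
        # Every skill, whatever its category, is invoked the same way.
        return self._skills[name].run(**kwargs)


registry = SkillRegistry()
registry.register(
    Skill("shell_echo", SkillKind.TOOL, "Echo text back.", lambda text: text)
)
registry.register(
    Skill("word_count", SkillKind.SUBAGENT, "Count words in text.",
          lambda text: len(text.split()))
)
```

Because the orchestrator only sees `call(name, **kwargs)`, a generated subagent can replace or wrap a built-in tool without any change to the planning code.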

Three-Phase Self-Evolution Pipeline

Install: De Novo Subagent Construction

When faced with a novel task, the meta-agent analyzes requirements, decomposes objectives, and programmatically synthesizes new Python subagents using both built-in tools and existing skills. Each constructed subagent is documented, versioned, and persisted, forming the foundation of the growing skill library. The meta-agent acts autonomously in both task understanding and functional decomposition, synthesizing code dynamically instead of filling in static templates.
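The install step, combined with the isolation the Workspace Manager provides, can be sketched as "build in a scratch directory, smoke-test, then promote into the library". This is an illustrative sketch only: the function name `install_subagent`, the `agent.py` file name, the `run()` entry point, and the layout of the documentation file are assumptions, not details from the paper.

```python
import importlib.util
import shutil
import tempfile
from pathlib import Path


def install_subagent(library: Path, name: str, code: str, doc: str,
                     version: str = "0.1.0") -> Path:
    """Build a subagent in a scratch workspace, smoke-test it, then persist it."""
    with tempfile.TemporaryDirectory() as scratch:
        staging = Path(scratch) / name
        staging.mkdir()
        (staging / "agent.py").write_text(code, encoding="utf-8")
        (staging / "SKILL.md").write_text(
            f"# {name}\n\nversion: {version}\n\n{doc}\n", encoding="utf-8"
        )

        # Smoke test: the module must import and expose a callable run().
        spec = importlib.util.spec_from_file_location(name, staging / "agent.py")
        module = importlib.util.module_from_spec(spec)
        spec.loader.exec_module(module)
        if not callable(getattr(module, "run", None)):
            raise ValueError(f"{name}: generated module has no callable run()")

        # Promote only after the test passes, so a bad build never
        # touches the shared library.
        target = library / name
        if target.exists():
            shutil.rmtree(target)
        shutil.copytree(staging, target,
                        ignore=shutil.ignore_patterns("__pycache__"))
    return target


# Usage with a throwaway library directory and hand-written "generated" code.
library = Path(tempfile.mkdtemp())
path = install_subagent(
    library,
    "word_count",
    "def run(text):\n    return len(text.split())\n",
    "Counts words in a string.",
)
```

A failed smoke test raises before anything is copied, so the library only ever contains subagents that at least import cleanly.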

Self-Evolve: Autonomous Refinement via Feedback

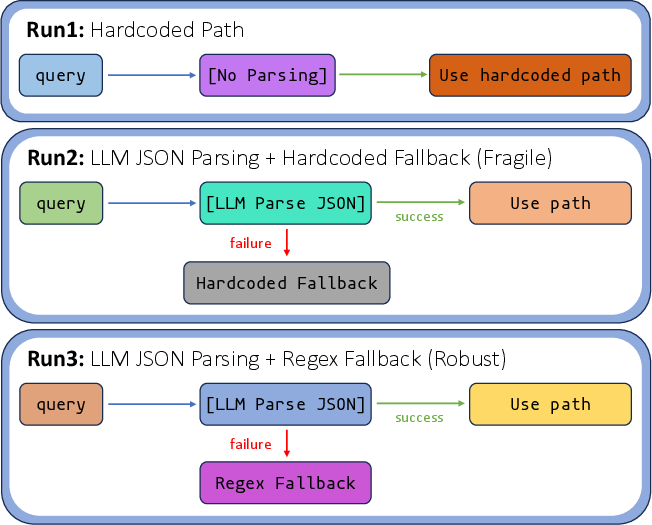

Upon encountering tasks with latent or explicit similarity to previously solved problems, the meta-agent retrieves relevant subagents and attempts direct reuse. Failures or suboptimality trigger a tightly integrated feedback loop involving execution trace analysis, error diagnosis, and targeted code modification via the modify_subagent primitive. This iterative improvement is not limited to prompt or plan tuning but extends to the transformation of executable agent code, encompassing error handling, generalization enhancements, and edge-case support.

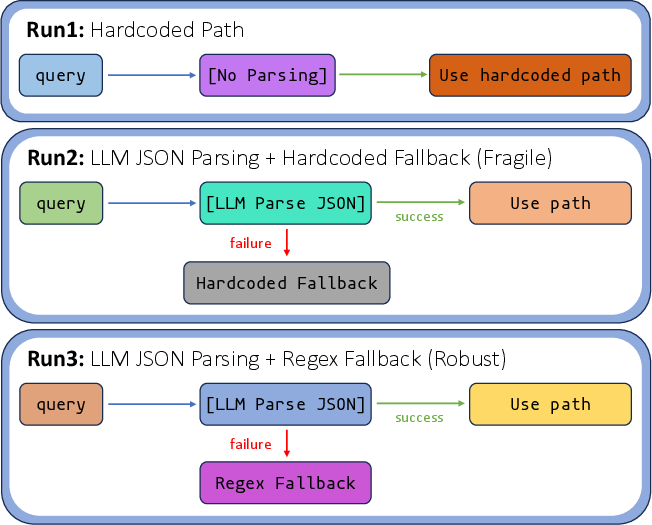

Figure 2: Trace of subagent refinement demonstrating the migration of the path resolution logic toward increased robustness across iterations.
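The reuse-then-repair loop described above can be sketched as follows. The source names a `modify_subagent` primitive but does not give its signature, so the `(code, trace) -> code` form used here is an assumption, and `stub_reviser` is a hard-coded stand-in for what would be an LLM-driven revision.

```python
import traceback
from typing import Any, Callable, Dict, Tuple


def load(code: str) -> Callable[..., Any]:
    """Compile subagent source and return its run() function."""
    namespace: Dict[str, Any] = {}
    exec(code, namespace)
    return namespace["run"]


def run_with_evolution(
    code: str,
    task_input: Any,
    modify_subagent: Callable[[str, str], str],
    max_rounds: int = 3,
) -> Tuple[Any, str]:
    """Try direct reuse first; on failure, pass the trace to a reviser and retry."""
    for _ in range(max_rounds):
        try:
            return load(code)(task_input), code
        except Exception:
            trace = traceback.format_exc()
            # Assumed signature: current source plus error trace in, new source out.
            code = modify_subagent(code, trace)
    # Final attempt after the last revision; any error now propagates.
    return load(code)(task_input), code


V1 = "def run(text):\n    return int(text)\n"


def stub_reviser(code: str, trace: str) -> str:
    # Stand-in for the LLM: patch the one failure mode seen in the trace.
    if "ValueError" in trace:
        return code.replace("int(text)", "int(text.replace(',', ''))")
    return code


result, evolved = run_with_evolution(V1, "1,000", stub_reviser)
```

The loop returns the revised source alongside the result, so the caller can persist the improved version back into the skill library.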

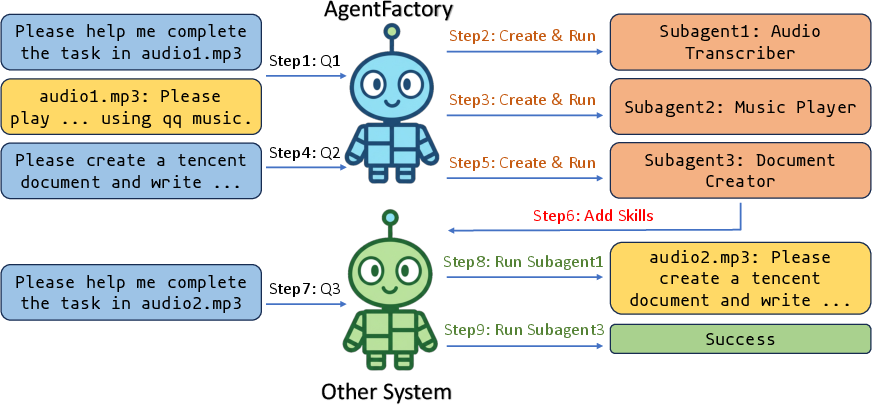

Deploy: Exporting Portable Subagents

Mature subagents are exported as standalone, documented Python modules, compatible with any Python-capable framework. Integration into host systems is achieved via descriptive documentation (SKILL.md) and standardized I/O, enabling cross-system interoperability. External frameworks can thus dynamically discover, inspect, and chain these skills, catalyzing rapid composition of high-level capabilities.
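To make the discovery and chaining idea concrete, the sketch below shows how a host framework might scan a directory for `SKILL.md` files and invoke a skill as a separate process. The source does not specify the I/O format, so JSON over stdin/stdout, the `main.py` entry script, and the helper names `discover_skills` and `call_skill` are all assumptions made for illustration.

```python
import json
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Any, Dict


def discover_skills(root: Path) -> Dict[str, str]:
    """Map each skill directory name to the first line of its SKILL.md."""
    found: Dict[str, str] = {}
    for doc in sorted(root.glob("*/SKILL.md")):
        lines = doc.read_text(encoding="utf-8").splitlines()
        found[doc.parent.name] = lines[0].lstrip("# ").strip() if lines else ""
    return found


def call_skill(root: Path, name: str, payload: Dict[str, Any]) -> Any:
    """Run a skill as a separate process: JSON in on stdin, JSON out on stdout."""
    proc = subprocess.run(
        [sys.executable, str(root / name / "main.py")],
        input=json.dumps(payload),
        capture_output=True,
        text=True,
        check=True,
    )
    return json.loads(proc.stdout)


# Build a tiny exported skill so the example runs end to end.
root = Path(tempfile.mkdtemp())
skill_dir = root / "word_count"
skill_dir.mkdir()
(skill_dir / "SKILL.md").write_text(
    "# Count words in a text field\n", encoding="utf-8"
)
(skill_dir / "main.py").write_text(
    "import json, sys\n"
    "data = json.load(sys.stdin)\n"
    "json.dump({'words': len(data['text'].split())}, sys.stdout)\n",
    encoding="utf-8",
)

skills = discover_skills(root)
result = call_skill(root, "word_count", {"text": "portable skills travel well"})
```

Running each skill in its own process keeps the host decoupled from the skill's dependencies, at the cost of process start-up time; importing the module directly would be the lighter alternative when both sides share an environment.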

Figure 3: Visualization of subagent recycling and cross-system utility, providing evidence of persistent, portable skill transfer and zero-shot reuse.

Empirical Evaluation

AgentFactory is evaluated by measuring orchestration-level token consumption across real-world task batches with two LLM backbones (Claude Opus 4.6 and Sonnet 4.6). Saving and reusing subagents yield substantial reductions in orchestration cost: up to a 31% decrease in batch-average output tokens versus ReAct and reflection-style baselines. The effect is particularly pronounced in heterogeneous task batches, where even minimal inter-task overlap is sufficient for the meta-agent to exploit accumulated skills. These results indicate that previously acquired skills are recalled reliably and that reuse introduces little negative transfer on dissimilar tasks.
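For readers who want to reproduce this kind of comparison, a relative reduction in batch-average output tokens can be computed as below. This is one plausible reading of the metric, not a definition confirmed by the source, and the per-task token counts are made up purely to show the arithmetic.

```python
from statistics import mean
from typing import Sequence


def token_savings(baseline_tokens: Sequence[int],
                  method_tokens: Sequence[int]) -> float:
    """Relative reduction in batch-average output tokens, as a fraction."""
    base = mean(baseline_tokens)
    return (base - mean(method_tokens)) / base


# Made-up per-task counts, for illustration only; not results from the paper.
baseline = [1000, 1200, 800]
with_reuse = [700, 800, 600]
savings = token_savings(baseline, with_reuse)
```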

Key claims substantiated by the quantitative results include:

- Executable subagent reuse outperforms textual experience retrieval in orchestration efficiency and reliability.

- Skill accumulation is effective even for long-tail, low-overlap task distributions in high-capacity models.

- Feedback-driven self-evolution leads to measurable improvements in agent robustness and task generality.

Practical and Theoretical Implications

Practically, AgentFactory bridges the gap between program synthesis, skill memory, and compositional agent design, enabling continuous, auditable workflow automation. The persistent accumulation of executable, portable skills offers a substantial step toward life-long agent learning, cross-system capability transfer, and scalable agentic infrastructure. With transparent, human-auditable code, AgentFactory mitigates safety and interpretability concerns inherent in purely prompt-driven agents.

Theoretically, the work operationalizes the paradigm of self-improving systems in the context of LLM-based agents, showing that autonomous program rewriting can be made both granular (subagent-specific) and compositional (skill chaining across systems). This trajectory points to the emergence of agent ecosystems where skills evolve, migrate, and generalize much like open-source software components, laying the groundwork for open-ended, community-driven agent intelligence.

Future Directions

Promising avenues include the integration of Vision-LLMs for GUI-based automation, reinforcement learning-based subagent optimization, meta-learning for cross-domain skill transfer, and community-sourced subagent marketplaces. Further exploration of subagent library evolution, catastrophic forgetting avoidance, and long-horizon compositionality could yield deeper insights into autonomous agent scaling.

Conclusion

AgentFactory provides a framework for LLM agent self-evolution that accumulates and autonomously refines executable subagents, enabling robust, efficient, and portable skill library growth. The demonstrated reductions in orchestration cost, coupled with portable, human-auditable subagent modules, position AgentFactory as a platform for research in life-long agent learning, programmable autonomy, and open-ended capability transfer across AI systems (arXiv:2603.18000).