- The paper introduces a rigorous framework for extending first- and second-order optimality conditions to infinite-dimensional weak Riemannian manifolds via novel definitions of gradients, Hessians, and coercivity.

- The paper develops new methodology through the concept of Hesse manifolds and structural hierarchies, ensuring well-posed Riemannian gradient descent algorithms and convergence guarantees.

- The paper provides explicit computations and numerical experiments in shape analysis and diffeomorphism groups, demonstrating the practical feasibility of optimizing on weak Riemannian spaces.

Optimization on Weak Riemannian Manifolds: Framework, Theory, and Numerical Analysis

Introduction

The paper "Optimization on Weak Riemannian Manifolds" (2603.25396) presents a theoretical framework for developing and analyzing optimization algorithms, specifically Riemannian gradient descent, on infinite-dimensional manifolds endowed with weak Riemannian metrics. Weak Riemannian structures occur frequently in shape analysis, shape optimization, and the geometry of diffeomorphism groups, where manifolds are modeled on topological vector spaces more general than Banach or Hilbert spaces. Riemannian optimization on finite-dimensional and Hilbert manifolds is mature; this work generalizes the key optimality and convergence results and identifies the structural challenges unique to the weak setting. It culminates in the concept of a Hesse manifold: a weak Riemannian manifold admitting a metric spray.

Preliminary Structures on Weak Riemannian Manifolds

Optimization on infinite-dimensional manifolds requires a careful generalization of differential-geometric concepts. A weak Riemannian metric is a continuous, symmetric, positive definite tensor field whose inner products generally induce a topology on each tangent space that is weaker than the topology of the model space. In particular, the tangent spaces need not be complete with respect to the inner product, and the inner-product topology need not agree with the manifold topology.

Differentiation uses the Bastiani calculus, suitable for locally convex (possibly non-normable) spaces, with directional derivatives providing the foundation for function regularity and the structure of configuration and tangent spaces. Critical Riemannian constructions relevant to optimization—such as gradients, Hessians, connections, and metric sprays—are redefined in a way tailored to these weak settings. Notably, the existence of Riemannian gradients and Levi-Civita connections is not ensured for general weak Riemannian manifolds, requiring explicit structural criteria.
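For orientation, the two basic notions can be written out as follows. This is a sketch using the standard conventions of Bastiani calculus rather than a quotation from the paper; the symbols $E$, $F$, $U$, and $df$ are notation introduced here. For a map $f\colon U \to F$ on an open subset $U \subseteq E$ of a locally convex space, the directional derivative is

$$
df(x; v) = \lim_{t \to 0} \frac{f(x + t v) - f(x)}{t}, \qquad x \in U,\ v \in E,
$$

and $f$ is $C^1$ in the Bastiani sense if these limits exist and $df\colon U \times E \to F$ is continuous. Given a weak Riemannian metric $g$, a Riemannian gradient of $f\colon M \to \mathbb{R}$ at $p$ is a vector $\operatorname{grad} f(p) \in T_pM$ satisfying

$$
g_p(\operatorname{grad} f(p), v) = df_p(v) \quad \text{for all } v \in T_pM.
$$

Such a vector is unique when it exists, but existence can fail because the map $v \mapsto g_p(v, \cdot)$ from $T_pM$ into its dual need not be surjective.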

First and Second Order Optimality

First-Order Conditions

The classical first-order necessary condition for optimality extends to this setting: for $f\colon M \to \mathbb{R}$ on a weak Riemannian manifold $M$, any local minimizer at which the Riemannian gradient exists is a critical point, i.e., the gradient vanishes there. The proof adapts the Bastiani differential calculus and uses the non-degeneracy of the weak inner products.
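The argument behind this condition is short enough to sketch. What follows is the standard reasoning, assuming the gradient exists at $p$; the curve $\gamma$ is notation introduced here and the paper's own proof may be organized differently. Let $p$ be a local minimizer and $v \in T_pM$, and choose a smooth curve $\gamma$ with $\gamma(0) = p$ and $\gamma'(0) = v$. Then $t \mapsto f(\gamma(t))$ has a local minimum at $t = 0$, so

$$
0 = \frac{d}{dt}\Big|_{t=0} f(\gamma(t)) = df_p(v) = g_p(\operatorname{grad} f(p), v).
$$

Taking $v = \operatorname{grad} f(p)$ gives $g_p(\operatorname{grad} f(p), \operatorname{grad} f(p)) = 0$, and positive definiteness of $g_p$ forces $\operatorname{grad} f(p) = 0$.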

Second-Order Conditions

A crucial divergence from the classical finite-dimensional theory arises in second-order conditions. In finite dimensions positive-definiteness of the Hessian suffices, because compactness of the unit sphere makes it equivalent to coercivity. On weak Riemannian manifolds coercivity must be imposed explicitly: a critical point $p$ is a strict local minimizer if the Riemannian Hessian at $p$ is coercive, i.e., there exists $\mu > 0$ such that $g_p(\operatorname{Hess} f(p)[v], v) \ge \mu \|v\|_p^2$ for all $v \in T_pM$, where $\|\cdot\|_p$ is the norm induced by $g_p$. This stronger requirement stems from the lack of compactness of the unit sphere and the possible incompleteness of tangent spaces in the weak setting.
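A small numerical illustration shows why positive-definiteness alone is not enough. It is a textbook-style example, not taken from the paper: the function $f(x) = \sum_k (x_k^2/k - x_k^4)$ on a finite truncation of $\ell^2$, with the $\ell^2$ norm standing in for the $g_p$-norm. The names `f`, `hessian_quadratic_form`, and `results` are hypothetical. The Hessian at the origin is positive definite, yet its Rayleigh quotient along the $k$-th basis vector is $2/k$, so no uniform $\mu > 0$ exists, and points arbitrarily close to the origin have negative function value.

```python
import numpy as np

def f(x):
    """f(x) = sum_k (x_k^2 / k - x_k^4) on a truncation of l^2, k = 1, 2, ..."""
    k = np.arange(1, x.size + 1)
    return np.sum(x**2 / k - x**4)

def hessian_quadratic_form(v):
    """Hess f(0)[v, v] = 2 * sum_k v_k^2 / k, which is positive for every v != 0."""
    k = np.arange(1, v.size + 1)
    return 2.0 * np.sum(v**2 / k)

n = 10_000  # truncation dimension (assumption made for the illustration)
results = []
for k in (10, 100, 1000, 10_000):
    e_k = np.zeros(n)
    e_k[k - 1] = 1.0                           # k-th unit basis vector
    rayleigh = hessian_quadratic_form(e_k)     # equals 2 / k, tends to 0
    x = (2.0 / np.sqrt(k)) * e_k               # norm 2 / sqrt(k), tends to 0
    results.append((k, rayleigh, np.linalg.norm(x), f(x)))

for k, rayleigh, norm_x, fx in results:
    print(f"k={k:6d}  Hess quotient={rayleigh:.2e}  |x|={norm_x:.3f}  f(x)={fx:.2e}")
```

Each printed row has a shrinking Hessian quotient and a negative value of `f` at a point whose norm shrinks with `k`, so the origin is a critical point with positive definite Hessian that is not a local minimizer in the limit of infinitely many coordinates.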

Structural Properties: Hesse, Robust, and Strong Riemannian Manifolds

Well-posed optimization algorithms and analytical tools on weak Riemannian manifolds require additional structure, which motivates the introduction of Hesse manifolds: weak Riemannian manifolds admitting a metric spray. Hesse manifolds provide the framework in which the gradients, Hessians, and connections needed for first- and second-order analysis become available and well-behaved.

A crucial hierarchy is established:

- Strong Riemannian manifolds (modeled on Hilbert space with strong metrics) are always robust and Hesse manifolds.

- Robust Riemannian manifolds are weak Riemannian manifolds whose local fiber completions yield a smooth Hilbert bundle, and whose metric derivatives satisfy an appropriate product rule. These include many manifolds from geometric analysis, e.g., spaces of smooth embeddings or mappings with Sobolev-type metrics.

- Hesse manifolds generalize robust and strong cases, allowing for broader infinite-dimensional applications while retaining necessary geometric structure.

In summary, the classes are nested: strong ⊂ robust ⊂ Hesse ⊂ weak Riemannian manifolds.

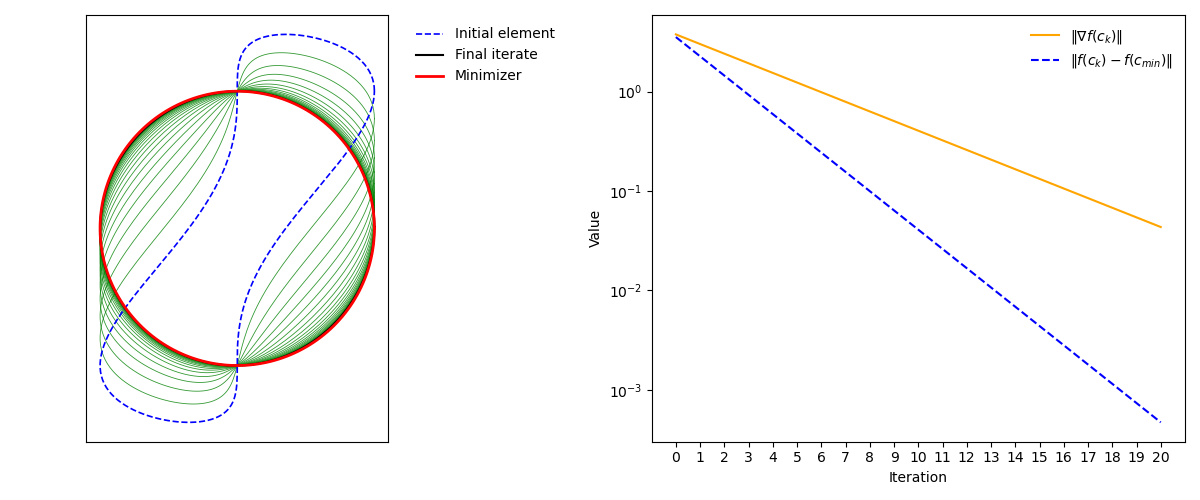

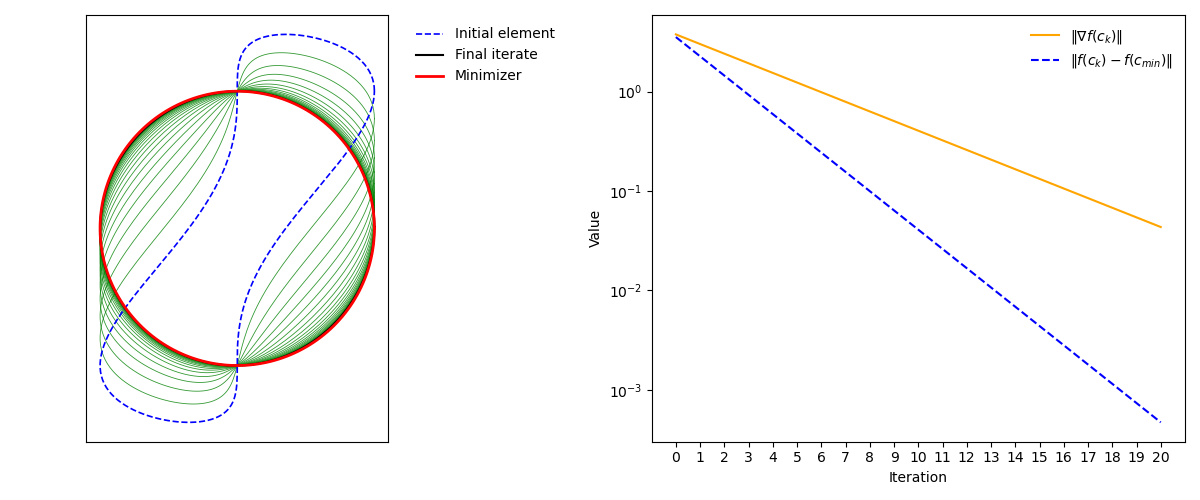

Figure 1: Riemannian gradient descent for f. Left: evolution of the iterates. Right: function values and gradient norms over twenty iterations.

Riemannian Gradient Descent: Algorithm and Convergence

The Riemannian gradient descent (RGD) method is formulated using local additions, which generalize retractions to infinite-dimensional settings. Given a suitable local addition $\Sigma$ and step sizes $(\alpha_k)$, the iterates evolve as $x_{k+1} = \Sigma(-\alpha_k \operatorname{grad} f(x_k))$. The convergence analysis assumes that the Riemannian gradient is sequentially continuous and that the steps achieve sufficient decrease in the objective.

A key result: under natural technical assumptions (a global lower bound on $f$, a sufficient decrease condition, and sequential continuity of the gradient), accumulation points of the RGD iterates are critical points and $\|\operatorname{grad} f(x_k)\|_{x_k}$ converges to zero. For strong Riemannian manifolds, the required continuity properties hold automatically.
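The iteration can be sketched in a few lines. The example below is a finite-dimensional stand-in, not the paper's infinite-dimensional setting or its experiments: the manifold is the unit sphere in $\mathbb{R}^3$, the objective is the Rayleigh quotient $x^\top A x$, the normalization map plays the role of the local addition, and the step size is constant. The names `rgd`, `local_addition`, `grad`, and `history` are hypothetical.

```python
import numpy as np

def rgd(f, grad, local_addition, x0, step, iters):
    """Riemannian gradient descent: x_{k+1} = Sigma(x_k, -step * grad f(x_k))."""
    x = x0
    history = []  # (function value, gradient norm) at each iterate
    for _ in range(iters):
        g = grad(x)
        history.append((f(x), np.linalg.norm(g)))
        x = local_addition(x, -step * g)
    return x, history

# Toy problem (assumption): minimise x^T A x over the unit sphere.
A = np.diag([1.0, 2.0, 3.0])

def f(x):
    return x @ A @ x

def grad(x):
    euclidean = 2.0 * A @ x
    # Project the Euclidean gradient onto the tangent space at x.
    return euclidean - (x @ euclidean) * x

def local_addition(x, v):
    # Move along the tangent vector v, then normalise back onto the sphere.
    y = x + v
    return y / np.linalg.norm(y)

x0 = np.ones(3) / np.sqrt(3.0)
x, history = rgd(f, grad, local_addition, x0, step=0.1, iters=200)
print("final value:", f(x), "last gradient norm:", history[-1][1])
```

In this toy setting the function values decrease monotonically toward the smallest eigenvalue of `A` and the recorded gradient norms tend to zero, which mirrors the qualitative behaviour described by the convergence result.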

Examples and Explicit Computations

The paper presents explicit computations of Riemannian gradients and Hessians on weak Riemannian manifolds relevant to shape analysis:

- On spaces of immersions M2, equipped with the $L^2$ metric, the gradient of the length functional is given by the curvature vector.

- For energy functionals on M4-loop spaces (as in geodesic analysis), the gradient and Hessian admit explicit forms, with the latter involving the curvature tensor and invertible operators built from the Laplacian.
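The first of these computations can be checked numerically on a discretized curve. The sketch below assumes closed planar curves and the arc-length-weighted $L^2$ metric, for which the gradient of the length functional is $-\kappa n$ (the negative curvature vector); the discretization by polygons and the names `length` and `l2_gradient_of_length` are choices made here, not taken from the paper. For a circle of radius $R$ the expected gradient at a point $c$ is $c / R^2$.

```python
import numpy as np

def length(c):
    """Length of the closed polygon with vertices c[0], ..., c[N-1]."""
    edges = np.roll(c, -1, axis=0) - c
    return np.linalg.norm(edges, axis=1).sum()

def l2_gradient_of_length(c):
    """Discrete L^2 gradient of the length: Euclidean gradient divided by vertex mass."""
    edges = np.roll(c, -1, axis=0) - c                  # edge i joins c[i] to c[i+1]
    edge_len = np.linalg.norm(edges, axis=1)
    tangents = edges / edge_len[:, None]                # unit tangent on edge i
    euclidean = np.roll(tangents, 1, axis=0) - tangents # dL/dc_i = T_{i-1} - T_i
    mass = 0.5 * (np.roll(edge_len, 1) + edge_len)      # arc length attached to vertex i
    return euclidean / mass[:, None]

# Regular polygon inscribed in a circle of radius R (assumed test curve).
R, N = 2.0, 100
theta = 2.0 * np.pi * np.arange(N) / N
c = R * np.column_stack([np.cos(theta), np.sin(theta)])

grad = l2_gradient_of_length(c)
print("max deviation from c / R^2:", np.abs(grad - c / R**2).max())
```

The gradient points outward with magnitude $1/R$, so a descent step shrinks the circle, in line with the curve-shortening interpretation of the $L^2$ gradient flow of length.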

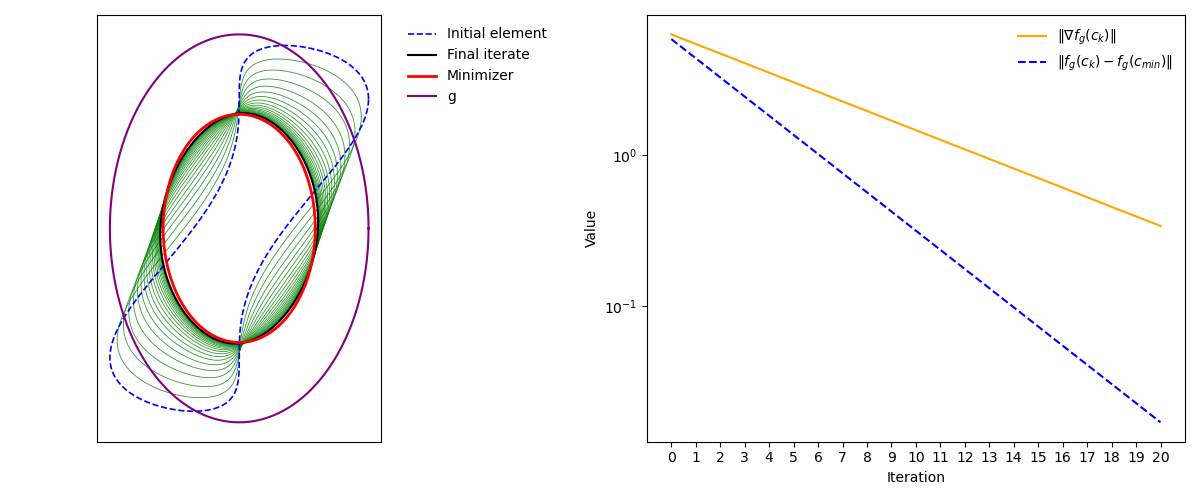

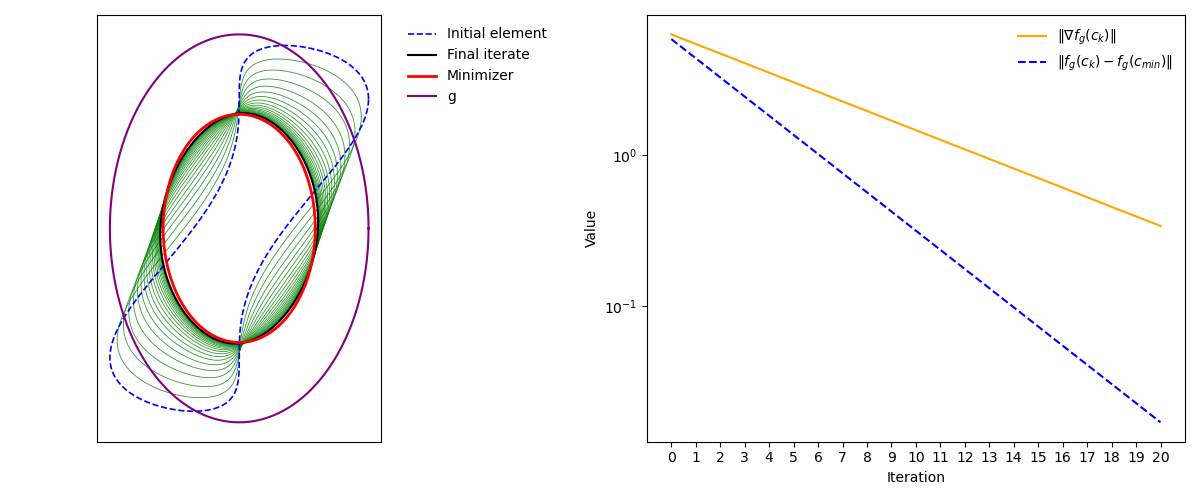

Detailed numerical experiments on RGD are provided, showing convergence consistent with theoretical predictions in the context of shape matching and regularization functionals.

Figure 2: Riemannian gradient descent for M5. Left: evolution of the iterates. Right: functional values and gradient norms over twenty iterations.

Numerical Implications and Applications

Through the adoption of robust and Hesse manifold structures, Riemannian optimization can be extended to handle many natural infinite-dimensional applications:

- Shape analysis using M6- and elastic metrics

- Large deformation diffeomorphic metric mapping (LDDMM)

- Optimal transport on Wasserstein space

- PDE-constrained shape optimization

The results inform algorithm design for spatially continuous optimization, with particular relevance to emerging applications in computational anatomy, geometric morphometrics, and high-dimensional data transformation.

Discussion and Outlook

The paper's framework exposes structural requirements and technical obstacles in extending Riemannian optimization to general weak infinite-dimensional manifolds. Positive-definiteness of the Hessian must be replaced by coercivity; the gradient and Hessian may only be well-defined under additional analytic and geometric constraints, necessitating the new notions of robustness and Hesse structure. The results pave the way for rigorous analysis and practical algorithm development in shape spaces and other infinite-dimensional geometries.

Future directions suggested by this work include:

- Further study of retractions and local additions specific to weak infinite-dimensional contexts

- Generalization of advanced second-order and stochastic optimization schemes

- Application to geometric learning algorithms in functional and shape data analysis

Conclusion

This work establishes a rigorous foundation for optimizing functionals on weak Riemannian manifolds, with explicit structural conditions under which the classical first- and second-order optimality and convergence results generalize. The introduction of Hesse manifolds refines the geometric prerequisites for well-posed Riemannian gradient algorithms, enabling new advances in the analysis and numerical solution of infinite-dimensional optimization problems, particularly those arising in shape analysis, geometric PDEs, and related fields.