- The paper shows that embedding thermodynamic structure in PINNs through variational and symplectic formulations improves invariant recovery and phase-space fidelity by orders of magnitude.

- It compares five PINN variants, showing that structure-informed models notably enhance noise robustness and parameter inference in both conservative and dissipative systems.

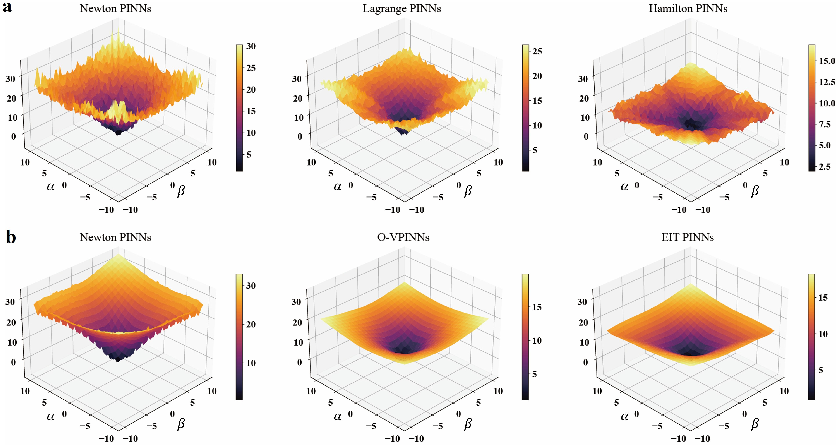

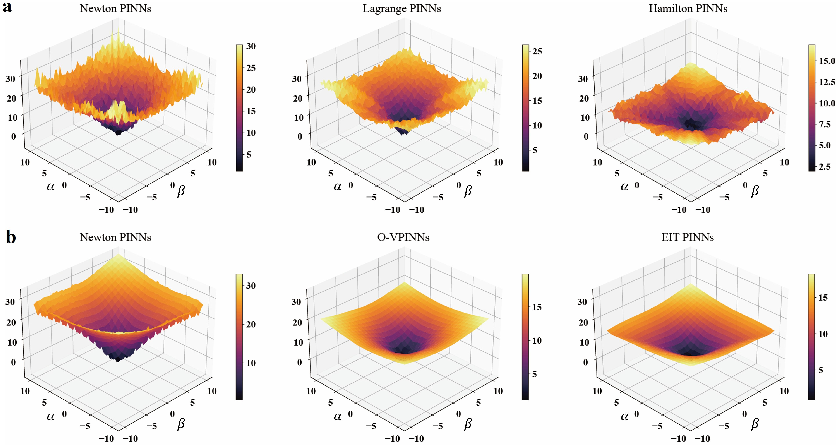

- Loss landscape analysis shows that physically constrained models reach flatter minima, which correlates with better generalization; inverse-problem performance depends more on the global shape of the parameter-reduced loss.

Introduction

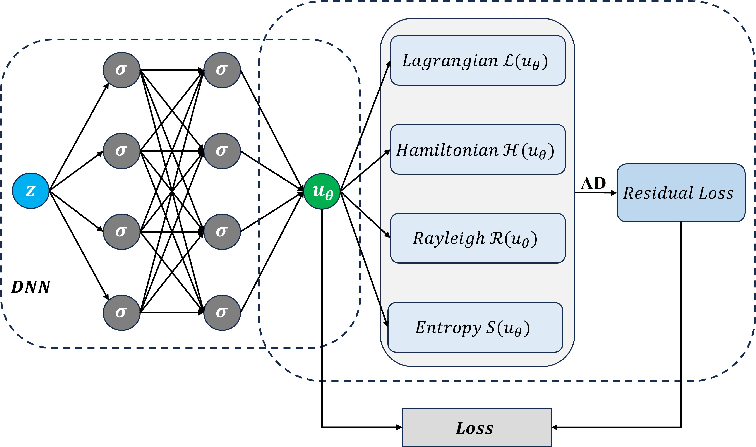

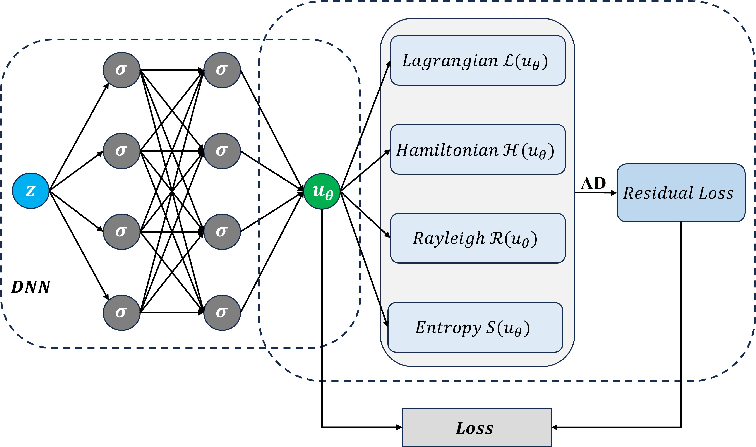

This paper analyzes thermodynamic structure-informed neural networks within the Physics-Informed Neural Networks (PINNs) paradigm. It evaluates how embedding different thermodynamic formalisms affects the accuracy, physical consistency, interpretability, and robustness of PINN solutions to both forward and inverse problems: Newtonian, Lagrangian, and Hamiltonian mechanics for conservative systems, and Onsager's Variational Principle (OVP) and Extended Irreversible Thermodynamics (EIT) for dissipative systems. The authors run numerical experiments on canonical ODE and PDE test cases (ideal mass-spring, simple and double pendulum, damped pendulum, diffusion equation, and Fisher-Kolmogorov equation), providing quantitative and qualitative evidence for how physical structure influences learning outcomes (Figure 1).

Figure 1: Schematic of different classes of thermodynamic structure-informed neural networks and their associated physical formalism, model structure, and loss integration.

PINN Variants: Embedding Physical Structure

The conventional PINN methodology constructs residuals based on the Newtonian paradigm, directly penalizing the violation of differential equations for the system state variable. However, this neglects conserved quantities and system symmetries crucial for generalization and interpretability. The paper therefore contrasts five PINN variants:

- NM-PINN: Newtonian mechanics (direct residual of the governing differential equation)

- LM-PINN: Lagrangian mechanics (Euler-Lagrange structure)

- HM-PINN: Hamiltonian mechanics (canonical equations, energy conservation loss)

- OVP-PINN: Onsager’s Variational Principle (Rayleighian minimization, dissipation structure)

- EIT-PINN: Extended Irreversible Thermodynamics (entropy balance/residual structure)

Each variant employs a tailored loss function that reinforces the governing thermodynamic law as a structural constraint during neural network optimization, with critical differences in their treatment of state variables, parameter inference, and higher-order system properties.
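As a concrete anchor for the three conservative variants, the ideal mass-spring system can be written in each formalism. These are the standard textbook forms in assumed notation (mass $m$, stiffness $k$, displacement $x$, momentum $p$); the exact residuals used in the paper are not stated here and may differ.

$$
\begin{aligned}
\text{Newtonian:}\quad & m\ddot{x} + kx = 0 \\
\text{Lagrangian:}\quad & L(x,\dot{x}) = \tfrac{1}{2} m\dot{x}^2 - \tfrac{1}{2} kx^2, \qquad \frac{d}{dt}\frac{\partial L}{\partial \dot{x}} - \frac{\partial L}{\partial x} = 0 \\
\text{Hamiltonian:}\quad & H(x,p) = \frac{p^2}{2m} + \tfrac{1}{2} kx^2, \qquad \dot{x} = \frac{\partial H}{\partial p} = \frac{p}{m}, \quad \dot{p} = -\frac{\partial H}{\partial x} = -kx
\end{aligned}
$$

All three describe the same trajectory, but the Hamiltonian form exposes $p$ and the invariant $dH/dt = 0$ as quantities that a loss term can penalize directly.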

Conservative Systems: Physical Consistency and Parameter Recovery

Ideal Mass-Spring, Pendulum, Double Pendulum

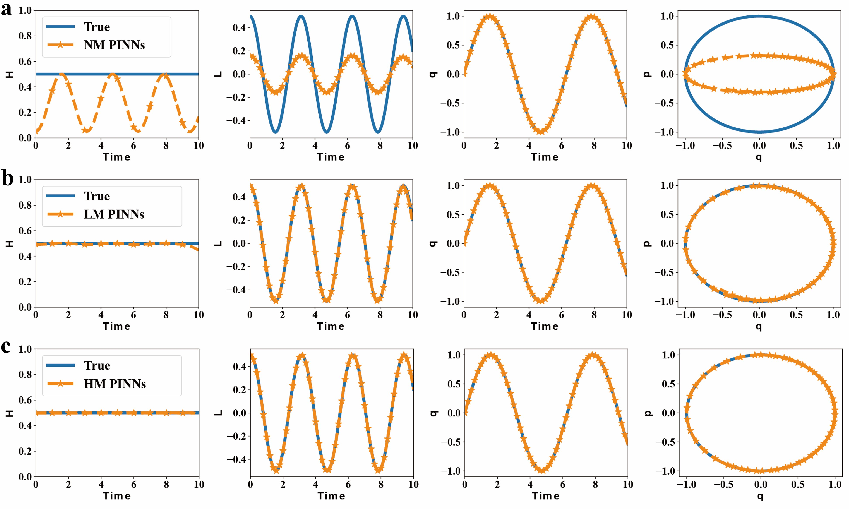

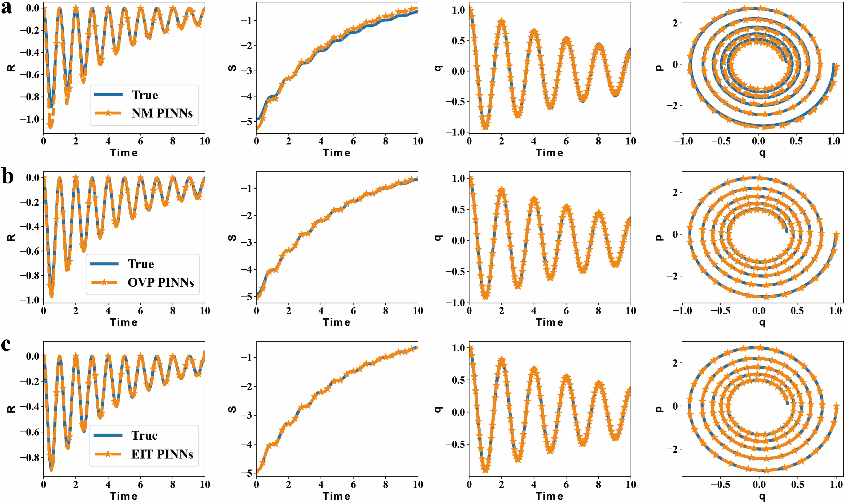

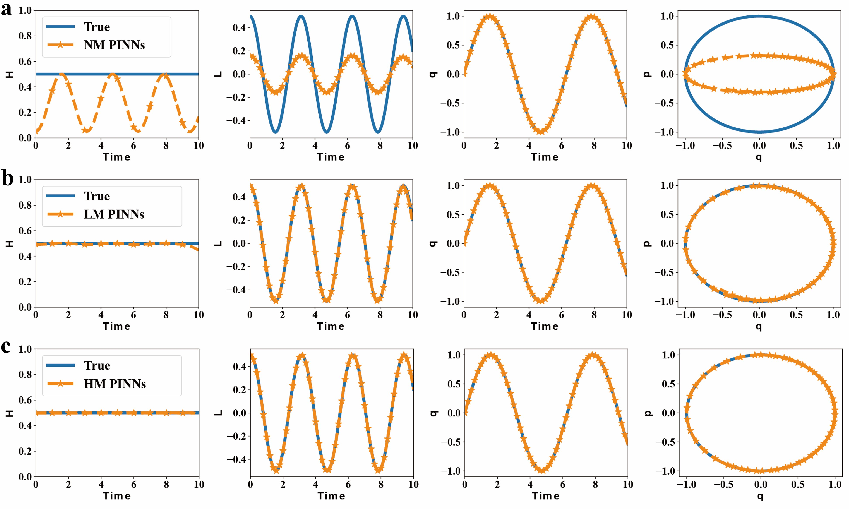

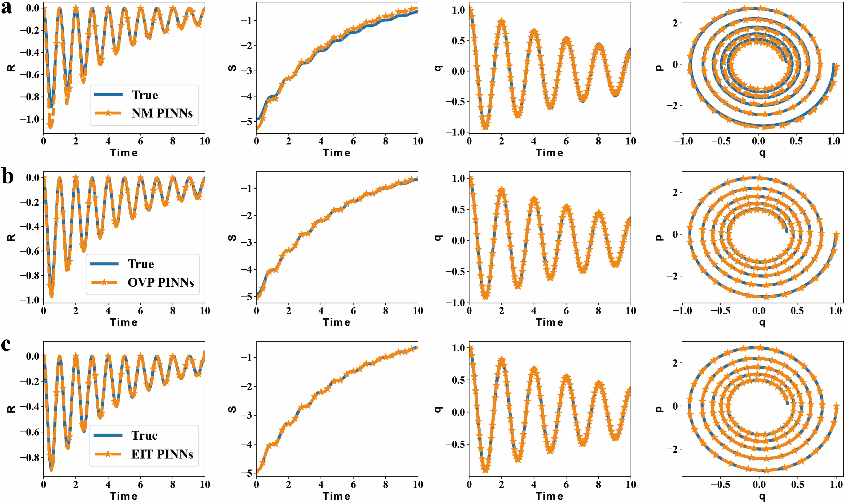

For conservative ODEs, NM-PINNs reliably reconstruct the system trajectory but fail to accurately capture the derived physical quantities: the Hamiltonian and Lagrangian, the phase space structure, and the generalized momentum. LM-PINNs and HM-PINNs, by leveraging variational and symplectic structures, achieve orders-of-magnitude improvements in invariant recovery and phase portrait fidelity.

Figure 2: Comparative forward modeling results for the ideal mass-spring system; only LM-PINNs and HM-PINNs capture the correct dynamical invariants and phase space geometry.
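Why structure preservation matters for invariants can be illustrated outside the PINN setting. The sketch below is not the paper's method: it swaps in two classical time integrators for the mass-spring system (with assumed values $m = k = 1$) and compares how far the Hamiltonian drifts. The function names are hypothetical.

```python
def hamiltonian(x, p, m=1.0, k=1.0):
    """Total energy H = p^2 / (2m) + k x^2 / 2 of the mass-spring system."""
    return p * p / (2.0 * m) + 0.5 * k * x * x


def explicit_euler(x, p, dt, m=1.0, k=1.0):
    """Non-symplectic step: both updates use the old state."""
    return x + dt * p / m, p - dt * k * x


def symplectic_euler(x, p, dt, m=1.0, k=1.0):
    """Symplectic step: update p first, then advance x with the new p."""
    p_new = p - dt * k * x
    x_new = x + dt * p_new / m
    return x_new, p_new


def max_energy_drift(step, n_steps=2000, dt=0.01):
    """Largest |H(t) - H(0)| along a trajectory started at x = 1, p = 0."""
    x, p = 1.0, 0.0
    h0 = hamiltonian(x, p)
    worst = 0.0
    for _ in range(n_steps):
        x, p = step(x, p, dt)
        worst = max(worst, abs(hamiltonian(x, p) - h0))
    return worst


if __name__ == "__main__":
    print("explicit Euler drift:  ", max_energy_drift(explicit_euler))
    print("symplectic Euler drift:", max_energy_drift(symplectic_euler))
```

For $m = k = 1$, explicit Euler multiplies the energy by $(1 + \Delta t^2)$ at every step, so the drift grows without bound, while the symplectic variant keeps it bounded at order $\Delta t$. Both integrators track the trajectory reasonably well over short horizons, which mirrors the observation that trajectory accuracy alone does not guarantee correct invariants.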

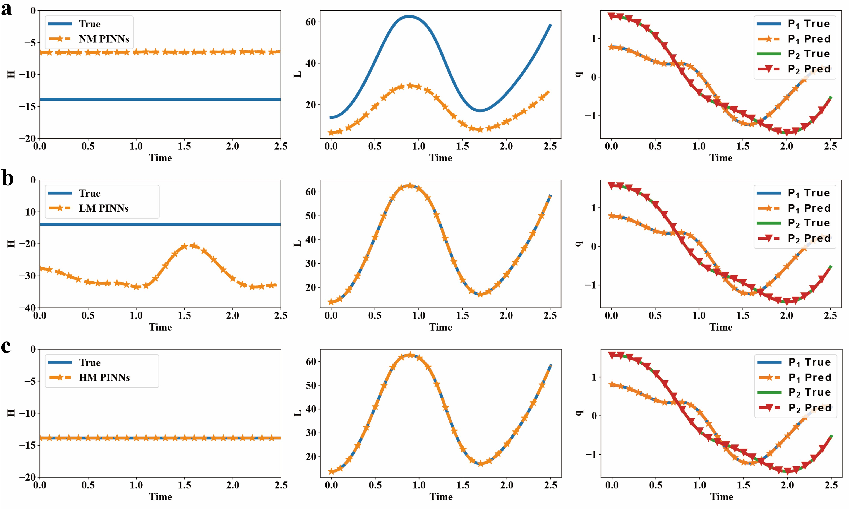

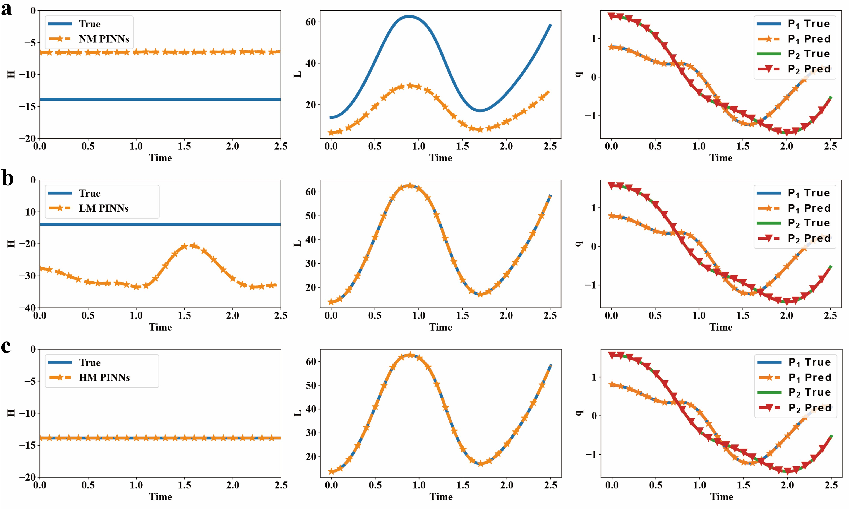

In inverse tasks, LM-PINNs outperform the other approaches in noise robustness for coefficient identification because they explicitly encode the variational principle. HM-PINNs provide the most accurate invariant recovery but are sensitive to noise in the presence of first-order variables. The double pendulum shows that only Hamiltonian-structure-enforcing models consistently recover both the Lagrangian and the Hamiltonian in highly complex dynamics (Figure 3).

Figure 3: In the double pendulum system, only HM-PINNs provide consistent recovery of both trajectory and conserved quantities; NM-PINNs fail to capture the correct invariants.

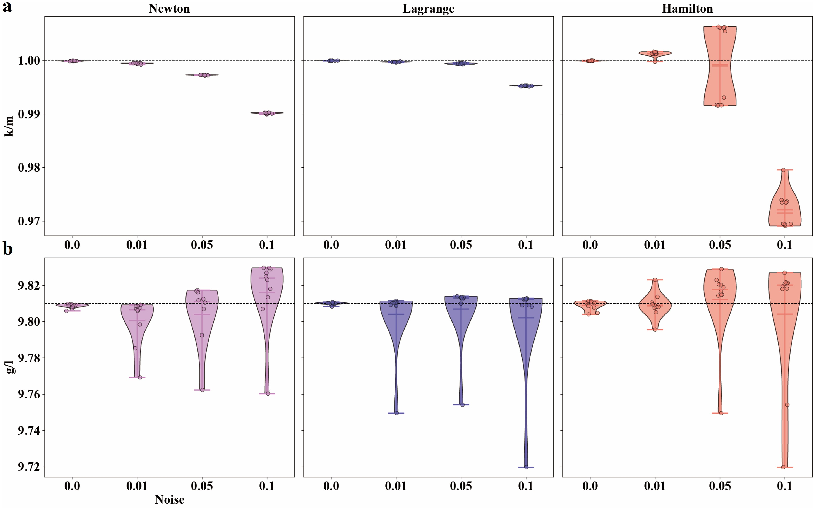

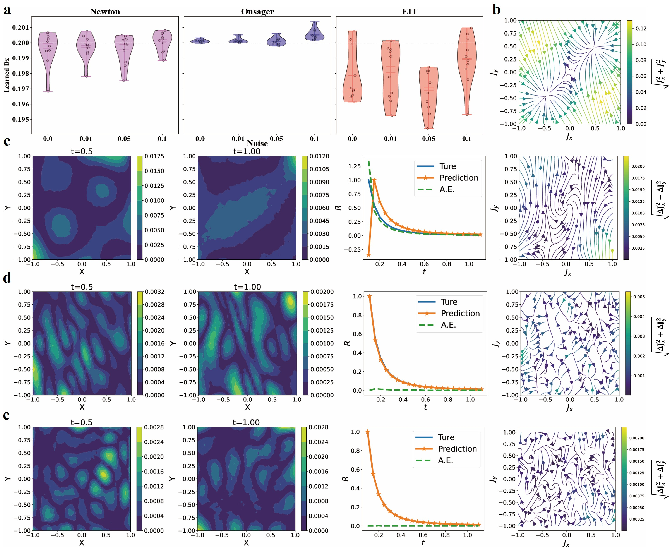

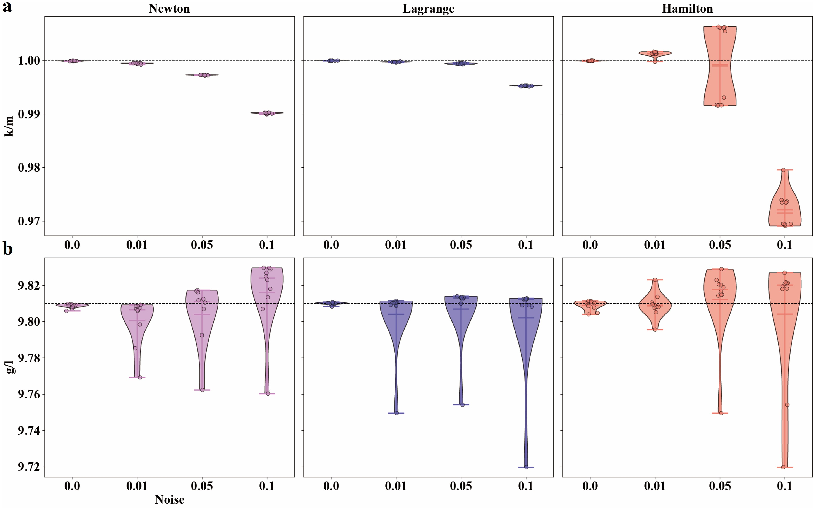

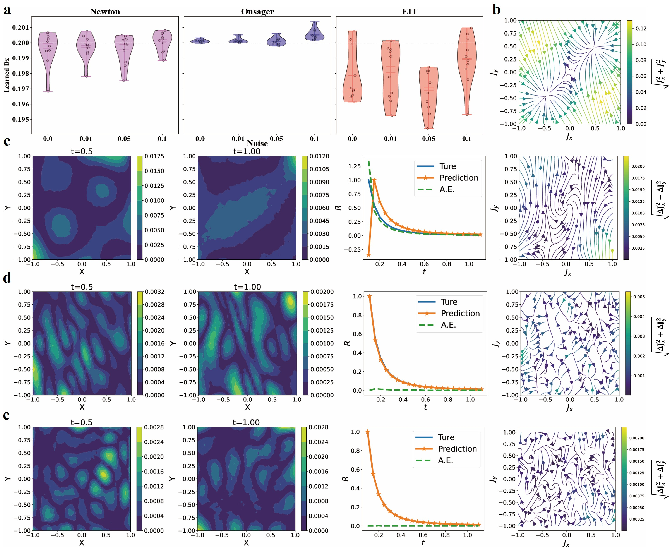

Noise Robustness in Inverse Problems

Repeated trials with varied measurement noise highlight that while Newtonian models can infer physical parameters in ideal settings, their stability and reliability degrade rapidly in realistic, noisy environments. Structure-enriched variants yield superior mean error and significantly lower variance, especially LM-PINNs in ODEs and OVP-PINNs for dissipative PDEs (Figure 4).

Figure 4: Distribution of inferred parameters for mass-spring and pendulum systems at increasing noise levels; structure-preserving PINNs mitigate noise sensitivity.
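The repeated-trial protocol can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's experiment: the estimator is a plain least-squares fit of the Newtonian residual $\ddot{x} + kx = 0$ (with $m = 1$) using finite differences rather than a neural network, and `infer_stiffness` and `noise_study` are hypothetical names.

```python
import math
import random
import statistics


def infer_stiffness(ts, xs):
    """Least-squares k from the residual x'' + k x = 0 (m = 1 assumed)."""
    dt = ts[1] - ts[0]
    num = 0.0
    den = 0.0
    for i in range(1, len(xs) - 1):
        # Central second difference approximates the acceleration.
        acc = (xs[i + 1] - 2.0 * xs[i] + xs[i - 1]) / dt**2
        num += -acc * xs[i]
        den += xs[i] * xs[i]
    return num / den


def noise_study(sigma, n_trials=30, k_true=1.0, seed=0):
    """Mean and standard deviation of the inferred k over repeated noisy trials."""
    rng = random.Random(seed)
    ts = [0.05 * i for i in range(201)]
    omega = math.sqrt(k_true)
    estimates = []
    for _ in range(n_trials):
        xs = [math.cos(omega * t) + rng.gauss(0.0, sigma) for t in ts]
        estimates.append(infer_stiffness(ts, xs))
    return statistics.mean(estimates), statistics.stdev(estimates)


if __name__ == "__main__":
    for sigma in (1e-4, 1e-3, 1e-2):
        mean_k, std_k = noise_study(sigma)
        print(f"sigma={sigma:g}  mean k={mean_k:.4f}  std k={std_k:.4f}")
```

Because the second difference divides measurement noise by $\Delta t^2$, the spread of the estimate grows quickly with the noise level. This is one plausible reason a residual built on second derivatives of noisy data is fragile, though the paper's own explanation may differ.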

Dissipative Systems: Thermodynamic Quantities and Entropy Structure

Damped Pendulum and Diffusion Equation

For dissipative systems, NM-PINNs reconstruct the physical trajectory but consistently misestimate the Rayleighian, entropy, and entropy flux. OVP-PINNs, which constrain the Rayleighian minimization condition, and EIT-PINNs, which enforce local entropy balance and production, recover these thermodynamic fields with high fidelity.

Figure 5: Forward modeling of the damped pendulum; OVP-PINNs and EIT-PINNs capture physically consistent Rayleighian and entropy evolutions unlike NM-PINNs.
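For reference, the two dissipative formalisms can be stated in generic textbook form. This is a sketch in assumed notation (state $q$, free energy $F$, friction coefficient $\zeta$, dissipation function $\Phi$, entropy density $s$, entropy flux $J_s$, entropy production $\sigma$), written for an overdamped single degree of freedom. The paper's damped pendulum includes inertia and may use a different form.

$$
\mathcal{R}(\dot{q}) = \underbrace{\tfrac{1}{2}\zeta\dot{q}^2}_{\Phi} + \underbrace{\frac{\partial F}{\partial q}\dot{q}}_{\dot{F}}, \qquad \frac{\partial \mathcal{R}}{\partial \dot{q}} = 0 \;\Rightarrow\; \zeta\dot{q} = -\frac{\partial F}{\partial q}
$$

$$
\frac{\partial s}{\partial t} + \nabla \cdot J_s = \sigma, \qquad \sigma \ge 0
$$

The first line is the Rayleighian minimization condition that an OVP-style loss can enforce; the second is the local entropy balance that an EIT-style loss can enforce, with non-negative production as the second-law constraint.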

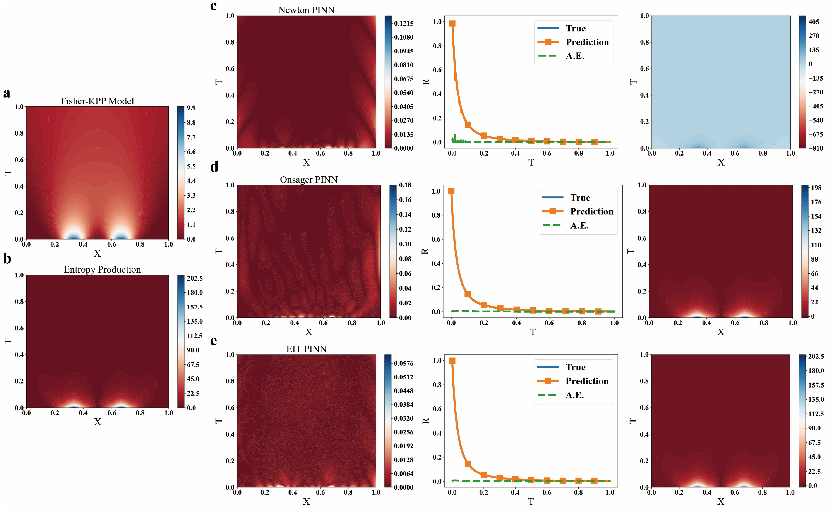

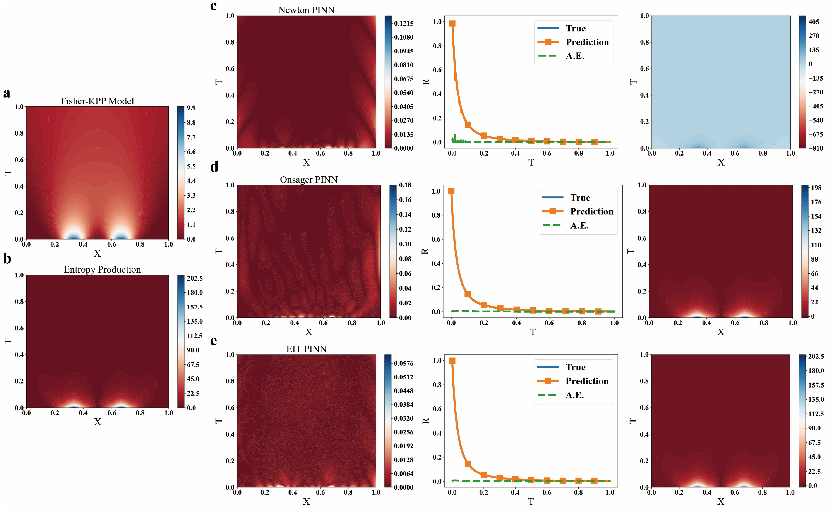

In PDE systems (diffusion and Fisher-Kolmogorov equations), these advantages carry over to OVP-PINNs and EIT-PINNs. EIT-PINNs in particular excel at learning entropy flux and local entropy production geometries (Figures 6, 7), eliminating coherent error fields and ensuring data-consistent thermodynamic structure.

Figure 6: Diffusion equation. EIT-PINNs yield the lowest pointwise error and smallest entropy flux discrepancy across space-time.

Figure 7: Fisher-Kolmogorov equation. EIT-PINNs produce accurate solutions and localized entropy production rates compared to OVP- and NM-style PINNs.
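The thermodynamic quantities discussed for the diffusion equation can be checked numerically on a reference solution. The sketch below is an assumption-laden illustration rather than the paper's setup: it uses an explicit finite-difference solver with periodic boundaries, the entropy density $s = -c \ln c$, and the corresponding production rate $\sigma = D\,(\partial_x c)^2 / c$. All function names and parameter values are hypothetical.

```python
import math


def diffusion_step(c, r):
    """One explicit FTCS step, periodic boundaries; needs r = D*dt/dx**2 <= 0.5."""
    n = len(c)
    return [c[i] + r * (c[(i + 1) % n] - 2.0 * c[i] + c[i - 1]) for i in range(n)]


def total_entropy(c, dx):
    """Discrete total entropy S = -sum(c ln c) dx; requires c > 0 everywhere."""
    return -sum(ci * math.log(ci) for ci in c) * dx


def entropy_production(c, dx, D):
    """Pointwise sigma = D * (dc/dx)^2 / c using central differences."""
    n = len(c)
    sigma = []
    for i in range(n):
        grad = (c[(i + 1) % n] - c[i - 1]) / (2.0 * dx)
        sigma.append(D * grad * grad / c[i])
    return sigma


# Assumed setup: 64 grid points on [0, 1), D = 0.1, strictly positive profile.
n, D, r = 64, 0.1, 0.25
dx = 1.0 / n
c = [1.0 + 0.5 * math.sin(2.0 * math.pi * i * dx) for i in range(n)]

entropies = [total_entropy(c, dx)]
for _ in range(200):
    c = diffusion_step(c, r)
    entropies.append(total_entropy(c, dx))

sigma = entropy_production(c, dx, D)
```

A learned solution whose recovered entropy decreases in time, or whose production rate turns negative somewhere, would violate the second law even if its pointwise error in $c$ were small. That is the kind of inconsistency a structure-informed loss is meant to rule out.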

Quantitative analysis of the learned solutions reveals that structure-informed models produce an order-of-magnitude reduction in RMSE for both state recovery and physical field estimation.

Inverse Problem Stability

Parameter inference for dissipative dynamics confirms the superior stability of OVP-PINNs and EIT-PINNs, especially under high noise. These models maintain unbiased estimates with minimal variance in diffusion coefficient and damping parameter recovery tasks.

Loss Landscape Analysis

Analysis of the high-dimensional loss surfaces reveals systematic differences: structure-informed PINNs, especially HM-PINNs and EIT-PINNs, consistently exhibit flatter and more benign loss landscapes (Figure 8), evidenced by Frobenius norms of the Hessian that are up to two orders of magnitude lower. This correlates with improved generalization, greater resistance to suboptimal minima, and higher reproducibility across optimization trials. However, the authors note that inverse-problem performance depends more on the global shape of the parameter-reduced loss than on the flatness of the local minimum.

Figure 8: Loss landscape visualizations. Structure-preserving PINNs yield broader minima, which correlates with improved generalization and thermodynamic consistency.
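The flatness measure mentioned above, the Frobenius norm of the loss Hessian, can be sketched with finite differences. This is a minimal illustration on two toy quadratic losses, not the paper's networks or its Hessian computation; `hessian_frobenius_norm`, `sharp_loss`, and `flat_loss` are hypothetical names.

```python
import math


def hessian_frobenius_norm(loss, theta, h=1e-3):
    """Frobenius norm of the Hessian of `loss` at `theta`, by central differences."""
    n = len(theta)

    def shifted(i, si, j, sj):
        # Evaluate the loss with coordinates i and j perturbed by +/- h.
        t = list(theta)
        t[i] += si * h
        t[j] += sj * h
        return loss(t)

    total = 0.0
    for i in range(n):
        for j in range(n):
            h_ij = (
                shifted(i, 1, j, 1)
                - shifted(i, 1, j, -1)
                - shifted(i, -1, j, 1)
                + shifted(i, -1, j, -1)
            ) / (4.0 * h * h)
            total += h_ij * h_ij
    return math.sqrt(total)


def sharp_loss(theta):
    """Toy loss with curvature 10 in every direction."""
    return 0.5 * 10.0 * sum(t * t for t in theta)


def flat_loss(theta):
    """Toy loss with curvature 0.1 in every direction."""
    return 0.5 * 0.1 * sum(t * t for t in theta)


if __name__ == "__main__":
    minimum = [0.0, 0.0]
    sharp = hessian_frobenius_norm(sharp_loss, minimum)
    flat = hessian_frobenius_norm(flat_loss, minimum)
    print("sharp:", sharp, "flat:", flat, "ratio:", sharp / flat)
```

The two toy losses differ in curvature by a factor of 100, so their Hessian norms differ by two orders of magnitude, which is the scale of the gap reported between structure-informed and Newtonian PINNs. For real networks the full Hessian is too large for this double loop, and Hessian-vector products from automatic differentiation are the usual substitute.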

Implications and Outlook

The comparison demonstrates that thermodynamic structure enforcement in PINNs is not merely a matter of physical interpretability; it is essential for parameter robustness, invariant recovery, and reliable generalization in both forward and inverse settings. The integration of variational, symplectic, and entropy-based residuals directly constrains the solution geometry, stabilizes training, and substantially improves the ability to learn physically meaningful quantities.

The benchmarking across ODE and PDE systems provides a roadmap for the further development of structure-preserving PINNs. In particular, these insights motivate the systematic embedding of more general nonequilibrium frameworks (GENERIC, Conservation-Dissipation Formalism) for highly complex multiphysics systems and their hybridization with operator learning.

Conclusion

Thermodynamic structure-informed neural networks, by explicitly encoding physical invariants, energy/entropy balance, and dissipation structure, far outperform generic PINN architectures both qualitatively and quantitatively. The empirical and theoretical analysis provided in this paper constitutes a robust foundation for next-generation scientific machine learning models, where physical law and data-driven inference are deeply integrated for reliable forward and inverse modeling.