- The paper introduces a novel framework integrating contraction theory and interval analysis to certify neural network controllers with provable stability guarantees.

- It leverages an asymmetric matrix formulation and GPU-parallelized interval checks to reduce conservatism and scale verification to high-dimensional systems.

- Empirical evaluations on a 10-dimensional quadrotor demonstrate enhanced tracking performance and efficient certification across complex state regions.

Certified Learning of Neural Network Controllers via Contraction and Interval Analysis

Introduction

The paper "Learning Certified Neural Network Controllers Using Contraction and Interval Analysis" (2603.28011) introduces a formal framework for synthesizing neural feedback controllers with provable closed-loop contraction guarantees. The approach addresses a persistent challenge in nonlinear control: using the expressivity of neural networks for highly nonlinear plants while still providing mathematically rigorous certification of system behavior. Prior verification methods for neural control, especially those based on Lyapunov and barrier functions, are hindered by sample complexity, conservatism from interval over-approximations, and poor scalability to higher-dimensional systems. In contrast, this work combines an asymmetric matrix formulation of contraction theory with interval analysis techniques that address the dependency problem, enabling tractable, parallelizable verification over large contraction regions.

Theoretical Foundations

Riemannian Contraction and Certification

The central theoretical contribution is a reformulation of the Riemannian contraction condition that exploits an asymmetric contraction matrix $G$, in contrast to the symmetric matrix $S$ of the standard LMI condition:

$$S(t,x) := M(x)\frac{\partial f}{\partial x}(t,x) + \left(\frac{\partial f}{\partial x}(t,x)\right)^T M(x) + \partial_{f(t,x)} M(x) + 2cM(x)$$

Here, $M(x)$ is a smooth, positive-definite Riemannian metric. Directly certifying negative definiteness of $S$ via interval over-approximations incurs significant dependency-related overestimation, so the method instead introduces $G$:

$$G(t,x) = \Theta(x)^T\left[\partial_{f(t,x)}\Theta(x) + \Theta(x)\left(\frac{\partial f}{\partial x}(t,x) + cI\right)\right]$$

with $M(x) = \Theta(x)^T \Theta(x)$. By bounding the logarithmic norm $\mu_2(G)$ rather than the eigenvalues of $S$, the approach reduces conservatism in the verification pipeline, leading to larger certifiable contraction regions.
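
The relation between the two conditions can be written out in a few lines. The derivation below is a sketch that assumes $\partial_f$ denotes the directional derivative along $f$ and uses the shorthand $J := \partial f / \partial x$, which is introduced here and is not notation from the paper:

$$
\begin{aligned}
G + G^T &= \Theta^T \partial_f \Theta + (\partial_f \Theta)^T \Theta + \Theta^T \Theta\,(J + cI) + (J + cI)^T\, \Theta^T \Theta \\
&= \partial_f M + M J + J^T M + 2cM = S, \\
\mu_2(G) &= \lambda_{\max}\!\left(\tfrac{1}{2}\left(G + G^T\right)\right) = \tfrac{1}{2}\,\lambda_{\max}(S).
\end{aligned}
$$

The second line uses $\partial_f M = (\partial_f \Theta)^T \Theta + \Theta^T \partial_f \Theta$. Under these assumptions, $\mu_2(G) \le 0$ holds exactly when $S \preceq 0$, so the two tests agree at each point; any reduction in conservatism would then come from how the interval over-approximation of $G$ behaves, not from a weaker condition.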

Interval Analysis and GPU-Parallelized Certification

A core technical advancement is an interval analysis scheme that employs $2^n$ GPU-parallelized corner checks (where $n$ is the state dimension) to verify the contraction condition across an entire interval subset of the state space. By exploiting the structure of the logarithmic norm and a key result from interval matrix theory [Rohn 1994], the method computes the maximum over all possible sign patterns on the diagonal of the interval hull, guaranteeing coverage of the worst case for contraction violations. This avoids the far steeper scaling of naive sampling, making the method applicable in dimensions previously considered intractable.
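
The corner check described above can be illustrated with a small NumPy sketch. It is not the paper's implementation: the function names and the example bounds `G_lo`, `G_hi` are hypothetical, and it assumes the interval hull of the contraction matrix is given as entrywise lower and upper bounds. It relies on the standard vertex characterization for symmetric interval matrices, in which the largest eigenvalue is attained at a matrix of the form center + diag(z) · radius · diag(z) for some sign vector z.

```python
import itertools
import numpy as np

def log_norm_2(G):
    """Logarithmic 2-norm: largest eigenvalue of the symmetric part of G."""
    G = np.asarray(G, dtype=float)
    return float(np.linalg.eigvalsh((G + G.T) / 2.0)[-1])

def max_log_norm_over_interval(G_lo, G_hi):
    """Worst-case log norm over all G with G_lo <= G <= G_hi entrywise."""
    G_lo = np.asarray(G_lo, dtype=float)
    G_hi = np.asarray(G_hi, dtype=float)
    center = (G_lo + G_hi) / 2.0
    radius = (G_hi - G_lo) / 2.0
    # The symmetric parts of all members form a symmetric interval matrix.
    sym_center = (center + center.T) / 2.0
    sym_radius = (radius + radius.T) / 2.0
    n = sym_center.shape[0]
    worst = -np.inf
    # 2^n sign vectors; z and -z give the same corner, so half are redundant.
    for signs in itertools.product((-1.0, 1.0), repeat=n):
        z = np.array(signs)
        # diag(z) @ sym_radius @ diag(z) has entries z_i * z_j * radius_ij.
        corner = sym_center + np.outer(z, z) * sym_radius
        worst = max(worst, float(np.linalg.eigvalsh(corner)[-1]))
    return worst

# Hypothetical interval bounds on a 3x3 contraction matrix.
G_lo = np.array([[-3.0, -0.5, 0.0], [0.0, -2.5, -0.5], [-0.2, 0.0, -3.0]])
G_hi = np.array([[-2.0, 0.5, 0.4], [0.6, -2.0, 0.5], [0.2, 0.4, -2.5]])
bound = max_log_norm_over_interval(G_lo, G_hi)
print("worst-case log norm:", bound)
print("certified" if bound < 0 else "not certified")
```

Each corner evaluation is independent of the others, which is what makes the check easy to batch on a GPU. A negative `bound` certifies the log-norm condition for every matrix inside the interval, not only for the sampled ones.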

Algorithmic Framework

The certification-training framework jointly optimizes two neural networks: the control policy and the upper-triangular metric factor $\Theta(x)$ defining $M(x) = \Theta(x)^T \Theta(x)$. The algorithm (see Algorithm 1 in the paper) utilizes JAX-based automatic differentiation, interval bound propagation (via immrax), and CROWN-style linear bound propagation, with a composite loss that ensures:

- The interval hull of $G$ satisfies the contraction logarithmic norm condition uniformly.

- $M(x)$ remains strictly positive-definite with prescribed bounds.

- Parallelization on the GPU accelerates the logarithmic norm maximizations.

This integration enables end-to-end differentiable training with certified contraction throughout the underlying set.
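
To make the interval bound propagation step concrete, here is a minimal sketch of propagating a state box through a small feedforward policy. It is written in plain NumPy and does not use the immrax API; the network sizes, random weights, `tanh` activation, and function names are all assumptions for illustration. A sound verifier would additionally need outward rounding of the floating-point bounds, which this sketch omits.

```python
import numpy as np

def affine_bounds(lo, hi, W, b):
    """Exact interval image of the box [lo, hi] under x -> W @ x + b."""
    center = (lo + hi) / 2.0
    radius = (hi - lo) / 2.0
    out_center = W @ center + b
    out_radius = np.abs(W) @ radius
    return out_center - out_radius, out_center + out_radius

def policy(x, layers):
    """Evaluate the (hypothetical) policy at a single state x."""
    for i, (W, b) in enumerate(layers):
        x = W @ x + b
        if i < len(layers) - 1:
            x = np.tanh(x)
    return x

def policy_bounds(lo, hi, layers):
    """Propagate a box through affine layers with tanh in between."""
    for i, (W, b) in enumerate(layers):
        lo, hi = affine_bounds(lo, hi, W, b)
        if i < len(layers) - 1:
            # tanh is monotone, so applying it to the endpoints is enough.
            lo, hi = np.tanh(lo), np.tanh(hi)
    return lo, hi

# Hypothetical 3-state, 2-input policy with one hidden layer of width 8.
rng = np.random.default_rng(0)
layers = [(rng.normal(size=(8, 3)), rng.normal(size=8)),
          (rng.normal(size=(2, 8)), rng.normal(size=2))]
x_lo = np.array([-0.1, -0.2, 0.0])
x_hi = np.array([0.1, 0.2, 0.3])
u_lo, u_hi = policy_bounds(x_lo, x_hi, layers)
print("control bounds:", u_lo, u_hi)
```

The bounds enclose every control value the policy can produce on the box, but they are generally loose because each layer treats its inputs as independent. CROWN-style linear bounds are one way to tighten them.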

Explicit Tracking Controller Synthesis

For general affine-in-control systems, the framework extends to the design of explicit tracking controllers under the strong constant curvature (Killing field) condition. If the control vector field and the metric $M(x)$ satisfy this condition, then a simple feedback form exponentially stabilizes the system along an arbitrary feasible reference in the contraction region. Notably, this controller is explicit: it requires only two policy evaluations per timestep and avoids the online geodesic computation or state-space augmentation prevalent in previous contraction-based tracking algorithms.

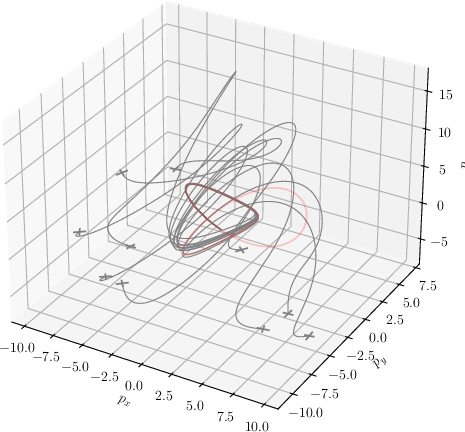

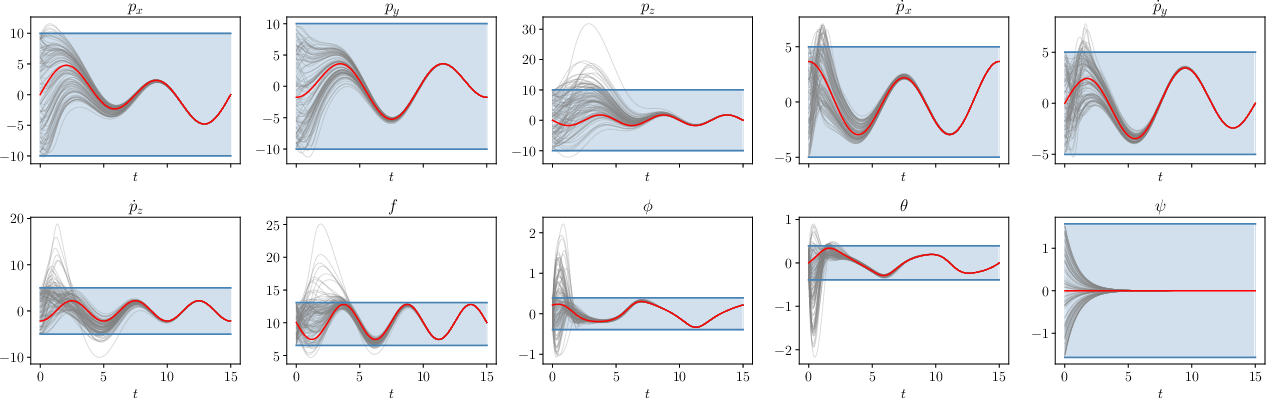

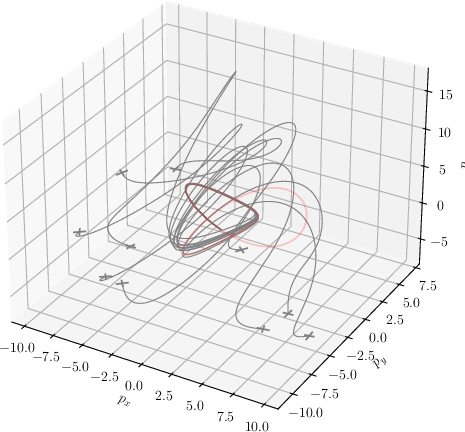

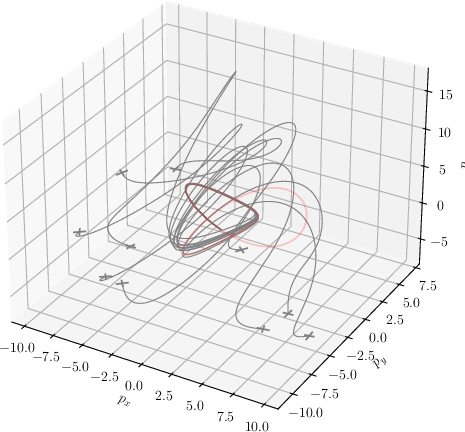

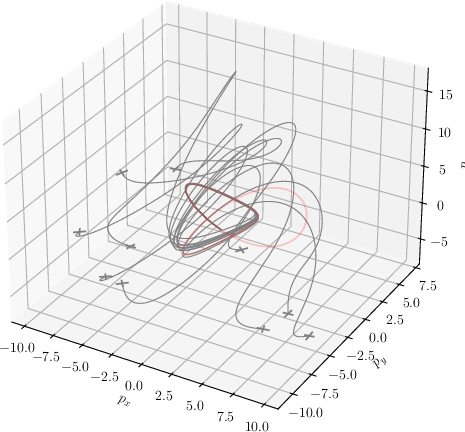

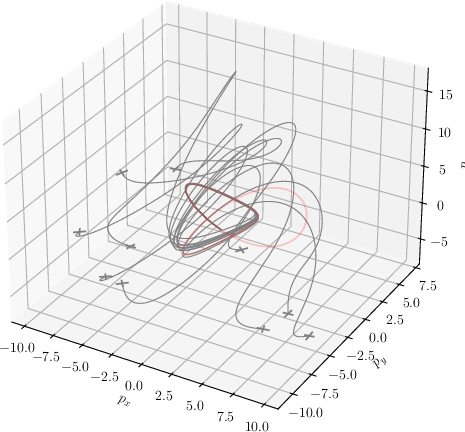

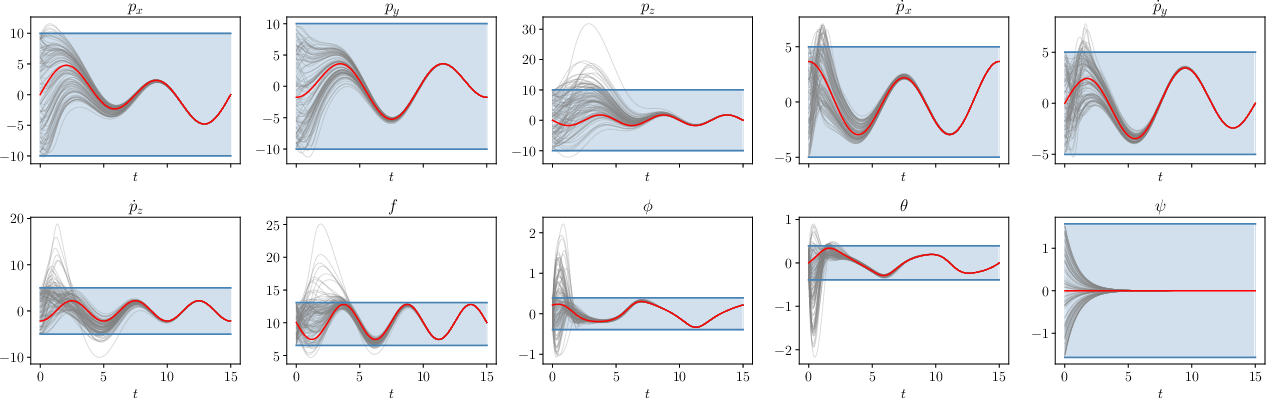

Empirical Evaluation: 10-Dimensional Quadrotor

The effectiveness and scalability of the approach are demonstrated on a nonlinear 10-state quadrotor system, a canonical benchmark in nonlinear control. The joint neural policy and metric are successfully trained and certified (in under 10 minutes of training) for a region encompassing realistic bounds on translation, attitude, and thrust.

Key empirical findings include:

- Sample Complexity Reduction: The certifiable contraction region is substantially larger than what Lipschitz or sample-based certification approaches achieve, and those approaches become infeasible in such high dimensions.

- Strong Empirical Tracking: The neural controller, coupled with the certified metric, tracks complex trajectories (e.g., figure-eight, helix, trefoil), consistently regulating state tracking errors and returning to the contraction region even after briefly leaving it due to system nonlinearities or initial condition perturbations.

- Efficient Verification: All $2^{10}$ (1024) corner cases for contraction verification are dispatched in parallel, confirming the practical tractability of the analysis.
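
The batched dispatch of all 1024 corners can be sketched as a single stacked eigenvalue call. The code below is an illustration in NumPy, not the paper's GPU code: `sym_center` and `sym_radius` are random placeholders standing in for the center and radius of the symmetric part of an interval hull, not quadrotor data.

```python
import itertools
import numpy as np

n = 10
# All 2^10 sign vectors as one array of shape (1024, 10).
signs = np.array(list(itertools.product((-1.0, 1.0), repeat=n)))

# Placeholder interval data (hypothetical, chosen to be negative definite).
rng = np.random.default_rng(0)
A = rng.normal(size=(n, n))
sym_center = -(A @ A.T) / n - 2.0 * np.eye(n)
R = np.abs(rng.normal(size=(n, n)))
sym_radius = 0.01 * (R + R.T) / 2.0

# corners[k] = sym_center + diag(z_k) @ sym_radius @ diag(z_k), built by broadcasting.
corners = sym_center + signs[:, :, None] * sym_radius * signs[:, None, :]

# eigvalsh accepts a stack of matrices and returns ascending eigenvalues per matrix.
worst = float(np.linalg.eigvalsh(corners)[:, -1].max())
print(corners.shape, "worst-case eigenvalue:", worst)
```

Because the corners form one array of shape `(1024, 10, 10)`, the same pattern maps naturally onto a vectorized GPU call in an array framework such as JAX.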

Figure 1: Verified contraction and stable trajectory tracking in a 10-state quadrotor; each subplot demonstrates contraction behavior from random initial conditions under the certified policy for distinct reference trajectories.

Practical and Theoretical Implications

This framework offers a tractable certification strategy for learning-based controllers in safety-critical high-dimensional systems. By rigorously verifying contraction over nontrivial subsets of the state space and by reducing conservatism from dependency management, the method closes a critical gap in uniting expressive policy learning with formal guarantees. The explicit tracking form (available when the Killing field condition is satisfied) makes the approach particularly practical, mitigating online computational burdens.

Theoretically, the asymmetric contraction condition and the interval analysis technique point toward more general applications in nonlinear stability verification, particularly for scalable synthesis of neural Lyapunov and barrier certificates. The explicit feedback controllers bridge the gap between neural network approximations and classical geometric control synthesis grounded in contraction theory.

Future Directions

Potential extensions of this work include:

- Forward Invariance: Developing methods for ensuring forward invariance of the certified region to guarantee robust safety under bounded uncertainties.

- Saturation and Realizability: Integrating bounded control input constraints for deployment on real platforms.

- Generalized Metrics: Extending certification and synthesis to Finsler-Lyapunov functions and non-Euclidean (e.g., polyhedral) norms for systems where Riemannian structures are restrictive.

- Modular/Hierarchical Control: Exploiting compositional properties of contraction for modular-certified control design in large-scale systems.

Conclusion

The paper offers a significant advance in certified control synthesis for nonlinear systems with neural network policies. By introducing an efficiently verifiable, tractable, and provably less conservative criterion for contraction certification, the methodology enables the practical deployment of deep learning controllers in environments demanding strong theoretical safety and stability guarantees. The integration of GPU-parallelizable interval analysis and explicit controller synthesis frameworks is likely to catalyze further research in scalable, certified learning for complex dynamical systems.