- The paper introduces VulGNN, a lightweight graph neural network framework that reduces computational overhead by 100x compared to LLM-based detectors while achieving competitive accuracy.

- It employs attention-based graph convolutions and sinusoidal positional encodings to capture code structure, reaching 93.17% accuracy on unseen projects with a higher F1-score than the ReVeal GNN baseline.

- Results show that adding even modest amounts of real-world training data substantially boosts performance; combined with its small footprint, this makes VulGNN a practical candidate for integration into CI/CD pipelines.

Lightweight Graph Neural Network for Software Vulnerability Detection: The VulGNN Framework

Introduction: Motivation and Context

The detection of software vulnerabilities is a critical challenge in secure software development. Deep learning-based detectors, especially those built on LLMs, are effective but incur high inference and retraining costs, which hinders their integration into resource-constrained environments such as CI/CD pipelines. The paper "Software Vulnerability Detection Using a Lightweight Graph Neural Network" (2603.29216) introduces VulGNN, a lightweight graph-based alternative that exploits the inherent graph structure of code to provide an efficient, highly customizable, and competitive vulnerability detector.

VulGNN Architecture and Design Principles

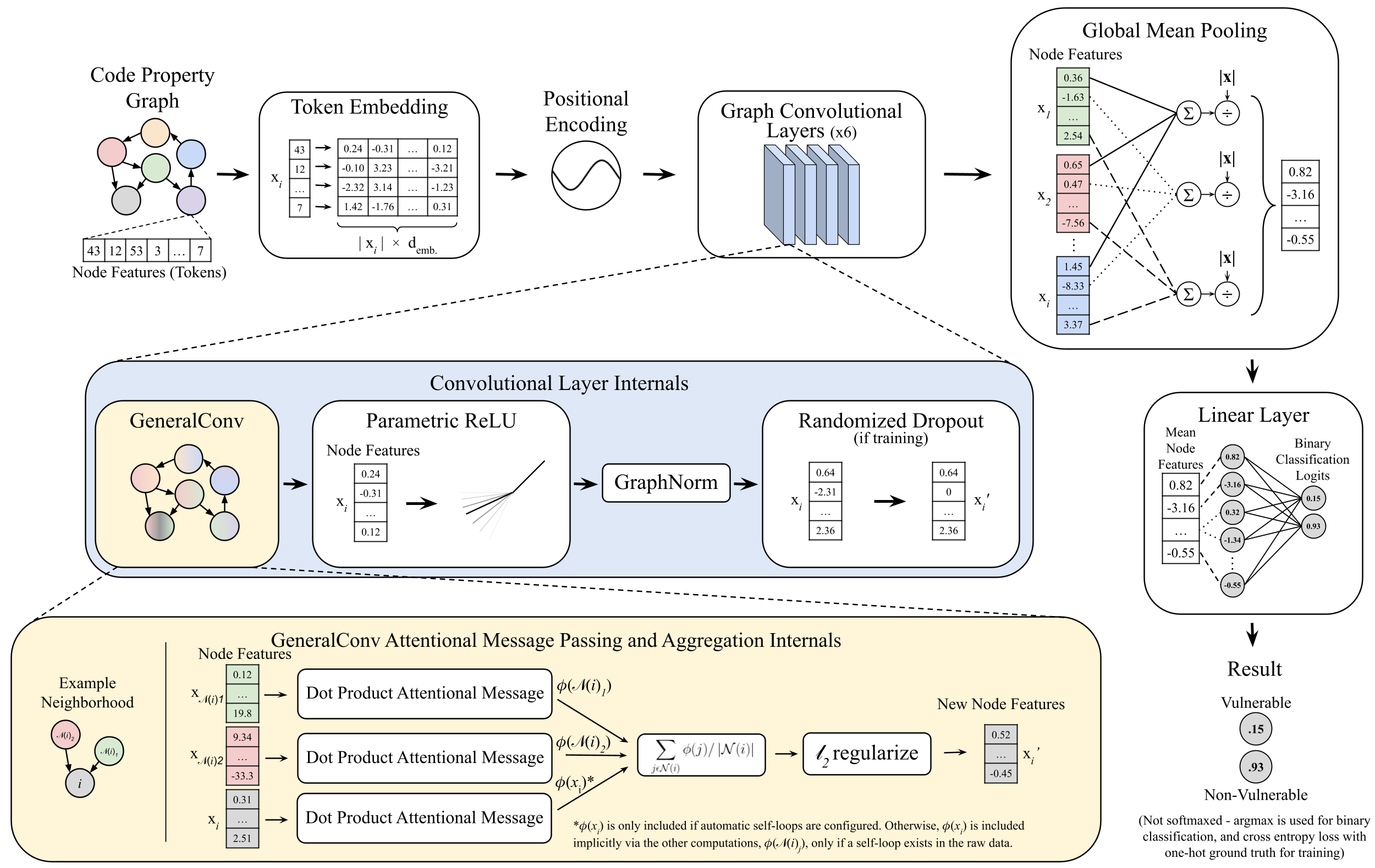

VulGNN is designed for whole-graph binary classification, specifically of Code Property Graphs (CPGs) derived from program source code. Its architecture combines attention-based graph convolutions with sinusoidal positional encodings to capture the structure of the code.
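The summary credits VulGNN with attention-based graph convolutions and sinusoidal positional encodings feeding a whole-graph binary classifier. The dependency-free sketch below shows one way these pieces could fit together: Transformer-style sinusoidal encodings added to node features, a single-head GAT-style attention layer (scores over each node's in-neighbours plus a self-loop, normalized with a softmax), and a mean-pooling readout that yields one logit per graph. The layer form, the use of a node's index as its position, the mean readout, and all names (`attention_conv`, `graph_logit`, and so on) are assumptions for illustration; the paper's actual operators, encoding scheme, and dimensions may differ, and the node features here are random rather than derived from a real CPG.

```python
import math
import random

Vector = list[float]


def sinusoidal_encoding(position: int, dim: int) -> Vector:
    """Transformer-style encoding: sin on even indices, cos on odd indices."""
    enc = []
    for i in range(dim):
        # Dimensions 2k and 2k+1 share the frequency 1 / 10000^(2k / dim).
        freq = 1.0 / (10000 ** ((i - i % 2) / dim))
        enc.append(math.sin(position * freq) if i % 2 == 0 else math.cos(position * freq))
    return enc


def matvec(m: list[Vector], v: Vector) -> Vector:
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def leaky_relu(x: float, slope: float = 0.2) -> float:
    return x if x > 0 else slope * x


def attention_conv(h, edges, W, a):
    """Single-head GAT-style layer; `edges` are directed (src, dst) pairs, edge types ignored."""
    z = [matvec(W, hi) for hi in h]                  # shared linear projection
    neighbours = {i: [i] for i in range(len(h))}     # self-loop for every node
    for src, dst in edges:
        neighbours[dst].append(src)
    out = []
    for i, nbrs in neighbours.items():
        # Unnormalized score e_ij = LeakyReLU(a . [z_i || z_j]); list + list concatenates.
        scores = [leaky_relu(sum(ak * x for ak, x in zip(a, z[i] + z[j]))) for j in nbrs]
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]  # numerically stable softmax
        total = sum(weights)
        alpha = [wt / total for wt in weights]
        out.append([sum(al * z[j][k] for al, j in zip(alpha, nbrs)) for k in range(len(z[i]))])
    return out


def graph_logit(h, edges, W, a, w_out, b_out):
    # Assumption: a node's "position" is its index (e.g. statement order in the function).
    h = [[x + p for x, p in zip(hi, sinusoidal_encoding(pos, len(hi)))] for pos, hi in enumerate(h)]
    h = [[max(0.0, x) for x in hi] for hi in attention_conv(h, edges, W, a)]  # ReLU
    pooled = [sum(col) / len(h) for col in zip(*h)]  # mean readout -> one vector per graph
    return sum(w * x for w, x in zip(w_out, pooled)) + b_out


# Toy graph with 5 nodes and random, untrained weights (not a real CPG).
random.seed(0)
d_in, d_hid = 4, 3
nodes = [[random.gauss(0, 1) for _ in range(d_in)] for _ in range(5)]
edges = [(0, 1), (1, 2), (2, 3), (3, 4), (0, 2)]
W = [[random.gauss(0, 0.5) for _ in range(d_in)] for _ in range(d_hid)]
a = [random.gauss(0, 0.5) for _ in range(2 * d_hid)]
w_out = [random.gauss(0, 0.5) for _ in range(d_hid)]
logit = graph_logit(nodes, edges, W, a, w_out, 0.0)
prob = 1.0 / (1.0 + math.exp(-logit))
print(f"P(vulnerable) = {prob:.3f}")
```

Variations such as sum or attention pooling instead of the mean readout, or separate attention parameters per edge type for heterogeneous CPG edges, are the kind of options a modular configuration would expose.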

For optimization, VulGNN is trained with a weighted binary cross-entropy loss to address class imbalance, using the Adam optimizer and standard regularization (dropout and normalization). The model is modular: architectural variations (edge and node abstraction, backbone, edge heterogeneity) are selected through a unified configuration interface.
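The weighted binary cross-entropy objective can be written out directly. This sketch assumes the common `pos_weight` formulation, in which the loss on vulnerable (positive) samples is scaled by the ratio of non-vulnerable to vulnerable counts; whether VulGNN derives its class weights this way is an assumption, and `pos_weight_from_counts` is a hypothetical helper. The log-sigmoid terms use a numerically stable softplus.

```python
import math


def softplus(x: float) -> float:
    # log(1 + exp(x)) computed without overflow for large |x|.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def pos_weight_from_counts(n_vulnerable: int, n_safe: int) -> float:
    # Hypothetical helper: upweight the rare positive class by the Non-Vul:Vul ratio.
    return n_safe / n_vulnerable


def weighted_bce_with_logits(logits: list[float], labels: list[int], pos_weight: float) -> float:
    """Mean of -[pos_weight * y * log(sigmoid(x)) + (1 - y) * log(1 - sigmoid(x))]."""
    total = 0.0
    for x, y in zip(logits, labels):
        log_p = -softplus(-x)      # log(sigmoid(x))
        log_not_p = -softplus(x)   # log(1 - sigmoid(x))
        total += -(pos_weight * y * log_p + (1 - y) * log_not_p)
    return total / len(logits)


# Toy batch: 1 vulnerable function among 10, so the positive term is weighted 9x.
labels = [1] + [0] * 9
logits = [0.5] + [-1.0] * 9
w = pos_weight_from_counts(n_vulnerable=1, n_safe=9)
loss = weighted_bce_with_logits(logits, labels, w)
print(f"pos_weight={w:.1f}, loss={loss:.4f}")
```

In PyTorch, `torch.nn.BCEWithLogitsLoss(pos_weight=...)` implements the same formula (with mean reduction by default) and would typically be paired with `torch.optim.Adam`; the pure-Python version only makes the arithmetic explicit.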

VulGNN is benchmarked systematically against graph-based and LLM-based baselines on the DiverseVul dataset and the SARD/Juliet suite. The experiments address research questions on architectural ablations, accuracy relative to LLMs, generalization across projects and datasets, and the effect of synthetic versus real-world training data.

Comparative Analysis

- Efficiency: VulGNN has 1.1M parameters, roughly 55x to 200x fewer than LLM-based detectors (60–220M), or about two orders of magnitude. It requires less than 500MB of VRAM, enabling inference on commodity GPUs or even CPUs.

- Accuracy and F1: In the "unseen projects" scenario, VulGNN achieves an F1-score of 18.17 and an accuracy of 93.17%, outperforming the state-of-the-art ReVeal GNN and remaining competitive with LLMs, which typically achieve higher accuracy but lower recall at a much higher resource cost.

- Data Regime Sensitivity: Adding even a modest amount of real-world code (10% DiverseVul) to the SARD/Juliet training data raises accuracy from 62% to 90%. The F1-score rises from 14.2 with synthetic-only training to 40.3 as real-world samples come to dominate the training mix.
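The gap between 93.17% accuracy and an F1-score of 18.17 in the unseen-projects setting is what one would expect when vulnerable functions are rare in the test data. The small calculation below illustrates the effect using hypothetical confusion-matrix counts for a 1,000-function split with 5% vulnerable functions; these counts are invented for illustration and are not taken from the paper.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Accuracy, precision, recall and F1 for the positive (vulnerable) class."""
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / total, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical split: 1,000 functions, 50 vulnerable (5%). Counts are illustrative only.
detector = classification_metrics(tp=10, fp=30, fn=40, tn=920)    # flags 40, 10 correctly
always_safe = classification_metrics(tp=0, fp=0, fn=50, tn=950)   # never flags anything
print(detector)
print(always_safe)
```

With these counts the first detector reaches 93% accuracy but an F1 of only 2/9 (about 0.22), while the trivial "always safe" predictor scores 95% accuracy with an F1 of 0. This is why F1 is the more informative metric on imbalanced vulnerability data.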

Ablation and Generalization: Data Composition and Training Regimes

Empirical results from hybrid and ablation studies reveal the following:

- Synthetic→Real-World Generalization: Models trained exclusively on synthetic data perform poorly (F1 ≈ 14.2), but accuracy and especially F1 rise steeply as the proportion of real-world data increases, plateauing beyond roughly 40%.

- Class Imbalance Sensitivity: Training on more balanced Vul:Non-Vul datasets improves F1 and accuracy, but class weights alone are insufficient; direct imbalance mitigation (downsampling, augmentation) is essential for optimal performance.

- Cross-Project Robustness: Testing on held-out projects confirms that VulGNN generalizes rather than overfitting to specific codebases, a key prerequisite for deployment in dynamic software development contexts.
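Two of the data-side levers discussed above, the share of real-world samples in the training mix and direct imbalance mitigation by downsampling, can be sketched as simple sampling steps. The helpers `mix_training_set` and `downsample` are hypothetical: they assume each sample is an `(identifier, label)` pair with label 1 for vulnerable, draw a fixed fraction of the training set from the real-world pool, and then randomly drop non-vulnerable samples until a target Non-Vul:Vul ratio is reached. The paper's exact sampling procedure may differ, and the toy pools and their label rates are made up.

```python
import random

Sample = tuple[str, int]  # (identifier, label); label 1 = vulnerable


def mix_training_set(synthetic: list[Sample], real: list[Sample],
                     real_fraction: float, size: int, seed: int = 0) -> list[Sample]:
    """Draw `size` samples, round(size * real_fraction) of them from the real-world pool."""
    rng = random.Random(seed)
    n_real = round(size * real_fraction)
    # random.sample raises ValueError if a pool is smaller than the requested count.
    return rng.sample(real, n_real) + rng.sample(synthetic, size - n_real)


def downsample(samples: list[Sample], target_ratio: float, seed: int = 0) -> list[Sample]:
    """Randomly drop non-vulnerable samples so that Non-Vul:Vul is at most target_ratio."""
    rng = random.Random(seed)
    vul = [s for s in samples if s[1] == 1]
    safe = [s for s in samples if s[1] == 0]
    keep = min(len(safe), int(len(vul) * target_ratio))
    return vul + rng.sample(safe, keep)


# Toy pools standing in for SARD/Juliet and DiverseVul (label rates are invented).
synthetic = [(f"synthetic_{i}", int(i % 2 == 0)) for i in range(200)]
real = [(f"real_{i}", int(i % 20 == 0)) for i in range(500)]

train = mix_training_set(synthetic, real, real_fraction=0.4, size=300)
balanced = downsample(train, target_ratio=1.0)
n_vul = sum(label for _, label in balanced)
print(len(train), len(balanced), n_vul)
```

Sweeping `real_fraction` from 0 to 1 with a setup like this is one way to produce a data-composition curve of the kind the study describes.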

Practical Implications, Limitations, and Theoretical Insights

Practical Applicability

The study's core claim is that VulGNN achieves performance competitive with LLM-based detectors at two orders of magnitude less computational overhead, making it a practical option for integration into CI/CD tools or as a complement to large-scale code review systems.

Theoretical Implications

Systematic ablation reveals diminishing returns from model scale alone once adequate structure-aware representations are in place. This indicates that the bottleneck in vulnerability detection is not model capacity but the inductive bias and informed preprocessing that come from leveraging CPGs and appropriate feature embeddings.

Limitations

The study acknowledges several threats to validity:

- Construct Validity: Despite DiverseVul’s improved real-world coverage, benchmark datasets still fall short of encompassing all vulnerability phenomena encountered in modern software.

- External Validity: Empirical conclusions drawn from academic or synthetic datasets may have limited transfer to large, industrial codebases with different workflow characteristics or language dialects.

Relation to Prior Work

VulGNN extends the GNN-based vulnerability detection literature with a function-level, highly parameter-efficient architecture. Unlike prior work such as Devign [zhou2019devign] and ReVeal [chakraborty2021deep], VulGNN explicitly targets cross-domain generalization and resource constraints rather than single-dataset accuracy or explanation alone. Compared with more complex hybrid models (e.g., those integrating transformers or multimodal graph fusion), VulGNN's design prioritizes practical deployment and reproducibility.

Future Directions

Potential avenues for further study include alternative GNN operators, pre-training with code foundation models, language-agnostic training, and rigorous industrial validation. The paper also suggests augmenting node and edge features with richer semantic information and integrating lightweight multi-task learning objectives.

Conclusion

VulGNN represents a tangible advance toward efficient, accurate, and robust GNN-based software vulnerability detectors. It demonstrates that, when informed by explicit code structure, resource-light GNN models can approach or match the efficacy of LLM-based systems. This greatly improves the feasibility of scalable, real-world secure software analysis pipelines, with implications for AI-driven software engineering tools more broadly.