- The paper establishes that the neuromanifold dimension of PGCNNs is L(|G|-1)+1, independent of the activation degree.

- It employs graded group algebras with dual parametrizations (Kronecker and Hadamard) to rigorously model equivariant convolutional filters and activations.

- The analysis provides clear criteria for parameter identifiability and reveals how group symmetry influences sample complexity and expressivity.

Geometry of Polynomial Group Convolutional Neural Networks

Introduction and Motivation

This work develops a rigorous algebraic-geometric framework for studying polynomial group convolutional neural networks (PGCNNs) over an arbitrary finite group G. Using graded group algebras, the paper gives a precise characterization of these equivariant architectures, specifically those that combine polynomial activation functions with convolutions structured by the group action. The work is situated within neuroalgebraic geometry, an emerging area in which a network's expressivity and landscape are investigated through the algebraic properties of the set of all realizable functions (the "neuromanifold"). The study focuses on the dimension and identifiability properties of neuromanifolds for PGCNNs, extending results previously known for classical polynomial CNNs to general group settings.

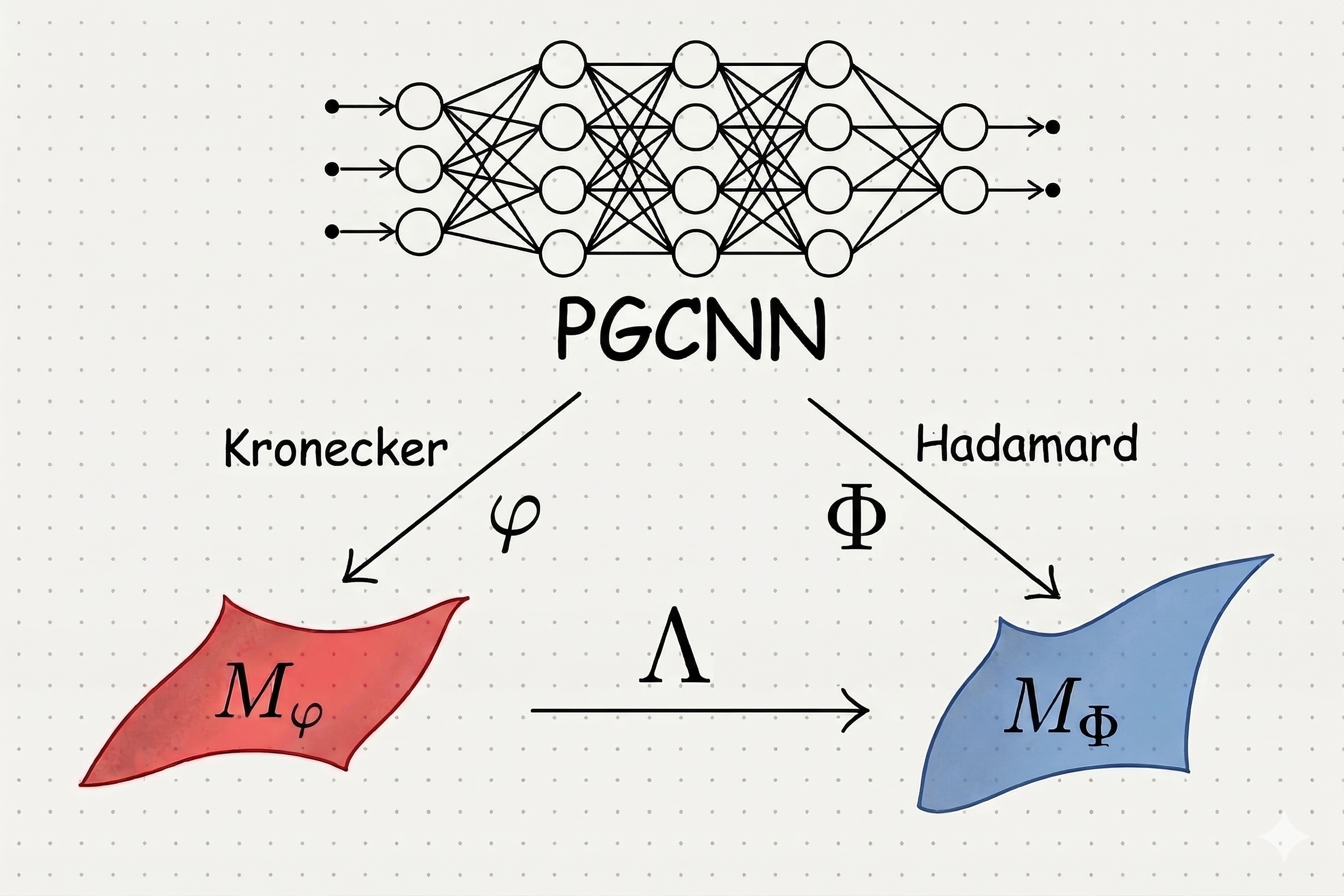

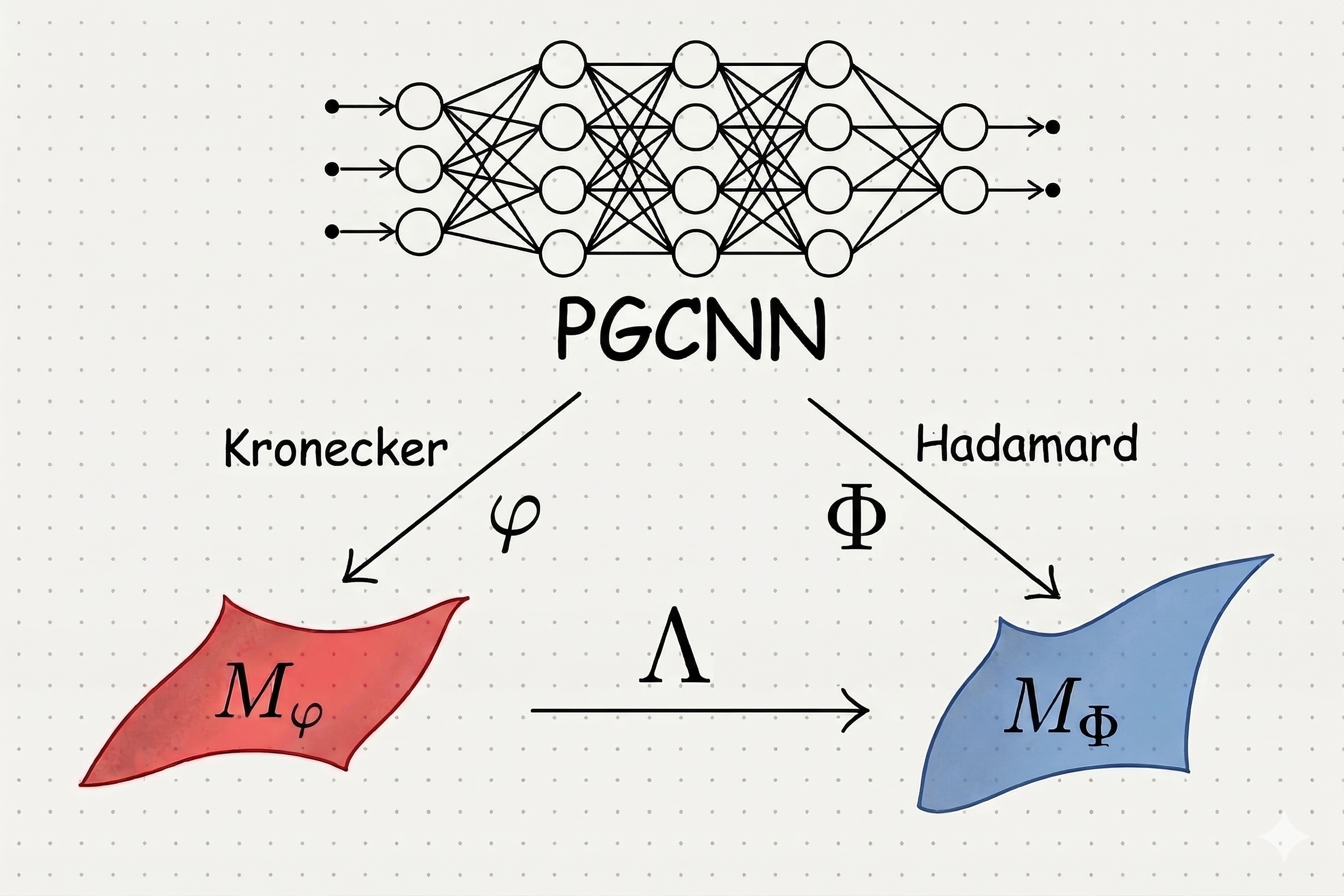

The authors introduce graded group algebras S[G] to model G-convolutional filters, elucidating their algebraic structure and regular representations through G-circulant matrices. Two products are critical to the PGCNN constructions: the Kronecker product, enabling explicit tensorial stacking, and the Hadamard product, which provides a mechanism for polynomial nonlinearities (e.g., the r-th Hadamard power as an activation of degree r). Importantly, these two parametrizations of activation yield distinct but linearly related parameter spaces, denoted by maps φ (Kronecker) and Φ (Hadamard), with a linear transformation Λ connecting their images.

Figure 1: Schematic illustration of the Kronecker and Hadamard parametrization maps φ and Φ for PGCNNs and their relation via an explicit linear map.

The framework is illustrated in Figure 1, emphasizing the dual parametrization's algebraic relations and the central role of the group structure in shaping both filter and activation representations.
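The G-circulant representation and the Hadamard-power activation described above can be made concrete with a small sketch. It is a minimal illustration and not the authors' code: the cyclic group Z_4, the convolution convention (θ * x)(g) = Σ_h θ(h) x(h⁻¹g), and all function names are assumptions chosen for the example. The last helper shows one elementary linear relation between the two products, namely that the r-th Hadamard power consists of the diagonal entries of the r-th Kronecker power. Whether this coincides with the map Λ of the paper is not claimed here.

```python
def cyclic_group(n):
    """Multiplication table and inverse list for Z_n (an illustrative choice of G)."""
    mul = [[(a + b) % n for b in range(n)] for a in range(n)]
    inv = [(-a) % n for a in range(n)]
    return mul, inv

def convolve(theta, x, mul, inv):
    """Group convolution (theta * x)(g) = sum_h theta(h) * x(h^{-1} g)."""
    n = len(x)
    return [sum(theta[h] * x[mul[inv[h]][g]] for h in range(n)) for g in range(n)]

def g_circulant(theta, mul, inv):
    """Matrix C with C[g][k] = theta(g k^{-1}), so that C x equals theta * x."""
    n = len(theta)
    return [[theta[mul[g][inv[k]]] for k in range(n)] for g in range(n)]

def matvec(matrix, x):
    """Plain matrix-vector product on nested lists."""
    return [sum(c * v for c, v in zip(row, x)) for row in matrix]

def hadamard_power(x, r):
    """Entrywise r-th power, used here as a degree-r polynomial activation."""
    return [v ** r for v in x]

def kronecker_power(x, r):
    """Flattened r-fold Kronecker power of x (length len(x) ** r)."""
    out = [1]
    for _ in range(r):
        out = [a * b for a in out for b in x]
    return out

def diagonal_of_kronecker_power(x, r):
    """Entries of the Kronecker power at multi-indices (i, i, ..., i)."""
    n = len(x)
    step = sum(n ** k for k in range(r))  # flat index of (i, ..., i) is i * step
    kron = kronecker_power(x, r)
    return [kron[i * step] for i in range(n)]

def right_translate(x, a, mul):
    """(R_a x)(g) = x(g a); left convolution commutes with this action."""
    return [x[mul[g][a]] for g in range(len(x))]

def layer(theta, x, r, mul, inv):
    """One hypothetical PGCNN layer: convolution followed by a Hadamard power."""
    return hadamard_power(convolve(theta, x, mul, inv), r)

MUL, INV = cyclic_group(4)
THETA = [1, 2, 0, -1]   # example filter coefficients (made up)
X = [3, 1, 4, 1]        # example input signal on the group (made up)
```

With these definitions, `matvec(g_circulant(THETA, MUL, INV), X)` and `convolve(THETA, X, MUL, INV)` give the same vector, and `layer` commutes with `right_translate`, which is the equivariance property the architecture is built around.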

Neuromanifold Theory for PGCNNs

Central to the analysis is the neuromanifold, the algebraic variety describing the set of functions realized by the architecture over its parameter space. The main technical results of the paper establish the dimension and structural properties of these manifolds for arbitrary finite groups G and polynomial activations of degree r:

- The neuromanifold's dimension is L(|G|-1)+1, where L is the network's depth (number of layers) and |G| is the order of the group. Notably, this dimension is independent of the activation degree r and of the inner structure of G: it depends solely on the group's cardinality.

- The parametrizations given by φ and Φ are proved to yield neuromanifolds of identical dimension. The explicit computation proceeds via symbolic Jacobian analysis and genericity arguments in the algebraic sense (Zariski open conditions).

- For a fixed architecture, the fibers (preimages) of the parametrization maps φ and, conjecturally, Φ are finite up to group action and rescaling, and are precisely characterized by group-theoretic shift and scaling symmetries. This gives a full description of the identifiability (or non-identifiability) of the architectures.
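The dimension formula can be tabulated directly. The sketch below is only arithmetic on the formula L(|G|-1)+1 quoted above. The raw parameter count of L·|G| (one filter with |G| coefficients per layer) is an assumption of this example, as are the function names. Under that assumption the gap between parameter count and dimension is L-1, which is consistent with one rescaling redundancy per pair of adjacent layers. This reading is a heuristic and is not stated in the source.

```python
def neuromanifold_dim(depth, group_order):
    """Dimension formula from the summary: L(|G| - 1) + 1."""
    return depth * (group_order - 1) + 1

def raw_parameter_count(depth, group_order):
    """Assumed count: one filter with |G| coefficients in each of L layers."""
    return depth * group_order

# Example groups are listed by order only; the formula does not use their structure.
for name, order in [("Z_2", 2), ("S_3", 6), ("Z_8", 8)]:
    for depth in (1, 2, 3):
        dim = neuromanifold_dim(depth, order)
        gap = raw_parameter_count(depth, order) - dim
        print(f"G={name:4s} |G|={order} L={depth} dim={dim:2d} params-dim={gap}")
```

Note that the activation degree r does not appear as an argument, and that two groups of the same order (for example Z_6 and S_3) give the same value.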

Analysis of Parametrization Fibers

The general fiber structures are determined explicitly for φ and conjecturally extended to Φ. The result states that, up to scaling, distinct parameter tuples mapping to the same realized function can only differ by a prescribed sequence of group translations across layers:

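- If two parameter tuples map to the same function, there exist group elements and non-zero scalars such that, in every layer, the filters of the two tuples agree up to the corresponding group translation and scalar multiple.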

This structure is derived from properties of convolutions, the invertibility of filters, and rank properties of symmetric tensor decompositions.
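The translation and rescaling symmetries can be checked on a toy example. The sketch below assumes a hypothetical two-layer network f(x) = θ₂ * (θ₁ * x)^{∘r} over the symmetric group S_3, with the convolution convention (u * v)(g) = Σ_h u(h) v(h⁻¹g). The exact architecture and fiber formula of the paper may differ. It verifies one family of parameter changes that leaves f unchanged: θ₁ ↦ λ·(δ_a * θ₁) and θ₂ ↦ λ⁻ʳ·(θ₂ * δ_{a⁻¹}). All names and numeric values are made up for illustration, and exact rational arithmetic is used so that equality can be tested without tolerances.

```python
from fractions import Fraction
from itertools import permutations

def symmetric_group_3():
    """Multiplication table and inverses for S_3; element 0 is the identity."""
    elems = list(permutations(range(3)))
    index = {p: i for i, p in enumerate(elems)}
    n = len(elems)
    mul = [[index[tuple(elems[a][elems[b][i]] for i in range(3))]
            for b in range(n)] for a in range(n)]
    inv = [mul[a].index(0) for a in range(n)]
    return mul, inv

def conv(u, v, mul, inv):
    """Group-algebra product (u * v)(g) = sum_h u(h) * v(h^{-1} g)."""
    n = len(u)
    return [sum(u[h] * v[mul[inv[h]][g]] for h in range(n)) for g in range(n)]

def delta(a, n):
    """Indicator of the group element a, viewed as a group-algebra element."""
    return [1 if g == a else 0 for g in range(n)]

def network(theta1, theta2, x, r, mul, inv):
    """Assumed two-layer form: convolve, take the r-th Hadamard power, convolve."""
    hidden = [v ** r for v in conv(theta1, x, mul, inv)]
    return conv(theta2, hidden, mul, inv)

def move_along_fiber(theta1, theta2, a, lam, r, mul, inv):
    """Translate by the group element a and rescale by lam, compensating in layer 2."""
    n = len(theta1)
    new1 = [lam * c for c in conv(delta(a, n), theta1, mul, inv)]
    new2 = [c / lam ** r for c in conv(theta2, delta(inv[a], n), mul, inv)]
    return new1, new2

MUL, INV = symmetric_group_3()
THETA1 = [1, 2, 0, -1, 3, 1]   # made-up filter for layer 1
THETA2 = [2, -1, 1, 0, 1, 3]   # made-up filter for layer 2
X = [1, 0, 2, -1, 1, 3]        # made-up input signal on S_3
R = 2                          # activation degree

NEW1, NEW2 = move_along_fiber(THETA1, THETA2, 1, Fraction(2), R, MUL, INV)
SAME = network(THETA1, THETA2, X, R, MUL, INV) == network(NEW1, NEW2, X, R, MUL, INV)
```

The check works because translation by δ_a permutes the entries of the hidden signal, the Hadamard power commutes with permutations, and the factor λʳ produced by the activation is cancelled in the second layer.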

Theoretical and Practical Implications

The dimension result generalizes prior studies of neuromanifolds for polynomial (and linear) CNNs to a broad equivariant context, showing that weight-sharing constraints dramatically alter manifold structure compared to unconstrained polynomial networks. Specifically, the L(|G|-1)+1 formula demonstrates that modeling with group symmetry reduces network expressivity in a manner precisely captured by the group's cardinality and layer count.

These findings have several implications:

- Identifiability: The classification of the fibers delivers clear criteria for when network parameters are uniquely specified by the function, up to group-theoretic equivalence and rescaling.

- Sample Complexity & Generalization: Smaller neuromanifold dimensions—arising from equivariance constraints—entail reduced capacity and lower sample complexity, providing quantitative insight into the benefits and limitations of equivariant architectures.

- Neuroalgebraic Geometry: The work affirms that specialized architectures (PGCNNs) yield neuromanifolds that are strict specializations of the more general fixed-width polynomial neural network neuromanifolds, a subtlety not addressed in previous geometric analyses.

- Computational Tools: The PGCNNGeometry software package enables computational investigation of manifold dimensions and fiber cardinalities for arbitrary finite groups, supporting both empirical validation and further exploration.

Open Problems and Outlook

The paper poses an explicit conjecture regarding the precise description of fibers for the Hadamard parametrization (Φ) in the most general case, supported by symbolic and computational experiments for small groups and networks. This is tied to a subtle intersection problem in the geometry of tensor secant varieties and symmetric powers, for which the authors outline future work involving algebraic geometric techniques.

Further directions highlighted include:

- Extension to compact groups (infinite-dimensional graded group algebras or discretization approaches), relevant for harmonic analysis and generalized equivariant architectures.

- Investigation of singular loci of the neuromanifold, motivated by the observation that such points may correspond to critical points in the loss landscape and have training implications.

- Generalization to more complex polynomial activation functions, including sums of Hadamard products, motivated by recent empirical successes and universal approximation results.

Conclusion

This paper provides a technically detailed, algebraic-geometric analysis of the expressivity and identifiability of polynomial group convolutional neural networks. It establishes concrete dimension formulas for neuromanifolds that depend only on group size and depth, classifies symmetries in the parameterization, and demonstrates the nuanced effects of equivariant weight-sharing on the function space of neural networks. The results highlight both the power and the limitations of using symmetry in neural architectures, paving the way for deeper geometric understanding and further theoretical developments in equivariant and polynomial neural networks.