- The paper demonstrates that enforcing five physical constraints (positivity, consistency, mirror symmetry, Galilean and scaling invariance) ensures near-exact flux approximations.

- It maps the complex Riemann solve into a supervised regression problem using a neural network architecture with explicit invariance enforcement.

- Experiments on shallow water and Euler systems show that HCNRS achieves roundoff-level errors and a 2× speedup while preserving critical physical symmetries.

Learning the Exact Flux: Neural Riemann Solvers with Hard Constraints

Introduction

This work introduces a hard-constrained neural Riemann solver (HCNRS) for hyperbolic conservation laws, targeting the dominant computational bottleneck in Godunov-type methods: the Riemann solve at cell interfaces. Instead of relying on global surrogates, which often struggle with preserving key invariants, the study designs neural solvers that directly approximate the flux mapping defined by the exact Riemann solution while strongly enforcing five fundamental physical and mathematical constraints. The methodology is thoroughly validated on shallow water and ideal-gas Euler systems across standard CFD benchmarks, with a focus on accuracy, well-balancedness, boundary behavior, symmetry, and computational cost.

Motivation and Context

Neural networks have seen rapid adoption in CFD, particularly through data-driven, PINN, and neural operator approaches. However, when deployed within conservative solvers for advection-dominated systems, unconstrained neural surrogates frequently violate conservation laws, break symmetry, and generalize poorly near discontinuities. The paper traces these failures to the lack of strong constraint enforcement, especially in previous neural Riemann solvers that relied only on data-driven, weakly regularized training objectives. As a result, those solvers introduce spurious oscillations, boundary errors, and loss of well-balanced states.

To address this, the proposed HCNRS strictly enforces:

- Positivity (e.g., h≥0, ρ≥0, p≥0)

- Consistency (reducing to the exact flux when left/right states coincide)

- Mirror Symmetry (correct odd and even response under left/right flipping)

- Galilean Invariance (insensitivity to uniform velocity shifts)

- Scaling Invariance (correct homogeneous scaling of inputs/outputs)

These invariances and symmetries are incorporated directly in the solver's architecture, significantly reducing effective input dimension and guaranteeing the preservation of core physical properties.
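For the shallow water equations, four of these properties can be checked numerically on the exact Riemann solution itself; positivity is only observed for a single wet-bed case. The sketch below is an illustration, not the paper's code. It assumes the standard wet-bed depth function found in textbook treatments of the shallow water Riemann problem, uses plain bisection in place of whatever root finder the authors use, and the names `wave_fn`, `exact_star`, and `close` are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration (assumed value)

def wave_fn(h, h_k):
    """Depth function f_K(h) linking the side state h_k to the star depth h."""
    if h <= h_k:  # rarefaction branch
        return 2.0 * (math.sqrt(G * h) - math.sqrt(G * h_k))
    # shock branch
    return (h - h_k) * math.sqrt(0.5 * G * (h + h_k) / (h * h_k))

def exact_star(h_l, u_l, h_r, u_r, iters=200):
    """Intermediate (star) depth and velocity for a wet-bed problem, by bisection."""
    du = u_r - u_l
    if du >= 2.0 * (math.sqrt(G * h_l) + math.sqrt(G * h_r)):
        raise ValueError("dry bed forms; not handled in this sketch")

    def residual(h):
        return wave_fn(h, h_l) + wave_fn(h, h_r) + du

    lo, hi = 0.0, max(h_l, h_r)
    while residual(hi) < 0.0:  # expand the bracket until it contains the root
        hi *= 2.0
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if residual(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    h_star = 0.5 * (lo + hi)
    u_star = 0.5 * (u_l + u_r) + 0.5 * (wave_fn(h_star, h_r) - wave_fn(h_star, h_l))
    return h_star, u_star

def close(x, y, tol=1e-9):
    return abs(x - y) <= tol * (1.0 + abs(y))

h_s, u_s = exact_star(2.0, 0.5, 1.0, -0.3)

# Positivity: the star depth stays positive for this wet-bed case.
assert h_s > 0.0

# Consistency: identical left and right states are returned unchanged.
h_c, u_c = exact_star(1.5, 0.7, 1.5, 0.7)
assert close(h_c, 1.5) and close(u_c, 0.7)

# Mirror symmetry: swap sides and negate velocities -> same depth, negated velocity.
h_m, u_m = exact_star(1.0, 0.3, 2.0, -0.5)
assert close(h_m, h_s) and close(u_m, -u_s)

# Galilean invariance: a uniform velocity shift v only shifts the star velocity.
v = 3.0
h_g, u_g = exact_star(2.0, 0.5 + v, 1.0, -0.3 + v)
assert close(h_g, h_s) and close(u_g, u_s + v)

# Scaling invariance: h -> lam * h and u -> sqrt(lam) * u scale the star state alike.
lam = 2.5
s = math.sqrt(lam)
h_x, u_x = exact_star(lam * 2.0, s * 0.5, lam * 1.0, s * -0.3)
assert close(h_x, lam * h_s) and close(u_x, s * u_s)
```

Because the exact solution satisfies these identities, a surrogate that violates any of them cannot reproduce the exact flux mapping, which is the motivation for building them into the architecture rather than hoping training recovers them.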

Hard-Constrained Neural Riemann Solver Architecture

The Riemann solver for both the shallow water and Euler equations reduces to determining intermediate "star" states via a nonlinear algebraic system, typically solved via iterative root finding. This work maps the root-finding task to supervised regression, but crucially, leverages the above constraints to design a neural architecture where:

- Inputs are expressed through a minimal set of dimensionless parameters, exploiting invariance properties.

- Mirror symmetry is enforced via explicit symmetrization of the MLP outputs.

- Consistency is imposed by construction.

- Positivity is ensured with output post-processing (clipping).

Through this, the network predicts dimensionless contrasts (e.g., δh, δρ, δp, δu), which are then lifted back to the physical variables for use in the finite volume update.
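The four design points above can be sketched for the shallow water star state as a wrapper around an arbitrary regressor. This is a minimal illustration under stated assumptions, not the paper's architecture: the reference scales `h_ref` and `c_ref`, the dimensionless inputs `a` and `b`, the subtraction used for consistency, and the tiny fixed-weight `raw_net` standing in for a trained MLP are all hypothetical choices. Sampling the interface flux from the star state is omitted.

```python
import math

G = 9.81  # gravitational acceleration (assumed value)

# Placeholder "network": a tiny MLP with fixed, untrained weights (hypothetical).
W1 = [[0.7, -0.3], [0.2, 0.9], [-0.5, 0.4]]
B1 = [0.1, -0.2, 0.05]
W2 = [[0.3, -0.6, 0.8], [0.5, 0.1, -0.4]]
B2 = [0.02, -0.03]

def raw_net(a, b):
    """Unconstrained map from (a, b) to two raw outputs."""
    hidden = [math.tanh(w[0] * a + w[1] * b + c) for w, c in zip(W1, B1)]
    return [sum(wi * hi for wi, hi in zip(w, hidden)) + c for w, c in zip(W2, B2)]

def contrasts(a, b):
    """Symmetrized outputs: depth contrast even in a, velocity contrast odd in a."""
    p, q = raw_net(a, b), raw_net(-a, b)
    dh_even = 0.5 * (p[0] + q[0])
    du_odd = 0.5 * (p[1] - q[1])
    # Consistency by construction: subtract the value at a = b = 0.
    dh_zero = raw_net(0.0, 0.0)[0]
    return dh_even - dh_zero, du_odd

def star_state(h_l, u_l, h_r, u_r):
    """Hard-constrained prediction of the star depth and velocity."""
    h_ref = 0.5 * (h_l + h_r)      # depth scale (scaling invariance)
    c_ref = math.sqrt(G * h_ref)   # velocity scale
    u_bar = 0.5 * (u_l + u_r)      # removed before the network (Galilean invariance)
    a = (h_r - h_l) / (h_l + h_r)  # dimensionless depth contrast
    b = (u_r - u_l) / c_ref        # dimensionless velocity jump
    dh, du = contrasts(a, b)
    h_star = h_ref * max(1.0 + dh, 0.0)  # positivity via clipping
    u_star = u_bar + c_ref * du          # lift back to physical variables
    return h_star, u_star

def close(x, y, tol=1e-12):
    return abs(x - y) <= tol * (1.0 + abs(y))

h_s, u_s = star_state(2.0, 0.5, 1.0, -0.3)
assert h_s >= 0.0                                   # positivity
h_c, u_c = star_state(1.5, 0.7, 1.5, 0.7)
assert close(h_c, 1.5) and close(u_c, 0.7)          # consistency
h_m, u_m = star_state(1.0, 0.3, 2.0, -0.5)
assert close(h_m, h_s) and close(u_m, -u_s)         # mirror symmetry
h_g, u_g = star_state(2.0, 3.5, 1.0, 2.7)
assert close(h_g, h_s) and close(u_g, u_s + 3.0)    # Galilean invariance
s = math.sqrt(2.5)
h_x, u_x = star_state(5.0, s * 0.5, 2.5, s * -0.3)
assert close(h_x, 2.5 * h_s) and close(u_x, s * u_s)  # scaling invariance
```

The assertions pass for any weights in `raw_net`, trained or not, because the constraints live in the wrapper rather than in the learned parameters. The four physical inputs also collapse to two dimensionless ones, which illustrates the reduction in effective input dimension.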

Numerical Results

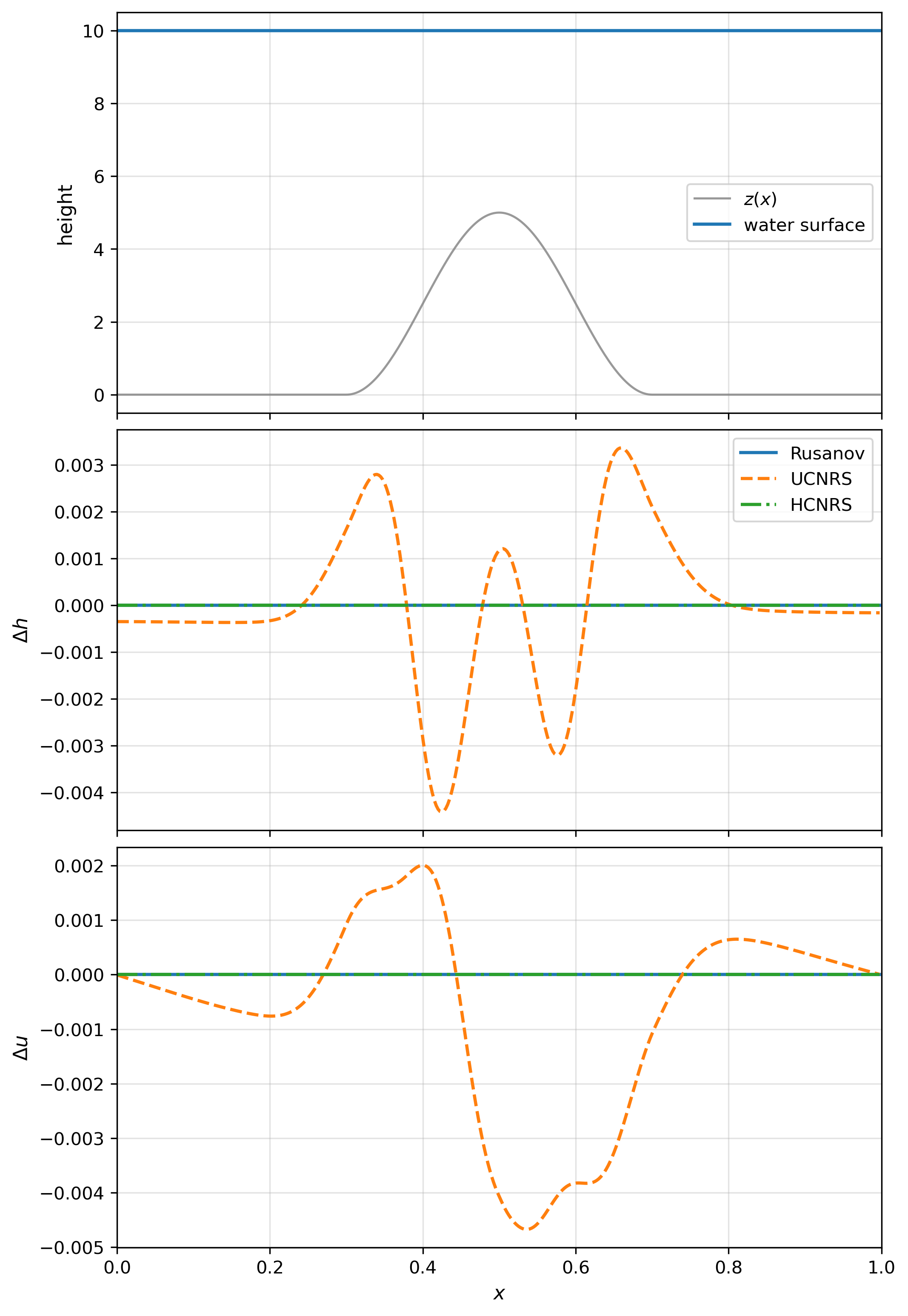

Still Water Test

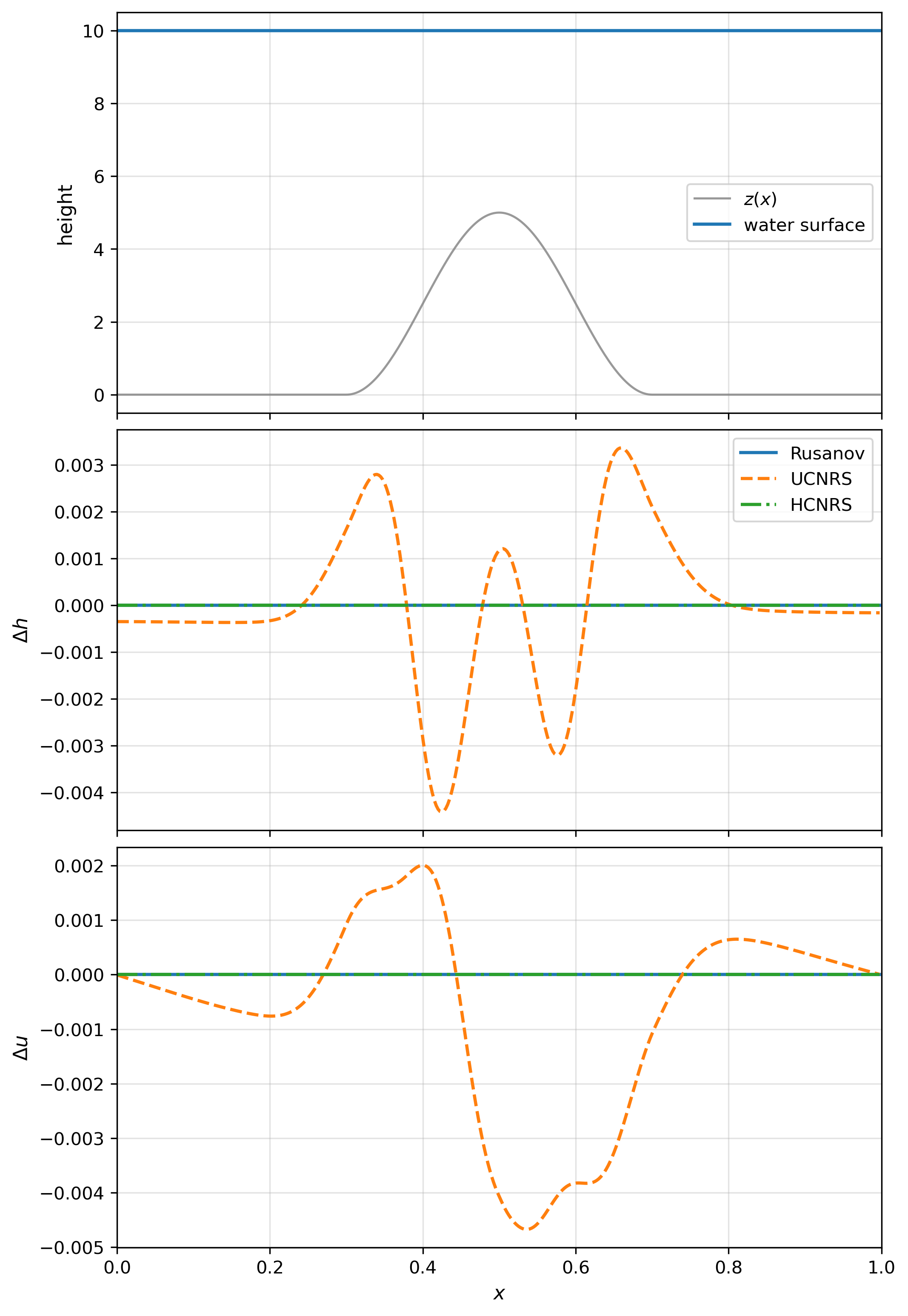

Without hard constraints, the unconstrained neural Riemann solver (UCNRS) fails to preserve the well-balanced property and yields a nonzero mass flux at solid wall boundaries, which violates conservation and produces secular mass errors. By contrast, HCNRS achieves roundoff-level errors and correct wall boundary fluxes because it enforces mirror symmetry and consistency strictly.
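The link between mirror symmetry and the wall flux can be made explicit for the shallow water equations. The derivation below is a sketch under two assumptions that this summary does not spell out: the wall is imposed through a reflected ghost state, and the state vector is U = (h, hu) with mirror operator R = diag(1, −1). Here F is the numerical flux and F_h its mass component.

```latex
\begin{align}
  F(R\,U_R,\; R\,U_L) &= -R\,F(U_L,\, U_R),
      \qquad R = \operatorname{diag}(1,\,-1),\quad R^2 = I, \\
  U_L = U,\;\; U_R = R\,U \;\;\Longrightarrow\;\;
  F(U,\; R\,U) &= -R\,F(U,\; R\,U), \\
  F_h(U,\; R\,U) = -F_h(U,\; R\,U) \;\;&\Longrightarrow\;\; F_h(U,\; R\,U) = 0 .
\end{align}
```

The first line is the mirror symmetry property itself. A solver that satisfies it only approximately has no such cancellation, so its wall mass flux is generally nonzero and mass drifts over time.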

Figure 2: Still water test at t=0.1s; HCNRS maintains equilibrium, while UCNRS exhibits spurious deviations.

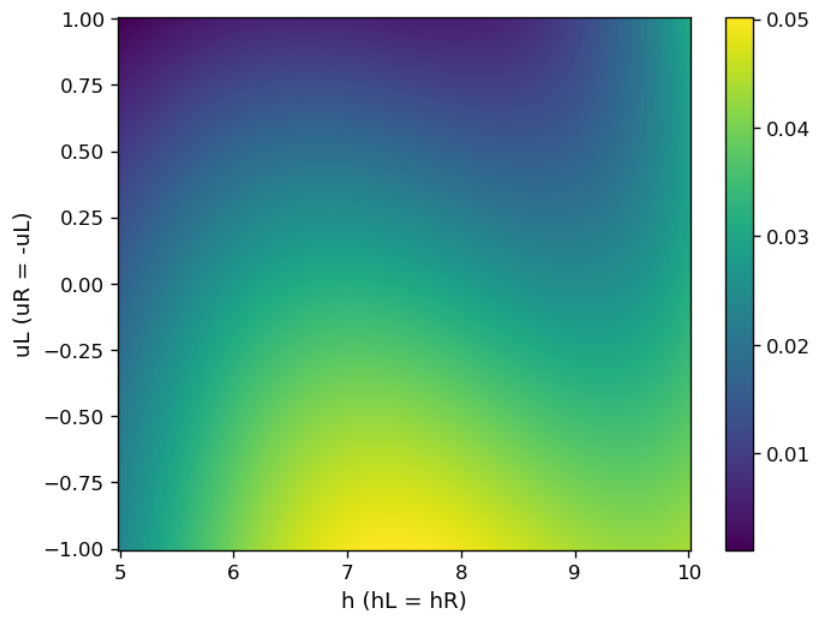

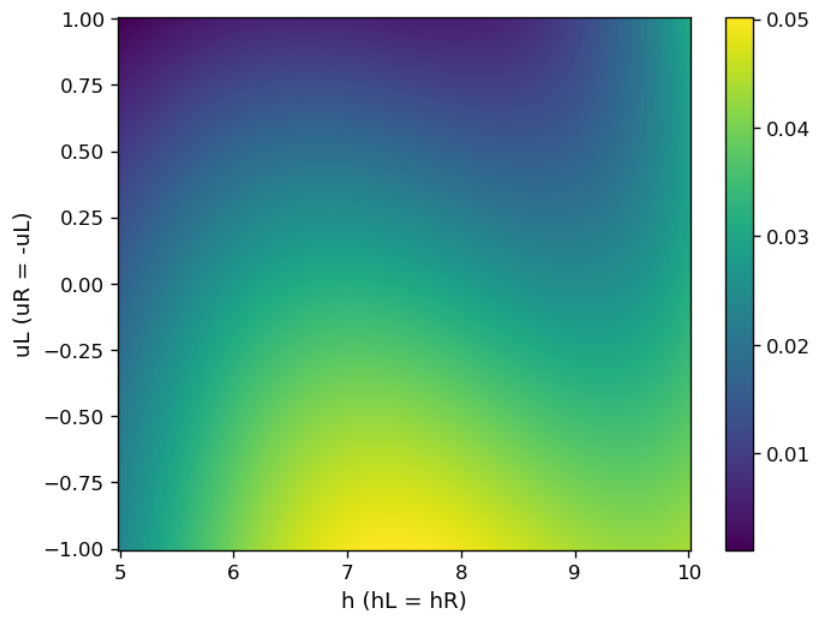

Figure 1: Absolute value of the mass flux returned by UCNRS for velocity-mirrored test cases, illustrating nonzero wall flux due to broken symmetry.

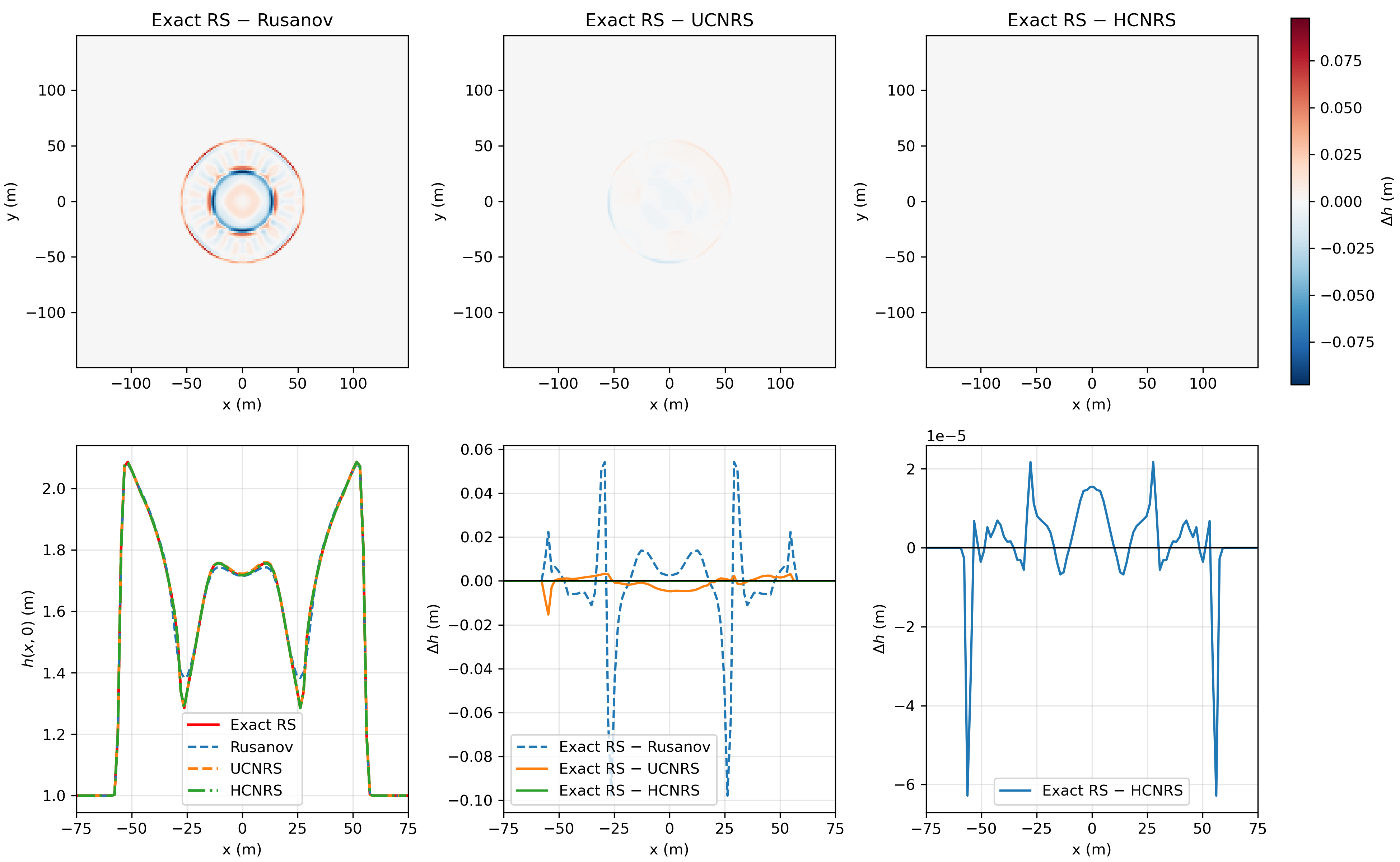

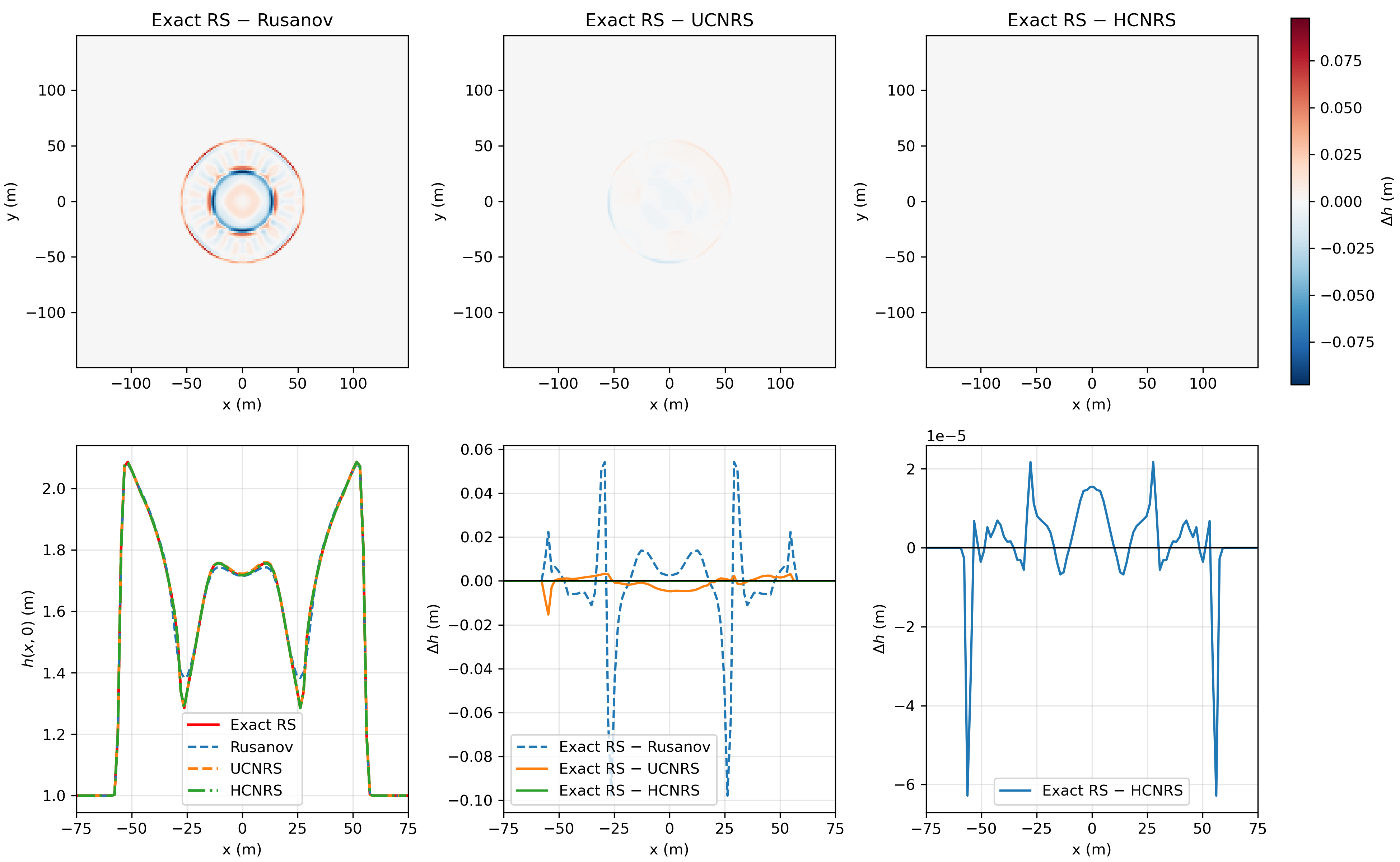

Two-Dimensional Dam Break

The radial dam-break scenario stresses both conservation and symmetry. UCNRS is less dissipative than the classical Rusanov flux and therefore more accurate, but it introduces asymmetric errors and remains inferior to HCNRS, which matches the exact Riemann solver (RS) up to numerical tolerance and preserves radial symmetry.

Figure 3: Spatial error comparisons for the dam break test at t=5; HCNRS displays symmetry and O(10⁻⁵) errors, UCNRS is less accurate and loses symmetry, while Rusanov is highly diffusive.

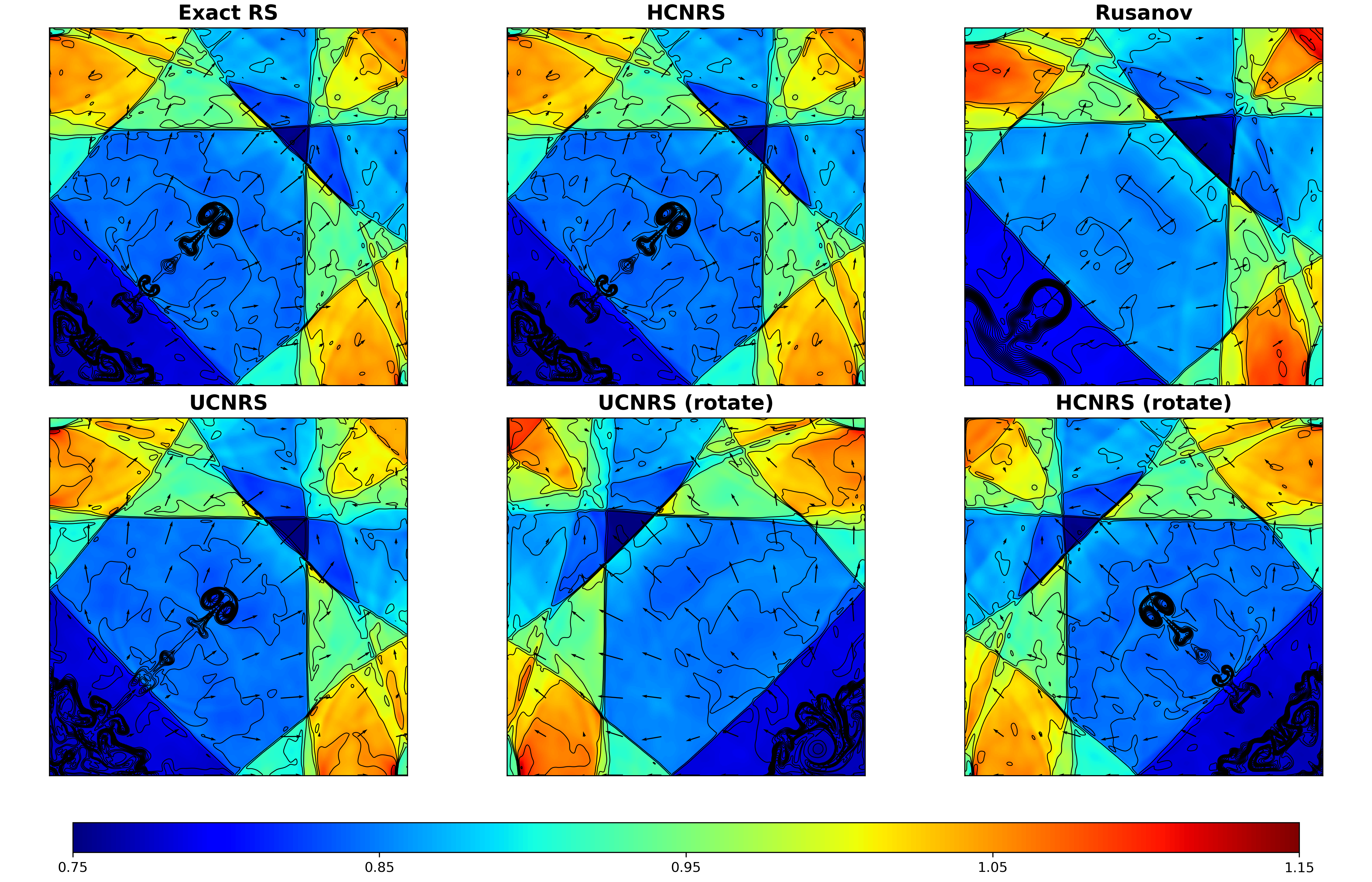

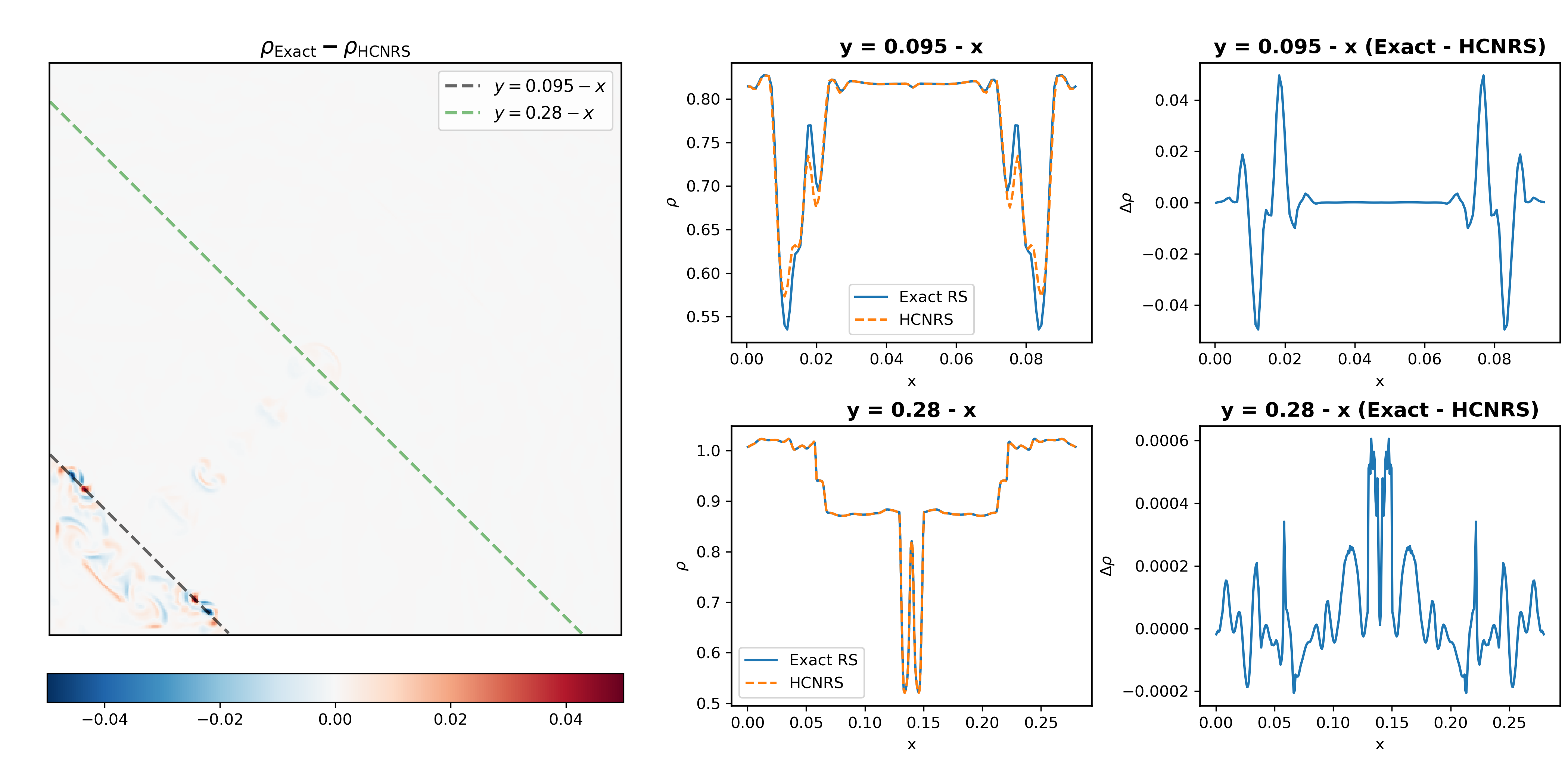

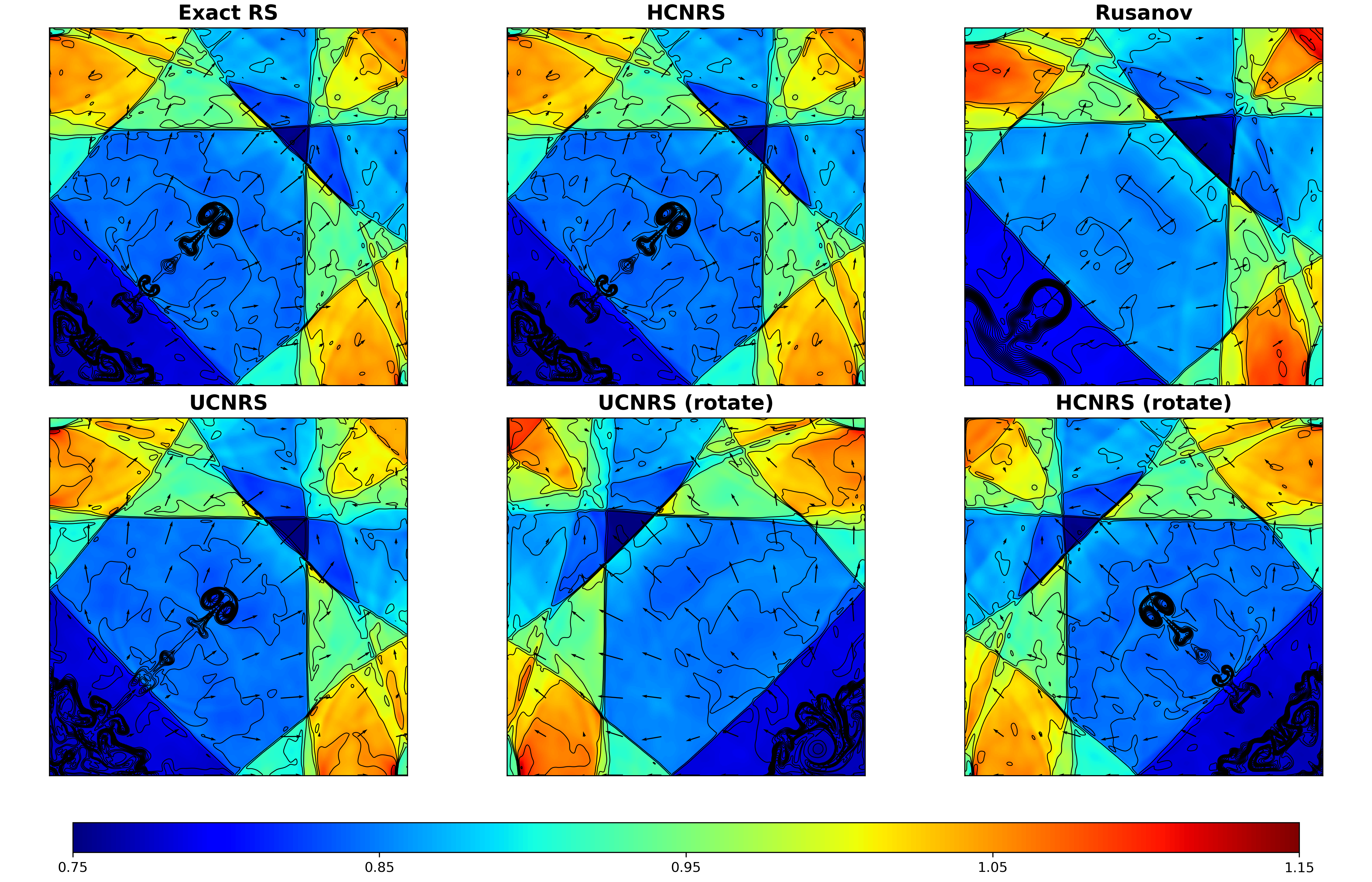

Euler Implosion Problem

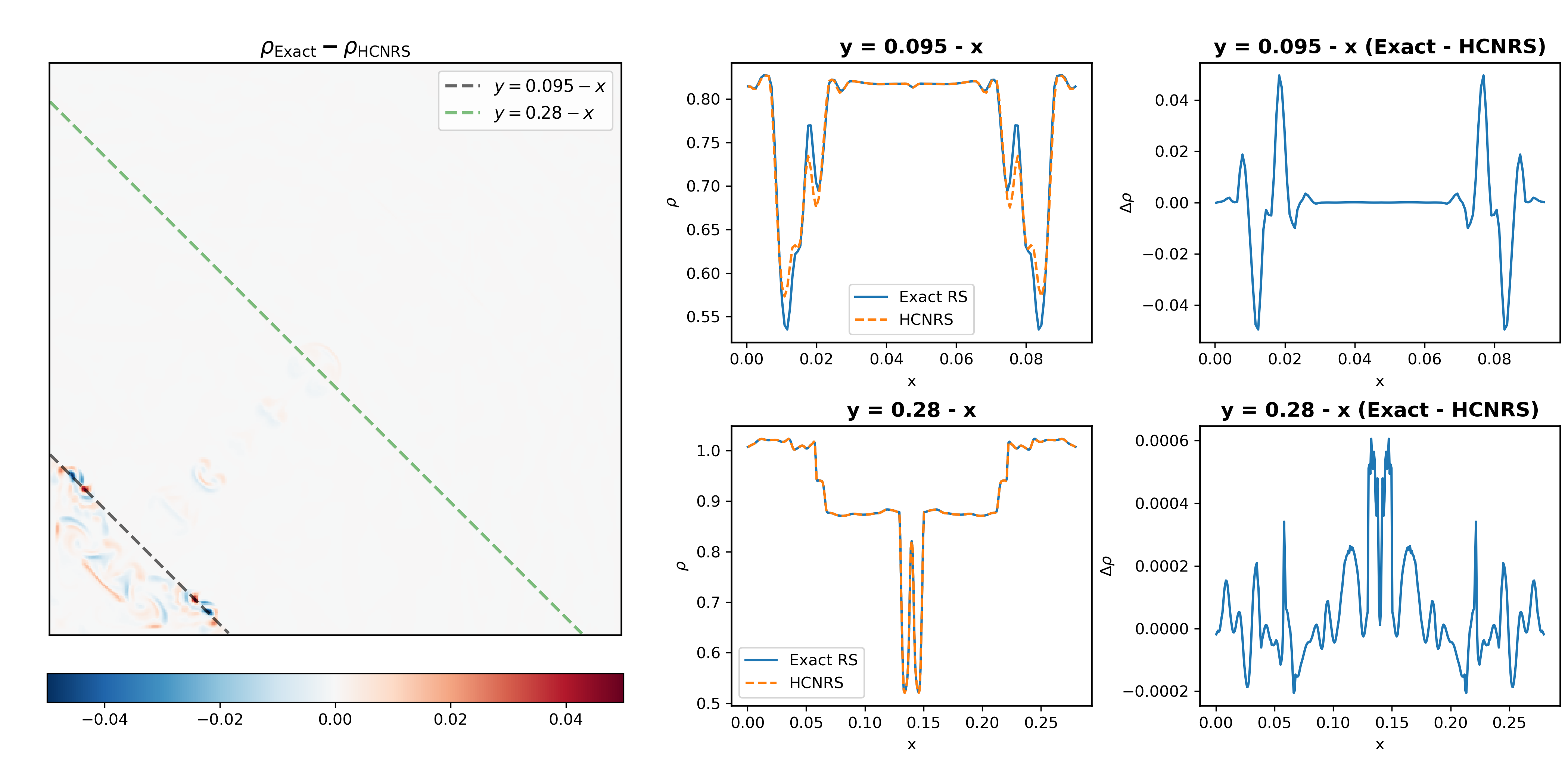

The Liska–Wendroff implosion case demands accurate shock and contact wave capturing as well as invariant preservation under coordinate transformations. HCNRS resolves fine-scale jet structures identically to the exact RS and maintains correct diagonal symmetry, even under rotational transformations. UCNRS, on the other hand, either shifts or completely erases the jet and also breaks symmetry, because its flux mapping is not reference-frame independent.

Figure 4: Euler implosion at T=2.5; HCNRS reproduces the exact RS, preserving the jet structure and symmetry. UCNRS solutions are inconsistent under rotation and lose critical features.

Figure 5: Error plots for HCNRS versus the exact RS on diagonal transects, showing excellent agreement (errors O(10⁻⁴) in the jet region).

Computational Cost

On modern hardware (GPU, JAX+JIT), HCNRS achieves a roughly 2× speedup over exact solvers for the considered systems; the gain is more pronounced for more complex equations of state (e.g., high explosives or multiscale interface models). Network inference is also compatible with high-order schemes such as ADER, where the cost reduction is expected to grow with increasing local complexity.

Implications and Future Directions

The study demonstrates that hard-constrained neural Riemann solvers can robustly serve as drop-in replacements for exact solvers in numerical PDE schemes, maintaining all key invariants and symmetries. Practically, this enables simulations that retain high accuracy and physical fidelity at reduced computational cost, which matters most for large-scale and high-order applications where the Riemann solve is the dominant bottleneck. Theoretically, it shows that strict enforcement of invariance constraints in the design and training of neural surrogates is not cosmetic: it is essential for integrating such models into physically compatible solvers.

Potential future research trajectories include:

- Extending the approach to more complex hyperbolic systems, such as ideal and resistive MHD, or arbitrary convex thermodynamic models.

- Developing adaptive or online training strategies for improved extrapolative robustness.

- Formal analysis of the stability and convergence of hybrid schemes incorporating neural solvers.

- Generalization to high-order Riemann solvers and non-classical formulations.

Conclusion

HCNRS, formulated through principled constraint enforcement, resolves critical shortcomings of unconstrained neural RS models when deployed in CFD solvers. It achieves near-exact solution fidelity, guaranteed preservation of fundamental invariants, correct boundary behavior, and low computational cost. This work establishes a concrete and practical framework for future neural surrogates in computational physics, provided physical and mathematical constraints are enforced at the neural architecture level.