- The paper establishes that integer-state quantized SNNs exhibit stable, nontrivial recurrent dynamics suited for digital acceleration.

- It introduces a co-design model where bit width, overflow, and leakage operations fundamentally govern network behavior.

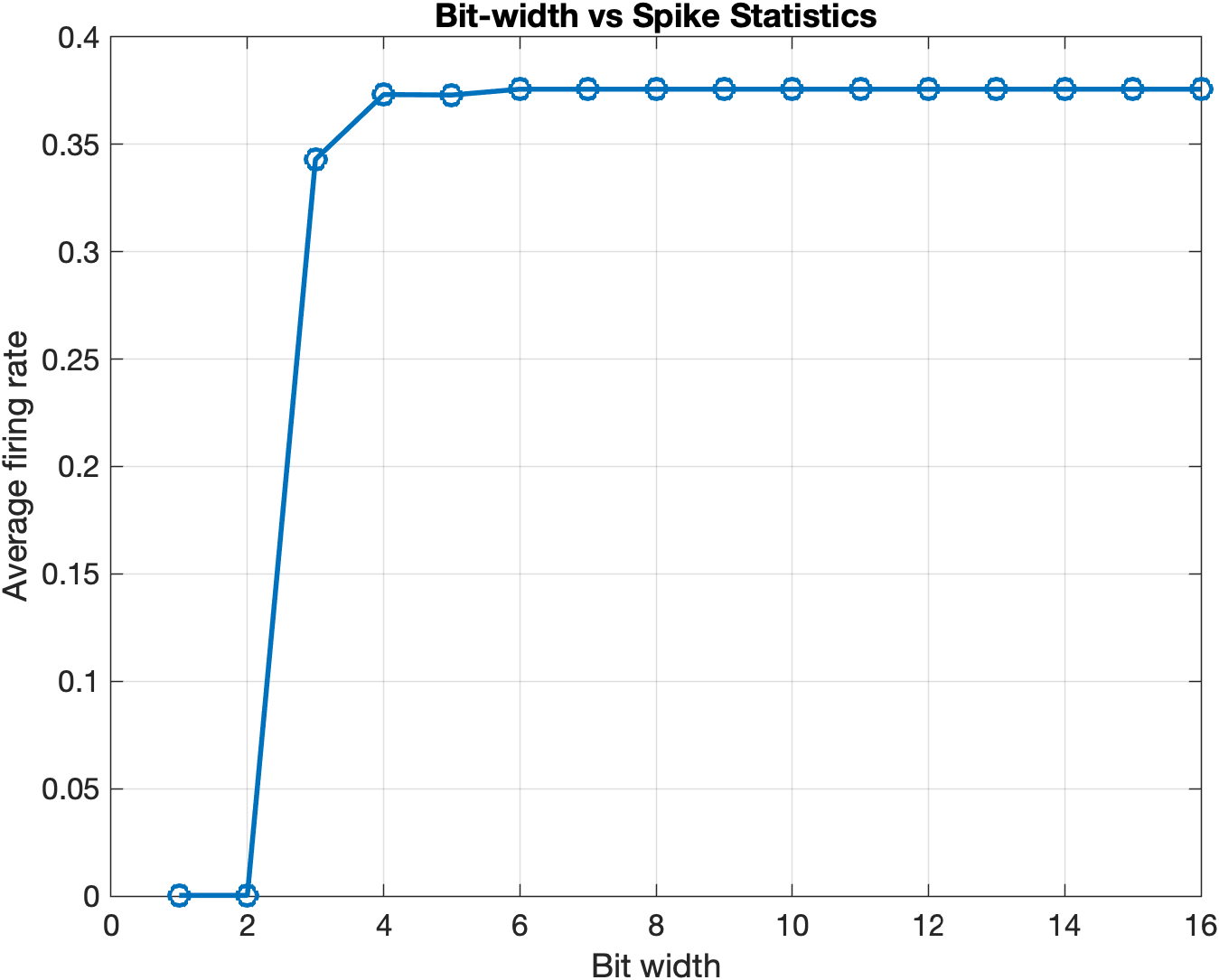

- Empirical results indicate a precision threshold (3–4 bits) necessary for bounded recurrence, linking hardware efficiency with network expressivity.

Integer-State Dynamics of Quantized Spiking Neural Networks: Finite-State Regimes and Hardware Implications

Introduction

"Integer-State Dynamics of Quantized Spiking Neural Networks for Efficient Hardware Acceleration" (2604.01042) establishes a hardware-centric framework for analyzing spiking neural networks (SNNs) under finite-precision integer representation. The novelty lies in reframing SNNs, as implemented on digital platforms, as deterministic discrete-time dynamical systems whose behavior is dictated not merely by their connectome or algorithmic properties but by bit width, overflow/clipping semantics, and hardware-specific arithmetic. Rather than treating quantization as a mere approximation artifact, the framework posits numerical precision as a central design parameter with direct consequences on network recurrence, attractor structure, and functional dynamics. The paper's qualitative and quantitative analyses motivate a co-design perspective for SNN algorithms and hardware, challenging the typical separation between model specification and deployment constraints.

The work formalizes the SNN update rule with strictly integer-valued membrane states, thresholds, and synaptic weights, replacing traditional floating-point arithmetic with hardware-efficient operations such as shift-based leakage and bounded update rules. Formally, the update equation:

$$V_i(t+1) = V_i(t) - \big(V_i(t) \gg k\big) + \sum_j w_{ij}\, S_j(t)$$

together with the finite bit width, confines each neuron to a bounded integer lattice in which every operation maps to low-cost FPGA and ASIC logic. The leak is implemented as a right shift by $k$ bits: for a non-negative state this subtracts the state divided by $2^k$, rounded down, and requires no multiplier, which keeps the model faithful to digital hardware constraints.
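The update rule above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the function name `step`, the firing rule `v >= theta`, and saturating (rather than wrapping) overflow are assumptions, and the unsigned, no-reset setting follows the regime the summary says the experiments focus on.

```python
def step(v, w, bits, k, theta):
    """One synchronous integer update of all membrane states.

    v     : list of membrane states, each in [0, 2**bits - 1]
    w     : w[i][j] is the integer weight from neuron j to neuron i
    bits  : membrane bit width (assumed unsigned and saturating)
    k     : leak shift; (v >> k) is subtracted at every step
    theta : firing threshold (assumed rule: spike when v >= theta)
    """
    v_max = (1 << bits) - 1
    spikes = [1 if vj >= theta else 0 for vj in v]
    v_next = []
    for i, vi in enumerate(v):
        # Accumulate the weights of all presynaptic neurons that spiked.
        drive = sum(wij for wij, sj in zip(w[i], spikes) if sj)
        vi_new = vi - (vi >> k) + drive
        # Assumed overflow semantics: clip to the representable range.
        v_next.append(max(0, min(v_max, vi_new)))
    return v_next, spikes


w = [[0, 3], [2, 0]]
print(step([10, 4], w, bits=4, k=1, theta=8))  # ([5, 4], [1, 0])
```

Swapping the clipping line for a modulo operation would model wrap-around overflow instead, which is one of the representation choices the framework treats as a design parameter.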

The theoretical implication is that an SNN implemented this way is a finite-state, deterministic discrete map. The system state, X(t), evolves under a deterministic mapping F over a finite set of states, so some state must eventually repeat, and from that point on the trajectory repeats exactly. Every trajectory therefore ends in a fixed point or a periodic cycle after a finite transient, with cycle and transient lengths bounded by the size of the state space and shaped by the update rules.
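The finite-state argument above can be checked empirically by iterating the map and recording when a state first recurs. The sketch below is an illustration under assumptions: `find_cycle` and `make_step` are hypothetical helper names, the network rule assumes saturating unsigned states with no reset, and all visited states are stored in a dictionary. For N neurons with B-bit states and spikes derived from the membrane values, there are at most $2^{NB}$ distinct states, so a repeat is guaranteed in principle, but for realistic sizes only a bounded search is practical.

```python
def find_cycle(step_fn, state, max_steps=100_000):
    """Iterate a deterministic map until a state repeats.

    Returns (transient_length, cycle_length), or None if no repeat
    is observed within max_steps.
    """
    seen = {}  # state -> first time index at which it occurred
    for t in range(max_steps):
        key = tuple(state)
        if key in seen:
            return seen[key], t - seen[key]
        seen[key] = t
        state = step_fn(state)
    return None


def make_step(w, bits, k, theta):
    """Build an integer SNN map (assumed: unsigned, saturating, no reset)."""
    v_max = (1 << bits) - 1

    def step_fn(v):
        spikes = [vj >= theta for vj in v]
        return [
            max(0, min(v_max, vi - (vi >> k)
                       + sum(wij for wij, s in zip(row, spikes) if s)))
            for vi, row in zip(v, w)
        ]

    return step_fn


# A single unconnected neuron decays 8 -> 4 -> 2 -> 1 and then stalls at 1,
# because 1 >> 1 == 0: a fixed point reached after a transient of 3 steps.
print(find_cycle(make_step([[0]], bits=4, k=1, theta=16), [8]))  # (3, 1)
```

The example also shows a small consequence of shift-based leakage: the state does not decay all the way to zero, since the shifted value rounds down to nothing once the state is smaller than $2^k$.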

This perspective reframes quantization not as an approximation of the idealized continuous neural model, but as defining the actual attractor and regime structure accessible to hardware SNNs. Critically, the representation-induced boundaries—bit width, clipping, threshold semantics—determine whether the network is quiescent, oscillatory, or supports rich recurrent activity.

Hardware Realization and Co-Design Rationale

Treating integer-state SNNs as primary models rather than proxies for floating-point behavior directly informs the design and optimization of neuromorphic and digital accelerators. The architecture of hardware such as Loihi, SpiNNaker, and custom FPGA pipelines inherently relies on integer or fixed-point processing, shift operations, and bounded accumulators, as outlined in the paper.

This approach foregrounds bit width and update semantics as first-class determinants of both energy/area efficiency and the emergent temporal behaviors. Hardware-aware SNN design thus shifts from post-hoc quantization error analysis to a system-theoretic perspective, enabling the principled exploration of the hardware–algorithm interface.

Empirical Characterization of Finite-State Effects

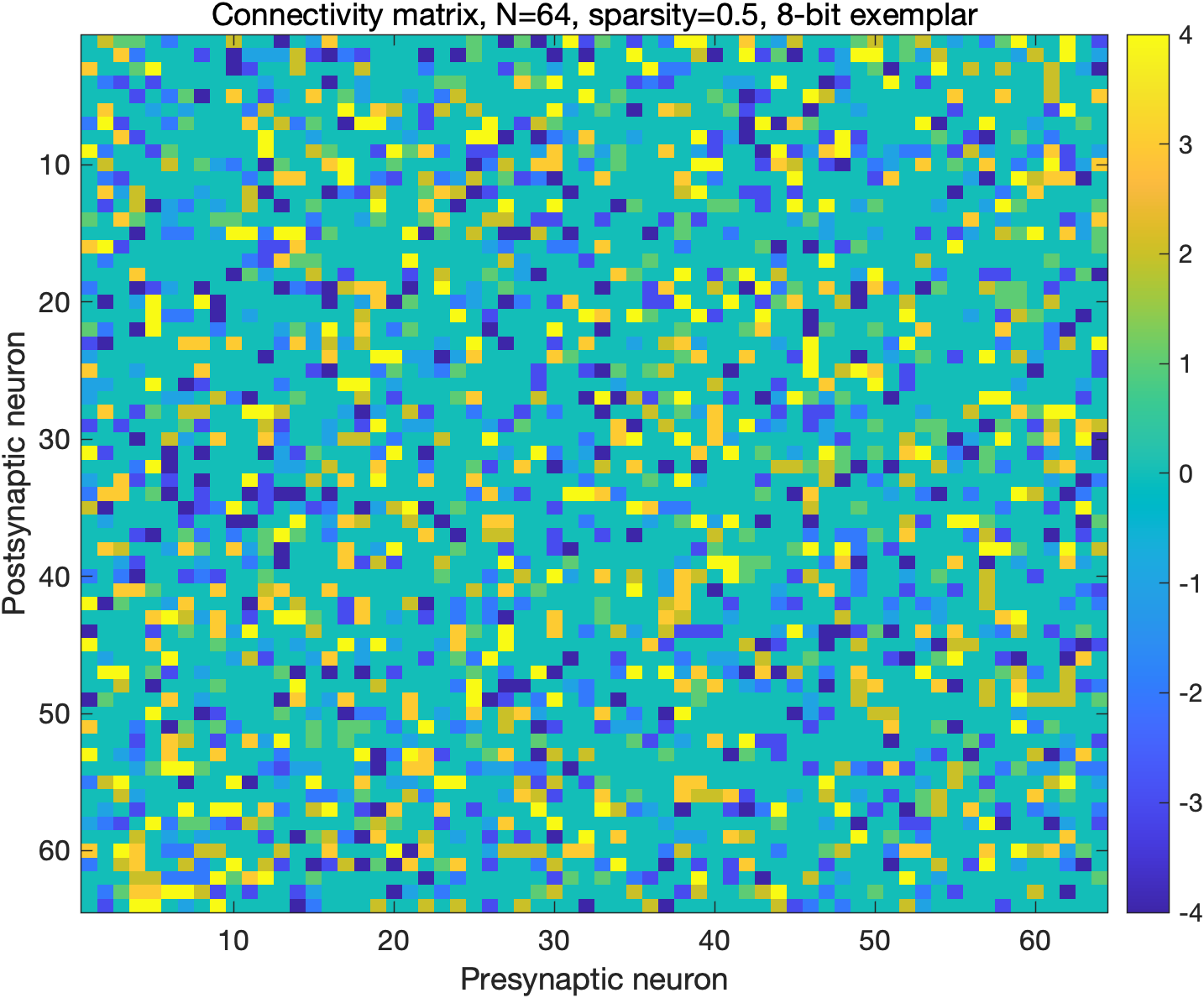

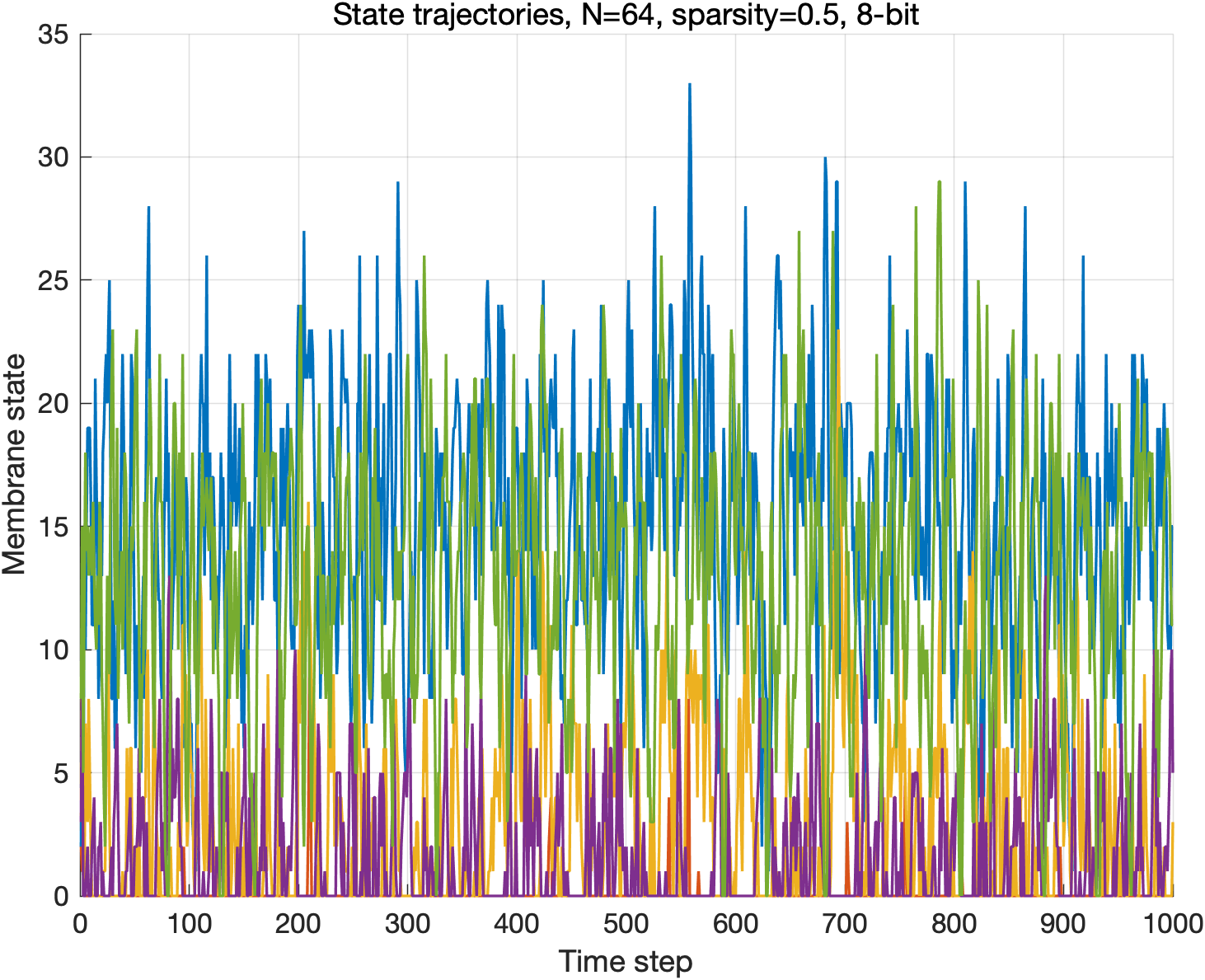

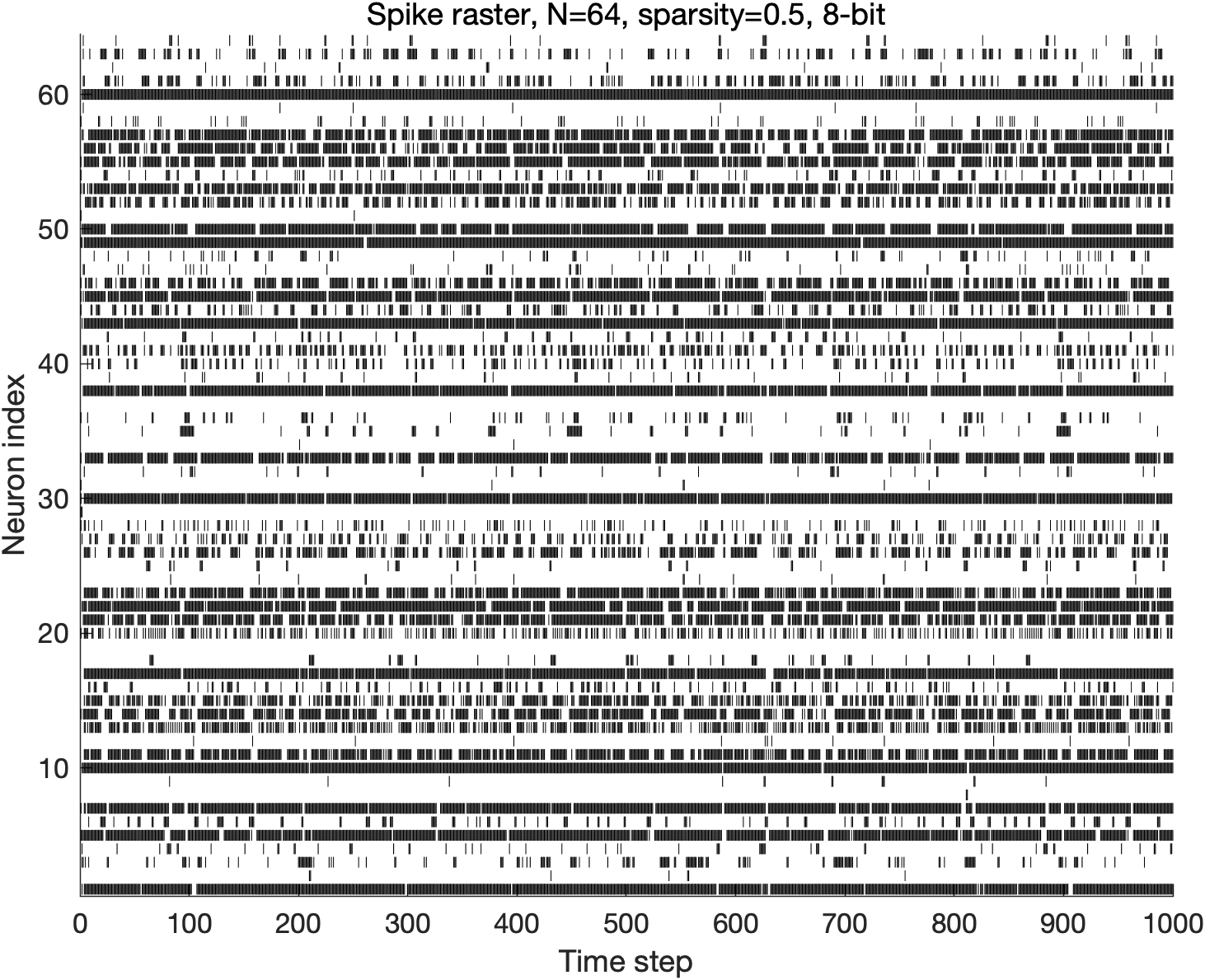

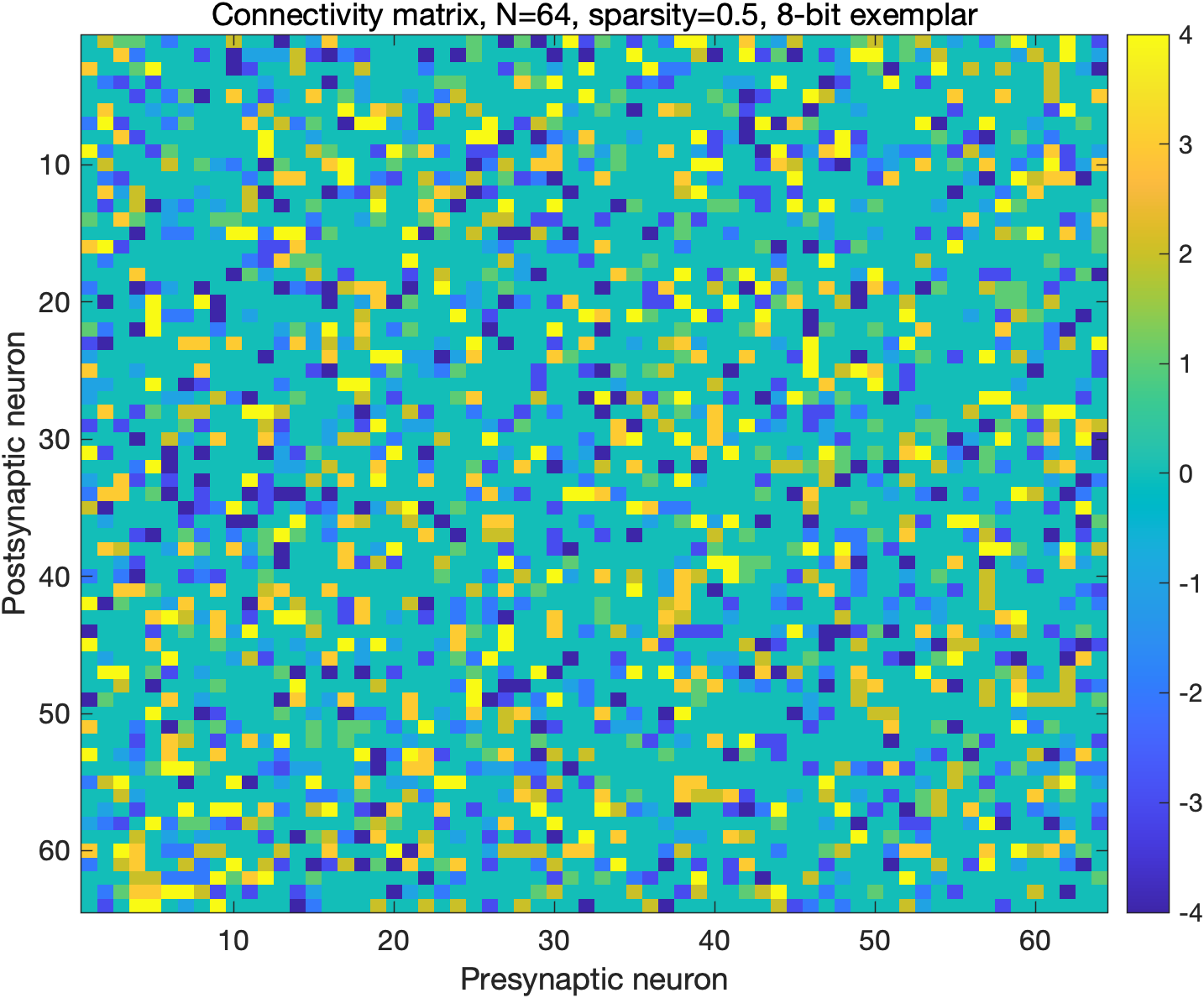

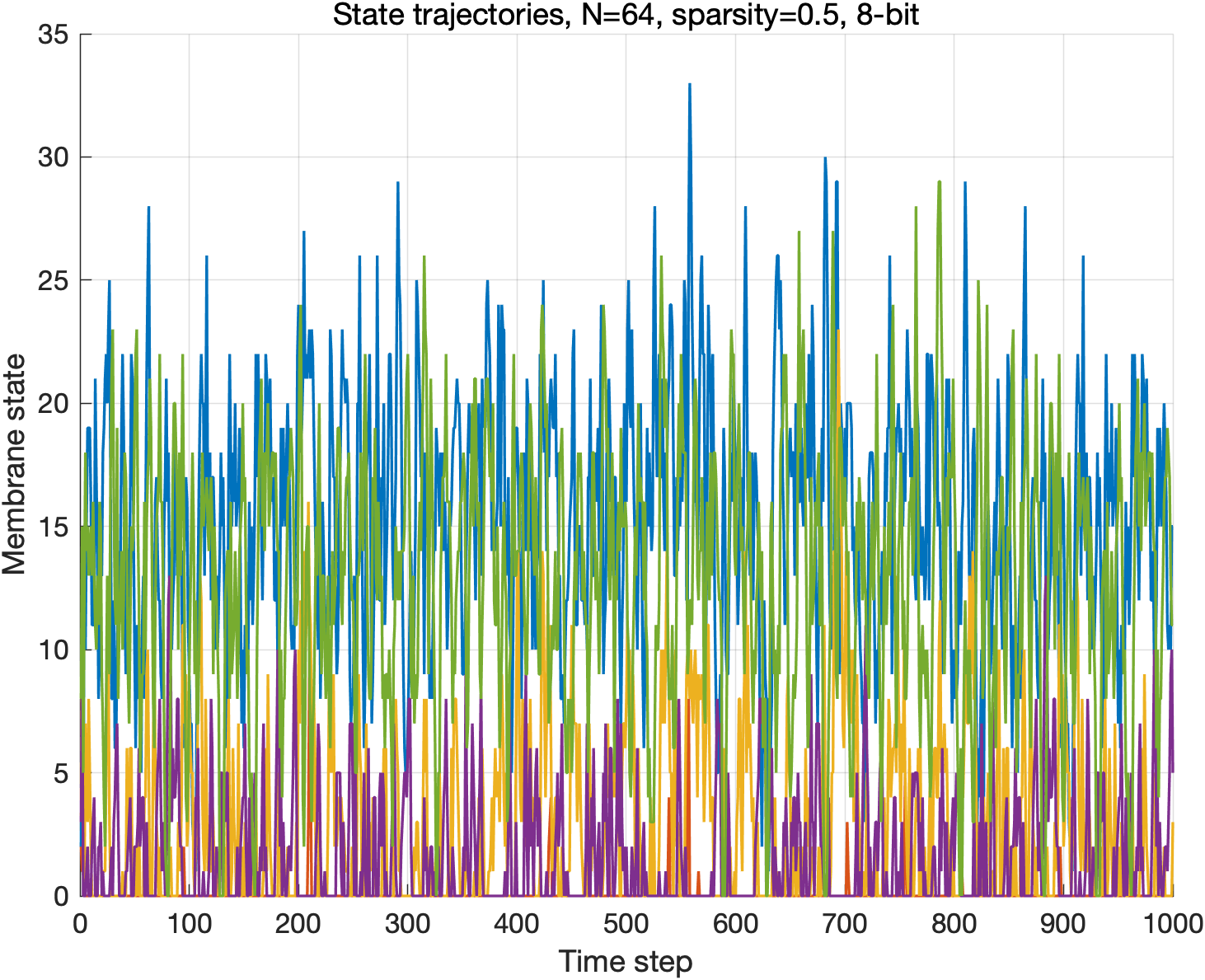

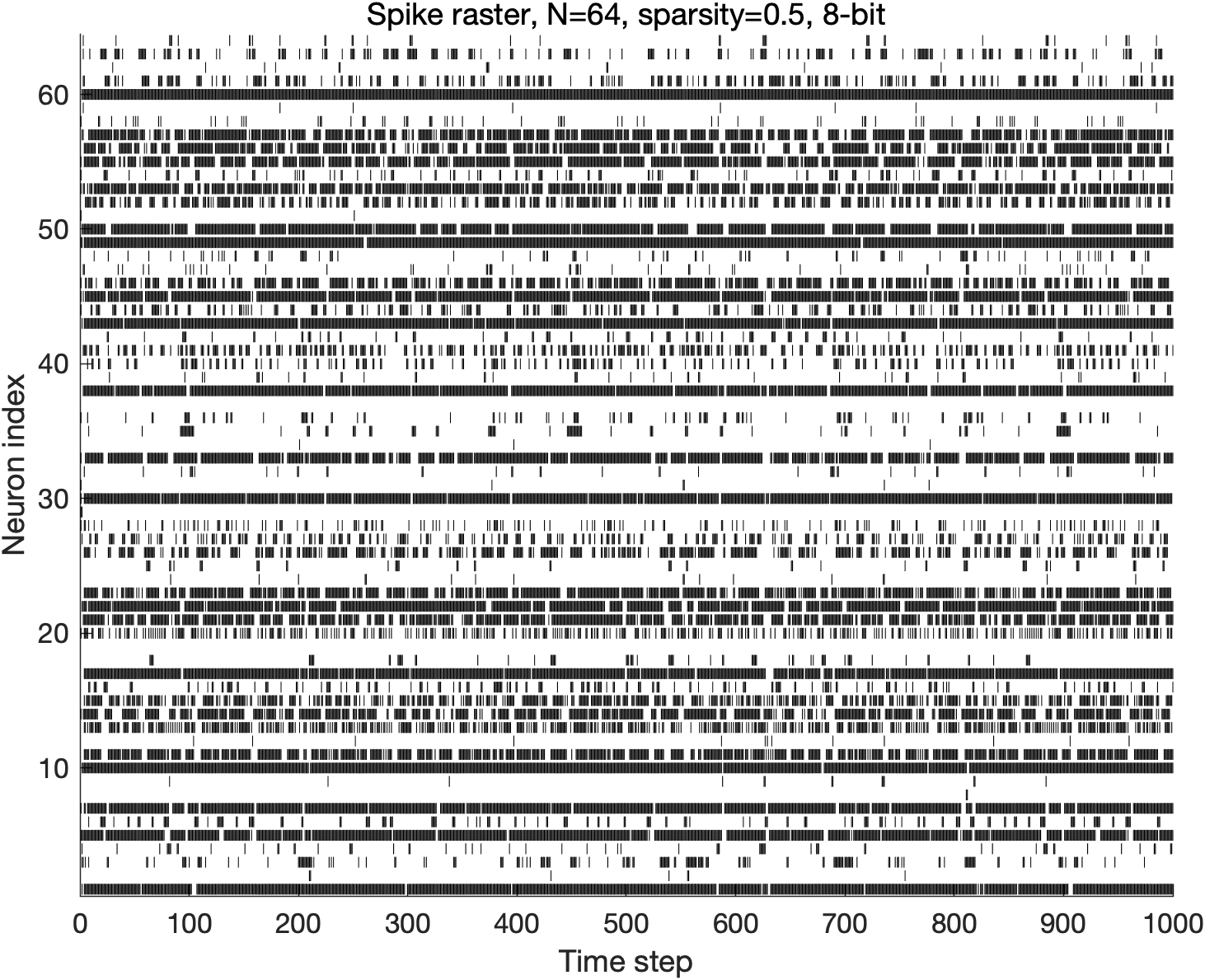

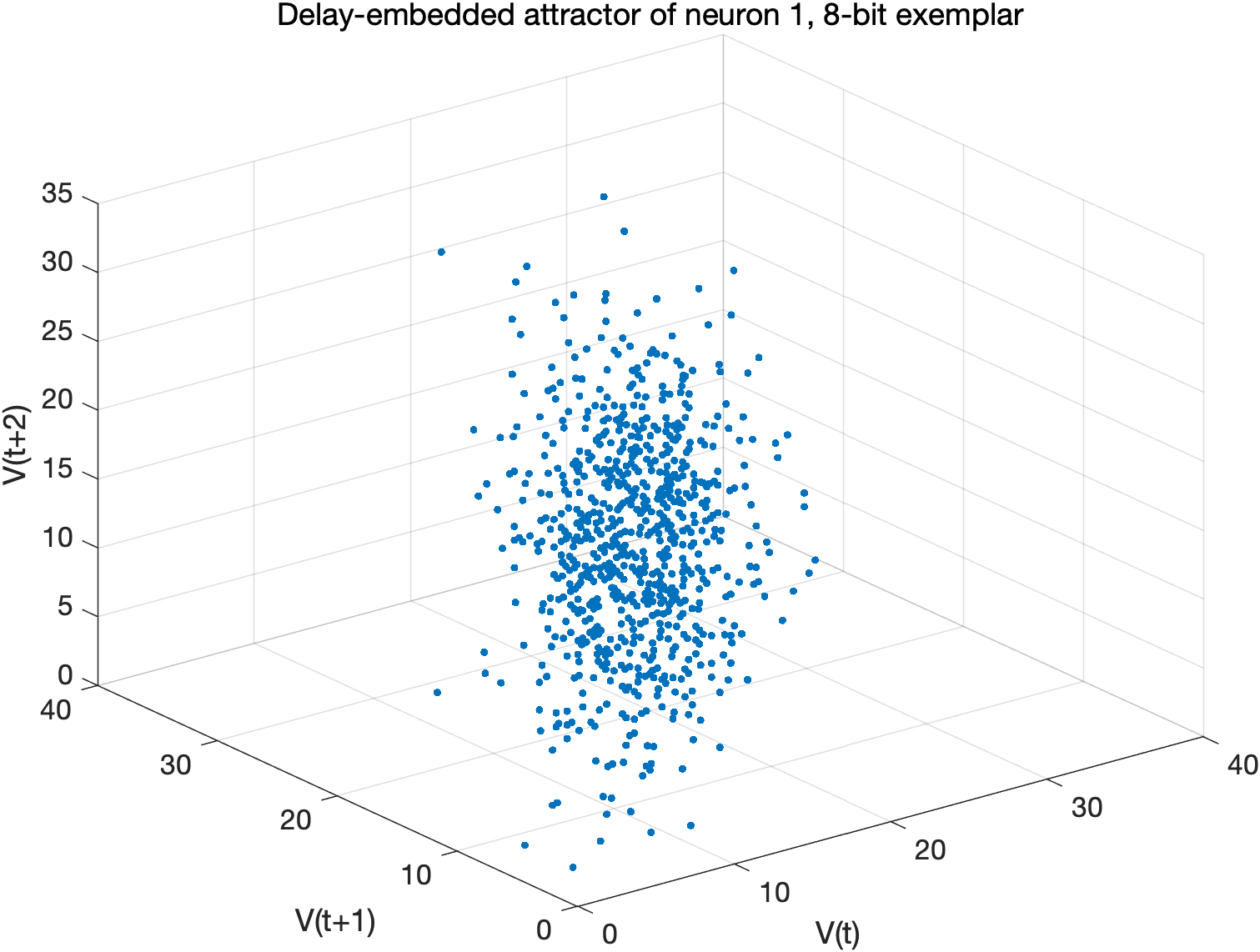

The authors conduct extensive exploratory simulations across networks with varying sizes (N=30–130), connection densities (0.1–0.9), and bit widths (1–16 bits), with a focus on unsigned, no-reset regimes to isolate the influence of representation. A representative N=64, 8-bit, 50% sparse regime is analyzed in detail.

The integer connectivity structure (Figure 1) and its manifestation in membrane dynamics (Figure 2) reveal bounded, nontrivial temporal recurrences that are neither static nor trivially periodic, demonstrating that complex, structured activity is compatible with stringent precision constraints.

Figure 1: Representative sparse integer connectivity matrix for the focused exploratory configuration (N=64, sparsity = 0.5, 8-bit exemplar).

Figure 2: Representative membrane-state trajectories for the focused exploratory configuration (N=64, sparsity = 0.5, 8-bit).

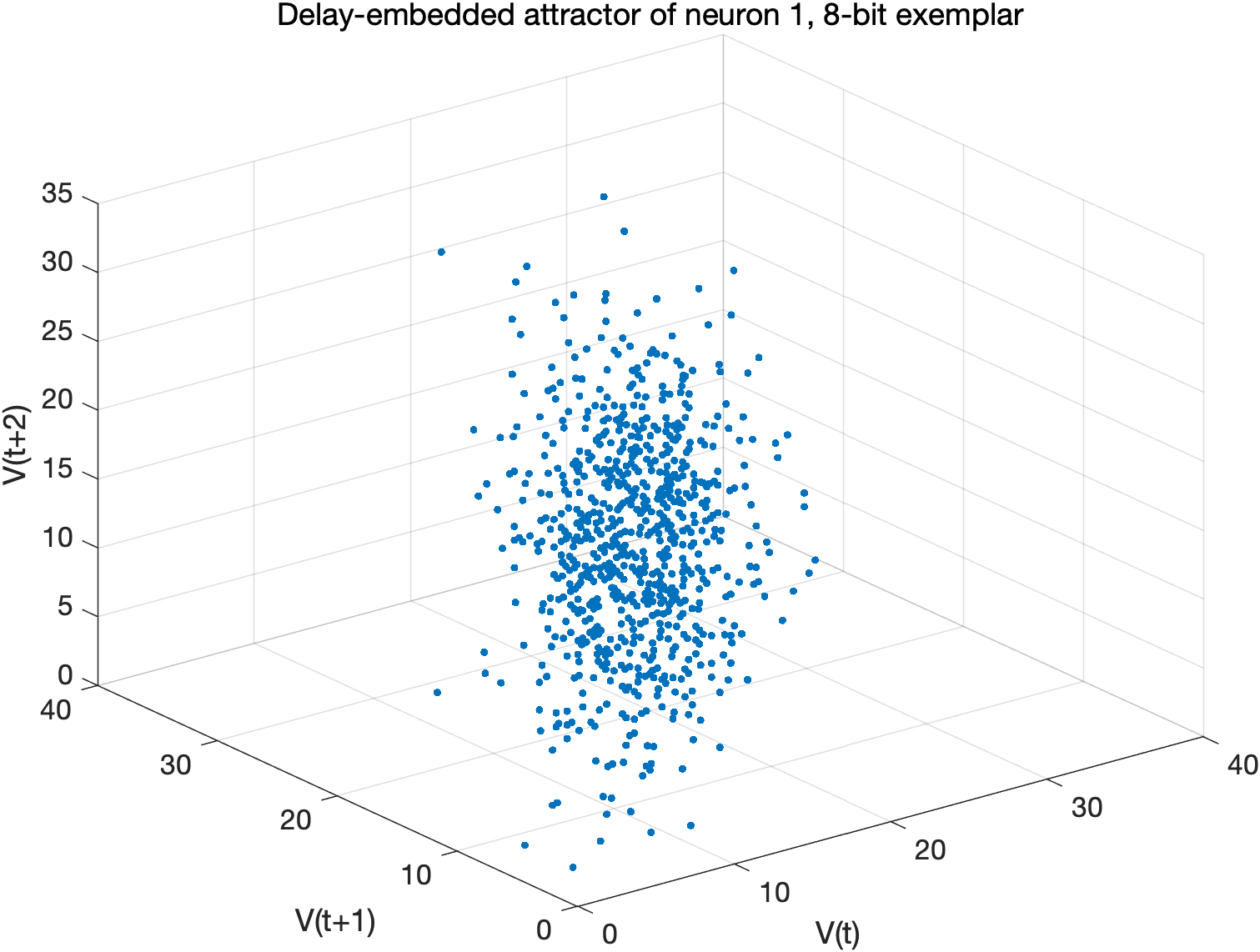

Spike raster plots confirm that the network maintains sustained, structured activity (Figure 3), ruling out collapse to inactivity or pathological saturation. The delay-embedded state visualization (Figure 4) shows bounded recurrence that is distinct from both random-walk behavior and fixed-point degeneration.

Figure 3: Spike raster for the focused exploratory configuration (N=64, sparsity = 0.5, 8-bit).

Figure 4: Delay-embedded state visualization for a representative neuron in the focused 8-bit configuration.
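A delay embedding of the kind shown in Figure 4 can be built from a single neuron's membrane trace by pairing each sample with later samples of the same trace. The sketch below is a generic construction; the helper name `delay_embed`, the embedding dimension, and the lag `tau` are assumptions, since the summary does not state which values the paper uses.

```python
def delay_embed(series, dim=2, tau=1):
    """Return delay vectors (x[t], x[t + tau], ..., x[t + (dim - 1) * tau])."""
    span = (dim - 1) * tau
    return [
        tuple(series[t + d * tau] for d in range(dim))
        for t in range(len(series) - span)
    ]


trace = [8, 4, 2, 1, 1, 1]  # example integer membrane trace
print(delay_embed(trace, dim=2, tau=1))
# [(8, 4), (4, 2), (2, 1), (1, 1), (1, 1)]
```

Plotting the resulting points gives a quick visual check: a fixed point collapses onto a single point, a periodic orbit visits a finite set of points repeatedly, and an unbounded walk drifts out of the plot.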

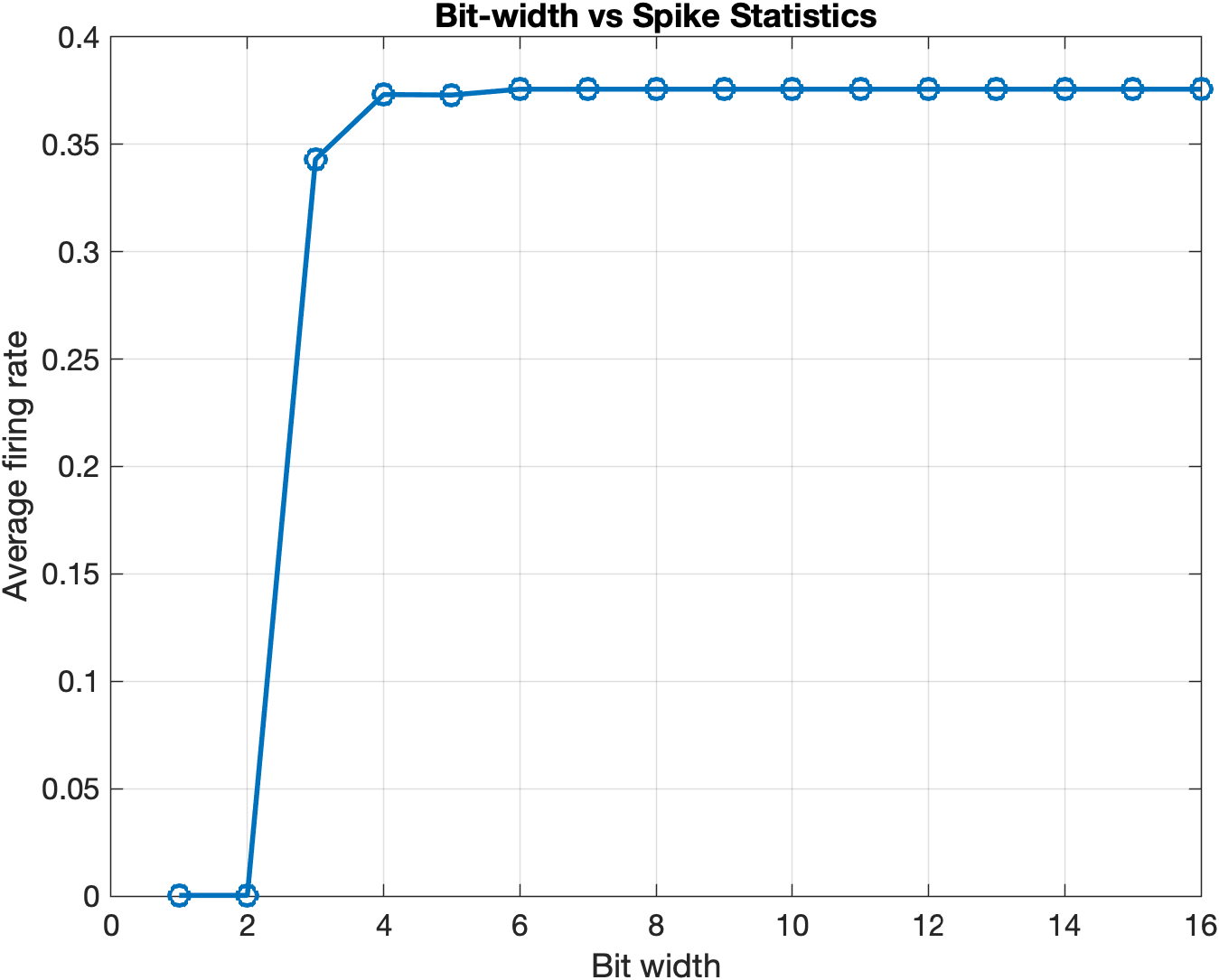

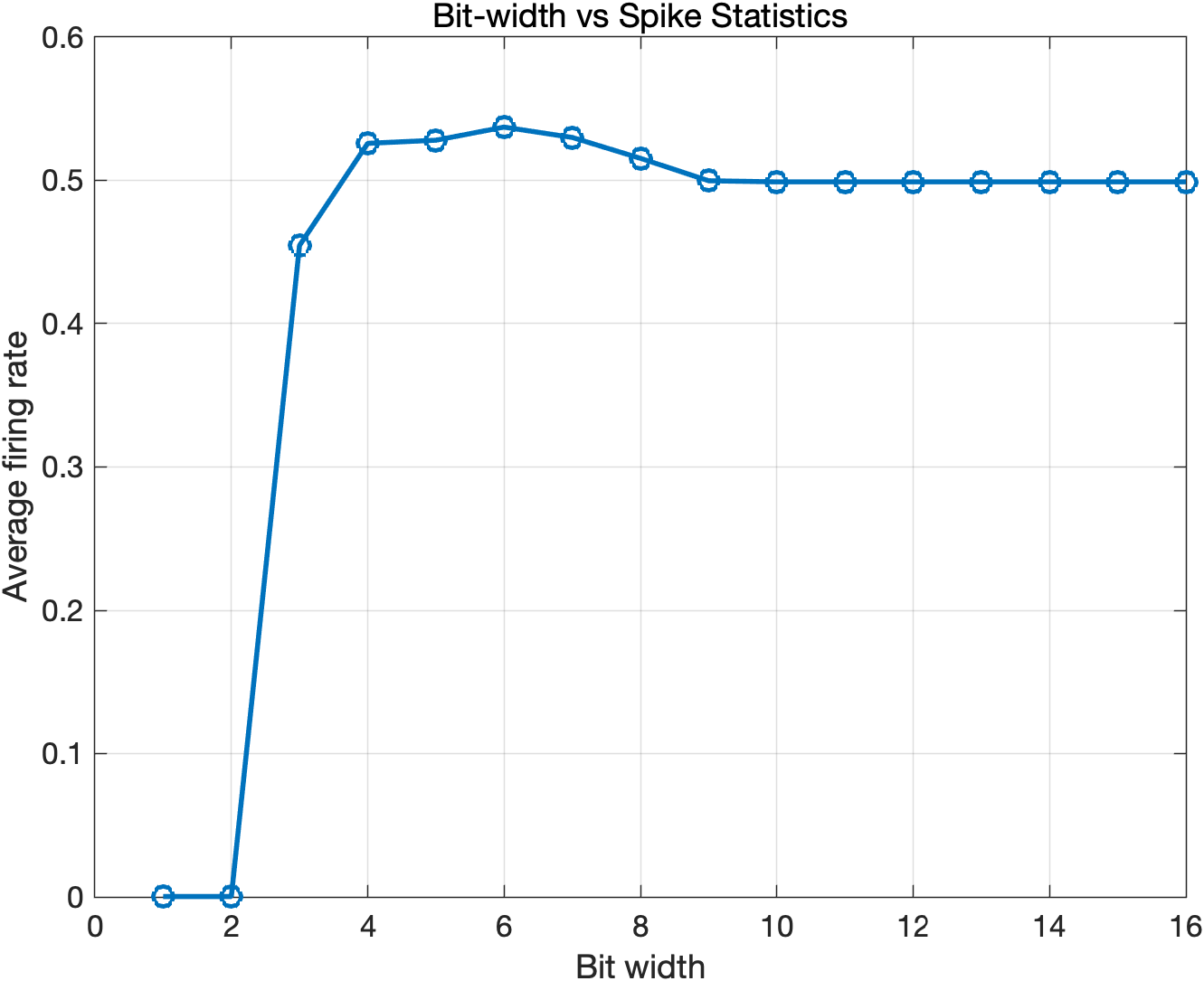

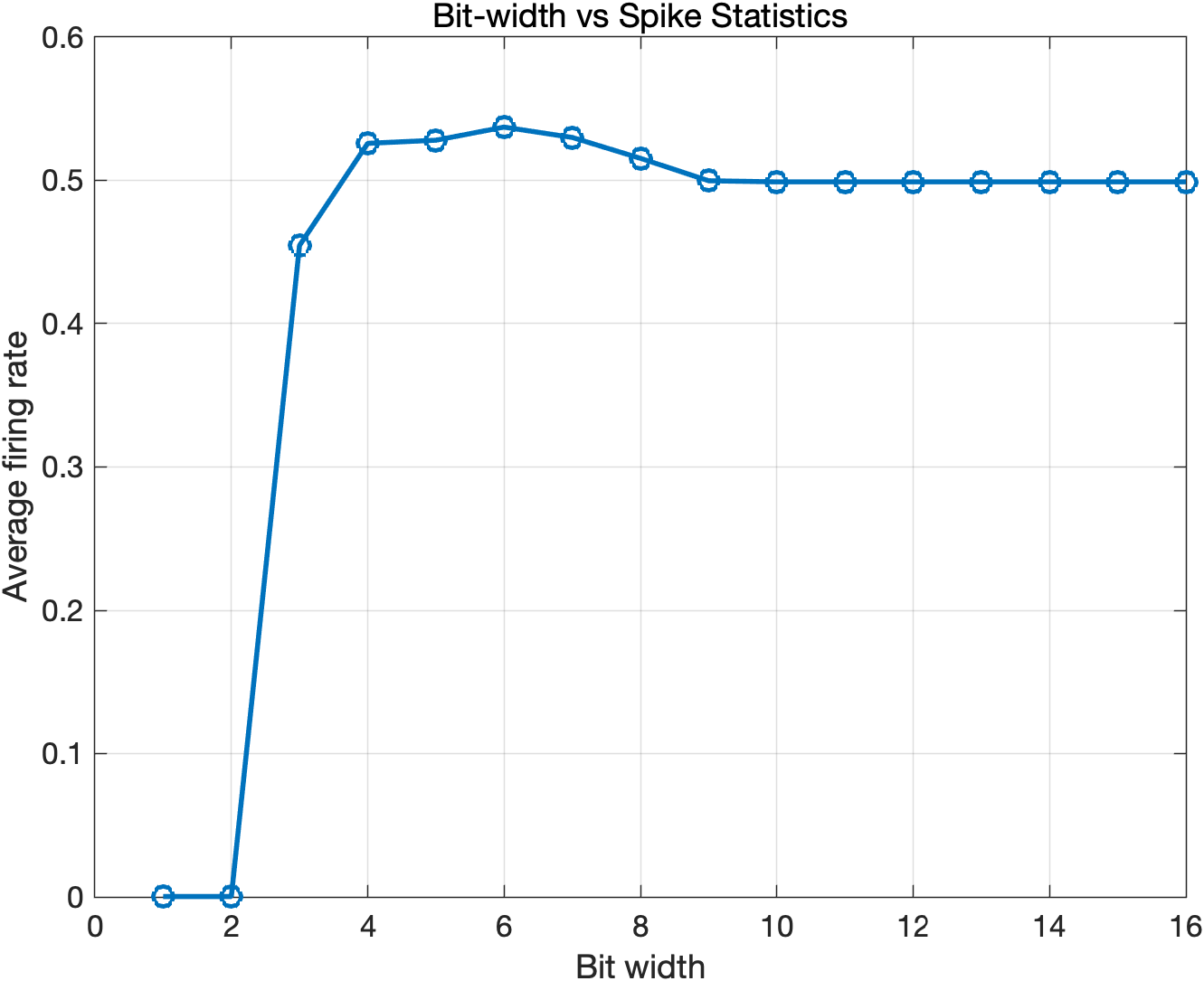

Bit-width sweeps show a sharp transition: below 3 bits the network is quiescent, while at 4 bits and above sustained recurrent activity emerges (Figure 5). The level of the firing-rate plateau and its sensitivity to bit width depend on the leakage and sparsity parameters, so the quantization settings help determine which dynamical regime the network occupies (Figure 6).

Figure 5: Average firing rate as a function of bit width across the global parameter sweep (k=1, sparsity=0.5).

Figure 6: Average firing rate as a function of bit width with higher leakage and lower connectivity (sparsity=0.2).
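The procedure behind a bit-width sweep like those in Figures 5 and 6 can be sketched as follows. This is an illustration of the method only and is not expected to reproduce the paper's curves: the function name `firing_rate`, the fixed threshold `theta=6`, the weight range `w_max=3`, the network size, the random initial state, and saturating overflow are all assumptions chosen to keep the example small.

```python
import random


def firing_rate(bits, n=16, density=0.5, k=1, theta=6, w_max=3,
                steps=200, seed=0):
    """Average spikes per neuron per step for one random integer network.

    Assumed setup: unsigned saturating states, no reset, fixed threshold
    theta, and random weights in [1, w_max] on a fraction `density` of
    the off-diagonal connections.
    """
    rng = random.Random(seed)  # seeded so that runs are repeatable
    v_max = (1 << bits) - 1
    w = [[rng.randint(1, w_max) if i != j and rng.random() < density else 0
          for j in range(n)] for i in range(n)]
    v = [rng.randint(0, v_max) for _ in range(n)]
    total = 0
    for _ in range(steps):
        spikes = [vj >= theta for vj in v]
        total += sum(spikes)
        v = [max(0, min(v_max, vi - (vi >> k)
                        + sum(wij for wij, s in zip(row, spikes) if s)))
             for vi, row in zip(v, w)]
    return total / (steps * n)


for bits in range(1, 9):
    print(bits, firing_rate(bits))
```

With these assumed values, any bit width whose largest representable state $2^B - 1$ lies below `theta` cannot spike at all, so 1 and 2 bits are silent by construction. That is one simple mechanism by which a precision threshold can arise; whether it is the mechanism at work in the paper's experiments is not established here.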

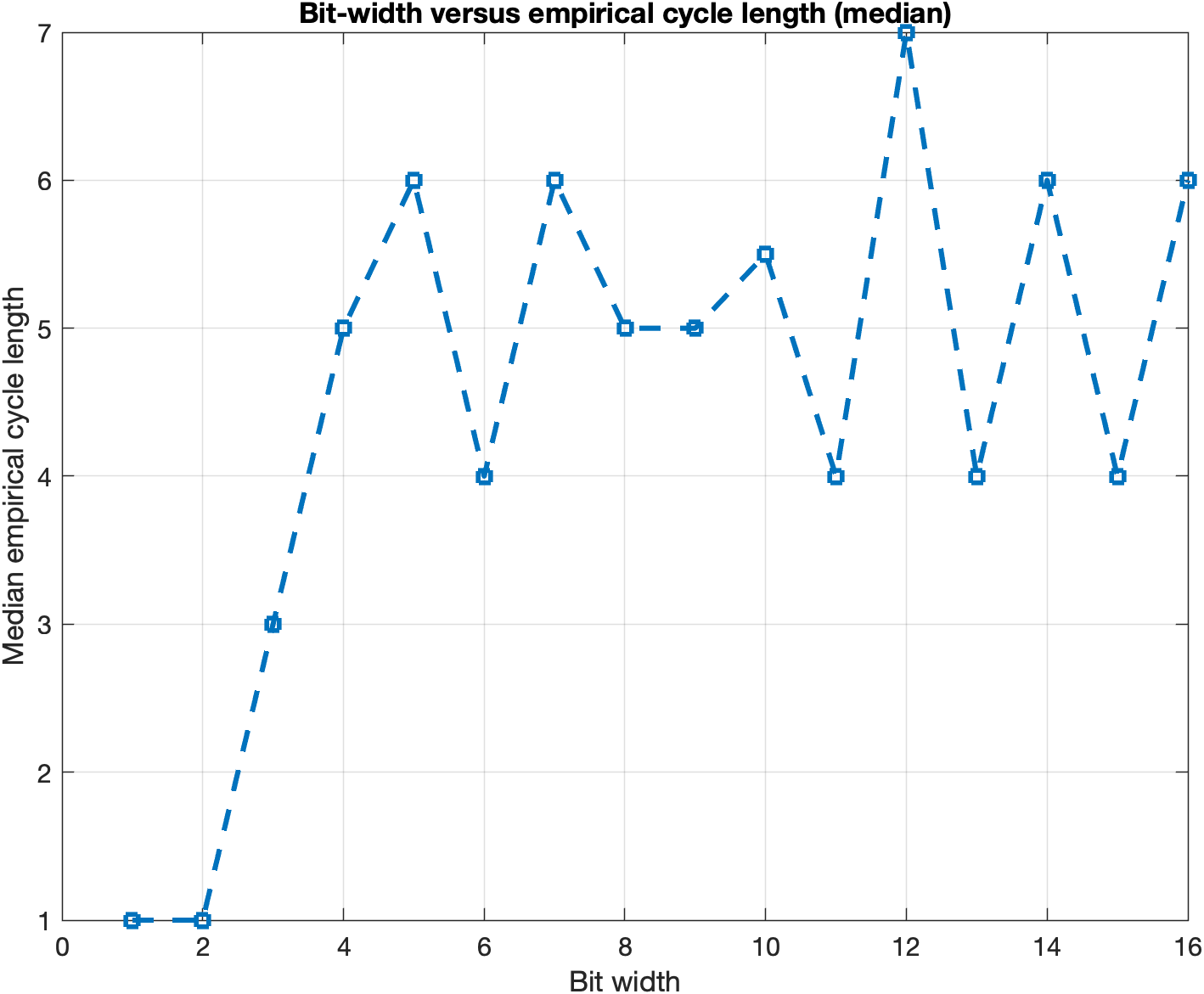

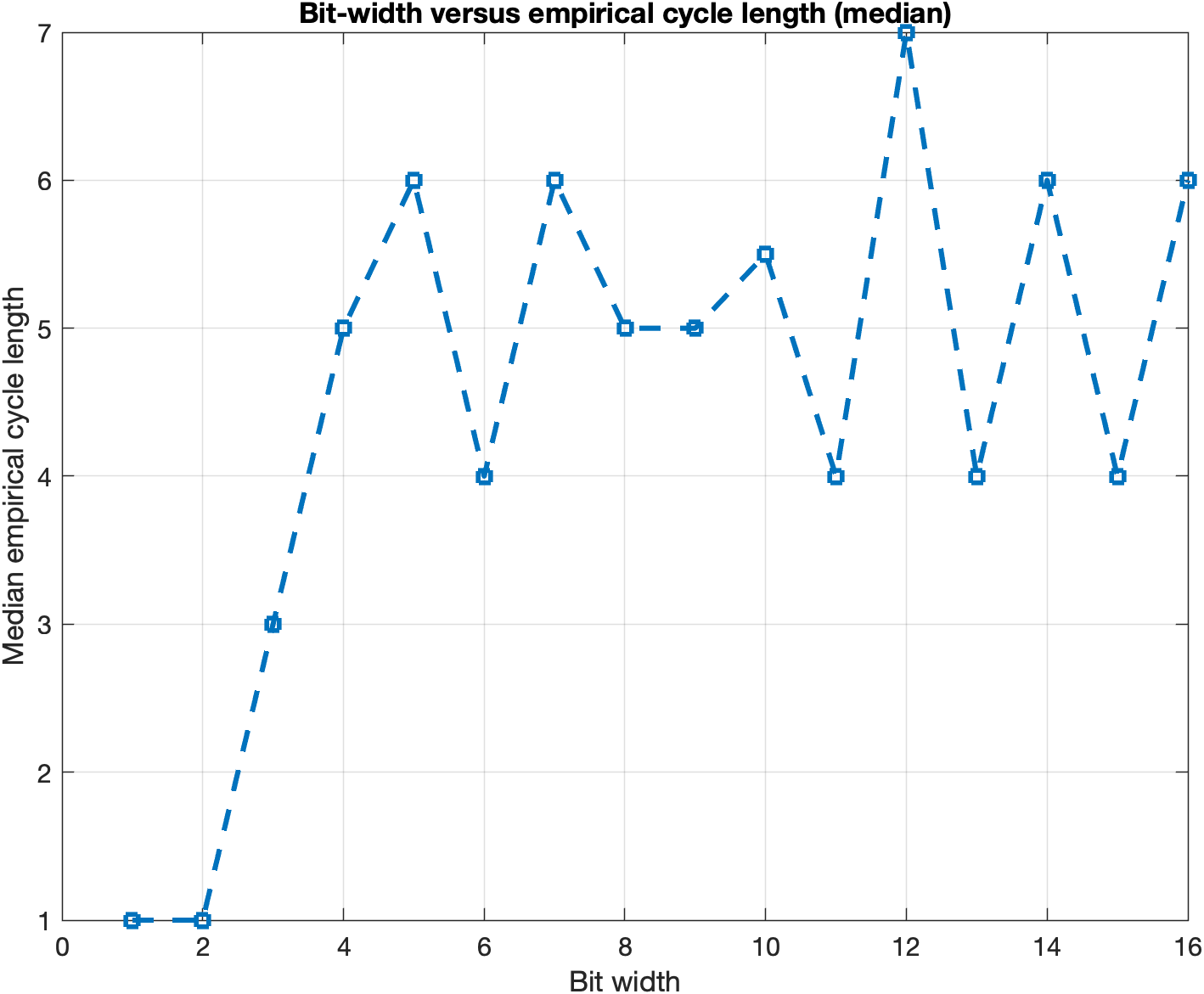

Cycle-length analysis (Figure 7) confirms that bounded recurrence is an intrinsic property across precision regimes, not a corner case of extremely coarse quantization.

Figure 7: Median empirical cycle length as a function of bit width; bounded recurrence persists across precision regimes.

Key Results and Claims

- Quantized, hardware-like SNNs can exhibit stable, nontrivial, and recurrent dynamics without recourse to floating-point emulation, provided representation parameters are chosen judiciously.

- There exists a strong, empirical precision threshold for sustained neural activity, typically around 3–4 bits; operating below this threshold induces network silence.

- Dynamical regimes (quiescence, recurrence, oscillation) are functions not only of connectivity/topology but fundamentally of hardware-level bit width, clipping behavior, and leakage semantics.

- Precision (bit width) therefore acts as a powerful control variable—on par with topology or learning rule—in shaping the functional regime of SNNs.

- Empirical results support the thesis that finite-state recurrence is not merely an artifact but can support functionally expressive, bounded dynamics.

Theoretical and Practical Implications

The integer-state framework suggests that future SNN algorithm development, training regimes, and application design should be intimately coupled with hardware parameterization. This co-design perspective requires analyzing and optimizing not only weight initialization and architectural macroparameters but also fine-grained hardware semantics: bit width, overflow/clipping, reset conditions.

The results invite extensions such as:

- Formal characterization of attractor and transient statistics as functions of architecture and precision.

- Development of training algorithms (e.g., precision-aware surrogate gradients) explicitly targeting the bounded integer state space.

- Empirical studies tying quantization-induced recurrence to functional downstream tasks, e.g., classification, control, or generative modeling.

- End-to-end FPGA/ASIC implementation, benchmarking actual energy/performance trade-offs as modulated by integer-state rules.

Conclusion

This work provides a formal and empirical foundation for analyzing SNNs as discrete, hardware-faithful finite-state systems. By demonstrating that quantization does not simply degrade network behavior but reorganizes the states and attractors the network can reach, the paper motivates a hardware-aware shift in SNN theory, modeling, and implementation. Integer-state analysis emerges as a useful analytical lens for principled, efficient, and robust deployment of spike-based computation on digital hardware. Future research directions include formal attractor analysis, precision-bounded training strategies, and real-world hardware-algorithm integration, consolidating the role of numerical precision as a primary design axis in neuromorphic computing.