- The paper presents an interactive 4D scene generation system that integrates geometry-aware motion alignment and a hash-based global motion field for realistic environmental dynamics.

- It leverages bidirectional motion propagation to ensure temporal consistency and rapid scene expansion with stable, coherent motion fields.

- Experimental results demonstrate superior realism and efficiency compared to video-driven baselines, achieving full 4D scene synthesis in just 12 seconds.

LivingWorld: Interactive 4D World Generation with Environmental Dynamics

Motivation and Context

Contemporary progress in 3D scene synthesis from minimal input has produced compelling explorable environments; however, the overwhelming focus remains on static geometry and appearance. Environmental dynamics (phenomena such as fluid flow, smoke, or clouds) are critical for realism yet remain unaddressed in scene-level representations, especially under interactive constraints. The absence of temporally coherent, physically plausible large-scale dynamics limits the applicability of these systems in simulation and embodied intelligence.

"LivingWorld: Interactive 4D World Generation with Environmental Dynamics" (2604.01641) introduces an interactive 4D scene generation paradigm, extending 3D scene synthesis to fully dynamic worlds with explicit, globally coherent environmental motion, constructed efficiently from as little as a single image.

Figure 1: LivingWorld generates a dynamic 4D world with environmental dynamics from a single image. The geometry-aware alignment module maintains globally coherent scene dynamics as the world progressively expands.

Methodology

Scene Expansion and Representation

LivingWorld operates by initiating a 3D scene from an image and incrementally expanding it via user-guided camera motion and text prompts. The framework integrates scene expansion with motion field construction, supporting real-time interaction: each expansion cycle involves motion cue extraction, alignment, global motion field update, and bidirectional motion propagation. Critically, geometry and motion are co-evolved, avoiding inconsistencies arising from conventional video-driven optimization regimes.

Geometry-Aware Motion Alignment

Motion is estimated per generated view using a 2D Eulerian flow predictor, with user-guided masks and orientation cues specifying the region of interest. These dense flows are lifted to 3D scene-flow vectors using predicted scene depth. Because each view is predicted independently, the lifted cues are ambiguous in orientation and scale across views. A geometry-aware alignment module resolves these inconsistencies by fitting a global similarity transformation (rotation and scale) with the Kabsch algorithm and least-squares refinement over matched correspondences. This approach rapidly fuses local, view-dependent motion cues into a globally consistent 3D motion structure, efficiently supporting interactive scene expansion.
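As a rough illustration of the rotation-and-scale fit, the sketch below applies a Kabsch-style SVD solve to matched 3D flow vectors. It is not the paper's implementation: the function name `fit_rotation_scale`, the absence of a translation term (the inputs are treated as velocity vectors rather than points), and the closed-form scale are all assumptions made for the example.

```python
import numpy as np

def fit_rotation_scale(src, dst):
    """Least-squares scale s and rotation R such that s * R @ src[i] ~= dst[i].

    src, dst: (N, 3) arrays of matched 3D flow vectors (hypothetical inputs).
    No translation is estimated because the inputs are directions, not points.
    """
    H = src.T @ dst                      # 3x3 cross-covariance of the matches
    U, S, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that R is a proper rotation (det = +1).
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    D = np.diag([1.0, 1.0, d])
    R = Vt.T @ D @ U.T
    # Closed-form optimal scale for the rotation found above.
    s = np.sum(S * np.diag(D)) / np.sum(src ** 2)
    return s, R
```

Given new flow vectors `v` from a freshly generated view, `s * v @ R.T` would express them in the frame of the existing scene; any further refinement over correspondences is left out of this sketch.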

Hash-Based Global Motion Field

Rather than optimizing scene motion per individual primitive, LivingWorld parameterizes environmental dynamics as a continuous spatial velocity field F_θ: ℝ³ → ℝ³, implemented via a multi-resolution spatial hash-grid encoding coupled with an MLP. Sparse, globally aligned scene flows provide supervisory signals for regression, resulting in a compact and expressive representation. This architecture supports efficient per-location velocity queries, resolving both spatial sparsity and runtime complexity issues that affect traditional deformation- or trajectory-based frameworks. The result is stable, coherent motion propagation throughout dynamically expanding scenes with negligible computational overhead.
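To make the representation concrete, here is a minimal NumPy sketch of a multi-resolution hash-grid encoding feeding a small MLP that outputs a 3D velocity. Everything specific in it is an assumption for illustration: the class name `HashVelocityField`, the level count, table size, hash primes, and layer widths are not taken from the paper, and the weights are random because the regression onto aligned scene flows is omitted.

```python
import numpy as np

# Large primes for spatial hashing (an illustrative choice, not from the paper).
PRIMES = np.array([1, 2654435761, 805459861], dtype=np.uint64)

class HashVelocityField:
    """Untrained sketch of a velocity field: hash-grid encoding followed by an MLP."""

    def __init__(self, levels=4, table_size=2 ** 12, feat_dim=2,
                 base_res=4, growth=2.0, hidden=32, seed=0):
        rng = np.random.default_rng(seed)
        # Grid resolution per level, e.g. 4, 8, 16, 32.
        self.res = [int(base_res * growth ** l) for l in range(levels)]
        self.tables = rng.uniform(-1e-2, 1e-2, (levels, table_size, feat_dim))
        in_dim = levels * feat_dim
        self.W1 = rng.normal(0.0, 0.1, (in_dim, hidden))
        self.b1 = np.zeros(hidden)
        self.W2 = rng.normal(0.0, 0.1, (hidden, 3))
        self.b2 = np.zeros(3)

    def encode(self, x):
        """x: (N, 3) points in [0, 1]^3 -> (N, levels * feat_dim) features."""
        n_slots = np.uint64(self.tables.shape[1])
        feats = []
        for table, res in zip(self.tables, self.res):
            p = x * res
            p0 = np.floor(p).astype(np.int64)   # lower corner of the cell
            w = p - p0                          # fractional position in the cell
            out = 0.0
            for corner in np.ndindex(2, 2, 2):
                c = np.array(corner)
                idx = (p0 + c).astype(np.uint64)
                h = idx * PRIMES                # uint64 arithmetic wraps around
                h = (h[:, 0] ^ h[:, 1] ^ h[:, 2]) % n_slots
                # Trilinear weight of this corner.
                weight = np.prod(np.where(c == 1, w, 1.0 - w), axis=1)
                out = out + weight[:, None] * table[h.astype(np.int64)]
            feats.append(out)
        return np.concatenate(feats, axis=1)

    def __call__(self, x):
        """Query velocities at x: (N, 3) -> (N, 3)."""
        hidden = np.maximum(self.encode(x) @ self.W1 + self.b1, 0.0)  # ReLU
        return hidden @ self.W2 + self.b2
```

A query such as `HashVelocityField()(points)` costs a few table lookups and two small matrix products per point, which is the property that makes per-location velocity queries cheap. In practice the tables and MLP weights would be fitted to the aligned scene flows with an autodiff framework.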

Bidirectional Motion Propagation

Single-pass motion integration tends to propagate density out of reconstructed regions, creating gaps and artifacts. LivingWorld addresses this by integrating motion both forward and backward in time for each Gaussian primitive, using symmetric trajectories and time-dependent blending to ensure coverage and reversibility. Only Gaussians in designated dynamic regions are advected; static scene elements remain fixed, further reducing unnecessary compute and mitigating artifact introduction.
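One plausible reading of this scheme, sketched below, keeps two copies of each dynamic point: one advected forward from the start of a clip and one advected backward from its end, blended with weights that vary linearly in time. The Euler integrator, the linear blend, and the names `advect` and `bidirectional_positions` are assumptions for illustration; the paper's exact trajectory and blending functions are not reproduced here.

```python
import numpy as np

def advect(x, velocity_fn, n_steps, dt):
    """Euler-integrate positions x (N, 3) through a steady velocity field."""
    x = x.copy()
    for _ in range(n_steps):
        x = x + dt * velocity_fn(x)
    return x

def bidirectional_positions(x0, dynamic_mask, velocity_fn, k, n_frames, dt=1.0):
    """Forward and backward copies of the points at frame k of n_frames.

    Returns (fwd, bwd, w_fwd, w_bwd). Rendering would draw both copies with
    opacities scaled by w_fwd and w_bwd. Static points are never moved.
    """
    x0 = np.asarray(x0, dtype=float)
    fwd, bwd = x0.copy(), x0.copy()
    xd = x0[dynamic_mask]
    # Forward copy: k steps along the flow from the rest positions.
    fwd[dynamic_mask] = advect(xd, velocity_fn, k, dt)
    # Backward copy: (n_frames - k) steps against the flow.
    bwd[dynamic_mask] = advect(xd, velocity_fn, n_frames - k, -dt)
    alpha = k / n_frames
    return fwd, bwd, 1.0 - alpha, alpha
```

At frame 0 only the forward copy is visible and sits at the rest positions; at the last frame only the backward copy is visible, also at the rest positions. Under these assumptions the sequence starts and ends on the reconstructed geometry, and regions vacated by the forward copy are covered by the backward one.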

Experimental Evaluation

Comparative Analysis

LivingWorld is benchmarked against leading video generation baselines (Veo 3.1, CogVideoX, and Tora) and the explicit 4D scene construction paradigm (4DGS-Cinemagraphy). Notably, the proposed approach achieves the highest scores in both environmental physics realism and photorealism metrics, and generates full 4D scenes in just 12 seconds, a significant improvement over alternative frameworks that require several minutes or more. Importantly, the method avoids expensive video-based refinement while maintaining temporal and geometric consistency.

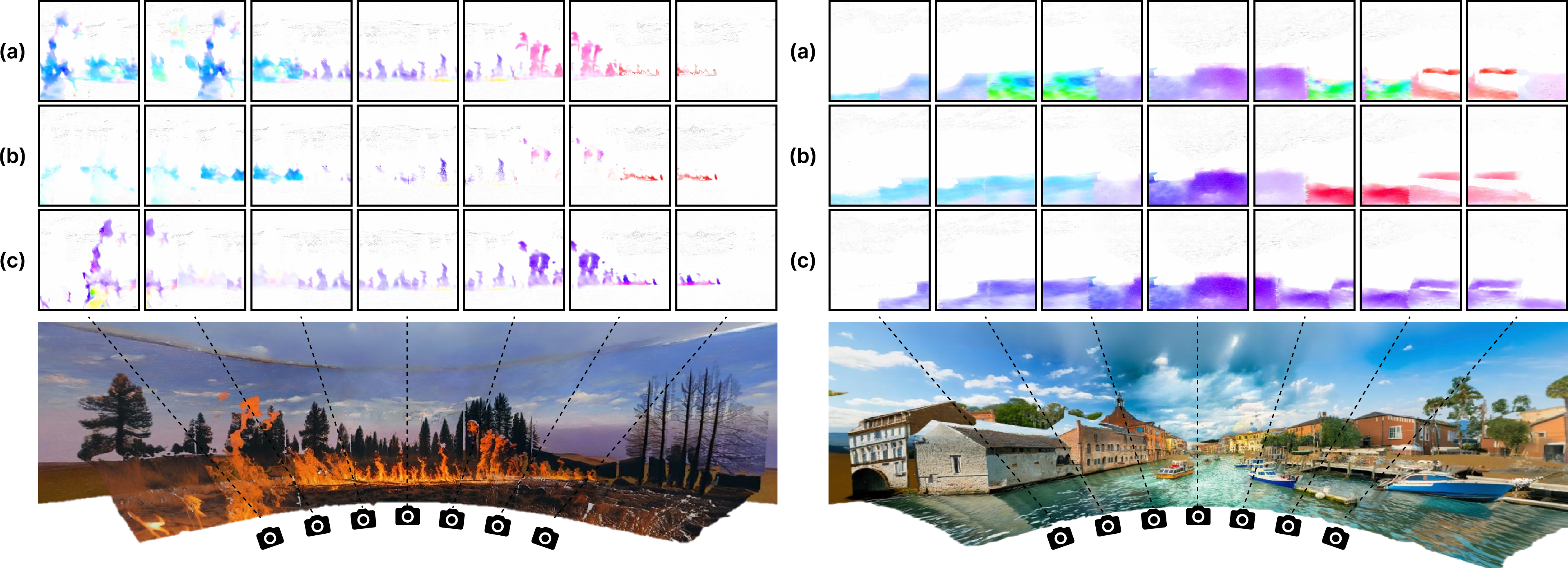

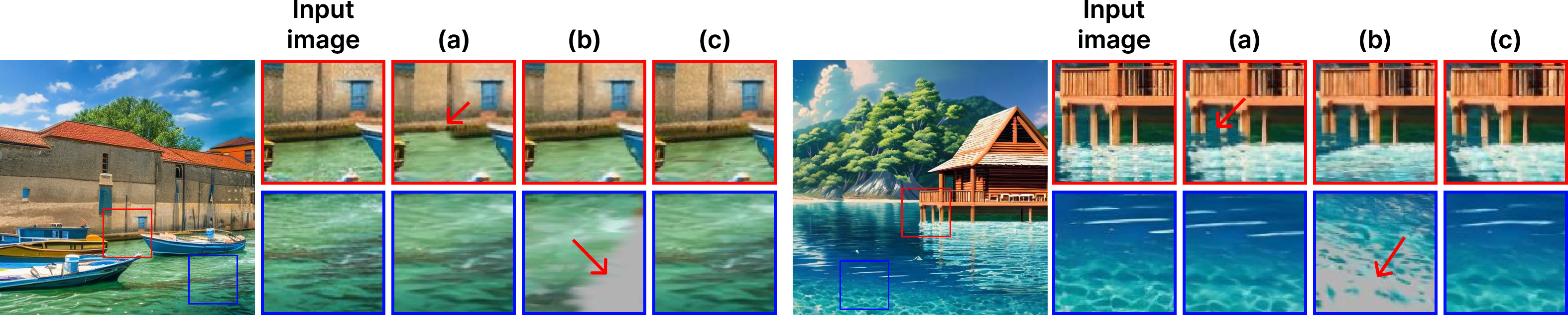

Figure 2: Qualitative comparison under camera movement: LivingWorld demonstrates high motion consistency and viewpoint stability absent in video-based and prior 4D scene baselines.

Qualitative analysis demonstrates that video-based baselines yield either static or temporally inconsistent dynamics when subject to viewpoint changes, with major geometric distortions. 4DGS-Cinemagraphy can enforce local coherence but fails at global consistency due to optimization restrictions. LivingWorld, in contrast, produces stable motion fields that preserve geometry and offer instant user feedback throughout exploration.

Figure 3: Qualitative comparison of motion consistency—LivingWorld resolves the instability evident in naive or optimization-only counterparts, maintaining coherent scene-wide environmental dynamics.

Ablations

The ablation studies confirm that both the hash-based motion field and bidirectional propagation are essential: omitting the hash network leads to unchecked magnitude accumulation and artifacts; forward-only integration results in coverage loss and instability at dynamic boundaries. The geometry-aware motion alignment module is shown to not only substantially improve motion consistency metrics but also reduce computational costs by several orders of magnitude relative to optimization-based schemes.

Figure 4: Ablation study on motion field design; only the full LivingWorld scheme achieves artifact-free, smooth environmental motion.

Object-Centric Motion Integration

While LivingWorld targets environmental (Eulerian) dynamics, the architecture admits localized, object-centric motion overlays (e.g., flag fluttering, branch swaying) with seamless integration, without disrupting the scene-level motion field.

Figure 5: Integration of environmental dynamics with localized object-centric motion, confirming extensibility of the framework.

Implications and Future Directions

LivingWorld represents an architectural convergence of scene generation, real-time user interaction, and physically plausible dynamics. The explicit modeling of global scene-level motion fields enables applications spanning interactive simulation, perceptual intelligence research, advanced VR content, and dynamic world modeling for embodied AI. Notably, the system’s efficiency and controllability position it as a practical alternative to both video-driven and optimization-constrained approaches.

However, the Eulerian field design is tuned to non-rigid, environmental phenomena; straightforward extension to general rigid or articulated object motion is non-trivial and may introduce geometric distortions (see discussion and Figure 6). Further research directions include the fusion of object-centric deformation models for integrated support of articulated actors, improved motion–appearance disentanglement, and direct physical simulation conditioning within the learning setup. Extensions to causally factorized or action-conditioned motion are of particular importance for downstream simulation and video generation applications.

Conclusion

LivingWorld establishes a formal, modular approach for scene-level dynamic world generation directly from minimal visual input, excelling in physical realism, coherence, and efficiency. The methodology—combining geometry-aware alignment, hash-based motion field learning, and bidirectional propagation—sets a high baseline for future systems requiring controllable, real-time environmental dynamics with high spatial and temporal fidelity. The scalability and extensibility of this framework suggest important avenues in interactive scene understanding and content creation, while systematic integration of richer object-centric motion remains a compelling challenge.