- The paper introduces a random-subspace SQP method that limits evaluation costs by estimating directional derivatives within a reduced subspace.

- It proves high-probability convergence to first-order KKT points with iteration complexity scaling with the effective constraint dimension.

- Empirical results on benchmarks and power network optimization validate its robust constraint handling and superior performance over baseline methods.

Random-Subspace Sequential Quadratic Programming for Constrained Zeroth-Order Optimization

The work "Random-Subspace Sequential Quadratic Programming for Constrained Zeroth-Order Optimization" (2604.02202) introduces an algorithmic framework for nonlinear constrained optimization in the regime where only function evaluations of the objective and constraints are available, i.e., gradients and Jacobians either cannot be computed or are too expensive to evaluate. The primary challenge arises in high-dimensional settings: conventional zeroth-order (ZO) methods become prohibitively expensive, because estimating gradients and constraint Jacobians via finite differences typically incurs a cost that scales with the ambient dimension. Sequential quadratic programming (SQP) is known for its structured handling of constraints and fast convergence under first-order access, but its classical instantiations are unsuited to the ZO regime.

The authors aim to bridge this gap by integrating random subspace techniques with a first-order (proximal) SQP model, lowering the per-iteration evaluation cost in high dimensions while maintaining rigorous constraint handling.

ZO-RS-SQP Algorithmic Framework

Subspace Restriction via Stiefel Sampling

The central algorithmic construct is the random-subspace sequential quadratic programming (ZO-RS-SQP) framework. At each iteration, the method samples a low-dimensional, Haar-uniform orthonormal basis $U_t \in \mathbb{R}^{n \times d}$ from the Stiefel manifold $\mathrm{St}(n,d)$. The search direction $\Delta x_t$ is restricted to this subspace as $\Delta x_t = U_t \alpha_t$, where $\alpha_t \in \mathbb{R}^d$ is a step vector determined by solving a reduced quadratic programming (QP) subproblem.
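One common way to draw a Haar-uniform orthonormal basis is to take the QR factorization of a Gaussian matrix and correct the column signs. The following is a minimal sketch under that assumption; the helper name `sample_stiefel` and the sizes used are illustrative and are not taken from the paper.

```python
import numpy as np

def sample_stiefel(n, d, rng):
    """Draw an n-by-d matrix with orthonormal columns, Haar-uniform on St(n, d)."""
    G = rng.standard_normal((n, d))
    Q, R = np.linalg.qr(G)  # reduced QR: Q is n-by-d, R is d-by-d
    # Flip column signs so that diag(R) is positive; this makes the
    # factorization unique and the distribution of Q Haar-uniform.
    signs = np.sign(np.diag(R))
    signs[signs == 0] = 1.0
    return Q * signs

rng = np.random.default_rng(0)
U = sample_stiefel(50, 5, rng)      # hypothetical sizes: n = 50, d = 5
alpha = rng.standard_normal(5)      # stand-in for the reduced step vector
dx = U @ alpha                      # search direction restricted to the subspace
```

Because the columns of `U` are orthonormal, the full-space step `dx` has the same Euclidean norm as the reduced step `alpha`.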

Zeroth-Order Directional Estimation

Within the sampled subspace, the method employs two-point finite difference estimators for the directional derivatives of the objective and constraint functions along the subspace directions. This avoids the need to estimate full gradients/Jacobians. For each basis vector $u_{t,j}$ of $U_t$, the estimates are:

$$\frac{f(x_t + r u_{t,j}) - f(x_t - r u_{t,j})}{2r} \quad \text{and} \quad \frac{h(x_t + r u_{t,j}) - h(x_t - r u_{t,j})}{2r}, \quad \frac{g(x_t + r u_{t,j}) - g(x_t - r u_{t,j})}{2r}.$$

Thus, each function requires two evaluations per subspace direction, and the number of function evaluations per iteration is proportional to $d$ (the subspace dimension) rather than the ambient dimension $n$.
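The two-point estimators described above can be sketched as follows. The function name `directional_estimates` and the toy objective and constraint are assumptions chosen for illustration; the same routine is applied to a scalar objective or to a vector-valued constraint map.

```python
import numpy as np

def directional_estimates(func, x, U, r):
    """Central differences of func along each column of U.

    func may return a scalar or a 1-D array of length m.
    Returns an array of shape (m, d); each column costs two evaluations.
    """
    cols = []
    for j in range(U.shape[1]):
        u = U[:, j]
        diff = np.atleast_1d(func(x + r * u)) - np.atleast_1d(func(x - r * u))
        cols.append(diff / (2.0 * r))
    return np.stack(cols, axis=1)

rng = np.random.default_rng(1)
n, d, r = 40, 4, 1e-4                       # hypothetical sizes and radius
x = rng.standard_normal(n)
U, _ = np.linalg.qr(rng.standard_normal((n, d)))  # any orthonormal basis works here

f = lambda z: 0.5 * z @ z                                   # toy objective
h = lambda z: np.array([z.sum() - 1.0, z[0] * z[1]])        # toy equality constraints

c_hat = directional_estimates(f, x, U, r)[0]   # estimates U^T grad f(x), shape (d,)
J_hat = directional_estimates(h, x, U, r)      # estimates (Jacobian of h) @ U, shape (2, d)
```

In this example each function is evaluated 2d = 8 times, whereas coordinate-wise central differences in the full space would need 2n = 80 evaluations.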

Reduced SQP Update

Given the directional estimators, the reduced-dimension SQP QP is formed, linearizing constraints and building a first-order (proximal) model within the subspace. Acceptance is conditioned on feasibility and bounded dual variables (multipliers). Infeasible or ill-conditioned subproblems are rejected, and a new subspace is sampled.
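To make the reduced update concrete, the sketch below solves a simplified version of the subproblem with equality constraints only, where the step has a closed form. This is an illustrative assumption and not the paper's exact formulation: inequality constraints are omitted, and the proximal weight `eta`, the multiplier bound `lam_max`, and the function name `reduced_sqp_step` are hypothetical.

```python
import numpy as np

def reduced_sqp_step(c, J, h, eta, lam_max):
    """Solve  min_a  c @ a + ||a||^2 / (2 * eta)   s.t.  h + J @ a = 0.

    c: estimated reduced gradient, shape (d,)
    J: estimated reduced constraint Jacobian, shape (m, d)
    h: constraint values at the current iterate, shape (m,)
    Returns (alpha, lam), or None when the subproblem is rejected.
    """
    m, d = J.shape
    # Reject when the linearized constraints need not be solvable in the subspace.
    if m > d or np.linalg.matrix_rank(J) < m:
        return None
    # KKT conditions: alpha / eta + c + J.T @ lam = 0 and J @ alpha = -h.
    lam = np.linalg.solve(J @ J.T, h / eta - J @ c)
    # Reject when the multipliers exceed the bound (acceptance test).
    if np.linalg.norm(lam, np.inf) > lam_max:
        return None
    alpha = -eta * (c + J.T @ lam)
    return alpha, lam

c = np.array([1.0, -2.0, 0.5])
J = np.array([[1.0, 0.0, 1.0],
              [0.0, 1.0, 0.0]])
h = np.array([0.2, -0.1])
alpha, lam = reduced_sqp_step(c, J, h, eta=0.5, lam_max=100.0)
```

When the function returns `None`, the caller would sample a fresh subspace and retry, mirroring the rejection step described above.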

Line Search Variant

Recognizing potential instability and constraint violation in practice, the authors develop a variant coupling the SQP search direction with an Armijo line-search procedure applied to an exact-penalty merit function. This ensures sufficient descent in a penalized composite objective while preserving feasibility.
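A backtracking Armijo search on an l1 exact-penalty merit function can be sketched as below. The sign convention g(x) <= 0 for inequalities, the penalty weight `rho`, the constants `c1` and `beta`, and the `pred_decrease` input (a positive predicted-decrease quantity supplied by the caller) are all assumptions made for illustration; the paper's precise merit function and acceptance test may differ.

```python
import numpy as np

def merit(f, h, g, x, rho):
    """l1 exact-penalty merit: f(x) + rho * (||h(x)||_1 + ||max(g(x), 0)||_1)."""
    violation = np.sum(np.abs(h(x))) + np.sum(np.maximum(g(x), 0.0))
    return f(x) + rho * violation

def armijo_step(f, h, g, x, dx, rho, pred_decrease,
                c1=1e-4, beta=0.5, max_backtracks=30):
    """Return the first step size s in {1, beta, beta^2, ...} with sufficient decrease."""
    phi0 = merit(f, h, g, x, rho)
    s = 1.0
    for _ in range(max_backtracks):
        if merit(f, h, g, x + s * dx, rho) <= phi0 - c1 * s * pred_decrease:
            return s
        s *= beta
    return 0.0  # no acceptable step found; the caller may resample the subspace

# Toy problem: minimize 0.5 * ||x||^2 subject to x0 + x1 = 1 and x0 >= 0.
f = lambda z: 0.5 * z @ z
h = lambda z: np.array([z[0] + z[1] - 1.0])
g = lambda z: np.array([-z[0]])

x = np.array([2.0, 2.0])
dx = np.array([-6.0, -6.0])   # deliberately too long, so backtracking is needed
s = armijo_step(f, h, g, x, dx, rho=10.0, pred_decrease=1.0)
x_new = x + s * dx
```

Here the full step and the half step both increase the merit value, so the search settles on a step size of 0.25, which lands on the point (0.5, 0.5).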

Theoretical Guarantees

High-Probability Convergence to First-Order KKT Points

The analysis establishes, under standard smoothness and regularity (uniform MFCQ) assumptions, that the ZO-RS-SQP algorithm converges to first-order KKT points with high probability. Explicitly, a convergence rate is proven for a KKT residual averaged over the iterations (see the theorem on high-probability convergence), provided the subspace dimension $d$ scales with the number of constraints rather than the ambient dimension $n$.

Additionally, the convergence analysis demonstrates that the per-iteration complexity remains tractable for problems with a low effective constraint dimension, even in very high-dimensional spaces.

Robust Acceptance Rate via Randomized Subspace Sampling

A key technical result is that, under a condition relating the subspace dimension $d$ to the total number of equality constraints, the probability of sampling a subspace yielding a feasible reduced SQP subproblem with bounded multipliers is uniformly positive (Theorem “Positive acceptance probability”), even under rejection sampling. This ensures the method is well-posed and efficient in practical scenarios.

Armijo Descent and Bounded Steps

Merit-function-based analysis confirms that the line search variant guarantees decrease of a suitably chosen merit function at each iteration (Theorem “Decrease of Merit Function per-step”), ensuring robust progress and feasibility.

Numerical Experiments

Nonlinear Programming Benchmark

Experiments on a synthetic nonlinear programming problem demonstrate that both ZO-RS-SQP and its line-search variant achieve convergent decrease in objective value, constraint violation, and KKT residual, with the line search variant providing smoother and slightly faster convergence.

Transient Dynamics Optimization in Power Networks

A more complex application targets the optimization of generation setpoints in a dynamic power network subject to transient angle separation constraints. This is prototypical of large-scale, simulation-based optimization in power systems, where only function evaluations from trajectory simulations are available. Here, the line search variant again yields clear advantages in constraint satisfaction and KKT gap reduction.

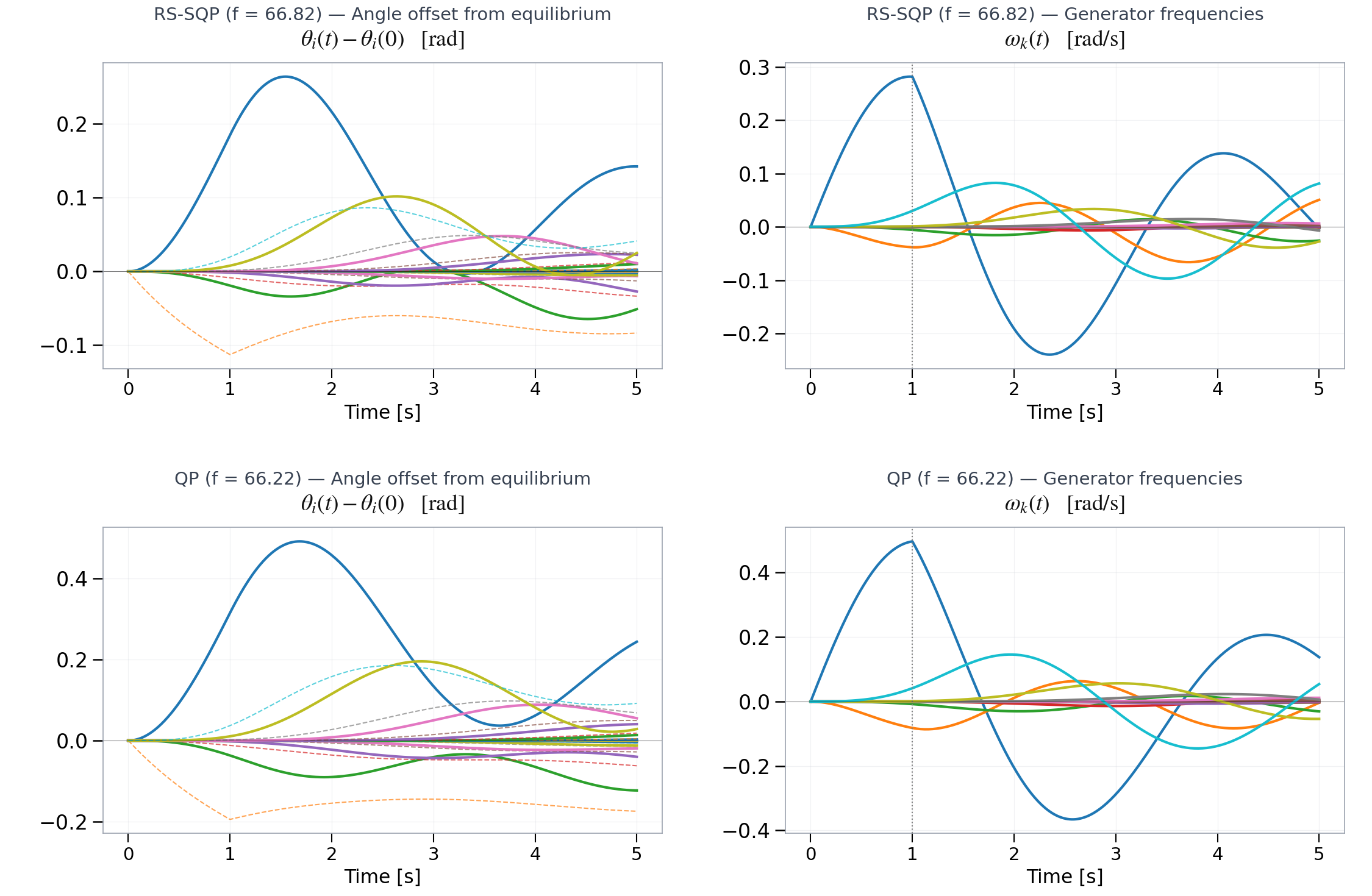

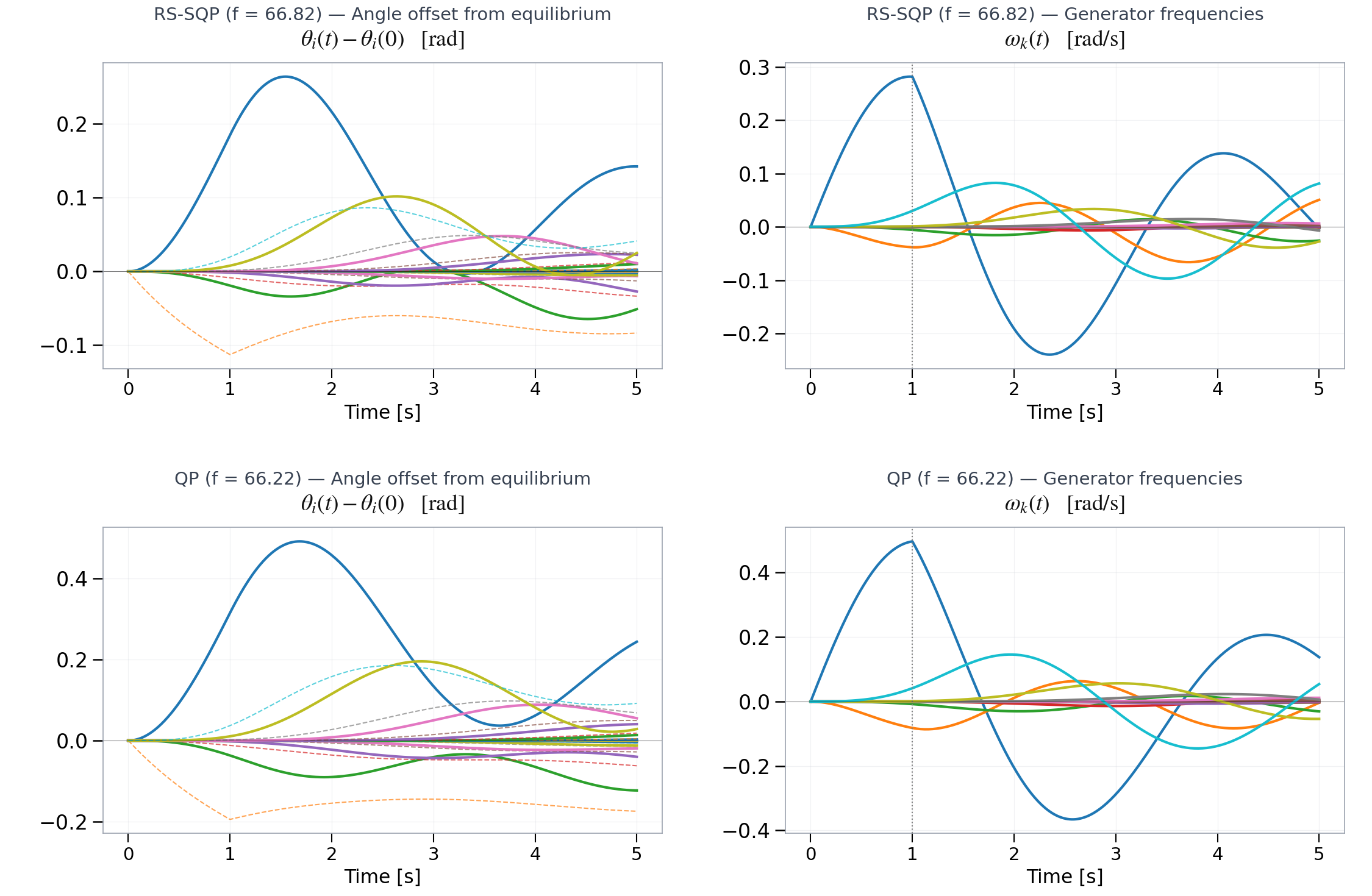

Figure 2: Transient phase (offset) and frequency dynamics under a fault disturbance. Top: solution obtained by ZO-RS-S-LS. Bottom: baseline QP solution ignoring angle separation constraints.

In this setting, ZO-RS-SQP successfully maintains phase angle constraints by leveraging explicit constraint linearization in the reduced subspace, outperforming a baseline that ignores nonlinear constraints. These results validate both the theoretical and practical promise of the proposed methodology for nontrivial simulation-based, nonlinear problems.

Implications and Future Directions

The ZO-RS-SQP framework is an advance in scalable, constraint-respecting zeroth-order optimization. By decoupling function evaluation cost from the ambient dimension and leveraging the structural advantages of SQP, the technique is particularly well-suited for simulation-based design and learning in engineering systems where gradients are unavailable or unreliable. Furthermore, the explicit retention of constraint structure offers clear advantages over penalty-based or primal-dual zeroth-order approaches for nonconvex, nonlinear problems.

The approach raises several future research avenues. Incorporating quantitative analysis of approximation errors induced by finite difference estimators is an immediate next step; adaptive subspace strategies (e.g., learning the intrinsic constraint manifold dimension) could further enhance efficiency. The method also holds promise for black-box policy search in reinforcement learning and for the design/control of cyberphysical networks where only rollouts or simulation-based interactions are feasible.

Conclusion

The paper develops a robust, dimension-scalable algorithm for zeroth-order constrained optimization by combining random subspace projection with sequential quadratic programming. Provable convergence to first-order KKT points with high probability is established, with iteration costs scaling in the constraint manifold dimension rather than the full space. Empirical evaluations corroborate the practical effectiveness and stability of the method, especially in conjunction with penalized line search. This framework provides a powerful tool for a wide array of simulation-based, high-dimensional optimization tasks under nonlinear and nonconvex constraints.