- The paper presents TGPNet, a unified architecture that uses task-guided prompting to restore remote sensing images across optical, SAR, and TIR modalities.

- It introduces learnable task-specific embeddings and hierarchical feature modulation to adaptively process diverse degradations, such as noise, blur, and cloud cover.

- TGPNet reports state-of-the-art PSNR and SSIM while remaining parameter-efficient and handling out-of-distribution composite degradations.

Task-Guided Prompting for Unified Remote Sensing Image Restoration

Introduction and Motivation

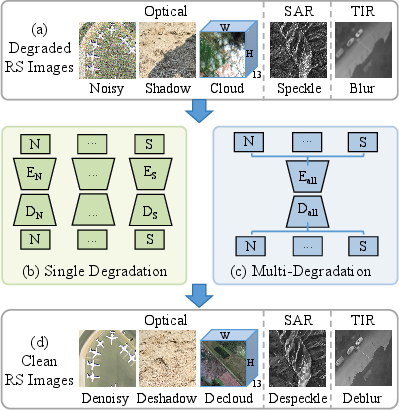

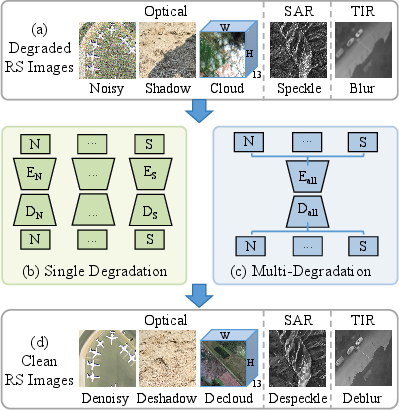

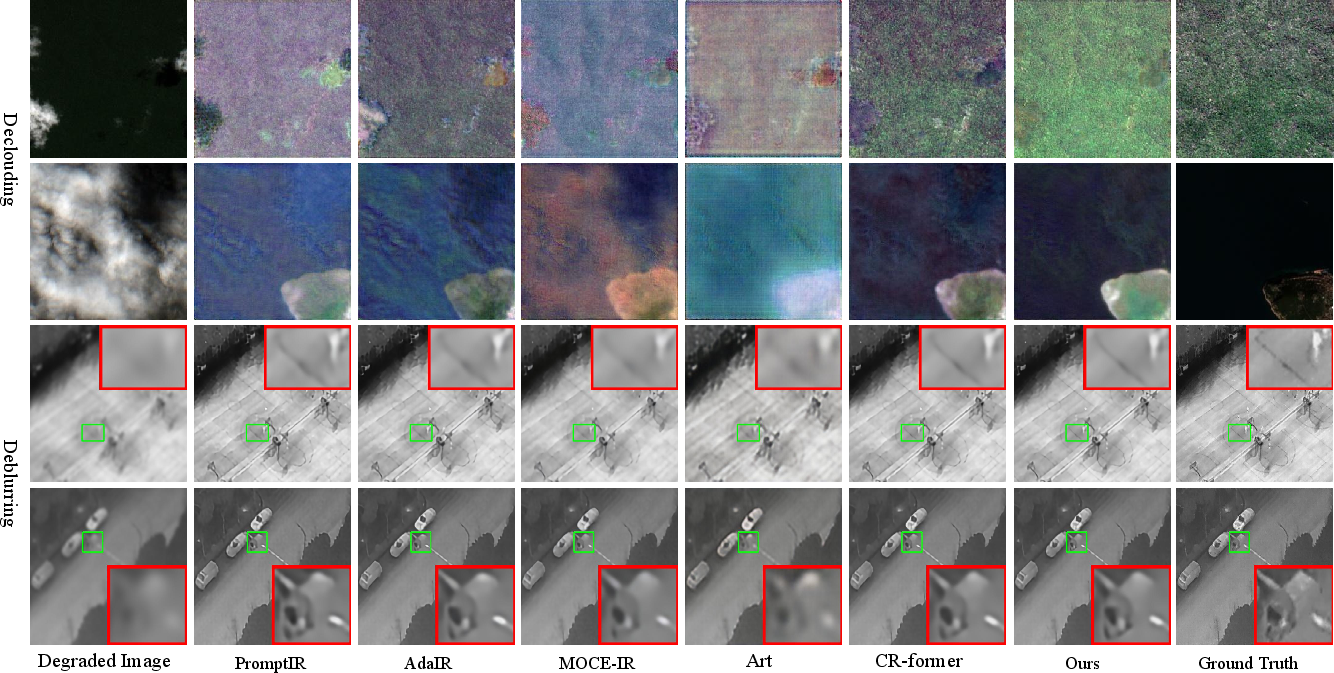

The paper "Task-Guided Prompting for Unified Remote Sensing Image Restoration" (2604.02742) introduces TGPNet, a unified architecture for multi-degradation remote sensing image restoration (RSIR) across optical (RGB/multispectral), synthetic aperture radar (SAR), and thermal infrared (TIR) domains. Traditional RSIR models mostly target a single degradation type in a single modality, which leads to inflexible pipelines that scale poorly in operational scenarios, where real data is contaminated by multiple heterogeneous degradations. The proposed framework relies on data-driven priors rather than modality-specific, hand-crafted physical equations, so a single pipeline can handle denoising, cloud removal, shadow removal, deblurring, and SAR despeckling.

Figure 1: Conceptual comparison of RSIR paradigms, exemplifying modality diversity and the TGPNet unified pipeline.

Architecture

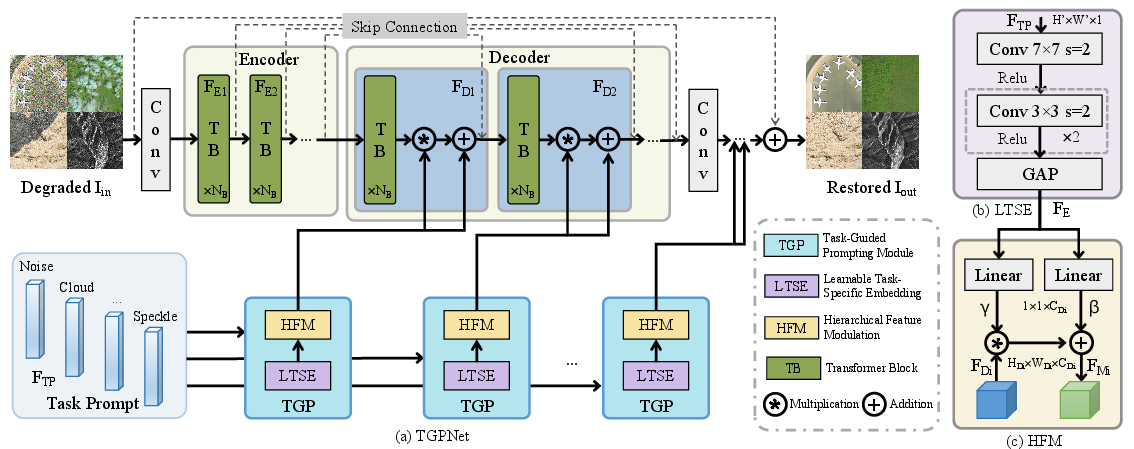

TGPNet follows a transformer-based encoder-decoder backbone augmented with multi-location Task-Guided Prompting (TGP) modules. Each TGP module consists of:

- Learnable Task-Specific Embedding (LTSE): Each restoration task is assigned a learnable prompt, shaped via convolutional layers and global average pooling, encoding task/degradation characteristics.

- Hierarchical Feature Modulation (HFM): LTSE output modulates decoder features at six strategic points using channel-wise affine transformations (scaling and bias), producing degradation-aware reconstructions.

This design enables a single, weight-sharing network to solve multiple restoration problems with adaptive feature statistics modulation, supporting modular and dynamic task composition.
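To make the two components concrete, the following is a minimal NumPy sketch of the idea, not the authors' implementation. It assumes that the "convolutional layers" can be stood in for by a single 1x1 convolution with a ReLU, that scale and bias come from two linear heads, and that the scale is centred at 1. All names (`ltse`, `hfm`, `w_conv`, `w_gamma`, `w_beta`) and tensor sizes are hypothetical, and random values replace trained weights.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes only; the paper's actual dimensions are not given here.
NUM_TASKS, PROMPT_C, PROMPT_HW, FEAT_C = 5, 8, 4, 16

# One learnable prompt tensor per task (random stand-ins for trained weights).
prompts = rng.standard_normal((NUM_TASKS, PROMPT_C, PROMPT_HW, PROMPT_HW))
# A 1x1 convolution is a per-pixel linear map over channels.
w_conv = rng.standard_normal((PROMPT_C, PROMPT_C)) * 0.1
# Linear heads mapping the pooled embedding to per-channel scale and bias.
w_gamma = rng.standard_normal((FEAT_C, PROMPT_C)) * 0.1
w_beta = rng.standard_normal((FEAT_C, PROMPT_C)) * 0.1


def ltse(task_id):
    """Return a task embedding of shape (PROMPT_C,) for the given task index."""
    p = prompts[task_id]                        # (C, H, W)
    p = np.einsum("oc,chw->ohw", w_conv, p)     # 1x1 convolution over channels
    p = np.maximum(p, 0.0)                      # ReLU (assumed activation)
    return p.mean(axis=(1, 2))                  # global average pooling


def hfm(features, embedding):
    """Channel-wise affine modulation of features with shape (FEAT_C, H, W)."""
    gamma = 1.0 + w_gamma @ embedding           # scale, centred at identity (assumed)
    beta = w_beta @ embedding                   # bias
    return gamma[:, None, None] * features + beta[:, None, None]


features = rng.standard_normal((FEAT_C, 32, 32))
out_a = hfm(features, ltse(0))   # same features, task 0 prompt
out_b = hfm(features, ltse(3))   # same features, task 3 prompt
```

The same decoder features produce different outputs depending on the selected prompt, which is what lets one set of shared weights serve several tasks. In the architecture described above, this modulation would be applied at each of the six decoder locations.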

Figure 2: Architecture of TGPNet, showing integration of LTSE and HFM within an encoder-decoder backbone.

Unified Multi-Modal Benchmark and Evaluation Protocol

The authors propose a comprehensive benchmark, URSIR, aggregating standard datasets covering all major tasks and modalities in remote sensing: UCMLUD for denoising, RICE1/RICE2/SEN12MS-CR for declouding, SRD/UAV-TSS for deshadowing, NRD for SAR despeckling, and HIT-UAV for TIR deblurring. Evaluation relies on PSNR and SSIM as core metrics and extends to MAE and SAM for cloud removal.
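For reference, three of these metrics can be sketched in a few lines of NumPy using their standard definitions. The function names, the `data_range` default, and the `(H, W, bands)` layout for SAM are assumptions of this sketch rather than details of the benchmark's evaluation code; SSIM is omitted because a faithful version needs windowed local statistics.

```python
import numpy as np


def psnr(ref, est, data_range=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, data_range]."""
    mse = np.mean((ref - est) ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(data_range ** 2 / mse))


def mae(ref, est):
    """Mean absolute error over all pixels and bands."""
    return float(np.mean(np.abs(ref - est)))


def sam_degrees(ref, est, eps=1e-12):
    """Mean spectral angle in degrees; inputs have shape (H, W, bands)."""
    dot = np.sum(ref * est, axis=-1)
    norms = np.linalg.norm(ref, axis=-1) * np.linalg.norm(est, axis=-1)
    cos = np.clip(dot / (norms + eps), -1.0, 1.0)
    return float(np.degrees(np.mean(np.arccos(cos))))
```

Higher PSNR is better, while lower MAE and SAM are better. SAM measures the angle between per-pixel spectra, so it is insensitive to a uniform brightness scaling and complements PSNR on multispectral declouding.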

Experimental Results

Multi-Task and Multi-Modal Generalization

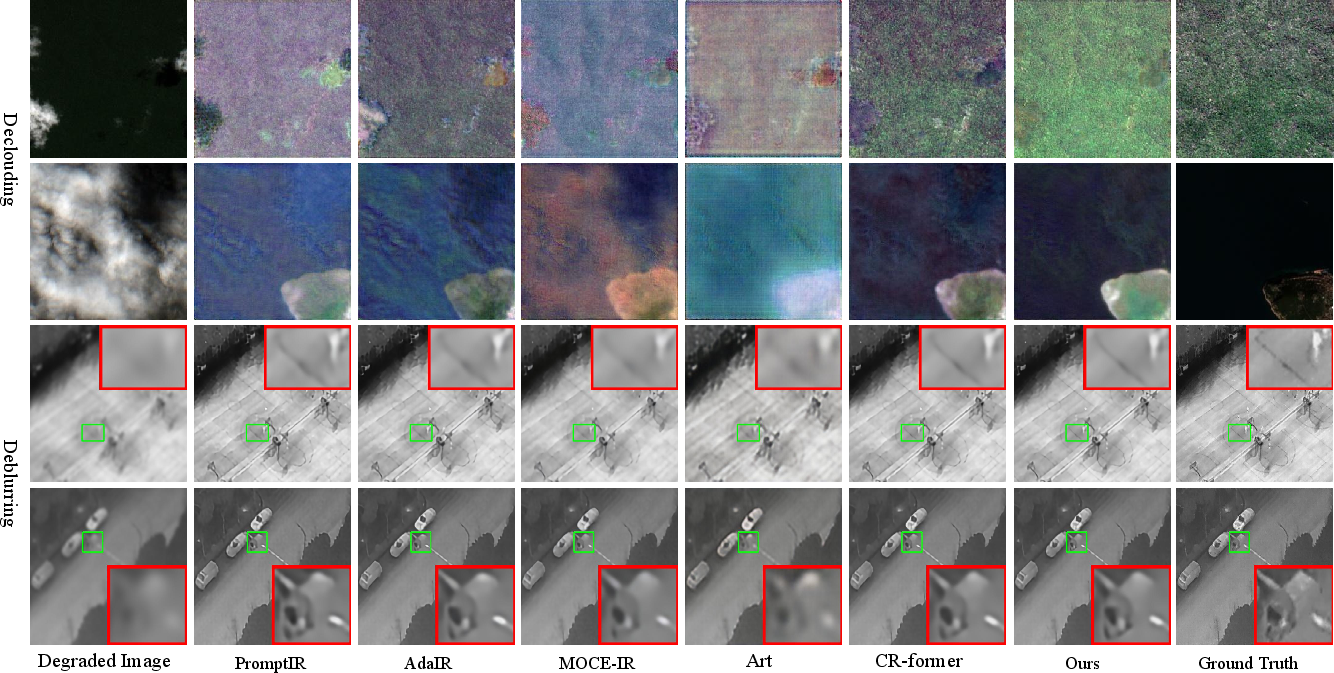

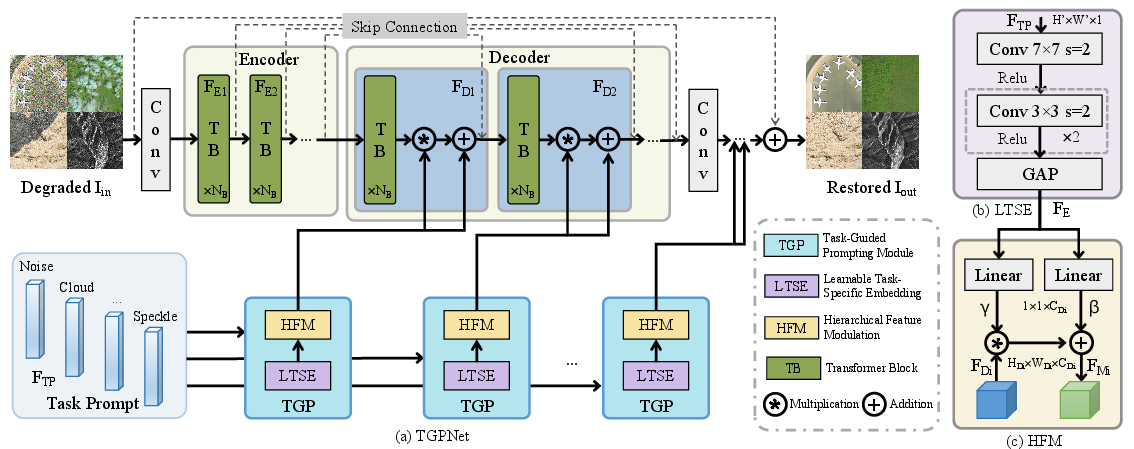

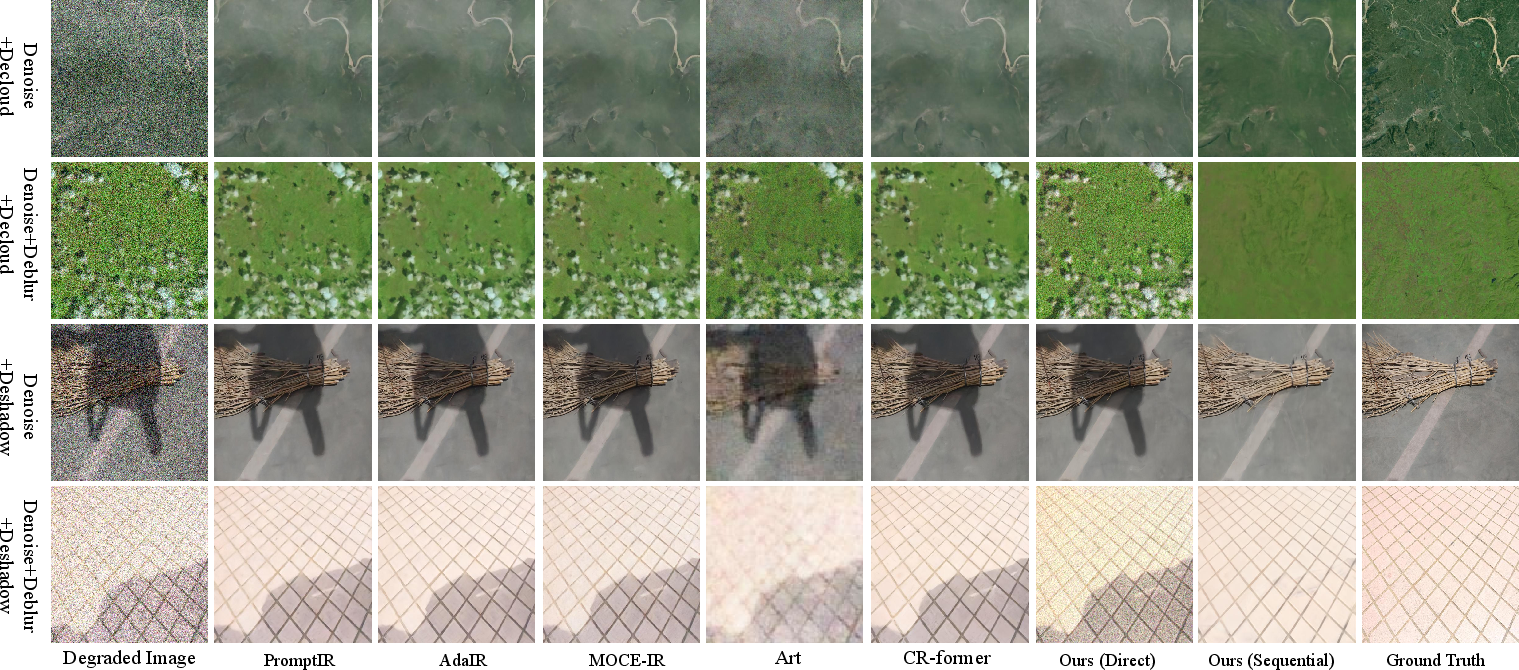

TGPNet outperforms both natural image unified baselines (PromptIR, AdaIR, MOCE-IR) and domain-specific architectures in progressively harder settings:

- 2/3-task (Optical-only): Superior unified performance over SOTA (e.g., CR-former), with higher PSNR and SSIM (PSNR gains of up to 3.35 dB).

- 4-task (Optical + SAR): Highest scores on cloud removal, strong SAR despeckling even under additive-multiplicative domain shift. Maintains best average PSNR/SSIM.

- 5-task (Optical + SAR + TIR): Outperforms specialized baselines even after inclusion of challenging TIR deblurring and multispectral declouding tasks.

Figure 3: Visual comparison for four degradation types; TGPNet preserves structural and spectral fidelity under diverse challenges.

Figure 4: Restored multispectral (declouding) and thermal (deblurring), highlighting generalization to non-optical modalities.

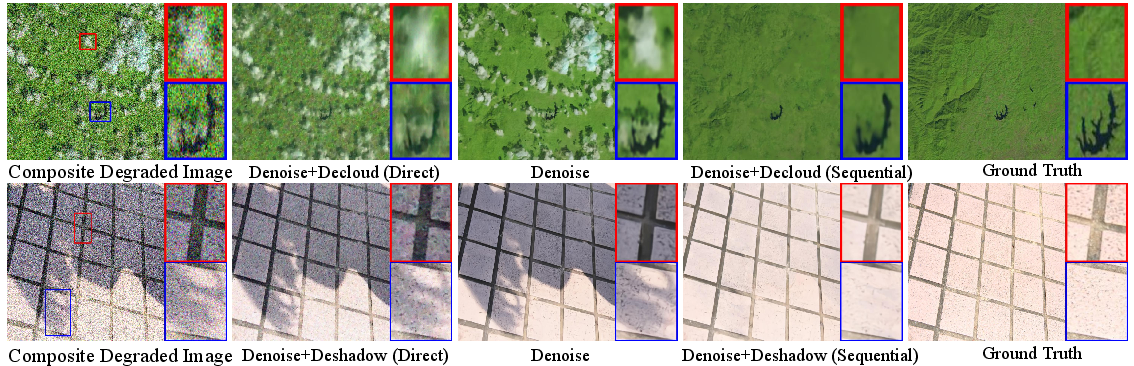

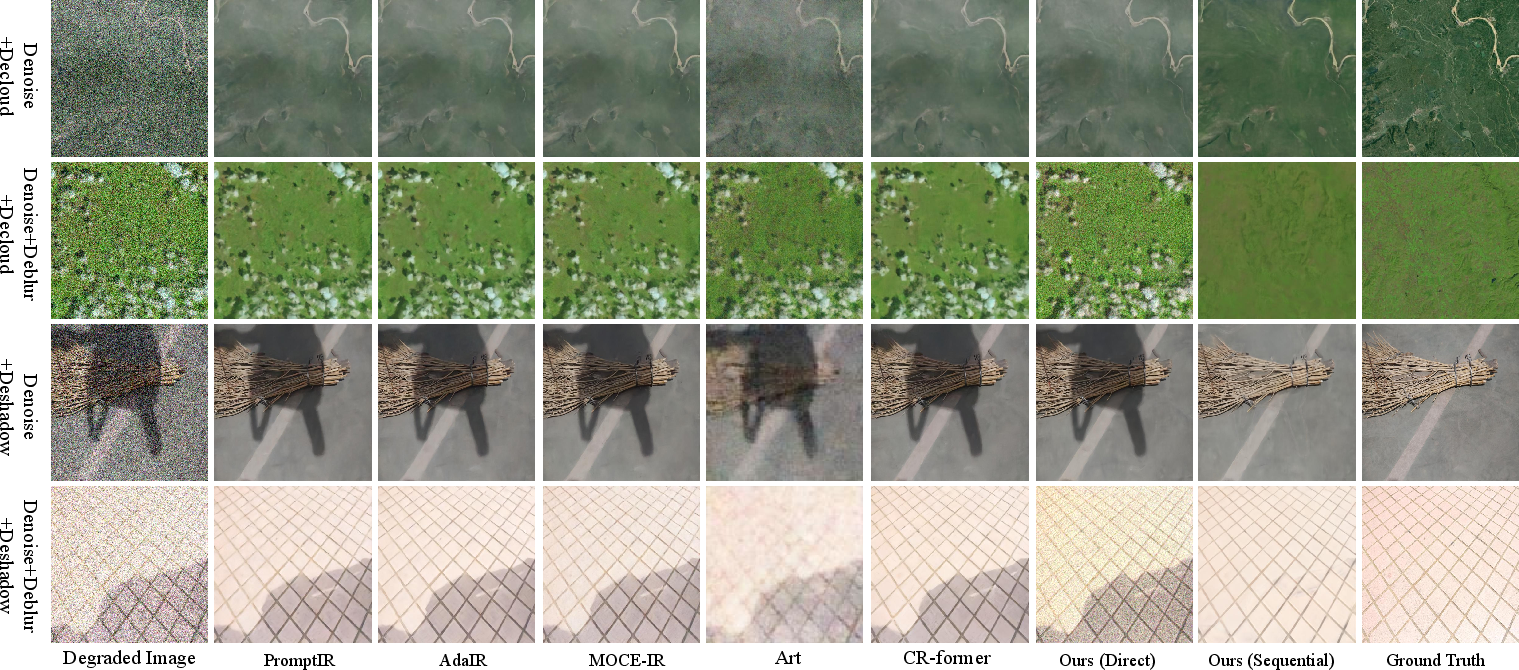

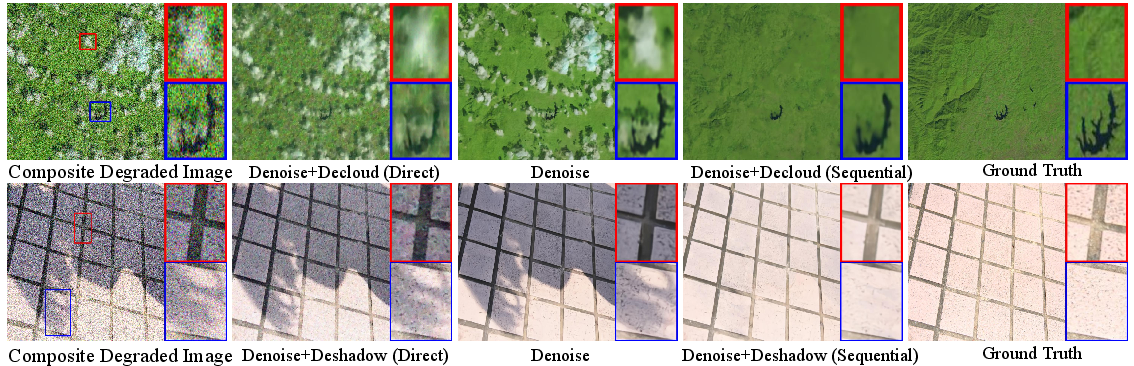

Generalization to Out-of-Distribution Composite Degradations

Directly averaging task embeddings for unseen degradations (e.g., noisy, blurred, and cloudy inputs) leads to suboptimal outcomes. Instead, the authors leverage TGPNet’s compositionality: sequential application of relevant prompts produces state-of-the-art results, drastically outperforming all baselines (up to +8 dB PSNR improvement in severe Gaussian + cloud composites).
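The sequential strategy amounts to a simple loop in which each pass is conditioned on one task prompt and its output feeds the next pass. The sketch below shows only that control flow. `restore_sequentially` and `toy_restore` are hypothetical names, `toy_restore` is a hand-written stand-in for a prompt-conditioned forward pass of the network, and the task order used here is an assumption, since the summary does not state which order the authors apply.

```python
import numpy as np


def restore_sequentially(image, task_ids, restore_fn):
    """Run one single-task pass per prompt, feeding each output into the next."""
    out = image
    for task_id in task_ids:
        out = restore_fn(out, task_id)
    return out


def toy_restore(image, task_id):
    """Hypothetical stand-in for a prompt-conditioned forward pass of the network."""
    if task_id == "denoise":
        # 3x3 mean filter built from shifted views of an edge-padded image.
        h, w = image.shape
        padded = np.pad(image, 1, mode="edge")
        return sum(padded[i:i + h, j:j + w] for i in range(3) for j in range(3)) / 9.0
    if task_id == "decloud":
        # Subtract a constant haze offset (toy cloud model).
        return np.clip(image - 0.2, 0.0, 1.0)
    raise ValueError(f"unknown task: {task_id}")


rng = np.random.default_rng(0)
clean = np.full((16, 16), 0.5)
degraded = clean + 0.2 + rng.normal(0.0, 0.05, clean.shape)  # haze plus Gaussian noise

restored = restore_sequentially(degraded, ["denoise", "decloud"], toy_restore)
err_before = np.abs(degraded - clean).mean()
err_after = np.abs(restored - clean).mean()
```

Because each pass sees only one prompt, every step stays within the conditions the model was trained on, whereas an averaged embedding asks the decoder to operate at a modulation it never saw during training.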

Figure 5: Restoration comparison on composite degradations; sequential processing yields clear advantages over direct averaging.

Figure 6: Robustness under high-noise, multi-artifact settings; TGPNet’s sequential decomposition mitigates compound artifacts.

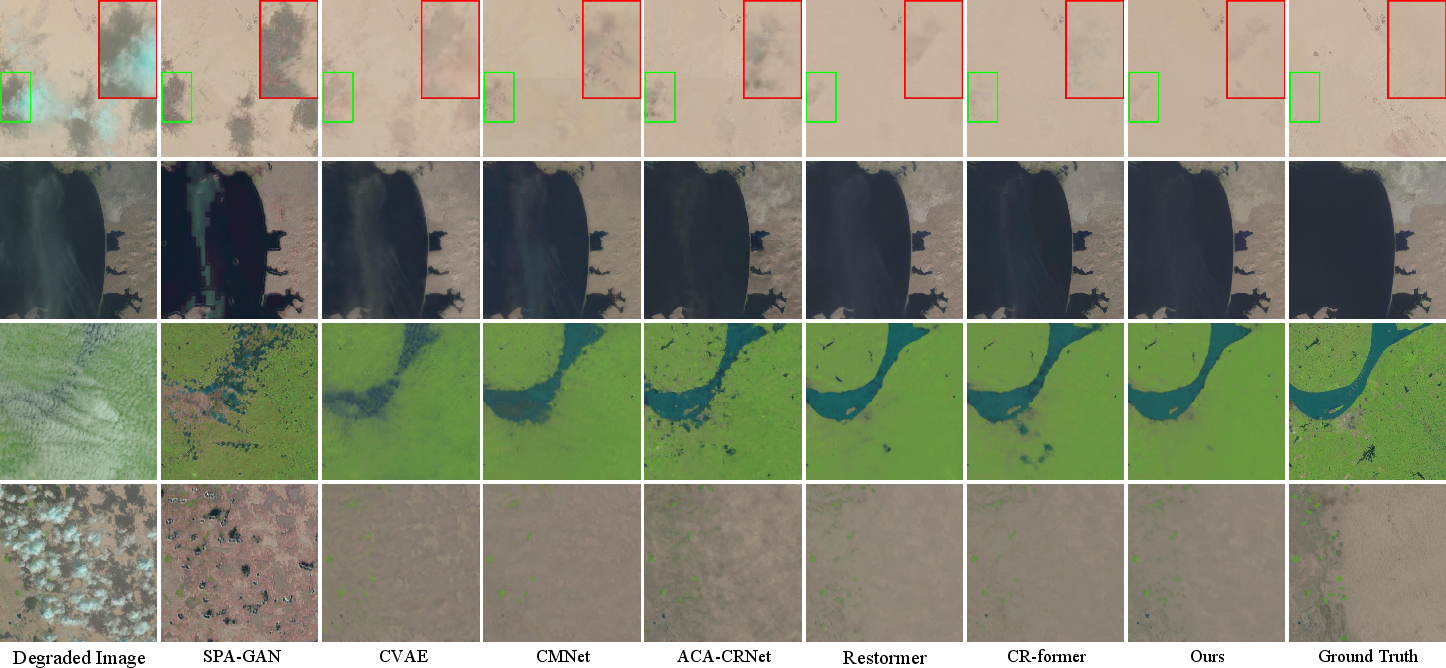

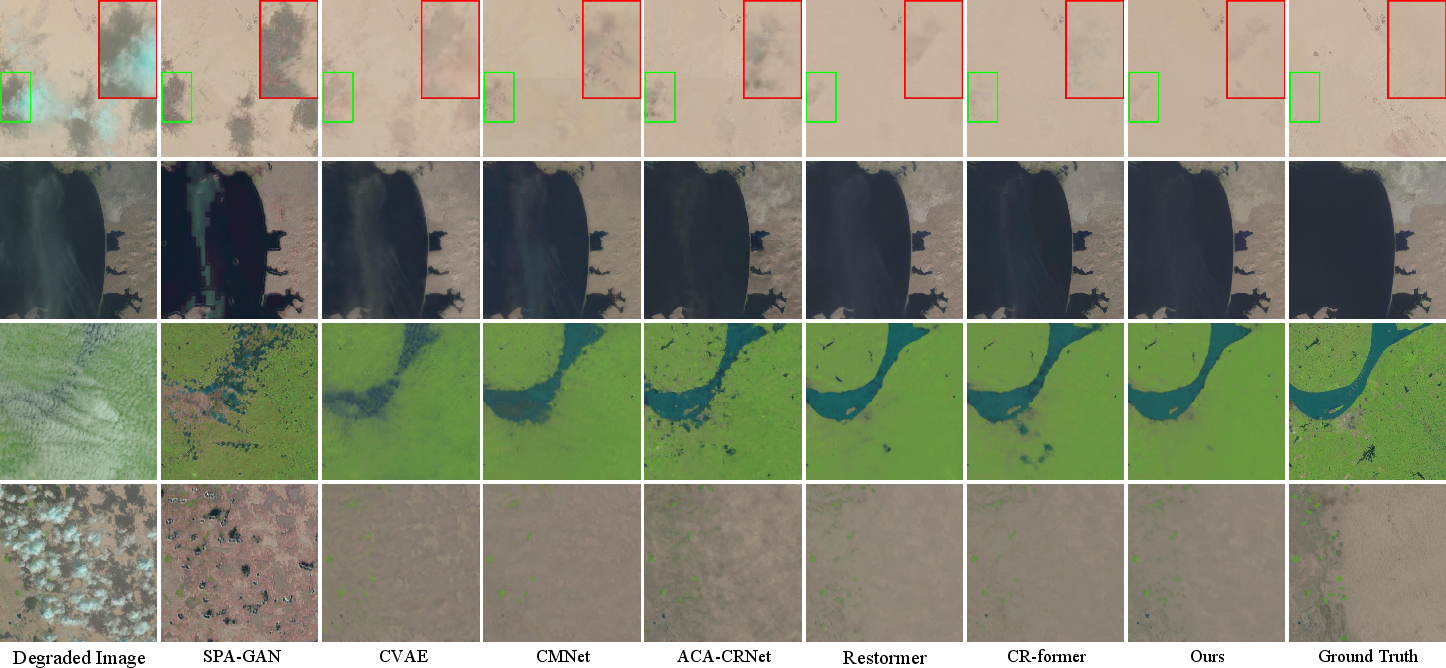

Single-Task Specialization

Despite being a unified model, TGPNet achieves top performance in single-task benchmarks, surpassing ACA-CRNet, CR-former, and hybrid attention-transformer models in cloud removal by 0.24–0.28 dB PSNR and 0.06° SAM.

Figure 7: Qualitative advantage of TGPNet (declouding): improved recovery of fine terrain details and water body structure.

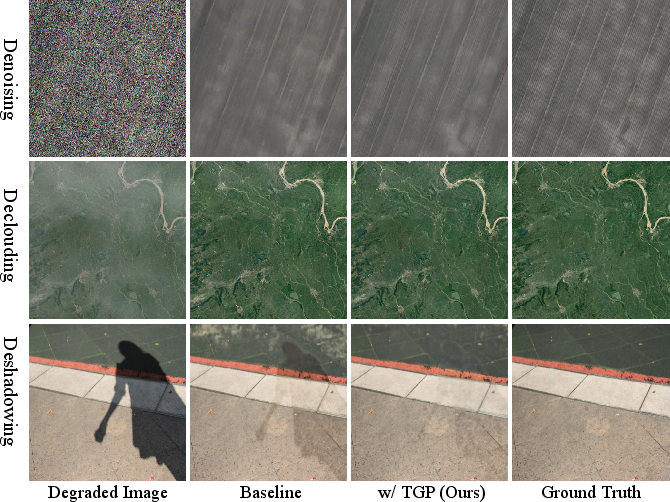

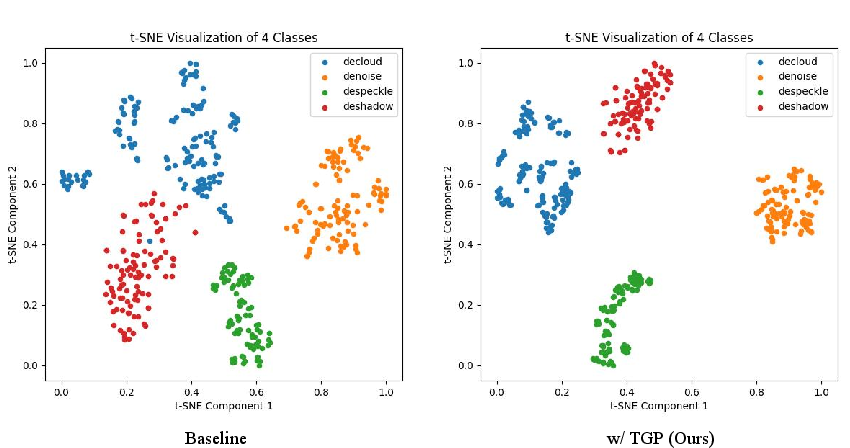

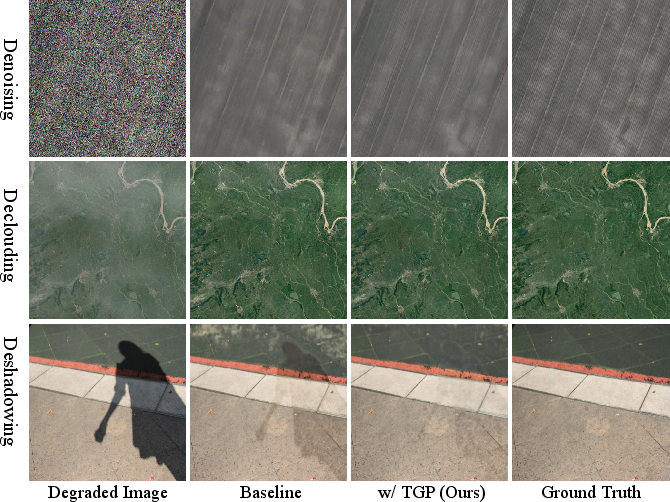

Ablation and Analysis

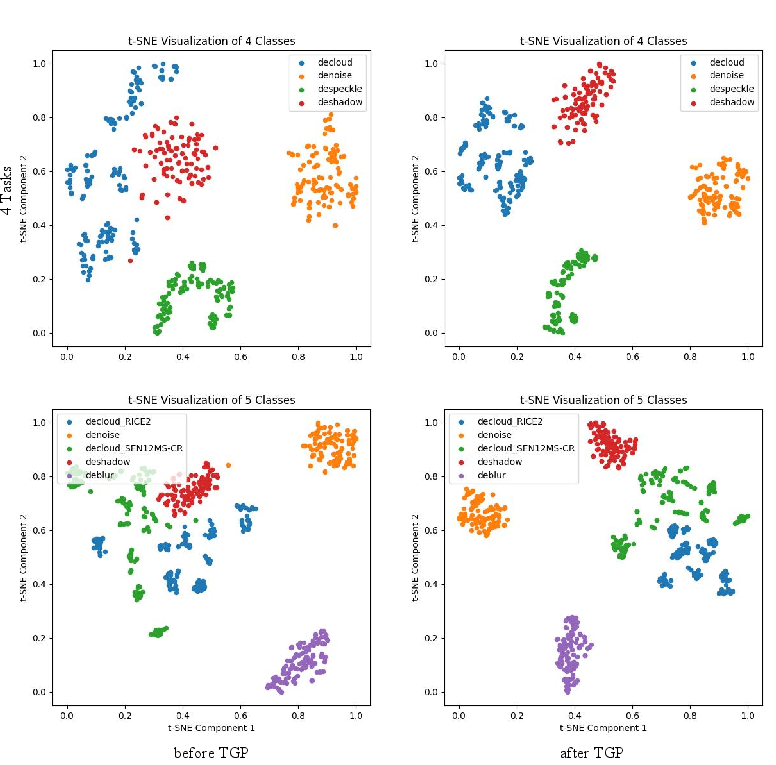

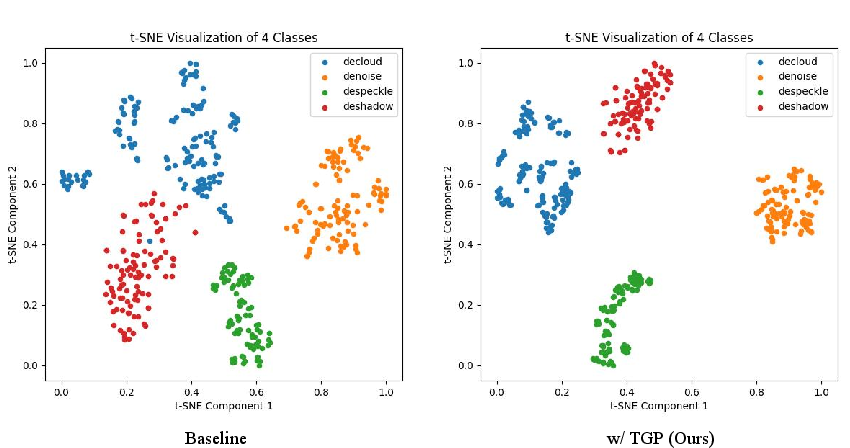

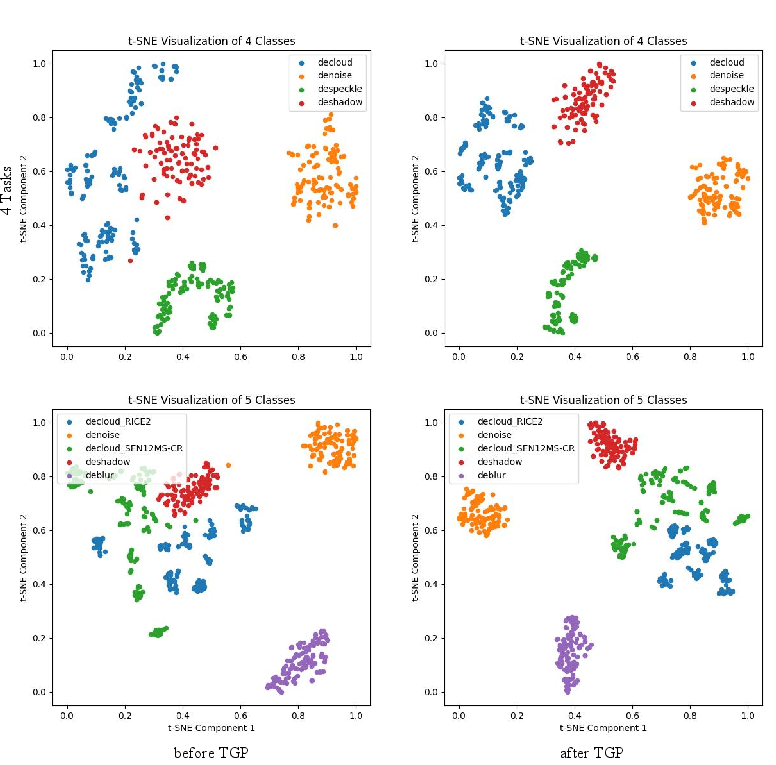

Ablation tests validate the necessity and contribution of the TGP module. Integrating TGP into the backbone gives consistent quantitative and qualitative gains, producing more disentangled, task-aligned feature clusters (as verified via t-SNE and K-means metrics).

Figure 8: Visual ablation; TGP brings sharper details and enhanced suppression of residual degradations.

Figure 9: Decoder feature t-SNE. TGPNet (right) yields better-separated clusters corresponding to distinct degradations than the baseline (left).

Figure 10: Feature disentanglement pre- and post-TGP: post-TGP, clusters are more compact and aligned with ground-truth tasks.
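One way to quantify how well feature clusters align with ground-truth tasks is to run K-means on the features and measure cluster purity against the task labels. The summary does not say which K-means metric the authors report, so purity is an assumption here, as are the function names, the farthest-point initialisation, and the synthetic 2-D features standing in for decoder activations.

```python
import numpy as np


def kmeans_assign(x, k, iters=50):
    """Minimal Lloyd's K-means; returns a cluster index per sample."""
    # Farthest-point initialisation keeps this toy version deterministic.
    centers = [x[0]]
    for _ in range(k - 1):
        d = np.min([np.sum((x - c) ** 2, axis=1) for c in centers], axis=0)
        centers.append(x[np.argmax(d)])
    centers = np.stack(centers)
    for _ in range(iters):
        dist = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        assign = dist.argmin(axis=1)
        for j in range(k):
            members = x[assign == j]
            if len(members):                 # keep the old centre if a cluster empties
                centers[j] = members.mean(axis=0)
    return assign


def purity(assign, labels):
    """Fraction of samples that belong to the majority label of their cluster."""
    total = 0
    for j in np.unique(assign):
        total += np.bincount(labels[assign == j]).max()
    return total / len(labels)


rng = np.random.default_rng(0)
labels = np.repeat(np.arange(3), 50)                       # three tasks, 50 samples each
blob_centers = np.array([[0.0, 0.0], [5.0, 0.0], [0.0, 5.0]])
separated = blob_centers[labels] + rng.normal(0.0, 0.1, (150, 2))  # task-aligned features
entangled = rng.normal(0.0, 1.0, (150, 2))                 # features ignoring the task

purity_separated = purity(kmeans_assign(separated, 3), labels)
purity_entangled = purity(kmeans_assign(entangled, 3), labels)
```

A purity near 1 means each cluster contains samples from a single task, which corresponds to the compact, task-aligned clusters described in the figures above; entangled features score noticeably lower.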

The ablations also support the linear affine modulation design: non-linear variants provide negligible additional benefit, because the LTSE and backbone already supply sufficient nonlinearity.

Efficiency

TGPNet is more computationally efficient than the strong baselines it is compared against, requiring only 21.27M parameters and 71.4 GFLOPs and achieving the fastest inference (60.84 ms), a crucial attribute for operational remote sensing workflows.
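As a rough back-of-the-envelope reading of these figures, the snippet below converts them into weight storage and throughput. It assumes 32-bit floating-point weights and one image per forward pass, neither of which is stated in the summary; the variable names are illustrative.

```python
PARAMS = 21.27e6          # reported parameter count
LATENCY_MS = 60.84        # reported inference time per forward pass
BYTES_PER_PARAM = 4       # assumption: fp32 weights

weights_mb = PARAMS * BYTES_PER_PARAM / 1e6     # about 85.08 MB of weights
images_per_second = 1000.0 / LATENCY_MS         # about 16.4 images/s at batch size 1
```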

Implications and Future Prospects

Practically, TGPNet constitutes the first parameter-efficient, prompt-guided unified model with demonstrated generalization across cloud, noise, shadow, blur, and SAR-specific degradations spanning optical, SAR, and TIR modalities. Its task compositionality is critical for deployment in real-world settings where multi-artifact contamination is the norm.

Theoretically, the paper validates the feature modulation paradigm as a means to harmonize disparate physical noise domains, resolving negative transfer and optimization conflicts through learned, adaptive prompts. However, the framework is currently non-blind (it requires explicit knowledge of the degradations at inference), and limitations remain in handling multiplicative noise (e.g., SAR speckle), attributed to a residual additive bias in the design.

A clear future direction lies in blind degradation identification for prompt selection and improved support for domain-specific degradations via regularized or adapter-based architectures. Incorporating cross-modal priors and hybrid prompt initialization (semantic-linguistic plus learnable vectors) are plausible advancements. More generally, TGPNet paves the way toward single-architecture terrestrial data processing, with implications for scalable, globally operational remote sensing analytics.

Conclusion

The work establishes TGPNet as a generalist restoration framework achieving SOTA accuracy and efficiency in unified RSIR, with robustness across tasks, modalities, and real-world complexity. Its compositional prompt-guided approach extends to OOD degradations and challenges the existing practice of per-task/per-modality specialization, providing both a scalable benchmark and a methodological foundation for future unified image restoration research.