- The paper demonstrates that LOGGIA, a neural routing algorithm, outperforms static shortest-path baselines when network and inference delays are modeled explicitly.

- It employs a GNN-based message passing network with a novel log-space output layer, ensuring stable, monotone cost assignments and efficient convergence.

- LOGGIA integrates imitation pretraining with multi-agent reinforcement learning to adapt to real-world network dynamics and compensate for inference delays.

Telemetry-Aware Routing as Delay-Aware Neural Control: The LOGGIA Framework

Modern networks require routing algorithms that can adapt to rapid and unpredictable changes in traffic and topology on millisecond timescales. Traditional protocols (e.g., OSPF, EIGRP, RIP) are insufficient for such responsiveness due to slow convergence and limited utilization of fine-grained telemetry. Recent neural approaches leverage high-dimensional telemetry for adaptive routing, but practical deployment is impeded either by the unrealistic assumption of delay-free global network state or by restricting decisions to local, delay-oblivious views. These limitations raise the core scientific and engineering question: can neural routing algorithms leverage real-time, telemetry-enriched state while accounting for inherent network and inference delays, and ultimately outperform classical static or dynamic baselines in realistic settings?

To address this, the work recasts telemetry-aware routing as a closed-loop, delay-aware control problem over attributed network graphs and frames routing policy learning under realistic propagation and computation delays.

System Model and Simulation Framework

Topology is modeled as an undirected graph G=(V,E); each router v∈V is both a user endpoint and a routing node, with symmetric links e∈E. The simulator, built over ns-3 and the ns3-ai connector, supports per-packet dynamics, in-band telemetry, and traffic generation based on empirical data center distributions.

The agent’s environment is a partially observable MDP M where at each discrete step:

- Each node and edge maintains attribute vectors x_{v,t} and x_{e,t} (telemetry, buffer occupancy, rates, delays, etc.).

- The system state St is a directed, attributed graph that evolves under injected traffic, routing actions, and network dynamics.

- Observations are delay-aware: any (central or local) observer receives remote state data propagated according to measured link delays; no agent instantaneously observes global state unless explicitly configured to be "birdseye."

- Delays are factored both for telemetry/state snapshot distribution and for routing table update latencies, including inference time modulated by parameter λac (to reflect hardware/parallelism speedup).
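The delay-aware observation rule above can be illustrated with a minimal Python sketch. It assumes that a remote node's snapshot reaches an observer after the smallest cumulative link delay between them, and that every node keeps a timestamped history of its attributes. The names `propagation_delays`, `delayed_observation`, and the `history` layout are hypothetical and chosen for illustration only; they are not the simulator's API.

```python
import heapq


def propagation_delays(adj, source):
    """Smallest cumulative link delay from `source` to every reachable node (Dijkstra)."""
    dist = {source: 0.0}
    heap = [(0.0, source)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist.get(u, float("inf")):
            continue  # stale heap entry
        for v, link_delay in adj[u].items():
            nd = d + link_delay
            if nd < dist.get(v, float("inf")):
                dist[v] = nd
                heapq.heappush(heap, (nd, v))
    return dist


def delayed_observation(history, adj, observer, t_now):
    """Newest snapshot of each node that could have reached `observer` by `t_now`.

    history: {node: [(timestamp, attributes), ...]} (assumed layout).
    Returns {node: (timestamp, attributes) or None}.
    """
    delays = propagation_delays(adj, observer)
    observation = {}
    for node, snapshots in history.items():
        if node not in delays:
            continue  # unreachable nodes are never observed
        cutoff = t_now - delays[node]
        visible = [(ts, x) for ts, x in snapshots if ts <= cutoff]
        observation[node] = max(visible, key=lambda p: p[0]) if visible else None
    return observation


# Line topology a - b - c with link delays 2 and 3 (arbitrary time units).
adj = {"a": {"b": 2.0}, "b": {"a": 2.0, "c": 3.0}, "c": {"b": 3.0}}
history = {n: [(t, f"{n}@{t}") for t in range(11)] for n in adj}
obs = delayed_observation(history, adj, "a", t_now=10)
print(obs)  # a sees itself at t=10, b as of t=8, c as of t=5
```

A "birdseye" observer corresponds to setting every delay to zero, which is exactly the configuration the source describes as unattainable in deployment.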

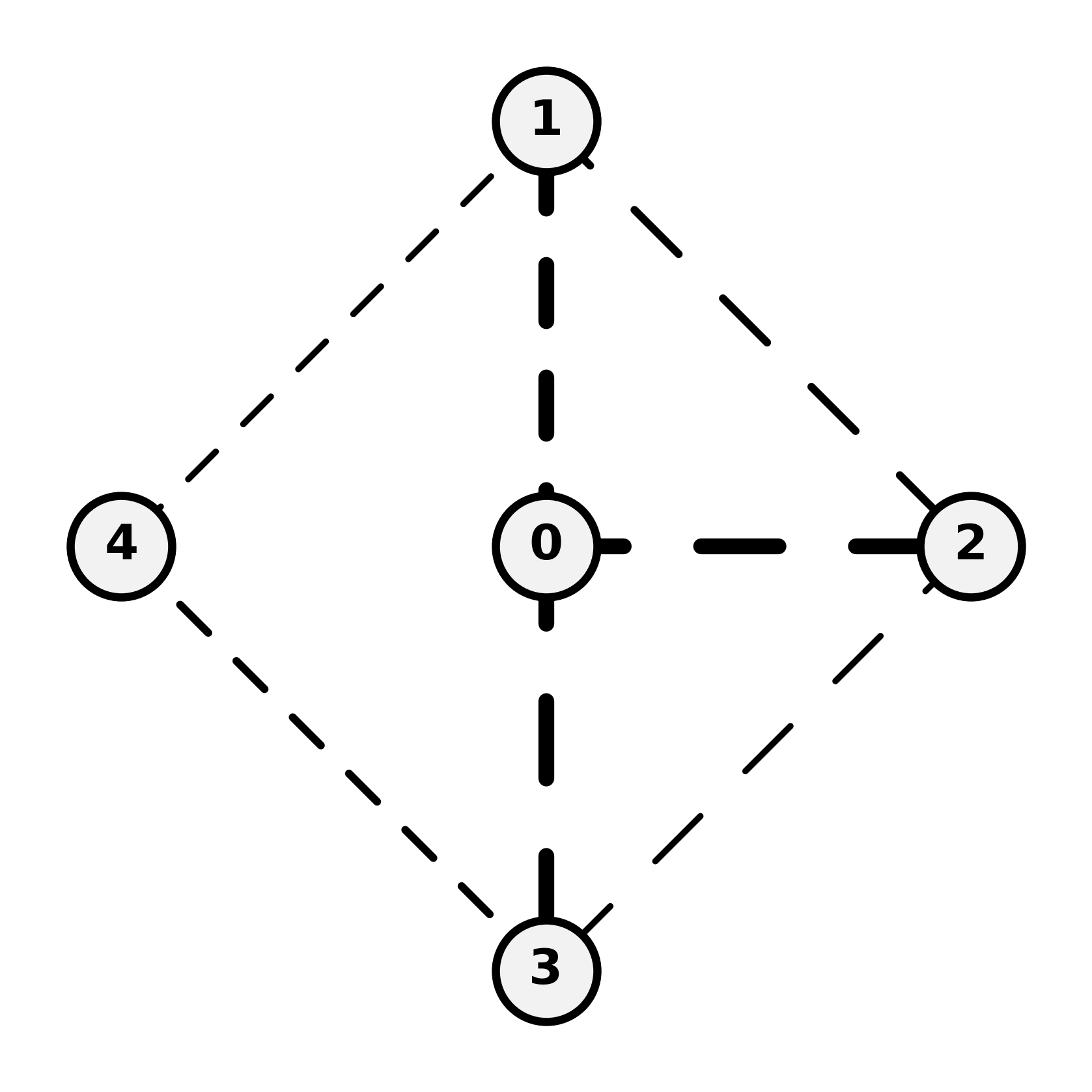

Figure 1: Telemetry-aware near-real-time routing modeled as a delay-aware, closed-loop system with distributed observers and control loops anchored on per-router or central decision-making.

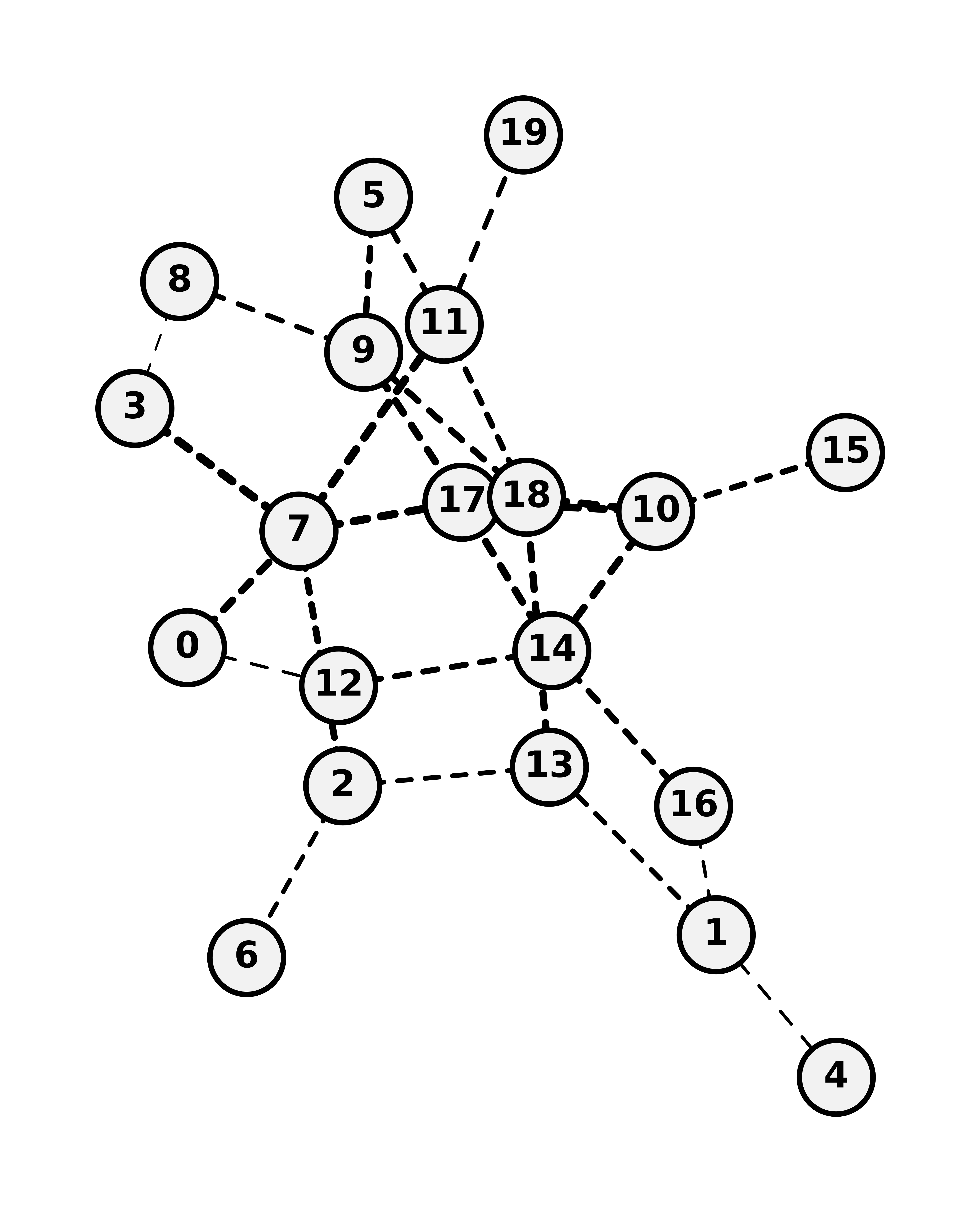

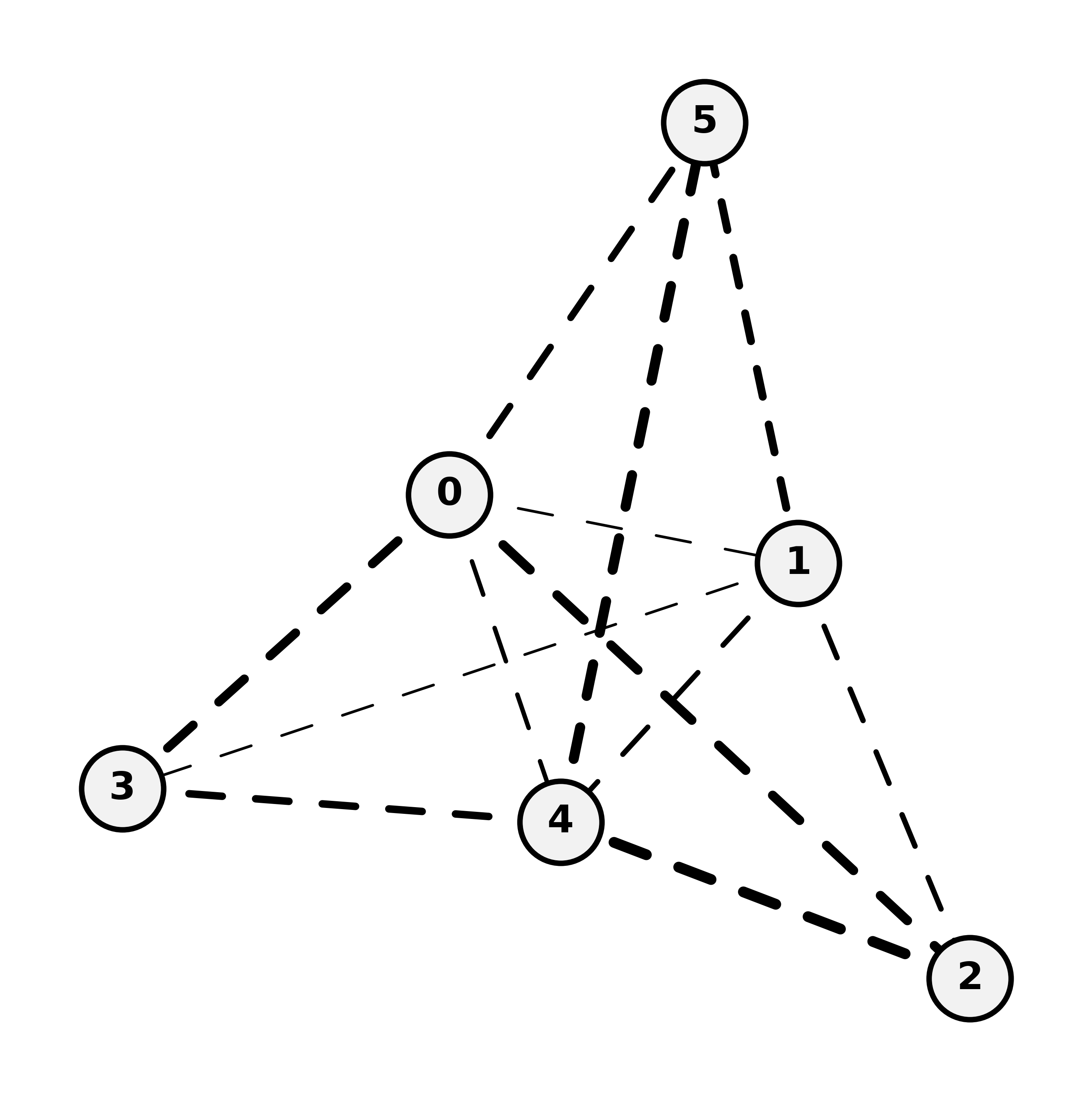

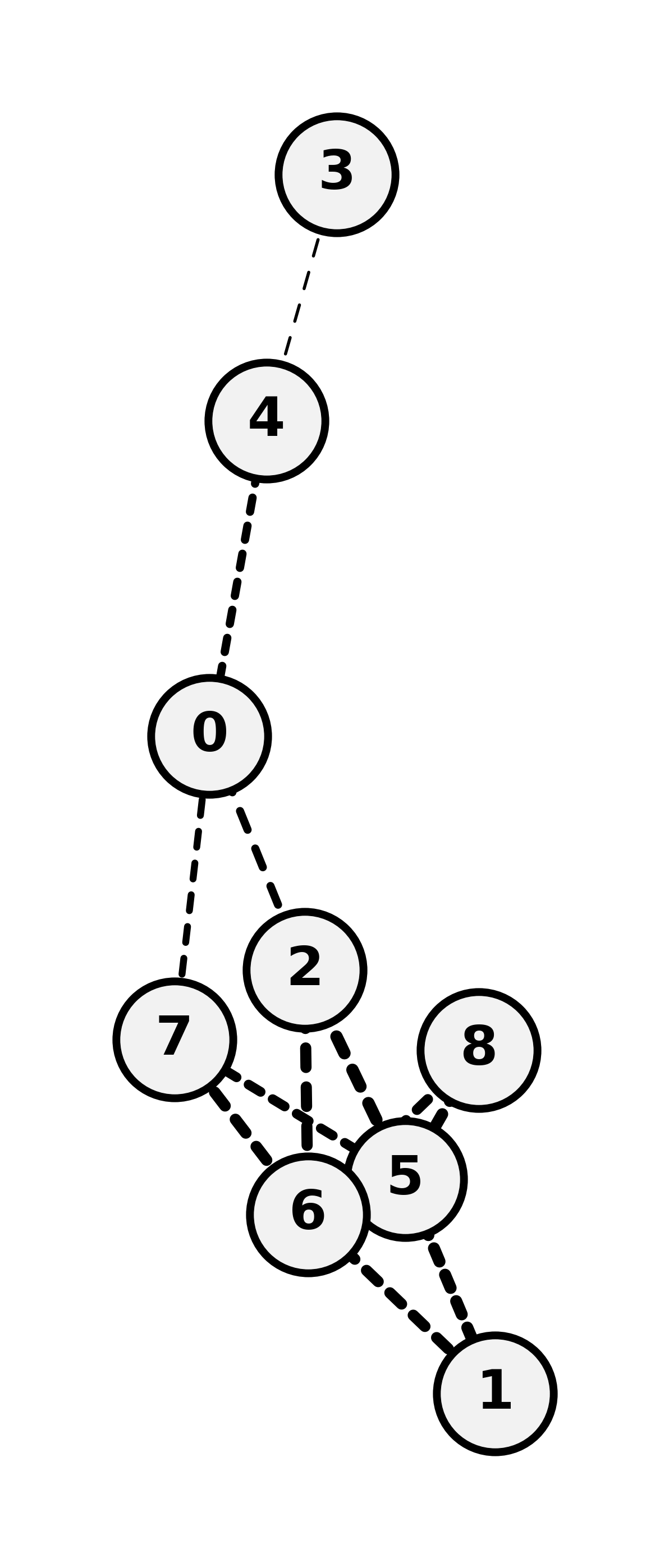

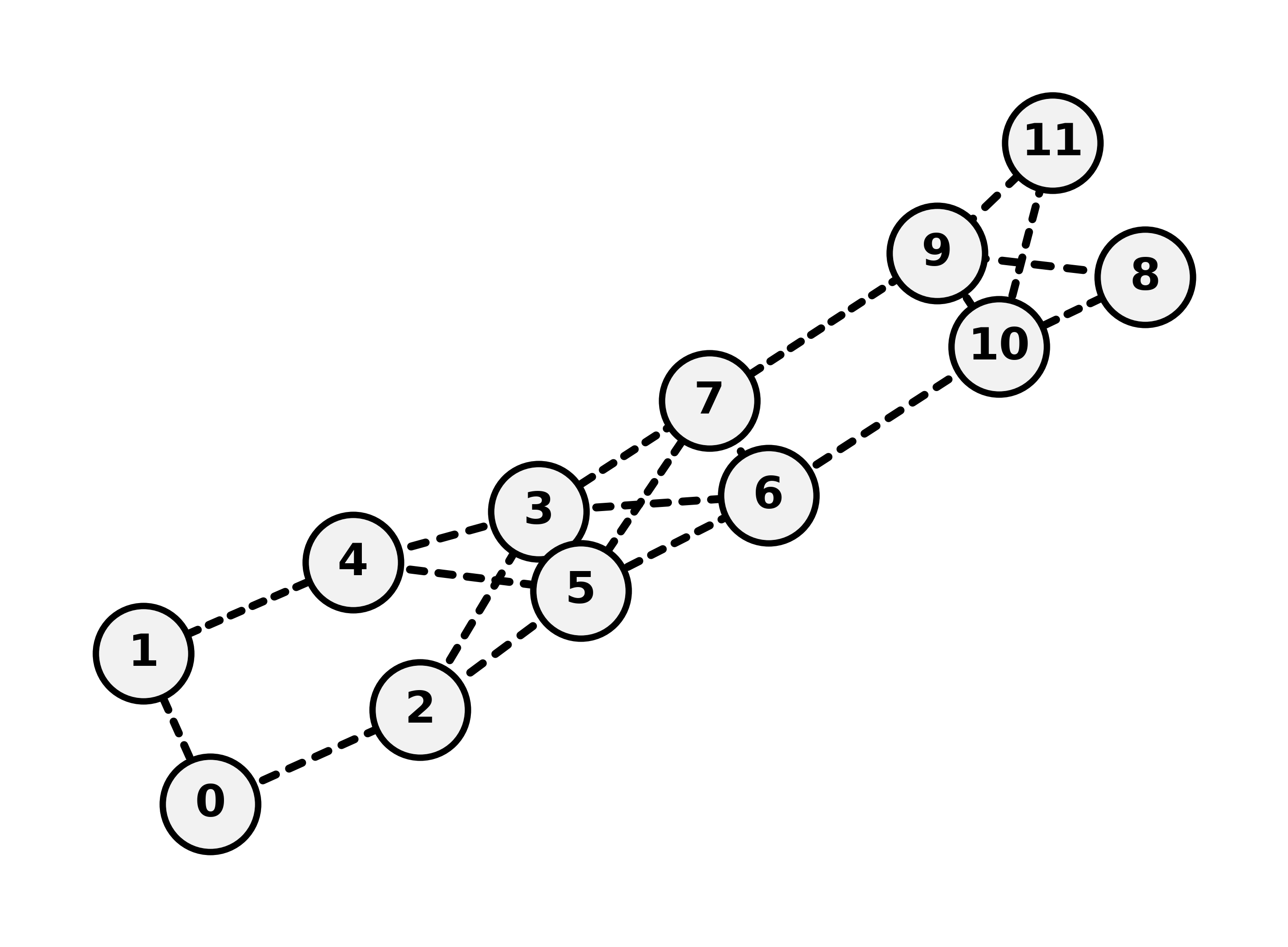

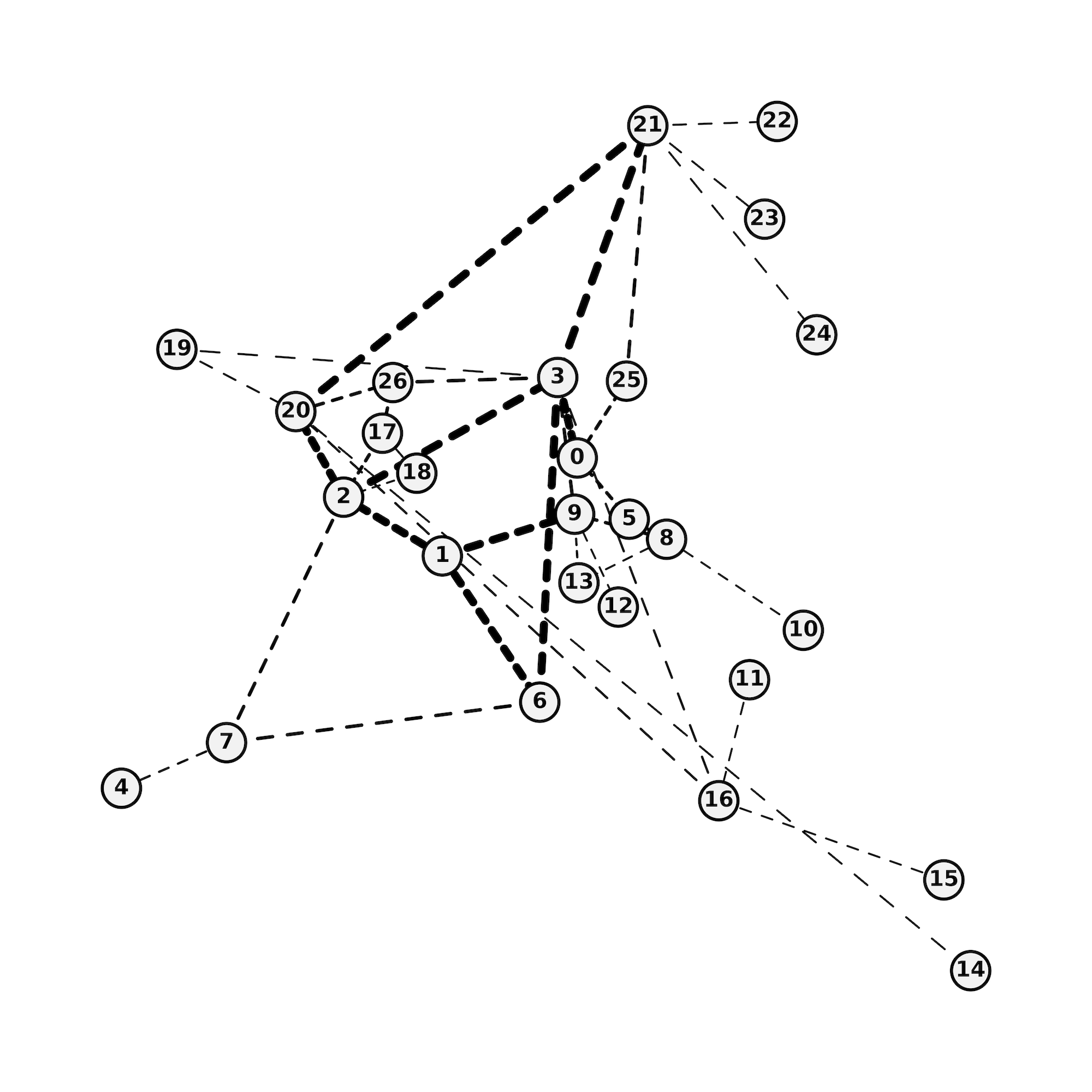

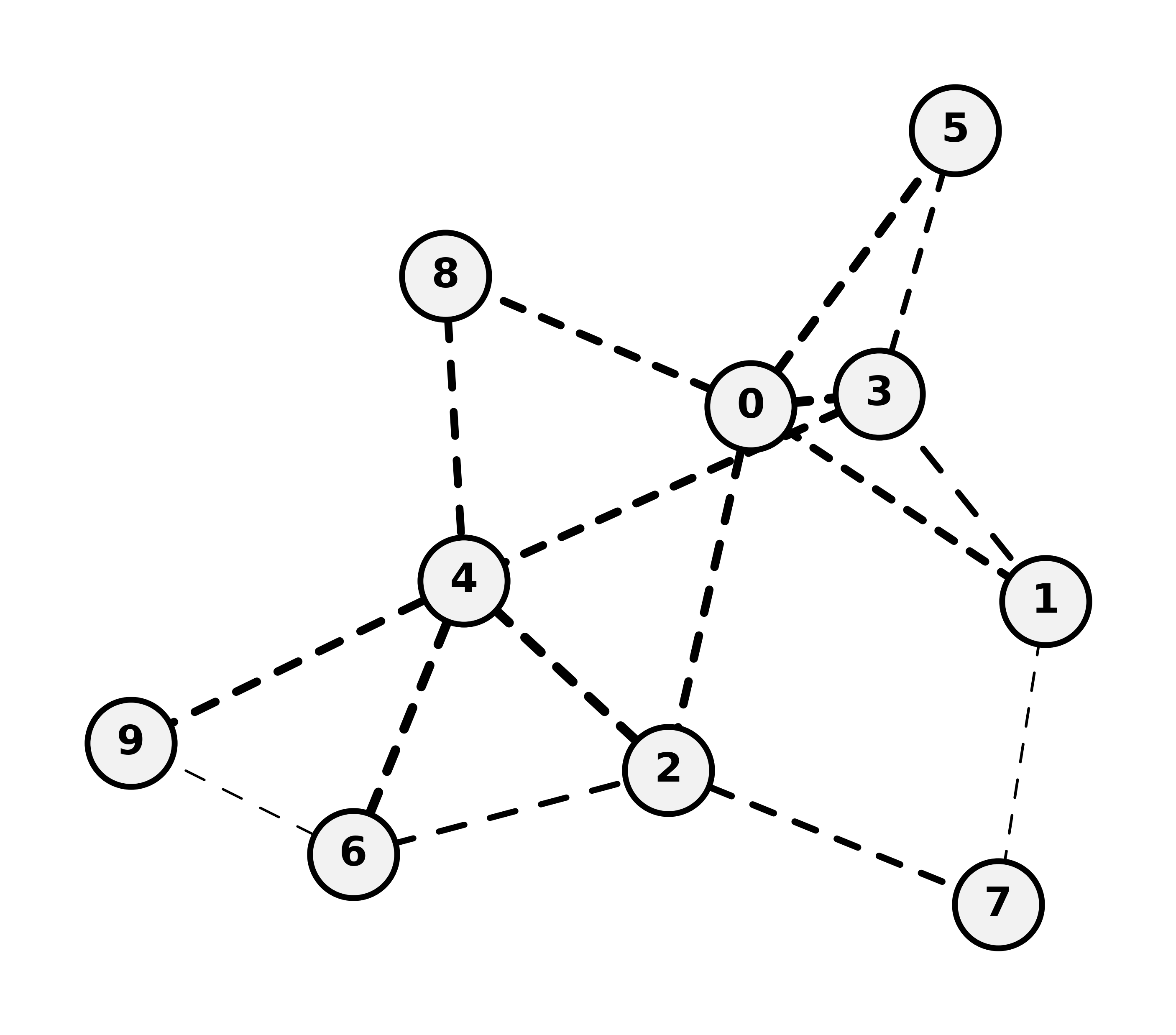

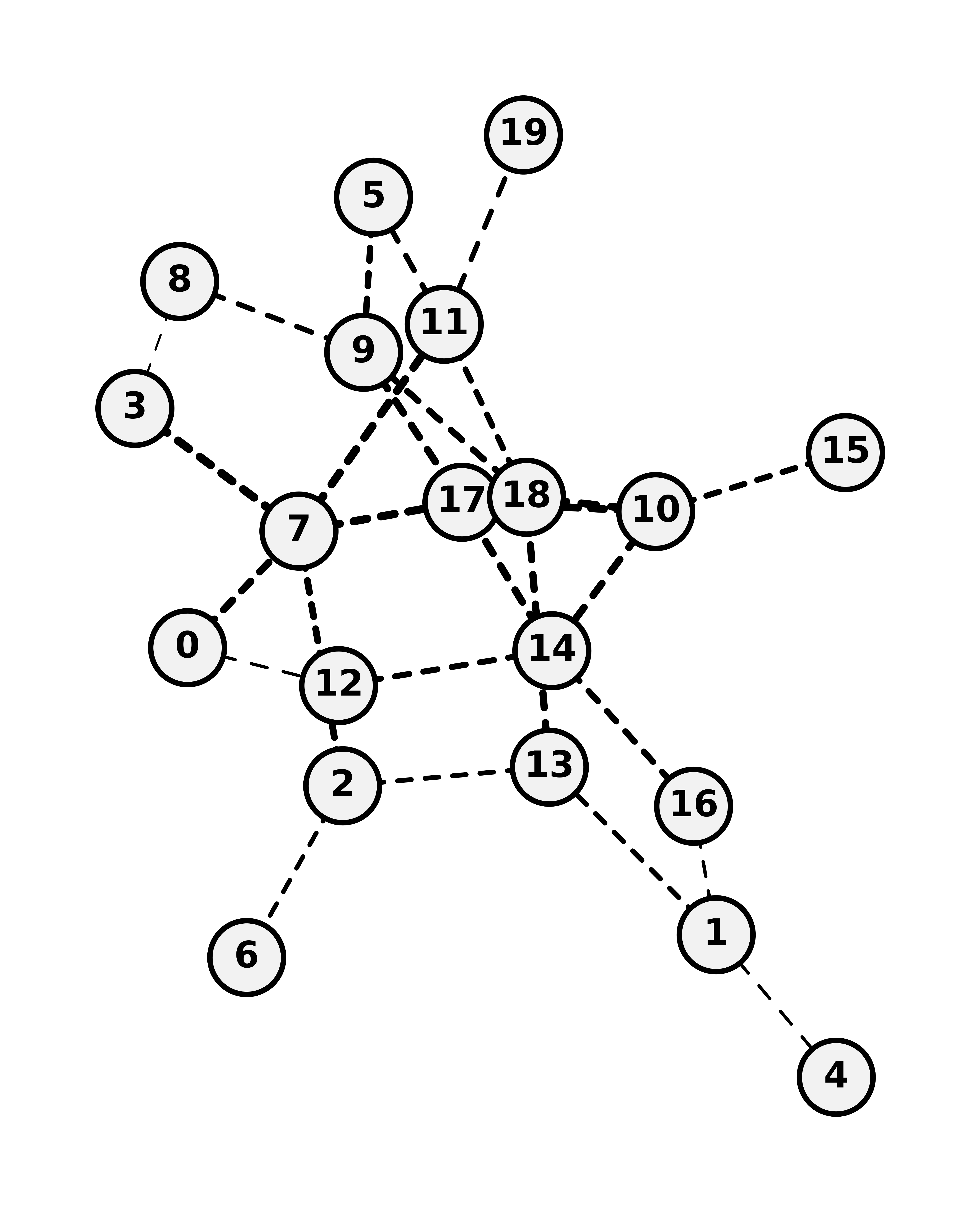

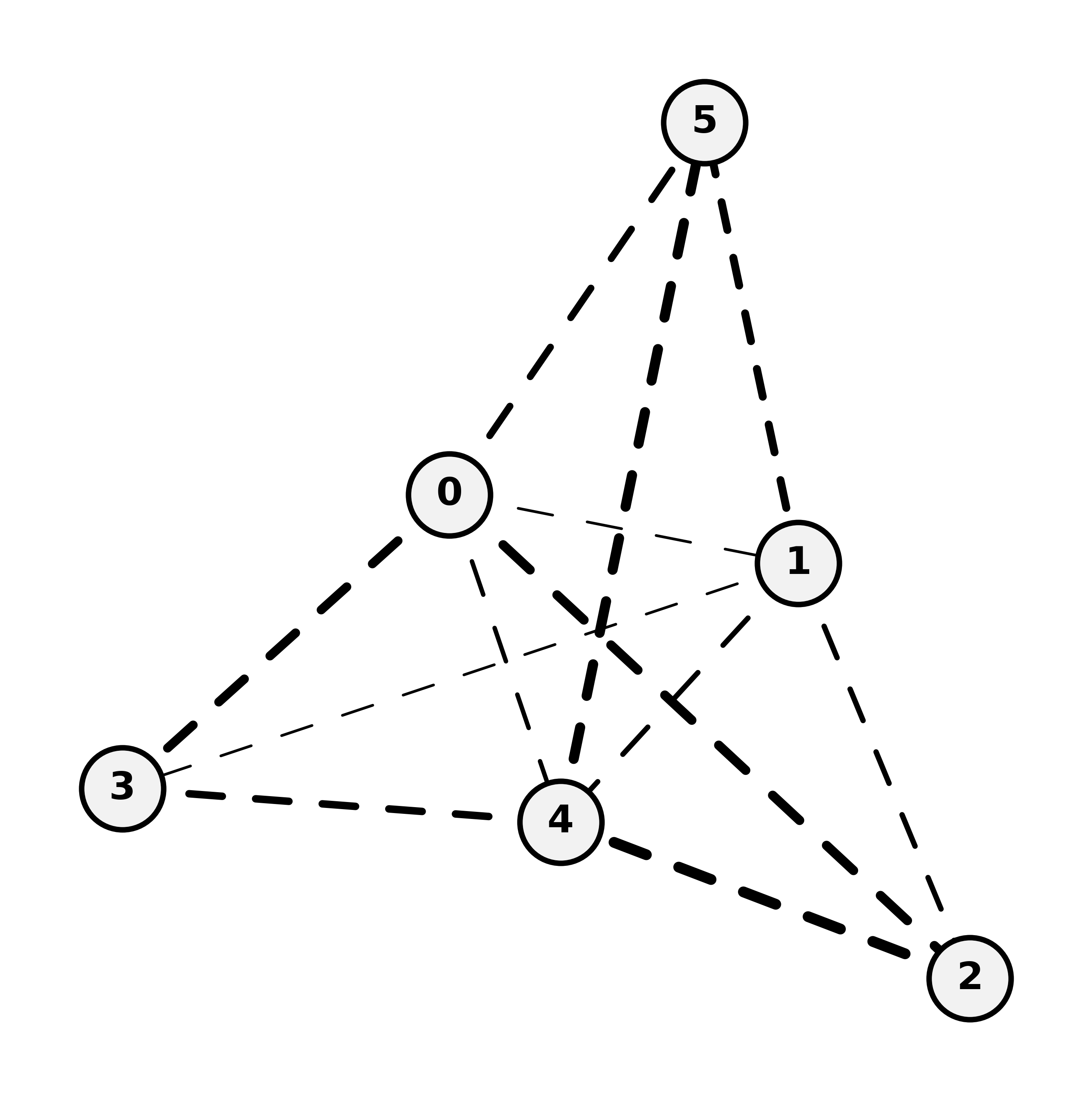

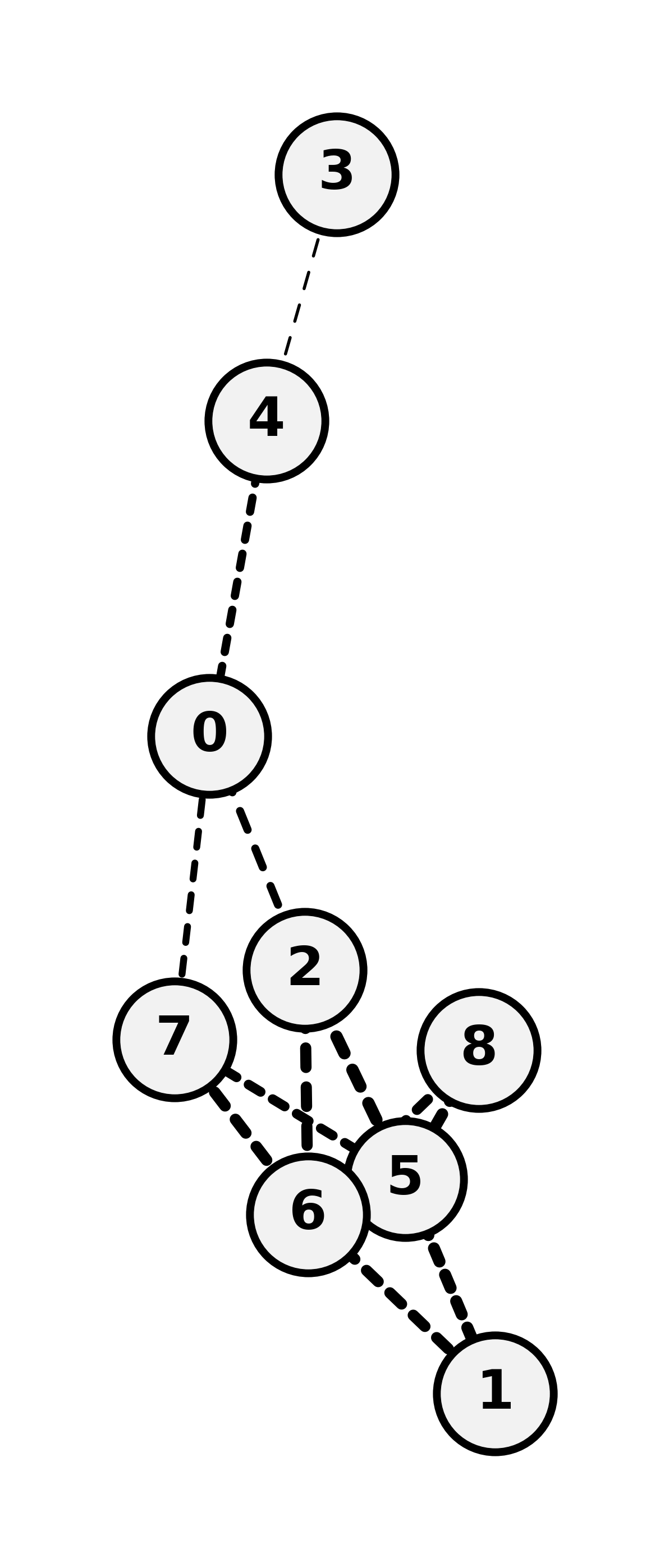

Figure 2: Construction of observation graphs for a sample topology; local observers perceive partial, delay-propagated snapshots, sharply contrasting the unattainable birdseye view.

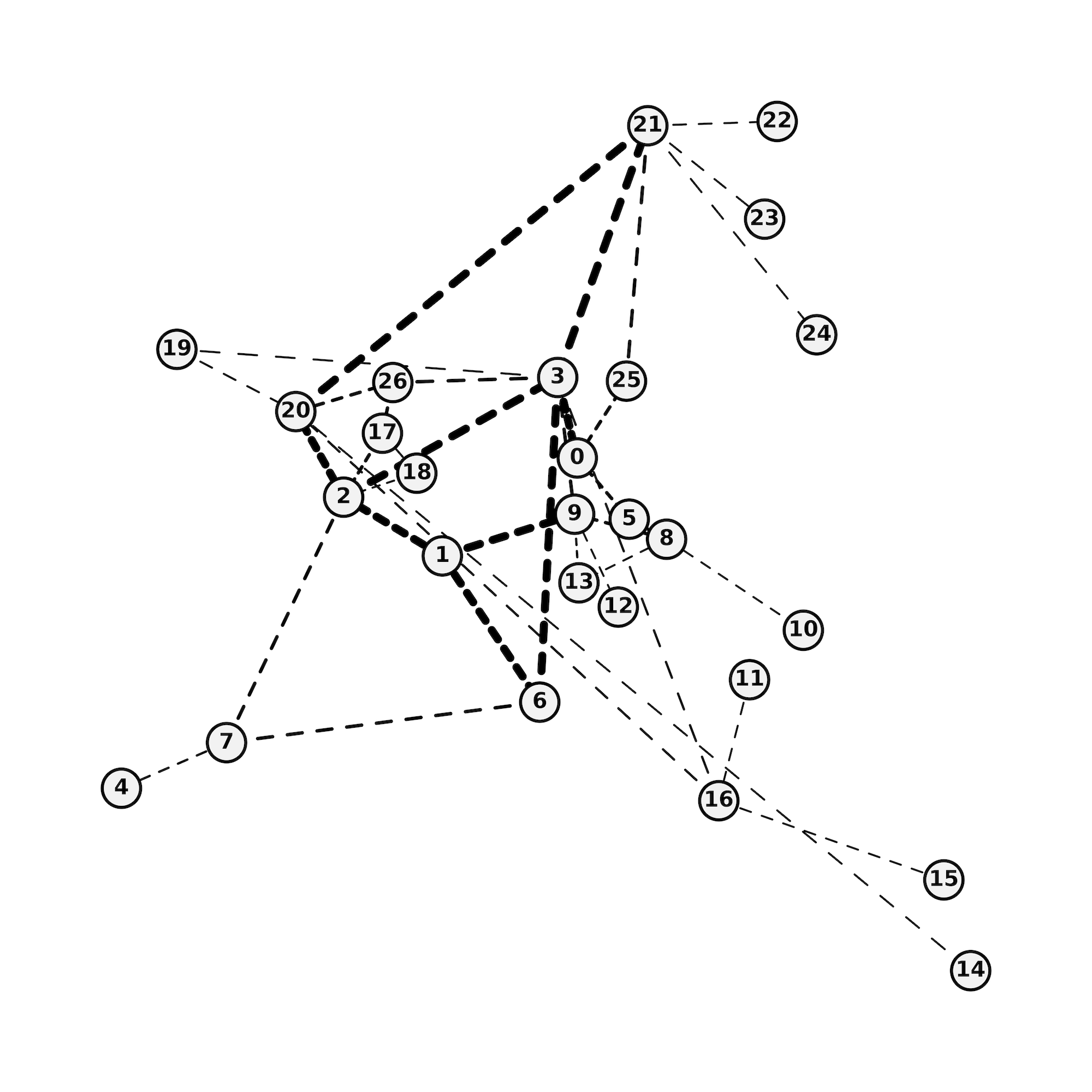

Figure 3: Deployment options for observers and agents: centralized/birdseye ("oracle") vs. central-with-delays vs. fully distributed, with each arrangement incurring different effective delays and communication patterns.

The LOGGIA Algorithm: Log-Space Neural Link-Weighting for Routing

Policy and Architecture

LOGGIA is a multi-stage neural routing policy:

- Message Passing Network (MPN): A GNN operating on node, edge, and global features with L iterations, updating latent vectors via permutation-invariant aggregation (mean/min) and edge/node/global MLPs.

- Log-Space Weight Output: Instead of incrementally updating weights or using unconstrained outputs, the GNN predicts (μ,σ) per edge, exponentiating μ to produce strictly positive, log-space link weights. This facilitates stable, monotone cost assignment and addresses initialization issues in prior work.

- Path Selection: Routing tables are generated by applying Dijkstra’s algorithm to the link-weighted graph (per-source in single-agent mode, per-node in multi-agent).
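The last two stages can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the per-edge `(mu, sigma)` values are hard-coded rather than produced by a GNN, sampling in log-space with a Gaussian is an assumed form of edge-level exploration, and `sample_link_weights` and `next_hops` are hypothetical helper names.

```python
import heapq
import math
import random


def sample_link_weights(edge_params, rng=None):
    """Map per-edge (mu, sigma) to strictly positive weights via exp.

    With rng=None the deterministic weight exp(mu) is used; otherwise a
    log-weight is drawn from N(mu, sigma) first (assumed exploration scheme).
    """
    weights = {}
    for edge, (mu, sigma) in edge_params.items():
        z = rng.gauss(mu, sigma) if rng is not None else mu
        weights[edge] = math.exp(z)  # exp keeps every weight > 0
    return weights


def next_hops(weights, source):
    """Dijkstra from `source` over directed weighted edges; returns {destination: first hop}."""
    adj = {}
    for (u, v), w in weights.items():
        adj.setdefault(u, []).append((v, w))
        adj.setdefault(v, [])
    dist = {source: 0.0}
    first_hop = {}
    done = set()
    heap = [(0.0, source, None)]
    while heap:
        d, u, hop = heapq.heappop(heap)
        if u in done:
            continue
        done.add(u)
        if hop is not None:
            first_hop[u] = hop
        for v, w in adj[u]:
            nd = d + w
            if nd < dist.get(v, math.inf):
                dist[v] = nd
                # Neighbors of the source start a new first hop; others inherit it.
                heapq.heappush(heap, (nd, v, v if u == source else hop))
    return first_hop


# Triangle a-b-c; the direct a-c link gets a large log-weight (mu = 2).
edge_params = {
    ("a", "b"): (0.0, 0.1), ("b", "a"): (0.0, 0.1),
    ("b", "c"): (0.0, 0.1), ("c", "b"): (0.0, 0.1),
    ("a", "c"): (2.0, 0.1), ("c", "a"): (2.0, 0.1),
}
weights = sample_link_weights(edge_params)  # deterministic: exp(mu)
table = next_hops(weights, "a")
print(table)  # {'b': 'b', 'c': 'b'}: a reaches c via b because 1 + 1 < exp(2)
```

Because the weights are exponentiated, any real-valued network output yields a valid input to Dijkstra's algorithm, which requires non-negative edge costs.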

Key differentiators versus prior work (e.g., MAGNNETO, M-Slim, FieldLines):

- Direct inference on the original graph (not line digraphs), reducing computational and memory overhead.

- Stable log-space parametrization improves sample efficiency and convergence in nonstationary, high-variance environments.
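To give a rough sense of the overhead avoided by working on the original graph, the following Python sketch compares graph sizes. It uses the standard definition of a line digraph (one node per directed link, one arc per pair of consecutive links) and assumes U-turn pairs are included; how the cited prior methods build their line digraphs is not specified here, so the numbers are illustrative only. `line_digraph_size` is a hypothetical helper.

```python
def line_digraph_size(undirected_edges):
    """Compare the size of a symmetric-link graph with its line digraph.

    Each undirected link becomes two directed arcs. The line digraph has one
    node per arc and one arc per pair (u->v, v->w), so its arc count is the
    sum of deg(v)^2 over all nodes (U-turns included, by assumption).
    """
    degree = {}
    for u, v in undirected_edges:
        degree[u] = degree.get(u, 0) + 1
        degree[v] = degree.get(v, 0) + 1
    n_nodes = len(degree)
    n_arcs = 2 * len(undirected_edges)
    line_nodes = n_arcs
    line_arcs = sum(d * d for d in degree.values())
    return {"graph": (n_nodes, n_arcs), "line_digraph": (line_nodes, line_arcs)}


ring = [(i, (i + 1) % 5) for i in range(5)]   # 5-node ring
star = [(0, i) for i in range(1, 5)]          # hub with 4 leaves
print(line_digraph_size(ring))  # {'graph': (5, 10), 'line_digraph': (10, 20)}
print(line_digraph_size(star))  # {'graph': (5, 8), 'line_digraph': (8, 20)}
```

The gap widens on topologies with high-degree nodes, since the line digraph's arc count grows with the square of each node's degree.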

Training: Multi-Agent Reinforcement and Imitation Learning

Experimental Results and Analysis

Experiments are conducted on real (B4, GEANT, TopologyZoo) and synthetic topologies (nx-XS, nx-S, nx-M, nx-L), with traffic sequences reflecting realistic TCP/UDP mixes and burst patterns.

Main Numerical Findings

- LOGGIA outperforms all static shortest-path (SP) baselines and prior neural methods on episodic delivered data (goodput) in delay-aware settings across all tested topologies when deployed in the fully distributed Local-Multi mode (see Figure 5 and main results).

- Competing neural algorithms exhibit catastrophic performance degradation once global observation/inference is subject to network-round delays, often failing to surpass static SP.

- LOGGIA demonstrates robust generalization when trained on a minimal topology (mini5) but tested on much larger, unseen networks (up to 100 nodes), with performance scaling on par with SP in both goodput and inference time (see Figure 5).

- Inference delay and buffer size both critically impact performance. Faster hardware and lower λac (faster inference) yield consistent gains in throughput.

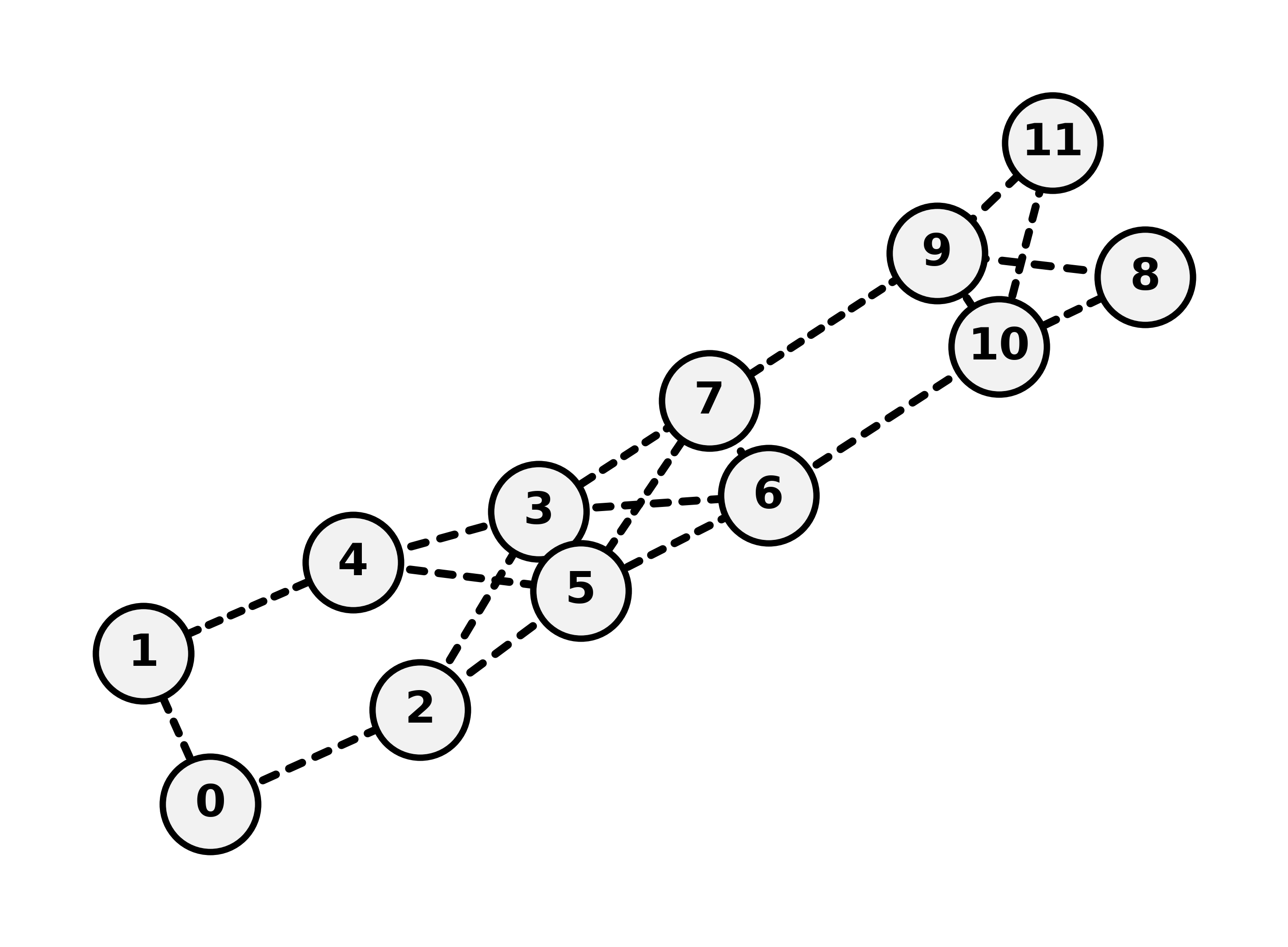

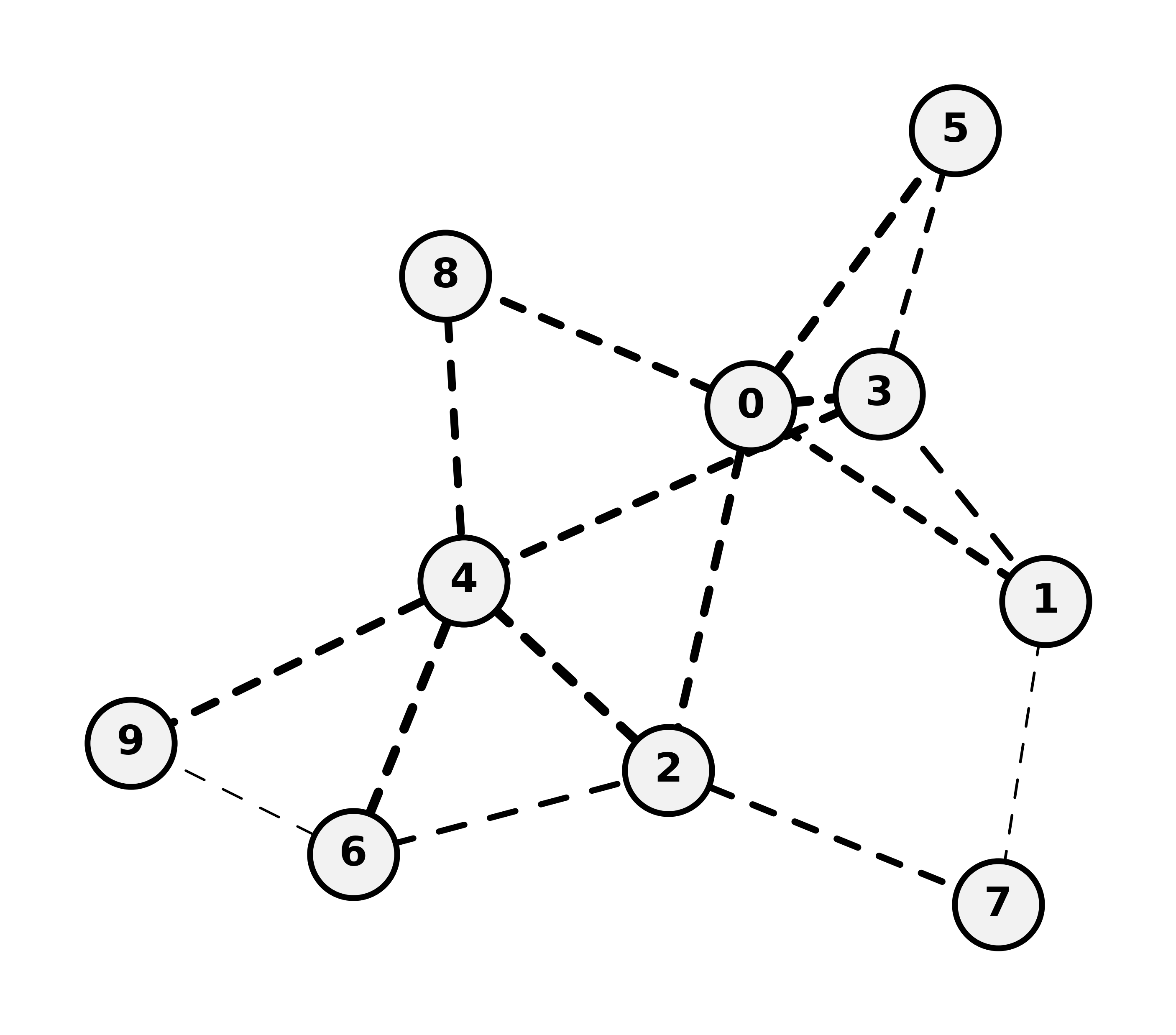

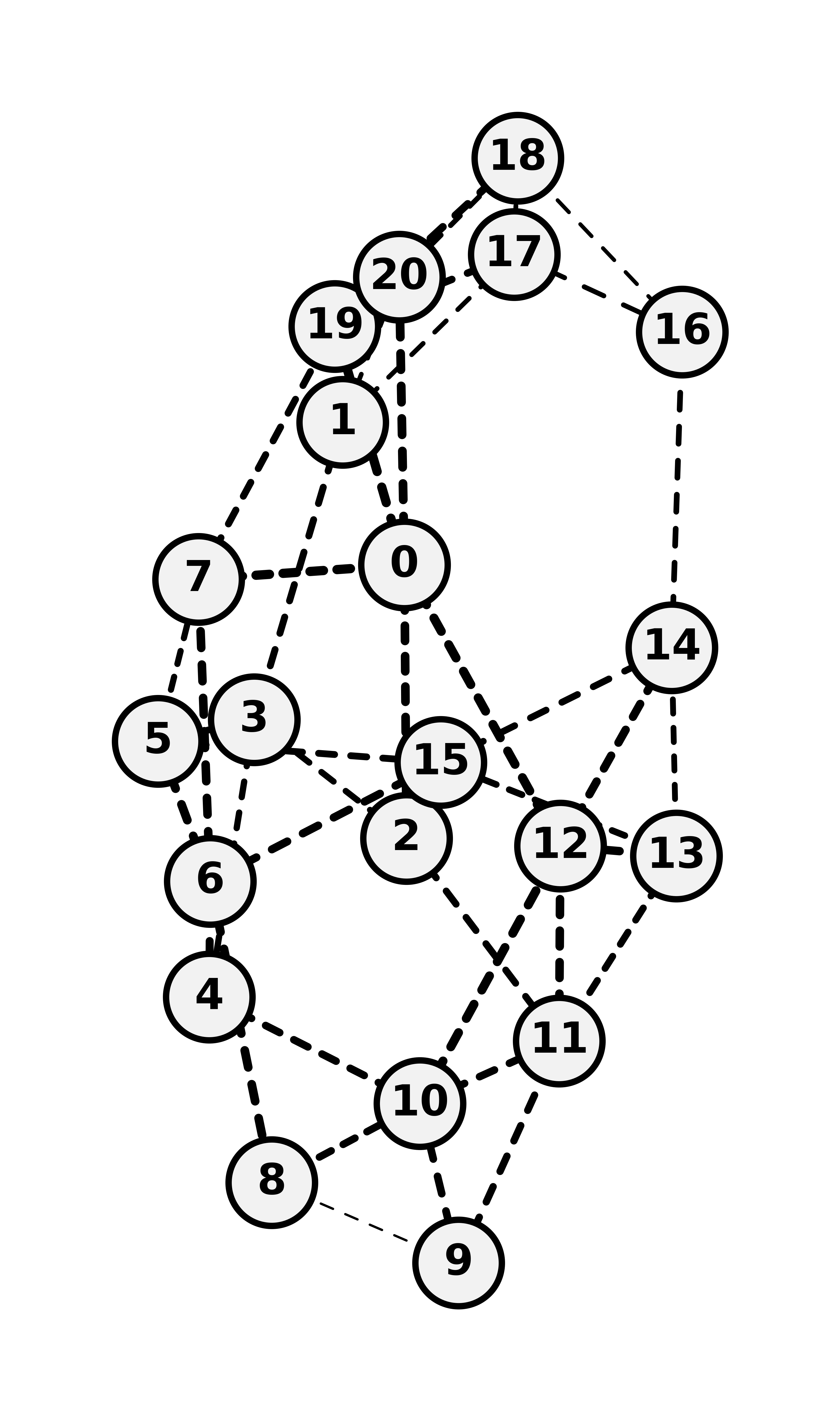

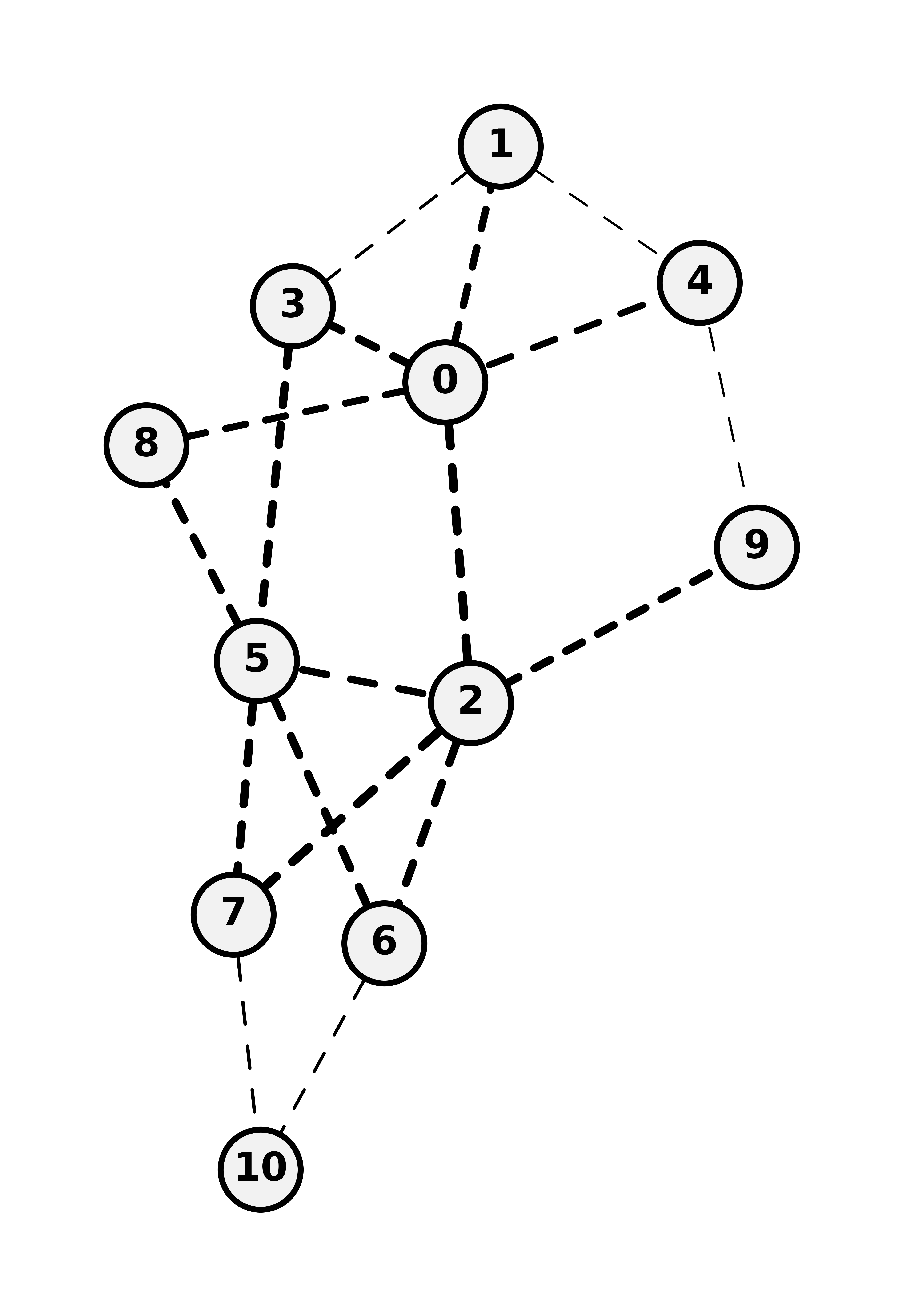

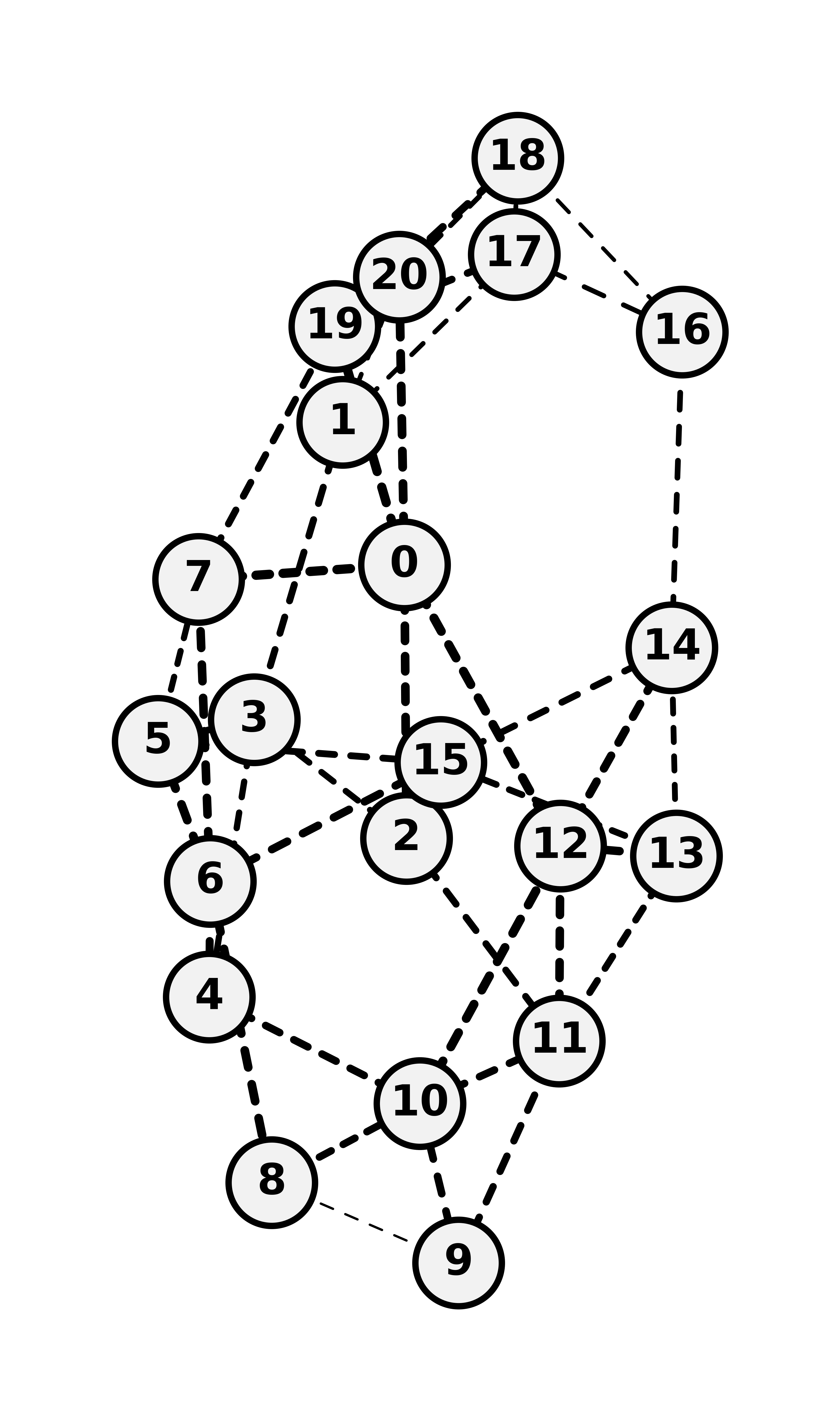

Figure 5: Topologies used for comprehensive evaluation: from mini5 and B4 through large real-world and synthetic nx-* graphs.

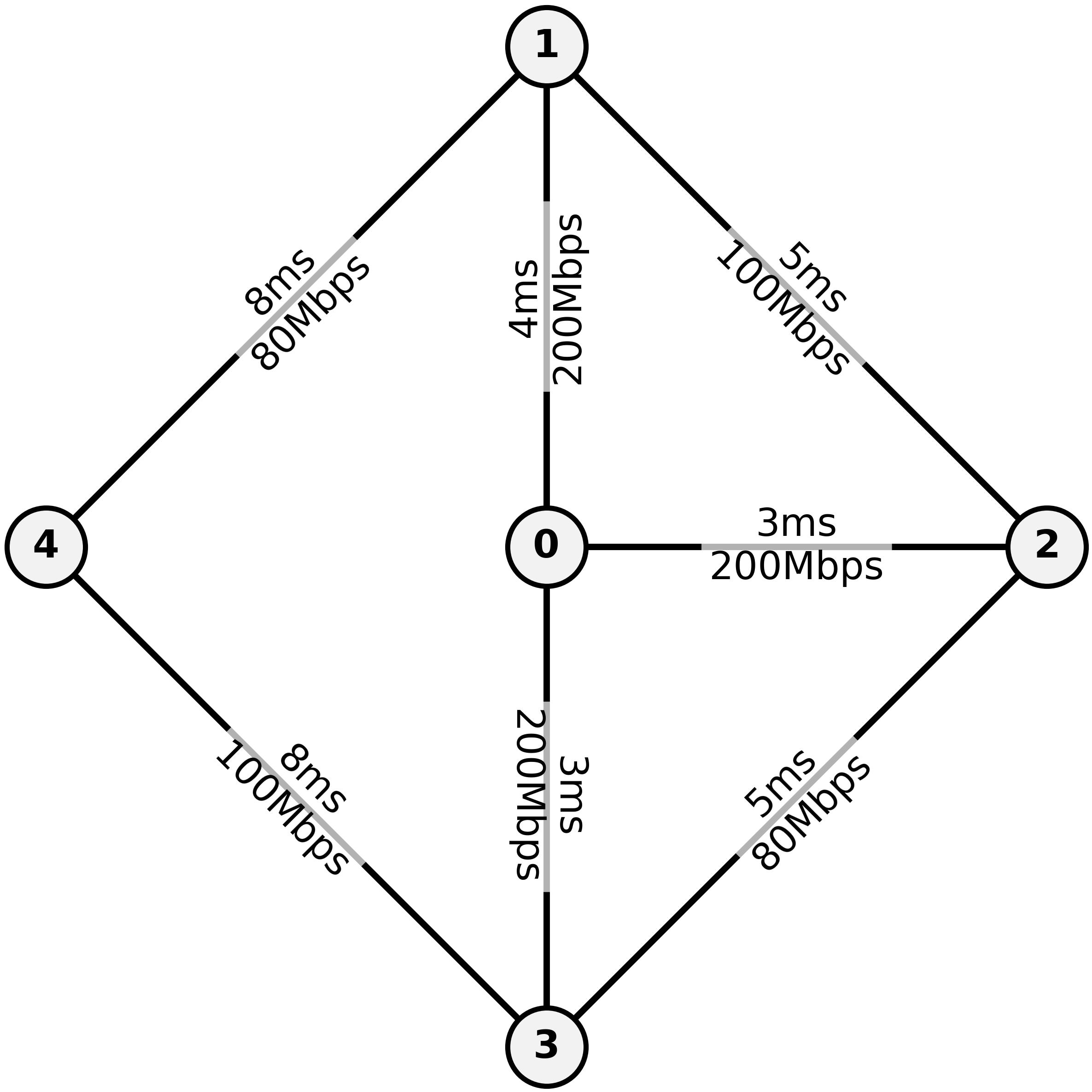

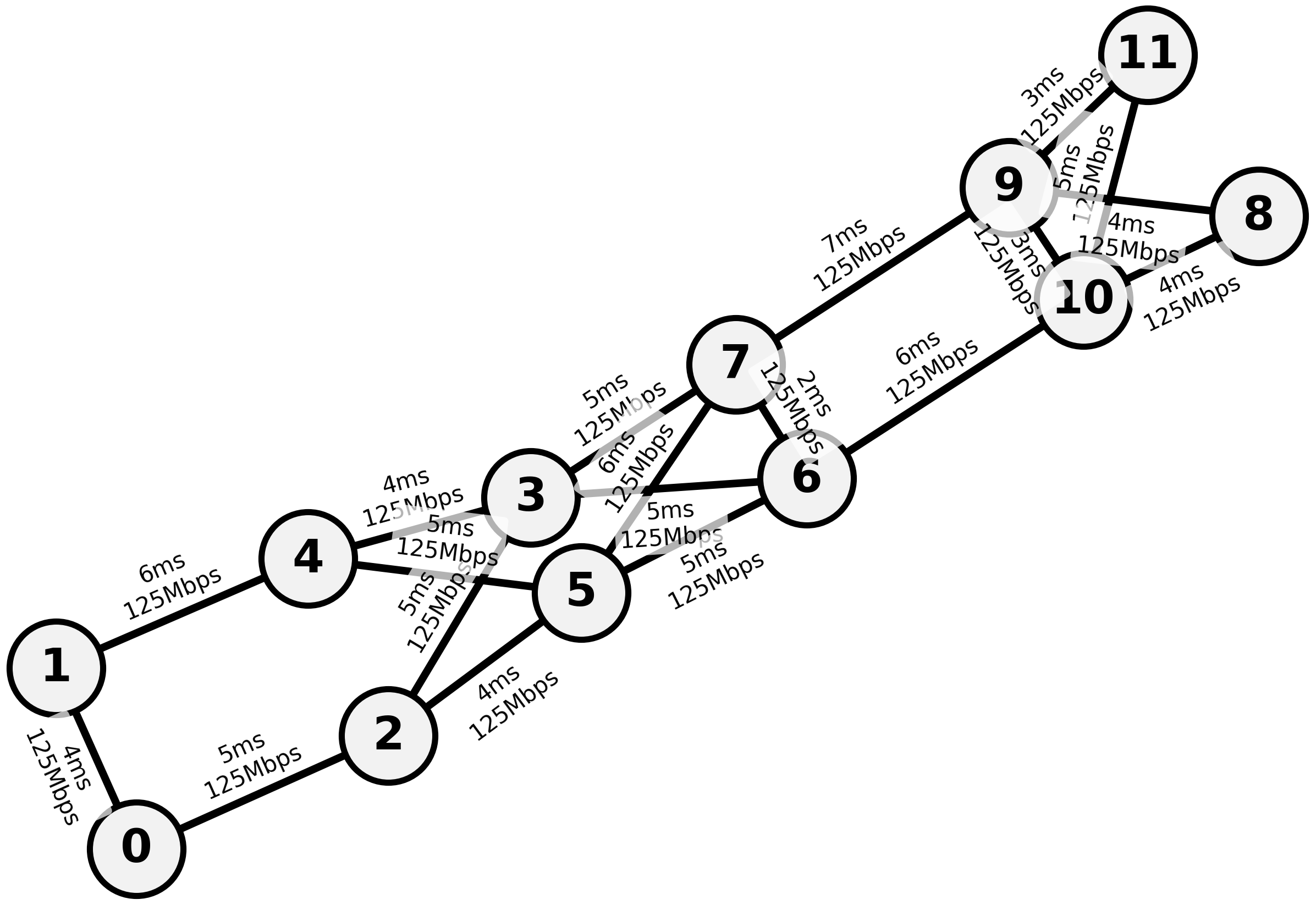

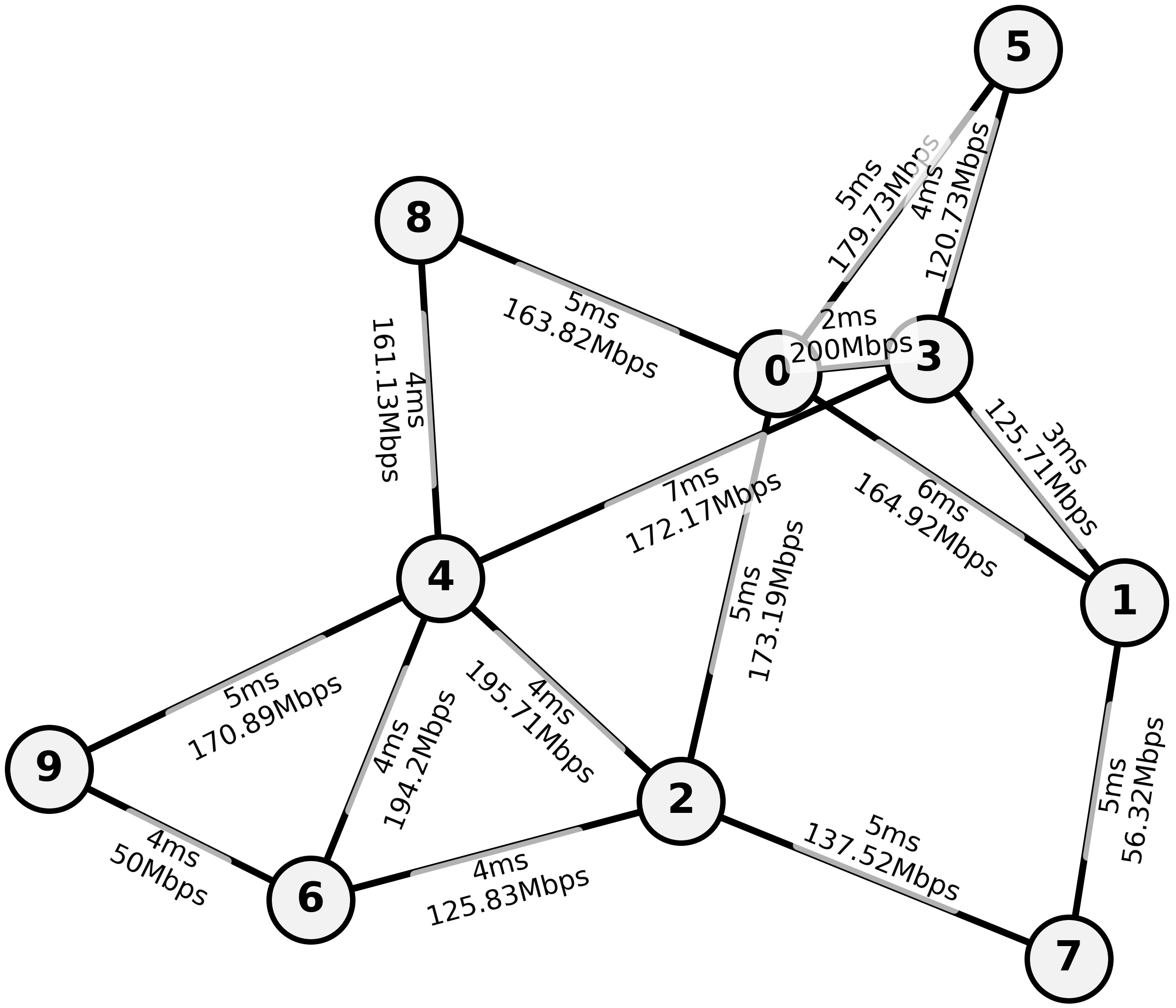

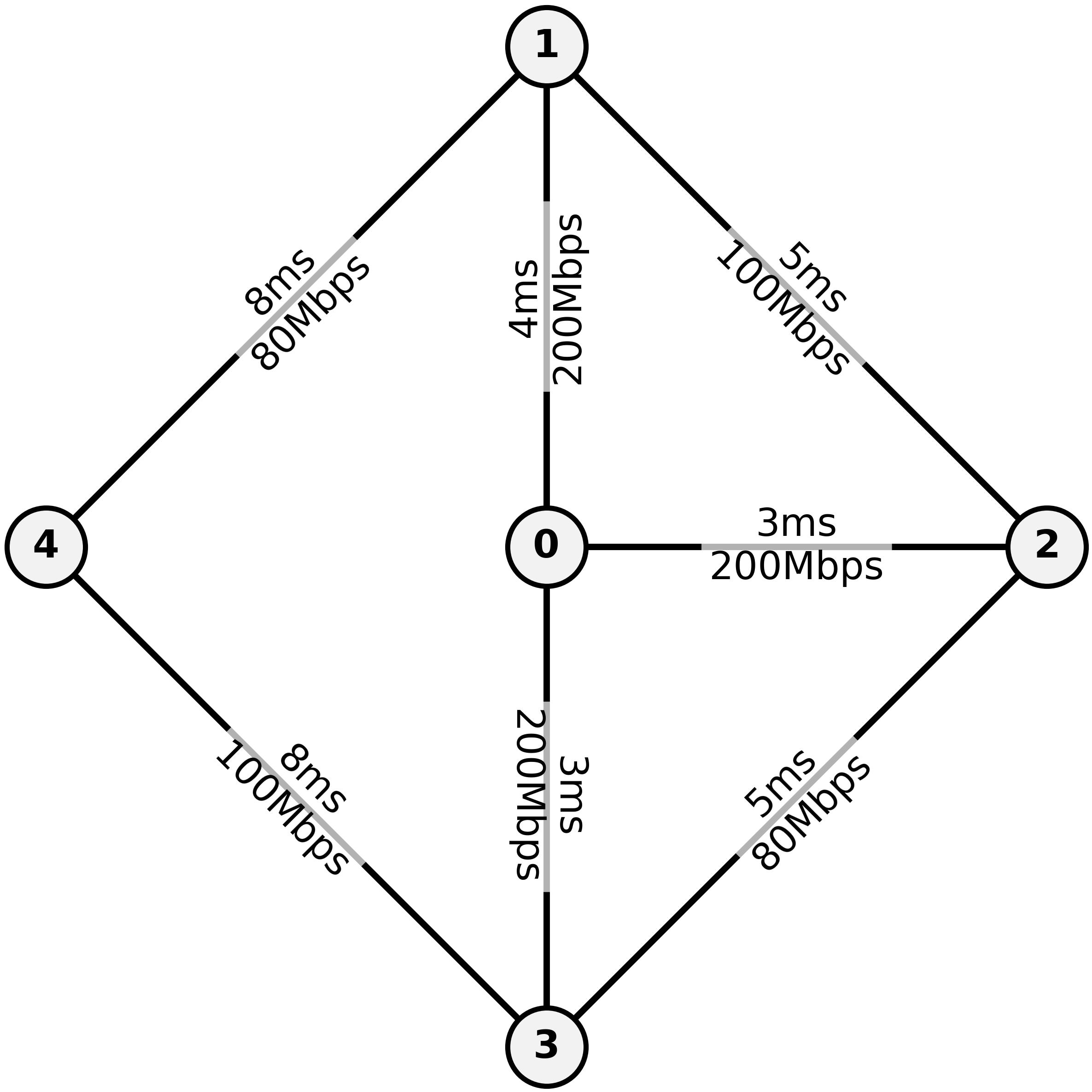

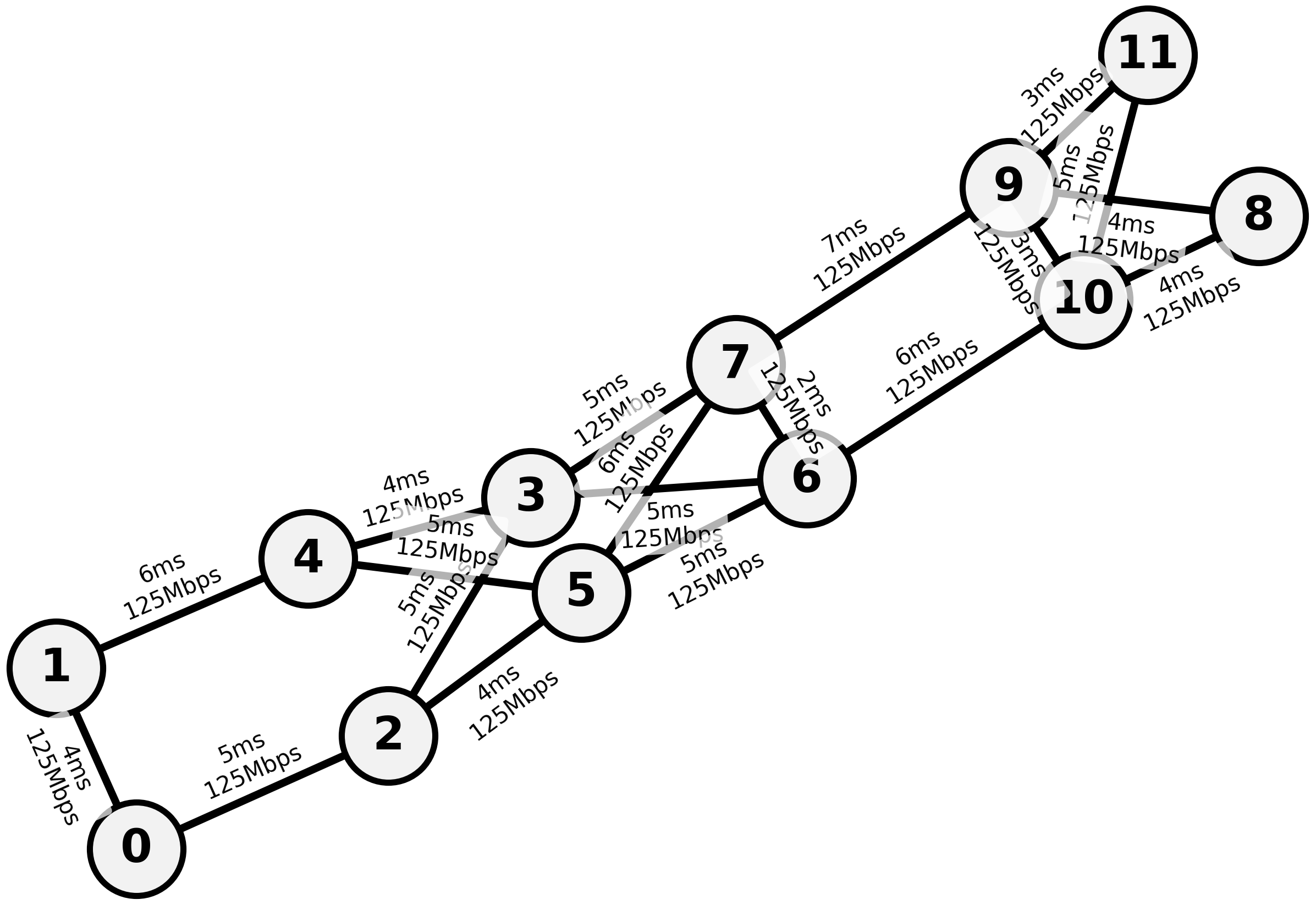

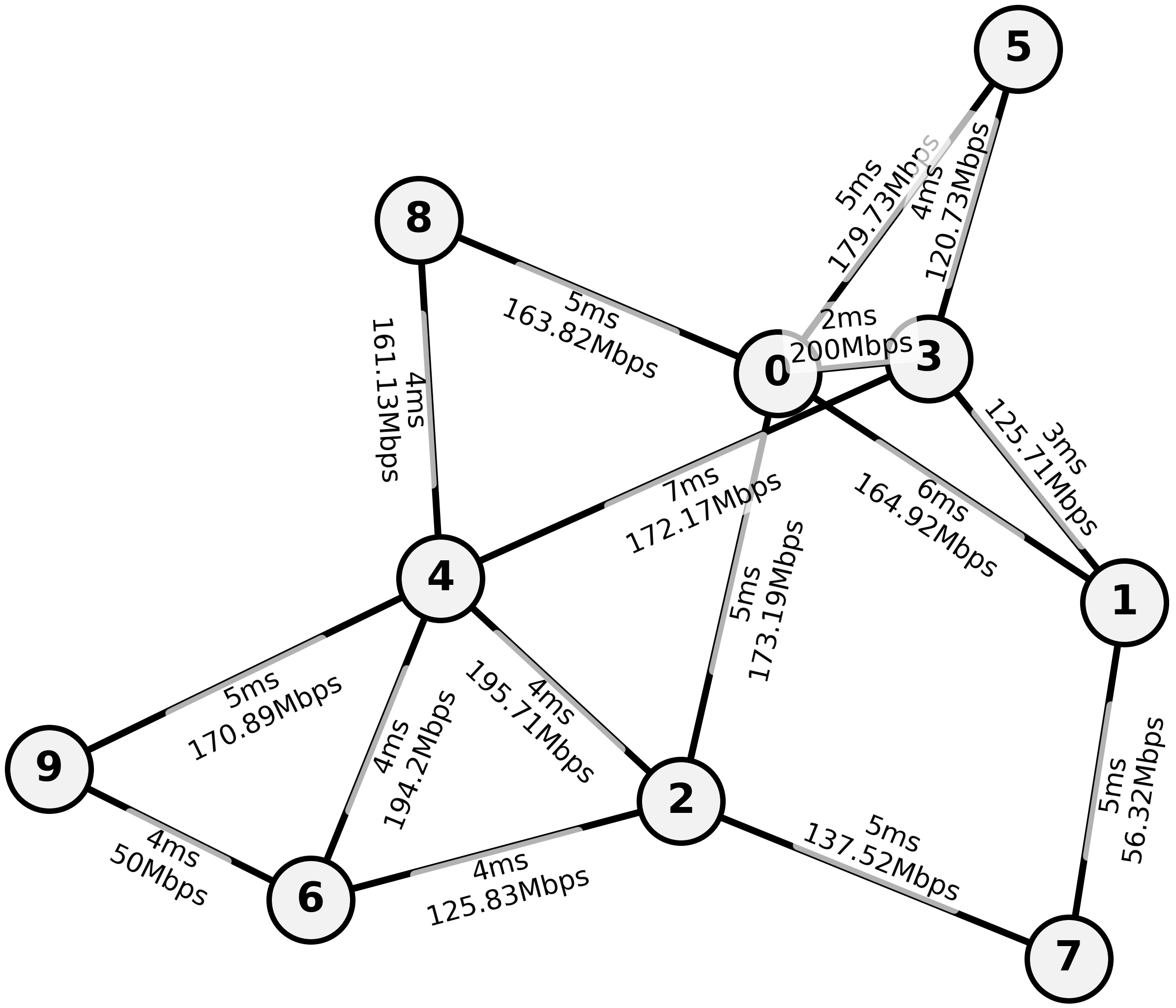

Figure 6: Visualization of sample network topologies, including per-link datarate and propagation delays.

Deployment Mode and Delay Sensitivity

- Local-Multi deployment (distributed agents/observers, per-node partial observation and action inference) is empirically optimal when delay is respected. Centralized observer/decision modes are consistently outperformed, highlighting the penalty of centralized bottlenecks or delayed global snapshots.

- Increased inference delay proportionally reduces throughput; performance is near-monotonic in λac, especially for large topologies (see GEANT results and scaling plots).

- Training converges with either centralized or local observation, but Local-Multi deployment is required to realize the benefit at inference time; multi-agent RL accelerates convergence, while the choice of algorithm (MAPPO vs. IPPO) has minimal impact.
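The interaction between observation delay and inference delay can be made concrete with a small Python sketch. It assumes that λac scales the measured inference time multiplicatively (the source only says inference time is "modulated" by it) and that lower values mean faster inference; `decision_staleness` and its parameters are hypothetical names, and the numbers are made up for illustration.

```python
def decision_staleness(observation_age, inference_time, lambda_ac, install_delay=0.0):
    """Age of the observed state at the moment a new routing table takes effect.

    Assumption: lambda_ac multiplies the measured inference time, so
    lambda_ac < 1 models hardware or parallelism speedup.
    """
    return observation_age + lambda_ac * inference_time + install_delay


# Illustrative values in milliseconds: snapshot is 1 ms old, inference takes 4 ms.
for lambda_ac in (1.0, 0.5, 0.25):
    age = decision_staleness(observation_age=1.0, inference_time=4.0, lambda_ac=lambda_ac)
    print(lambda_ac, age)  # 1.0 -> 5.0, 0.5 -> 3.0, 0.25 -> 2.0
```

Under this reading, a centralized agent pays twice: its observations are older because they travel further, and its routing updates incur an additional distribution delay, which would enter through `install_delay`.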

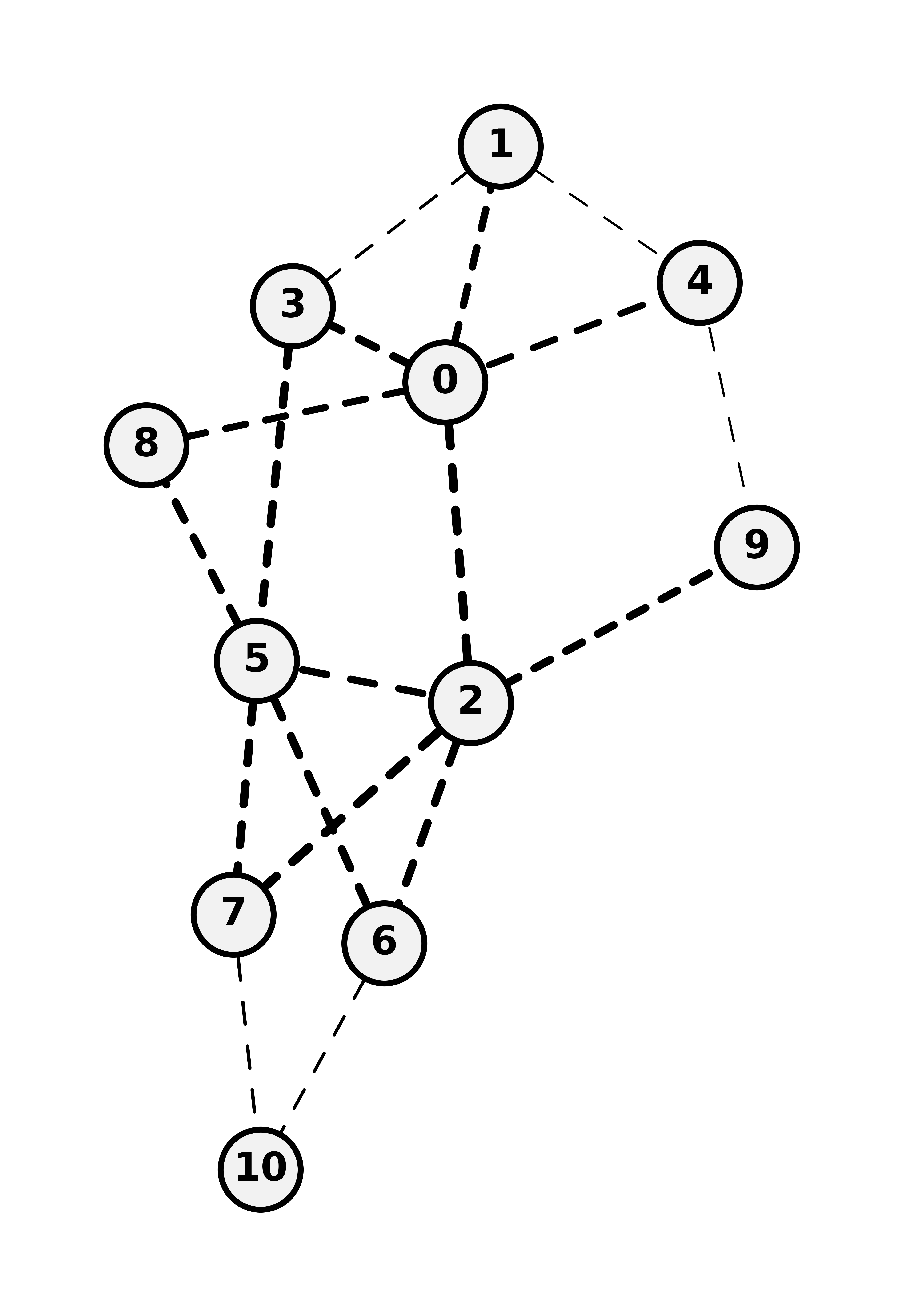

Figure 7: Additional topology visualizations for the nx-* and nx-S families; edge thickness/dashing encode bandwidth/latency.

Ablations and Additional Observations

- LOGGIA’s architectural ablations indicate that each design choice matters: the log-space output and the increased MPN depth and dimension each contribute to final performance.

- Path-level, as opposed to edge-level, exploration provides no benefit; local edge-level stochasticity suffices for robust optimization when coupled to strong credit assignment and maximum-entropy regularization.

- Training with DAgger-style IL, followed by RL, significantly improves final policy quality over pure RL or batch behavioral cloning.

- Hardware acceleration matters: reducing per-step inference time translates directly into a throughput increase in the high-λac (delay-maximized) regime.
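For readers unfamiliar with DAgger-style imitation learning, the following toy Python sketch shows the general pattern: the learner's own policy generates the visited states, an expert relabels them, and the aggregated dataset is used for retraining. It covers only the imitation stage, not the subsequent RL fine-tuning, and everything in it (the 1-D environment, the expert rule, the tabular learner, all function names) is a hypothetical stand-in rather than the setup used for LOGGIA.

```python
import random
from collections import Counter, defaultdict


def expert_action(state):
    """Hypothetical expert: always step toward 0 on the integer line."""
    return -1 if state > 0 else 1


def fit(dataset):
    """'Train' a tabular policy: majority expert label per visited state."""
    votes = defaultdict(Counter)
    for state, action in dataset:
        votes[state][action] += 1
    return {s: c.most_common(1)[0][0] for s, c in votes.items()}


def rollout(policy, start, horizon, rng):
    """Run the learner's current policy; unseen states get a random action."""
    states, s = [], start
    for _ in range(horizon):
        states.append(s)
        a = policy.get(s, rng.choice([-1, 1]))
        s = max(-5, min(5, s + a))  # clip to the toy state space [-5, 5]
    return states


def dagger(iterations=8, horizon=10, seed=0):
    rng = random.Random(seed)
    dataset, policy = [], {}
    for _ in range(iterations):
        visited = rollout(policy, rng.randint(-5, 5), horizon, rng)
        # Key DAgger step: the expert labels states the learner actually reached.
        dataset += [(s, expert_action(s)) for s in visited]
        policy = fit(dataset)  # retrain on the aggregated dataset
    return policy


policy = dagger()
print(sorted(policy.items()))
```

The contrast with batch behavioral cloning is that the training states come from the learner's own trajectories, so the policy also receives labels for states it reaches through its own mistakes.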

Implications and Future Directions

Practical

- LOGGIA’s scalable, fully decentralized deployment, delay-robust inference, and strong generalization make it a viable candidate for integration into real-world wide-area and data center fabrics that require rapid adaptation.

- Hardware-aware deployment and local inference are essential; centralization is not only suboptimal but can undermine neural approaches entirely, as previous “oracle” global models are unrealistic.

Theoretical

- The results articulate and validate the need for delay-aware RL and GNN-based policies in closed-loop network control, extending the canonical MDP formalism to include asynchronous observation/action and partial observability as first-class primitives.

- The combination of local rewards and imitation pretraining is critical for credit assignment in sparse, high-variance, multi-agent network settings.

Future Directions

- Extension to explicit multipath routing (ECMP, Segment Routing), sophisticated multi-objective reward structures (e.g., QoS fairness, latency, loss), and formal linkage to networked/delay-MDP theory [adlakhaNetworkedMarkovDecision2012, katsikopoulosMarkovDecisionProcesses2003].

- Integration with in-band telemetry and lossy communication modeling; adoption of communication-efficient message passing and compression techniques for massive networks.

- Deployment on custom hardware with graph accelerator support (e.g., FPGAs) to further reduce inference delay and boost real-world performance [zhangGraphAGILEFPGABasedOverlay2023, procacciniSurveyGraphConvolutional2024].

- Adaptation to non-IP domains (transportation, energy), recognizing the broader pattern of neural decision-making in closed-loop, networked control.

Conclusion

LOGGIA delivers a comprehensive framework for delay-aware, telemetry-infused network routing based on distributed graph neural policies, validated by end-to-end numerical superiority over both classical and neural baselines when subject to deployment-realistic delays. The work demonstrates the practical necessity of such closed-loop, fully distributed architectures for next-generation network AI and provides robust empirical evidence justifying this approach, as well as a foundation for further theoretical and system-level research in neural networked control.

Reference: "Towards Near-Real-Time Telemetry-Aware Routing with Neural Routing Algorithms" (2604.02927)