Quantifying Trust: Financial Risk Management for Trustworthy AI Agents

Abstract: Prior work on trustworthy AI emphasizes model-internal properties such as bias mitigation, adversarial robustness, and interpretability. As AI systems evolve into autonomous agents deployed in open environments and increasingly connected to payments or assets, the operational meaning of trust shifts to end-to-end outcomes: whether an agent completes tasks, follows user intent, and avoids failures that cause material or psychological harm. These risks are fundamentally product-level and cannot be eliminated by technical safeguards alone because agent behavior is inherently stochastic. To address this gap between model-level reliability and user-facing assurance, we propose a complementary framework based on risk management. Drawing inspiration from financial underwriting, we introduce the Agentic Risk Standard (ARS), a payment settlement standard for AI-mediated transactions. ARS integrates risk assessment, underwriting, and compensation into a single transaction framework that protects users when interacting with agents. Under ARS, users receive predefined and contractually enforceable compensation in cases of execution failure, misalignment, or unintended outcomes. This shifts trust from an implicit expectation about model behavior to an explicit, measurable, and enforceable product guarantee. We also present a simulation study analyzing the social benefits of applying ARS to agentic transactions. ARS's implementation can be found at https://github.com/t54-labs/AgenticRiskStandard.

Explain it Like I'm 14

What is this paper about?

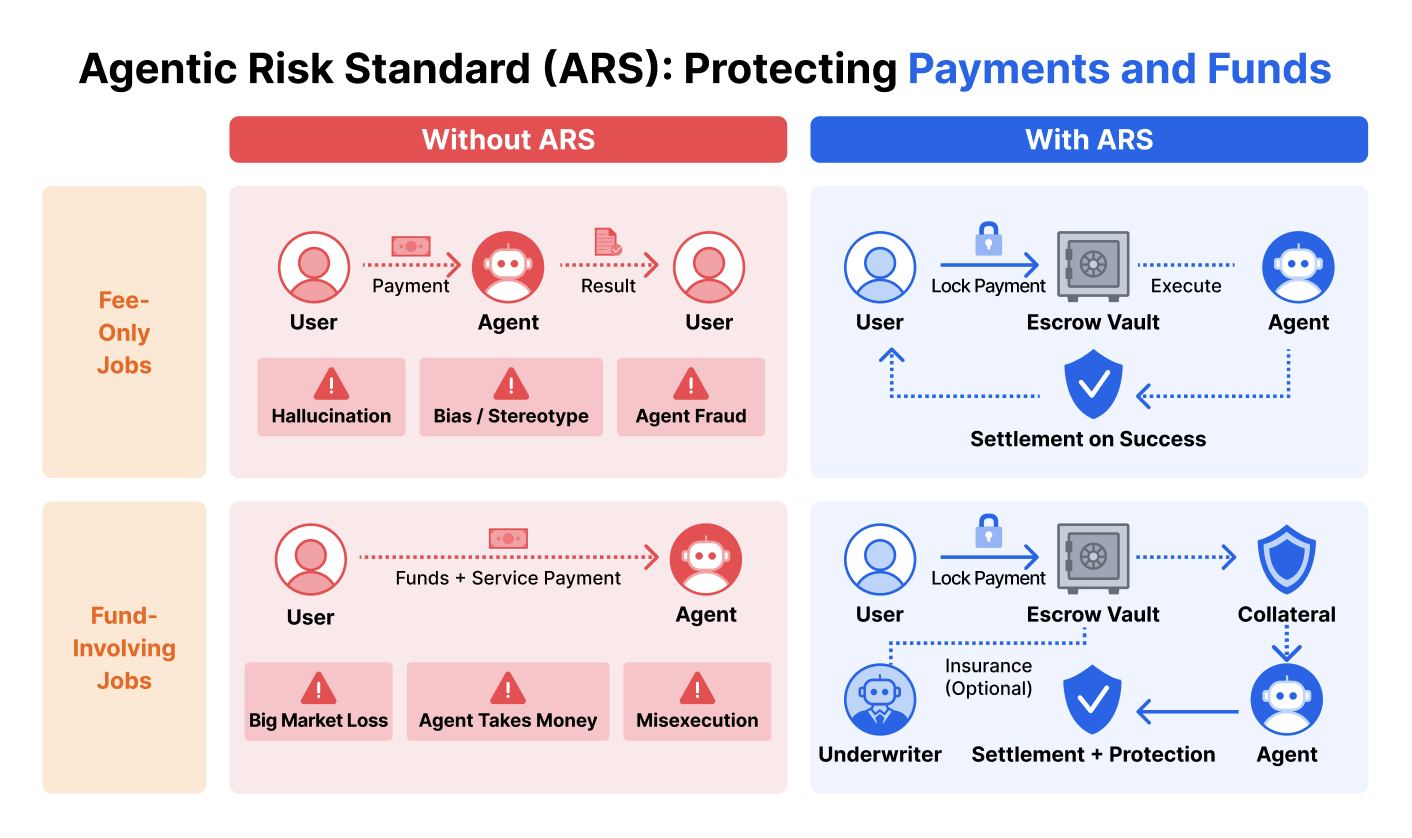

This paper is about making AI “agents” (programs that can act for you, like paying a bill, filing taxes, or trading money) safer to use in the real world. Instead of only trying to make the AI’s brain perfect, the authors propose a new way to protect users at the money-and-payments layer. They introduce the Agentic Risk Standard (ARS), a set of rules for how to hold payments, handle mistakes, and compensate users if an AI agent messes up.

What questions are the authors trying to answer?

- How can we protect people when AI agents take actions that involve real money or sensitive tasks?

- How can we move from “we hope the AI behaves” to “we guarantee what happens if it doesn’t”?

- Can we borrow ideas from finance (like escrow, insurance, and security deposits) to make AI services more trustworthy?

How did they approach the problem?

The authors design ARS as a “settlement-layer” standard—think of it as a safety net built into how you pay for AI services. It doesn’t change how the AI thinks; it changes how money is handled before, during, and after the AI does a job.

Simple definitions you need

- Escrow: Like when a trusted third party holds your payment and only releases it if the job is done right. If not, you get a refund.

- Underwriting: Like buying insurance. An “underwriter” agrees to cover certain losses in exchange for a small fee (a premium).

- Premium: The price you pay for that insurance.

- Collateral: Like a security deposit. The AI service provider puts down money that can be taken if they break the rules.

ARS in action: a step-by-step

Here’s how a typical job works under ARS, in clear steps:

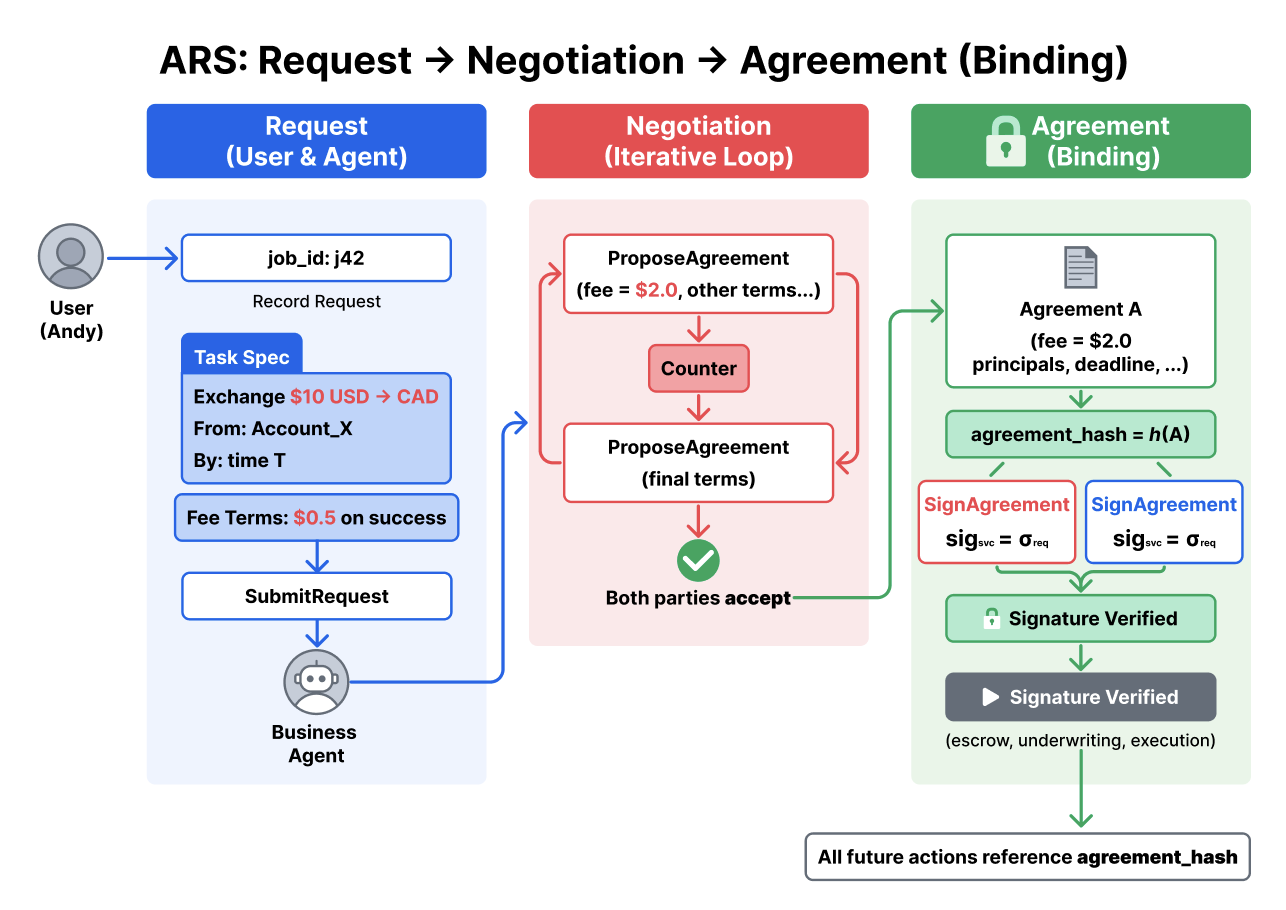

- Agreement: The user and the AI service agree on what the job is, how success will be checked, the fee, deadlines, and what counts as failure.

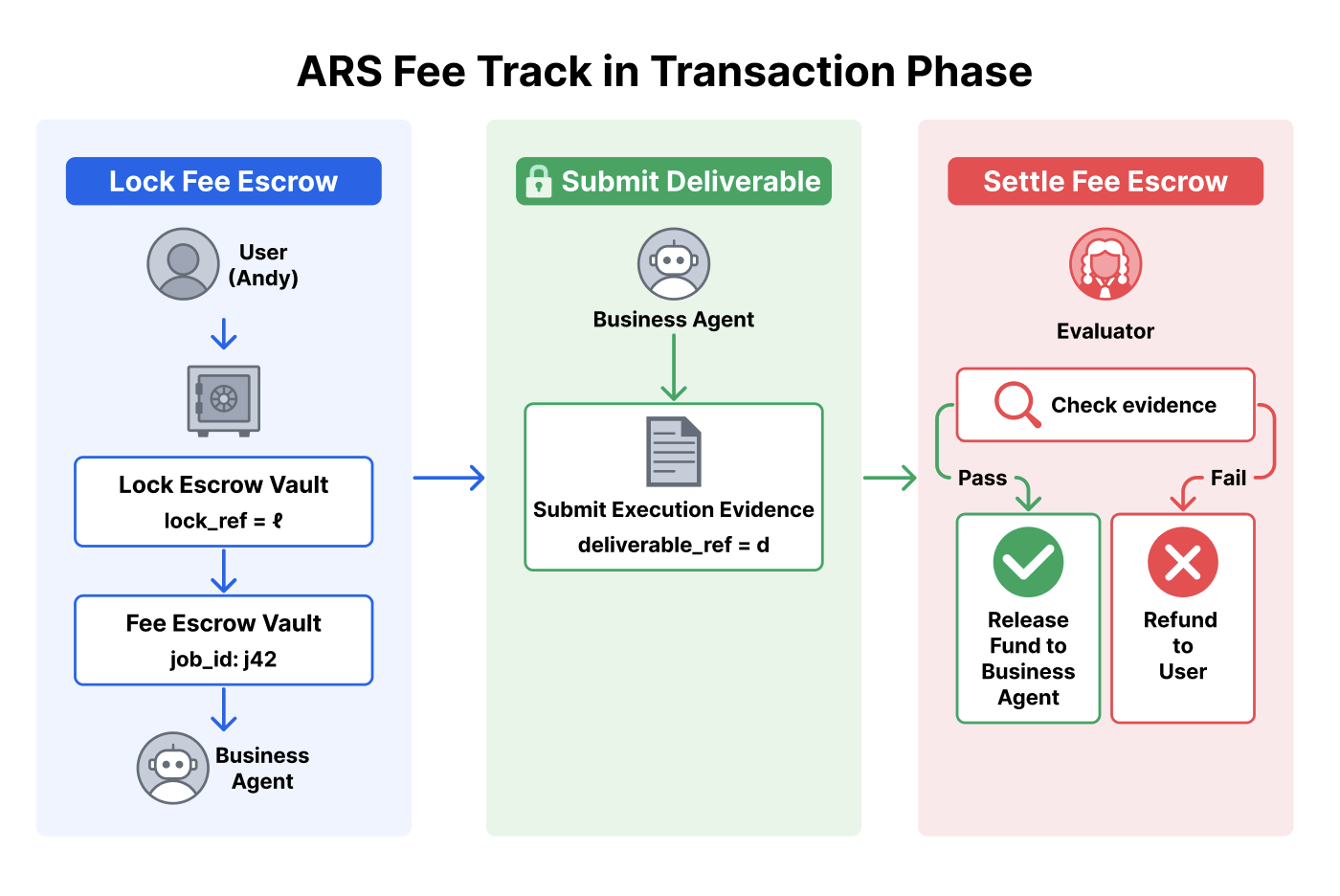

- Lock the fee (escrow): The user’s fee goes into a holding account. It won’t be paid until the result is verified.

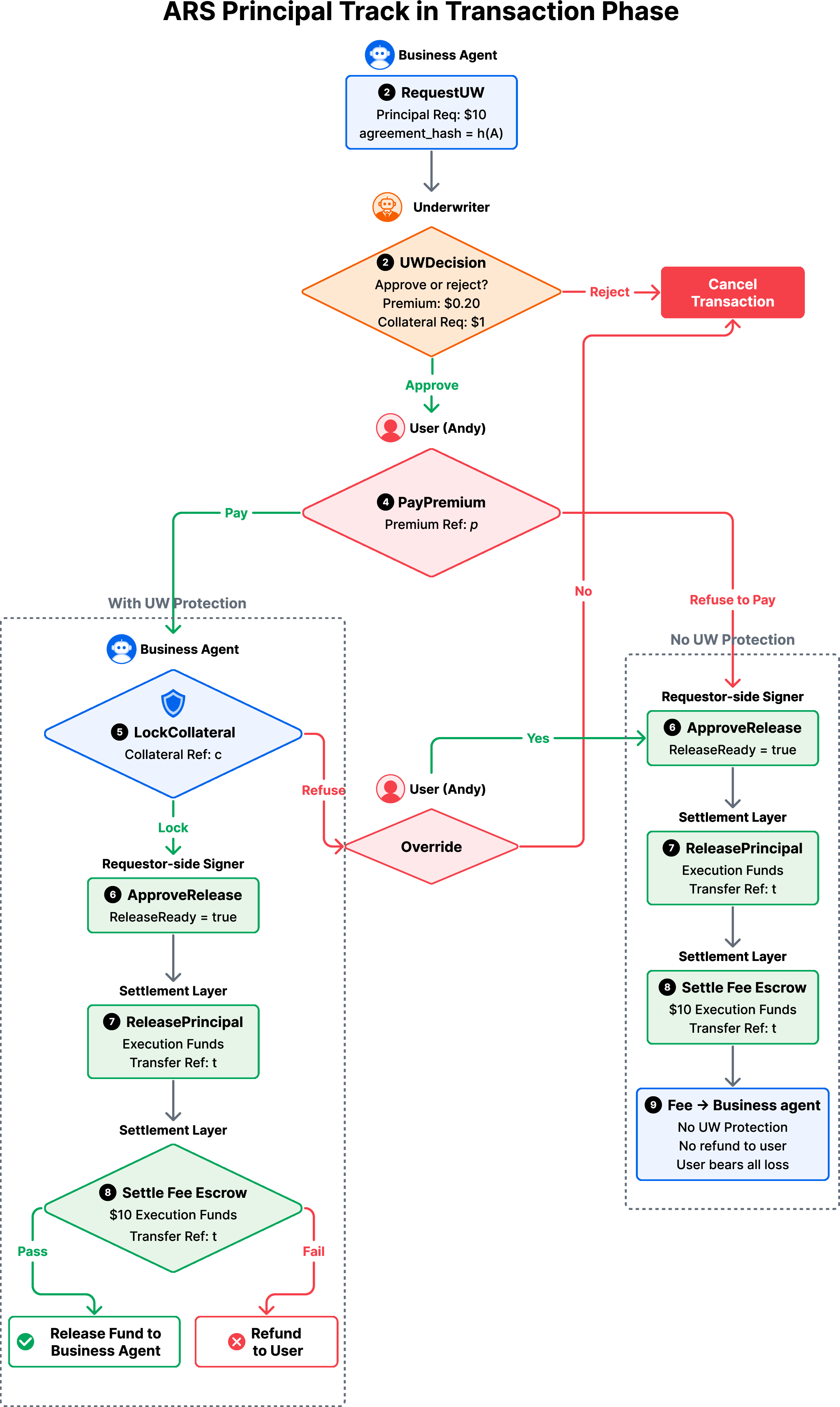

- If the job moves money (fund-involving):

  - The user can choose insurance (underwriting) by paying a premium.

  - The provider may have to post collateral (a security deposit).

  - Only after these are in place is the user’s principal (the actual funds to be moved) released for execution.

- Do the job: The AI agent performs the task and submits proof (like receipts or documents).

- Evaluate: A checker/referee verifies if the job met the agreed rules.

- Settle:

  - If success: the escrowed fee is released to the provider and collateral is returned.

  - If failure: the fee is refunded; if insured, the user gets paid back according to the policy; collateral may be partially or fully taken to help cover losses.
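The settle step above can be sketched in a few lines of Python. This is a minimal illustration, not the ARS implementation: the `Job` fields, the `settle` function, the rule that collateral is taken before the underwriter pays, and the `coverage_limit` cap are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Job:
    fee: float                    # service fee locked in escrow
    collateral: float = 0.0       # provider's security deposit, if any
    insured: bool = False         # did the user buy underwriting?
    coverage_limit: float = 0.0   # hypothetical cap on the underwriter's payout


def settle(job: Job, success: bool, user_loss: float = 0.0) -> dict:
    """Map the evaluator's verdict to payments (illustrative rules only)."""
    if success:
        # Success: provider gets the fee and its collateral back.
        return {
            "fee_to_provider": job.fee,
            "fee_refund_to_user": 0.0,
            "collateral_returned": job.collateral,
            "collateral_slashed": 0.0,
            "underwriter_payout": 0.0,
        }
    # Assumption: collateral is taken first, up to the size of the user's loss.
    slashed = min(job.collateral, user_loss)
    # Assumption: the underwriter covers what remains, up to the coverage limit.
    remaining = user_loss - slashed
    payout = min(remaining, job.coverage_limit) if job.insured else 0.0
    return {
        "fee_to_provider": 0.0,
        "fee_refund_to_user": job.fee,
        "collateral_returned": job.collateral - slashed,
        "collateral_slashed": slashed,
        "underwriter_payout": payout,
    }
```

For instance, a failed insured job with a 5 fee, 20 of collateral, and a user loss of 50 would, under these assumed rules, refund the fee, take all 20 of the collateral, and leave the underwriter to cover the remaining 30.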

The standard also cleanly separates two kinds of tasks:

- Fee-only tasks (like making a slide deck): only the fee is at risk pre-check, so escrow and verification are enough.

- Fund-involving tasks (like trading or transferring money): because your money must move before you can check results, ARS adds underwriting and collateral to protect you.
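The split between the two task kinds can be expressed as a small lookup. The sketch below is only an illustration of the distinction drawn above; the enum, the function name, and the safeguard labels are hypothetical and do not come from the ARS codebase.

```python
from enum import Enum
from typing import List


class TaskKind(Enum):
    FEE_ONLY = "fee_only"              # e.g. making a slide deck
    FUND_INVOLVING = "fund_involving"  # e.g. trading or transferring money


def safeguards_for(kind: TaskKind) -> List[str]:
    """List the protections the summary associates with each task kind."""
    # Every job gets escrow of the fee plus verification of the result.
    safeguards = ["fee_escrow", "verification"]
    if kind is TaskKind.FUND_INVOLVING:
        # Money moves before results can be checked, so more layers apply.
        safeguards += ["underwriting", "collateral"]
    return safeguards
```

Note that underwriting is described as a user choice and collateral as something the provider "may" have to post, so a real agreement would decide per job which of the extra layers is actually switched on.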

What did they find?

- There’s a “guarantee gap” today: even well-aligned AI agents can still fail because their behavior is somewhat unpredictable. Technical fixes lower risk but cannot erase it.

- ARS helps close that gap by turning vague trust into clear, enforceable rules about payments and compensation. Instead of hoping nothing goes wrong, you know exactly what happens if it does.

- The authors built the ARS framework and ran simulations showing how it can improve user protection and encourage adoption of AI agents. While they don’t push a one-size-fits-all pricing model, their analysis highlights how escrow, collateral, and underwriting can be tuned to balance user safety, provider participation, and market growth.

- ARS is modular: it works across many types of tasks and doesn’t force one risk model—teams can plug in their own risk assessments while using the same settlement rules.

Why does this matter?

- Safer real-world use: As AI agents start handling money, legal documents, or code, mistakes can be costly. ARS gives people confidence to delegate—because downside risk is bounded and compensation is pre-agreed.

- Clear accountability: ARS defines who pays what and when, using receipts and signed records. This makes disputes rarer and faster to resolve.

- Faster adoption: Many industries rely on financial safeguards (escrow for e-commerce, insurance for doctors, collateral for loans). Bringing the same ideas to AI agents can unlock more high-stakes, valuable use cases.

Bottom line

This paper shifts trust from “trust the AI model” to “trust the transaction.” By combining escrow (hold payments), collateral (security deposits), and underwriting (insurance), the Agentic Risk Standard makes AI-driven jobs safer, clearer, and fairer. That means people and companies can use AI agents for more important tasks—with real protections if things go wrong.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of concrete gaps and unresolved questions that future research should address to turn ARS from a principled proposal into a robust, deployable standard.

- Empirical validation: Lack of real-world case studies quantifying ARS’s impact on user outcomes (loss reduction, dispute rates, adoption) and provider economics (conversion, churn, margins) across domains.

- Pricing models: No concrete actuarial methods for estimating loss probabilities and severities for diverse agentic tasks; unclear data requirements, feature sets, and calibration procedures for premiums, deductibles, and limits.

- Portfolio risk and tail dependence: Absent modeling of correlated and systemic failures (e.g., model version bugs or prompt exploits causing mass losses), reinsurance structures, and capital buffers to ensure underwriter solvency.

- Capital requirements: No framework for setting minimum capital, reserve adequacy, and stress tests for underwriters and collateral pools under severe but plausible scenarios.

- Oracle/evaluation robustness: How to design verifiable, tamper-resistant acceptance tests and “auditable signals” for off-chain outcomes; preventing oracle manipulation and ensuring evaluator independence.

- Dispute resolution design: Unspecified arbiter selection, anti-bias safeguards, appeals processes, SLAs for decisions, and fee allocation during disputes; incentive alignment for evaluators.

- Legal enforceability: Unclear how “contractually enforceable” guarantees map to binding legal contracts across jurisdictions, including licensing for insurance, consumer protection laws, and limitations on arbitration clauses.

- KYC/AML and sanctions: Integration with identity verification and compliance for fund-releasing tasks across payment rails and jurisdictions is unspecified.

- Fraud and collusion: No mechanisms to detect or deter collusion between user, provider, and evaluator (e.g., engineered failures to extract payouts) or moral hazard from users or agents.

- Identity and authorization: Practical methods to authenticate humans vs. assistant agents, manage keys, handle compromise/recovery, and implement the proposed 1-of-2 and 2-of-3 approval rules securely at scale.

- Custody security: Threat model and hardening of escrow, collateral, and payout vaults (e.g., smart-contract audits, key management, operational security) and failure handling if custody components or payment rails fail.

- Payment rails and reversibility: Handling chargebacks, ACH reversals, partial settlements, and finality mismatches between on-chain and off-chain rails; reconciliation across heterogeneous systems.

- Coverage triggers: Formal, machine-checkable definitions for “misalignment,” “misexecution,” and “unintended outcomes,” including edge cases, to avoid ambiguity and gaming.

- Non-monetary harms: Frameworks to quantify and compensate non-monetary losses (e.g., codebase corruption, data breaches, IP leakage, reputational harm), which the paper defers as “context-dependent.”

- Partial and milestone settlement: Support for multi-stage tasks (milestones, partial success), pro-rata releases, and differential coverage across stages is not specified.

- Cancellation and unwind semantics: Clear rules and fairness for premium refunds, collateral unlock, and fee refunds under cancellations, timeouts, or ambiguous outcomes.

- Human-override path: UX, consent capture, and safeguards against dark patterns when users waive underwriting and accept full risk; logging and auditability of override decisions.

- Scalability and latency: Performance of multi-signature approvals, underwriting decisions, and evaluations for high-throughput, low-latency tasks (e.g., trading) is untested.

- Governance of ARS and evaluators: Who controls the settlement layer and evaluator registry; how governance avoids centralization and conflicts of interest; upgrade and versioning policies.

- Interoperability: Concrete mappings to MCP/UCP/ACP and other commerce protocols, including schemas for structured agreements and cross-standard compatibility.

- Simulation realism: The paper’s simulation is not shown to reflect real-world loss distributions, behavioral heterogeneity, or market frictions; sensitivity analyses and validation against empirical incident data are missing.

- Privacy-preserving evidence: Methods (e.g., zero-knowledge proofs, TEEs) to provide verifiable outcome evidence without revealing sensitive financial or business data are not developed.

- Multi-agent/multi-party jobs: Support for tasks spanning multiple providers, chained workflows, or hierarchical agents, including composable coverage and conflict resolution among multiple underwriters.

- Long-running/dynamic tasks: Re-pricing, re-collateralization, and mid-execution contract amendments for evolving jobs (e.g., agent replanning, external shocks) are unspecified.

- Adverse selection and fairness: How to prevent coverage pools from attracting only high-risk jobs; fairness of premiums across users and avoidance of discriminatory features in pricing models.

- Reputation systems: Incorporation of provider/user track records into pricing and collateral requirements; sybil resistance and portability of reputation across platforms.

- Model/version risk: Accounting for risks associated with underlying model updates, jailbreaks, or supply-chain changes that may cause correlated failures across providers.

- Catastrophic risk backstops: Design of mutualized pools, clearinghouse-like mechanisms, or public-private backstops for black-swan agent failures; recovery planning.

- FX and market execution risks: Treatment of slippage, latency, liquidity gaps, and rate oracles for fund-involving tasks; clear assignment of market vs. execution risk.

- Benchmarking and data sharing: Lack of standardized incident taxonomies, loss datasets, and privacy-preserving reporting mechanisms to support cross-provider risk modeling.

- Payout performance: Guarantees on payout timelines, partial/advance payments, penalties for late payment, and automated enforcement if underwriter funds are insufficient.

- Incentive compatibility: Formal analysis ensuring that underwriters, evaluators, and providers have incentives aligned with truthful reporting, rigorous evaluation, and loss mitigation.

- Formal verification: No formal proofs of the state machine’s safety/liveness; absence of model checking or adversarial analysis against byzantine participants.

- User comprehension: Standardized disclosures that make coverage terms, limits, and exclusions legible to non-expert users; measuring comprehension and informed consent.

- Cost-benefit trade-offs: Quantification of operational overhead, transaction costs, and their impact on small-value tasks; thresholds at which ARS becomes economically viable.

- Adoption pathways: Strategies to bootstrap underwriter capacity and liquidity, incentivize early provider participation, and migrate existing agent services into ARS.

- Regulatory compliance for insurance: Clarity on whether underwriting constitutes regulated insurance, licensing requirements, handling of claims disputes, and cross-border compliance.

- Collateral valuation and liquidation: Policies for marking-to-market volatile collateral, liquidation triggers and oracles, and procyclicality risks during market stress.

- Coverage structuring: Guidance on limits, deductibles, co-insurance, and exclusions to balance user protection with underwriter sustainability across task types.

- Monitoring and auditing: Continuous monitoring, audit frameworks, and red-teaming of the settlement layer and evaluators to detect drift, gaming, and emerging risks.
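Several of the gaps above (pricing models, coverage structuring) refer to deductibles, limits, and co-insurance. For readers unfamiliar with these terms, the sketch below shows one conventional way they combine into a payout. It is generic insurance arithmetic, not something the paper specifies: the function name and the order of operations (deductible first, then the user's co-insurance share, then the limit) are assumptions, and real policies differ on that order.

```python
def covered_payout(loss: float, deductible: float, limit: float,
                   coinsurance: float) -> float:
    """Underwriter's payout for one loss under an assumed policy structure.

    coinsurance is the fraction of the covered loss the user keeps (0 to 1).
    """
    if loss < 0 or deductible < 0 or limit < 0:
        raise ValueError("amounts must be non-negative")
    if not 0.0 <= coinsurance <= 1.0:
        raise ValueError("coinsurance must be between 0 and 1")
    # The user absorbs the first `deductible` units of any loss.
    after_deductible = max(0.0, loss - deductible)
    # The user also keeps a `coinsurance` share of what is left.
    underwriter_share = after_deductible * (1.0 - coinsurance)
    # The underwriter never pays more than the policy limit.
    return min(limit, underwriter_share)
```

With a deductible of 100, a limit of 500, and 20% co-insurance, a loss of 300 yields a payout of roughly 160, while a loss of 1000 is capped at the 500 limit.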

Practical Applications

Immediate Applications

Below are applications that can be piloted or deployed today by leveraging ARS’s escrow, collateral, underwriting, and evaluation primitives. They are grouped as concise bullets; each notes sector(s), likely tools/workflows, and key dependencies.

- AI shopping and booking agents with escrow-backed service fees

- Sectors: e-commerce, travel, consumer fintech; Daily life and industry

- What: Consumers delegate price scouting, booking, and order placement; service fees are escrowed and released only if terms (dates, fare class, return policy) match the signed agreement.

- Tools/workflows: “ARS escrow” plugin for agent marketplaces; evaluator oracles to verify itinerary, vendor receipts, return windows.

- Dependencies/assumptions: Reliable delivery verification (APIs, screenshots with hashes); refund and cancellation policies mapped into acceptance criteria; card-on-file payment rails.

- Consumer FX and bill-pay with capped underwriting

- Sectors: finance/fintech; Daily life, SMBs

- What: Agents execute small FX conversions or bill payments under ARS; principal is released only after (i) premium paid, (ii) provider collateral posted (if required), and (iii) underwriter approval; covered failures trigger reimbursement.

- Tools/workflows: Bank/exchange connectors; “principal track” with RequestUW → PayPremium → LockCollateral → ReleasePrincipal; evaluator checks bank receipts.

- Dependencies/assumptions: KYC/AML on payment rails; underwriter capital for small-ticket coverage; clear failure triggers (late, wrong beneficiary, wrong currency, missing receipt).

- API marketplace “escrow-as-a-service” for per-task agent jobs

- Sectors: software, platforms

- What: API aggregators and agent platforms add an ARS-compliant escrow layer for fee-only tasks (report generation, slide creation, spreadsheet cleanup).

- Tools/workflows: Fee track (LockFeeEscrow → SubmitDeliverable → SettleFeeEscrow); evaluator is automated (file hash, linter/tests passed) or human-in-the-loop.

- Dependencies/assumptions: Stable evaluators; fair dispute windows; low-friction signatures (MCP/ACP tool).

- ARS-gated coding assistants for production changes

- Sectors: software/DevOps; Industry and academia (tooling research)

- What: Coding agents submit PRs under a structured agreement; service fees escrowed and released on objective gates (tests pass, coverage thresholds, code owners’ approval). Monetary claims are limited (fee-only), but agreements can include collateralized penalties for policy violations (e.g., editing protected files).

- Tools/workflows: CI/CD oracles; PR policy evaluators; ARS fee track; optional “policy-collateral” for high-risk repos.

- Dependencies/assumptions: Non-monetary harm is monetized via explicit penalties; organizations define precise acceptance criteria; no broad authorization to deploy without human approval.

- Contact-center “autopilot” with refundable commitments

- Sectors: customer service, telecom, SaaS

- What: Agents that offer discounts/credits do so under ARS; fees are escrowed, and providers post collateral to cover unauthorized promises; disputes are adjudicated via evaluator logs.

- Tools/workflows: Conversation logs as evidence; collateral vault; evaluator/arbiter role configured by the enterprise.

- Dependencies/assumptions: Clear policy codification (authorized offers, spend limits); reliable transcript hashing; privacy controls.

- On-chain ARS vaults for DeFi-limited agent execution

- Sectors: decentralized finance, software

- What: Smart contracts implement ARS fee escrow, provider collateral, and automated payout on covered failures for narrowly scoped on-chain tasks (e.g., rebalance between two DEX pools within slippage/time bounds).

- Tools/workflows: Solidity/Move vaults; oracle-based EvaluatorOutcome; parametric triggers (deadline miss, slippage breach).

- Dependencies/assumptions: Oracle correctness; protocol audit; gas/latency constraints; limited strategy scope to bound loss.

- SMB back-office automation with ARS

- Sectors: finance/ops for SMBs; Daily life (sole proprietors)

- What: Invoice drafting (fee-only escrow); payment initiation (fund-involving with underwriting). Claims when payments miss suppliers or exceed authorized amounts.

- Tools/workflows: Accounting system integration; dual-track custody; evaluator uses bank and invoice system receipts.

- Dependencies/assumptions: Permissions scoping; reconciler oracles; premium pricing economically viable at SMB scale.

- “AI freelancer” marketplaces adopting ARS

- Sectors: platforms, gig economy

- What: Task contracts become ARS-structured agreements; escrowed fees and optional provider collateral improve buyer confidence and reduce disputes.

- Tools/workflows: Job lifecycle UI (REQUEST → NEGOTIATION → TRANSACTION → EVALUATION → CLOSED); arbiter marketplace; claims dashboard.

- Dependencies/assumptions: Platform policy alignment; low-friction KYC for providers; normalized deliverable refs (artifact hashes, sandbox runs).

- Academic testbeds for agent risk and settlement

- Sectors: academia

- What: Courses and labs use the open-source ARS to run controlled experiments on pricing, collateralization, moral hazard, and adoption dynamics.

- Tools/workflows: ARS simulation extensions; synthetic markets with tunable failure rates; standardized evaluator oracles.

- Dependencies/assumptions: Public datasets of agent failures; reproducible configs; IRB guidance for fund-involving experiments.

- Procurement and compliance templates with ARS clauses

- Sectors: policy/government, enterprise legal

- What: RFPs and MSAs include ARS-aligned clauses (escrow, evaluator, dispute windows, logs as evidence) for agent-based services.

- Tools/workflows: Contract templates; evaluator registries; compliance checklists.

- Dependencies/assumptions: Legal recognition of structured agreements and digital signatures; data retention standards for evidence.

- Consumer tax-filing assistants with escrowed fees and bounded fund authority

- Sectors: consumer finance; Daily life

- What: Draft-preparation is fee-only escrow; submission authority (fund-involving for filing fees or remittances) is gated by underwriting with small coverage limits.

- Tools/workflows: Evidence via IRS/state receipts; evaluator checks amounts, deadlines, and ID matches.

- Dependencies/assumptions: Data privacy; regulator-friendly audit logs; premium economics for small-ticket risks.
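Several of the workflows above name an ordered sequence of actions: the fee track (LockFeeEscrow → SubmitDeliverable → SettleFeeEscrow) and the principal track (RequestUW → PayPremium → LockCollateral → ReleasePrincipal). A minimal way to enforce such an ordering is sketched below. The step names are taken from the text; the `Track` class and its strictly linear ordering are assumptions for illustration, and the actual ARS state machine may allow optional or parallel steps (for example, collateral is described as required only in some cases).

```python
FEE_TRACK = ["LockFeeEscrow", "SubmitDeliverable", "SettleFeeEscrow"]
PRINCIPAL_TRACK = ["RequestUW", "PayPremium", "LockCollateral", "ReleasePrincipal"]


class Track:
    """Accepts actions only in the listed order (hypothetical helper)."""

    def __init__(self, steps):
        self.steps = list(steps)
        self.done = []

    def apply(self, action: str) -> None:
        # The next expected step, or None once the track has finished.
        if len(self.done) < len(self.steps):
            expected = self.steps[len(self.done)]
        else:
            expected = None
        if action != expected:
            raise ValueError(f"expected {expected!r}, got {action!r}")
        self.done.append(action)

    @property
    def complete(self) -> bool:
        return self.done == self.steps
```

The point of the ordering is visible on the principal track: calling `apply("ReleasePrincipal")` before the premium and collateral steps raises an error, which mirrors the rule that user funds are released only after the protections are in place.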

Long-Term Applications

These applications require further research, scaling, regulatory clarity, robust evaluators/oracles, or deeper integration with payment and legal infrastructure.

- Underwriting markets and reinsurance for AI-agent risk

- Sectors: finance/insurance; Industry and policy

- What: Specialized underwriters price diverse agent risks (software ops, payments, trading, logistics); secondary markets pool and reinsure exposure.

- Tools/workflows: Risk model SDKs; shared claims databases; capital adequacy regimes; dynamic premium APIs.

- Dependencies/assumptions: Actuarial data; standardized triggers; regulatory approval for new insurance lines.

- Agent clearinghouses and central counterparties

- Sectors: finance/market infrastructure

- What: Centralized or decentralized “clearing” of agent transactions with margining, netting, and default management mapped to ARS collateral/payout semantics.

- Tools/workflows: Collateral risk engines; stress testing; default waterfalls.

- Dependencies/assumptions: Interoperability across payment rails; legal entity frameworks; systemic risk oversight.

- Healthcare admin and, eventually, clinical support with ARS guarantees

- Sectors: healthcare

- What: Near-term admin (prior auth, benefits checks) with fee escrow and limited underwriting; long-term clinical decision support with malpractice-like coverage.

- Tools/workflows: HIPAA-compliant evaluator oracles; EMR audit trails; provider-side collateral for misrouting/misdocumentation.

- Dependencies/assumptions: Regulatory pathways; privacy and data minimization; outcome attribution in complex care settings.

- Education agents with performance-backed guarantees

- Sectors: education

- What: Tutoring/coaching agents with escrowed fees contingent on engagement/assignment completion; long-term outcome guarantees (assessments, credentials) with standardized evaluators and limited coverage.

- Tools/workflows: LMS integrations; proctored evaluator oracles; fairness-aware pricing.

- Dependencies/assumptions: Avoidance of perverse incentives; robust measurement of learning outcomes; equity considerations.

- Robotics and physical-world services

- Sectors: robotics, logistics, smart home

- What: Delivery/maintenance robots operate under ARS; collateral and insurance backstop property damage or SLA misses.

- Tools/workflows: IoT-signed telemetry as evidence; parametric triggers (location, time-on-task).

- Dependencies/assumptions: Reliable physical-world oracles; safety certification; tort and insurance alignment.

- Distributed energy and grid services

- Sectors: energy

- What: DER agents (rooftop solar, batteries) bid into markets under ARS with collateralized SLAs and payouts for non-delivery of promised capacity.

- Tools/workflows: Smart-meter evidence; market operator evaluators; collateral pools.

- Dependencies/assumptions: Market rule changes; M&V (measurement and verification) standards; cyber-physical security.

- Cross-border agent commerce with harmonized ARS semantics

- Sectors: payments, policy

- What: Integration with UCP/MCP/ACP-like standards to support cross-rail escrow, collateral, and claims; unified identifiers and receipts.

- Tools/workflows: Multi-rail custodians; FX-aware evaluators; sanctions screening.

- Dependencies/assumptions: KYC/AML harmonization; treatment of digital signatures across jurisdictions.

- Parametric insurance products for agent tasks

- Sectors: insurance, platforms

- What: Pre-specified triggers (deadline, slippage, latency, uptime) automatically pay claims from payout vaults, reducing dispute friction.

- Tools/workflows: Oracle networks; template policy libraries; real-time monitoring.

- Dependencies/assumptions: Oracle integrity; adversarial robustness; anti-gaming mechanisms.

- Enterprise “merge insurance” and ops guarantees

- Sectors: software/DevOps

- What: Providers offer insured CI/CD services; payouts for downtime attributable to agent-initiated changes; collateralized guardrails.

- Tools/workflows: Causality/evidence pipelines; SLO-aware evaluators; premium tied to blast radius.

- Dependencies/assumptions: Causal attribution in complex systems; data-sharing agreements for postmortems.

- Government services and citizen-facing AI with settlement guarantees

- Sectors: public sector

- What: ARS-backed automation for benefits processing, licensing, or filings; citizens compensated for administrative failures.

- Tools/workflows: Public evaluator registries; transparent claims processes; capped coverage.

- Dependencies/assumptions: Legislative mandates; budgeted risk pools; accessibility and due process.

- M2M (machine-to-machine) commerce and supply-chain agents

- Sectors: manufacturing, logistics

- What: Autonomous agents negotiate micro-contracts with escrowed fees, collateralized delivery risks, and standardized claims (e.g., IoT parts ordering, slot booking).

- Tools/workflows: Supply-chain oracles; digital twins for evaluation; interoperable ARS job objects in EDI-like workflows.

- Dependencies/assumptions: Identity/attestation for devices; robust time/date/location proofs; resilience to collusion.
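The parametric triggers mentioned above (deadline, slippage) lend themselves to a mechanical check. The following sketch assumes a fixed payout whenever any trigger fires; the `Policy` and `Outcome` types, their field names, and the fixed-payout rule are hypothetical and are meant only to show what "pre-specified triggers automatically pay claims" could look like in code.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Policy:
    deadline_s: float         # latest acceptable completion time, in seconds
    max_slippage_bps: float   # worst acceptable slippage, in basis points
    payout: float             # fixed amount paid if any trigger fires


@dataclass
class Outcome:
    completed_at_s: float     # when the task actually finished
    slippage_bps: float       # slippage actually observed


def fired_triggers(policy: Policy, outcome: Outcome) -> List[str]:
    """Return the names of the triggers that the outcome breached."""
    reasons = []
    if outcome.completed_at_s > policy.deadline_s:
        reasons.append("deadline_miss")
    if outcome.slippage_bps > policy.max_slippage_bps:
        reasons.append("slippage_breach")
    return reasons


def claim_amount(policy: Policy, outcome: Outcome) -> float:
    """Fixed payout if at least one trigger fired, otherwise nothing."""
    return policy.payout if fired_triggers(policy, outcome) else 0.0
```

Because the decision depends only on measured values, there is little left to dispute once the measurements are trusted, which is why the dependencies above stress oracle integrity.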

Cross-cutting assumptions and dependencies

- Evaluator/oracle quality: Many applications hinge on reliable, auditable outcome signals; sectors will need certified evaluators and tamper-evident logging.

- Legal enforceability: Structured agreements, digital signatures, collateral slashing, and automated payouts must be recognized in relevant jurisdictions.

- Risk data and pricing: Underwriting requires historical failure/claims data; early markets will start with narrow, capped risks and expand as data accrues.

- Identity, permissions, and privacy: Clear role authentication (human vs. assistant), scoped authorizations for principal release, privacy-preserving evidence.

- Interoperability: Integration with existing protocols (MCP, UCP, ACP), payment rails, and custodians; standardized receipts (lock_ref, settlement_ref, payout_ref).

- Incentive alignment: Moral hazard and adverse selection must be managed via collateral, deductibles, exclusions, and evaluator independence.

- Usability: Human override UX (1-of-2 and 2-of-3 signing) must be simple and explainable to avoid errors and fatigue.
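The 1-of-2 and 2-of-3 signing rules mentioned above are instances of a k-of-n approval check. A minimal sketch follows; the function name and the signer labels used in the usage note are hypothetical, since this summary does not say which roles hold the keys, and a real system would verify cryptographic signatures rather than compare names.

```python
from typing import Set


def approved(signers: Set[str], authorized: Set[str], threshold: int) -> bool:
    """True if at least `threshold` distinct authorized parties have signed."""
    if not 1 <= threshold <= len(authorized):
        raise ValueError("threshold must be between 1 and the number of authorized parties")
    # Signatures from anyone outside the authorized set are ignored.
    return len(signers & authorized) >= threshold
```

A 1-of-2 rule would then be `approved({"user"}, {"user", "assistant"}, 1)`, which passes, while a 2-of-3 rule such as `approved({"user"}, {"user", "provider", "underwriter"}, 2)` fails until a second authorized party signs.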

Taken together, ARS enables a practical path to move from probabilistic model reliability to enforceable, auditable product guarantees—first for fee-only tasks through escrow, then for fund-involving tasks via modular underwriting and collateral as evaluators, markets, and regulation mature.

Glossary

- Actuarial risk pricing: Quantitative pricing of risk based on statistical models of loss frequency and severity, used to set premiums and coverage terms. "actuarial risk pricing"

- Actuarially fair premium: A premium equal to the expected loss, before additional loadings for costs and profit. "the actuarially fair premium plus a loading factor"

- Adjudication: The formal process of resolving disputes by an authorized decision-maker. "delivery verification and dispute adjudication"

- Agent Commerce Protocol (ACP): A protocol that coordinates agent transactions via smart contracts with verifiable agreements and evaluation phases. "Agent Commerce Protocol (ACP) defines a smart-contract-mediated workflow for agent transactions"

- Agentic Risk Standard (ARS): A transaction-layer standard specifying escrow, collateral, underwriting, and claims for AI agent jobs. "we introduce the Agentic Risk Standard (ARS), a payment settlement standard for AI-mediated transactions."

- Arbiter: A neutral authority responsible for evaluating outcomes and resolving disputes. "evaluator/arbiter responsible for delivery verification and dispute adjudication"

- Authorization predicate: A precise logical condition that must be satisfied before a protected action (e.g., fund release) is executed. "only when the release authorization predicate defined by is satisfied"

- Bid bond: A surety bond guaranteeing a bidder’s commitment to enter a contract if awarded, used in procurement. "Bid bond / performance bond"

- Clearinghouse: A central counterparty that manages trade settlement and default risk between market participants. "Margin requirements + clearinghouse"

- Collateral: Assets pledged as security to reduce counterparty risk and cover potential losses. "collateral reduces counterparty exposure"

- Collateralization: The practice of securing obligations with pledged assets to mitigate default risk. "escrow, collateralization, insurance, and clearinghouses"

- Counterparty exposure: The risk that the other party in a transaction fails to perform or defaults. "Escrow eliminates direct counterparty exposure"

- Decentralized finance (DeFi): Blockchain-based financial services (e.g., lending, trading) operating without traditional intermediaries. "DeFi / crypto lending"

- Distribution shifts: Changes in data distribution between training and deployment that can degrade model performance. "distribution shifts"

- Escrow: A conditional custody arrangement where funds are held and released only upon verified conditions. "Escrow is a conditional custody arrangement"

- Escrow vault: A controlled account that holds service fees until conditions for release or refund are met. "service fees are locked in an escrow vault"

- Ex ante: Evaluated or bounded before the fact; used to describe pre-commitment risk assessments. "difficult to bound ex ante"

- FX (foreign exchange): The conversion of one currency into another in financial transactions. "perform the FX conversion"

- Fund-involving tasks: Jobs that require releasing user principal or financial authority before results can be verified. "Fund-involving tasks require releasing a principal or granting financial authority before outcomes can be verified"

- Hallucination: An AI failure mode where the model produces confident but false or fabricated outputs. "Misexecution, hallucination, jailbreaking"

- Jailbreaking: Techniques that bypass model safety guardrails to induce unintended behavior. "Misexecution, hallucination, jailbreaking"

- Loading factor: The premium surcharge added above expected loss to cover risk capital, costs, and profit. "a loading factor that accounts for risk capital, administrative costs, and profit margin"

- Margin requirements: Capital that traders must post to cover potential losses and ensure solvency during market movements. "Margin requirements + clearinghouse"

- Mechanistic interpretability: An approach to explain models by uncovering internal computational mechanisms. "mechanistic interpretability"

- Model Context Protocol (MCP): A standardized interface for connecting model hosts to external tools and contexts. "Model Context Protocol (MCP)-style designs"

- Payment rails: Underlying networks or APIs that execute and settle monetary transfers. "Actual transfers are executed via underlying payment rails"

- Performance bond: A surety instrument guaranteeing completion or performance of contracted obligations. "Performance bond"

- Premium: The price paid to an underwriter for assuming specified risk under a coverage policy. "charges premium for doing so."

- Principal (execution principal): The user’s funds released pre-execution for a task that entails financial exposure. "the execution principal (e.g., the $10 that must be released to perform the FX conversion)"

- Red teaming: Systematic probing of a system (often adversarially) to discover vulnerabilities and failure modes. "red teaming"

- Reimbursement payout vault: A reserve account used to pay approved insurance claims automatically under the policy. "Reimbursement payout vault is optional"

- Risk capital: Capital set aside by an insurer/underwriter to absorb potential losses beyond expected claims. "risk capital, administrative costs, and profit margin"

- Settlement layer: The infrastructure that enforces conditional custody, fund movements, and final transaction settlement. "ARS assumes a settlement layer that implements conditional custody and settlement actions"

- Settlement semantics: The formal rules that map execution outcomes to deterministic payment, refund, or compensation actions. "defines settlement semantics through a structured state machine"

- Slash (slashing collateral): The punitive seizure of pledged collateral upon verified policy-violating failures. "slash/unlock"

- Surety provider: An entity that guarantees a party’s performance via bonds (e.g., bid or performance bonds). "Insurer / surety provider"

- Tool-augmented agents: AI agents that use external tools/APIs to perform structured actions beyond text generation. "Tool-augmented agents integrate APIs and symbolic tools"

- Underwriter: The party that evaluates, prices, and assumes specified risks in exchange for premium. "the underwriter evaluates, prices, and assumes a specified risk"

- Underwriting: The process of assessing, pricing, and contractually assuming risk, often with required collateral and explicit triggers. "Underwriting coverage must be explicitly adopted in the structured agreement."

- Universal Commerce Protocol (UCP): A protocol proposing common primitives for agentic commerce across consumer and payment ecosystems. "Universal Commerce Protocol (UCP) proposes a common language and primitives for agentic commerce"
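The glossary entries for the actuarially fair premium and the loading factor can be tied together with a small worked example. The sketch assumes a single possible loss with a known probability and a loading applied as a proportional surcharge; both simplifications, and the function names, are assumptions made here, since the quoted text says only that the premium is the fair premium "plus a loading factor".

```python
def fair_premium(p_loss: float, severity: float) -> float:
    """Expected loss: probability of a covered failure times its cost."""
    if not 0.0 <= p_loss <= 1.0:
        raise ValueError("p_loss must be a probability")
    return p_loss * severity


def loaded_premium(p_loss: float, severity: float, loading: float) -> float:
    """Fair premium plus a proportional surcharge (assumed form of loading)."""
    # `loading` stands for risk capital, administrative costs, and margin.
    return fair_premium(p_loss, severity) * (1.0 + loading)
```

For a task with a 2% chance of a 500 loss, the fair premium is about 10; with a 25% loading the user would be charged about 12.5.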
