An algorithmic Polynomial Freiman-Ruzsa theorem

Abstract: We provide algorithmic versions of the Polynomial Freiman-Ruzsa theorem of Gowers, Green, Manners, and Tao (Ann. of Math., 2025). In particular, we give a polynomial-time algorithm that, given a set $A \subseteq \mathbb{F}_2^n$ with doubling constant $K$, returns a subspace $V \subseteq \mathbb{F}_2^n$ of size $|V| \leq |A|$ such that $A$ can be covered by $2K^C$ translates of $V$, for a universal constant $C>1$. We also provide efficient algorithms for several "equivalent" formulations of the Polynomial Freiman-Ruzsa theorem, such as the polynomial Gowers inverse theorem, the classification of approximate Freiman homomorphisms, and quadratic structure-vs-randomness decompositions. Our algorithmic framework is based on a new and optimal version of the Quadratic Goldreich-Levin algorithm, which we obtain using ideas from quantum learning theory. This framework fundamentally relies on a connection between quadratic Fourier analysis and symplectic geometry, first speculated by Green and Tao (Proc. of Edinb. Math. Soc., 2008) and which we make explicit in this paper.


Explain it Like I'm 14

What is this paper about?

This paper is about finding hidden structure in big collections of 0–1 vectors (think: lists of n bits). The authors turn a famous theorem (the Polynomial Freiman–Ruzsa theorem) into efficient, polynomial‑time algorithms. They also make explicit, and put to work, a bridge between two areas that don’t usually meet: “higher‑order” Fourier analysis (a way to spot patterns) and symplectic geometry (a kind of geometry of pairs and how they interact). Along the way, they show how ideas from quantum learning theory can inspire better classical algorithms.

The big questions the paper asks

- If you have a set A of n‑bit strings where adding the set to itself doesn’t make it much bigger (this is called “small doubling”), can you quickly find a simple, structured shape (a subspace V no bigger than A) such that a small number of shifted copies of V cover all of A?

- Can we turn deep, non‑algorithmic math theorems about structure (like inverse theorems for the Gowers norm) into efficient algorithms?

- Can we design an optimal “quadratic Goldreich–Levin” algorithm—that is, a fast way to find the strongest quadratic pattern hidden in data?

- Can quantum ideas help us do the above faster, or inspire simpler methods?

Key ideas explained simply

Before the methods, here are a few core terms in everyday language:

- Small doubling: Take a set A of n‑bit strings. Form A+A, the set of all sums a+b (bitwise addition mod 2) with a and b in A. If $|A+A| \le K|A|$ for a number K that is not too big (the doubling constant), A behaves a bit like a subgroup. In plain terms: when you “add A to itself,” it doesn’t explode in size, so A must be quite organized.

- Subspace and translates: A subspace V is the set of all solutions to some linear rules (for example, all bitstrings whose bits in certain chosen positions add up to 0 mod 2). A “translate” is V shifted by a fixed vector v, written v + V. “Covering A by translates of V” means A sits inside a small number of shifted copies of V.

- Gowers $U^3$ norm: Think of this as a pattern detector that is especially good at noticing “quadratic” patterns, that is, patterns built from products of pairs of bits.

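- Quadratic phase: A simple kind of quadratic pattern; for example, a function that outputs $(-1)^{q(x)}$, where $q$ is a quadratic polynomial in the bits of $x$ (such as $q(x) = x_1x_2 + x_3$ mod 2). Correlation with a quadratic phase means your data lines up with a quadratic pattern.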

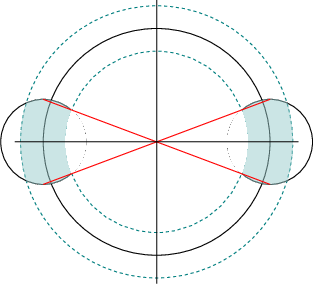

- Symplectic geometry (high level): A way to study pairs (like “position and momentum” in physics) and how they relate. Here, it gives a geometric picture of quadratic patterns. “Lagrangian subspaces” are the largest subspaces on which a certain pairing (the symplectic form) vanishes; these end up matching exactly the strongest quadratic patterns.

- Heisenberg/Weyl operators (high level): Think of them as basic “moves” that shift and flip your data. Measuring how your data correlates with these moves reveals structure. These operators also show up in quantum computing and stabilizer codes.
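To make “small doubling” and “covering by translates” concrete, here is a brute-force sketch for tiny examples. It is not the paper's algorithm and scales badly; it assumes n-bit strings are stored as Python integers, so bitwise addition mod 2 is XOR, and the helper names are hypothetical.

```python
from itertools import product


def sumset(A):
    """A + A in F_2^n, with vectors stored as int bitmasks (addition is XOR)."""
    return {a ^ b for a, b in product(A, repeat=2)}


def doubling_constant(A):
    """K = |A + A| / |A|; a subspace has K = 1."""
    return len(sumset(A)) / len(A)


def span(basis):
    """All F_2-linear combinations of the basis vectors, i.e. the subspace V."""
    V = {0}
    for v in basis:
        V |= {u ^ v for u in V}
    return V


def cosets_covering(A, V):
    """The distinct translates a + V that meet A; their union contains A."""
    return {frozenset(a ^ v for v in V) for a in A}


# A is the subspace {000, 001, 010, 011} plus the single extra point 100.
A = {0b000, 0b001, 0b010, 0b011, 0b100}
V = span([0b001, 0b010])
print(doubling_constant(A))                # 1.6  (|A + A| = 8, |A| = 5)
print(len(V), len(cosets_covering(A, V)))  # 4 2  (|V| <= |A|, two translates)
```

In this toy case the two translates $V$ and $100 + V$ cover A. The PFR theorem says a subspace with few covering translates always exists when K is small; finding it efficiently from samples and membership queries is what the paper's algorithm addresses.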

How the authors approach the problem

- They start from the recently proved Polynomial Freiman–Ruzsa (PFR) theorem: if $|A+A| \le K|A|$, then A can be covered by polynomially many (in K) translates of a subspace V with $|V| \le |A|$. But that proof is not algorithmic: it doesn’t tell you how to find V efficiently.

- Instead of following the original (information‑theory/entropy) proof, they use a “bridge” to higher‑order Fourier analysis: the Polynomial Gowers Inverse theorem (PGI). There’s a known (combinatorial) equivalence: PFR ↔ PGI. Turning PGI into an algorithm lets them turn PFR into an algorithm too.

- They build a new, near‑optimal Quadratic Goldreich–Levin algorithm. Think of it as a searchlight that scans your data to find the strongest quadratic pattern, fast.

- They make the symplectic geometry link explicit: the best patterns correspond to Lagrangian subspaces in a certain “phase space.” This geometric viewpoint guides their algorithms.

- They borrow ideas from quantum learning (about learning stabilizer states) and “dequantize” them into classical algorithms—keeping much of the speed and simplicity.
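Checking whether a given subspace is Lagrangian is plain linear algebra over $\mathbb{F}_2$. The sketch below assumes the standard symplectic form $\omega((a,b),(a',b')) = a\cdot b' + b\cdot a' \pmod 2$ on the phase space $\mathbb{F}_2^n \times \mathbb{F}_2^n$ (a common convention; the paper's normalization may differ) and stores each half of a phase-space vector as an integer bitmask. It only tests a given subspace; it is not the paper's procedure for finding high-weight Lagrangians, and the function names are hypothetical.

```python
def dot(x, y):
    """F_2 dot product of two bitmask-encoded vectors."""
    return bin(x & y).count("1") % 2


def symplectic_form(v, w):
    """omega((a, b), (a', b')) = a.b' + b.a' mod 2 on F_2^n x F_2^n."""
    (a, b), (a2, b2) = v, w
    return (dot(a, b2) + dot(b, a2)) % 2


def rank_f2(vectors):
    """Rank over F_2 of int-encoded vectors, by Gaussian elimination on leading bits."""
    pivots = {}  # leading-bit position -> reduced vector with that leading bit
    for v in vectors:
        while v:
            top = v.bit_length() - 1
            if top not in pivots:
                pivots[top] = v
                break
            v ^= pivots[top]
    return len(pivots)


def is_lagrangian(basis, n):
    """True iff span(basis) is isotropic for omega and has dimension n (half of 2n)."""
    # omega is bilinear, so vanishing on all pairs of spanning vectors suffices.
    isotropic = all(symplectic_form(v, w) == 0 for v in basis for w in basis)
    packed = [(a << n) | b for a, b in basis]  # (a, b) as one 2n-bit integer
    return isotropic and rank_f2(packed) == n


# Graph {(a, Ma)} of the symmetric matrix M = [[0, 1], [1, 0]] over F_2^2.
print(is_lagrangian([(0b01, 0b10), (0b10, 0b01)], 2))  # True
print(is_lagrangian([(0b01, 0), (0, 0b01)], 2))        # False: omega = 1 on this pair
```

The first example is no accident: for any symmetric matrix $M$, the graph $\{(a, Ma)\}$ is isotropic because $a^\top M a' + (Ma)^\top a' = 2\,a^\top M a' = 0$ over $\mathbb{F}_2$, and it has dimension n, so it is always Lagrangian.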

What they found (main results) and why it’s important

Here are the main takeaways, with why each matters:

- Algorithmic PFR (classical):

- Result: A polynomial‑time randomized algorithm that, given a set A with small doubling ($|A+A| \le K|A|$), finds a subspace V with $|V| \le |A|$ such that A is covered by $2K^C$ translates of V, a number polynomial in K.

- Efficiency: Runs in about $\tilde{O}(n^4)$ time as a function of n, and uses only random samples from A and a nearly polylogarithmic number of membership queries.

- Why it matters: It turns a deep existence theorem into a practical tool. Many problems in theoretical computer science (coding, testing, extractors, complexity, quantum testing) need a fast way to recover structure—not just to know it exists.

- Algorithmic PGI (Polynomial Gowers Inverse):

- Result: A fast algorithm that, if a function f has large $U^3$ norm (say $\|f\|_{U^3} \ge \gamma$, so it hides quadratic structure), finds an explicit quadratic polynomial q such that $(-1)^{q(x)}$ correlates with f by an amount polynomial in γ.

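- Efficiency: About $\tilde{O}(n^3)$ time as a function of n, with time and query counts that grow quasi-polynomially in 1/γ, as $(1/\gamma)^{O(\log(1/\gamma))}$.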

- Why it matters: It upgrades earlier, weaker algorithms and is a key step toward algorithmic PFR.

- Near‑optimal Quadratic Goldreich–Levin:

- Result: Given a function, the algorithm finds a quadratic polynomial whose correlation with it is within a small loss of the best possible correlation, without the exponential blowup of earlier methods.

- Why it matters: This is like a “pattern finder” with almost no loss, improving earlier results that lost correlation exponentially.

- A new framework linking analysis and symplectic geometry:

- Result: They show that the $U^3$ norm can be expressed using Weyl operators and that extremal patterns line up with Lagrangian subspaces.

- Why it matters: This geometric viewpoint simplifies thinking about quadratic structure and guides algorithm design.

- Quantum version with speedups:

- Result: A quantum algorithm for the PFR task that improves both the running time and the number of queries of the classical algorithm by roughly a factor of n.

- Why it matters: It shows how quantum tools can give cleaner, faster approaches and also explains how to “dequantize” them back into classical algorithms.
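The Weyl-operator expression for the $U^3$ norm can be checked numerically on tiny examples. The sketch below assumes one standard convention, $(W_{a,b}f)(x) = (-1)^{b\cdot x} f(x+a)$, and the identity $\|f\|_{U^3}^8 = 2^{-n}\sum_{(a,b)\in\mathbb{F}_2^{2n}} |\langle f, W_{a,b} f\rangle|^4$, which follows from writing the $U^3$ norm as an average of $U^2$ norms of the derivatives $f(x+a)\overline{f(x)}$. The paper's own normalization may differ; this is a brute-force check (exponential in n), not the paper's algorithm, and all names are hypothetical.

```python
from itertools import product


def dot(x, y):
    """F_2 dot product of bitmask-encoded vectors."""
    return bin(x & y).count("1") % 2


def u3_direct(f, n):
    """||f||_{U^3}^8 as the average over x, h1, h2, h3 of the 8-vertex cube product.

    Vertices reached by an odd number of the h's enter complex-conjugated."""
    N = 1 << n
    total = 0
    for x, h1, h2, h3 in product(range(N), repeat=4):
        term = 1
        for w1, w2, w3 in product((0, 1), repeat=3):
            val = f[x ^ (h1 * w1) ^ (h2 * w2) ^ (h3 * w3)]
            term *= val.conjugate() if (w1 + w2 + w3) % 2 else val
        total += term
    return (total / N**4).real


def weyl_correlation(f, n, a, b):
    """<f, W_{a,b} f> = E_x conj(f(x)) (-1)^{b.x} f(x + a)."""
    N = 1 << n
    return sum(f[x].conjugate() * (-1) ** dot(b, x) * f[x ^ a] for x in range(N)) / N


def u3_weyl(f, n):
    """||f||_{U^3}^8 = 2^{-n} * sum over (a, b) in F_2^{2n} of |<f, W_{a,b} f>|^4."""
    N = 1 << n
    return sum(abs(weyl_correlation(f, n, a, b)) ** 4 for a in range(N) for b in range(N)) / N


def bit(x, i):
    """The i-th coordinate of x in F_2^n."""
    return (x >> i) & 1


quad = [(-1) ** ((bit(x, 0) * bit(x, 1) + bit(x, 2)) % 2) for x in range(8)]  # x1*x2 + x3
cubic = [(-1) ** (bit(x, 0) * bit(x, 1) * bit(x, 2)) for x in range(8)]       # x1*x2*x3
print(u3_direct(quad, 3), u3_weyl(quad, 3))    # 1.0 1.0
print(u3_direct(cubic, 3), u3_weyl(cubic, 3))  # 0.34375 0.34375
```

A quadratic phase attains the maximum value 1, while the cubic phase $(-1)^{x_1x_2x_3}$ gives $1 - 2\cdot 168/512 = 0.34375$, where 168 counts the invertible $3\times 3$ matrices over $\mathbb{F}_2$ (exactly the triples $h_1,h_2,h_3$ on which its third derivative is nonzero).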

Why this work matters (implications and impact)

- Practical structure recovery: Many tasks in coding theory, property testing, randomness extraction, communication complexity, and quantum information need to detect and use hidden algebraic structure quickly. These algorithms make that feasible.

- Stronger tools for “pattern vs randomness”: The results give better ways to split data into “structured” (quadratic) and “random” parts, with applications to learning, testing, and decompositions.

- Cross‑pollination of fields: Bringing symplectic geometry and quantum ideas into additive combinatorics and algorithms opens new paths for both theory and practice.

- Foundations for future applications: The techniques can help design better testers (e.g., for linearity or stabilizer states), improve decoders or self‑correctors (e.g., for Reed–Muller codes), and support advances in quantum/classical learning algorithms.

The bottom line

If a set of bitstrings barely grows when you add it to itself, it’s hiding strong linear structure. This paper shows how to find that structure quickly. To do it, the authors connect powerful math theorems to efficient algorithms, use a geometric lens to see quadratic patterns, and borrow ideas from quantum learning—getting state‑of‑the‑art classical and quantum algorithms with broad potential impact.

Knowledge Gaps

Below is a concise list of concrete gaps, limitations, and open questions left unresolved by the paper; each item highlights a specific direction for future work.

- Dependence on K in runtime and queries: While the number of translates is polynomial in K, the provided classical algorithmic PFR runs in time $K^{O(\log K)}$ and uses $2^{2K+O(\log^2 K)}$ queries (beyond polylog factors in |A|). Can one design an algorithm with running time and query complexity polynomial in both n and K?

- Explicit constants in coverage: The covering bound is stated as $2K^C$ with a universal constant $C>1$ that is not specified. Can the constant C be made explicit, optimized, or matched to the best-known combinatorial bounds?

- Outputting covering translates: The algorithm outputs a subspace V but does not specify how to efficiently find the actual set of $O(K^C)$ translates that cover A. Can one efficiently compute an explicit covering family of translates with comparable guarantees?

- Obliviousness to K: The algorithms are stated for a given K. Can one make the algorithm parameter-free—i.e., discover the appropriate structural parameters adaptively without K as input?

- Robustness to noise in oracles: The analysis assumes exact membership queries and exact uniform samples from A (or exact basis of span(A)). Can the algorithms be made robust to noisy membership or sampling oracles (e.g., adversarial or stochastic noise)?

- Sampling model strength: Uniform sampling from A is assumed and is leveraged to learn span(A). Can one remove or relax this assumption (e.g., rely only on membership queries, or samples from a distribution close to uniform) while retaining polynomial guarantees?

- Space complexity and practical resources: Memory usage and space complexity are not analyzed. What are the space costs of the classical and quantum procedures, and can they be reduced alongside time/query complexities?

- Algorithmic PGI dependence on γ: The algorithmic PGI and Quadratic GL algorithms have complexities $(1/\gamma)^{O(\log(1/\gamma))}$ (and similarly in ε). Is it possible to achieve polynomial dependence in 1/γ (or 1/ε) while still returning near-optimal quadratic correlators?

- Optimality of correlation guarantees: The Quadratic GL algorithm nearly preserves the optimal correlation but cannot achieve exact optimality with polynomial queries. What is the tight trade-off between query complexity and correlation loss, and can lower bounds matching the current upper bounds be proved?

- Classical vs. quantum advantage: The quantum algorithm improves the classical runtime and query bounds by roughly a factor of n using stabilizer-learning machinery. Is there a provable asymptotic separation between classical and quantum complexities for algorithmic PFR (or Quadratic GL)? Can the classical algorithm be further dequantized to match quantum performance?

- Extension beyond $\mathbb{F}_2$: The symplectic framework and results are discussed for $\mathbb{F}_2^n$, with remarks that odd characteristic often makes the analysis simpler. Can one provide full algorithmic guarantees (with explicit complexities) for $\mathbb{F}_p^n$ (p odd), mixed-characteristic groups, and more general finite abelian groups?

- Higher-order analogues: The work centers on $U^3$ and quadratic structure. Can analogous algorithmic inverse theorems and “cubic” (or higher-degree) Goldreich–Levin algorithms be obtained for $U^k$ ($k \ge 4$) with polynomial parameter dependencies?

- Alternative access models: Some applications represent A implicitly (e.g., as a graph set or via a function). Can the methods be adapted to settings with only oracle access to a function g whose graph encodes A, or to sparse/streaming models?

- Derandomization: The algorithms are randomized with constant success probability. Can the procedures be derandomized (or use limited independence), and if so, with what cost in time/queries?

- Tightness of n-dependence: The classical algorithm runs in $\tilde{O}(n^4)$ time for PFR and $\tilde{O}(n^3)$ for algorithmic PGI. Can these be improved to $\tilde{O}(n^3)$ or even to near matrix-multiplication time, and are there barriers (conditional or unconditional) to such improvements?

- Stability and outlier tolerance: The guarantees assume exact doubling and exact structural promises. Can one design tolerant/robust versions that recover a structured subspace V when A is only approximately small-doubling (e.g., a fraction of elements violate the promise)?

- Practical computation of Lagrangians: The core primitive “finding high-weight Lagrangian subspaces” is central but only outlined in later sections. Can this step be implemented with improved algorithms (e.g., faster symplectic linear algebra, approximation schemes) or analyzed for stability under noise?

- Beyond coverage: Downstream tasks (e.g., classification of approximate Freiman homomorphisms) are mentioned, but parameter trade-offs and full algorithmic details are not given here. Can the same framework yield explicit, efficient procedures with quantified constants for all equivalent PFR formulations?

- Symplectic-geometric program: The paper strengthens the conceptual bridge between symplectic geometry and quadratic Fourier analysis but does not fully exploit it for new combinatorial/algorithmic tools. Can these connections be extended to yield novel proofs, new invariants, or improved algorithms (e.g., via symplectic reduction or geometric quantization analogues) beyond $U^3$?

- Limits of “non-classical” phenomena: In characteristic two, strictly non-classical quadratic phases matter analytically. Can one quantify how such phases impact algorithmic procedures, and can algorithms detect or exploit non-classical structure directly (versus mapping back to classical quadratics)?
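For readers meeting non-classical phases for the first time, a common example on $\mathbb{F}_2^n$ is $f(x) = i^{|x|}$, where $|x|$ is the Hamming weight of $x$ computed over the integers. It takes the values $\pm 1, \pm i$, so it is not of the form $(-1)^{q(x)}$, yet every multiplicative derivative $\Delta_h f(x) = f(x+h)\overline{f(x)}$ equals the constant $i^{|h|}$ times the linear character $(-1)^{h\cdot x}$, which is exactly how a quadratic phase behaves. The brute-force check below is a sketch for small n with hypothetical names.

```python
PHASES = (1, 1j, -1, -1j)  # i^0, i^1, i^2, i^3


def weight(x):
    """Hamming weight |x| of a bitmask-encoded vector, as an ordinary integer."""
    return bin(x).count("1")


def f(x):
    """Example non-classical quadratic phase f(x) = i^{|x|} on F_2^n."""
    return PHASES[weight(x) % 4]


def derivative(g, h, x):
    """Multiplicative derivative (Delta_h g)(x) = g(x + h) * conj(g(x)); + is XOR."""
    return g(x ^ h) * g(x).conjugate()


def derivatives_are_scaled_characters(n):
    """Check (Delta_h f)(x) == i^{|h|} * (-1)^{h.x} for all h, x in F_2^n."""
    N = 1 << n
    return all(
        derivative(f, h, x) == PHASES[weight(h) % 4] * (-1) ** weight(h & x)
        for h in range(N)
        for x in range(N)
    )


print(f(0b001), f(0b011))                    # 1j -1
print(derivatives_are_scaled_characters(4))  # True
```

The identity behind the check is $|x+h| = |x| + |h| - 2|x \wedge h|$ (with $\wedge$ the bitwise AND), so $i^{|x+h|-|x|} = i^{|h|}(-1)^{|x\wedge h|} = i^{|h|}(-1)^{h\cdot x}$.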

Glossary

- 2-cocycle: A function defining the twisting in a central extension that modifies multiplication; here it encodes the multiplication rule in the Heisenberg group. "in terms of a 2-cocycle $\beta: F_2^{2n} \times F_2^{2n} \to Z_4$,"

- 2-step nilpotent: A group whose commutator subgroup is contained in the center, making all commutators central. "it is 2-step nilpotent."

- Additive combinatorics: A field studying combinatorial properties of addition in groups and sets, often linking structure and randomness. "is a cornerstone of additive combinatorics,"

- Affine extractors: Pseudorandomness primitives that extract randomness from sources uniform on affine subspaces. "from affine extractors"

- Approximate Freiman homomorphisms: Maps between abelian groups that approximately preserve sums on subsets with small doubling. "the classification of approximate Freiman homomorphisms"

- Central extension: An exact sequence of groups where the kernel lies in the center, extending a group by an abelian group. "This defines a central extension"

- Characteristic function: The indicator function of a set, equal to 1 on the set and 0 outside. "characteristic function "

- Clifford unitaries: Unitary operators that normalize the Pauli group; central in stabilizer quantum mechanics. "testing Clifford unitaries"

- Commutation relations: Algebraic relations describing how operators fail to commute, often reflecting underlying geometry. "satisfy the commutation relations"

- Commutator relations: Equations capturing the group-theoretic commutator structure of generators. "determines the commutator relations:"

- Coset: A translate of a subgroup by a fixed element. "a subgroup or a coset of a subgroup."

- Doubling constant: The ratio |A + A| / |A| measuring how much a set grows under addition; small values indicate structure. "has doubling constant~"

- Gowers norm: A higher-order uniformity norm that quantifies quadratic structure in functions on abelian groups. "The Gowers norm of~ is then given by"

- Group presentation: A description of a group via generators and defining relations among them. "in terms of a group presentation, that is, a set of generators together with the set of defining relations they satisfy"

- Heisenberg group: A central extension of a symplectic vector space encoding canonical commutation relations. "The Heisenberg group is a central extension"

- Heisenberg–Weyl group: The image of the Heisenberg group under the Weil representation; generated by Weyl operators. "is called the Heisenberg--Weyl group"

- Hilbert–Schmidt inner product: An inner product on operators defined via the trace, used to orthonormalize operator bases. "the normalized Hilbert-Schmidt inner product"

- Isotropic subspace: A subspace on which the symplectic form vanishes identically. "A subspace is isotropic"

- Lagrangian manifold: In symplectic geometry, a submanifold of half the ambient dimension on which the symplectic form vanishes (the curved analogue of a Lagrangian subspace); here it appears as geometric intuition. "is a ``Lagrangian manifold'' on the ``phase space''."

- Lagrangian subspace: A maximal isotropic subspace of a symplectic vector space, of dimension half the ambient space. "Lagrangian subspaces, or Lagrangians for short."

- Non-classical quadratic phase functions: Quadratic phases taking values in higher roots of unity (e.g., 4th roots) rather than just ±1. "non-classical quadratic phase functions:"

- Parseval's identity: An equality relating the L2 norm of a function to the L2 norm of its Fourier transform. "follows easily from Parseval's identity"

- Pauli matrices: The standard single-qubit operators X, Y, Z used to describe qubit dynamics and errors. "In terms of Pauli matrices in quantum theory,"

- Polynomial Freiman–Ruzsa (PFR) theorem: A result stating that small doubling implies polynomially efficient covering by a subspace’s translates. "Polynomial Freiman--Ruzsa theorem"

- Polynomial Gowers inverse (PGI) theorem: The statement that a large $U^3$ norm implies polynomially strong correlation with a quadratic phase. "(PGI, for ``polynomial Gowers inverse'')."

- Quadratic Goldreich–Levin algorithm: An algorithm to find a quadratic phase with near-optimal correlation with a given function. "Quadratic Goldreich--Levin algorithm"

- Quadratic Reed–Muller codes: Error-correcting codes whose codewords are evaluations of quadratic polynomials over finite fields. "quadratic Reed-Muller codes over~"

- Stabilizer state: A function (or quantum state) stabilized by an abelian subgroup of Weyl/Pauli operators; it extremizes the $U^3$ norm under $L^2$ normalization. "A function is a stabilizer state if"

- Structure-vs-randomness decompositions: Decompositions expressing a function as a structured part plus a pseudorandom part. "quadratic structure-vs-randomness decompositions."

- Symplectic basis: A basis relative to which the symplectic form has standard block form. "called a symplectic basis"

- Symplectic geometry: The study of structures defined by non-degenerate alternating bilinear forms and their invariants. "symplectic geometry"

- Symplectic inner product: The alternating bilinear form defining the symplectic structure. "the standard symplectic inner product:"

- Symplectic group: The group of linear automorphisms preserving the symplectic form. "the symplectic group, denoted~."

- Symplectomorphisms: Structure-preserving maps between symplectic vector spaces (i.e., symplectic linear maps). "symplectic maps, or symplectomorphisms."

- Translation operators: Operators shifting function inputs, representing group action by addition. "the translations~ given by"

- Unitary isometry group: The group of unitary transformations preserving a given norm or inner product structure. "the unitary isometry group of the~ norm modulo the Heisenberg group is isomorphic to the symplectic group~;"

- Unitary representation: A homomorphism from a group to a unitary group acting on a Hilbert space. "give a unitary representation $\rho: H(F_2^{2n})\to \U(F_2^n)$"

- Weil representation: A specific unitary representation of the Heisenberg group (and its normalizer) central in harmonic analysis. "This is called the Weil representation of the Heisenberg group"

- Weyl operators: Unitaries generated by translations and characters, implementing phase-space displacements. "The Weil representation is given in terms of the Weyl operators"

- Z4 (cyclic group of order 4): The integers modulo 4 under addition; appears as the center in the characteristic-2 Heisenberg group. "by~ rather than~"
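Several glossary entries (Weyl operators, commutation relations, symplectic inner product) fit together in one identity. With the convention $(W_{a,b}f)(x) = (-1)^{b\cdot x} f(x+a)$ (translate by $a$, then multiply by a character), one has $W_v W_w = (-1)^{\omega(v,w)} W_w W_v$ for $v=(a,b)$, $w=(a',b')$ and $\omega(v,w) = a\cdot b' + a'\cdot b$. The sketch below checks this by brute force for small n; the convention and all names are assumptions and may differ from the paper's normalization.

```python
from itertools import product


def dot(x, y):
    """F_2 dot product of two bitmask-encoded vectors."""
    return bin(x & y).count("1") % 2


def weyl(a, b, f):
    """(W_{a,b} f)(x) = (-1)^{b.x} f(x + a) for f given as a list of length 2^n."""
    return [(-1) ** dot(b, x) * f[x ^ a] for x in range(len(f))]


def symplectic_form(v, w):
    """omega((a, b), (a', b')) = a.b' + b.a' mod 2."""
    (a, b), (a2, b2) = v, w
    return (dot(a, b2) + dot(b, a2)) % 2


def check_commutation(n, f):
    """Verify W_v W_w f == (-1)^{omega(v, w)} W_w W_v f for every v, w in F_2^{2n}."""
    N = 1 << n
    phase_space = list(product(range(N), repeat=2))  # all pairs (a, b)
    for v, w in product(phase_space, repeat=2):
        lhs = weyl(*v, weyl(*w, f))
        rhs = weyl(*w, weyl(*v, f))
        sign = (-1) ** symplectic_form(v, w)
        if lhs != [sign * y for y in rhs]:
            return False
    return True


print(check_commutation(2, [3, 1, 4, 1]))  # True
```

In particular, $W_v$ and $W_w$ commute exactly when $\omega(v,w)=0$, which is why isotropic subspaces index commuting families of Weyl operators, the structure behind stabilizer states.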

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed with the algorithms and insights provided in the paper, together with sectors, potential tools/workflows, and feasibility notes.

- Sector: Software Optimization / SAT Solving

- Use case: Preprocessing and sparsification for 1-in-3-SAT and related Boolean CSPs

- What to do now:

- Integrate the Algorithmic PFR routine to identify low-doubling subsets and cover them by few translates of a subspace V, enabling constraint compression and structure-aware preprocessing.

- Tools/workflows:

- A library routine that takes oracle/query access to Boolean constraints encoded as subsets of $\mathbb{F}_2^n$ and returns a basis for V and a small coset cover.

- Assumptions/dependencies:

- Access to characteristic-function queries and a small number of uniform samples from A.

- Doubling constant K is moderate; mapping of problem constraints to subsets of $\mathbb{F}_2^n$ is available.

- Gains concentrate on instances with latent algebraic structure.

- Sector: Machine Learning / Signal Processing (binary/Boolean domains)

- Use case: Quadratic feature discovery for Boolean functions

- What to do now:

- Use the Quadratic Goldreich–Levin (QGL) algorithm to find quadratic polynomials over $\mathbb{F}_2$ that maximally correlate with a given black-box Boolean function. This aids feature engineering, interpretable model diagnostics, and hypothesis testing on nonlinear parity structure.

- Tools/workflows:

- A “quadratic correlator” API that takes query access to f and returns a near-optimal quadratic correlator; supports model analysis pipelines.

- Assumptions/dependencies:

- Query access to f (or high-quality samples).

- Correlation threshold must be non-negligible; runtime grows quasi-polynomially with 1/ε.

- Works natively over $\mathbb{F}_2^n$; non-binary data must be binarized or embedded.

- Sector: Data Analytics / Cybersecurity

- Use case: Structure-versus-randomness detection

- What to do now:

- Apply the algorithmic PGI (Polynomial Gowers Inverse) to distinguish structured (quadratic-phase–like) signals from noise in Boolean data streams/logs.

- Tools/workflows:

- A “quadratic structure detector” that reports significant quadratic components or certifies high Gowers uniformity.

- Assumptions/dependencies:

- Requires access to queries/samples from the signal/source.

- Effectiveness depends on the magnitude γ of the $U^3$ norm.

- Sector: Coding Theory / Storage / Communications

- Use case: Self-correction and decoding for quadratic Reed–Muller codes RM(2,n)

- What to do now:

- Use QGL to implement near-optimal identification of quadratic codewords closest to a corrupted word; integrate as a robust self-correction step in pipelines handling RM(2,n).

- Tools/workflows:

- A decoder/self-corrector component leveraging the near-optimal correlator returned by QGL.

- Assumptions/dependencies:

- Code family is RM(2,n) over $\mathbb{F}_2$; error model compatible with correlation-based decoding.

- Sector: Formal Verification / Program Analysis / Property Testing

- Use case: Testing and classification of approximate homomorphisms and linearity

- What to do now:

- Use the algorithmic equivalents of PFR/PGI to classify maps $f: \mathbb{F}_2^n \to \mathbb{F}_2^m$ that are “approximately additive/linear,” facilitating property testing of circuits and transformations.

- Tools/workflows:

- An “approximate homomorphism classifier” that outputs structured approximations or certificates of randomness.

- Assumptions/dependencies:

- Oracle or sample access to f; guarantees are strongest when additive errors are small and K is moderate.

- Sector: Quantum Computing (Verification / Compilation)

- Use case: Stabilizer state learning and Clifford testing

- What to do now (classical):

- Use the classical dequantized QGL and structure-detection methods to analyze data from simulations or measurement logs and infer likely stabilizer structure.

- What to do now (quantum):

- Apply the quantum Algorithmic PFR (fewer queries/gates than classical) to learn subspace structure underlying datasets encoded in oracles, and use the stabilizer-learning workflow as a black-box subroutine for Clifford/unitary testing and device verification.

- Tools/workflows:

- Verification modules that test for stabilizer-like behavior; compiler passes that detect and exploit quadratic/stabilizer structure.

- Assumptions/dependencies:

- Classical: high-quality access to function evaluations/logs.

- Quantum: availability of quantum queries, coherence, and standard gate sets; performance tied to device noise.

- Sector: Cryptography / Randomness Infrastructure

- Use case: Practical pipelines for extractor construction and analysis

- What to do now:

- Employ Algorithmic PFR/PGI to automate steps that convert affine extractors into two-source extractors in prototype systems; use structure detection as a diagnostic to validate entropy assumptions.

- Tools/workflows:

- A “randomness pipeline auditor” that flags structured biases via $U^3$-based tests and suggests corrective transformations.

- Assumptions/dependencies:

- Proof-of-concept/prototyping stage; production-ready integrations require standardization and additional security analysis.

- Sector: Research Tooling (Academia/Industrial Research)

- Use case: Symplectic-geometry–based primitives for quadratic Fourier analysis

- What to do now:

- Adopt the paper’s high-weight Lagrangian finder and Weyl-operator formulations to build reproducible research code for additive combinatorics, learning theory, and quantum information studies.

- Tools/workflows:

- Libraries exposing Heisenberg–Weyl operator bases, Lagrangian subspace optimizers, and $U^3$ computations in $L^2$.

- Assumptions/dependencies:

- Users comfortable with $\mathbb{F}_2$-linear algebra; performance best on moderate n or structured instances.
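The quantity behind the QGL-based use cases above (quadratic feature discovery, RM(2,n) self-correction) is the best correlation between a ±1-valued function and a quadratic phase $(-1)^{q(x)}$. On tiny inputs it can be computed by exhaustive search, which for a corrupted RM(2,n) codeword amounts to nearest-codeword decoding. The sketch below is only such a reference baseline, exponential in $n^2$; it is not the paper's Quadratic Goldreich–Levin algorithm, whose point is to avoid this search, and all names are hypothetical.

```python
from itertools import combinations, product


def quadratic_monomials(n):
    """Monomials of degree <= 2 over F_2^n, each encoded as the bitmask of its variables."""
    pairs = [(1 << i) | (1 << j) for i, j in combinations(range(n), 2)]
    return [0] + [1 << i for i in range(n)] + pairs


def evaluate(coeffs, monomials, x):
    """q(x) mod 2, where q is the sum of the monomials whose coefficient is 1."""
    return sum(c for c, m in zip(coeffs, monomials) if (x & m) == m) % 2


def best_quadratic(f, n):
    """Exhaustive search for q maximizing E_x f(x) (-1)^{q(x)}, for f with values +1/-1.

    Tries all 2^(1 + n + n(n-1)/2) quadratics, so it is only usable for tiny n."""
    monomials = quadratic_monomials(n)
    N = 1 << n
    best = None
    for coeffs in product((0, 1), repeat=len(monomials)):
        corr = sum(f[x] * (-1) ** evaluate(coeffs, monomials, x) for x in range(N)) / N
        if best is None or corr > best[0]:
            best = (corr, coeffs)
    return best


# Encode q(x) = x0*x1 + x2*x3 + x0 as a codeword of RM(2, 4), then flip one bit.
n = 4
monomials = quadratic_monomials(n)
target = tuple(1 if m in (0b0001, 0b0011, 0b1100) else 0 for m in monomials)
word = [(-1) ** evaluate(target, monomials, x) for x in range(1 << n)]
word[5] *= -1  # simulate a single transmission error
corr, coeffs = best_quadratic(word, n)
print(corr, coeffs == target)  # 0.875 True
```

Because RM(2,4) has minimum distance 4, a single flipped bit is always corrected, so the search returns the original polynomial with correlation $1 - 2/16 = 0.875$.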

Long-Term Applications

These applications require further research, engineering, scaling, or standardization before large-scale deployment.

- Sector: Quantum Computing (Standards and Certification)

- Use case: Standardized, scalable device verification using stabilizer learning and Clifford testing

- Future direction:

- Integrate the quantum Algorithmic PFR and stabilizer-learning results into certification suites and benchmarks (e.g., for NISQ devices, future fault-tolerant systems).

- Dependencies/assumptions:

- Hardware maturity, robust noise models, reproducible quantum queries; standard-setting bodies alignment.

- Sector: Cryptography / Secure Coding

- Use case: Deployable non-malleable codes and average-case reductions

- Future direction:

- Translate the algorithmic PFR/PGI framework into practical constructions and proofs supporting non-malleable codes and average-case hardness guarantees.

- Dependencies/assumptions:

- Tight parameter analyses, end-to-end security proofs, efficient implementations for real-world block sizes.

- Sector: Large-Scale SAT/SMT / Planning / Configuration Management

- Use case: Structure-aware solvers exploiting low-doubling substructures

- Future direction:

- Embed Algorithmic PFR-driven preprocessors directly into industrial SAT/SMT solvers and orchestration tools, yielding speedups on structured real-world instances.

- Dependencies/assumptions:

- Consistent discovery of low-doubling subsets in production instances; robust mapping from solver internals to $\mathbb{F}_2$ representations.

- Sector: Communications/Storage

- Use case: Broad adoption of RM(2,n)-based schemes with fast self-correction

- Future direction:

- Leverage near-optimal QGL-based self-correction to support higher-throughput or low-latency error correction pipelines, possibly with hardware acceleration.

- Dependencies/assumptions:

- System-level evaluation of RM(2,n) trade-offs vs. LDPC/polar codes; hardware support for quadratic operations over $\mathbb{F}_2^n$.

- Sector: Data Science / AI Systems

- Use case: Generalized detection of higher-order structure in categorical/binary data

- Future direction:

- Extend beyond $\mathbb{F}_2$ to richer alphabets and mixed-type data; incorporate robust/noisy variants and streaming settings to detect nonlinearity and pseudo-randomness at scale.

- Dependencies/assumptions:

- Theoretical extensions to $\mathbb{F}_p$ and to approximate/noisy settings; scalable implementations with sublinear sampling where possible.

- Sector: Theoretical Computer Science / Additive Combinatorics

- Use case: Generalizing PFR/PGI algorithms beyond $\mathbb{F}_2^n$ and tightening constants

- Future direction:

- Develop efficient algorithms for other groups (e.g., $\mathbb{F}_p^n$ for odd p, or $\mathbb{Z}/N\mathbb{Z}$ settings), improve dependence on K and γ, and achieve tighter practical constants for broad adoption.

- Dependencies/assumptions:

- New algorithmic insights; bridging proofs that are currently non-constructive (entropy minimization, etc.) with efficient procedures.

- Sector: Tooling for Symplectic/Heisenberg Methods

- Use case: End-to-end libraries for Heisenberg–Weyl analytics and Lagrangian optimization

- Future direction:

- Production-grade ecosystems that integrate symplectic geometry, Weyl operator bases, and $U^3$ analysis for cross-domain use (quantum, coding theory, ML).

- Dependencies/assumptions:

- Community uptake, performance engineering, interoperability with existing scientific computing stacks.

Notes on Assumptions and Dependencies (cross-cutting)

- Access model:

- Many algorithms require query access to characteristic functions over $\mathbb{F}_2^n$ and a small number of uniform samples from A (or a basis for span(A)). In dense or non-oracle settings, sampling strategies must be engineered.

- Parameter regimes:

- Runtime is polynomial in n but may be quasi-polynomial or exponential in K or log(1/γ); practical wins occur when K is modest and γ is not too small.

- Domain modeling:

- Real-world problems must be faithfully embedded into $\mathbb{F}_2^n$ to benefit from these methods (e.g., binary encodings of constraints, signals, or states).

- Noise/robustness:

- Algorithmic guarantees are strongest under exact or bounded-error assumptions; robust variants may be needed for noisy data or device measurements.

- Quantum practicality:

- Quantum advantages rely on coherent access, low noise, and feasible quantum query models; classical dequantized counterparts provide immediate alternatives where hardware is unavailable.

- Generalization:

- The current work focuses on $\mathbb{F}_2^n$; extensions to odd characteristic fields or other groups are plausible but may require additional development for deployment.
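The access model above assumes uniform samples from A, or a basis for span(A). As a minimal sketch of the linear-algebra side, one can keep an $\mathbb{F}_2$ basis of the span of all samples seen so far, using Gaussian elimination on integer bitmasks. The sampling oracle `sample()` is hypothetical, and the sketch makes no claim about how many samples are needed to recover all of span(A).

```python
import random


class F2Basis:
    """Incrementally maintained basis of a subspace of F_2^n (vectors as int bitmasks)."""

    def __init__(self):
        self.pivots = {}  # leading-bit position -> basis vector with that leading bit

    def reduce(self, v):
        """Eliminate v against the basis; returns 0 iff v already lies in the span."""
        while v:
            top = v.bit_length() - 1
            if top not in self.pivots:
                return v
            v ^= self.pivots[top]
        return 0

    def add(self, v):
        """Insert v; returns True iff the span grew."""
        r = self.reduce(v)
        if r:
            self.pivots[r.bit_length() - 1] = r
        return bool(r)

    def dimension(self):
        return len(self.pivots)


def span_from_samples(sample, num_samples):
    """Basis of the span of num_samples draws from a hypothetical oracle sample()."""
    basis = F2Basis()
    for _ in range(num_samples):
        basis.add(sample())
    return basis


random.seed(0)
A = [0b0011, 0b0101, 0b0110, 0b1000]  # span(A) has dimension 3
basis = span_from_samples(lambda: random.choice(A), 50)
print(basis.dimension(), basis.reduce(0b1110) == 0)
```

Membership in the learned span is then a single `reduce` call, which is the kind of primitive a membership-query-based algorithm can combine with the sampled basis.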
