Agentic Alpha: Autonomous Market Signals

- Agentic Alpha is the emergent risk-adjusted return from fully autonomous LLM-driven agents that orchestrate end-to-end signal extraction in both market and safety-critical settings.

- The framework employs dynamic capability selection and plan-first execution to efficiently manage diverse toolchains and ensure reliable, transparent workflows.

- Case studies demonstrate that agentic pipelines achieve statistically significant alpha with robust performance across regime shifts and scalable automation.

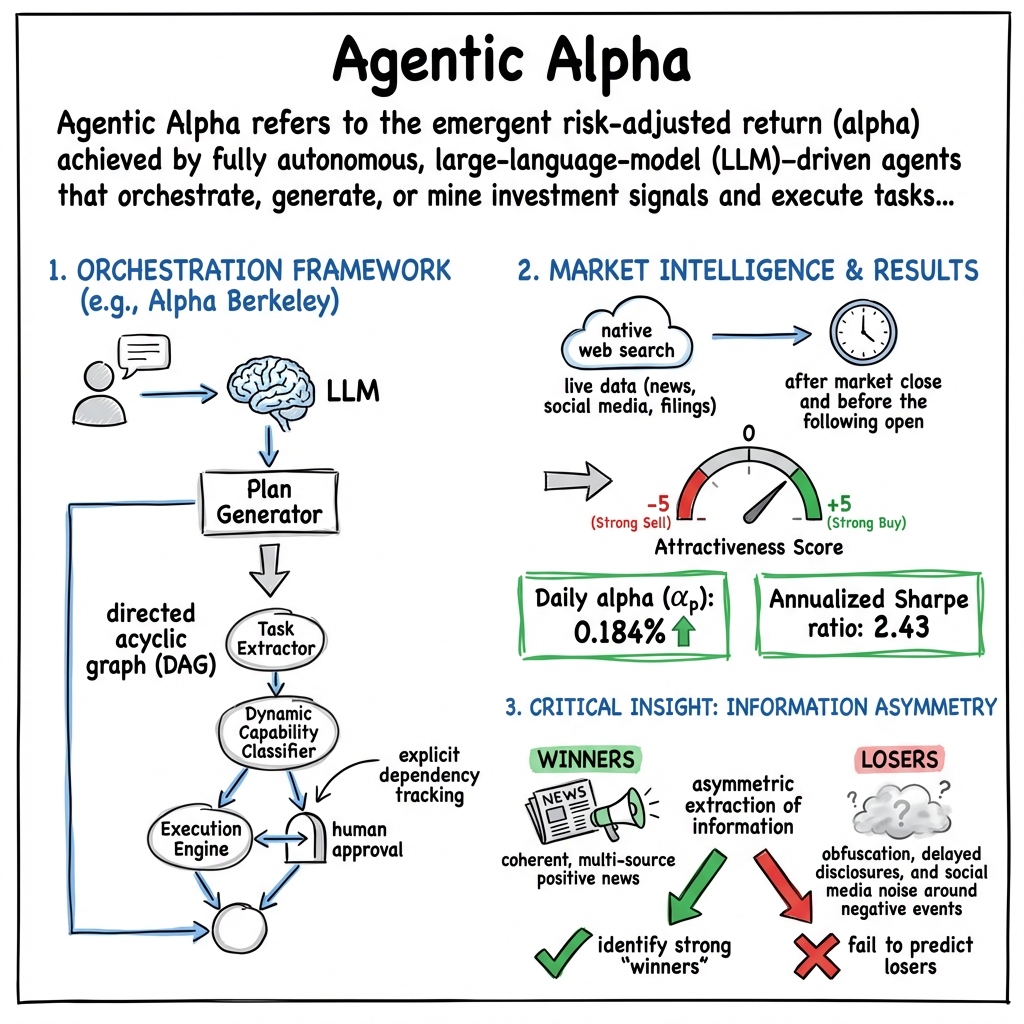

Agentic Alpha refers to the emergent risk-adjusted return (alpha) achieved by fully autonomous, large-language-model (LLM)–driven agents that orchestrate, generate, or mine investment signals and execute tasks, either within safety-critical workflow automation or within market-facing contexts. The “agentic” aspect denotes complete autonomy in information acquisition, filtering, planning, and execution, without human selection of sources or hand-tooled curation. Pioneering frameworks and empirical studies—spanning control engineering (Hellert et al., 20 Aug 2025), real-time equity selection (Chen et al., 17 Jan 2026), and evolutionary alpha mining (Han et al., 6 Feb 2026)—have established that agentic pipelines can achieve both scalable automation in high-stakes environments and statistically significant alpha in adversarial, non-stationary markets.

1. Agentic Orchestration Frameworks

A foundational implementation of the agentic paradigm is the Alpha Berkeley framework (Hellert et al., 20 Aug 2025), which systematizes the orchestration of LLM-driven agents across heterogeneous toolchains. Its architecture comprises:

- Dialogue Interface: CLI or WebUI capturing user utterances for subsequent pipeline processing.

- Context-Aware Task Extractor: Fuses dialogue context, persistent memory, and domain ontologies to yield structured micro-tasks.

- Dynamic Capability Classifier: Embedding-based selection module that prunes a large inventory of tool APIs, controlling LLM prompt exposure and preventing prompt explosion.

- Plan Generator: LLM-driven planner that arranges the selected tools into a directed acyclic graph (DAG), with explicit dependency tracking and optional human approval.

- Execution Engine: Orchestrates real tool invocations or code execution per the generated DAG (runtime built on LangGraph).

- Reliability Layer: Includes an Artifact Store for intermediate outputs and a Checkpoint Manager for agentic state snapshotting, rollback, and resumption.

The table below summarizes the primary modules and their roles:

| Module | Function | Technical Note |
|---|---|---|
| Dialogue Interface | User input collection | CLI/WebUI |
| Task Extractor | Contextual task structuring | LLM fusion of H/M/O |
| Capability Classifier | Tool subset selection | Embedding softmax + threshold |
| Plan Generator | Plans executable workflow | LLM → DAG (+ human approval) |
| Execution Engine | Plan execution (tool/code calls) | LangGraph runtime |
| Reliability Layer | Checkpointing, artifact/version management | State snapshotting, artifact hashing |

H/M/O = History/Memory/Ontology

2. Dynamic Capability Selection and Plan-First Execution

To maintain tractable scaling as the agent’s tool environment expands, Alpha Berkeley employs dynamic capability classification over the toolset $\mathcal{T} = \{t_1, \dots, t_N\}$. For each $t_i \in \mathcal{T}$, a relevance score

$$s_i = \mathrm{sim}\big(\mathrm{Query},\ \mathrm{Meta}(t_i)\big)$$

is computed, where Query is the structured embedding of the extracted task and Meta encodes tool metadata. The resultant selection probability

$$p_i = \frac{\exp(s_i)}{\sum_{j=1}^{N} \exp(s_j)}$$

is thresholded at $\tau$ to filter relevant capabilities, ensuring prompt size remains constant as the tool library ($N = |\mathcal{T}|$) grows.
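The selection step can be illustrated with a minimal sketch. It assumes cosine similarity as the relevance score and a temperature-scaled softmax; the function names, toy embeddings, threshold, and temperature are all hypothetical and are not taken from the Alpha Berkeley implementation.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def select_capabilities(query_vec, tool_meta, threshold=0.1, temperature=0.1):
    """Score every tool against the query, softmax the scores, keep tools above threshold.

    tool_meta maps a tool name to its (assumed precomputed) metadata embedding.
    """
    names = list(tool_meta)
    scores = [cosine(query_vec, tool_meta[n]) / temperature for n in names]
    peak = max(scores)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    probs = {n: e / total for n, e in zip(names, exps)}
    selected = [n for n in names if probs[n] >= threshold]
    return selected, probs

# Toy 3-dimensional embeddings (illustrative values only).
toy_tools = {
    "read_archive": [0.9, 0.1, 0.0],
    "plot_timeseries": [0.7, 0.6, 0.1],
    "send_email": [0.0, 0.1, 0.9],
}
toy_query = [1.0, 0.2, 0.0]
selected, probs = select_capabilities(toy_query, toy_tools)
print(selected)
```

Only the selected tool descriptions would then be passed to the planner prompt, which is how the classifier limits prompt exposure.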

Workflow execution is strictly plan-first: the LLM constructs a complete DAG with explicit type-checked dependencies and, where specified, human-in-the-loop approval. Execution proceeds topologically through the DAG, with state checkpointing and artifact logging after each node. Any error triggers a bounded retry, partial reclassification, or clean abort with full auditability. This structure ensures transparency, replayability, and separation between planning and action.
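A minimal sketch of plan-first execution follows, using Python's standard `graphlib` for the topological order. The plan format, the `run_plan` helper, and the toy tools are hypothetical; the framework itself runs on LangGraph, which is not modeled here, and partial reclassification is omitted.

```python
from graphlib import TopologicalSorter

def run_plan(plan, tools, max_retries=2):
    """Execute a fully specified plan in dependency order.

    plan:  {step_name: (tool_name, [names of steps it depends on])}
    tools: {tool_name: callable taking the list of dependency outputs}
    """
    graph = {step: set(deps) for step, (_, deps) in plan.items()}
    # static_order() raises graphlib.CycleError if the plan is not a DAG.
    order = list(TopologicalSorter(graph).static_order())
    artifacts, log = {}, []
    for step in order:
        tool_name, deps = plan[step]
        inputs = [artifacts[d] for d in deps]
        for attempt in range(1, max_retries + 2):  # first try plus bounded retries
            try:
                artifacts[step] = tools[tool_name](inputs)  # stored like a checkpointed artifact
                log.append((step, "ok", attempt))
                break
            except Exception as exc:
                log.append((step, f"error: {exc}", attempt))
        else:
            # Retries exhausted: abort cleanly, keeping artifacts and log for audit.
            return {"status": "aborted", "failed_step": step,
                    "artifacts": artifacts, "log": log}
    return {"status": "done", "artifacts": artifacts, "log": log}

# Toy tools: the fetch fails once to exercise the retry path.
fetch_calls = {"n": 0}

def flaky_fetch(_inputs):
    fetch_calls["n"] += 1
    if fetch_calls["n"] == 1:
        raise RuntimeError("transient network glitch")
    return [3.0, 4.0, 5.0]

toy_plan_tools = {
    "fetch": flaky_fetch,
    "mean": lambda inputs: sum(inputs[0]) / len(inputs[0]),
    "report": lambda inputs: f"mean={inputs[0]:.1f}",
}
toy_plan = {
    "load": ("fetch", []),
    "avg": ("mean", ["load"]),
    "summary": ("report", ["avg"]),
}
result = run_plan(toy_plan, toy_plan_tools)
print(result["status"], result["artifacts"]["summary"])
```

Because the whole plan exists before any tool runs, the log and artifact dictionary are enough to replay or audit a run step by step.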

3. Agentic Alpha in Market Intelligence

Chen and Pu’s “Autonomous Market Intelligence” (Chen et al., 17 Jan 2026) operationalizes agentic alpha in equity markets. A state-of-the-art LLM, equipped with native web search, autonomously queries and synthesizes live data (news, social media, filings) for each Russell 1000 stock after market close and before the following open. For each security and horizon, the agent outputs:

- An “Attractiveness Score” from –5 (Strong Sell) to +5 (Strong Buy)

- Point forecasts (price, EPS, earnings-surprises)

- Sentiment/divergence measures

- Panel of 40+ structured signals

The pipeline is strictly out-of-sample: all prompts and searches predate the period of return measurement, eliminating look-ahead bias. Portfolios are constructed by ranking stocks on the daily Attractiveness Score.
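The ranking step can be sketched as follows. Equal weighting, the tie-break by ticker, and the toy tickers and scores are assumptions made for illustration; the source states only that portfolios are formed by ranking on the daily Attractiveness Score.

```python
def top_n_portfolio(scores, n=20):
    """Rank tickers by score (highest first) and equal-weight the top n.

    Ties are broken alphabetically so the result is deterministic.
    """
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    picks = [ticker for ticker, _ in ranked[:n]]
    weight = 1.0 / len(picks) if picks else 0.0
    return {ticker: weight for ticker in picks}

def portfolio_return(weights, next_day_returns):
    """Weighted return over the period following score formation."""
    return sum(w * next_day_returns[t] for t, w in weights.items())

# Hypothetical scores on the -5..+5 scale and next-day returns.
toy_scores = {"AAA": 4.5, "BBB": -2.0, "CCC": 3.0, "DDD": 4.5, "EEE": 0.5}
toy_returns = {"AAA": 0.01, "BBB": -0.02, "CCC": 0.00, "DDD": 0.03, "EEE": 0.01}

weights = top_n_portfolio(toy_scores, n=2)
realized = portfolio_return(weights, toy_returns)
print(weights, realized)
```

Scores are fixed before the return window opens, which mirrors the out-of-sample requirement: nothing in `toy_returns` is visible when `weights` is computed.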

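Abnormal return is measured using the six-factor regression:

$$R_{p,t} - R_{f,t} = \alpha + \beta_1\,\mathrm{MKT}_t + \beta_2\,\mathrm{SMB}_t + \beta_3\,\mathrm{HML}_t + \beta_4\,\mathrm{RMW}_t + \beta_5\,\mathrm{CMA}_t + \beta_6\,\mathrm{MOM}_t + \varepsilon_t$$

where $R_{p,t} - R_{f,t}$ is the portfolio return in excess of the risk-free rate, $\mathrm{MKT}_t$, $\mathrm{SMB}_t$, $\mathrm{HML}_t$, $\mathrm{RMW}_t$, and $\mathrm{CMA}_t$ are Fama–French factors, and $\mathrm{MOM}_t$ is the Carhart momentum factor.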
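As a hedged illustration of how the intercept of such a regression is estimated, the sketch below fits ordinary least squares in pure Python on synthetic data. The factor labels (five Fama–French factors plus momentum), the synthetic coefficients, and the `ols` helper are assumptions for the example; no real factor data or results from the paper are used.

```python
import random

FACTORS = ["MKT", "SMB", "HML", "RMW", "CMA", "MOM"]  # assumed factor labels

def ols(X, y):
    """Least-squares coefficients via the normal equations (X'X) b = X'y,
    solved by Gauss-Jordan elimination with partial pivoting."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(k)]
        + [sum(row[i] * yi for row, yi in zip(X, y))]
        for i in range(k)
    ]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                f = A[r][col] / A[col][col]
                A[r] = [a - f * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]

# Synthetic, noise-free data so the fit should recover the inputs.
rng = random.Random(0)
factor_rows = [[rng.gauss(0.0, 0.01) for _ in FACTORS] for _ in range(250)]
true_alpha = 0.001
true_betas = [1.0, 0.2, -0.1, 0.05, 0.0, 0.3]
excess = [true_alpha + sum(b * f for b, f in zip(true_betas, row))
          for row in factor_rows]

X = [[1.0] + row for row in factor_rows]  # leading 1.0 column estimates alpha
coef = ols(X, excess)
alpha_hat, betas_hat = coef[0], coef[1:]
print(alpha_hat, dict(zip(FACTORS, betas_hat)))
```

With real returns the residual would be nonzero, and the reported t-statistic would come from dividing the estimated alpha by its standard error, which this sketch does not compute.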

Summary statistics for the top-20 long portfolio:

- Daily alpha ($\alpha$): 0.184% (t = 2.46)

- Annualized Sharpe ratio: 2.43

- Transaction costs: <10% of alpha (bid–ask spreads ≈1.6 bps/trade)

- Alpha dilution: Sharpe and mean alpha decay nearly linearly as the portfolio size $N$ increases; no negative-alpha concentration at portfolio tail.
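For readers relating the daily and annualized figures above, the following sketch shows the usual unit conversions. The 252-trading-day convention is an assumption, and the converted values are plain arithmetic on the quoted numbers rather than results reported in the source.

```python
import math
import statistics

TRADING_DAYS = 252  # assumed number of trading days per year

def annualized_sharpe(daily_excess_returns):
    """Mean over sample standard deviation of daily excess returns, scaled by sqrt(252)."""
    mean = statistics.mean(daily_excess_returns)
    vol = statistics.stdev(daily_excess_returns)
    return mean / vol * math.sqrt(TRADING_DAYS)

def annualize_alpha(daily_alpha):
    """Return (simple, compounded) annualizations of a daily alpha."""
    simple = daily_alpha * TRADING_DAYS
    compounded = (1.0 + daily_alpha) ** TRADING_DAYS - 1.0
    return simple, compounded

# 0.184% per day, as quoted above, expressed as a fraction.
simple_alpha, compounded_alpha = annualize_alpha(0.00184)

# Daily mean-to-volatility ratio implied by an annualized Sharpe of 2.43.
implied_daily_ratio = 2.43 / math.sqrt(TRADING_DAYS)

# Toy daily excess returns (illustrative values only).
toy_daily = [0.010, -0.005, 0.007, 0.002, -0.001]
toy_sharpe = annualized_sharpe(toy_daily)
print(simple_alpha, compounded_alpha, implied_daily_ratio, toy_sharpe)
```

The Sharpe ratio is scale-invariant, so doubling every return in `toy_daily` leaves `toy_sharpe` unchanged; only the annualization factor depends on the day-count assumption.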

4. Evolutionary Agentic Alpha Mining

QuantaAlpha (Han et al., 6 Feb 2026) extends agentic automation by recasting end-to-end “mining runs” as trajectories. The system features:

- Diversified initialization: A portfolio of orthogonal hypotheses spans alpha sources (price/volume, long/short horizon, momentum, reversal).

- Controllable symbol/code generation: Each hypothesis is mapped to a semantic description, a factor expression (over a fixed library), and executable code, strictly enforcing semantic consistency.

- Iterated evolutionary improvement: Trajectory-level mutation localizes and re-writes only the most suboptimal segment identified by self-reflection (argmax marginal loss index), freezing higher-reward segments. Crossover recombines high-reward segments across parents.

- Elitist selection: Only top-reward children survive into subsequent rounds, ensuring survival of robust building blocks.
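The mutation, crossover, and selection loop described above can be sketched on a toy problem. Here a trajectory is a list of per-segment quality scores in [0, 1], and a random redraw stands in for the LLM rewrite; the representation, the reward function, and the choice to keep parents in the selection pool are all assumptions for illustration, not QuantaAlpha's actual procedure.

```python
import random

def reward(traj):
    """Toy trajectory reward: mean segment quality."""
    return sum(traj) / len(traj)

def mutate(traj, rng):
    """Rewrite only the worst segment (largest loss); all others stay frozen."""
    losses = [1.0 - q for q in traj]
    worst = max(range(len(traj)), key=losses.__getitem__)
    child = list(traj)
    child[worst] = rng.random()  # stand-in for an LLM rewrite of that segment
    return child

def crossover(a, b):
    """Take the higher-quality segment from either parent at each position."""
    return [max(x, y) for x, y in zip(a, b)]

def evolve(population, rounds, keep, rng):
    """Mutate every member, cross the two best, then keep the top `keep`."""
    for _ in range(rounds):
        ranked = sorted(population, key=reward, reverse=True)
        children = [mutate(t, rng) for t in ranked]
        children.append(crossover(ranked[0], ranked[1]))
        # Assumption: parents compete with children, so the best reward never drops.
        population = sorted(ranked + children, key=reward, reverse=True)[:keep]
    return population

evo_rng = random.Random(7)
initial = [[evo_rng.random() for _ in range(4)] for _ in range(5)]
final = evolve(initial, rounds=10, keep=5, rng=evo_rng)
print(reward(max(initial, key=reward)), reward(final[0]))
```

Localizing the rewrite to one segment keeps the rest of a trajectory intact, which is what lets high-reward building blocks persist across rounds.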

All factor proposals are regularized for symbolic complexity, parameter count, and redundancy relative to a reference “alpha zoo.” Ablation studies show that removing mutation, crossover, or the semantic/complexity gates causes pronounced deterioration in both Information Coefficient (IC) and Annualized Rate of Return (ARR).

On CSI 300, QuantaAlpha achieves:

- IC = 0.1501, ARR = 27.75%, MDD = 7.98%

- Consistent transfer to CSI 500 and S&P 500 with 137–160% cumulative excess return over four years

- Robustness to regime shift (2023) documented via stability of IC and Rank IC relative to collapsing baselines
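The metrics quoted above follow conventional definitions, sketched below under that assumption: IC as the Pearson correlation between factor values and subsequent returns, Rank IC as the same correlation on ranks, and MDD as the largest peak-to-trough decline of the compounded equity curve. The helper names and toy data are hypothetical, and the paper's exact evaluation protocol may differ.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)

def ranks(values):
    """1-based ranks; ties are broken by position (adequate for a sketch)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    for rank, idx in enumerate(order, start=1):
        out[idx] = float(rank)
    return out

def information_coefficient(factor, forward_returns):
    return pearson(factor, forward_returns)

def rank_ic(factor, forward_returns):
    return pearson(ranks(factor), ranks(forward_returns))

def max_drawdown(period_returns):
    """Largest fractional drop from a running peak of the equity curve."""
    equity = peak = 1.0
    worst = 0.0
    for r in period_returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst

# Toy cross-section: factor values and next-period returns for five assets.
toy_factor = [0.1, 0.4, 0.2, 0.8, 0.5]
toy_forward = [0.00, 0.02, 0.01, 0.05, 0.03]
ic = information_coefficient(toy_factor, toy_forward)
ric = rank_ic(toy_factor, toy_forward)
mdd = max_drawdown([0.10, -0.05, -0.05, 0.20])
print(ic, ric, mdd)
```

In practice IC is computed per period across the asset universe and then averaged over time, which is why its stability through a regime shift is informative.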

5. Information Structure, Limitations, and Robustness

Analysis of agentic alpha across domains reveals asymmetric extraction of information: agentic LLMs consistently identify strong “winners” based on coherent, multi-source positive news but fail to predict losers, plausibly due to obfuscation, delayed disclosures, and social media noise around negative events (Chen et al., 17 Jan 2026). A plausible implication is that agentic evidence aggregation outperforms in environments where good news is clearly signaled and widely disseminated, but may not deliver short-side signals in adversarial or opaque informational settings.

Agentic alpha pipelines are subject to irreproducibility due to their dependence on transitory web environments, continual LLM evolution, and sensitivity to market structure. Additionally, widespread deployment of agentic trading may compress alpha and incentivize strategic misinformation or adversarial adaptation in both news and control environments.

6. Case Studies and Empirical Lessons

Case study evaluations provide concrete evidence of agentic alpha pipelines in both industrial orchestration and financial markets.

- Alpha Berkeley—Wind Farm Monitoring: Achieves six-step DAG plans (latency ≈3–8 s, <2% error rate, stable throughput 7 runs/min). Integrated checkpointing and artifact logs support rapid replay and error recovery (Hellert et al., 20 Aug 2025).

- Alpha Berkeley—ALS Particle Accelerator: Nine-step plan yields zero safety incidents and full operator oversight, with effective recovery from network glitches (10 s) and constant prompt size despite toolset scaling (50 → 200+).

- Agentic Alpha in Russell 1000: Statistically significant daily/annualized alpha, with cost-efficient execution and clear alpha concentration in the top decile (Chen et al., 17 Jan 2026).

- QuantaAlpha—CSI 300 and Transfer: Demonstrates stable IC and ARR under regime shifts and strong out-of-market robustness through evolution-driven trajectory recombination (Han et al., 6 Feb 2026).

In summary, the agentic alpha paradigm—manifested as closed-loop, LLM-driven system orchestration—enables scalable, transparent, and fault-tolerant signal extraction and workflow execution, achieving performance and robustness benchmarks that surpass prior semi-automated or hand-tuned systems. The ongoing research frontier concerns the interplay of agentic search, information structure, and adversarial adaptation in both control and financial domains.