Finite-State Machines: Theory & Applications

- Finite-State Machines (FSMs) are formal models defined by a 5-tuple that manage transitions among a finite set of states triggered by discrete events.

- They are applied in protocol engineering, computational linguistics, hardware design, and AI to verify, simulate, and automate system behaviors.

- Research advances include automated extraction, evolutionary generation, and neural-symbolic emulation that enhance scalability, precision, and design automation.

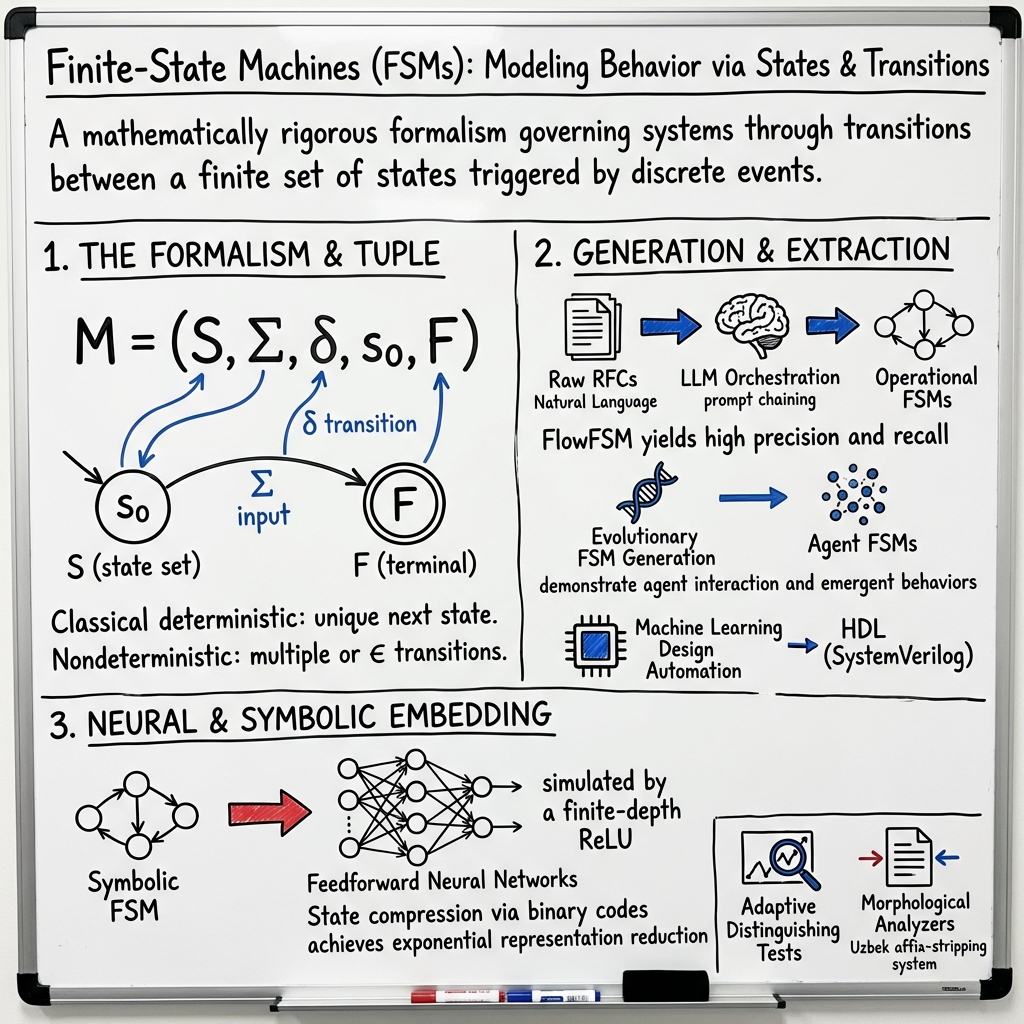

A finite-state machine (FSM) is a mathematically rigorous formalism for modeling systems whose behavior is governed by transitions among a finite set of states, triggered by discrete events. FSMs underpin operational logic in domains such as protocol engineering, computational linguistics, hardware design, and artificial intelligence. An FSM is typically specified as a 5-tuple $M = (Q, \Sigma, \delta, q_0, F)$, where $Q$ is the state set, $\Sigma$ the alphabet of input events, $\delta$ the transition function, $q_0 \in Q$ the initial state, and $F \subseteq Q$ the set of accepting or terminal states. Variants and generalizations of this tuple appear in protocol modeling, agent-based systems, neural-symbolic computation, and morphological analyzers.
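As a concrete reading of the 5-tuple, the minimal Python sketch below stores $Q$, $\Sigma$, $\delta$, $q_0$, and $F$ directly and decides acceptance by folding $\delta$ over the input. The class name `DFA` and the even-parity example are illustrative assumptions, not taken from any of the cited works.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Hashable, Iterable, Tuple


@dataclass
class DFA:
    """Deterministic FSM M = (Q, Sigma, delta, q0, F)."""
    states: FrozenSet[Hashable]                       # Q
    alphabet: FrozenSet[Hashable]                     # Sigma
    delta: Dict[Tuple[Hashable, Hashable], Hashable]  # total map Q x Sigma -> Q
    start: Hashable                                   # q0, an element of Q
    accepting: FrozenSet[Hashable]                    # F, a subset of Q

    def run(self, word: Iterable[Hashable]) -> Hashable:
        """Fold delta over the input and return the state reached."""
        q = self.start
        for a in word:
            if a not in self.alphabet:
                raise ValueError(f"symbol {a!r} is not in the alphabet")
            q = self.delta[(q, a)]
        return q

    def accepts(self, word: Iterable[Hashable]) -> bool:
        return self.run(word) in self.accepting


# Binary strings containing an even number of 1s.
even_ones = DFA(
    states=frozenset({"even", "odd"}),
    alphabet=frozenset({"0", "1"}),
    delta={
        ("even", "0"): "even", ("even", "1"): "odd",
        ("odd", "0"): "odd", ("odd", "1"): "even",
    },
    start="even",
    accepting=frozenset({"even"}),
)

print(even_ones.accepts("0110"), even_ones.accepts("10"))  # True False
```

Keeping $\delta$ as a dictionary keyed by (state, symbol) pairs mirrors the signature $\delta: Q \times \Sigma \to Q$; a missing key raises `KeyError`, which surfaces a partial (non-total) transition function immediately.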

1. Mathematical Formalism and Variants

The classical deterministic case defines an FSM as $M = (Q, \Sigma, \delta, q_0, F)$, where $\delta: Q \times \Sigma \to Q$ maps each state-event pair to a unique next state (Wael et al., 15 Jul 2025). Nondeterministic FSMs generalize the transition function to $\delta: Q \times (\Sigma \cup \{\varepsilon\}) \to 2^{Q}$, allowing multiple successor states or $\varepsilon$ (empty-string) transitions (Morazán et al., 5 Aug 2025). In labeled transition systems and networked protocols, FSMs may alternate between inputs and outputs; $F$ can be empty in ongoing, non-terminating applications (e.g., network protocols), or it can encode accepting conditions in verification and linguistic applications.
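The nondeterministic case can be simulated without enumerating branches one by one: track the set of states reachable so far and close it under $\varepsilon$-moves after every symbol (an on-the-fly subset construction). The sketch below assumes `None` stands for $\varepsilon$ and stores $\delta$ sparsely; the helper names and the example automaton are hypothetical.

```python
from typing import Dict, FrozenSet, Hashable, Iterable, Optional, Set, Tuple

EPS: Optional[str] = None  # stands in for the empty string epsilon

# delta: Q x (Sigma ∪ {eps}) -> 2^Q, stored sparsely; a missing key means the empty set
NFADelta = Dict[Tuple[Hashable, Optional[str]], FrozenSet[Hashable]]


def eps_closure(states: Set[Hashable], delta: NFADelta) -> FrozenSet[Hashable]:
    """All states reachable from `states` via epsilon moves alone."""
    closure = set(states)
    stack = list(states)
    while stack:
        q = stack.pop()
        for r in delta.get((q, EPS), frozenset()):
            if r not in closure:
                closure.add(r)
                stack.append(r)
    return frozenset(closure)


def nfa_accepts(delta: NFADelta, start: Hashable,
                accepting: FrozenSet[Hashable], word: Iterable[str]) -> bool:
    """Simulate all branches at once by tracking the set of current states."""
    current = eps_closure({start}, delta)
    for a in word:
        step: Set[Hashable] = set()
        for q in current:
            step |= delta.get((q, a), frozenset())
        current = eps_closure(step, delta)
        if not current:  # every branch has died
            return False
    return bool(current & accepting)


# Strings over {a, b} that are empty or end in "ab".
ends_in_ab: NFADelta = {
    ("s", EPS): frozenset({"empty", "loop"}),
    ("loop", "a"): frozenset({"loop", "saw_a"}),
    ("loop", "b"): frozenset({"loop"}),
    ("saw_a", "b"): frozenset({"done"}),
}
FINAL = frozenset({"empty", "done"})

print(nfa_accepts(ends_in_ab, "s", FINAL, "aab"),
      nfa_accepts(ends_in_ab, "s", FINAL, "aba"))  # True False
```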

Finite-state machines are often specialized:

- Agent FSMs: Each agent is represented by $M = (Q, \Sigma, \delta, q_0, F)$, with $Q$ constructed from domain-specific "action nodes" and $\Sigma$ from conditional event types (Charity et al., 2023).

- Morphological Analyzers: Each affix class is modeled as a (possibly nondeterministic) FSM $M_i = (Q_i, \Sigma, \delta_i, q_{0,i}, F_i)$; affix analyzers for agglutinative languages concatenate several such FSMs through right-to-left composition (Sharipov et al., 2022).
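An agent FSM stored as an adjacency list of action nodes with condition-labeled edges might be stepped as follows. This is a hypothetical sketch, not the representation from Charity et al. (2023): the node names, the condition labels other than "none" and "step", and the rule that "none" fires unconditionally are all assumptions made for illustration.

```python
from typing import Dict, List, Set, Tuple

# Adjacency list: action node -> ordered list of (condition label, target node).
AgentFSM = Dict[str, List[Tuple[str, str]]]

# Hypothetical forager agent; node and condition names are illustrative only.
forager: AgentFSM = {
    "idle": [("see_food", "move"), ("none", "idle")],
    "move": [("touch_food", "eat"), ("step", "move")],
    "eat": [("none", "idle")],
}


def step(fsm: AgentFSM, node: str, events: Set[str]) -> str:
    """Take the first outgoing edge whose condition holds on this tick.

    Assumptions of this sketch: "none" is an unconditional edge, edges are
    tried in list order, and an agent with no satisfied edge stays put.
    """
    for condition, target in fsm.get(node, []):
        if condition == "none" or condition in events:
            return target
    return node


def simulate(fsm: AgentFSM, ticks: List[Set[str]], start: str = "idle") -> List[str]:
    """Return the action nodes visited, beginning with the initial node."""
    trace = [start]
    for events in ticks:
        trace.append(step(fsm, trace[-1], events))
    return trace


print(simulate(forager, [set(), {"see_food"}, {"step"}, {"touch_food"}, set()]))
# ['idle', 'idle', 'move', 'move', 'eat', 'idle']
```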

A summary of the FSM tuple structure in different domains:

| Application | State Set $Q$ | Input Alphabet $\Sigma$ | Transition Function $\delta$ | Initial State $q_0$ | Terminal States $F$ |
|---|---|---|---|---|---|
| Network Protocol | Protocol states | Commands/events | $\delta: Q \times \Sigma \to Q$ | e.g., NotConnected | Empty or terminal set |
| Artificial Life/Agent | Action nodes | Condition labels (none, step, ...) | Adjacency list | "idle" | Often unused ($F = \emptyset$) |
| Linguistic Morphology | Affix parsing states | Letters/morpheme segments | $\delta$ (NFA/DFA) | Class start | Accepting class end |

2. FSM Extraction and Generation Methodologies

FSM modeling and extraction methodologies include hand-crafted specification, automated learning from scenarios, and neural-symbolic emulation:

- Automated Protocol Extraction: FlowFSM uses multi-agent LLM orchestration, applying prompt chaining and chain-of-thought reasoning to extract FSM transitions from raw RFCs. Its three-stage process (command extraction, state transition analysis, and rulebook synthesis) systematizes the mapping of natural-language protocol specifications to operational FSMs and yields high precision and recall (FTP: precision 83.3%, recall 88.2%, F1 85.7% on standard benchmarks) (Wael et al., 15 Jul 2025).

- Evolutionary FSM Generation: In artificial life simulation, agent FSMs are generated randomly and evolved using a (1+1) greedy hill-climber that mutates nodes, edges, and instance counts and prunes unreachable components. Fitness is computed from the number of visited nodes/edges, the number of unvisited nodes/edges, and the total number of nodes/edges. Evolved FSM populations exhibit agent interaction and emergent behaviors (Charity et al., 2023).

- Machine Learning Design Automation: LLMs (Claude 3 Opus, ChatGPT-4, ChatGPT-4o) are prompted in structured formats to generate FSM implementations in a hardware description language (SystemVerilog). Prompt refinement via To-do-Oriented Prompting (TOP Patch) substantially increases the correctness rate in difficult FSM design scenarios, such as synchronous-reset FSMs and one-hot state encoding (Lin et al., 26 Mar 2025).

- Minimum FSM Identification: In software verification, minimum-state FSM synthesis from scenario traces and temporal logic constraints is solved via four algorithms: Iterative SAT-based, QSAT-based, Exponential SAT-based, and Backtracking. The scenario-tree encoding and counterexample prohibition methodology in the Iterative SAT-based framework delivers certified minimal models, outperforming inexact heuristics on optimized benchmarks (Ulyantsev et al., 2016).
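The scenario-tree idea behind minimum FSM identification can be illustrated on a small scale: scenarios (sequences of input/output pairs) are merged into a prefix tree, and a minimal FSM corresponds to a coloring of tree nodes with as few colors as possible such that same-colored nodes agree on every output and successor color. The sketch below searches colorings by plain backtracking; it ignores guard conditions and LTL constraints, it is not the cited Iterative SAT-based encoding, and all function names and the toggle example are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Scenario = List[Tuple[str, str]]              # [(input event, output action), ...]
Tree = Dict[int, Dict[str, Tuple[str, int]]]  # node -> {event: (output, child)}


def build_scenario_tree(scenarios: List[Scenario]) -> Tree:
    """Merge scenarios into a prefix tree, rejecting contradictory outputs."""
    tree: Tree = {0: {}}
    for scenario in scenarios:
        node = 0
        for event, output in scenario:
            if event in tree[node]:
                seen_output, child = tree[node][event]
                if seen_output != output:
                    raise ValueError(f"conflicting outputs for event {event!r}")
            else:
                child = len(tree)  # ids grow, so children come after parents
                tree[child] = {}
                tree[node][event] = (output, child)
            node = child
    return tree


def consistent(tree: Tree, color: Dict[int, int]) -> bool:
    """Same-colored nodes must agree on output and target color for each event."""
    seen: Dict[Tuple[int, str], Tuple[str, Optional[int]]] = {}
    for node, c in color.items():
        for event, (output, child) in tree[node].items():
            target = color.get(child)  # None while the child is still uncolored
            key = (c, event)
            if key not in seen:
                seen[key] = (output, target)
                continue
            prev_output, prev_target = seen[key]
            if prev_output != output:
                return False
            if prev_target is None:
                seen[key] = (output, target)
            elif target is not None and target != prev_target:
                return False
    return True


def _extend(tree: Tree, nodes: List[int], i: int, n: int, color: Dict[int, int]) -> bool:
    """Try to color nodes[i:] with colors 0..n-1, backtracking on conflicts."""
    if i == len(nodes):
        return True
    for c in range(n):
        color[nodes[i]] = c
        if consistent(tree, color) and _extend(tree, nodes, i + 1, n, color):
            return True
    del color[nodes[i]]
    return False


def min_fsm_coloring(tree: Tree) -> Dict[int, int]:
    """Smallest state count N such that tree nodes map consistently onto N states."""
    nodes = sorted(tree)
    for n in range(1, len(nodes) + 1):
        color = {nodes[0]: 0}  # the root becomes the initial state
        if _extend(tree, nodes, 1, n, color):
            return color
    raise RuntimeError("unreachable: one state per tree node is always consistent")


toggle = build_scenario_tree([
    [("press", "on"), ("press", "off")],
    [("press", "on"), ("press", "off"), ("press", "on")],
])
coloring = min_fsm_coloring(toggle)
print(len(toggle), len(set(coloring.values())))  # 4 2
```

Backtracking is exponential in the worst case; SAT-based formulations instead hand coloring constraints of this kind to an off-the-shelf solver.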

3. FSMs in Symbolic and Neural Computation

A primary axis of research focuses on representing FSM logic in distributed neural substrates:

- Feedforward Neural Networks as FSMs: Any DFA can be exactly simulated by a finite-depth ReLU or binary-threshold neural network that maps one-hot or binary state encodings and symbol encodings layer-wise. The transition function is linearly separable and constructible by a two-layer MLP per step, allowing compositional stacking for fixed-length inputs. State compression via binary codes reduces the representation exponentially, from $n$ one-hot units to $\lceil \log_2 n \rceil$ bits (a compression ratio of $n / \lceil \log_2 n \rceil$ for $n$ states) (Dhayalkar, 16 May 2025).

- FSMs in Vector Symbolic Architectures: An FSM can be embedded in a Hopfield attractor network with high-dimensional state and stimulus hypervectors, with transitions encoded as asymmetric weight matrix terms. The practical maximum number of storable FSM states scales linearly in network size for dense codes and quadratically for optimal sparsity. This mechanism is robust to binarized, noisy, or low-precision weights and supports distributed, biologically plausible state machines (Cotteret et al., 2022).
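One way to see why a single DFA step fits in two threshold layers: give each (state, symbol) pair a hidden unit that computes an AND of the one-hot state bit and the one-hot symbol bit, then let each next-state unit compute an OR over the pairs that $\delta$ sends to it. The NumPy sketch below implements that construction and unrolls it once per input symbol; it is an illustrative construction under these assumptions, not necessarily the one in Dhayalkar (16 May 2025), and the function names are hypothetical.

```python
import numpy as np


def heaviside(z: np.ndarray) -> np.ndarray:
    """Binary threshold unit: 1 where z > 0, else 0."""
    return (z > 0).astype(np.int64)


def build_transition_net(n_states, n_symbols, delta):
    """Two threshold layers computing one step of delta on one-hot codes.

    delta[q][a] is the index of the next state. Hidden unit k = (q, a) is an AND
    of state bit q and symbol bit a; output unit q' is an OR over its pairs.
    """
    n_pairs = n_states * n_symbols
    W1 = np.zeros((n_pairs, n_states + n_symbols))
    W2 = np.zeros((n_states, n_pairs))
    for q in range(n_states):
        for a in range(n_symbols):
            k = q * n_symbols + a
            W1[k, q] = 1.0             # reads the one-hot state
            W1[k, n_states + a] = 1.0  # reads the one-hot symbol
            W2[delta[q][a], k] = 1.0   # routes pair (q, a) to delta(q, a)
    b1 = np.full(n_pairs, -1.5)   # AND: only fires when both inputs are 1
    b2 = np.full(n_states, -0.5)  # OR: fires if any incoming pair fired
    return W1, b1, W2, b2


def run_net(net, n_states, n_symbols, start, word):
    """Apply the same two-layer block once per symbol (unrolled for len(word))."""
    W1, b1, W2, b2 = net
    s = np.eye(n_states, dtype=np.int64)[start]
    for a in word:
        x = np.eye(n_symbols, dtype=np.int64)[a]
        h = heaviside(W1 @ np.concatenate([s, x]) + b1)
        s = heaviside(W2 @ h + b2)
    return int(np.argmax(s))


# DFA over {0, 1} counting 1s modulo 3.
mod3 = [[q, (q + 1) % 3] for q in range(3)]
net = build_transition_net(3, 2, mod3)
print(run_net(net, 3, 2, 0, [1, 1, 0, 1, 1]))  # 1
```

Because exactly one hidden unit fires per step, the output is again one-hot, so the block can be stacked for any fixed input length. A ReLU variant of the AND unit could use $\mathrm{ReLU}(s_q + x_a - 1)$, which on 0/1 inputs is also 1 for the active pair and 0 otherwise.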

A summary table of neural FSM embedding capacities:

| Neural Substrate | State Representation | Capacity Scaling | Transition Mechanism |
|---|---|---|---|
| Feedforward network | One-hot, binary codes | Linear in width/depth | Layer-wise MLP, threshold units |
| Hopfield attractor network | Dense/sparse hypervectors | Linear (dense), quadratic (sparse) | Weight-matrix-encoded transitions |

4. FSM Testing, State Identification, and Verification

FSMs are central to protocol verification and formal testing:

- Adaptive Distinguishing Tests: Computation of state-distinguishing sequences for FSMs and labeled transition systems (LTSs) exploits compatibility relations, splitting graphs, and recursive extraction of adaptive test trees. Scalability is governed by state and transition counts. Empirically, more than 99.95% of incompatible state pairs are distinguished in large industrial models (e.g., Engine Status Managers with up to 3,168 states), via compact test structures (Bos et al., 2019).

- Scenario-LTL Verification: Counterexample-guided identification and negative scenario tree encoding guarantee synthesized FSMs meet not only scenario traces but also full temporal logic properties, supporting formal correctness guarantees and optimal size (Ulyantsev et al., 2016).
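A basic building block for state identification is a separating sequence for one pair of states: an input word on which the two states produce different outputs. The sketch below finds a shortest one by breadth-first search over state pairs of a Mealy machine. It is deliberately simpler than the adaptive distinguishing test trees of Bos et al. (2019), which handle many states at once and also cover LTSs; the machine and function names are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

# Mealy machine: (state, input) -> (next state, output)
Mealy = Dict[Tuple[str, str], Tuple[str, str]]


def separating_sequence(m: Mealy, inputs: List[str],
                        p: str, q: str) -> Optional[List[str]]:
    """Shortest input word on which states p and q emit different outputs.

    Breadth-first search over pairs of states; returns None if p and q are
    equivalent (no input word ever tells them apart).
    """
    queue = deque([(p, q, [])])
    visited = {(p, q)}
    while queue:
        s, t, word = queue.popleft()
        for a in inputs:
            s_next, s_out = m[(s, a)]
            t_next, t_out = m[(t, a)]
            if s_out != t_out:
                return word + [a]
            if (s_next, t_next) not in visited:
                visited.add((s_next, t_next))
                queue.append((s_next, t_next, word + [a]))
    return None


machine: Mealy = {
    ("A", "x"): ("B", "0"), ("A", "y"): ("A", "0"),
    ("B", "x"): ("C", "0"), ("B", "y"): ("A", "0"),
    ("C", "x"): ("A", "1"), ("C", "y"): ("A", "0"),
    ("D", "x"): ("B", "0"), ("D", "y"): ("A", "0"),  # behaves exactly like A
}
print(separating_sequence(machine, ["x", "y"], "A", "B"))  # ['x', 'x']
```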

5. Linguistic and Domain-Specific FSMs

FSMs underpin lexical analyzers, stemmers, and sequence-taggers in natural language processing:

- Uzbek Morphological Analyzer: The Uzbek affix-stripping system models each of seven affix classes as a separate FSM; each is designed left-to-right, inverted into a right-to-left deterministic automaton, and concatenated into a "head" machine. The system is highly efficient, with low per-word runtime and a memory footprint under 10 KB, and it processes out-of-vocabulary words without storing a lexicon (Sharipov et al., 2022).

- Class-Based Composition: Morphotactic regularities (affix class ordering) enforced by automata concatenation allow modular addition and updating, though lexicon-free approaches cannot disambiguate roots or highly irregular forms.
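Right-to-left affix stripping with concatenated class automata can be sketched as follows: each affix class is compiled into a trie over reversed suffixes (a deterministic automaton that reads the word from its right end), and a head routine runs the classes from the outermost suffix inward, taking the longest match in each class. The three classes below are a tiny illustrative subset of Uzbek suffixes (case -da, -dan, -ga, -ni; possessive -im, -imiz, -ing, -i; plural -lar), not the seven classes of Sharipov et al. (2022); the longest-match policy, the `min_stem` guard, and all function names are assumptions.

```python
from typing import Dict, List, Set, Tuple

# A compiled affix class: a trie over *reversed* suffixes, i.e. a DFA that
# reads a word from its right end. node id -> {char: next node id}, plus finals.
AffixMachine = Tuple[Dict[int, Dict[str, int]], Set[int]]


def compile_class(suffixes: List[str]) -> AffixMachine:
    trie: Dict[int, Dict[str, int]] = {0: {}}
    final: Set[int] = set()
    for suffix in suffixes:
        node = 0
        for ch in reversed(suffix):
            if ch not in trie[node]:
                new_id = len(trie)
                trie[new_id] = {}
                trie[node][ch] = new_id
            node = trie[node][ch]
        final.add(node)
    return trie, final


def longest_suffix(machine: AffixMachine, word: str, min_stem: int) -> int:
    """Length of the longest suffix accepted by `machine`, leaving >= min_stem chars."""
    trie, final = machine
    node, best = 0, 0
    for i in range(len(word) - 1, min_stem - 1, -1):
        nxt = trie[node].get(word[i])
        if nxt is None:
            break
        node = nxt
        if node in final:
            best = len(word) - i
    return best


def strip_affixes(word: str, classes: List[AffixMachine], min_stem: int = 2):
    """Head machine: run class automata in order, outermost (rightmost) class first."""
    affixes: List[str] = []
    for machine in classes:
        n = longest_suffix(machine, word, min_stem)
        if n:  # each class is optional
            affixes.insert(0, word[-n:])
            word = word[:-n]
    return word, affixes


CASE = compile_class(["da", "dan", "ga", "ni"])
POSSESSIVE = compile_class(["im", "imiz", "ing", "i"])
PLURAL = compile_class(["lar"])
HEAD = [CASE, POSSESSIVE, PLURAL]

print(strip_affixes("kitoblarimda", HEAD))  # ('kitob', ['lar', 'im', 'da'])
```

Without a lexicon, a root that happens to end in a suffix-like string (a root ending in -i, for instance) is still over-stripped; the `min_stem` guard only keeps a word from being reduced to almost nothing, which illustrates the root-disambiguation limitation noted above.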

6. Visualization, Pedagogy, and Software Tools

Visualization and interactive design environments facilitate FSM comprehension and debugging:

- Dynamic FSM Visualization: Interactive DSLs and BFS-based computation tree exploration clarify nondeterministic branching, acceptance/rejection semantics, invariant validation, and path trace merging. Visualization aids the identification of subtle design flaws (e.g., missing transitions, invariant violations) and accelerates learning in formal languages courses (Morazán et al., 5 Aug 2025).

- Software Tools: Open-source FSM synthesis packages (e.g., EFSM-tools) support scenario+LTL input, SAT/QSAT-based encoding, minimization, and certified output, advancing integration with verification and model-checking workflows (Ulyantsev et al., 2016).
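The breadth-first computation-tree view can be reproduced for a small NFA: each tree node is a configuration (state, number of symbols consumed), children are the possible $\varepsilon$- and symbol-moves, and each leaf is labeled accept or reject. This Python sketch only mimics the idea and is not the cited tool; the function name, the example automaton, and the choice to stop a branch at the first accepting configuration are assumptions.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

EPS: Optional[str] = None  # epsilon (empty-string) move
Delta = Dict[Tuple[str, Optional[str]], Tuple[str, ...]]


def computation_tree(delta: Delta, start: str, accepting: set,
                     word: str) -> List[Tuple[List[str], str]]:
    """Breadth-first exploration of every NFA computation on `word`.

    Returns one (state trace, verdict) pair per leaf of the computation tree.
    """
    results = []
    queue = deque([[(start, 0)]])  # each entry is a path of (state, chars consumed)
    while queue:
        path = queue.popleft()
        state, i = path[-1]
        if i == len(word) and state in accepting:
            results.append(([s for s, _ in path], "accept"))
            continue
        children = []
        for nxt in delta.get((state, EPS), ()):
            if (nxt, i) not in path:  # cut epsilon cycles on this branch
                children.append(path + [(nxt, i)])
        if i < len(word):
            for nxt in delta.get((state, word[i]), ()):
                children.append(path + [(nxt, i + 1)])
        if not children:  # dead end: no move applies
            results.append(([s for s, _ in path], "reject"))
        queue.extend(children)
    return results


ends_ab: Delta = {("q0", "a"): ("q0", "q1"), ("q0", "b"): ("q0",), ("q1", "b"): ("q2",)}
for trace, verdict in computation_tree(ends_ab, "q0", {"q2"}, "aab"):
    print(" -> ".join(trace), verdict)
# q0 -> q1 reject
# q0 -> q0 -> q0 -> q0 reject
# q0 -> q0 -> q1 -> q2 accept
```

Listing rejecting leaves next to the accepting one is what makes nondeterministic branching visible: here the early guess `q0 -> q1` dies on the second `a`, while the later guess succeeds.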

7. Impact, Scalability, Current Challenges, and Future Directions

FSMs maintain foundational significance in protocol verification, system synthesis, AI agent modeling, and computational linguistics. Open research challenges include scaling automated extraction to large, ambiguous specifications; optimizing agentic inference workflows for cost and latency; integrating neural-symbolic representations for hardware realization; and automating design refinements with prompt-engineered LLMs. Future directions highlight:

- Extending agentic extraction methods to diverse protocol families and integration with fuzzing environments (Wael et al., 15 Jul 2025).

- Modular adaptation of lexicon-free FSM analyzers to other agglutinative languages (Sharipov et al., 2022).

- Developing neural FSM substrates for robust symbolic control in neuromorphic systems (Cotteret et al., 2022).

- Automating FSM synthesis from natural language using LLM feedback loops and multi-shot chain-of-thought reasoning (Lin et al., 26 Mar 2025).

The systematic formalization, extraction, and implementation of FSMs continue to advance applications in verification, design automation, distributed intelligence, and computational linguistics, with ongoing investigation into scalability, ambiguity resolution, and biologically plausible representations.