Gated DeltaNet: Adaptive Fast-Weight Model

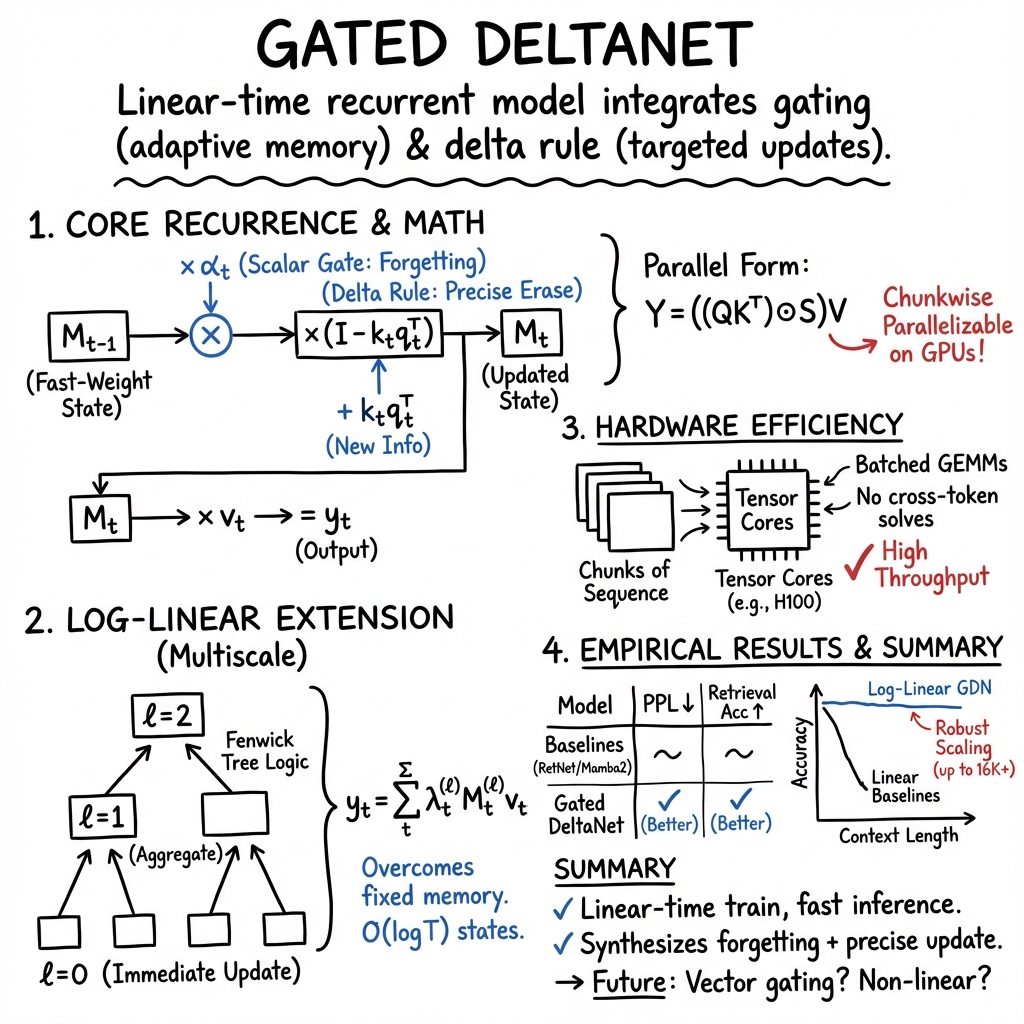

- Gated DeltaNet is a linear-time recurrent model that combines scalar gating with the delta rule to achieve adaptive, selective fast-weight updates.

- The model integrates log-linear attention with multiscale memory, enabling efficient parallelization and enhanced long-range context tracking.

- Empirical benchmarks demonstrate improved language modeling and retrieval performance compared to previous linear transformer approaches.

Gated DeltaNet is a linear-time recurrent sequence model that integrates gating for adaptive memory control and the delta rule for targeted fast-weight updates. It is designed to overcome limitations in retrieval and long-context modeling that affect previous linear transformers by fusing two key mechanisms: scalar gating enabling rapid global forgetting and the delta update rule enabling selective, precise overwriting of memory. Recent advancements further extend Gated DeltaNet to log-linear attention, introducing logarithmically growing multiscale memory states for enhanced long-range context tracking while preserving matmul-rich parallelization suitable for modern hardware (Guo et al., 5 Jun 2025, Yang et al., 2024).

1. Mathematical Formulation and Core Recurrence

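The central recurrence of Gated DeltaNet is defined as follows. For each token $x_t$ with projections $q_t, k_t \in \mathbb{R}^{d_k}$ and $v_t \in \mathbb{R}^{d_v}$, the fast-weight state $S_t \in \mathbb{R}^{d_v \times d_k}$ is updated by:

$$S_t = \alpha_t \, S_{t-1} \left(I - \beta_t k_t k_t^\top\right) + \beta_t v_t k_t^\top$$

where $\alpha_t \in (0, 1)$ is a scalar gate and $\beta_t \in (0, 1)$ is a writing strength, both computed as functions of $x_t$. The term $\left(I - \beta_t k_t k_t^\top\right)$ applies the delta rule for erasure and update of the key-value association stored under $k_t$. The output at each step is:

$$o_t = S_t q_t$$

This linear recurrent update enables parallel matrix-multiply implementations. Let $Q, K \in \mathbb{R}^{T \times d_k}$ and $V \in \mathbb{R}^{T \times d_v}$ represent the stacked queries, keys, and values for a length-$T$ sequence. Define the lower-triangular semiseparable mask matrix $M \in \mathbb{R}^{T \times T}$, with

$$M_{ij} = \begin{cases} \prod_{r=j+1}^{i} \alpha_r & i \ge j \\ 0 & i < j \end{cases}$$

Sequence outputs are computed by:

$$O = \left(QK^\top \odot M\right) \left(I + \mathrm{diag}(\beta)\left(KK^\top \odot (M - I)\right)\right)^{-1} \mathrm{diag}(\beta)\, V$$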

The update process is compatible with chunkwise block processing and highly amenable to GPU tensor-core parallelization (Yang et al., 2024).
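The equivalence between the token-by-token recurrence and the masked matrix form can be checked numerically. The following is a minimal NumPy sketch, assuming the recurrence $S_t = \alpha_t S_{t-1}(I - \beta_t k_t k_t^\top) + \beta_t v_t k_t^\top$ with output $o_t = S_t q_t$. The function names are illustrative and are not taken from the cited implementations, and the parallel version uses a dense solve for clarity rather than the chunkwise kernels used in practice.

```python
import numpy as np

def gated_delta_recurrent(Q, K, V, alpha, beta):
    """Reference loop: S_t = alpha_t S_{t-1} (I - beta_t k_t k_t^T) + beta_t v_t k_t^T."""
    T, d_k = K.shape
    d_v = V.shape[1]
    S = np.zeros((d_v, d_k))            # fast-weight state, maps keys to values
    O = np.zeros((T, d_v))
    I = np.eye(d_k)
    for t in range(T):
        k, v = K[t], V[t]
        S = alpha[t] * S @ (I - beta[t] * np.outer(k, k)) + beta[t] * np.outer(v, k)
        O[t] = S @ Q[t]                 # o_t = S_t q_t
    return O

def gated_delta_parallel(Q, K, V, alpha, beta):
    """Masked matrix form of the same computation (dense, O(T^2), for checking only)."""
    T = K.shape[0]
    log_gamma = np.cumsum(np.log(alpha))
    # M[i, j] = prod_{r=j+1..i} alpha_r for i >= j, and 0 above the diagonal
    M = np.tril(np.exp(log_gamma[:, None] - log_gamma[None, :]))
    # Unit lower-triangular system that defines the pseudo-values u_t
    A = np.eye(T) + beta[:, None] * np.tril((K @ K.T) * M, k=-1)
    U = np.linalg.solve(A, beta[:, None] * V)
    return ((Q @ K.T) * M) @ U
```

Both functions should agree to floating-point tolerance on random inputs; normalizing the keys keeps the transition matrices well conditioned.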

2. Extensions: Log-Linear Attention and Multiscale Memory

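To address the fixed memory constraint of linear-time models, log-linear attention introduces a hierarchical Fenwick-tree partitioning of the memory state. For each position $t$, $O(\log t)$ multiscale memory matrices $S_t^{(\ell)}$ summarize buckets $\mathcal{B}_t^{(\ell)}$ of earlier positions defined by binary-indexed tree logic. Each level state is the gated delta recurrence restricted to its bucket:

$$S_t^{(\ell)} = \sum_{s \in \mathcal{B}_t^{(\ell)}} \beta_s v_s k_s^\top \prod_{r=s+1}^{t} \alpha_r \left(I - \beta_r k_r k_r^\top\right)$$

where $\alpha_r$ is the scalar gate, $\beta_r$ is the delta-rule writing strength, and the factors of the product are ordered by increasing $r$.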

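Per-level weights $\lambda_t^{(\ell)}$ are computed via a small linear layer on the token input $x_t$, and the attention output aggregates the level states $S_t^{(\ell)}$ across levels $\ell$:

$$o_t = \sum_{\ell} \lambda_t^{(\ell)} S_t^{(\ell)} q_t$$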

Parallel training utilizes a hierarchical mask $M^{\mathcal{H}}$ that encodes the multilevel memory access pattern, supporting efficient kernel fusion and $O(T \log T)$ training complexity (Guo et al., 5 Jun 2025).
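The bucket structure can be illustrated with the standard binary-indexed-tree decomposition of a prefix. The sketch below is an assumption about the indexing convention rather than the authors' code: level 0 holds only the current position, and a bucket of size $2^{\ell-1}$ is assigned to level $\ell$. The names `fenwick_buckets` and `level_of` are hypothetical.

```python
def fenwick_buckets(t):
    """Return {level: (start, end)} half-open ranges that partition positions 0..t."""
    buckets = {0: (t, t + 1)}          # level 0 holds only the current token
    end = t
    while end > 0:
        size = end & -end              # lowest set bit of `end`
        start = end - size
        # a bucket of size 2**(level - 1) is stored at `level`
        buckets[size.bit_length()] = (start, end)
        end = start
    return buckets

def level_of(t, s):
    """Level whose bucket contains source position s when querying from position t."""
    for level, (start, end) in fenwick_buckets(t).items():
        if start <= s < end:
            return level
    raise ValueError("s must satisfy 0 <= s <= t")
```

Under this convention, an entry of the hierarchical mask at row `t` and column `s` would carry the level weight indexed by `level_of(t, s)`, and the number of buckets at any position grows only logarithmically with `t`.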

3. Hardware-Efficient Parallel Training Algorithms

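Gated DeltaNet is implemented with chunkwise parallelism over chunks of a fixed size $C$. Within each chunk, all operations (matrix projections, gating, and delta updates) are compiled into batched GEMMs (matrix-matrix multiplies) and elementwise multiplies by diagonal cumulative decays. The WY/UT factorization reformulates the product of rank-one delta-rule transitions inside a chunk as a single low-rank correction:

$$\left(I - \beta_1 k_1 k_1^\top\right) \cdots \left(I - \beta_C k_C k_C^\top\right) = I - \sum_{i=1}^{C} w_i k_i^\top, \qquad W = \mathcal{T} K, \qquad \mathcal{T} = \left(I + \mathrm{diag}(\beta)\, \mathrm{tril}(KK^\top, -1)\right)^{-1} \mathrm{diag}(\beta)$$

Here the rows of $K$ and $W$ are $k_i^\top$ and $w_i^\top$, and $\mathrm{tril}(\cdot, -1)$ keeps the strictly lower-triangular part. The only sequential work is the small $C \times C$ triangular inverse inside each chunk; no triangular solve spans the full sequence. Recurrent state memory remains $O(d_k d_v)$ per head for linear (classic) Gated DeltaNet and $O(d_k d_v \log T)$ for log-linear variants. Wall-clock throughput reaches 45 Kt/s (thousand tokens per second) for a 1.3B model on H100 GPUs (Yang et al., 2024).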
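The WY idea, that a product of rank-one delta-rule transitions collapses to the identity minus one low-rank term, can be verified on a single chunk. This is a hedged NumPy sketch for the ungated delta-rule part only; gates would enter as cumulative decay factors. The helper names are illustrative, and `np.linalg.solve` stands in for the dedicated triangular routines a real kernel would use.

```python
import numpy as np

def wy_factors(K, beta):
    """Rows w_i such that prod_i (I - beta_i k_i k_i^T) = I - W^T K (left-to-right product)."""
    C = K.shape[0]
    # Unit lower-triangular matrix I + diag(beta) * strictly_lower(K K^T)
    A = np.eye(C) + beta[:, None] * np.tril(K @ K.T, k=-1)
    T_mat = np.linalg.solve(A, np.diag(beta))   # UT transform
    return T_mat @ K

def product_of_transitions(K, beta):
    """Sequential reference: multiply the C rank-one transitions one by one."""
    d = K.shape[1]
    P = np.eye(d)
    for k, b in zip(K, beta):
        P = P @ (np.eye(d) - b * np.outer(k, k))
    return P
```

The sequential loop performs $C$ dependent matrix products, while the factored form needs one small triangular system followed by dense matrix multiplies, which is the shape of computation that tensor cores favor.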

4. Empirical Performance and Benchmark Results

Gated DeltaNet achieves consistent improvements across language modeling, retrieval, long-context modeling, and robustness to context scaling. Empirical results on 1.3B parameter models pretrained with FineWeb-Edu (100B tokens) reveal the following trends:

| Model | Wiki PPL ↓ | LAMBADA PPL ↓ | Avg. Commonsense Acc. (%) ↑ |
|---|---|---|---|
| RetNet | 19.08 | 17.27 | 52.02 |
| Mamba2 | 16.56 | 12.56 | 54.89 |
| DeltaNet | 17.71 | 16.88 | 52.14 |
| Gated DeltaNet | 16.42 | 12.17 | 55.32 |

For retrieval tasks (Synthetic NIAH 1–3, SQuAD, TriviaQA, NQ, DROP), Gated DeltaNet outperforms or matches linear baselines with up to 16K context, and log-linear extensions preserve high accuracy as length and number of key–value pairs grow. On LongBench (14 tasks), log-linear Gated DeltaNet exceeds linear variants on 8 of 14 tasks. Fine-grained analysis shows robust scaling to longer context windows without loss plateaus—a constraint for classical linear-time architectures (Guo et al., 5 Jun 2025, Yang et al., 2024).

5. Hybrid Architectures and Comparative Complexity

Hybrid architectures combine Gated DeltaNet blocks with sliding window attention or Mamba2 layers to fuse global and local memory mechanisms. Example patterns:

- Gated DeltaNet-H1: [GDN → SWA]n

- Gated DeltaNet-H2: [Mamba2 → GDN → SWA]n

These variants deliver superior throughput (54 Kt/s for H1) and modeling accuracy, closing most of the performance gap to standard Transformers, while retaining hardware efficiency and scaling advantages.
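The bracket notation above denotes a block of layers repeated $n$ times. A small sketch of how such a layer schedule could be expanded follows; the helper name and the repeat count of 12 are hypothetical, since the source does not state $n$.

```python
def build_layer_pattern(block, repeats):
    """Expand a repeating block of layer types into a flat layer schedule."""
    return [name for _ in range(repeats) for name in block]

# Hypothetical depth: the source does not give the value of n.
h1_layers = build_layer_pattern(["GDN", "SWA"], repeats=12)
h2_layers = build_layer_pattern(["Mamba2", "GDN", "SWA"], repeats=12)
```

In both schedules every sliding-window attention layer directly follows a Gated DeltaNet layer, so local attention always reads features that already carry the recurrent global memory.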

| Model | Training Time | Training Memory | Decode Time | Decode Memory |
|---|---|---|---|---|
| Gated DeltaNet | $O(T)$ | $O(T)$ | $O(1)$ | $O(1)$ |
| Log-Linear Gated DeltaNet | $O(T \log T)$ | $O(T)$ | $O(\log T)$ | $O(\log T)$ |

Log-linear Gated DeltaNet trades minor complexity overhead for expanding capacity, allowing richer encoding of long-range dependencies (Guo et al., 5 Jun 2025).
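To make the decode-memory trade-off concrete, the sketch below counts how many state matrices one head keeps at a given position. It assumes a Fenwick-style scheme with one slot for the current token plus one slot per binary digit of the position; the head dimensions of 128 are hypothetical and chosen only for the arithmetic.

```python
def decode_state_matrices(t, log_linear):
    """Upper bound on the number of d_v x d_k state matrices per head at position t."""
    if not log_linear:
        return 1                       # classic Gated DeltaNet keeps a single state
    return t.bit_length() + 1          # level 0 plus one level per binary digit of t

d_k = d_v = 128                        # hypothetical head dimensions
last_position = 16 * 1024 - 1          # final token of a 16K context
linear_floats = decode_state_matrices(last_position, False) * d_k * d_v
log_linear_floats = decode_state_matrices(last_position, True) * d_k * d_v
```

Under these assumptions the log-linear variant stores 15 matrices per head at the end of a 16K context, compared with one for the linear model, which is a modest constant-factor cost for a state that keeps growing with context length.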

6. Discussion, Limitations, and Future Directions

Gated DeltaNet synthesizes rapid selective forgetting via scalar gating with precise fast-weight memory modifications via the delta rule. This architecture is fully compatible with highly parallel matmul-rich kernels and exhibits stable training with robust extrapolation. Notable strengths include linear-time training, low-latency inference, and demonstrable gains across diverse benchmarks.

Potential research directions include extending the scalar gate $\alpha_t$ to diagonal or vector forms for per-dimension control; enriching the structure of transition matrices (e.g., by allowing negative eigenvalues); and exploring non-linear recurrences for further expressivity. A plausible implication is that such variants may improve memory management and robustness under extreme long-context requirements.
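The remark about negative eigenvalues can be made concrete. For a unit-norm key, a transition of the form $\alpha (I - \beta k k^\top)$ has eigenvalue $\alpha(1 - \beta)$ along $k$ and $\alpha$ in every orthogonal direction, so a writing strength above 1 would flip the sign along $k$. The sketch below assumes that transition form; letting $\beta$ exceed 1 is an illustration of the proposed direction, not a setting described in the source.

```python
import numpy as np

def transition_eigenvalues(alpha, beta, k):
    """Sorted eigenvalues of the symmetric transition alpha * (I - beta * k k^T)."""
    d = k.shape[0]
    A = alpha * (np.eye(d) - beta * np.outer(k, k))
    return np.sort(np.linalg.eigvalsh(A))

k = np.array([1.0, 0.0, 0.0, 0.0])                   # unit-norm key
eig_standard = transition_eigenvalues(0.9, 0.5, k)   # beta in (0, 1): all eigenvalues positive
eig_extended = transition_eigenvalues(0.9, 1.5, k)   # beta in (1, 2): one negative eigenvalue
```

With the standard range the smallest eigenvalue stays positive, whereas the extended range yields one negative eigenvalue along the key direction while the others remain equal to the gate value.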

Limitations include the rank-two transition updates per token and current restriction to scalar gating. The log-linear extension addresses the fixed-memory bottleneck but does incur $O(\log T)$ decode-time state. Application-specific tuning of level weights ($\lambda_t^{(\ell)}$) and incorporation of local attention remain active areas of investigation (Guo et al., 5 Jun 2025, Yang et al., 2024).