Learnable Frequency Attention Module

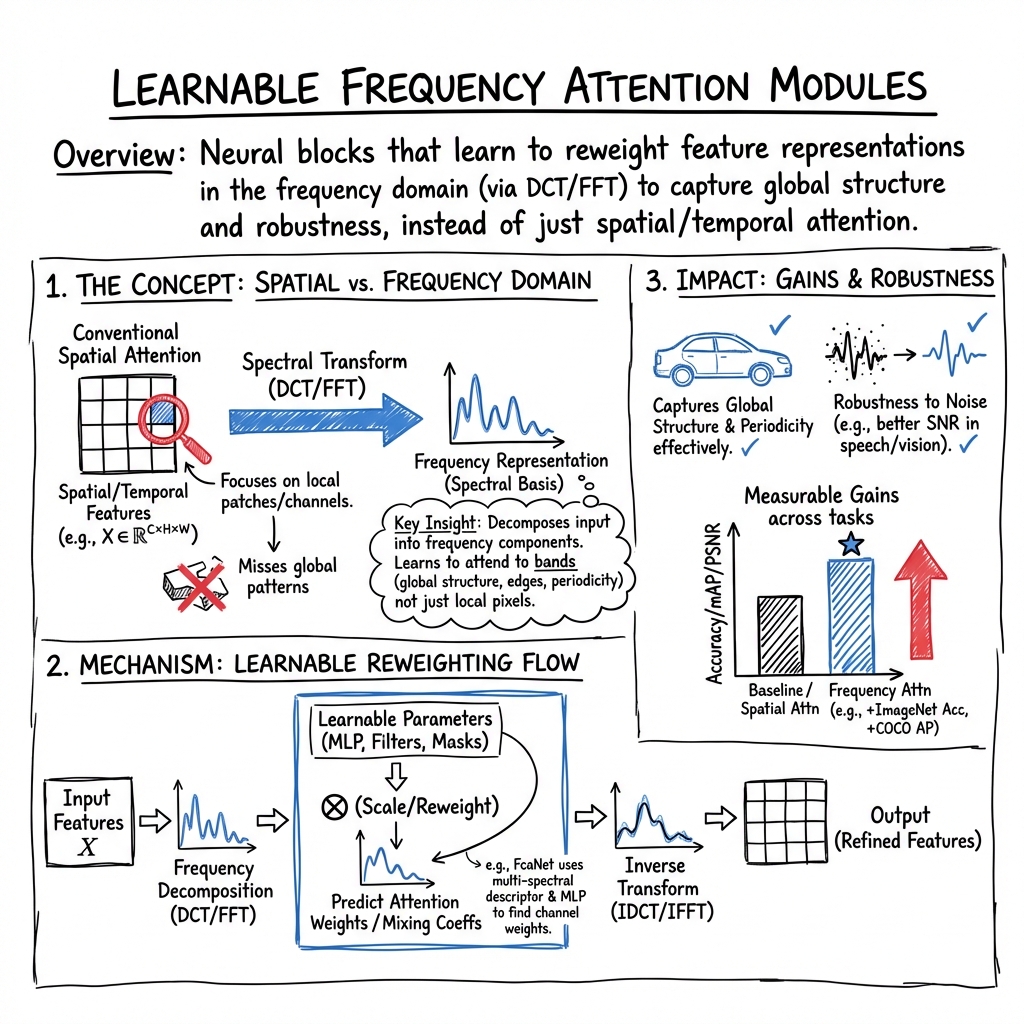

- Learnable Frequency Attention Module is a neural block that transforms input features using spectral methods like DCT or FFT to reweight frequency components.

- It efficiently captures global patterns, periodicity, and edge details by applying learnable weights on decomposed frequency bands.

- Its applications span image classification, denoising, speech processing, and time series forecasting, offering improved performance and interpretability.

A learnable frequency attention module is a neural block that, instead of applying conventional attention in the spatial or temporal domain, learns to reweight feature representations in the frequency domain. The mechanism decomposes the input features (images, spectrograms, sequence embeddings) using spectral transforms such as the DCT or FFT, then predicts attention weights or mixing coefficients for the resulting frequency components via learnable parameters. By operating on frequency bands rather than purely on spatial channels or local patches, frequency attention modules can efficiently capture global structure, periodicity, and edge content, and can improve robustness to noise. Recent architectures employ variants that include per-frequency scaling, spectral-domain masking, spectrum-aware pooling, cross-attention over a frequency-feature bank, and integration with spatial and wavelet branches.

1. Mathematical Basis: Frequency Decomposition and Attention

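Central to frequency attention is frequency-domain decomposition: input features are transformed to a spectral basis using operations such as the DCT or FFT. For a single-channel feature map $X \in \mathbb{R}^{H \times W}$, the 2D DCT yields, up to normalization constants,

$$F_{u,v} = \sum_{h=0}^{H-1} \sum_{w=0}^{W-1} X_{h,w} \cos\!\left(\frac{\pi u}{H}\left(h + \tfrac{1}{2}\right)\right) \cos\!\left(\frac{\pi v}{W}\left(w + \tfrac{1}{2}\right)\right), \qquad 0 \le u < H,\; 0 \le v < W.$$

In classical SE blocks, Global Average Pooling (GAP) is equivalent, up to a constant factor, to selecting the lowest-frequency component $F_{0,0}$. Frequency attention generalizes this by extracting multiple coefficients $F_{u_k,v_k}$, for $k = 1, \dots, K$, forming a multi-spectral descriptor (Qin et al., 2020).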
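The relationship between GAP and the lowest DCT frequency can be checked numerically. The following is a minimal NumPy sketch, assuming an unnormalized DCT-II basis; the helper names `dct2_basis` and `multispectral_descriptor` and the particular frequency indices are illustrative choices, not taken from any of the cited papers.

```python
import numpy as np

def dct2_basis(u, v, H, W):
    """Unnormalized 2D DCT-II basis image for frequency index (u, v)."""
    h = np.arange(H)[:, None]          # row positions, shape (H, 1)
    w = np.arange(W)[None, :]          # column positions, shape (1, W)
    return np.cos(np.pi * u * (h + 0.5) / H) * np.cos(np.pi * v * (w + 0.5) / W)

def multispectral_descriptor(x, freqs):
    """Project each channel of x (C, H, W) onto K DCT bases; returns (C, K)."""
    _, H, W = x.shape
    bases = np.stack([dct2_basis(u, v, H, W) for u, v in freqs])  # (K, H, W)
    return np.einsum("chw,khw->ck", x, bases)

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 8, 8))            # toy feature map (assumption)
freqs = [(0, 0), (0, 1), (1, 0)]              # illustrative choice, K = 3
desc = multispectral_descriptor(x, freqs)     # shape (4, 3)

# The (0, 0) basis is all ones, so that coefficient is H * W times the GAP value.
gap = x.mean(axis=(1, 2))
print(np.allclose(desc[:, 0], gap * 8 * 8))
```

Under this convention, GAP is the special case of a descriptor with the single frequency `(0, 0)`; adding further indices to `freqs` gives the multi-coefficient descriptor.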

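Weights for frequency components are then learned via linear layers or more complex networks. For channel attention in FcaNet (Qin et al., 2020), the attention weight for channel $c$ is a function $a_c = \sigma(g(z))_c$ of the multi-spectral descriptor $z$, where $g$ is a two-layer MLP and $\sigma$ is a sigmoid. In global spectral modules (Pham et al., 2024), filters $\mathcal{K}$ are trained, producing the filtered spectrum:

$$\tilde{X} = \mathcal{K} \odot \mathcal{F}(X),$$

where $\mathcal{F}$ denotes the Fourier transform and $\odot$ elementwise multiplication.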
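A trained spectral filter of this kind amounts to an elementwise product in the Fourier domain followed by an inverse transform. The sketch below is a generic illustration with NumPy, not the implementation of Pham et al.; the function name `spectral_filter` and the two hand-set kernels are assumptions standing in for learned parameters.

```python
import numpy as np

def spectral_filter(x, kernel):
    """Filter x (C, H, W) by elementwise multiplication with kernel in the 2D FFT domain."""
    spectrum = np.fft.fft2(x, axes=(-2, -1))
    # Taking .real is exact only when the kernel is conjugate-symmetric;
    # for an arbitrary learned kernel it discards the imaginary part.
    return np.fft.ifft2(kernel * spectrum, axes=(-2, -1)).real

rng = np.random.default_rng(0)
x = rng.standard_normal((2, 8, 8))      # toy feature map (assumption)

# An all-ones kernel leaves the features unchanged.
kernel_identity = np.ones_like(x)
y_identity = spectral_filter(x, kernel_identity)

# Zeroing the DC bin removes the per-channel mean, a very crude high-pass filter.
kernel_no_dc = np.ones_like(x)
kernel_no_dc[:, 0, 0] = 0.0
y_no_dc = spectral_filter(x, kernel_no_dc)
```

In a trainable module the kernel entries would be parameters updated by backpropagation; here they are fixed so that the effect of each setting is easy to verify.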

Also common are softmax-normalized frequency weight vectors for spectral selection (Xie et al., 2022, Yu et al., 2021) and more advanced per-head spectrum scaling, such as Multi-head Spectrum Scaling (MSS) (Wu, 2024).
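Both ideas can be illustrated on a toy multivariate series. This is a rough sketch under stated assumptions: the logits and per-head scales are random stand-ins for learned parameters, the score normalization by the square root of the bin count is a choice made here, and `per_head_spectral_attention` is a hypothetical helper that is not claimed to reproduce the exact formulation of any cited paper.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def amplitude_spectrum(x):
    """x: (N, T) real series, one row per variate. Returns (N, T // 2 + 1)."""
    return np.abs(np.fft.rfft(x, axis=-1))

def per_head_spectral_attention(amp, head_scale):
    """amp: (N, F); head_scale: (heads, F). Returns attention maps (heads, N, N)."""
    scaled = head_scale[:, None, :] * amp[None, :, :]    # (heads, N, F)
    scores = scaled @ scaled.transpose(0, 2, 1)          # (heads, N, N)
    scores = scores / np.sqrt(amp.shape[-1])             # scaling choice (assumption)
    return softmax(scores, axis=-1)

rng = np.random.default_rng(0)
x = rng.standard_normal((3, 16))                 # 3 variates, 16 time steps
amp = amplitude_spectrum(x)                      # (3, 9)

# Softmax-normalized weights over frequency bins (logits would be learned).
freq_weights = softmax(rng.standard_normal(amp.shape[-1]))
selected = amp * freq_weights                    # reweighted spectrum, (3, 9)

# One scaling vector per head (would be learned), applied before the dot product.
head_scale = rng.uniform(0.5, 1.5, size=(2, amp.shape[-1]))
attn = per_head_spectral_attention(amp, head_scale)   # (2, 3, 3)
```

The softmax weights sum to one across bins, so they act as a soft selection of frequencies, while the per-head scales let each head emphasize a different part of the spectrum.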

2. Core Architectures and Implementation Mechanisms

A variety of implementation strategies exist across tasks:

- Multi-spectral channel attention (FcaNet): Squeeze features with the top-$K$ spectral coefficients and learn to combine them per channel using a two-layer MLP; DCT bases are precomputed and fixed (Qin et al., 2020).

- Learnable frequency filters (presentation attack detection): Constrain DCT/FFT frequency masks with base binary filters plus trainable offset maps; apply bounded activation before spectral filtering and inverse transform (Fang et al., 2021).

- Frequency attention in knowledge distillation: Student features in the spectral domain are filtered via a learnable kernel $\mathcal{K}$; a high-pass mask zeroes low frequencies; the output fuses global spectral and local spatial branches via trainable mixing weights (Pham et al., 2024).

- Window-based frequency/channel attention (SFANet): Features are partitioned into fixed-size blocks, FFT is applied per block, real and imaginary parts are processed using a lightweight MLP channel attention; recalibrated blocks are aggregated via inverse FFT (Guo et al., 2023).

- Multi-frequency attention with MSS (FSatten): Fourier amplitude maps per variate are scaled by learned head-specific masks; frequency-domain attention replaces QK projections without altering base architectures (Wu, 2024).

- Composite triple-branch fusion: Spatial, frequency (FFT with learnable projection), and wavelet branches are processed in parallel, gated by a small network, and fused via softmax-weighted summation (Zhang et al., 5 Dec 2025).
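As one concrete illustration of the strategies listed above, the window-based variant can be sketched as follows. This is a simplified NumPy sketch, not the SFANet code: the function `window_fft_attention` is hypothetical, the channel descriptor is a plain mean over each block's spectrum, and a sigmoid stands in for the lightweight MLP channel attention.

```python
import numpy as np

def window_fft_attention(x, block, gate_fn):
    """Recalibrate channels per block in the FFT domain.

    x: (C, H, W) with H and W divisible by block.
    gate_fn: maps a descriptor array (..., C) to gates of the same shape.
    """
    C, H, W = x.shape
    nh, nw = H // block, W // block
    # Partition into blocks: (C, nh, b, nw, b) -> (nh, nw, C, b, b)
    blocks = x.reshape(C, nh, block, nw, block).transpose(1, 3, 0, 2, 4)
    spec = np.fft.fft2(blocks, axes=(-2, -1))
    # Separate channel descriptors for the real and imaginary parts.
    g_re = gate_fn(spec.real.mean(axis=(-2, -1)))[..., None, None]
    g_im = gate_fn(spec.imag.mean(axis=(-2, -1)))[..., None, None]
    recal = g_re * spec.real + 1j * g_im * spec.imag
    # Constant per-block gates preserve Hermitian symmetry, so the output is real.
    out = np.fft.ifft2(recal, axes=(-2, -1)).real
    # Reassemble the blocks into the original layout.
    return out.transpose(2, 0, 3, 1, 4).reshape(C, H, W)

rng = np.random.default_rng(0)
x = rng.standard_normal((3, 8, 8))                # toy feature map (assumption)

sigmoid = lambda d: 1.0 / (1.0 + np.exp(-d))      # stand-in for a learned MLP gate
y = window_fft_attention(x, block=4, gate_fn=sigmoid)

# With gates fixed to one, the block FFT round trip returns the input.
y_same = window_fft_attention(x, block=4, gate_fn=np.ones_like)
```

Processing fixed-size blocks keeps each FFT small, which is the main practical appeal of the windowed design.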

The table below summarizes exemplary mechanisms:

| Paper / Module | Frequency Transform | Learnable Parameters | Aggregation Op |
|---|---|---|---|
| FcaNet | DCT | MLP, DCT bases fixed | Two-layer excitation, sigmoid |
| Frequency Distillation [2403] | FFT | Learnable kernel, branch weights | HPF, spatial fusion, feature loss |
| SFANet WFCA [2302] | FFT (patch-wise) | MLP channel attn. | Block IFFT, real & imag branches |
| FSatten [2407] | FFT | Per-head mask W | Dot-product attention in freq. domain |
| TFFA (medical seg) [2512] | FFT, wavelets | Linear (FFT), DW Conv | Softmax-gating, weighted sum |
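The softmax-gated weighted sum in the last row can be sketched in a few lines. The example below is a minimal sketch under assumptions: the gate is a single linear layer on pooled branch features (the cited work describes a small network whose exact form is not given here), `gated_fusion` is a hypothetical helper, and the frequency and wavelet branches are crude placeholders rather than the actual branch computations.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()

def gated_fusion(branches, W_gate, b_gate):
    """Fuse B branch outputs of shape (C, H, W) with softmax gate weights.

    W_gate: (B, B * C) and b_gate: (B,) are the gate parameters (assumed linear gate).
    """
    stacked = np.stack(branches)                       # (B, C, H, W)
    pooled = stacked.mean(axis=(2, 3)).reshape(-1)     # (B * C,) pooled descriptor
    weights = softmax(W_gate @ pooled + b_gate)        # (B,), sums to 1
    fused = np.tensordot(weights, stacked, axes=1)     # weighted sum, (C, H, W)
    return fused, weights

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 8, 8))                     # toy feature map (assumption)

spatial = x
# Placeholder frequency branch: remove the DC component via the FFT.
spec = np.fft.fft2(x, axes=(-2, -1))
spec[:, 0, 0] = 0.0
frequency = np.fft.ifft2(spec, axes=(-2, -1)).real
# Placeholder for a wavelet branch: a Haar-like horizontal difference.
wavelet = x - np.roll(x, 1, axis=-1)

W_gate = rng.standard_normal((3, 3 * 4)) * 0.1
b_gate = np.zeros(3)
fused, weights = gated_fusion([spatial, frequency, wavelet], W_gate, b_gate)
```

Because the gate weights are a convex combination, the fused output stays within the range spanned by the branches, which tends to keep the fusion numerically well behaved.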

3. Integration with Backbone Architectures

Frequency attention modules are typically inserted into standard deep learning pipelines. In image recognition, Fca modules replace or supplement SE blocks (between convolution layers or at bottlenecks), initialized with spectral bases. In time series forecasting, FSatten swaps the QK computation in Transformer attention with spectral-domain scaling (Wu, 2024).
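To make the SE-replacement idea concrete, the following is a minimal forward-pass sketch of a multi-spectral channel attention block in NumPy. It assumes channels are split into equal groups with one DCT frequency per group, uses an unnormalized DCT-II basis, and initializes the MLP weights randomly; the function names, the frequency indices, and the reduction size are illustrative assumptions rather than the reference FcaNet implementation.

```python
import numpy as np

def dct2_basis(u, v, H, W):
    """Unnormalized 2D DCT-II basis image for frequency index (u, v)."""
    h = np.arange(H)[:, None]
    w = np.arange(W)[None, :]
    return np.cos(np.pi * u * (h + 0.5) / H) * np.cos(np.pi * v * (w + 0.5) / W)

def fca_channel_attention(x, freqs, W1, W2):
    """x: (C, H, W); freqs: list of (u, v); W1: (r, C); W2: (C, r)."""
    C, H, W = x.shape
    groups = np.array_split(np.arange(C), len(freqs))   # one frequency per channel group
    desc = np.empty(C)
    for (u, v), idx in zip(freqs, groups):
        # Squeeze: project each channel in the group onto its DCT basis.
        desc[idx] = (x[idx] * dct2_basis(u, v, H, W)).sum(axis=(1, 2))
    hidden = np.maximum(W1 @ desc, 0.0)                  # first MLP layer + ReLU
    weights = 1.0 / (1.0 + np.exp(-(W2 @ hidden)))       # second layer + sigmoid
    return x * weights[:, None, None], weights           # excite: rescale channels

rng = np.random.default_rng(0)
x = rng.standard_normal((6, 8, 8))                       # toy feature map (assumption)
freqs = [(0, 0), (0, 1), (1, 0)]                         # illustrative frequencies
W1 = rng.standard_normal((2, 6)) * 0.1                   # reduction to r = 2
W2 = rng.standard_normal((6, 2)) * 0.1
y, weights = fca_channel_attention(x, freqs, W1, W2)
```

Setting `freqs = [(0, 0)]` reduces the squeeze step to a scaled GAP, which recovers a standard SE-style block in this sketch.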

Hybrid models for dense prediction (segmentation) or speaker identification often apply frequency attention either immediately after input conversion (for example, to spectrograms) or interleaved in the early layers of the network (see FEFA (Hajavi et al., 2020), LFE (Xie et al., 2022)). For multi-modal and fusion tasks, frequency branches may be fused with spatial, wavelet, or temporal features using trainable gates (Zhang et al., 5 Dec 2025, Yu et al., 2021).

The modules generally require minimal changes to the tensor dimensions of the pipeline; most of the overhead lies in the spectral transforms (FFT/DCT) or in additional lightweight parameter matrices.

4. Quantitative Performance and Empirical Impact

Frequency attention modules consistently demonstrate measurable gains across tasks:

- Classification (FcaNet): ResNet-50 + FcaNet (): 77.5% (vs. SE’s 77.1%, baseline 76.1%) on ImageNet; mAP and mIoU gains in COCO and Cityscapes (Qin et al., 2020).

- Knowledge distillation: Frequency attention module yields up to +1.25 top-1 accuracy on ImageNet, +0.45 AP on COCO detection, consistently outperforming spatial-attention distillation (Pham et al., 2024).

- Speaker identification (FEFA, f-CBAM): EER reduction by 8–28% relative for various CNN backbones on VoxCeleb; robustness to noise improved, with up to ~30% less EER degradation under severe SNR loss (Hajavi et al., 2020, Yadav et al., 2019).

- Long-term time series forecasting (FSatten): MSE reduction by 8.1% (FSatten) and up to 21.8% (SOatten) at long horizons versus dense attention baselines (Wu, 2024).

- Image denoising (SFANet): WFCA blocks yield significant PSNR and HFEN improvements and efficient O() complexity (Guo et al., 2023).

Ablation studies across several papers confirm that frequency attention branches contribute unique advantages beyond channel, spatial, or temporal attention, including robustness to masking, noise, and improved convergence speed in high-frequency regimes (Feng et al., 21 Dec 2025).

5. Design Choices and Parameterization

Key design choices in frequency attention modules include:

- Type of spectral basis: Fixed DCT/FFT, adaptively-learned filters, or orthogonally-initialized projections (SOatten (Wu, 2024)).

- Granularity: Per-frequency-bin, spectral block, attention head, or grouped frequency bands.

- Normalization and gating: Softmax, sigmoid, or masking to ensure controlled scaling of frequency components and avoid instability.

- Wavelet augmentation: Combining contextual priors from analytic wavelets (DoG, Mexican Hat) with learned spectrum weights (Zhang et al., 5 Dec 2025).

- Regularization: Explicit spectral regularizers to control scale in high-frequency branches, as in (Zhang et al., 5 Dec 2025), or adaptive masking for node allocation in Laplace-based attention (Kiruluta, 1 Jun 2025).

- Learning protocol: Spectrum weights and attention parameters are typically trained end-to-end with standard optimizers, usually with no explicit spectral constraint after initialization.
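The gating choice above can be made concrete with a bounded learnable mask built from a fixed binary filter plus a trainable offset. The sketch below is only illustrative: the radial low-pass base, the `tanh` squashing, and the clipping to [0, 1] are assumptions chosen here to keep the mask bounded, and the helper names are hypothetical.

```python
import numpy as np

def radial_lowpass(H, W, cutoff):
    """Binary mask keeping FFT bins whose normalized radial frequency is <= cutoff."""
    fy = np.fft.fftfreq(H)[:, None]
    fx = np.fft.fftfreq(W)[None, :]
    return (np.sqrt(fy**2 + fx**2) <= cutoff).astype(float)

def bounded_mask(base, offset):
    """Base binary filter plus a trainable offset, squashed by tanh and clipped to [0, 1]."""
    return np.clip(base + np.tanh(offset), 0.0, 1.0)

def apply_mask(x, mask):
    """Filter a single-channel map x (H, W) with a frequency mask."""
    # .real discards any imaginary residue left by a non-symmetric mask.
    return np.fft.ifft2(mask * np.fft.fft2(x)).real

rng = np.random.default_rng(0)
x = rng.standard_normal((8, 8))                   # toy feature map (assumption)
base = radial_lowpass(8, 8, cutoff=0.25)

# At initialization (zero offset) the mask equals the fixed base filter.
mask_init = bounded_mask(base, np.zeros_like(base))

# After training, offsets would differ from zero; random values stand in here.
mask_trained = bounded_mask(base, rng.standard_normal(base.shape))
y = apply_mask(x, mask_trained)
```

Bounding the mask keeps every frequency gain in a fixed range, which is one simple way to avoid the instability that unconstrained spectral scaling can cause.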

Modules are distinguished by their parameter efficiency; for instance, FEFA introduces only one parameter per frequency bin, FcaNet scales with extra weights, while TFFA’s fusion gate is governed by a tiny MLP (Qin et al., 2020, Zhang et al., 5 Dec 2025).

6. Applications and Domain-Specific Variations

Frequency attention is widely adopted for domains where global patterns, periodicity, texture, or spectral information is critical:

- Vision: Image classification, detection, segmentation, denoising (FcaNet, SFANet, TFFA); night-time scene parsing with DCT-based LFE and cross-modal fusion (Xie et al., 2022).

- Speech/audio: Speaker verification, emotion recognition, ASR (FEFA, f-CBAM, F-Attention (Alastruey et al., 2023)), singing/energy melody extraction (frequency-temporal attention (Yu et al., 2021)).

- Sequential tasks: Multivariate time series forecasting (FSatten (Wu, 2024)), sequential recommendation (FEARec (Du et al., 2023)).

- Communication systems: OFDM channel estimation with SNR-embedded frequency attention (Yang et al., 2021).

- Regression/PDE learning: Overcoming spectral bias in cross-attention architectures through adaptive Fourier token scaling and incremental spectral enrichment (Feng et al., 21 Dec 2025).

In each case, frequency-based attention addresses limitations of purely spatial or time-local mechanisms, excelling at extracting globally informative or robust representations.

7. Interpretability, Scalability, and Future Directions

Frequency attention delivers improvements in both interpretability and scalability relative to standard attention:

- Interpretability: Learned frequency weights, filters, or node parameters (decay rate, frequency in Laplace transforms (Kiruluta, 1 Jun 2025)) are directly visible and can be mapped to salient regions in the spectral domain.

- Scalability: Modules such as adaptive Laplace attention scale as $O(T \log T)$ in the sequence length $T$, versus $O(T^2)$ in dot-product attention, with FFT-accelerated relevance computation.

- Robustness: Empirical analyses reveal consistent decreases in condition number for attention maps, improved stability for periodic and oscillatory signals (Wu, 2024), and pronounced reduction in error under synthetic noise (Hajavi et al., 2020, Alastruey et al., 2023).
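The scalability argument rests on the convolution theorem: an all-pairs interaction that has convolutional structure can be computed with FFTs instead of an explicit quadratic loop. The sketch below demonstrates only this generic identity with NumPy; it is not an implementation of Laplace-based attention, and the function names are illustrative.

```python
import numpy as np

def circular_conv_direct(a, b):
    """Circular convolution by explicit summation: T * T multiply-adds."""
    T = len(a)
    return np.array([sum(a[j] * b[(i - j) % T] for j in range(T)) for i in range(T)])

def circular_conv_fft(a, b):
    """Same result via the convolution theorem, using O(T log T) FFTs."""
    return np.fft.irfft(np.fft.rfft(a) * np.fft.rfft(b), n=len(a))

rng = np.random.default_rng(0)
a = rng.standard_normal(64)
b = rng.standard_normal(64)

direct = circular_conv_direct(a, b)
fast = circular_conv_fft(a, b)
print(np.allclose(direct, fast))
```

The two functions agree to floating-point precision, while the FFT route avoids materializing any T-by-T interaction matrix.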

Future research directions include dynamic frequency basis learning, cross-domain and cross-modal frequency attention fusion, spectral regularization for noise suppression, streaming-efficient attention via Laplace or orthogonal basis transforms, and interpretability analysis in complex architectures. Continued development of hybrid spatial-frequency attention blocks and integration with foundation CNN and transformer platforms is anticipated across both academic and industrial applications.