Linear Recurrent Unit (LRU)

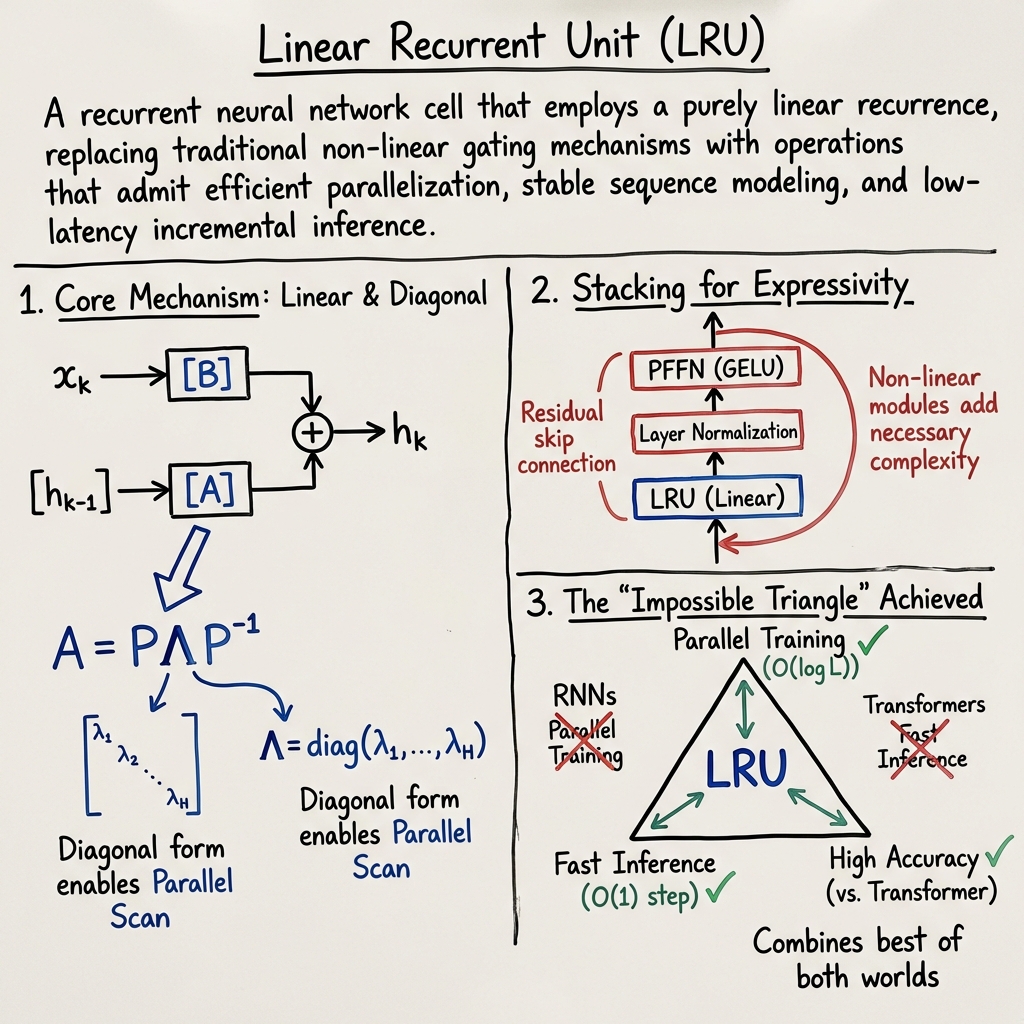

- Linear Recurrent Unit (LRU) is a recurrent neural network cell that replaces non-linear gating with linear recurrences, enabling efficient parallelization and fast incremental inference.

- It utilizes diagonalized spectral decomposition and parallel scan algorithms to reduce computational complexity, achieving significant throughput improvements over traditional models.

- Modern LRU variants integrate nonlinear modules like Layer Normalization and feed-forward networks to capture complex dependencies, boosting accuracy in recommendation, forecasting, NLP, and reinforcement learning.

A Linear Recurrent Unit (LRU) is a recurrent neural network cell that employs a purely linear recurrence, replacing traditional non-linear gating mechanisms with operations that admit efficient parallelization, stable sequence modeling, and low-latency incremental inference. LRUs have been developed in response to the computational bottlenecks of attention-based models and the optimization difficulties found in deep non-linear RNNs. Their design has influenced multiple modern architectures in sequential recommendation, time-series forecasting, natural language processing, and online reinforcement learning.

1. Mathematical Foundations and Model Structure

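The canonical LRU updates the hidden state $h_k$ at timestep $k$ by a strictly linear recurrence:

$$h_k = A h_{k-1} + B x_k,$$

where $x_k$ is an input vector, and $A$ and $B$ are learnable parameter matrices of appropriate dimensions. To stabilize training and ensure efficient computation, $A$ is diagonalized via eigendecomposition:

$$A = P \Lambda P^{-1}, \qquad \Lambda = \mathrm{diag}(\lambda_1, \dots, \lambda_N),$$

with spectral radius $\max_j |\lambda_j| < 1$, often parametrized in exponential form for both numerical stability and effective gradient propagation:

$$\lambda_j = \exp\!\left(-\exp(\nu_j) + i \exp(\theta_j)\right),$$

so that $|\lambda_j| = \exp(-\exp(\nu_j)) < 1$ for any real $\nu_j$. After absorbing $P$ into $B$ and the hidden state (that is, working with $P^{-1} h_k$ and $P^{-1} B$), the recurrence simplifies to a diagonal, generally complex-valued form $h_k = \Lambda h_{k-1} + B x_k$ in which each hidden channel evolves independently. This structure enables parallel scans along the sequence dimension, achieving $O(\log L)$ parallel training depth for length-$L$ sequences using divide-and-conquer scan algorithms (Yue et al., 2023, Orvieto et al., 2023, Liu et al., 11 Apr 2025).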
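
The diagonal recurrence described above can be illustrated with a small sketch. It assumes the exponential parametrization $\lambda = \exp(-\exp(\nu) + i\exp(\theta))$ and, for brevity, a scalar input with one input weight per channel. The names `make_eigenvalue` and `lru_scan_sequential` are hypothetical and not taken from any cited implementation.

```python
import cmath
import math


def make_eigenvalue(nu_log: float, theta_log: float) -> complex:
    """Build lambda = exp(-exp(nu_log) + i*exp(theta_log)).

    The magnitude is exp(-exp(nu_log)), which is below 1 for any real nu_log,
    so the recurrence is stable by construction.
    """
    return cmath.exp(complex(-math.exp(nu_log), math.exp(theta_log)))


def lru_scan_sequential(lams, b, xs):
    """Run h_k = lam * h_{k-1} + b * x_k per channel, starting from h_0 = 0.

    lams: one complex eigenvalue per hidden channel (diagonal of Lambda)
    b:    one input weight per channel (assumed already in the diagonal basis)
    xs:   sequence of scalar inputs (a simplification for this sketch)
    """
    h = [0j] * len(lams)
    states = []
    for x in xs:
        # Each channel is updated independently: no cross-channel mixing.
        h = [lam * h_i + b_i * x for lam, h_i, b_i in zip(lams, h, b)]
        states.append(h)
    return states


lams = [make_eigenvalue(-1.0, 0.0), make_eigenvalue(0.5, -2.0)]
b = [1.0 + 0j, 0.5 + 0j]
# Feeding a unit impulse exposes the decay lam**k of each channel.
states = lru_scan_sequential(lams, b, [1.0, 0.0, 0.0, 0.0])
```

Because the eigenvalue magnitudes are strictly below one, the impulse response of every channel decays geometrically, which is the stability property the parametrization is meant to guarantee.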

2. Architectural Extensions and Non-Linearity

Pure LRUs lack intrinsic non-linearity, which restricts model expressivity. Consequently, modern LRU stacks interleave the linear recurrence with nonlinear position-wise modules:

- Layer Normalization before and/or after the linear recurrence.

- Position-wise Feed-Forward Networks (PFFN) using GELU activations.

- Residual skip connections surrounding PFFN modules.

- Stacks of multiple LRU-Norm-PFFN blocks.

This construction exploits the isolability of the linear recurrence to efficiently propagate global sequence context, while leveraging the nonlinear modules for cross-channel interactions and high-order dependencies. Ablation studies show that omitting any of these components (LayerNorm, PFFN, residual skip) can result in up to an 82% drop in recall on sparse data, indicating their necessity for maintaining competitive accuracy (Yue et al., 2023).
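
As a rough illustration of the block structure listed above, the following sketch chains a diagonal linear recurrence, Layer Normalization, and a GELU feed-forward network with a residual connection. It is a simplification under stated assumptions: real-valued decays, no learnable LayerNorm scale or shift, no biases, and hypothetical names (`lru_block`, `pffn`). The exact ordering of modules differs between published models.

```python
import math


def layer_norm(v, eps=1e-5):
    """Normalize a vector to zero mean and unit variance (no learnable affine)."""
    mean = sum(v) / len(v)
    var = sum((a - mean) ** 2 for a in v) / len(v)
    return [(a - mean) / math.sqrt(var + eps) for a in v]


def gelu(a):
    """Exact GELU using the Gaussian error function."""
    return 0.5 * a * (1.0 + math.erf(a / math.sqrt(2.0)))


def matvec(W, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def pffn(v, W1, W2):
    """Position-wise feed-forward network: W2 @ gelu(W1 @ v)."""
    return matvec(W2, [gelu(a) for a in matvec(W1, v)])


def lru_block(xs, lams, W1, W2):
    """Linear recurrence -> LayerNorm -> PFFN with a residual skip."""
    h = [0.0] * len(lams)
    out = []
    for x in xs:
        # Linear, channel-wise recurrence carries sequence context.
        h = [lam * h_i + x_i for lam, h_i, x_i in zip(lams, h, x)]
        z = layer_norm(h)
        # The nonlinear PFFN mixes channels; the skip keeps the normalized state.
        out.append([z_i + f_i for z_i, f_i in zip(z, pffn(z, W1, W2))])
    return out


lams = [0.9, 0.5, 0.1]                      # assumed real decays
W1 = [[0.1, -0.2, 0.3], [0.0, 0.4, -0.1],
      [0.2, 0.2, 0.2], [-0.3, 0.1, 0.0]]    # 4 x 3, illustrative values
W2 = [[0.1, 0.0, -0.1, 0.2], [0.0, 0.3, 0.1, 0.0],
      [-0.2, 0.1, 0.0, 0.1]]                # 3 x 4, illustrative values
outputs = lru_block([[1.0, 2.0, 3.0], [0.0, 1.0, 0.0]], lams, W1, W2)
```

Stacking several such blocks gives the LRU-Norm-PFFN stack; only the PFFN introduces cross-channel interaction, since the recurrence itself is diagonal.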

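The Real-Gated Linear Recurrent Unit (RG-LRU), as introduced in the Hawk and Griffin models, further augments the LRU with input-conditioned gates:

$$r_k = \sigma(W_a x_k + b_a), \qquad i_k = \sigma(W_x x_k + b_x),$$

$$a_k = a^{c\, r_k}, \qquad h_k = a_k \odot h_{k-1} + \sqrt{1 - a_k^2} \odot (i_k \odot x_k),$$

where $r_k$ is the recurrence gate, $i_k$ is the input gate, $a = \sigma(\Lambda)$ is a learnable per-channel decay in $(0, 1)$, and $c$ is a fixed scalar constant. These gates allow element-wise control of memory retention and input injection, increasing expressivity while preserving parallelizable and efficient updates (De et al., 2024, Liu et al., 2024).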
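
A single RG-LRU step can be sketched as follows, assuming the update form from De et al. (2024): a recurrence gate $r = \sigma(W_a x + b_a)$, an input gate $i = \sigma(W_x x + b_x)$, a gated decay $a_k = \sigma(\Lambda)^{c r}$, and the state update $h_k = a_k \odot h_{k-1} + \sqrt{1 - a_k^2} \odot (i \odot x)$. To keep the example short, the gate weights here are per-channel scalars rather than matrices, which is an assumption of this sketch, and `rg_lru_step` is a hypothetical name.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def rg_lru_step(h_prev, x, Lambda, w_a, b_a, w_x, b_x, c=8.0):
    """One element-wise RG-LRU update.

    All arguments except c are lists with one entry per channel.
    Gate weights are diagonal (per-channel scalars) in this simplified sketch.
    """
    h = []
    for h_i, x_i, lam_i, wa, ba, wx, bx in zip(h_prev, x, Lambda, w_a, b_a, w_x, b_x):
        r = sigmoid(wa * x_i + ba)        # recurrence gate: how much memory to keep
        i = sigmoid(wx * x_i + bx)        # input gate: how much input to inject
        a = sigmoid(lam_i) ** (c * r)     # gated decay, always in (0, 1)
        # sqrt(1 - a^2) rescales the input so the state magnitude stays bounded.
        h.append(a * h_i + math.sqrt(1.0 - a * a) * (i * x_i))
    return h


# With zero input the state simply decays by a = sigmoid(0) ** (8 * 0.5).
h_next = rg_lru_step([1.0], [0.0], [0.0], [1.0], [0.0], [1.0], [0.0])
```

Because every operation is element-wise, the update keeps the per-channel independence of the plain LRU while making the decay depend on the current input.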

3. Computational Properties and Parallelization

A defining property of LRUs is their compatibility with parallel scan algorithms. Formally, taking $h_0 = 0$, the unrolled LRU recurrence is:

$$h_k = \sum_{j=1}^{k} A^{k-j} B x_j.$$

In the diagonalized basis, this simplifies to a sum of powers of $\Lambda$, computed with element-wise multiplications. Divide-and-conquer scan methods, implemented in custom CUDA kernels, achieve $O(\log L)$ sequential depth per length-$L$ sequence for batched training, given sufficient parallel hardware. For variable-length sequences, embedding-only padding minimizes wasted computation by padding only the inputs rather than the hidden states.
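
The reason a linear recurrence admits a parallel scan is that each step is an affine map $h \mapsto a h + b$, and composing affine maps is associative. The sketch below shows this with a recursive divide-and-conquer scan on a single real-valued channel and checks it against the sequential loop. It runs serially in Python and only illustrates the structure that a parallel kernel would exploit. The function names are hypothetical.

```python
def combine(left, right):
    """Compose two affine maps h -> a*h + b, applying `left` first."""
    a1, b1 = left
    a2, b2 = right
    return (a2 * a1, a2 * b1 + b2)


def scan_divide_and_conquer(elems):
    """Inclusive scan under `combine` by recursive halving.

    The two recursive calls are independent, so on parallel hardware they
    could run concurrently, giving logarithmic depth in the sequence length.
    """
    n = len(elems)
    if n <= 1:
        return list(elems)
    mid = n // 2
    left = scan_divide_and_conquer(elems[:mid])
    right = scan_divide_and_conquer(elems[mid:])
    carry = left[-1]  # composite map of the entire left half
    return left + [combine(carry, r) for r in right]


def scan_sequential(elems):
    """Reference loop: h_k = a_k * h_{k-1} + b_k with h_0 = 0."""
    h = 0.0
    out = []
    for a, b in elems:
        h = a * h + b
        out.append(h)
    return out


lam = 0.9
xs = [1.0, -2.0, 0.5, 3.0, 0.25]
elems = [(lam, x) for x in xs]
# With h_0 = 0, the hidden state is the offset term of each prefix composite.
hidden_parallel = [b for _, b in scan_divide_and_conquer(elems)]
hidden_sequential = scan_sequential(elems)
```

The same `combine` operator also works when the decay differs per step, which is why gated variants with input-dependent coefficients remain scan-compatible.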

Incremental inference is as efficient as in classic RNNs: given $h_{k-1}$ and an incoming $x_k$, the update $h_k = \Lambda h_{k-1} + B x_k$ is computed in $O(1)$ time with respect to sequence length. For long sequences this can be orders of magnitude faster than attention-based models, whose per-step inference cost grows with sequence length. Empirical wall-clock measurements indicate that LRURec achieves significantly higher throughput than SASRec on sequential recommendation tasks (Yue et al., 2023, Liu et al., 2024).
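
To make the constant-cost claim concrete, the sketch below keeps only the current state of one complex channel and updates it per incoming input, then compares the result with the unrolled sum $h_k = \sum_{j=1}^{k} \lambda^{k-j} b\, x_j$. The class `StreamingLRU` and the scalar-channel setup are assumptions made for illustration.

```python
import cmath


class StreamingLRU:
    """Single-channel LRU that stores only the latest hidden state."""

    def __init__(self, lam: complex, b: complex):
        self.lam = lam
        self.b = b
        self.h = 0j  # h_0 = 0

    def step(self, x: float) -> complex:
        # One multiply-add per channel, independent of how many inputs came before.
        self.h = self.lam * self.h + self.b * x
        return self.h


def unrolled_state(lam: complex, b: complex, xs) -> complex:
    """Closed-form state after the whole sequence: sum_j lam**(k-j) * b * x_j."""
    k = len(xs)
    return sum(lam ** (k - j) * b * x for j, x in enumerate(xs, start=1))


lam = cmath.rect(0.9, 0.3)  # magnitude 0.9, phase 0.3 rad
b = 0.5 + 0j
xs = [1.0, 2.0, -1.0, 0.5]

cell = StreamingLRU(lam, b)
for x in xs:
    streamed = cell.step(x)
closed_form = unrolled_state(lam, b, xs)
```

The streaming path never revisits earlier inputs, whereas the closed form touches all of them, so the two agree in value but differ in per-step cost.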

4. Comparison with Nonlinear RNNs, Transformers, and SSMs

Linear Recurrent Units contrast sharply with standard RNNs (LSTM/GRU) and Transformer models:

| Model | Time Complexity (training, length $L$) | Training Parallelism | Inference Efficiency (per step) | Expressivity Mechanism |
|---|---|---|---|---|
| LSTM/GRU | $O(L)$ sequential steps | Sequential | $O(1)$ | Gated nonlinear recurrence |
| Transformer | $O(L^2)$ | Parallel | $O(L)$ | Full attention |
| LRU (diagonalized) | $O(L)$ work, $O(\log L)$ depth | Parallel | $O(1)$ | Linear + stacked nonlinearity |
| SSM (S4/S5) | $O(L \log L)$ | Parallel | $O(1)$ | State-space convolution |

The editor's term "impossible triangle" captures this combination: LRUs achieve hardware-parallel training, RNN-like fast incremental inference, and accuracy competitive with or exceeding Transformer-based sequence models, notably outperforming baselines by 2–17% on recall and NDCG metrics across multiple datasets (Yue et al., 2023, Liu et al., 2024, Orvieto et al., 2023, Liu et al., 11 Apr 2025).

5. Empirical Evaluation and Applications

LRUs underpin numerous practical sequence modeling frameworks:

- Sequential Recommendation: On benchmarks such as MovieLens-1M, Beauty, Sports, and the extended XLong (avg length 958), LRURec outperforms SASRec in both NDCG@10 and Recall@10. LRURec converges in 23K steps versus 50K for SASRec, demonstrating both speed and efficiency. For extremely sparse datasets, relative improvements exceed 17% (Yue et al., 2023, Liu et al., 2024).

- Time-Series Forecasting and Multisource Fusion: The BLUR model (Bidirectional LRU) achieves superior accuracy (ETTh1 MSE=0.247, MAE=0.336) compared with both Transformer and SSM baselines, confirming LRUs’ advantages for long-horizon, high-dimensional temporal datasets (Liu et al., 11 Apr 2025).

- Language Modeling: Real-Gated LRUs, interleaved or hybridized with local attention (Griffin), match or surpass Llama2 with less data and lower computational cost, and demonstrate excellent extrapolation to longer contexts (De et al., 2024).

- Online Reinforcement Learning: LRUs with diagonal complex-valued recurrence admit efficient real-time recurrent learning (RTRL) of exact gradients at a per-step cost linear in the number of parameters, sharply contrasting with the $O(n^4)$ per-step cost of RTRL for dense RNNs with $n$ hidden units. This structure reduces bias and computation, and further improvements are realized by extensions like Recurrent Trace Units (Elelimy et al., 2024).
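
The RTRL point can be illustrated on a single channel. Because the recurrence is diagonal, the sensitivity of each state to its own parameters obeys a one-dimensional recursion, so exact gradients can be carried forward in time with one extra scalar per parameter. The sketch below uses a real-valued channel for simplicity (the cited work uses complex-valued recurrences) and the hypothetical name `rtrl_diagonal`.

```python
def forward(lam, b, xs):
    """Final state of h_k = lam * h_{k-1} + b * x_k with h_0 = 0."""
    h = 0.0
    for x in xs:
        h = lam * h + b * x
    return h


def rtrl_diagonal(lam, b, xs):
    """Return the final state and exact dh/dlam, dh/db via forward traces.

    Trace recursions follow from differentiating the update:
        dh_k/dlam = h_{k-1} + lam * dh_{k-1}/dlam
        dh_k/db   = x_k     + lam * dh_{k-1}/db
    """
    h = 0.0
    e_lam = 0.0
    e_b = 0.0
    for x in xs:
        e_lam = h + lam * e_lam  # uses h_{k-1}, so update traces before h
        e_b = x + lam * e_b
        h = lam * h + b * x
    return h, e_lam, e_b


lam, b = 0.8, 0.5
xs = [1.0, -0.5, 2.0, 0.25]
h_final, grad_lam, grad_b = rtrl_diagonal(lam, b, xs)

# Central finite differences as an independent check of the traces.
eps = 1e-6
fd_lam = (forward(lam + eps, b, xs) - forward(lam - eps, b, xs)) / (2 * eps)
fd_b = (forward(lam, b + eps, xs) - forward(lam, b - eps, xs)) / (2 * eps)
```

No backward pass over the history is needed: the traces are updated online alongside the state, which is what makes this form attractive for online learning.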

6. Extensions, Generalizations, and Future Directions

Recent developments generalize LRUs by introducing dynamic, input-dependent gates. GateLoop extends the LRU with fully data-controlled recurrence coefficients and key/value/output gates, and offers three computation modes: linear recurrent ($O(l)$), parallel scan ($O(l \log l)$), and a surrogate attention mode ($O(l^2)$), where $l$ is the sequence length. This generalized view enables data-controlled relative positional encoding and amplifies expressivity for auto-regressive modeling, as validated by empirical gains in WikiText-103 perplexity (13.4 for GateLoop vs. 18.6 for Transformer) (Katsch, 2023).
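
The equivalence between the recurrent and surrogate attention modes can be sketched on a single scalar channel. The example assumes the simplified form $h_n = a_n h_{n-1} + k_n v_n$, $y_n = q_n h_n$, which unrolls to $y_n = \sum_{m \le n} q_n k_m v_m \prod_{j=m+1}^{n} a_j$. Reducing the gates to scalars is an assumption of this sketch, not the full GateLoop formulation, and the function names are hypothetical.

```python
def gateloop_recurrent(a, k, v, q):
    """Linear-time mode: carry one state and update it per step."""
    h = 0.0
    ys = []
    for a_n, k_n, v_n, q_n in zip(a, k, v, q):
        h = a_n * h + k_n * v_n  # data-controlled decay a_n
        ys.append(q_n * h)
    return ys


def gateloop_surrogate_attention(a, k, v, q):
    """Quadratic-time mode: each output attends over all earlier positions.

    The weight between positions n and m is q_n * k_m times the product of
    the decays a_{m+1} ... a_n, which acts as a data-controlled relative
    positional factor.
    """
    ys = []
    for n in range(len(a)):
        y = 0.0
        decay = 1.0  # empty product for m == n
        for m in range(n, -1, -1):
            y += q[n] * k[m] * v[m] * decay
            decay *= a[m]  # extend the product to cover a_m for the next m
        ys.append(y)
    return ys


a = [0.9, 0.5, 0.7, 0.95]   # input-dependent decays (illustrative values)
k = [1.0, -0.5, 0.3, 2.0]
v = [0.2, 1.5, -1.0, 0.4]
q = [1.0, 0.5, -2.0, 0.1]
y_recurrent = gateloop_recurrent(a, k, v, q)
y_attention = gateloop_surrogate_attention(a, k, v, q)
```

Both functions compute the same outputs; they differ only in cost and in how much of the computation can be parallelized across positions.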

Behavior-dependent LRUs (RecBLR) specialize gating to user behavior context, realizing further gains in recommendation accuracy and hardware utilization. Empirical studies indicate scalability to extremely long sequences with minimal loss in throughput or accuracy.

A plausible implication is that emerging LRU variants—via specialized gating, parameter sharing, hardware-aware parallelization, and hybridization with attention—are well positioned to supplant traditional nonlinear RNNs and serve as core primitives in efficient sequence modeling across domains.

7. Limitations and Open Challenges

While LRUs achieve linear runtime and competitive empirical accuracy, their purely linear recurrence—without gates or nonlinearities—limits expressivity. Remedies such as stacking with MLPs, interleaving gating mechanisms, or hybridizing with attention increase parameter count and architectural complexity. Furthermore, the success of content-dependent (data-driven) parameterizations underscores a trend toward models with dynamic adaptation, challenging the fixed spectral design of classical LRUs.

There remains ongoing investigation into the tradeoffs between recurrence expressivity, parallelizability, and stability, as well as the optimization of initialization, normalization, and gating mechanisms for diverse application domains. LRUs’ secure niche in efficient sequence modeling is now complemented by promising extensions in fully data-driven recurrence and bidirectional architectures.