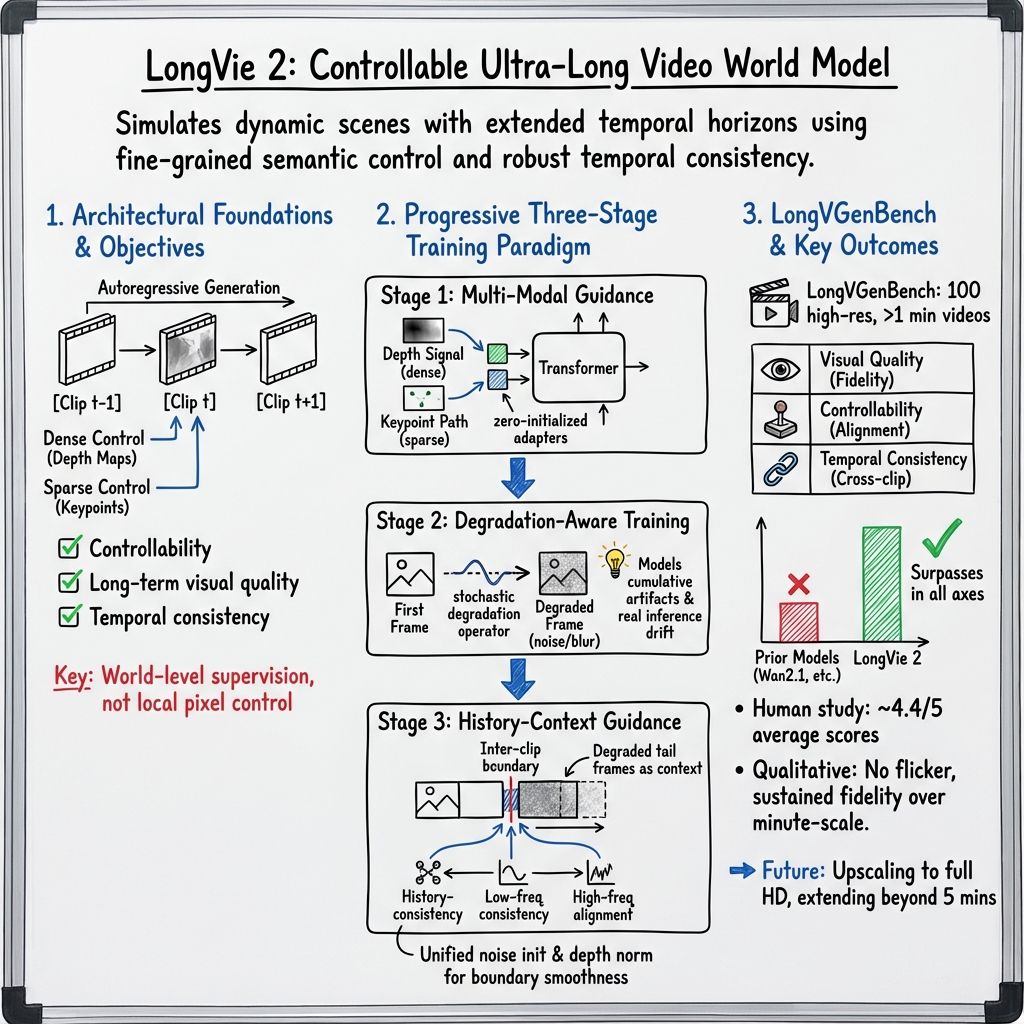

LongVie 2: Ultra-Long Video World Model

- LongVie 2 is a multimodal video world model that integrates dense depth signals and sparse keypoints to enable fine-grained semantic control over minute-long video sequences.

- The model employs a progressive three-stage training paradigm, including multi-modal guidance, degradation-aware adaptation, and history-context alignment to enhance temporal consistency.

- Experimental results on LongVGenBench demonstrate that LongVie 2 outperforms previous approaches in visual quality, controllability, and overall temporal fidelity.

LongVie 2 is a multimodal, controllable ultra-long video world model designed to simulate and generate dynamic scenes with extended temporal horizons, leveraging pretrained video generation backbones. Its architecture emphasizes fine-grained semantic control, long-term visual quality, and robust temporal consistency, overcoming core limitations in prior video world modeling approaches (Gao et al., 15 Dec 2025).

1. Architectural Foundations and Objectives

LongVie 2 builds on a latent video diffusion backbone (Wan2.1 I2V 14B) with a DiT transformer core. Video generation proceeds autoregressively: a minute-scale sequence is divided into consecutive clips, each comprising a fixed number of frames (commonly at 16 fps), and the clips are generated one after another. Controllability comes from fusing dense and sparse modalities (depth maps and tracked keypoints) into the generative process, enabling world-level supervision rather than local pixel control.

Essential properties targeted are:

- Controllability: Direct steering of semantic dynamics—camera trajectory, object motion, and style.

- Long-term visual quality: Mitigation of artifact accumulation over minutes-long sequences.

- Temporal consistency: Prevention of flicker, scene jumps, or identity drift across clips.

2. Progressive Three-Stage Training Paradigm

LongVie 2 employs a modular, staged training workflow:

- Multi-Modal Guidance (Stage 1): Augments the transformer with two lightweight control branches, one for dense depth signals and one for sparse keypoint paths. At each of the first 12 blocks, zero-initialized adapters inject the fused control information, so training starts from the unmodified backbone behavior. Two degradations, random scaling (feature-level) and blur/downsampling (data-level), are applied during training to encourage reliance on both branches.

- Degradation-Aware Training (Stage 2): Models the cumulative effect of VAE and diffusion artifacts by applying a stochastic degradation operator to the “first frame” during training, making the model robust to the drift that accumulates at inference time.

- History-Context Guidance (Stage 3): Addresses inter-clip coherence by introducing degraded tail frames from the preceding clip as context and applying three inter-clip boundary alignment losses:
  - History consistency
  - Low-frequency consistency
  - High-frequency alignment

Unified noise initialization and global depth normalization further enhance temporal regularity, particularly across clip boundaries.
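The two training-free strategies can be illustrated with a minimal sketch. It assumes plain min/max statistics for the depth normalization and a single shared Gaussian draw for the noise; the function names (`global_depth_normalize`, `unified_noise`) and the toy data are hypothetical, and the actual method may use different statistics (for example percentiles) and real latent tensors.

```python
import random

def global_depth_normalize(depth_clips):
    """Normalize all clips with one shared min/max instead of per-clip statistics."""
    flat = [d for clip in depth_clips for frame in clip for d in frame]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # guard against a constant depth map
    return [[[(d - lo) / span for d in frame] for frame in clip]
            for clip in depth_clips]

def unified_noise(num_clips, latent_size, seed=0):
    """Draw one Gaussian noise vector and reuse it as the start of every clip."""
    rng = random.Random(seed)
    base = [rng.gauss(0.0, 1.0) for _ in range(latent_size)]
    return [list(base) for _ in range(num_clips)]

# Toy data (assumed): two clips, each with two "frames" of three depth values.
clips = [[[1.0, 2.0, 3.0], [2.0, 3.0, 4.0]],
         [[5.0, 6.0, 7.0], [8.0, 9.0, 11.0]]]
normalized = global_depth_normalize(clips)
noise = unified_noise(num_clips=2, latent_size=4)
```

Because the scale is shared, the same physical depth maps to the same normalized value in every clip, which avoids a jump in the control signal at clip boundaries; per-clip normalization would rescale each clip independently.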

3. Model Inputs, Outputs, and Autoregressive Objectives

Inputs to the system include:

- Initial frame latent

- Dense control latents (derived from depth maps)

- Sparse control latents (keypoint trajectories)

- History latents (for Stage 3)

The principal training objective is the latent diffusion loss, which is consistent with a conceptual autoregression over frames: each clip is generated conditioned on the content that precedes it.
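As a rough illustration only, a latent diffusion loss in the generic noise-prediction form, together with a clip-level autoregressive factorization, could be written as follows. All symbols are assumptions introduced here: $z_t$ is the noised latent at step $t$, $\epsilon_\theta$ the denoiser, $c$ the bundle of conditioning inputs (initial frame, dense and sparse control latents, and history latents), and $x^{k}$ the $k$-th of $K$ clips. The backbone's actual parameterization (for example a flow-matching target) may differ.

$$
\mathcal{L}_{\text{diff}} = \mathbb{E}_{z_0,\; \epsilon \sim \mathcal{N}(0, I),\; t}\left[ \left\lVert \epsilon - \epsilon_\theta(z_t, t, c) \right\rVert_2^2 \right]
$$

$$
p_\theta\!\left(x^{1:K} \mid c^{1:K}\right) = \prod_{k=1}^{K} p_\theta\!\left(x^{k} \mid x^{k-1}, c^{k}\right)
$$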

Model outputs are high-fidelity, minute-scale video clips with seamless temporal transitions.
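The clip-by-clip generation loop can be sketched as below. This is a control-flow illustration under stated assumptions, not the model's sampler: `generate_clip` is a hypothetical stand-in that returns integers as "frames", `history_len` is an invented parameter, and the one-frame overlap between consecutive clips is assumed to mirror the benchmark's clip partitioning.

```python
def generate_clip(first_frame, controls, history):
    """Hypothetical stand-in for the diffusion sampler; a "frame" is an integer."""
    return [first_frame + i for i in range(len(controls))]

def rollout(initial_frame, controls_per_clip, history_len=2):
    """Generate clips one after another, seeding each with the previous clip's end."""
    video, first, history = [], initial_frame, []
    for idx, controls in enumerate(controls_per_clip):
        clip = generate_clip(first, controls, history)
        # Later clips start on the previous clip's last frame, so drop the duplicate.
        video.extend(clip if idx == 0 else clip[1:])
        first = clip[-1]                 # seed frame for the next clip
        history = clip[-history_len:]    # tail frames passed as history context
    return video

# Three clips of five frames each, with one shared frame at each boundary.
video = rollout(0, [[None] * 5 for _ in range(3)])
```

With three five-frame clips and a one-frame overlap, the stitched sequence has 3 × 5 − 2 = 13 frames.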

4. Benchmarking: LongVGenBench

To facilitate robust evaluation, LongVGenBench is introduced—a benchmark comprising 100 high-resolution (1080p, 16 fps, 1 minute) videos spanning diverse environments and activities. Each video is partitioned into 81-frame clips (one-frame overlap). Control signals (caption via Qwen 2.5-VL, depth, points) are extracted per clip; the first frame is treated as ground-truth for seeding.
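The partitioning into 81-frame clips with a one-frame overlap can be sketched as follows. The helper `clip_ranges` is hypothetical, and how trailing frames that do not fill a complete clip are handled is not stated here; this sketch simply drops them.

```python
def clip_ranges(num_frames, clip_len=81, overlap=1):
    """Return half-open (start, end) frame ranges for consecutive overlapping clips."""
    stride = clip_len - overlap  # 80 new frames per clip after the first
    ranges = []
    start = 0
    while start + clip_len <= num_frames:
        ranges.append((start, start + clip_len))
        start += stride
    return ranges

# A 1-minute video at 16 fps has 960 frames.
ranges = clip_ranges(16 * 60)
```

Each clip begins on the last frame of the previous one, so consecutive ranges share exactly one frame index.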

Key evaluation axes include:

| Axis | Metric(s) | Focus |
|---|---|---|
| Visual Quality | A.Q. (Aesthetic), I.Q. (Imaging) | Frame fidelity |
| Controllability | SSIM↑, LPIPS↓ | Signal alignment |
| Temporal Consistency | S.C., B.C., O.C., D.D. | Cross-clip |

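Definitions follow VBench conventions: A.Q. and I.Q. denote aesthetic and imaging quality, and S.C., B.C., O.C., and D.D. denote subject consistency, background consistency, overall consistency, and dynamic degree.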
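For intuition about the SSIM column, the sketch below computes a simplified, single-window SSIM over two flattened grayscale images. It is an assumption-laden illustration: standard SSIM averages the same expression over local sliding windows, the constants use the conventional $K_1 = 0.01$ and $K_2 = 0.03$, and the benchmark's exact configuration is not specified here.

```python
def global_ssim(x, y, data_range=1.0):
    """Single-window SSIM between two equally sized, flattened grayscale images."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    vx = sum((a - mx) * (a - mx) for a in x) / n
    vy = sum((b - my) * (b - my) for b in y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    c1 = (0.01 * data_range) ** 2  # stabilizes the luminance term
    c2 = (0.03 * data_range) ** 2  # stabilizes the contrast/structure term
    return ((2 * mx * my + c1) * (2 * cov + c2)) / (
        (mx * mx + my * my + c1) * (vx + vy + c2))

reference = [0.1, 0.5, 0.9, 0.3]
same_score = global_ssim(reference, reference)
shuffled_score = global_ssim(reference, [0.9, 0.3, 0.1, 0.5])
```

An image compared with itself scores 1, and any structural mismatch lowers the score, which is why higher SSIM indicates closer agreement with the reference video.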

5. Experimental Outcomes and Comparative Analysis

Quantitative results on LongVGenBench show that LongVie 2 surpasses prior models (Wan2.1, VideoComposer, Motion-I2V, Go-With-The-Flow, DiffusionAsShader, Matrix-Game, HunyuanGameCraft) on all axes. The reported controllability scores are SSIM $=0.529$ and LPIPS $=0.295$, and the model also leads on the visual quality metrics (A.Q., I.Q.) and the temporal consistency metrics (S.C., B.C., O.C., D.D.).

The human study reports average perceptual scores for LongVie 2 in all measured dimensions (VQ, PVC, CC, ColC, TC). Ablation studies support the contribution of each stage: the control branches improve SSIM/LPIPS, degradation-aware adaptation improves A.Q./I.Q., and history context substantially increases S.C./B.C./O.C./D.D. The training-free strategies (unified noise initialization and global depth normalization) are also reported as necessary for temporal continuity.

Qualitative analysis shows LongVie 2 producing long videos without flicker, sustaining style and motion fidelity, and accurately propagating physical phenomena.

6. Limitations and Prospective Developments

Generation currently runs below full HD resolution due to practical constraints, and upscaling to full HD is expected to bring further improvements. Expanding the history context or deploying hierarchical memory mechanisms could extend generation beyond five minutes. Incorporating interactive inputs or reinforcement learning-based simulation remains an open avenue.

Key insights include the effectiveness of progressive, modular training (“control first, then long-term”), the impact of simulated inference corruption in bridging train–test mismatch for long-horizon generation, and the superiority of explicit history context over purely autoregressive approaches for temporal alignment.

LongVie 2 is a step toward unified video world models, combining multimodal, semantically controllable generation with sustained fidelity and consistency over minute-scale horizons (Gao et al., 15 Dec 2025).