Qwen3-VL-32B: Vision-Language Transformer

- Qwen3-VL-32B is a vision-language model built on a 32B-parameter transformer with Interleaved-MRoPE and DeepStack fusion for richer multimodal reasoning.

- It introduces innovations like multi-axis rotary position encoding and text-based time alignment to boost long-context and spatial-temporal understanding.

- Pretrained in four stages on diverse data, the model achieves state-of-the-art performance in tasks ranging from image-grounded reasoning to agentic decision-making.

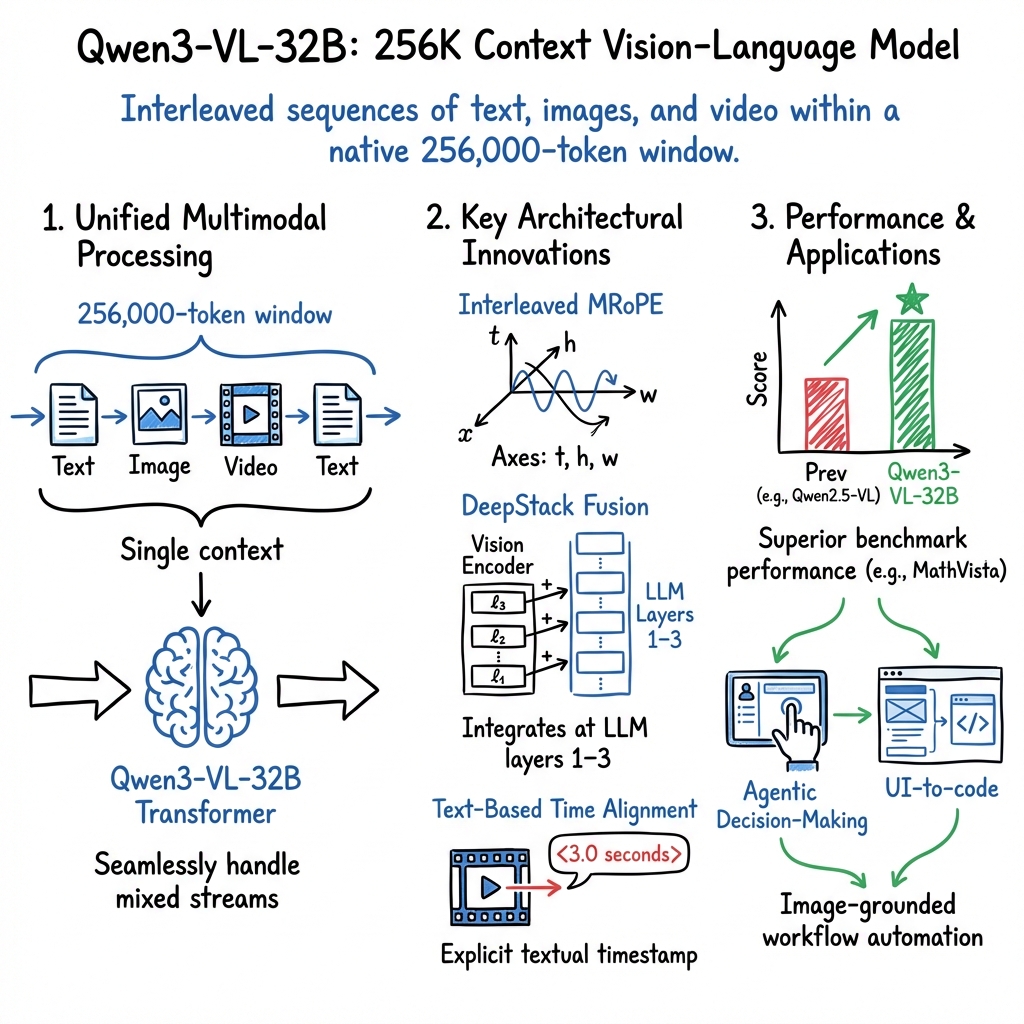

Qwen3-VL-32B is a vision-language model in the Qwen3-VL series: a transformer-based architecture with approximately 32 billion parameters and enhanced multimodal and long-context capabilities. It processes interleaved sequences of text, images, and video within a native 256K-token window, supporting advanced spatial-temporal grounding and multimodal reasoning tasks. The model integrates several architectural innovations, including interleaved multi-axis Rotary Position Encoding (Interleaved-MRoPE), DeepStack multi-level visual-textual fusion, and text-based temporal alignment, which together yield superior benchmark performance in both unimodal and multimodal scenarios (Bai et al., 26 Nov 2025).

1. Architecture and Core Components

Qwen3-VL-32B comprises 64 transformer decoder layers with a hidden dimension of 12,288, 96 attention heads, and a feed-forward size of 49,152. The model pipeline consists of three primary modules: a ViT-based vision encoder (SigLIP-2), a two-layer MLP merger projecting visual patches to the LLM dimension, and the Qwen3-32B transformer decoder modified for multimodal fusion.
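To make the merger concrete, here is a minimal NumPy sketch of a two-layer MLP that turns a grid of ViT patch features into LLM-width visual tokens. The 2×2 spatial merge before the MLP, the GELU activation, the hidden width, and all sizes are illustrative assumptions (the 2×2 merge mirrors earlier Qwen-VL models); the source only specifies a two-layer MLP that projects visual patches to the LLM dimension.

```python
import numpy as np

def merge_2x2(patch_grid: np.ndarray) -> np.ndarray:
    """Concatenate each 2x2 neighbourhood of ViT patch features into one token.

    patch_grid: (H, W, C) features from the vision encoder, with H and W even.
    Returns: (H/2 * W/2, 4*C) merged tokens in row-major order.
    """
    h, w, c = patch_grid.shape
    if h % 2 or w % 2:
        raise ValueError("patch grid height and width must be even")
    x = patch_grid.reshape(h // 2, 2, w // 2, 2, c).transpose(0, 2, 1, 3, 4)
    return x.reshape((h // 2) * (w // 2), 4 * c)

def gelu(x: np.ndarray) -> np.ndarray:
    # tanh approximation of GELU
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))

def mlp_merger(patch_grid, w1, b1, w2, b2):
    """Hypothetical two-layer MLP mapping merged patches to the LLM hidden size."""
    tokens = merge_2x2(patch_grid)
    return gelu(tokens @ w1 + b1) @ w2 + b2

rng = np.random.default_rng(0)
vit_dim, llm_dim = 8, 32  # toy sizes, not the real model widths
grid = rng.standard_normal((16, 16, vit_dim))
w1 = rng.standard_normal((4 * vit_dim, 4 * vit_dim)) * 0.1
b1 = np.zeros(4 * vit_dim)
w2 = rng.standard_normal((4 * vit_dim, llm_dim)) * 0.1
b2 = np.zeros(llm_dim)
visual_tokens = mlp_merger(grid, w1, b1, w2, b2)
print(visual_tokens.shape)  # (64, 32)
```

Under these assumptions a 16×16 patch grid becomes 64 visual tokens, so the merge also shortens the visual part of the sequence by a factor of four before it reaches the decoder.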

Interleaved-MRoPE

Rotary positional encodings (RoPE) are extended across three positional axes: temporal ($t$), horizontal ($w$, width), and vertical ($h$, height). Whereas Qwen2.5-VL assigned each axis a contiguous block of frequencies, Qwen3-VL interleaves the three axes, distributing $t$, $h$, and $w$ frequencies uniformly across the low- and high-frequency bands. The per-axis frequencies are defined in terms of two axis-specific scalars. Interleaving across embedding dimensions mitigates the spectral bias of the chunked layout and empirically improves long-horizon video understanding.
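The difference between chunked and interleaved frequency allocation can be sketched in a few lines of NumPy. The split of 64 frequency pairs into 24/20/20 for t/h/w, the RoPE base of 10,000, and the round-robin order are illustrative assumptions rather than values from the source, and the helper names are hypothetical.

```python
import numpy as np

AXES = "thw"  # index 0 = temporal, 1 = height (vertical), 2 = width (horizontal)

def assign_axes(sections, interleaved=True):
    """For each rotary frequency index, return the axis (0=t, 1=h, 2=w) whose position drives it."""
    if not interleaved:
        # Chunked layout: one contiguous block of frequencies per axis.
        return np.repeat(np.arange(3), sections)
    remaining = list(sections)
    axes = []
    while len(axes) < sum(sections):
        for a in range(3):  # round-robin t, h, w until each axis quota is used up
            if remaining[a] > 0:
                axes.append(a)
                remaining[a] -= 1
    return np.array(axes)

def mrope_angles(pos_thw, sections=(24, 20, 20), base=10000.0, interleaved=True):
    """Rotation angle of every frequency pair for a token at position (t, h, w)."""
    head_dim = 2 * sum(sections)
    inv_freq = base ** (-np.arange(0, head_dim, 2) / head_dim)  # index 0 = highest frequency
    axes = assign_axes(sections, interleaved)
    pos = np.asarray(pos_thw, dtype=np.float64)[axes]  # pick the t, h or w coordinate per index
    return pos * inv_freq

chunked = assign_axes((24, 20, 20), interleaved=False)
inter = assign_axes((24, 20, 20), interleaved=True)
for name, layout in (("chunked", chunked), ("interleaved", inter)):
    # lowest and highest frequency index occupied by each axis
    spans = {AXES[a]: (int(np.flatnonzero(layout == a).min()),
                       int(np.flatnonzero(layout == a).max())) for a in range(3)}
    print(name, spans)
# chunked {'t': (0, 23), 'h': (24, 43), 'w': (44, 63)}
# interleaved {'t': (0, 63), 'h': (1, 58), 'w': (2, 59)}
```

In the chunked layout the width axis only ever sees the lowest frequencies (indices 44 to 63), whereas interleaving gives every axis access to both ends of the spectrum.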

DeepStack Multi-Level Fusion

DeepStack fuses visual features from multiple depths of the vision encoder into the first three layers of the LLM. For each selected ViT layer, an independent MLP merger projects the patch tokens to the LLM hidden dimension, and residual addition integrates them into the hidden states at LLM layers 1–3. This enriches the LLM with both low- and high-level visual semantics without increasing sequence length.
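A minimal sketch of DeepStack-style injection, assuming the projected features from each selected ViT depth are added to the visual-token positions of the hidden state right after each of the first three decoder layers. The function names, toy sizes, and identity stand-in layers are hypothetical; only the residual-addition pattern follows the description above.

```python
import numpy as np

def gelu(x):
    # tanh approximation of GELU
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))

def merger(feats, w1, w2):
    """Two-layer MLP projecting ViT features (n_vis, d_vit) to (n_vis, d_llm)."""
    return gelu(feats @ w1) @ w2

def deepstack_forward(hidden, visual_mask, vit_feats, mergers, layers):
    """Run decoder layers, adding projected multi-level ViT features to the
    visual-token positions after each of the first len(vit_feats) layers.

    hidden: (seq, d_llm); visual_mask: (seq,) bool marking image tokens;
    vit_feats[i]: (n_vis, d_vit) features from the i-th selected ViT depth.
    """
    for i, layer in enumerate(layers):
        hidden = layer(hidden)
        if i < len(vit_feats):  # LLM layers 1-3 correspond to 0-based i = 0, 1, 2
            hidden = hidden.copy()
            hidden[visual_mask] += merger(vit_feats[i], *mergers[i])  # residual add
    return hidden

rng = np.random.default_rng(0)
seq, n_vis, d_vit, d_llm = 10, 4, 16, 32  # toy sizes
hidden0 = rng.standard_normal((seq, d_llm))
mask = np.zeros(seq, dtype=bool)
mask[2:6] = True  # tokens 2..5 are image tokens
feats = [rng.standard_normal((n_vis, d_vit)) for _ in range(3)]
mergers = [(rng.standard_normal((d_vit, d_llm)) * 0.1,
            rng.standard_normal((d_llm, d_llm)) * 0.1) for _ in range(3)]
layers = [lambda h: h] * 4  # identity stand-ins for decoder layers
out = deepstack_forward(hidden0, mask, feats, mergers, layers)
print(out.shape)  # (10, 32): sequence length unchanged
```

Because the features are added in place at existing visual positions rather than appended as new tokens, the sequence length stays at 10 and text positions are untouched.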

Text-Based Time Alignment

Each group of video frames is preceded by an explicit textual timestamp (e.g., "<3.0 seconds>" or "<00:03:00>"), which is tokenized and embedded like ordinary text. Training alternates between timestamp formats to encourage robustness. This textual anchoring supersedes the earlier T-RoPE, which was tied to absolute frame indices, and improves temporal grounding accuracy for video inputs.
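The timestamp scheme amounts to plain string construction around the visual tokens. In this sketch, the HH:MM:SS rendering, the hypothetical `<|video_pad|>` placeholder, and the random per-sample choice of format are assumptions made for illustration; the source states only that formats are alternated during training.

```python
import random

VIDEO_PAD = "<|video_pad|>"  # hypothetical placeholder for one visual token

def format_timestamp(seconds: float, style: str) -> str:
    """Render a frame-group timestamp as plain text in one of two assumed styles."""
    if style == "seconds":
        return f"<{seconds:.1f} seconds>"
    if style == "hms":
        total = int(round(seconds))
        hours, rem = divmod(total, 3600)
        minutes, secs = divmod(rem, 60)
        return f"<{hours:02d}:{minutes:02d}:{secs:02d}>"
    raise ValueError(f"unknown timestamp style: {style}")

def build_video_text(group_times, tokens_per_group, style=None, rng=None):
    """Prefix every frame group with its timestamp; pick a random style if none is given."""
    if style is None:
        style = (rng or random).choice(["seconds", "hms"])  # training-time format mixing
    parts = []
    for t in group_times:
        parts.append(format_timestamp(t, style))
        parts.append(VIDEO_PAD * tokens_per_group)
    return "".join(parts)

print(format_timestamp(183.0, "hms"))  # <00:03:03>
print(build_video_text([0.0, 2.0], tokens_per_group=2, style="seconds"))
# <0.0 seconds><|video_pad|><|video_pad|><2.0 seconds><|video_pad|><|video_pad|>
```

Since the timestamps are ordinary text, the model reads absolute time directly instead of inferring it from position IDs, regardless of the frame sampling rate.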

2. Training Protocol and Data Composition

Qwen3-VL-32B is pretrained in four stages, gradually increasing both model scope and sequence length, culminating in a 256K-token training window:

- S0: Merger only, 8K sequence, 67B tokens

- S1: Full model, 8K sequence, ~1T tokens

- S2: Full model, 32K sequence, ~1T tokens

- S3: Full model, 262K sequence, 100B tokens
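The schedule can be written down as a small config to check totals. This sketch assumes "K" means 1,024 tokens (so 256K = 262,144, matching the S3 entry) and rounds the "~1T" stages to exactly 10^12 tokens; the `Stage` type is hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Stage:
    name: str
    trainable: str  # which parameters are updated, as labelled in the stage list
    seq_len: int    # training sequence length in tokens
    tokens: int     # approximate token budget

# Values transcribed from the stage list; "~1T" stages are rounded to 10**12.
SCHEDULE = [
    Stage("S0", "merger only", 8 * 1024, 67 * 10**9),
    Stage("S1", "full model", 8 * 1024, 10**12),
    Stage("S2", "full model", 32 * 1024, 10**12),
    Stage("S3", "full model", 256 * 1024, 100 * 10**9),
]

total = sum(s.tokens for s in SCHEDULE)
print(f"total about {total / 1e12:.3f}T tokens")  # total about 2.167T tokens
print(SCHEDULE[-1].seq_len)                      # 262144
```

Under these roundings, roughly 2.2T tokens are seen in total, and the final long-context stage accounts for under 5% of them.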

Long-context support is enabled by extending RoPE via YaRN-based or 2D interpolation and by using FlashAttention-2 for both training and inference. At inference time, vLLM’s PagedAttention manages the key/value cache in fixed-size memory blocks.
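FlashAttention-2's key idea is to compute exact attention tile by tile with a running (online) softmax, so the full score matrix is never stored. The NumPy sketch below reproduces only that recurrence for a single head and checks it against naive attention; the real library is a fused GPU kernel, and the block size here is arbitrary.

```python
import numpy as np

def naive_attention(q, k, v):
    """Reference softmax(q k^T / sqrt(d)) v with the full score matrix in memory."""
    s = q @ k.T / np.sqrt(q.shape[-1])
    p = np.exp(s - s.max(axis=-1, keepdims=True))
    return (p / p.sum(axis=-1, keepdims=True)) @ v

def tiled_attention(q, k, v, block=64):
    """Exact attention over key/value tiles using an online softmax."""
    scale = 1.0 / np.sqrt(q.shape[-1])
    n_q = q.shape[0]
    m = np.full(n_q, -np.inf)           # running max of scores per query row
    denom = np.zeros(n_q)               # running softmax denominator
    acc = np.zeros((n_q, v.shape[-1]))  # running unnormalized output
    for start in range(0, k.shape[0], block):
        kb, vb = k[start:start + block], v[start:start + block]
        s = (q @ kb.T) * scale          # scores for this tile only: (n_q, tile)
        m_new = np.maximum(m, s.max(axis=-1))
        correction = np.exp(m - m_new)  # rescales earlier partial sums to the new max
        p = np.exp(s - m_new[:, None])
        denom = denom * correction + p.sum(axis=-1)
        acc = acc * correction[:, None] + p @ vb
        m = m_new
    return acc / denom[:, None]

rng = np.random.default_rng(0)
q = rng.standard_normal((8, 16))
k = rng.standard_normal((200, 16))
v = rng.standard_normal((200, 8))
print(np.allclose(tiled_attention(q, k, v, block=64), naive_attention(q, k, v)))  # True
```

Only an (n_q × block) tile of scores exists at any time, which is why memory grows linearly rather than quadratically with context length while the result stays exact.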

The training corpus is a balanced mixture of modalities: image-caption pairs, interleaved documents, OCR (39 languages, 30M samples), document parsing (HTML→Markdown), VQA, object and point grounding, 3D spatial reasoning, code (including UI-to-code and SVG), dense captioned video, STEM visual reasoning (e.g., diagram captions, K–12 exercises), and agentic trajectories (GUI plans, tool calling, search). All modalities are tightly interleaved within sequences for unified cross-modal attention.

3. Long-Context and Multimodal Processing

Qwen3-VL-32B’s native 256K-token window supports retention and retrieval across hundreds of pages, including cross-referencing within long documents and videos. Interleaved-MRoPE, DeepStack, and text-based timestamps allow the model to handle mixed streams of text, images, diagrams, and video segments within a single context while maintaining alignment and semantic consistency across modalities.

FlashAttention-2 computes exact attention over long contexts without materializing the full attention matrix, keeping memory use linear in sequence length. At deployment, vLLM’s PagedAttention stores key/value pairs in fixed-size, non-contiguous blocks allocated on demand in GPU memory, which reduces fragmentation and keeps per-token decoding latency low even for extended contexts.
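PagedAttention's bookkeeping can be illustrated with a toy cache in which each sequence's keys and values occupy fixed-size blocks drawn from a shared pool and located through a per-sequence block table. This is a simplified sketch, not vLLM's API: the class and method names are hypothetical, and real kernels read the blocks directly instead of gathering them into contiguous arrays.

```python
import numpy as np

class PagedKVCache:
    """Toy paged KV cache: fixed-size physical blocks from a shared pool,
    mapped to each sequence's logical positions by a block table."""

    def __init__(self, num_blocks, block_size, head_dim):
        self.block_size = block_size
        self.k = np.zeros((num_blocks, block_size, head_dim))
        self.v = np.zeros_like(self.k)
        self.free = list(range(num_blocks))  # pool of unused physical blocks
        self.tables = {}                     # seq_id -> list of physical block ids
        self.lengths = {}                    # seq_id -> number of tokens stored

    def append(self, seq_id, k_vec, v_vec):
        table = self.tables.setdefault(seq_id, [])
        n = self.lengths.get(seq_id, 0)
        if n % self.block_size == 0:         # no block yet, or the last one is full
            if not self.free:
                raise MemoryError("KV cache pool exhausted")
            table.append(self.free.pop())    # allocate a new block on demand
        blk, off = table[n // self.block_size], n % self.block_size
        self.k[blk, off] = k_vec
        self.v[blk, off] = v_vec
        self.lengths[seq_id] = n + 1

    def gather(self, seq_id):
        """Return contiguous (n, head_dim) keys and values for one sequence."""
        n, table = self.lengths[seq_id], self.tables[seq_id]
        k = self.k[table].reshape(-1, self.k.shape[-1])[:n]
        v = self.v[table].reshape(-1, self.v.shape[-1])[:n]
        return k, v

    def release(self, seq_id):
        """Return a finished sequence's blocks to the pool."""
        self.free.extend(self.tables.pop(seq_id))
        self.lengths.pop(seq_id)

cache = PagedKVCache(num_blocks=8, block_size=4, head_dim=2)
for i in range(10):
    cache.append("a", np.full(2, i), np.full(2, -i))
for i in range(3):
    cache.append("b", np.full(2, 100 + i), np.full(2, 100 + i))
print(cache.tables["a"], cache.tables["b"], len(cache.free))  # [7, 6, 5] [4] 4
```

Sequence "a" holds 10 tokens in three scattered blocks, wasting at most one partially filled block, and its blocks return to the pool as soon as it finishes.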

4. Benchmark Performance and Throughput

Qwen3-VL-32B achieves state-of-the-art or leading results across multiple benchmark domains:

- Pure-Text Understanding: On MMLU-Pro, MMLU-Redux, and GPQA, Qwen3-VL-32B-Instruct outperforms the text-only Qwen3-32B backbone by several points (e.g., 78.6% vs. 71.9% on MMLU-Pro).

- Long-Context Comprehension: On MMLongBench-Doc (up to 256K tokens), Instruct and Thinking variants achieve 54.6% and 55.4%, respectively, versus 38% for Qwen3-32B (8K).

- Multimodal Reasoning: On STEM visual tasks:

| Benchmark | Qwen3-VL-32B-Thinking | Qwen3-VL-32B-Instruct | Qwen2.5-VL-72B |
|----------------|-----------------------|-----------------------|----------------|
| MMMU | 78.1 | 76.0 | 77.7 |
| MathVista_mini | 85.9 | 83.8 | 79.4 |
| MathVision | 70.2 | 63.4 | 64.3 |

On multi-image (BLINK/MUIR) and video (MVBench/Video-MME) benchmarks, Qwen3-VL-32B-Thinking matches or exceeds Gemini-2.5-Flash under similar frame budgets, scoring 80.3/82.1 on BLINK/MUIR and about 77 on MVBench.

- Throughput and Latency: Under a 128-token latency budget, Qwen3-VL-32B achieves ~1,000 tokens/sec on 4×A100 with FlashAttention-2, compared with ~800 tokens/sec for Gemini-Flash. At the full 256K-token context, PagedAttention yields a 2× throughput advantage over non-paged KV-cache serving.

- Scalability: Per-token latency grows roughly linearly with model size. On a single A100: 2B (~4K t/s), 4B (~2K t/s), 8B (~1K t/s), 32B (~400 t/s); with 4×A100 model parallelism, 32B reaches 1,600 t/s (~625 ms per 1K tokens).
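The latency figures in the list above can be cross-checked with simple arithmetic. This sketch only transcribes the reported single-A100 throughputs and converts tokens per second into milliseconds per 1,000 tokens; the helper name is hypothetical.

```python
def ms_per_k_tokens(tokens_per_sec: float) -> float:
    """Wall-clock milliseconds to generate 1,000 tokens at a given throughput."""
    return 1000.0 / tokens_per_sec * 1000.0

# Reported single-A100 throughputs (tokens/sec), keyed by parameter count in billions.
single_gpu = {2: 4000, 4: 2000, 8: 1000, 32: 400}

for size, tps in single_gpu.items():
    # If time per token grew exactly linearly with size, size * tps would stay constant.
    print(f"{size:>2}B {tps:>5} t/s {ms_per_k_tokens(tps):7.1f} ms/1K size*tps={size * tps}")

print(ms_per_k_tokens(1600))  # 625.0 (4xA100 figure for 32B)
```

At 1,600 tokens/sec, 1,000 tokens take 625 ms. The size-throughput product stays at 8,000 from 2B to 8B but rises to 12,800 at 32B, so the 32B model runs faster than a strictly linear extrapolation (about 250 t/s) would predict.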

5. Application Domains and Real-World Relevance

Qwen3-VL-32B is positioned for a range of applied multimodal reasoning tasks:

- Image-Grounded Reasoning: Achieves SOTA on MMMU, MathVista, and MMBench.

- Agentic Decision-Making: Performs strongly on GUI-based benchmarks (ScreenSpot-Pro 60.5/57.1, AndroidWorld 63.7), supporting multi-step plan generation and execution in interactive environments.

- Multimodal Code Intelligence: Enables end-to-end conversion of UI images to HTML/CSS, chart-to-code generation, and SVG manipulation (Design2Code 93.4, ChartMimic 78.4, UniSVG 65.8).

A plausible implication is that Qwen3-VL-32B’s unified sequence modeling with interleaved modalities and long-context retention can serve as an engine for image-grounded workflow automation, document analysis, and agentic systems requiring joint visual, textual, and temporal reasoning.

6. Comparative Overview and Significance

Qwen3-VL-32B’s dense transformer design, enhanced position encoding, deep visual fusion, and flexible timestamping mark advances over prior Qwen2.5-VL and contemporary models such as Gemini-2.5-Flash on both efficiency and benchmark accuracy under comparable compute budgets. Its architecture supports both throughput-critical (e.g., live agent) and accuracy-critical (e.g., scientific VQA) applications, driven by end-to-end interleaved attention and broad training data diversity (Bai et al., 26 Nov 2025). This architecture establishes a technical reference point for future large-scale, long-context, and richly multimodal models.