Rectified Straight-Through Estimator (ReSTE)

- ReSTE is a surrogate gradient method that interpolates between identity and non-differentiable functions to enable effective training of binary neural networks.

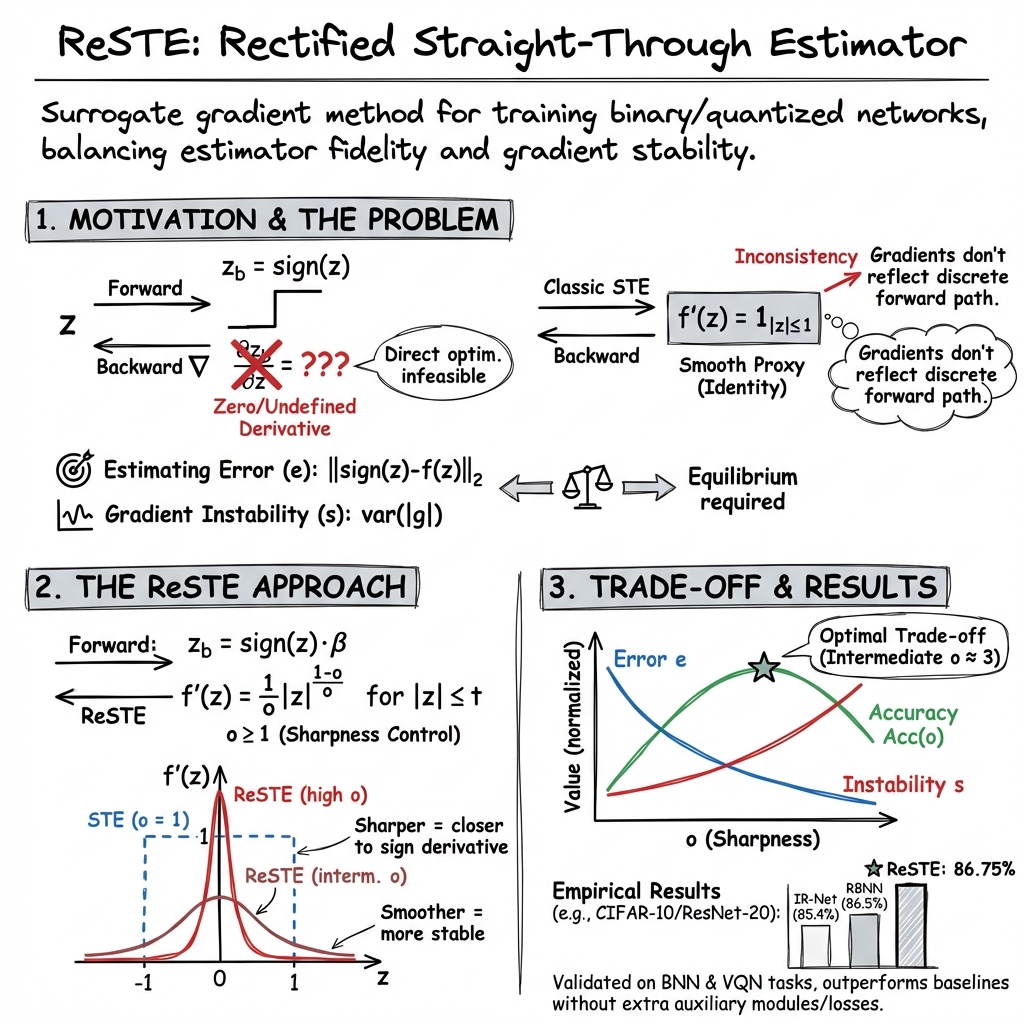

- It introduces an equilibrium perspective by quantifying estimating error and gradient instability, allowing fine-tuning of the estimator-stability trade-off.

- Empirical evaluations on CIFAR-10 and ImageNet show that ReSTE outperforms classic STE methods, achieving superior accuracy without extra auxiliary modules.

The Rectified Straight-Through Estimator (ReSTE) is a class of surrogate gradient methods designed to address fundamental limitations in training neural networks with hard discrete operations, specifically in the context of binary neural networks (BNNs) and vector-quantized models. ReSTE systematically interpolates between the classic identity-based Straight-Through Estimator (STE) and the non-differentiable target function (e.g., sign or quantization), introducing both theoretical and practical mechanisms for balancing gradient fidelity and stability (Wu et al., 2023, Huh et al., 2023).

1. Motivation and Equilibrium Perspective

Neural network binarization compresses models by forcing parameters or activations to discrete (often binary) values. A canonical example is using the sign function in forward computations. However, direct optimization is infeasible due to the sign function’s zero (almost everywhere) and undefined (at zero) derivatives. The classic STE replaces the backward gradient of the non-differentiable operator with that of a smooth proxy, typically the identity, but this introduces a critical estimator inconsistency: gradients do not reflect the true discrete nature of the forward path.

ReSTE introduces a quantitative equilibrium perspective on surrogate gradient design. Two indicators are defined:

- Estimating Error ():

- Gradient Instability (): , the mean absolute gradient

A decrease in estimating error (using a sharper estimator) results in increased gradient instability, risking vanishing/exploding gradients, and vice versa. Effective training requires an equilibrium between these competing factors (Wu et al., 2023).

2. From Classic STE to Power-Function-Based ReSTE

2.1 Standard STE

STE, as implemented in BinaryConnect and DoReFa, uses

- Forward:

- Backward: Replace with (identity within , zero otherwise)

This approach yields highly stable gradients but maximizes proxy error everywhere except close to , decoupling training signals from binarization boundaries.

2.2 Rectified STE: The ReSTE Power Function

ReSTE generalizes the backward pass with a one-parameter power function:

- Hyperparameter: ; controls transition sharpness

- Forward: with (layer-wise scaling)

- Backward:

for , a clipping threshold (e.g., ). Near zero, a finite-difference estimate is used to avoid singularities.

When , (standard STE); as , , recapitulating the hard sign. The shape parameter explicitly controls the trade-off between approximating the sign function (low , high ) and preserving stable gradients (high , low ).

3. Error–Stability Trade-off and Empirical Characterization

Empirical investigations on tasks such as CIFAR-10 with ResNet-20 demonstrate the quantitative effect of varying :

- Low (e.g., 1): Low instability , high error , suboptimal final accuracy.

- Intermediate (): Near-optimal trade-off, best top-1 accuracy observed.

- High (): Exploding , training collapse due to gradient instability.

Reported accuracy curves confirm this: accuracy exhibits a single-peaked structure, maximizing at intermediate . Both and also behave monotonically: decreases, increases with (Wu et al., 2023).

4. ReSTE in Vector-Quantized Architectures

In the context of vector quantization (VQ) layers, the STE is used to backpropagate through non-differentiable quantization:

- Continuous embedding:

- Quantized code: ,

- STE:

Limitations here include gradient sparsity and index collapse due to codebook–embedding misalignment and update asymmetry.

ReSTE for VQNs comprises three mechanisms (Huh et al., 2023):

- Affine Re-Parameterization: Codes allow global moment matching, ensuring that all codes receive updates and encoder-codebook alignment improves.

- Alternating Optimization: EM-style separation of codebook and encoder/decoder updates reduces the STE gradient gap:

(-Lipschitz ).

- Synchronized Commitment Update: Gradients of flow to code vectors each step, avoiding a one-step update lag.

5. Algorithmic Implementation

A typical ReSTE-based BNN layer requires the following steps per iteration:

- Forward: , .

- Backward: For each ,

- If ,

- Else if , finite-difference

- Else

- Gradient synthesis:

Typical hyperparameters:

- Optimizer: SGD, LR = 0.1, cosine decay

- STE-clip , finite-diff threshold

- linearly increased from 1 to over epochs

- Batch size and augmentations as in canonical baselines

For VQNs, alternating optimization and code re-parametrization are incorporated, following an EM-like update schedule, with improved commitment loss (Huh et al., 2023).

6. Empirical Evaluation and Comparative Results

ReSTE has been validated across standard image classification and generative modeling tasks:

BNN (CIFAR-10, ImageNet):

| Backbone | Method | W/A | Auxiliary | Top-1 Acc. | Top-5 Acc. |

|---|---|---|---|---|---|

| ResNet-20 | IR-Net | 1/1 | Module | 85.40% | — |

| ResNet-20 | LCR-BNN | 1/1 | Loss | 86.00% | — |

| ResNet-20 | RBNN | 1/1 | Module | 86.50% | — |

| ResNet-20 | ReSTE | 1/1 | — | 86.75% | — |

| ResNet-18 | FDA | 1/1 | Module | 60.20% | 82.30% |

| ResNet-18 | LCR-BNN | 1/1 | Loss | 59.60% | 81.60% |

| ResNet-18 | ReSTE | 1/1 | — | 60.88% | 82.59% |

VQN (ImageNet-100, Generative/Recon):

- Affine re-param, synchronized updates, and alternating STE improve accuracy (AlexNet to 57.9%, ResNet-18 to 71.0%, ViT to 56.7%), perplexity, and FID over baselines (Huh et al., 2023).

Ablation studies confirm ReSTE’s flexibility and rational design: it outperforms STE (84.44%), DSQ (84.11%), and RBNN (85.87%) with 86.75% accuracy on CIFAR-10/ResNet-20, without requiring extra auxiliary modules or losses.

7. Limitations and Future Directions

Open challenges remain in automating the optimal selection of ReSTE’s shape parameter for diverse tasks, architectures, or data regimes. Extending the equilibrium perspective beyond single-bit quantization to multi-bit or other discrete mappings is an active research direction. Theoretical convergence properties under ReSTE have yet to be fully established (Wu et al., 2023).

A plausible implication is that ReSTE’s explicit mechanism for controlling the estimator–stability trade-off could generalize to other non-differentiable neural operators, facilitating principled surrogate gradient design across a broad spectrum of quantized and discretized architectures.