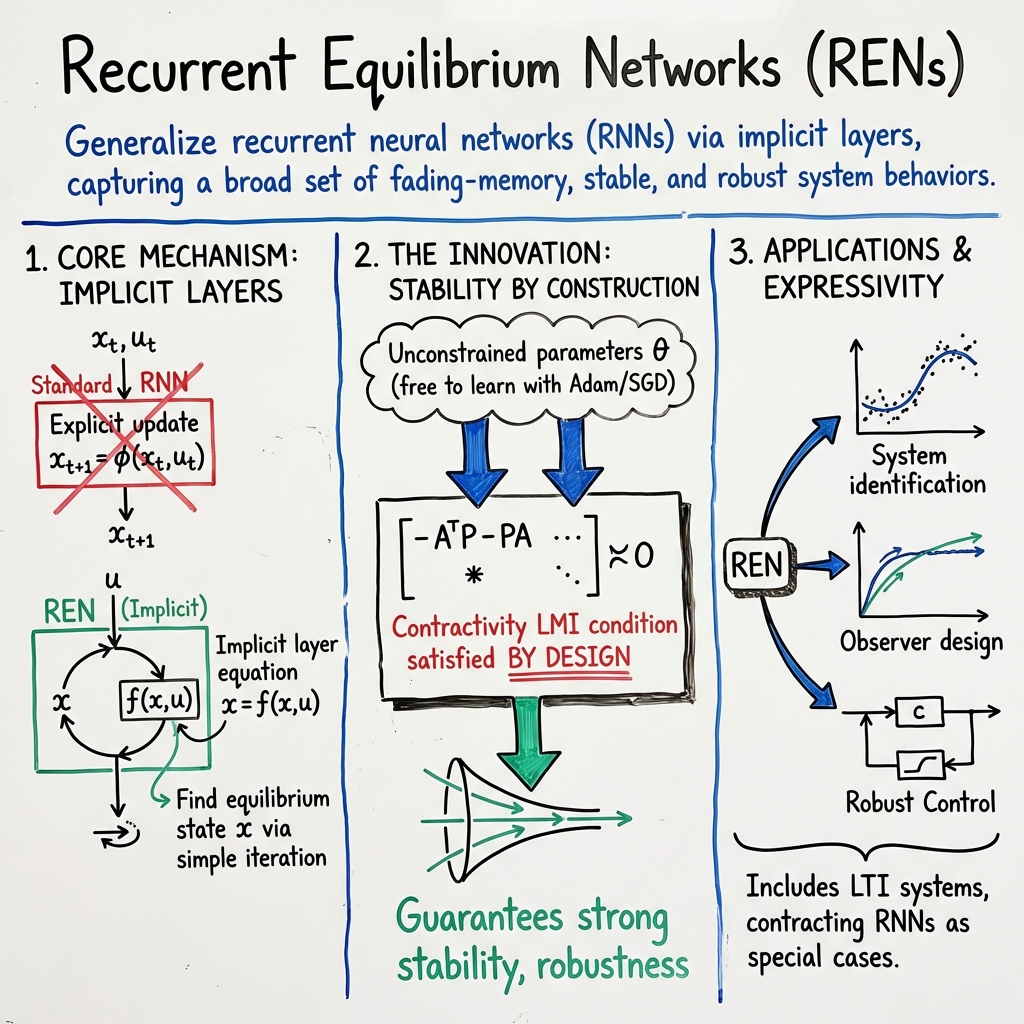

Recurrent Equilibrium Networks (RENs)

- Recurrent Equilibrium Networks are nonlinear dynamical models defined via implicit fixed-point equations that ensure unique equilibria under contractivity.

- They employ an unconstrained parametrization that guarantees stability, dissipativity, and robust performance in tasks like system identification and control.

- RENs generalize standard RNNs with both discrete and continuous-time formulations, preserving formal robustness via LMI-based certificates.

Recurrent Equilibrium Networks (RENs) are a class of nonlinear dynamical models that generalize recurrent neural networks (RNNs) via implicit layers, capturing a broad set of fading-memory, stable, and robust system behaviors. They are defined by fixed-point equations rather than explicit state transitions, and can be parameterized to be contracting and dissipative by construction, a property preserved even when scaling to large parameter spaces. RENs admit unconstrained learning, enabling the use of generic stochastic gradient-descent methods while guaranteeing strong stability, robustness, and incremental integral quadratic constraint (IQC) properties. The REN framework encompasses discrete- and continuous-time models and supports advanced applications in system identification, observer design, control, distributed control, and reduction of dynamics with formal preservation of robustness guarantees.

1. Mathematical Formulation and Core Properties

A discrete-time Recurrent Equilibrium Network is specified by the implicit layer equation $w = f(w, u)$, where $w$ is the equilibrium state, $u$ is the input, and $f$ is typically an affine map in $w$ composed with a nonlinearity, for example

$$f(w, u) = \sigma(D_{11} w + D_{12} u + b).$$

The solution for a given input $u$ is the unique fixed point of $f(\cdot, u)$, provided $f$ is globally Lipschitz in $w$ with constant $L < 1$. In that case, existence and uniqueness follow from the Banach fixed-point theorem, and the equilibrium can be found by the simple iteration $w^{k+1} = f(w^k, u)$. For differentiable $f$, contractivity is equivalently enforced by bounding the Jacobian spectral norm, $\lVert \partial f / \partial w \rVert_2 \le L < 1$ for all $w$.
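The fixed-point iteration described above can be sketched in a few lines. This is a minimal illustration, assuming the layer map has the form $f(w, u) = \tanh(D_{11} w + c)$, where $c$ stands in for the input-dependent term at a fixed input; the function name, sizes, and the rescaling used to obtain a contraction are all illustrative choices rather than part of any REN library.

```python
import numpy as np

def solve_equilibrium(D11, c, tol=1e-10, max_iter=1000):
    """Iterate w <- tanh(D11 @ w + c) until successive iterates agree."""
    w = np.zeros_like(c)
    for k in range(max_iter):
        w_next = np.tanh(D11 @ w + c)
        if np.linalg.norm(w_next - w) < tol:
            return w_next, k + 1
        w = w_next
    raise RuntimeError("fixed-point iteration did not converge")

rng = np.random.default_rng(0)
D11 = rng.standard_normal((5, 5))
# Assumption for this sketch: rescale so the spectral norm is 0.5.
# tanh is 1-Lipschitz, so the map w -> tanh(D11 @ w + c) is a contraction.
D11 *= 0.5 / np.linalg.norm(D11, 2)
c = rng.standard_normal(5)  # stands in for the input-dependent term

w_star, iters = solve_equilibrium(D11, c)
residual = np.linalg.norm(w_star - np.tanh(D11 @ w_star + c))
print(f"converged in {iters} iterations, residual {residual:.2e}")
```

With a Lipschitz constant of 0.5 the error at least halves on every pass, so the number of iterations grows only logarithmically with the requested tolerance.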

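Continuous-time generalizations, termed NodeRENs, embed the REN structure into neural ODEs (bias terms omitted for brevity):

$$\dot{x} = A x + B_1 w + B_2 u, \qquad v = C_1 x + D_{11} w + D_{12} u, \qquad w = \sigma(v), \qquad y = C_2 x + D_{21} w + D_{22} u.$$

Here, $\sigma$ is slope-restricted to $[0, 1]$, and structural constraints (e.g., strictly lower-triangular $D_{11}$) ensure well-posedness and uniqueness of $w$ for given $x$ and $u$.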
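To see why a strictly lower-triangular structure gives well-posedness, the sketch below evaluates the implicit layer by forward substitution: each component of $w$ depends only on components already computed, so no iteration is needed. It assumes the usual REN state-space layout with matrices named `A`, `B1`, `B2`, `C1`, `D11`, `D12`, `C2`, `D21`, `D22` and uses `tanh` as the slope-restricted nonlinearity. The weights are random placeholders (no contraction guarantee is claimed for them), and forward Euler is used only for brevity.

```python
import numpy as np

def solve_w(D11, c):
    """Solve w = tanh(D11 @ w + c) exactly for strictly lower-triangular D11."""
    w = np.zeros_like(c)
    for i in range(len(c)):
        # Row i of D11 only touches w[0..i-1], which are already final.
        w[i] = np.tanh(D11[i, :i] @ w[:i] + c[i])
    return w

def noderen_rhs(x, u, p):
    """Return the state derivative x_dot and the output y."""
    w = solve_w(p["D11"], p["C1"] @ x + p["D12"] @ u)
    x_dot = p["A"] @ x + p["B1"] @ w + p["B2"] @ u
    y = p["C2"] @ x + p["D21"] @ w + p["D22"] @ u
    return x_dot, y

# Hypothetical sizes and random weights, for illustration only.
rng = np.random.default_rng(1)
n, q, m, r = 3, 4, 2, 1  # state, nonlinearity, input, output dimensions
p = {
    "A": -np.eye(n),
    "B1": 0.1 * rng.standard_normal((n, q)),
    "B2": rng.standard_normal((n, m)),
    "C1": rng.standard_normal((q, n)),
    "D11": np.tril(rng.standard_normal((q, q)), k=-1),  # strictly lower-triangular
    "D12": rng.standard_normal((q, m)),
    "C2": rng.standard_normal((r, n)),
    "D21": rng.standard_normal((r, q)),
    "D22": np.zeros((r, m)),
}

x, u, h = np.zeros(n), np.ones(m), 0.01
for _ in range(100):  # forward Euler steps
    x_dot, y = noderen_rhs(x, u, p)
    x = x + h * x_dot
```

An adaptive solver would replace the Euler loop in practice; the point here is only that the right-hand side is an explicit, single-pass computation.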

2. Unconstrained Parametrization, Contractivity, and Dissipativity

The central innovation for large-scale, robust learning in RENs is the unconstrained parametrization of network weights so that contractivity and dissipativity are maintained for all parameter values. In the discrete-time case, the weight matrices are constructed from free parameters via block matrices (e.g., $H = X^\top X + \epsilon I$ partitioned into suitable blocks), followed by closed-form recovery of REN parameters that satisfy a contractivity linear matrix inequality (LMI). For contracting RENs (C-RENs), free parameters such as $X$ are used to synthesize weight matrices that are guaranteed to satisfy this LMI for some certificate matrices $P \succ 0$ and diagonal $\Lambda \succ 0$.

For dissipativity or incremental IQC-enforced RENs (IQC-RENs), free parameters feed through a Cayley-type parameterization, ensuring the satisfaction of the extended LMI corresponding to a supply matrix (e.g., for $\ell_2$-gain, Lipschitz, or passivity properties). The continuous-time NodeREN versions mirror this process by constructing appropriate Lyapunov and supply matrices via the same parameterization logic, guaranteeing that contractivity and dissipativity hold.
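Two generic building blocks behind parameterizations of this kind can be checked numerically. The sketch below is not the REN parameter-recovery map itself; it only illustrates, under illustrative names (`X`, `Y`, `eps`), that (i) a Gram matrix plus a small multiple of the identity is positive definite for any real `X`, and (ii) the Cayley transform of a skew-symmetric matrix is orthogonal for any real `Y`. In both cases the structured object is obtained from a completely free matrix.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 4

# (i) Any real X yields a symmetric positive-definite H.
X = rng.standard_normal((n, n))
eps = 1e-3
H = X.T @ X + eps * np.eye(n)
min_eig_H = np.linalg.eigvalsh(H).min()

# (ii) Cayley transform: any real Y yields a skew-symmetric S,
# and Q = (I + S)^{-1} (I - S) is then orthogonal.
Y = rng.standard_normal((n, n))
S = Y - Y.T
Q = np.linalg.solve(np.eye(n) + S, np.eye(n) - S)
orth_err = np.linalg.norm(Q.T @ Q - np.eye(n))

print(f"min eigenvalue of H: {min_eig_H:.3e}")
print(f"orthogonality error of Q: {orth_err:.3e}")
```

Because neither construction can fail for any value of `X` or `Y`, a gradient step on these free matrices never leaves the feasible set, which is what makes projection-free training possible.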

A summary of key contractivity and IQC LMI structures is provided below:

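| LMI Type | Key Block-Form Condition | Guarantee |
|---|---|---|
| Contractivity | See text (block LMI in the weight matrices with certificates $P \succ 0$ and diagonal $\Lambda$) | Uniqueness, geometric convergence to equilibrium |
| Incremental IQC | See text (extended block LMI that adds the supply matrix to the contractivity condition) | Dissipativity in the sense of the given IQC |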

The unconstrained design allows stochastic gradient-based optimizers such as SGD or Adam to operate directly on the free parameters, without recourse to constrained optimization or projections.

3. Expressiveness, Universal Approximation, and System-Theoretic Perspective

RENs are strictly more general than standard RNNs and represent numerous important model classes:

- All stable linear time-invariant (LTI) systems are included as special cases of the REN architecture.

- Contracting classical RNNs and echo state networks appear as specializations through selected zero structures in the REN matrices.

- Static deep feedforward neural networks are realized as block-triangular cases.

- Wiener/Hammerstein and block-structured models emerge via structured zeroing.
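The "structured zeroing" in the list above can be made concrete with a small sketch. It assumes the standard discrete-time REN layout $x_{t+1} = A x_t + B_1 w_t + B_2 u_t$, $w_t = \sigma(C_1 x_t + D_{11} w_t + D_{12} u_t)$, $y_t = C_2 x_t + D_{21} w_t + D_{22} u_t$ (biases omitted), with a strictly lower-triangular $D_{11}$ and `tanh` as $\sigma$. All names and sizes are illustrative, and the weights are random placeholders. Only the simplest feedforward case (one hidden layer) is shown; deeper networks would use a block-lower-triangular $D_{11}$.

```python
import numpy as np

def ren_step(x, u, p):
    """One discrete-time step of the assumed REN layout: returns (x_next, y)."""
    c = p["C1"] @ x + p["D12"] @ u
    w = np.zeros(len(c))
    for i in range(len(c)):  # valid because D11 is strictly lower-triangular
        w[i] = np.tanh(p["D11"][i, :i] @ w[:i] + c[i])
    x_next = p["A"] @ x + p["B1"] @ w + p["B2"] @ u
    y = p["C2"] @ x + p["D21"] @ w + p["D22"] @ u
    return x_next, y

rng = np.random.default_rng(3)
n, q, m, r = 3, 4, 2, 2  # hypothetical dimensions
full = {
    "A": 0.5 * np.eye(n),
    "B1": rng.standard_normal((n, q)),
    "B2": rng.standard_normal((n, m)),
    "C1": rng.standard_normal((q, n)),
    "D11": np.tril(rng.standard_normal((q, q)), k=-1),
    "D12": rng.standard_normal((q, m)),
    "C2": rng.standard_normal((r, n)),
    "D21": rng.standard_normal((r, q)),
    "D22": rng.standard_normal((r, m)),
}
x, u = rng.standard_normal(n), rng.standard_normal(m)

# LTI special case: zero every path through the nonlinearity.
lti = dict(full, B1=np.zeros((n, q)), D21=np.zeros((r, q)))
x_lti, y_lti = ren_step(x, u, lti)

# Static one-hidden-layer network: no state dependence, no neuron feedback.
ffn = dict(full, C1=np.zeros((q, n)), D11=np.zeros((q, q)),
           C2=np.zeros((r, n)), D22=np.zeros((r, m)))
_, y_ffn = ren_step(x, u, ffn)
```

In the first case the step reduces to `A @ x + B2 @ u` with output `C2 @ x + D22 @ u`; in the second the output is `D21 @ tanh(D12 @ u)`, independent of the state.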

The universal approximation property is established: as the REN's state and nonlinear dimension increase, the model class is dense in fading-memory operators and contracting nonlinear systems with finite incremental $\ell_2$-gain. Special cases (e.g., truncated Volterra series) show that RENs can approximate a broad class of nonlinear dynamical systems while maintaining stability constraints.

4. Training Methodology and Implementation Considerations

Training an REN consists of:

- Simulating the system via the implicit state-update: fixed-point iterations for the inner equilibrium, which are empirically efficient.

- Optimizing a simulation- or trajectory-based loss (e.g., mean-squared error in system identification, or a cost function in control scenarios) with Adam or a similar optimizer, acting on parameterizations that guarantee contractivity/dissipativity by construction.

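- Computing gradients with respect to parameters efficiently via the implicit function theorem. For the inner equilibrium $w^\star = \sigma(D_{11} w^\star + b)$, where $b$ collects all terms that do not depend on $w$:

$$\frac{\partial w^\star}{\partial \theta} = (I - J D_{11})^{-1} J \, \frac{\partial (D_{11} w + b)}{\partial \theta}\bigg|_{w = w^\star},$$

where $J$ is a diagonal matrix of the derivatives $\sigma'(v_i)$ evaluated at $v = D_{11} w^\star + b$, and $\theta$ is any parameter entering $D_{11}$ or $b$.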
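The implicit-function-theorem gradient can be sanity-checked against finite differences. The sketch below assumes an inner equilibrium of the form $w^\star = \tanh(D_{11} w^\star + b)$ with a contractive $D_{11}$, and differentiates with respect to $b$, for which the formula reduces to $(I - J D_{11})^{-1} J$ with $J = \mathrm{diag}(1 - \tanh^2(v^\star))$. Names, sizes, and the step size are illustrative.

```python
import numpy as np

def solve_equilibrium(D11, b, iters=200):
    """Plain fixed-point iteration; 200 passes is ample for a 0.5-contraction."""
    w = np.zeros_like(b)
    for _ in range(iters):
        w = np.tanh(D11 @ w + b)
    return w

rng = np.random.default_rng(4)
q = 4
D11 = rng.standard_normal((q, q))
D11 *= 0.5 / np.linalg.norm(D11, 2)  # assumption: enforce spectral norm 0.5
b = rng.standard_normal(q)

# Analytic Jacobian dw*/db from the implicit function theorem.
w_star = solve_equilibrium(D11, b)
v_star = D11 @ w_star + b
J = np.diag(1.0 - np.tanh(v_star) ** 2)
dw_db = np.linalg.solve(np.eye(q) - J @ D11, J)  # (I - J D11)^{-1} J

# Central finite differences, one column per perturbed entry of b.
h = 1e-6
fd = np.zeros((q, q))
for j in range(q):
    e = np.zeros(q)
    e[j] = h
    fd[:, j] = (solve_equilibrium(D11, b + e) - solve_equilibrium(D11, b - e)) / (2 * h)

max_err = np.abs(dw_db - fd).max()
print(f"max abs difference: {max_err:.2e}")
```

The analytic route needs one linear solve at the converged equilibrium, whereas backpropagating through the iteration would store every intermediate iterate.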

Adaptive ODE solvers, e.g., Dormand-Prince (dopri5), can be employed for NodeREN time integration, with trade-offs in accuracy and function evaluations.

5. Applications: System Identification, Observer Design, and Robust Nonlinear Control

RENs have wide applicability:

- System identification of nonlinear systems: contracting RENs have demonstrated superior performance versus RNNs, LSTMs, and robust RNNs, particularly in sensitivity and certification of Lipschitz bounds. For example, a contracting REN on the F-16 ground vibration dataset achieved NRMSE ≈ 20.1% with observed sensitivity γ̂ ≈ 36.7 under an imposed Lipschitz bound, whereas RNNs/LSTMs failed to meet robustness requirements (Revay et al., 2021).

- Observer design: by learning a contracting nonlinear observer and imposing correctness with respect to the nominal model, RENs guarantee convergence of the estimated to the true state, for instance, in semilinear PDE discretizations (Revay et al., 2021).

- Nonlinear robust and distributed control: RENs are integrated into control frameworks, including the nonlinear Youla parameterization ("Youla-REN"), robust data-driven control, and networked/distributed settings with certifiable $\mathcal{L}_2$-gain on interconnections (Wang et al., 2021, Saccani et al., 2024).

- Distributed RENs can be interconnected according to a communication graph, each with gain-imposed certificates, so that the composite system achieves a global $\mathcal{L}_2$-stability bound, enforced by design and without solver constraints (Saccani et al., 2024).

In direct reinforcement learning (RL) scenarios, projected policy-gradient approaches allow maximization of arbitrary reward functions under enforced closed-loop stability. The projection onto the feasible set specified by the LMI can be posed as a standard semidefinite program (SDP) and solved via, e.g., CVXPY (Junnarkar et al., 2022).

6. Model Order Reduction and Scaling via Contraction Certificate

Large-scale (high-dimensional) RENs present challenges for real-time deployment on resource-constrained platforms. RENs support dimension reduction by projection, uniquely leveraging the pre-learned contraction or robustness certificate. The method constructs two projection matrices: one, built from the certificate matrix, fixes contractivity/robustness preservation by construction.

The other is updated iteratively to minimize the $\mathcal{H}_2$-error in the LTI component using necessary optimality conditions, mirroring IRKA-type approaches (Shakib, 4 Aug 2025). The reduced-order REN satisfies the same LMI-based guarantees as the original. Numerical results confirm that up to 90% state reduction preserves the contraction and IQC properties with minimal accuracy loss.
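The general principle of tying one projection matrix to the certificate can be illustrated on a purely linear system. This is a sketch of the underlying idea, not the cited method: it assumes a discrete-time matrix `A` with a Lyapunov certificate `P` satisfying $A^\top P A - P \prec 0$, picks a random basis `V` (where an IRKA-type scheme would optimize it), and sets the second projection to $W = P V (V^\top P V)^{-1}$. The reduced matrix $A_r = W^\top A V$ then inherits the certificate $P_r = V^\top P V$. All names and sizes are illustrative.

```python
import numpy as np

rng = np.random.default_rng(5)
n, k = 8, 3  # full and reduced state dimensions (hypothetical)

# Build A with a non-trivial certificate: A = T^{-1} A0 T, P = T^T T,
# so that A^T P A - P = T^T (A0^T A0 - I) T is negative definite.
A0 = rng.standard_normal((n, n))
A0 *= 0.9 / np.linalg.norm(A0, 2)
T = np.eye(n) + 0.1 * rng.standard_normal((n, n))
A = np.linalg.solve(T, A0 @ T)
P = T.T @ T

def max_eig_sym(M):
    """Largest eigenvalue of the symmetric part of M."""
    return np.linalg.eigvalsh(0.5 * (M + M.T)).max()

lyap_full = max_eig_sym(A.T @ P @ A - P)

# V: arbitrary orthonormal basis (random here, not optimized).
V, _ = np.linalg.qr(rng.standard_normal((n, k)))
# W is tied to the certificate P so that W^T V = I.
P_r = V.T @ P @ V
W = P @ V @ np.linalg.inv(P_r)

A_r = W.T @ A @ V
lyap_red = max_eig_sym(A_r.T @ P_r @ A_r - P_r)

print(f"full-order Lyapunov margin:    {lyap_full:.3e}")
print(f"reduced-order Lyapunov margin: {lyap_red:.3e}")
```

Both margins are negative regardless of how `V` is chosen, which is why the remaining freedom in `V` can be spent entirely on accuracy.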

7. Empirical Validation, Robustness, and Limitations

RENs and NodeRENs have been validated on standard system identification and control benchmarks:

- System identification of nonlinear pendulum systems, with noisy and irregularly sampled data, demonstrating stability even beyond training horizons where unconstrained models fail (Martinelli et al., 2023).

- Comparative studies against conventional RNNs, LSTMs, and robust baselines, consistently showing superior sensitivity margins and lower cost when explicit robustness constraints are imposed.

- Distributed control applications, where REN networks enable formation control under obstacle avoidance, maintaining network-level stability by construction.

- Scaling analyses showing that the unconstrained parameterization supports efficient and stable training on large models.

A key property is robustness to irregular sampling and model uncertainty: RENs trained on differently sampled datasets achieve tight clusters of test loss, confirming their performance consistency.

The primary computational cost lies in the fixed-point solve at each time step (or, in continuous time, at each function evaluation of the ODE solver). In practice this overhead is modest, especially since the parameterization eliminates the need for costly projections or stability checks during SGD.

RENs thus unify system-theoretic stability principles with expressive nonlinear modeling and scalable, unconstrained learning. Their structural guarantees enable principled deployment in sensitive applications demanding formal robustness, such as safety-critical control, distributed multi-agent systems, and nonlinear observer design.