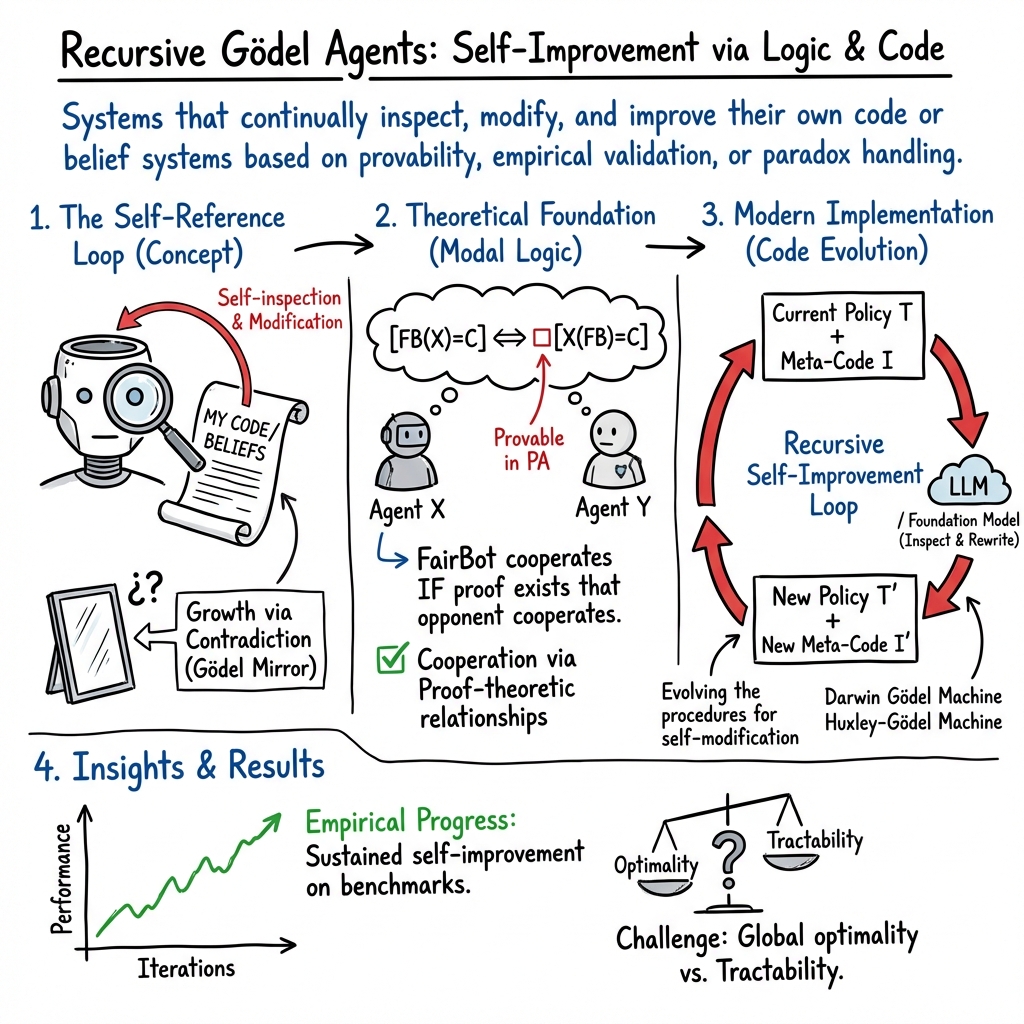

Recursive Gödel Agents

- Recursive Gödel Agents are self-referential, self-modifying systems that leverage recursive proof techniques and formal logic to inspect and update their own code.

- They employ modal provability logic, self-modification frameworks, and contradiction-driven recursion to enable robust cooperation and continuous performance gains.

- Empirical studies show that LLM-driven variants achieve substantial benchmark gains (e.g., on SWE-bench) through iterative self-improvement and meta-learning strategies.

Recursive Gödel Agents are a class of self-referential, self-modifying systems grounded in formal logic, proof theory, and agent architectures inspired by Gödel’s incompleteness theorems and self-reference machinery. Such agents continually inspect, modify, and improve their own code or belief systems on the basis of provability, empirical validation, or the controlled handling of contradiction. They use recursion both in constructing their actions and in evolving the very procedures that produce those actions, yielding a lineage of agents with ever-richer structure or capability. Architectures range from modal provability logic (FairBot, PrudentBot) (Barasz et al., 2014), through tree search and clade metaproductivity (Huxley-Gödel Machine, Darwin Gödel Machine) (Wang et al., 24 Oct 2025, Zhang et al., 29 May 2025), to paraconsistent formal calculi (Gödel Mirror) (Chan, 16 Sep 2025) and incremental theory extension (Recursive Gödel Agent, “bot beliefs”) (Pavlovic et al., 2023). Recent results demonstrate empirical progress toward recursive self-improvement with LLMs and foundation models (Yin et al., 2024, Wang et al., 24 Oct 2025, Zhang et al., 29 May 2025).

1. Formal Characterization of Recursive Gödel Agents

Gödelian agents are built on self-reference: their internal logic or code contains representations of themselves, and their decision functions involve proofs about, or recursive evaluations of, their own and their opponents’ actions. In the original modal agent construction (Barasz et al., 2014), each agent is defined as a formula in Peano Arithmetic (PA) with one free variable. For two agents $X$ and $Y$, the sentence $X(\ulcorner Y \urcorner)$, where $\ulcorner Y \urcorner$ is the Gödel number of $Y$, formalizes “X will cooperate when playing against Y.” Using the provability logic GL (Gödel–Löb modal logic), one can define actions conditional on what is provable about interactions, e.g.,

$$\mathrm{FB}(\ulcorner Y \urcorner) \leftrightarrow \square\, Y(\ulcorner \mathrm{FB} \urcorner)$$

for FairBot ($\mathrm{FB}$), which cooperates if and only if PA can prove that the opponent will cooperate against it.

More broadly, recursive Gödel Agents may be formalized in multiple paradigms:

- Modal provability agents (Barasz et al., 2014): Actions determined by fixed-point formulas in GL and their PA interpretations.

- Self-modifying agent frameworks: The agent is given by a pair (policy, meta-learning code), where both components are recursively inspected and rewritten (Yin et al., 2024).

- Theory-extending bot beliefs: Agents iteratively add new axioms to their internal formal theories, aiming for eventual completeness for targeted sentence classes (e.g., all true sentences) (Pavlovic et al., 2023).

- Contradiction-driven recursion: Agents that metabolize paradoxes into structural growth via deterministic term-rewriting (Gödel Mirror calculus) (Chan, 16 Sep 2025).

2. Provability Logic and Modal Agents

Barasz et al. (2014) provide a detailed construction of “modal agents” using the Gödel–Löb provability logic GL. The framework employs:

- A modal operator $\square$, interpreted as “provable in PA.”

- Fixed-point (diagonal) theorems to enable recursive dependence on provability.

- Agents such as FairBot and PrudentBot, whose actions are logical fixed points over modal formulas.

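A modal agent $X$ of rank $k \ge 0$ is formally characterized by

$$X(\ulcorner Y \urcorner) \leftrightarrow \varphi\big(Y(\ulcorner X \urcorner),\, Y(\ulcorner Z_1 \urcorner), \ldots, Y(\ulcorner Z_N \urcorner),\, Z_1(\ulcorner Y \urcorner), \ldots, Z_N(\ulcorner Y \urcorner)\big),$$

where $Y$ is the opponent, $Z_1, \ldots, Z_N$ are simpler modal agents (of rank less than $k$), and $\varphi$ is a fully modalized propositional formula, i.e., every propositional variable occurs within the scope of $\square$.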
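As a worked instance of the rank definition, the three standard agents can be written in GL notation as follows. DefectBot ($\mathrm{DB}$) and FairBot are rank 0, since their formulas mention only the opponent; PrudentBot ($\mathrm{PB}$) is rank 1 because its formula also consults how the opponent treats DefectBot. These are the definitions from Barasz et al. (2014), rendered in the notation used here:

$$
\begin{aligned}
\mathrm{DB}(\ulcorner Y \urcorner) &\leftrightarrow \bot,\\
\mathrm{FB}(\ulcorner Y \urcorner) &\leftrightarrow \square\, Y(\ulcorner \mathrm{FB} \urcorner),\\
\mathrm{PB}(\ulcorner Y \urcorner) &\leftrightarrow \square\, Y(\ulcorner \mathrm{PB} \urcorner) \wedge \square\big(\neg\square\bot \to \neg Y(\ulcorner \mathrm{DB} \urcorner)\big).
\end{aligned}
$$

The second conjunct of PrudentBot says that PA + Con(PA) proves the opponent defects against DefectBot. That extra requirement is what lets PrudentBot defect against an unconditional cooperator while still cooperating with FairBot and with itself.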

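Cooperative equilibria (program equilibria) emerge in which two agents cooperate on the basis of proof-theoretic relationships rather than equality of their source code. In the mutual FairBot scenario, the fixed point $\mathrm{FB}(\ulcorner \mathrm{FB} \urcorner) \leftrightarrow \square\, \mathrm{FB}(\ulcorner \mathrm{FB} \urcorner)$ supplies $\square p \to p$ for $p = \mathrm{FB}(\ulcorner \mathrm{FB} \urcorner)$, so Löb’s theorem (if $\mathrm{PA} \vdash \square p \to p$, then $\mathrm{PA} \vdash p$) yields

$$\mathrm{PA} \vdash \mathrm{FB}(\ulcorner \mathrm{FB} \urcorner),$$

i.e., two FairBots provably cooperate. The cooperation is also unexploitable: if FairBot cooperates with an opponent $Y$, then PA proves $Y(\ulcorner \mathrm{FB} \urcorner)$, and by the soundness of PA, $Y$ does cooperate back.

The recursive nature derives from each agent’s decision relying on proofs about the opponent’s proofs about itself, yielding nested self-reference grounded ultimately in the axioms of arithmetic.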
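To make the fixed-point behavior concrete, here is a minimal Python sketch that evaluates modal agents on the linear Kripke frame of GL (worlds 0 < 1 < 2 < ..., with □φ true at world n iff φ holds at every world below n). It assumes the standard fact that letterless GL sentences take a fixed truth value at all sufficiently deep worlds and that this limit value matches truth in the standard model when PA is sound. PrudentBot is encoded with its usual definition: cooperate iff PA proves the opponent cooperates with it and PA + Con(PA) proves the opponent defects against DefectBot. The agent names (`CB`, `DB`, `FB`, `PB`), the helpers `act`, `box`, and `outcome`, and the depth of 10 are illustrative choices, not code from Barasz et al.

```python
from functools import lru_cache
from typing import Callable, Dict, Tuple

# An agent decides, at world n of the linear Kripke frame 0 < 1 < 2 < ...,
# whether it cooperates with a named opponent. Self-reference is by name.
AgentFn = Callable[[str, int], bool]
AGENTS: Dict[str, AgentFn] = {}


@lru_cache(maxsize=None)
def act(agent: str, opponent: str, n: int) -> bool:
    """Truth value at world n of 'agent cooperates against opponent'."""
    return AGENTS[agent](opponent, n)


def box(phi: Callable[[int], bool], n: int) -> bool:
    """[]phi holds at world n iff phi holds at every world m < n.
    At world 0 there is no such m, so []phi is vacuously true there."""
    return all(phi(m) for m in range(n))


def cooperate_bot(opp: str, n: int) -> bool:
    return True


def defect_bot(opp: str, n: int) -> bool:
    return False


def fair_bot(opp: str, n: int) -> bool:
    # FB(Y) <-> [] Y(FB)
    return box(lambda m: act(opp, "FB", m), n)


def prudent_bot(opp: str, n: int) -> bool:
    # PB(Y) <-> [] Y(PB)  and  [](not []False -> not Y(DB)).
    # "not []False" (i.e., Con(PA)) is true exactly at worlds m >= 1.
    proves_coop = box(lambda m: act(opp, "PB", m), n)
    proves_punishes_db = box(lambda m: m == 0 or not act(opp, "DB", m), n)
    return proves_coop and proves_punishes_db


AGENTS.update(CB=cooperate_bot, DB=defect_bot, FB=fair_bot, PB=prudent_bot)


def outcome(x: str, y: str, depth: int = 10) -> Tuple[bool, bool]:
    """(x cooperates with y, y cooperates with x), read off at a world deep
    enough for these agents' values to have stabilized."""
    return act(x, y, depth), act(y, x, depth)


print(outcome("FB", "FB"))  # (True, True): mutual cooperation
print(outcome("FB", "DB"))  # (False, False): FairBot is not exploited
print(outcome("PB", "CB"))  # (False, True): PrudentBot exploits CooperateBot
```

Every recursive call goes to a strictly lower world, so the mutual recursion between agents terminates, and memoizing `act` keeps the evaluation polynomial in the depth.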

3. Recursive Self-Improvement: Code and Agent Evolution

Contemporary Gödel-inspired agent architectures operationalize recursion through empirical self-improvement:

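- Gödel Agent framework (Yin et al., 2024): Recursively inspects and updates both its task policy $\pi$ and its own meta-learning (self-modification) code $I$, with each recursive call of the form

  $$\pi_{t+1},\ I_{t+1} = I_t(\pi_t, I_t, r_t, g),$$

  where $r_t$ is the environment’s feedback on $\pi_t$ and $g$ is the high-level goal. Because $I$ is among its own inputs and outputs, the agent can rewrite not just its action logic but also the mechanisms that search for improvements.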

- Darwin Gödel Machine (DGM) (Zhang et al., 29 May 2025): Maintains a branching archive of agents, recursively generates self-modifications using foundation models (FMs), and empirically validates improvements on benchmarks. Because the agent being improved is also the agent that performs the edits, gains in task performance compound with gains in self-editing ability over iterations.

- Huxley-Gödel Machine (HGM) (Wang et al., 24 Oct 2025): Employs “clade metaproductivity” (CMP), which scores an agent by the aggregated successes and failures of its whole clade (the agent and all its descendants in the modification tree) rather than by its own score alone. Thompson sampling on these clade statistics estimates long-run improvement potential and steers which agents are expanded next.
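The clade-based selection rule can be illustrated with a minimal Python sketch. It is a simplification that assumes each node stores its own benchmark successes and failures and that a uniform Beta(1, 1) prior is used; the `Node` class, `walk`, and `select_for_expansion` are hypothetical names, and the actual HGM policy involves further details this sketch omits. The point it shows is that a node is judged by its clade totals, so a weak agent with productive descendants can still be chosen for expansion.

```python
import random
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Node:
    """One agent in the self-modification tree (hypothetical structure)."""
    name: str
    successes: int = 0  # benchmark tasks this agent itself solved
    failures: int = 0   # benchmark tasks this agent itself failed
    children: List["Node"] = field(default_factory=list)

    def clade_counts(self) -> Tuple[int, int]:
        """Successes and failures summed over this node and all descendants."""
        s, f = self.successes, self.failures
        for child in self.children:
            cs, cf = child.clade_counts()
            s, f = s + cs, f + cf
        return s, f


def walk(node: Node) -> List[Node]:
    """All nodes of the tree rooted at `node`, in depth-first order."""
    nodes = [node]
    for child in node.children:
        nodes.extend(walk(child))
    return nodes


def select_for_expansion(root: Node, rng: random.Random) -> Node:
    """Thompson sampling on clade statistics: draw a plausible clade success
    rate from Beta(1 + s, 1 + f) for every node and expand the best draw."""
    best, best_draw = root, -1.0
    for node in walk(root):
        s, f = node.clade_counts()
        draw = rng.betavariate(1 + s, 1 + f)
        if draw > best_draw:
            best, best_draw = node, draw
    return best


# A scores poorly itself but has a productive descendant; B scores better itself.
a1 = Node("A1", successes=18, failures=2)
a = Node("A", successes=2, failures=8, children=[a1])
b = Node("B", successes=6, failures=4)
root = Node("root", successes=1, failures=1, children=[a, b])

print(a.clade_counts())  # (20, 10)
rng = random.Random(0)
picks = [select_for_expansion(root, rng).name for _ in range(1000)]
```

Sampling from the posterior, rather than always taking the highest clade mean, keeps some probability of expanding less-explored clades.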

In all three frameworks, recursion operates not only in solving tasks but, more fundamentally, in evolving the procedures for self-modification, analogous to higher-order meta-learning.
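A toy Python sketch can show what it means for the improvement procedure itself to be rewritten. It instantiates an update of the form $\pi_{t+1}, I_{t+1} = I_t(\pi_t, I_t, r_t, g)$, with policy $\pi$, improver $I$, feedback $r_t$, and goal $g$. Everything concrete here is a hypothetical stand-in: the policy is a single number, `utility` is an invented environment, and the meta-level rewrite is simply halving a step size, whereas in Gödel-style LLM agents both the policy and the improver are code edited by a model.

```python
import random
from typing import Callable, Tuple

# Hypothetical stand-ins: the task policy is one float; an improver maps
# (policy, itself, reward, goal) to a new (policy, improver) pair.
Improver = Callable[..., Tuple[float, Callable]]


def utility(policy: float, goal: float) -> float:
    """Toy environment feedback r_t: 0.0 is optimal, more negative is worse."""
    return -abs(policy - goal)


def make_improver(step: float, rng: random.Random) -> Improver:
    """Build an improver I that proposes policy edits of size at most `step`."""

    def improver(policy: float, self_ref: Improver, reward: float, goal: float):
        candidate = policy + rng.uniform(-step, step)
        if utility(candidate, goal) > reward:
            # Object-level rewrite: adopt the better policy, keep I as it is.
            return candidate, self_ref
        # Meta-level rewrite: I replaces itself with a finer-grained improver.
        return policy, make_improver(step / 2, rng)

    return improver


def run(goal: float, steps: int, seed: int = 0) -> Tuple[float, float]:
    """Iterate pi_{t+1}, I_{t+1} = I_t(pi_t, I_t, r_t, g) from pi_0 = 0."""
    rng = random.Random(seed)
    policy, improver = 0.0, make_improver(4.0, rng)
    for _ in range(steps):
        reward = utility(policy, goal)  # r_t
        policy, improver = improver(policy, improver, reward, goal)
    return policy, utility(policy, goal)


final_policy, final_utility = run(goal=3.0, steps=200)
```

Passing the improver to itself is the design choice that matters: the loop never distinguishes "task code" from "search code", so either can be replaced on any iteration.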

4. Contradiction-Driven Recursion: Gödel Mirror Calculus

The Gödel Mirror system (Chan, 16 Sep 2025) formalizes recursion and self-improvement as productive reactions to contradictions. Terms encode self-reference and paradox:

- On detecting a paradox, the calculus applies a deterministic rewrite sequence:

  - Paradox detection → encapsulate

  - Integrate → reenter

  - Reentry → growth

Agents embedding the Mirror loop turn encountered paradoxes into new inference rules, learning structure from their own contradictions without logical explosion (the derivation of arbitrary conclusions from a contradiction). Recursive agent design then proceeds by updating the agent’s context with rules learned from self-referential loops.
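The following Python sketch is purely illustrative and does not reproduce Chan’s Gödel Mirror calculus, which is formalized in Lean. The term constructors `Paradox`, `Encapsulated`, `Reentered`, and `Grown`, and the functions `step`, `trace`, and `absorb`, are hypothetical names chosen to mirror the three rewrite stages listed above. It shows the two properties the text emphasizes: each rewrite is deterministic, and a detected paradox becomes a new context entry instead of licensing arbitrary conclusions.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Union


@dataclass(frozen=True)
class Atom:
    name: str


@dataclass(frozen=True)
class Paradox:       # a detected self-referential contradiction
    body: "Term"


@dataclass(frozen=True)
class Encapsulated:  # the paradox quarantined as an object of its own
    body: "Term"


@dataclass(frozen=True)
class Reentered:     # the encapsulated term integrated back into the system
    body: "Term"


@dataclass(frozen=True)
class Grown:         # normal form: new structure derived from the paradox
    body: "Term"


Term = Union[Atom, Paradox, Encapsulated, Reentered, Grown]


def step(t: Term) -> Term:
    """One deterministic rewrite; returns t unchanged if it is in normal form."""
    if isinstance(t, Paradox):
        return Encapsulated(t.body)
    if isinstance(t, Encapsulated):
        return Reentered(t.body)
    if isinstance(t, Reentered):
        return Grown(t.body)
    return t


def trace(t: Term) -> List[Term]:
    """Rewrite to normal form, recording every intermediate term."""
    seen = [t]
    while (nxt := step(seen[-1])) != seen[-1]:
        seen.append(nxt)
    return seen


def absorb(context: FrozenSet[Term], t: Term) -> FrozenSet[Term]:
    """Agent-level loop: add the normal form of t to the context as a new
    rule, rather than deriving everything from the contradiction."""
    return context | {trace(t)[-1]}


liar = Paradox(Atom("liar"))
context = absorb(frozenset(), liar)  # frozenset({Grown(body=Atom(name='liar'))})
```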

5. Empirical Recursive Gödel Agents: Benchmarks and Architectures

Practical recursive Gödel agents utilize modern ML/LLM-driven code evolution. Empirical results demonstrate recursive performance gains:

- Gödel Agent (Yin et al., 2024): Outperforms baselines such as chain-of-thought (CoT), Self-Refine, and Meta Agent Search across math and science benchmarks. The agent improves continuously through recursive LLM-guided rewrites of both its task policy and its meta-learning routines.

- Darwin Gödel Machine (Zhang et al., 29 May 2025): Raises performance on SWE-bench from 20.0% to 50.0% and on Polyglot from 14.2% to 30.7% over 80–150 iterations, validating recursion-mediated innovation.

- Huxley-Gödel Machine (Wang et al., 24 Oct 2025): Matches human-level SWE-bench performance by recursively optimizing an estimate of clade metaproductivity (CMP) obtained via clade-based Thompson sampling.

Architecturally, empirical recursive Gödel agents are distinguished by full access to modify every component of their own code, including the routines for agent evolution, which enables open-ended exploration of the agent design space.
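A toy version of the DGM-style open-ended archive loop can be sketched in Python. It is a hedged simplification: the scalar `score` stands in for an agent’s benchmark accuracy, `self_modify` stands in for a foundation-model-proposed code edit, and the parent weighting (score divided by one plus the number of children) is an assumed heuristic in the spirit of favoring strong but under-explored agents, not the DGM’s exact formula. `ArchivedAgent`, `select_parent`, and `run_archive` are hypothetical names.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ArchivedAgent:
    score: float           # stand-in for benchmark accuracy in [0, 1]
    parent: Optional[int]  # archive index of the agent that produced this one
    children: int = 0      # how many times this agent has been expanded


def select_parent(archive: List[ArchivedAgent], rng: random.Random) -> int:
    """Favor high-scoring but rarely expanded agents (assumed heuristic)."""
    weights = [a.score / (1 + a.children) + 1e-6 for a in archive]
    return rng.choices(range(len(archive)), weights=weights, k=1)[0]


def self_modify(score: float, rng: random.Random) -> float:
    """Stand-in for an FM-proposed code edit: a noisy change in score."""
    return min(1.0, max(0.0, score + rng.gauss(0.0, 0.05)))


def run_archive(iterations: int, seed: int = 0) -> List[ArchivedAgent]:
    rng = random.Random(seed)
    archive = [ArchivedAgent(score=0.2, parent=None)]
    for _ in range(iterations):
        i = select_parent(archive, rng)
        archive[i].children += 1
        child_score = self_modify(archive[i].score, rng)
        # Keep every child, even weaker ones, as a potential stepping stone.
        archive.append(ArchivedAgent(score=child_score, parent=i))
    return archive


archive = run_archive(80)
best = max(archive, key=lambda a: a.score)
```

Archiving every variant, rather than keeping only the current best, is what makes the search open-ended: later iterations can branch from stepping stones that scored poorly when first produced.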

| Agent Formalism | Recursion Mechanism | Verification |
|---|---|---|
| Modal Agents (FairBot etc.) | Proof recursion | Program equilibrium properties |
| Gödel Agent (LLM) | Code recursion | Benchmark improvement |
| DGM/HGM (FM/LLM) | Clade recursion | Tree search, benchmark gains |
| Gödel Mirror (Lean) | Paradox recursion | Lean proof, term evolution |
| RGA (bot beliefs) | Theory extension | Logical limit constructions |

6. Theoretical and Practical Limitations

Recursive Gödel agent design inherits both mathematical and computational limits. Modal agent equilibria rely on consistency and unbounded proof search, unattainable with bounded computation (Barasz et al., 2014). Empirical approaches (DGM, HGM) sacrifice formal optimality for tractability, guided by benchmark performance and statistical aggregation (Wang et al., 24 Oct 2025, Zhang et al., 29 May 2025). Paraconsistent recursion (Gödel Mirror) avoids logical explosion but requires external control of resource growth and semantic interpretation (Chan, 16 Sep 2025).

No agent architecture achieves unrestricted provable optimality; all frameworks face fundamental obstacles to dominance ordering, completeness, and convergence. Gödel-style recursion enables robust self-improvement but is limited by computational, empirical, or logical constraints.

7. Synthesis and Outlook

Recursive Gödel Agents exemplify self-referential systems whose improvement mechanism operates on the same logical, architectural, or empirical substrate as their operative behavior. Whether via modal provability, clade-based tree recursion, paradox-driven term rewriting, or incremental theory enrichment, such agents systematically embody the Gödelian paradigm “the agent that rewrites itself must operate using rules that encode and improve its own meta-regulation.” Emerging implementations demonstrate sustained self-improvement and robust cooperative behaviors, but global optimality, computational resource management, and robust convergence remain open challenges for future research (Barasz et al., 2014, Yin et al., 2024, Wang et al., 24 Oct 2025, Zhang et al., 29 May 2025, Chan, 16 Sep 2025, Pavlovic et al., 2023).