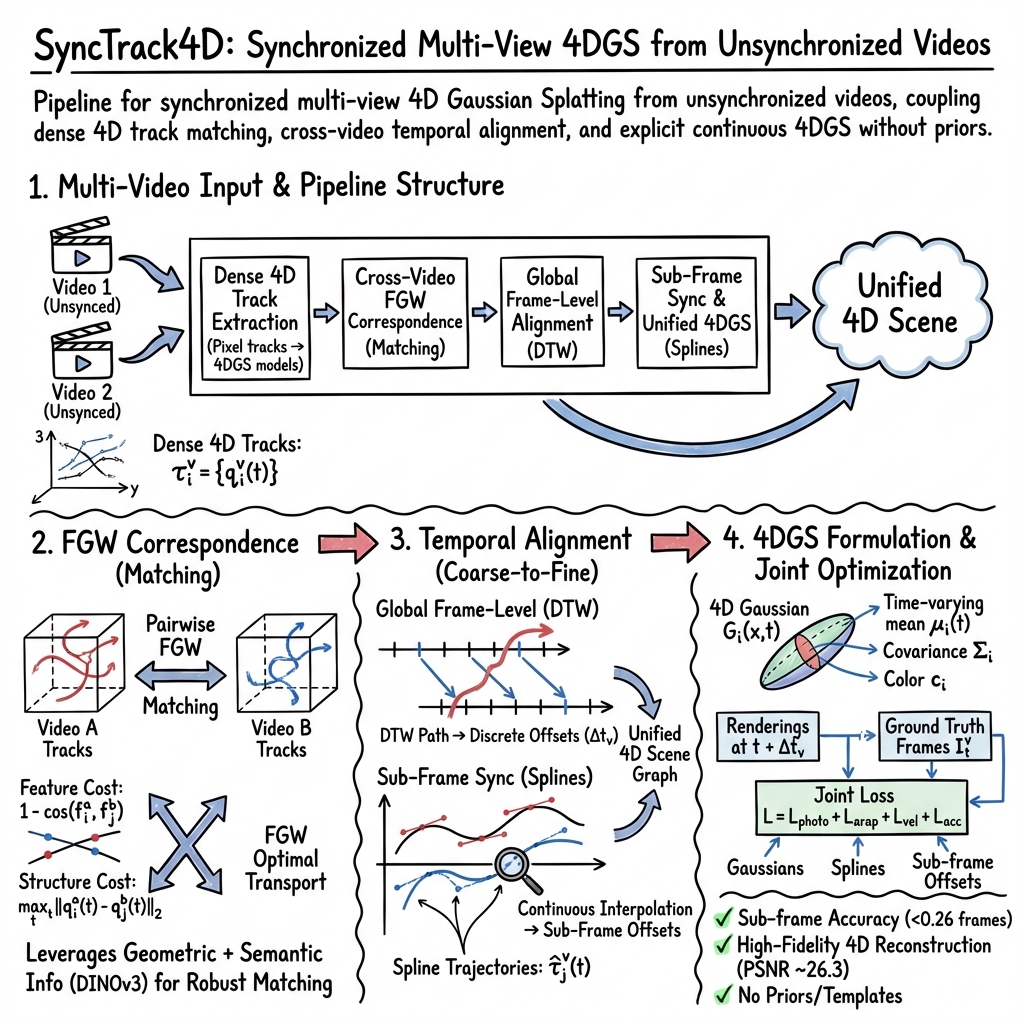

SyncTrack4D: 4D Gaussian Splatting Pipeline

- SyncTrack4D is a pipeline for synchronized multi-view 4D Gaussian Splatting that aligns unsynchronized video sets using dense feature tracking and FGW optimal transport.

- It integrates dense 4D track extraction, dynamic time warping, and continuous spline-based sub-frame synchronization to achieve precise temporal alignment.

- Empirical evaluations demonstrate sub-frame synchronization accuracy (error <0.26 frames) and high-quality renderings, validated on both synthetic and real-world datasets.

SyncTrack4D is a pipeline for synchronized multi-view 4D Gaussian Splatting (4DGS) from unsynchronized monocular or multi-view video sets. It couples dense 4D track matching, cross-video temporal alignment, and explicit continuous 4D Gaussian scene representations, enabling high-fidelity dynamic scene reconstruction and sub-frame video synchronization without requiring object templates, prior models, or hardware triggers. The framework formalizes cross-video motion alignment using fused Gromov-Wasserstein (FGW) optimal transport and continuous-time trajectory parameterization to achieve robust sub-frame alignment and rendering of dynamic, real-world scenes (Lee et al., 3 Dec 2025).

1. Multi-Video Input and Pipeline Structure

SyncTrack4D operates on a set of unsynchronized videos with known camera intrinsics and extrinsics. The pipeline consists of the following core stages:

- Dense 4D Feature Track Extraction: For each video, 2D pixel tracks (e.g., via SpatialTracker) and optical flows are lifted into initial monocular 4DGS models (based on MoSca). Dense per-video 4D tracks are extracted, each with a fixed feature vector (typically a DINOv3 descriptor). Additionally, anchor (scaffold) tracks are identified for motion compression.

- Cross-Video Correspondence via FGW: Dense matching across videos is achieved by casting the problem as FGW optimal transport using both feature similarity and the structure of track geometries.

- Coarse Global Frame-Level Temporal Alignment (DTW): Dynamic Time Warping is applied to the matched tracks to solve for a discrete, global frame offset per video.

- Sub-Frame Synchronization and Unified 4DGS: Continuous-time spline-based parameterization allows accurate fine alignment (sub-frame offsets) and fuses all video tracks into a single synchronized 4DGS scene, optimized via photometric and geometric objectives.

2. Dense 4D Tracks and Fused Gromov-Wasserstein Correspondence

Each per-video 4D track is a sequence of 3D positions over time, paired with a constant DINOv3 descriptor.

Pairwise FGW Matching:

For a pair of videos (reference 0, query 1):

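- Feature Cost: a cross-video cost matrix measuring the dissimilarity between the descriptor of each reference track and the descriptor of each query track.

- Intra-track Structure: a per-video matrix of pairwise relations between that video's tracks, capturing the geometry of the track set.

FGW optimal transport solves for a soft coupling between the two track sets that jointly minimizes the feature cost and the discrepancy between the two structure matrices, with entropic regularization and uniform weights on the tracks of each video. In practice, Sinkhorn-style iterations (e.g., via the POT library) yield the transport plan, from which the top-ranked correspondences are discretized via the Hungarian algorithm.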

This formulation leverages both geometric and semantic information, enforcing both per-track similarity and matching of motion/scene structure.
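For orientation, the entropic FGW problem in its standard textbook form is written out below. The notation is an assumption chosen for illustration and is not taken from the paper: $M$ is the cross-video feature cost, $C^{(0)}$ and $C^{(1)}$ are the per-video structure matrices, $p$ and $q$ are the uniform track weights, $\alpha \in [0, 1]$ trades off features against structure, and $\varepsilon > 0$ is the entropic regularization strength.

$$
T^\star = \arg\min_{T \in \Pi(p, q)} \; (1 - \alpha) \sum_{i,j} M_{ij}\, T_{ij} \;+\; \alpha \sum_{i,j,k,l} \left( C^{(0)}_{ik} - C^{(1)}_{jl} \right)^2 T_{ij}\, T_{kl} \;+\; \varepsilon \sum_{i,j} T_{ij} \log T_{ij},
\qquad
\Pi(p, q) = \left\{ T \ge 0 \;:\; T \mathbf{1} = p,\; T^\top \mathbf{1} = q \right\}
$$

The first term rewards matching tracks with similar descriptors, the second rewards couplings that preserve pairwise track relations across the two videos, and the third is the entropic smoothing that makes Sinkhorn-style iterations applicable.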

3. Temporal Alignment: Frame-Level and Sub-Frame

Global Frame-Level Alignment:

Introduce an integer frame offset per video (zero for the reference). A frame-to-frame geometric cost between the reference and each query video is computed by aggregating distances over the matched track pairs. Dynamic Time Warping then identifies a monotonic mapping between the two frame sequences, from which the optimal integer shift is deduced, usually by selecting the most frequent offset on the DTW path.
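The frame-level step can be illustrated with a minimal, standard-library sketch of DTW followed by the most-frequent-offset rule. It is a toy under stated assumptions, not the paper's implementation: the cost matrix is built from a single 1D signal rather than from distances aggregated over matched 4D tracks, and the names `dtw_path` and `estimate_frame_offset` are hypothetical.

```python
from collections import Counter


def dtw_path(cost):
    """Return the minimum-cost monotonic path through cost[i][j] as (i, j) pairs."""
    n, m = len(cost), len(cost[0])
    inf = float("inf")
    # acc[i][j] is the best cumulative cost of aligning the first i and first j frames.
    acc = [[inf] * (m + 1) for _ in range(n + 1)]
    acc[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            best_prev = min(acc[i - 1][j - 1], acc[i - 1][j], acc[i][j - 1])
            acc[i][j] = cost[i - 1][j - 1] + best_prev
    # Backtrack from the last cell to recover the path.
    path, i, j = [], n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        _, i, j = min(
            (acc[i - 1][j - 1], i - 1, j - 1),
            (acc[i - 1][j], i - 1, j),
            (acc[i][j - 1], i, j - 1),
        )
    return path[::-1]


def estimate_frame_offset(cost):
    """Pick the most frequent (reference index - query index) along the DTW path."""
    offsets = Counter(i - j for i, j in dtw_path(cost))
    return offsets.most_common(1)[0][0]


# Toy data (assumed): the query shows the same 1D motion as the reference,
# but its frame j corresponds to reference frame j + 3.
reference = [float(t * t) for t in range(20)]
query = [float((t + 3) ** 2) for t in range(20)]
cost = [[abs(r - q) for q in query] for r in reference]
offset = estimate_frame_offset(cost)
```

Taking the mode of the index differences, rather than reading the offset from the path endpoints, discards the border segments that DTW is forced to traverse when the two sequences do not overlap fully.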

Sub-Frame Synchronization and Spline-Based Trajectory Modeling:

To refine synchronization at the sub-frame level, each video’s integer temporal offset is extended to a continuous, real-valued offset. Anchor (scaffold) tracks are parameterized as cubic Hermite splines defined by a set of control points. This enables interpolation of trajectories at non-integer timesteps, facilitating gradient-based optimization of the continuous offsets for sub-frame accuracy.
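To make the spline parameterization concrete, the sketch below evaluates a cubic Hermite spline through 3D control points at a real-valued time. Several details are assumptions for illustration: control points sit at integer knots, tangents come from finite differences (Catmull-Rom style), and the helper names `tangent` and `hermite_eval` are hypothetical. The paper's exact knot spacing and tangent definition are not given here.

```python
def tangent(controls, k):
    """Finite-difference tangent at knot k (one-sided at the two ends)."""
    lo, hi = max(k - 1, 0), min(k + 1, len(controls) - 1)
    return tuple((b - a) / (hi - lo) for a, b in zip(controls[lo], controls[hi]))


def hermite_eval(controls, t):
    """Evaluate the spline at real-valued time t, with knots at 0, 1, ..., len - 1."""
    last = len(controls) - 1
    t = min(max(t, 0.0), float(last))  # clamp to the valid time range
    k = min(int(t), last - 1)          # index of the segment containing t
    u = t - k                          # local parameter in [0, 1]
    # Cubic Hermite basis functions.
    h00 = 2 * u**3 - 3 * u**2 + 1
    h10 = u**3 - 2 * u**2 + u
    h01 = -2 * u**3 + 3 * u**2
    h11 = u**3 - u**2
    p0, p1 = controls[k], controls[k + 1]
    m0, m1 = tangent(controls, k), tangent(controls, k + 1)
    return tuple(
        h00 * a + h10 * ma + h01 * b + h11 * mb
        for a, ma, b, mb in zip(p0, m0, p1, m1)
    )


# Toy anchor moving at constant velocity; querying between frames is now possible.
controls = [(0.0, 0.0, 0.0), (1.0, 2.0, 0.0), (2.0, 4.0, 0.0)]
midpoint = hermite_eval(controls, 0.5)
```

Because the basis functions are smooth in `u`, the evaluated position is differentiable with respect to the query time, which is what allows a temporal offset to receive gradients.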

Leaf Gaussian trajectories are modeled as linear blends of nearby spline anchors, yielding a globally consistent, smoothly time-varying 4D scene graph.
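A linear blend of this kind might look as follows. This is only a sketch: the Gaussian distance weighting, the `sigma` parameter, and the function names are assumptions, and blending displacements is a simplification of whatever motion representation the anchors actually carry.

```python
import math


def blend_weights(leaf_rest, anchor_rest, sigma=1.0):
    """Normalized Gaussian weights from rest-pose distances (assumed weighting scheme)."""
    raw = [
        math.exp(-math.dist(leaf_rest, a) ** 2 / (2 * sigma**2))
        for a in anchor_rest
    ]
    total = sum(raw)
    return [r / total for r in raw]


def blend_leaf(anchor_displacements, weights):
    """Leaf displacement at one time as the weighted sum of anchor displacements."""
    return tuple(
        sum(w * a[d] for w, a in zip(weights, anchor_displacements))
        for d in range(3)
    )


# A leaf halfway between two anchors inherits the average of their motion.
weights = blend_weights((0.5, 0.0, 0.0), [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)])
leaf_motion = blend_leaf([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)], weights)
```

Since the leaf motion is a fixed linear function of the anchor motion, only the comparatively few anchor splines need to be optimized over time.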

4. 4D Gaussian Splatting Formulation and Joint Optimization

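SyncTrack4D’s final stage constructs a unified multi-video 4DGS, with each Gaussian defined by:

- a time-varying mean,

- a covariance,

- a color.

Each Gaussian's contribution at a given spatial location and time is the Gaussian kernel with this covariance, centered at the mean evaluated at that time.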
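In the usual Gaussian splatting convention, such a contribution takes the unnormalized form below. The symbols are assumed for illustration ($\boldsymbol{\mu}_j(t)$ for the time-varying mean and $\Sigma_j$ for the covariance of Gaussian $j$), and the expression is the generic kernel rather than a formula quoted from the paper:

$$
G_j(\mathbf{x}, t) = \exp\!\left( -\tfrac{1}{2} \left( \mathbf{x} - \boldsymbol{\mu}_j(t) \right)^{\top} \Sigma_j^{-1} \left( \mathbf{x} - \boldsymbol{\mu}_j(t) \right) \right)
$$

Only the mean depends on time in this form, so moving a Gaussian along its trajectory translates the kernel without reshaping it.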

Videos are rendered by splatting all Gaussians, evaluated at the corresponding frame times, into each camera view and comparing the rendered outputs to the ground-truth frames. The full loss combines four terms:

- Photometric difference between rendered and ground-truth frames,

- As-rigid-as-possible regularization on the spline scaffold,

- Velocity smoothness,

- Acceleration smoothness.
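The two smoothness terms can be sketched as finite-difference penalties on a sampled trajectory. The exact definitions, the weighted-sum combination, and the default weights in `total_loss` are all assumptions made for illustration, not values from the paper.

```python
def velocity_smoothness(traj):
    """Mean squared first difference of a trajectory given as a list of 3D points."""
    terms = [
        sum((b - a) ** 2 for a, b in zip(p, q))
        for p, q in zip(traj, traj[1:])
    ]
    return sum(terms) / len(terms)


def acceleration_smoothness(traj):
    """Mean squared second difference, which is zero for constant-velocity motion."""
    terms = [
        sum((a - 2 * b + c) ** 2 for a, b, c in zip(p, q, r))
        for p, q, r in zip(traj, traj[1:], traj[2:])
    ]
    return sum(terms) / len(terms)


def total_loss(photo, arap, vel, acc, w_arap=1.0, w_vel=0.1, w_acc=0.1):
    """Weighted sum of the four terms; the weights are hypothetical placeholders."""
    return photo + w_arap * arap + w_vel * vel + w_acc * acc


straight = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
bent = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)]
```

On these toy inputs the acceleration term separates the two cases: it vanishes for `straight` and is positive for `bent`, while the velocity term is identical for both.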

Optimization variables include the Gaussian parameters, the spline control points, and the sub-frame offsets, all jointly optimized with Adam or a related optimizer.
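The toy below shows, in isolation, how a continuous offset can be recovered by gradient descent once trajectories can be evaluated at non-integer times. Everything in it is an illustrative assumption: a closed-form `trajectory` stands in for the spline scene, a geometric residual stands in for the photometric loss, and plain gradient descent with a central-difference gradient replaces Adam with automatic differentiation.

```python
import math


def trajectory(t):
    """Stand-in for a continuous-time trajectory; purely illustrative."""
    return math.sin(0.5 * t)


def alignment_loss(delta, frame_times, observations):
    """Mean squared error between the trajectory at shifted times and the observations."""
    residuals = [
        trajectory(t + delta) - obs
        for t, obs in zip(frame_times, observations)
    ]
    return sum(r * r for r in residuals) / len(residuals)


def refine_offset(frame_times, observations, delta=0.0, lr=2.0, steps=100, h=1e-4):
    """Plain gradient descent on the offset, using a central-difference gradient."""
    for _ in range(steps):
        grad = (
            alignment_loss(delta + h, frame_times, observations)
            - alignment_loss(delta - h, frame_times, observations)
        ) / (2 * h)
        delta -= lr * grad
    return delta


# The observed video is shifted by a fraction of a frame relative to the model.
true_offset = 0.37
frame_times = list(range(10))
observations = [trajectory(t + true_offset) for t in frame_times]
estimated = refine_offset(frame_times, observations)
```

Starting from the integer estimate (here zero), the refinement only has to cover a residual of less than one frame, which is why a coarse DTW initialization followed by local gradient steps is a natural pairing.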

5. Empirical Evaluation

SyncTrack4D’s evaluation is conducted on:

- CMU Panoptic Studio: Large-camera array, multi-human activities, providing challenging real-world dynamic scenes.

- SyncNeRF Blender: Synthetic benchmark with 14 views and multiple objects (Box/Fox/Deer).

Test sequences are unsynchronized, with artificial offsets up to 130 frames.

Results:

- Synchronization Accuracy: Post-alignment, average error is less than 0.26 frames on Panoptic Studio (improved from over 5 frames before alignment).

- Novel-View Synthesis:

  - Panoptic Studio: PSNR ≈ 26.3 dB, SSIM ≈ 0.88, LPIPS ≈ 0.14.

  - Outperforms SyncNeRF in both synchronized and unsynchronized conditions.

- Qualitative Observations: Yields temporally coherent 4D reconstructions with smooth cross-view motion; accurately preserves fine temporal details (e.g., fast object/human motions).

| Dataset | Synchronization Error (frames) | PSNR (dB) | SSIM | LPIPS |
|---|---|---|---|---|
| Panoptic Studio | <0.26 | ≈26.3 | ≈0.88 | ≈0.14 |
| SyncNeRF Blender | Not specified | – | – | – |

These results demonstrate sub-frame accuracy without hardware synchronization or predefined templates.

6. Technical Significance and Context

SyncTrack4D is presented as the first general 4D Gaussian Splatting framework tailored to unsynchronized video sets that does not rely on prior object models or explicit scene segmentations. The key innovation is the use of dense 4D feature tracks and FGW optimal transport to drive both correspondence and alignment, enabling robust synchronization and scene consolidation across diverse real-world scenarios. The method’s coarse-to-fine alignment cascade (DTW followed by continuous spline optimization) supports sub-frame precision in both frame association and 4DGS parameter estimation. This configuration yields high-fidelity, temporally coherent reconstructions suitable for dynamic scene rendering and for further research into multi-view temporal alignment (Lee et al., 3 Dec 2025).