Hyperagents: Self-Improving AI That Learns How to Improve Itself

This presentation explores Hyperagents, a breakthrough system that enables AI agents to recursively improve not just their task performance, but the very process by which they self-modify. By implementing fully editable meta-level mechanisms and open-ended search, the Darwin Gödel Machine with Hyperagents (DGM-H) demonstrates compounding, transferable self-improvement across diverse domains including coding, paper review, robotics, and mathematics, without domain-specific customization.

Script

Most AI systems learn to perform tasks better. But what if an AI could learn to become better at improving itself? That recursive leap—metacognitive self-modification—is the foundation of Hyperagents.

Previous approaches hit a wall: they could improve at tasks, but the machinery controlling that improvement was frozen. Hyperagents breaks this constraint by making the entire meta-level editable, so agents evolve not just solutions, but the strategies for finding better solutions.

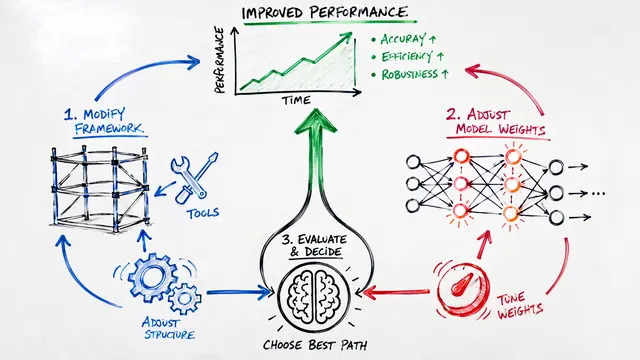

The architecture itself is elegantly recursive.

DGM-H alternates between modification and evaluation, but critically, the modification process itself evolves. Agents don't just stumble on better solutions—they build infrastructure for analyzing failures, tracking progress, and strategically allocating effort. These mechanisms emerge without explicit programming.
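To make the modify-then-evaluate loop concrete, here is a toy sketch in Python. It is not the DGM-H implementation: the names `ToyHyperagent`, `evaluate`, `self_modify`, and `run`, the numeric task, and the uniform parent sampling are all assumptions made for illustration. The only idea it tries to capture is that the parameter controlling how an agent edits itself (`step_size`) is itself open to editing, and that every variant is kept in an archive for later sampling.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyHyperagent:
    solution: float   # task-level state (hypothetical stand-in for task code)
    step_size: float  # meta-level state: how boldly the agent edits itself


def evaluate(agent: ToyHyperagent) -> float:
    # Hypothetical task: the score peaks at 0 when solution == 10.
    return -abs(agent.solution - 10.0)


def self_modify(agent: ToyHyperagent, rng: random.Random) -> ToyHyperagent:
    # The meta-level parameter is edited first, then used to edit the solution,
    # so the modification procedure itself changes from generation to generation.
    new_step = agent.step_size * rng.choice([0.5, 1.0, 2.0])
    new_solution = agent.solution + rng.uniform(-new_step, new_step)
    return ToyHyperagent(new_solution, new_step)


def run(iterations: int = 200, seed: int = 0) -> list[ToyHyperagent]:
    rng = random.Random(seed)
    archive = [ToyHyperagent(solution=0.0, step_size=0.1)]
    for _ in range(iterations):
        parent = rng.choice(archive)      # assumption: uniform sampling from the archive
        child = self_modify(parent, rng)  # modification phase
        archive.append(child)             # every variant is kept as a stepping stone
    return archive


if __name__ == "__main__":
    archive = run()
    best = max(archive, key=evaluate)     # evaluation phase, applied to the whole archive
    print(f"best score: {evaluate(best):.3f}, step_size: {best.step_size:.3f}")
```

In this sketch, freezing `step_size` at its initial value would correspond loosely to a system whose improvement machinery cannot change; letting it vary is the toy analogue of an editable meta-level.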

The most striking evidence comes from transfer experiments. When hyperagents optimized on paper review and robotics are applied to Olympiad-level math grading—a completely novel domain—they generate improved agents 63% of the time over 50 iterations. Systems without metacognitive self-modification transfer nothing. The meta-level skills are genuinely general, not domain-specific tricks.

Across coding, paper review, and robotics reward design, DGM-H shows sustained improvement where ablated systems stagnate. Removing either metacognition or open-ended search causes collapse. Both are necessary. The system even bootstraps faster when initialized from previously improved agents, showing that meta-level gains compound across runs.

Hyperagents demonstrates that AI can learn not just to solve problems, but to recursively refine the process of self-improvement itself. That leap from task optimization to meta-optimization may be the most consequential architectural shift in autonomous systems. Visit EmergentMind.com to explore the paper in depth and create your own research video.