SLATE: Teaching Machines to Imagine Without Words

This presentation explores SLATE, a groundbreaking approach that combines object-centric representation learning with transformer-based generation to achieve compositional image synthesis without text supervision. Unlike DALL-E which relies on text prompts, SLATE learns discrete visual concepts directly from images and can recombine them into novel, globally consistent scenes through zero-shot imagination.Script

Imagine if machines could learn to compose new images the way children play with building blocks, combining familiar objects into scenes they've never seen before. This paper introduces SLATE, a system that learns visual composition directly from images without ever reading a single word of text.

To understand why this matters, we need to examine the fundamental challenge of compositional imagination.

Building on this challenge, researchers wanted to achieve what they call zero-shot imagination - generating novel image combinations without any text supervision. Current object-centric models can identify visual parts called slots, but they struggle to recombine these parts convincingly.

Previous approaches faced two fundamental problems. The slot-decoding dilemma forced a choice between meaningful object representations and image quality. Meanwhile, the pixel independence problem meant that when you combined slots from different images, you got incoherent mashups instead of realistic scenes.

The authors propose SLATE to solve both problems simultaneously.

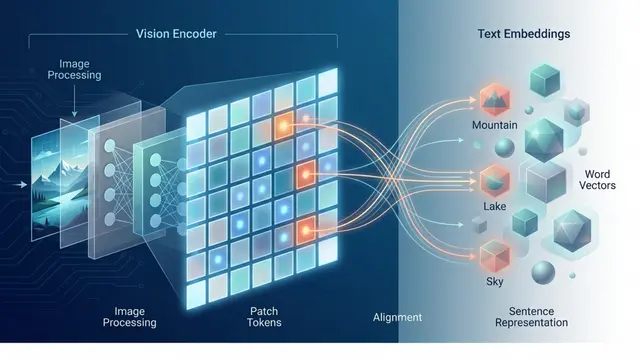

SLATE brilliantly combines the best aspects of existing approaches. Like DALL-E, it uses a transformer decoder for global consistency, but like Slot Attention, it learns composable units directly from images without text supervision. The key insight is using slots as a visual vocabulary that can prompt the transformer to generate coherent compositions.

The architecture follows a clever four-step process. First, images are broken into discrete tokens, then Slot Attention discovers object-like representations from these tokens. The transformer decoder generates new token sequences conditioned on slots, creating global coherence that was missing in previous pixel-mixture approaches.

Let's dive deeper into the technical mechanics that make this possible.

The architecture reveals two parallel reconstruction pathways. The DVAE path handles direct patch reconstruction, while the slot-based path goes through the transformer decoder. This dual approach allows the model to learn both discrete visual tokens and meaningful object representations simultaneously, with the transformer providing the crucial global dependencies.

The training process carefully balances multiple objectives. The joint loss ensures both pathways learn effectively, while Gumbel-Softmax allows gradual transition from soft to discrete representations. Remarkably, this entire process requires only raw images with no labels, text, or bounding boxes.

The concept library transforms learned slots into a reusable visual vocabulary. By clustering similar slots, the system identifies recurring visual concepts that can be mixed and matched. This creates the foundation for true compositional generation where concepts from different images can be seamlessly combined.

Now let's examine how SLATE performs across multiple challenging scenarios.

The evaluation spans an impressive range of scenarios, from geometric shapes to textured objects to human faces. The researchers generated tens of thousands of compositions per dataset, measuring both objective metrics like FID scores and subjective human preferences to ensure comprehensive assessment.

The visual comparison dramatically illustrates SLATE's advantages. While the baseline Slot Attention produces blurry, inconsistent compositions, SLATE generates crisp, globally coherent images where lighting, shadows, and textures adapt naturally to the new object arrangements. The difference in visual quality is immediately apparent.

The quantitative results strongly support SLATE's effectiveness. Beyond just better FID scores, human evaluators consistently prefer SLATE's outputs, indicating that the improvements translate to perceptually meaningful differences. Interestingly, while reconstruction MSE remains comparable, the visual quality improvements suggest SLATE captures more realistic image statistics.

This comparison reveals a crucial advantage in textured scenarios. While Slot Attention merges objects with similar-colored backgrounds, SLATE maintains clean object boundaries. The transformer's global context helps resolve these challenging segmentation cases that trip up pixel-level mixture approaches.

Perhaps most impressively, SLATE demonstrates true zero-shot generalization by generating scenes with object counts never seen during training. The model can create empty scenes, sparse arrangements, or crowded compositions while maintaining realistic details like mirror reflections that adapt to the new object configurations.

The out-of-distribution results showcase SLATE's compositional power. Trained only on single towers, the system can generate two-tower arrangements with dramatically better texture quality and environmental consistency. The accompanying FID scores show substantial improvements, with SLATE achieving 82.38 compared to Slot Attention's 172.82 on this challenging task.

Let's consider what these results mean for the broader field.

The authors position SLATE as achieving several important firsts in the field. Most significantly, it demonstrates that you can achieve DALL-E-like compositional generation without any text supervision whatsoever. This opens up possibilities for learning visual composition from image datasets where text descriptions aren't available.

The researchers acknowledge several areas for future improvement. The concept library could benefit from more principled organization, and the lack of slot-level priors limits efficient sampling strategies. However, they note that current output quality, while impressive, isn't yet realistic enough for deceptive applications.

SLATE represents a significant step toward machines that can imagine and compose visual scenes like humans do, learning the grammar of visual composition directly from observation. Visit EmergentMind.com to explore more cutting-edge research that's reshaping how we think about artificial creativity and visual understanding.