Intelligent AI Delegation: Frameworks, Protocols, and Sociotechnical Considerations

This presentation explores a comprehensive framework for intelligent AI delegation in emerging autonomous agent ecosystems. As AI agents become more complex and autonomous, traditional delegation approaches prove inadequate. The authors propose a robust, adaptive framework that addresses task decomposition, dynamic assignment, real-time monitoring, trust calibration, and verifiable completion while navigating critical sociotechnical challenges including accountability diffusion, security threats, and the preservation of meaningful human control.

Imagine an economy where autonomous AI agents delegate tasks to each other at scale, recursively decomposing work across networks of specialized systems. How do we ensure accountability, security, and meaningful human control when delegation chains stretch beyond direct oversight?

Building on that tension, the authors identify why existing delegation methods break down. As agent autonomy increases and agents begin delegating to other agents, we face principal-agent misalignment at every link in the chain, while accountability becomes diffused across complex networks vulnerable to sophisticated attacks.

The paper proposes a framework built on five core pillars.

These five pillars work together to make the framework both robust and flexible. Dynamic assessment evaluates tasks along axes such as complexity, criticality, and verifiability, while adaptive execution responds continuously to specification changes or agent failures; both are anchored by transparent monitoring and market-based coordination mechanisms.
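To make dynamic assessment concrete, here is a minimal sketch, assuming each axis is scored in [0, 1] and that the assessment's output is a choice of oversight level. The class names, the three oversight levels, and the thresholds are hypothetical illustrations, not the paper's specification.

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    """Hypothetical oversight levels a delegator might choose from."""
    SPOT_CHECK = "spot_check"
    CONTINUOUS = "continuous"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class TaskProfile:
    """Scores in [0, 1] along three of the assessment axes named in the talk."""
    complexity: float
    criticality: float
    verifiability: float  # 1.0 means the outcome is cheap to check automatically

    def __post_init__(self) -> None:
        for name in ("complexity", "criticality", "verifiability"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0, 1], got {value}")


def choose_oversight(task: TaskProfile, critical_threshold: float = 0.8) -> Oversight:
    """Illustrative rule: oversight rises with criticality and falls with verifiability."""
    if task.criticality >= critical_threshold and task.verifiability < 0.5:
        # High stakes and hard to verify: keep a human in the loop.
        return Oversight.HUMAN_REVIEW
    # Risk grows with complexity and criticality and shrinks when results are checkable.
    risk = (task.complexity + task.criticality) / 2 * (1.0 - task.verifiability)
    return Oversight.CONTINUOUS if risk > 0.3 else Oversight.SPOT_CHECK


print(choose_oversight(TaskProfile(0.4, 0.9, 0.2)).name)  # HUMAN_REVIEW
print(choose_oversight(TaskProfile(0.9, 0.3, 0.9)).name)  # SPOT_CHECK
```

The multiplicative risk term is one simple way to express that a highly verifiable task can be delegated with light oversight even when it is complex; a real deployment would calibrate these weights rather than hard-code them.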

The framework operationalizes delegation through two key mechanisms. Task decomposition follows a contract-first approach, recursively breaking work into verifiable sub-tasks so that no ambiguity is passed downstream, while assignment shifts to decentralized markets where agents bid competitively and smart contracts enforce terms that protect both parties.
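Both mechanisms can be sketched in a few lines, assuming a sub-task counts as "verifiable" when it carries an acceptance test and that the market picks the cheapest bid from agents above a reputation floor. The `acceptance_test` strings, the `Bid` fields, and the award rule are hypothetical stand-ins; the paper's actual contract format and auction design may differ, and smart-contract enforcement is not modeled here.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Task:
    name: str
    # Hypothetical machine-checkable acceptance criterion; None means no contract yet.
    acceptance_test: Optional[str] = None
    subtasks: List["Task"] = field(default_factory=list)


@dataclass(frozen=True)
class Bid:
    agent: str
    price: float
    reputation: float  # assumed to lie in [0, 1]


def verifiable_leaves(task: Task) -> List[Task]:
    """Recursively collect leaf sub-tasks, insisting each one carries an acceptance test.

    Contract-first: a leaf without an acceptance test is rejected instead of being
    delegated, so ambiguity is not passed down the chain.
    """
    if not task.subtasks:
        if task.acceptance_test is None:
            raise ValueError(f"leaf {task.name!r} has no verifiable contract; decompose further")
        return [task]
    leaves: List[Task] = []
    for sub in task.subtasks:
        leaves.extend(verifiable_leaves(sub))
    return leaves


def award(bids: List[Bid], min_reputation: float = 0.5) -> Optional[Bid]:
    """Cheapest bid among agents above the reputation floor; ties go to higher reputation."""
    eligible = [b for b in bids if b.reputation >= min_reputation]
    if not eligible:
        return None
    return min(eligible, key=lambda b: (b.price, -b.reputation))


report = Task("quarterly report", subtasks=[
    Task("fetch sales data", acceptance_test="row count > 0"),
    Task("summarize", acceptance_test="<= 200 words"),
])
print([t.name for t in verifiable_leaves(report)])  # ['fetch sales data', 'summarize']
winner = award([Bid("a", 5.0, 0.9), Bid("b", 3.0, 0.4), Bid("c", 4.0, 0.7)])
print(winner.agent)  # "b" is cheapest but below the floor, so "c" wins
```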

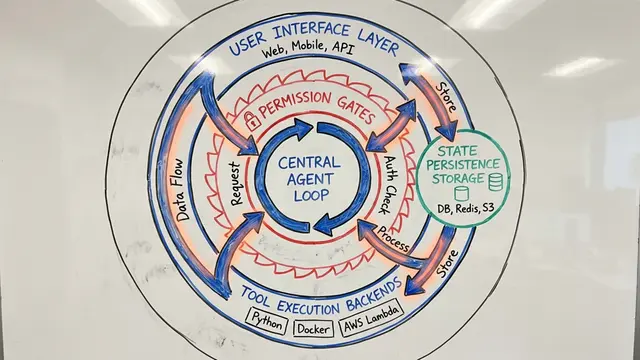

This diagram captures how the framework maintains responsiveness throughout execution. Environmental triggers like specification changes, resource fluctuations, or anomalous delegatee behavior activate a coordination cycle that can reallocate tasks, trigger remediation, or adjust monitoring intensity. The framework carefully balances centralized and decentralized orchestration to avoid bottlenecks while preventing oscillatory dynamics that could cascade into system-wide failures.
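One way to picture the coordination cycle and its guard against oscillation is an event handler with a reallocation cooldown. This is a minimal sketch, assuming three trigger types taken from the diagram description and a discrete step counter; the cooldown length, the anomaly limit, and the "monitor first, reallocate only if the anomaly persists" policy are hypothetical choices, not the paper's protocol.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Trigger(Enum):
    SPEC_CHANGE = auto()
    RESOURCE_DROP = auto()
    ANOMALY = auto()


class Action(Enum):
    RAISE_MONITORING = auto()
    REMEDIATE = auto()
    REALLOCATE = auto()


@dataclass
class Coordinator:
    """Hypothetical coordination loop with a cooldown that damps oscillation."""
    cooldown_steps: int = 3
    anomaly_limit: int = 2
    _last_realloc: int = field(default=-10**9, init=False)
    _anomalies: int = field(default=0, init=False)

    def handle(self, step: int, trigger: Trigger) -> Action:
        if trigger is Trigger.SPEC_CHANGE:
            # The specification changed, so the current assignment may no longer fit.
            return self._reallocate(step)
        if trigger is Trigger.RESOURCE_DROP:
            return Action.REMEDIATE
        # Anomalous delegatee behaviour: tighten monitoring first and
        # reallocate only once the anomaly count reaches the limit.
        self._anomalies += 1
        if self._anomalies >= self.anomaly_limit:
            return self._reallocate(step)
        return Action.RAISE_MONITORING

    def _reallocate(self, step: int) -> Action:
        # Inside the cooldown window, fall back to closer monitoring instead of
        # moving the task again, so it does not bounce between delegatees.
        if step - self._last_realloc < self.cooldown_steps:
            return Action.RAISE_MONITORING
        self._last_realloc = step
        self._anomalies = 0
        return Action.REALLOCATE


c = Coordinator()
print([c.handle(s, t).name for s, t in [
    (0, Trigger.ANOMALY), (1, Trigger.ANOMALY), (2, Trigger.SPEC_CHANGE), (5, Trigger.SPEC_CHANGE),
]])  # ['RAISE_MONITORING', 'REALLOCATE', 'RAISE_MONITORING', 'REALLOCATE']
```

The cooldown is a simple form of hysteresis: a second trigger arriving just after a reallocation is absorbed by heavier monitoring instead of causing another reassignment.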

Extending the verification layer, the framework distinguishes trust as dynamic contextual belief from reputation as immutable performance history. Cryptographic primitives like zero-knowledge proofs allow verification without exposing sensitive data, while game-theoretic mechanisms like Schelling point consensus and dispute panels provide robust completion verification across delegation trees.
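The trust/reputation split and Schelling-style consensus can be illustrated with a small sketch. It assumes reputation is an append-only, hash-chained log of outcomes, trust is recomputed per context as the posterior mean of a Beta(1, 1) prior over that log, and verifiers are rewarded for matching the majority verdict. All names are hypothetical, the Beta model is a swapped-in illustrative choice, and zero-knowledge proofs and dispute panels are not modeled.

```python
import hashlib
import json
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

GENESIS = "0" * 64
FIELDS = ("agent", "context", "success", "prev")


def _digest(body: Dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class ReputationLedger:
    """Append-only outcome history; each entry commits to the hash of the previous one."""
    entries: List[Dict] = field(default_factory=list)

    def record(self, agent: str, context: str, success: bool) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent": agent, "context": context, "success": success, "prev": prev}
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; editing a past entry without rewriting later hashes fails."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in FIELDS}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


def trust(ledger: ReputationLedger, agent: str, context: str) -> float:
    """Context-specific trust: Beta(1, 1) posterior mean over this agent's outcomes here."""
    outcomes = [e["success"] for e in ledger.entries
                if e["agent"] == agent and e["context"] == context]
    return (1 + sum(outcomes)) / (2 + len(outcomes))


def schelling_verdict(votes: List[str]) -> Tuple[str, List[int]]:
    """Majority verdict (needs at least one vote) and the indices of verifiers who matched it."""
    verdict, _ = Counter(votes).most_common(1)[0]
    return verdict, [i for i, v in enumerate(votes) if v == verdict]
```

Because trust is derived from the log rather than stored, the same immutable history can yield different trust values in different contexts, which mirrors the distinction drawn above.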

Security permeates every layer of the architecture. Permission handling follows least-privilege principles with graduated authority that attenuates through delegation chains, enforced by delegation capability tokens and real-time circuit breakers. The defense strategy addresses threats ranging from malicious actors to system-level vulnerabilities through trusted execution environments, cryptographic identity, and proactive mechanisms against collusion and cognitive monocultures.
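Attenuation and circuit breaking can be sketched as follows, assuming a capability is a set of permission strings plus a remaining delegation depth. The `Capability` and `CircuitBreaker` names and fields are hypothetical; real capability tokens would also be cryptographically signed so a delegatee cannot forge a broader token, which this sketch omits.

```python
from dataclasses import dataclass
from typing import FrozenSet, Set


@dataclass(frozen=True)
class Capability:
    """Hypothetical delegation capability: a permission set plus remaining depth."""
    holder: str
    permissions: FrozenSet[str]
    max_depth: int

    def delegate(self, to: str, requested: Set[str]) -> "Capability":
        # Attenuation: a delegatee never receives more than the delegator holds,
        # and every hop consumes one unit of the remaining delegation depth.
        if self.max_depth <= 0:
            raise PermissionError(f"{self.holder} may not delegate further")
        if not requested <= self.permissions:
            extra = sorted(requested - self.permissions)
            raise PermissionError(f"escalation attempt: {extra}")
        return Capability(to, frozenset(requested), self.max_depth - 1)


class CircuitBreaker:
    """Opens after `threshold` consecutive failures and stays open until reset()."""

    def __init__(self, threshold: int = 3) -> None:
        self.threshold = threshold
        self.failures = 0

    def allow(self) -> bool:
        return self.failures < self.threshold

    def record(self, ok: bool) -> None:
        if not self.allow():
            return  # already tripped; only an explicit reset() re-enables the delegatee
        self.failures = 0 if ok else self.failures + 1

    def reset(self) -> None:
        self.failures = 0


root = Capability("user", frozenset({"read", "write", "pay"}), max_depth=2)
agent_a = root.delegate("agent_a", {"read", "write"})
agent_b = agent_a.delegate("agent_b", {"read"})
print(sorted(agent_b.permissions), agent_b.max_depth)  # ['read'] 0
```

Each hop can only narrow the permission set, so authority shrinks monotonically along the chain, which is the property the talk calls graduated authority.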

Perhaps most critically, the authors address the human dimension. As delegation automates more cognitive work, the framework embeds mechanisms to preserve meaningful oversight—cognitive friction that sustains engagement, explicit transfer points where authority shifts, and curriculum-aware routing that prevents de-skilling by maintaining human capability through strategic task exposure.
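Curriculum-aware routing and explicit transfer points might look like the sketch below, assuming the router counts how many tasks of each skill the agent has handled since the human last practised it. The `practice_gap` and `critical_threshold` parameters and the three route labels are hypothetical; the paper does not prescribe this rule, and cognitive friction is not modeled.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class CurriculumRouter:
    """Hypothetical router that keeps a human in practice on each skill."""
    practice_gap: int = 5           # agent-handled tasks per skill before a human turn
    critical_threshold: float = 0.8
    _since_human: Dict[str, int] = field(default_factory=lambda: defaultdict(int), init=False)

    def route(self, skill: str, criticality: float) -> str:
        if criticality >= self.critical_threshold:
            # Explicit transfer point: the agent executes, but a human must sign off.
            return "agent_with_human_signoff"
        if self._since_human[skill] >= self.practice_gap:
            # Strategic exposure: a low-stakes instance goes to the human to
            # counter de-skilling, and the counter starts over.
            self._since_human[skill] = 0
            return "human"
        self._since_human[skill] += 1
        return "agent"


router = CurriculumRouter(practice_gap=2)
print([router.route("triage", 0.2) for _ in range(4)])  # ['agent', 'agent', 'human', 'agent']
print(router.route("triage", 0.9))  # agent_with_human_signoff
```

Only low-criticality tasks are used for practice here, so skill maintenance never puts a high-stakes outcome at risk; high-stakes work instead passes through a human sign-off.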

This framework offers a scalable path toward autonomous agent economies where safety, accountability, and efficiency coexist through cryptoeconomic incentives and protocol-level verifiability. To explore the full technical specification and protocol mappings, visit EmergentMind.com.