Memory Mechanisms in LLM Agents

This presentation explores how large language model agents store, retrieve, and use information beyond their fixed context windows. We examine the architectural principles behind agent memory—from simple history buffers to sophisticated multi-agent systems—and reveal how these mechanisms enable persistence, reasoning, and adaptation across extended interactions. You'll discover the taxonomy of memory types, the engineering behind retrieval and consolidation, and the open challenges shaping the next generation of context-aware agents.Script

A language model agent answering your tenth question in a conversation must somehow remember the first nine. That memory—how it's stored, retrieved, and updated—determines whether the agent truly understands context or merely pretends to.

Agent memory spans a spectrum from transient context buffers to permanent knowledge stores. Short-term memory manages immediate decision context within a session, while long-term memory persists across tasks and conversations. The most sophisticated systems, like MIRIX, partition memory into specialized modules—core facts, episodic experiences, semantic knowledge, procedural skills—each optimized for its role.

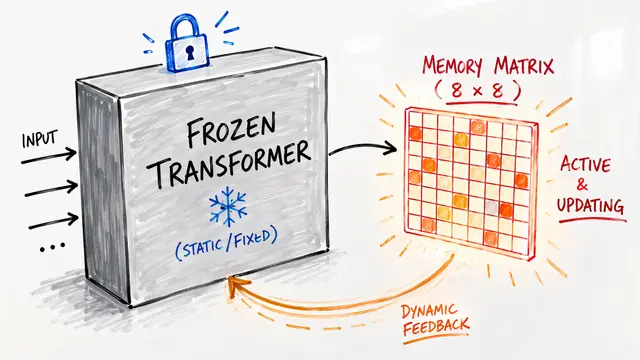

But static storage is not enough; memory must evolve as agents learn.

Traditional memory simply appends history and retrieves by similarity. Agentic systems like A-MEM build dynamic knowledge graphs where each memory note links to related concepts, and those links evolve as new experiences arrive. Retrieval weights—balancing recency, relevance, and importance—are learned rather than hardcoded, letting agents adapt their recall strategies to the task at hand.

Effective retrieval demands more than keyword matching. Agents employ iterative refinement, where each query is progressively sharpened by prior results. Consolidation mimics human memory: frequently recalled facts strengthen, while unused details fade according to probabilistic decay functions. Selective pruning deletes stale or misleading traces, preventing the compounding errors that plague naive add-everything strategies.

When multiple agents or users collaborate, memory becomes a shared resource with competing privacy needs. Collaborative architectures partition memory into private fragments—visible only to specific users—and shared pools that propagate knowledge across the system. Dynamic access graphs track who can read or update each fragment, and immutable provenance logs ensure every memory's origin is auditable. This enables secure, scalable multi-agent reasoning without sacrificing transparency.

Memory transforms language models from stateless responders into agents that learn, adapt, and remember. The architectures we've explored—agentic graphs, adaptive retrieval, collaborative storage—are rewriting what's possible when context persists. Visit EmergentMind.com to explore the research behind these systems and create your own video presentations.