Mining Generalizable Activation Functions

This presentation explores how evolutionary search methods can automatically discover neural network activation functions that improve out-of-distribution generalization. Using the AlphaEvolve framework, researchers evolved activation functions through iterative testing on synthetic datasets, prioritizing performance on unseen data distributions. The discovered functions, including novel combinations with periodic components like GELUSine and GELU-Sinc-Perturbation, demonstrate robust generalization across diverse tasks from CIFAR-10 to molecular property prediction, opening new paths for designing neural networks that adapt better to real-world distribution shifts.

Script

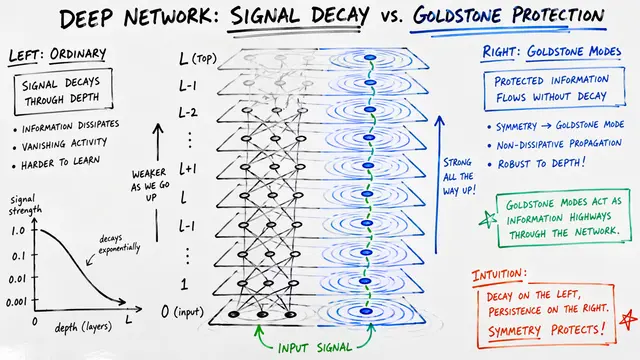

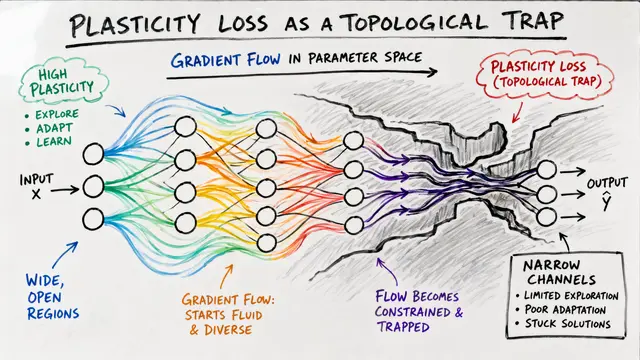

Neural networks often fail when they encounter data that looks different from their training set. One culprit hiding in plain sight is the activation function, the mathematical gate that decides what information flows forward and what gets blocked.

Most activation functions today are hand-crafted by researchers or discovered through narrow automated searches. This paper asks whether we can systematically mine the space of possible functions to find those that naturally generalize beyond their training distribution.

The researchers turned to evolutionary search, letting functions compete for survival based on a single criterion: how well they handle the unexpected.

AlphaEvolve initializes with familiar activation functions, then uses language models to generate new candidates expressed as Python code. Each candidate trains a small neural network on synthetic datasets designed to mimic distribution shifts, and only those that maintain performance on unseen distributions survive to the next generation.
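To make that loop concrete, here is a minimal sketch of the select-on-unseen-data idea, under heavy simplifying assumptions. It is not AlphaEvolve: a Gaussian perturbation of two coefficients stands in for the language model that writes new candidates, and a one-weight least-squares fit stands in for training a small network. The candidate family (GELU plus a sine term), the `target` function, and the train and shifted input ranges are all hypothetical choices made for illustration.

```python
import math
import random


def gelu(x):
    # Exact GELU: x times the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def activation(params, x):
    # Hypothetical candidate family: GELU plus a sine term
    # with amplitude a and frequency b.
    a, b = params
    return gelu(x) + a * math.sin(b * x)


def target(x):
    # Toy ground truth (an assumption, not a dataset from the source).
    return x + 0.5 * math.sin(3.0 * x)


TRAIN_XS = [i / 20.0 - 1.0 for i in range(41)]  # inputs in [-1, 1]
SHIFT_XS = [1.0 + i / 20.0 for i in range(41)]  # shifted inputs in [1, 3]


def ood_fitness(params):
    # Stand-in for "train a small network": fit one output weight w
    # by least squares on the training range only.
    feats = [activation(params, x) for x in TRAIN_XS]
    num = sum(f * target(x) for f, x in zip(feats, TRAIN_XS))
    den = sum(f * f for f in feats) or 1e-12
    w = num / den
    # Score the fitted model on the shifted range; higher is better.
    errors = [(w * activation(params, x) - target(x)) ** 2 for x in SHIFT_XS]
    return -sum(errors) / len(errors)


def evolve(generations=20, population_size=8, children_per_gen=16, seed=0):
    rng = random.Random(seed)
    population = [(0.0, 1.0)]  # start from a familiar function: plain GELU
    for _ in range(generations):
        children = []
        for _ in range(children_per_gen):
            a, b = rng.choice(population)
            # Random mutation stands in for LLM-generated code edits.
            children.append((a + rng.gauss(0, 0.2), b + rng.gauss(0, 0.2)))
        # Parents compete with children, so the best score never gets worse.
        ranked = sorted(set(population + children), key=ood_fitness, reverse=True)
        population = ranked[:population_size]
    return population[0]
```

Calling `evolve()` returns the `(a, b)` pair with the lowest error on the shifted range. The point of the sketch is the selection rule: candidates are fitted on one input range and ranked only by how they do on another.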

The evolutionary process revealed something unexpected. Functions that generalize well often combine smooth, differentiable behavior with periodic elements. GELUSine and GELU-Sinc-Perturbation emerged as top performers, blending the stability of GELU with oscillatory components that seem to encode useful inductive biases.
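This script does not give formulas for these functions, so the sketch below only illustrates the general shape being described: a GELU base with a small periodic term added or multiplied in. The function names, the specific forms, and the default coefficients `amplitude=0.1` and `strength=0.5` are assumptions for illustration, not definitions taken from the paper.

```python
import math


def gelu(x: float) -> float:
    # Exact GELU: x times the standard normal CDF.
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def sinc(x: float) -> float:
    # Unnormalized sinc, sin(x) / x, with the value at 0 defined as 1.
    return 1.0 if x == 0.0 else math.sin(x) / x


def gelu_plus_sine(x: float, amplitude: float = 0.1) -> float:
    # Assumed additive form: GELU with a small sine ripple on top.
    return gelu(x) + amplitude * math.sin(x)


def gelu_sinc_perturbed(x: float, strength: float = 0.5) -> float:
    # Assumed multiplicative form: GELU scaled by a decaying sinc term.
    return gelu(x) * (1.0 + strength * sinc(x))
```

In both assumed forms the periodic part is a bounded perturbation: the output never differs from plain GELU by more than `amplitude` or `strength` respectively, so the function keeps GELU's overall shape while adding oscillation.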

Doing well on synthetic benchmarks is one thing, but do these functions actually work when tested in the wild?

The researchers tested discovered functions across dramatically different domains: image classification, algorithmic reasoning, and molecular property prediction. In each case, the evolved activation functions matched or exceeded the generalization performance of standard activations without sacrificing accuracy on in-distribution data.

What's fascinating is watching the evolutionary trajectory unfold. The search doesn't start from scratch. It first rediscovers well-known activations, validating the approach, then ventures into unexplored territory by hybridizing components that hadn't been combined before.

The work opens as many questions as it answers. The discovered functions were validated on smaller models and carefully constructed distribution shifts, not the massive-scale, unpredictable shifts encountered in deployment. And while periodic components clearly help, understanding why they confer robustness remains an open challenge.

This research suggests that the space of useful activation functions is far larger than we've explored, and that functions optimized for the unexpected might be hiding in plain sight, waiting to be evolved.