- The paper proposes a Bayesian nonparametric framework using a geometric stick-breaking prior to efficiently reconstruct and predict stochastic dynamics.

- It demonstrates that the GSBR model outperforms traditional Dirichlet process mixtures in speed and accuracy, particularly with heavy-tailed, non-Gaussian noise.

- Simulation studies on cubic random maps validate the method’s robustness and predictive power, offering a practical tool for complex system analysis.

Bayesian Nonparametric Mixture Models for Stochastic Dynamical System Reconstruction

Introduction and Motivation

This work presents a Bayesian nonparametric approach for the simultaneous reconstruction and prediction of random dynamical systems from time series data, focusing on systems subject to non-Gaussian, potentially heavy-tailed and outlier-prone dynamic noise. The methodology targets scenarios with limited observations and arbitrary, unknown noise distributions, settings where standard parametric methods or Gaussian error assumptions are suboptimal or lead to misleading inference.

The central contribution is the derivation and empirical evaluation of a Bayesian nonparametric mixture model using a Geometric Stick-Breaking (GSB) prior for the stochastic noise component. This model is contrasted with classical nonparametric alternatives utilizing Dirichlet Process (DP) mixtures. The operational focus is on discrete-time polynomial maps with additive noise, though the framework generalizes readily.

The assumed generative model for the observed time series is:

$$X_i = g(\theta, X_{i-1}) + Z_i, \quad i \ge 1$$

where $g$ is a nonlinear polynomial map parameterized by $\theta$, and $\{Z_i\}$ are i.i.d. innovations from an unknown density $f$. Both $\theta$ and $f$ are inferred.

Bayesian Nonparametric Noise Modeling

Two Bayesian nonparametric priors for f are compared:

- Dirichlet Process Mixture (DPM): The traditional approach models f as an infinite DP mixture of zero-mean Gaussian kernels, following the stick-breaking construction for the weights. The concentration parameter c governs component proliferation.

- Geometric Stick Breaking Mixture (GSB): The core innovation is replacing the DP mixture's random weights with their expectations, which form a geometric sequence, resulting in

$$f(z) = p \sum_{j=1}^{\infty} (1-p)^{j-1} \, \mathcal{N}(z \mid 0, \lambda_j^{-1})$$

with fewer latent variables and a more parsimonious Gibbs sampler. The prior on the geometric parameter p is tied to the DP concentration parameter c, so that the two models are matched for comparison.
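To make the weight structure concrete, the sketch below evaluates a truncated version of the GSB density and checks the standard stick-breaking identity behind it: if the DP sticks are $v_j \sim \mathrm{Beta}(1, c)$, then $E[w_j] = \frac{1}{1+c}\left(\frac{c}{1+c}\right)^{j-1}$, which is geometric with $p = 1/(1+c)$. The function names, the truncation level, and the example precisions are illustrative assumptions, and the paper's exact prior link between p and c is not reproduced here.

```python
import math

def gsb_weights(p, n_components):
    """First n_components geometric weights w_j = p (1 - p)^(j - 1), j = 1..n."""
    return [p * (1.0 - p) ** j for j in range(n_components)]

def normal_pdf(z, precision):
    """Zero-mean Gaussian density parameterized by precision (inverse variance)."""
    return math.sqrt(precision / (2.0 * math.pi)) * math.exp(-0.5 * precision * z * z)

def gsb_density(z, p, precisions):
    """GSB mixture density truncated to len(precisions) components."""
    weights = gsb_weights(p, len(precisions))
    return sum(w * normal_pdf(z, lam) for w, lam in zip(weights, precisions))

def expected_dp_weight(c, j):
    """E[w_j] under DP stick-breaking with sticks v_j ~ Beta(1, c)."""
    return (1.0 / (1.0 + c)) * (c / (1.0 + c)) ** (j - 1)

# Illustrative values only (assumptions, not taken from the paper).
c = 1.0
p = 1.0 / (1.0 + c)
example_precisions = [100.0, 25.0, 4.0, 1.0]
print(gsb_weights(p, 4))
print([expected_dp_weight(c, j) for j in range(1, 5)])
print(gsb_density(0.0, p, example_precisions))
```

The truncated weights sum to $1 - (1-p)^n$, so the mass left out of the sum decays geometrically in the truncation level.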

Slice sampling (both sequential and non-sequential) is introduced to ensure computational tractability and finite updates during Gibbs sampling over infinite mixtures.
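One latent-variable device used in the GSB literature to obtain finite updates is to give each observation an integer $N_i$ with $P(N_i = n) = n p^2 (1-p)^{n-1}$ and to draw the allocation $d_i$ uniformly from $\{1, \dots, N_i\}$. Assuming the slice variables $N_i$ mentioned in this summary play that role, the sketch below checks numerically that marginalizing over $N_i$ returns the geometric weight $p(1-p)^{j-1}$. All function names are hypothetical.

```python
import math
import random

def latent_n_pmf(n, p):
    """P(N = n) = n p^2 (1 - p)^(n - 1) for n = 1, 2, ..."""
    return n * p * p * (1.0 - p) ** (n - 1)

def marginal_weight(j, p, n_max=2000):
    """Probability of selecting component j after summing out N (truncated at n_max)."""
    # Given N = n, component j is chosen with probability 1/n whenever j <= n.
    return sum(latent_n_pmf(n, p) / n for n in range(j, n_max + 1))

def draw_geometric(p, rng):
    """Geometric draw on {1, 2, ...} by inversion."""
    u = 1.0 - rng.random()  # uniform on (0, 1], avoids log(0)
    return int(math.log(u) / math.log(1.0 - p)) + 1

def draw_allocation(p, rng):
    """Draw (N, d): N from latent_n_pmf, then d uniform on {1, ..., N}."""
    # The sum of two geometric draws minus one has pmf n p^2 (1 - p)^(n - 1).
    n = draw_geometric(p, rng) + draw_geometric(p, rng) - 1
    d = rng.randint(1, n)
    return n, d

p = 0.3
for j in range(1, 4):
    print(j, marginal_weight(j, p), p * (1.0 - p) ** (j - 1))
```

Conditional on $N_i$, only the components $1, \dots, N_i$ can be selected, which is what keeps each Gibbs sweep finite.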

Sampling Algorithms

A finite Gibbs sampler is derived for both the DP-based model (rDPR) and the GSB-based model (GSBR):

- Conditional posteriors for the precision parameters $\lambda_j$, allocation variables $d_i$, slice variables ($N_i$ for GSBR), model parameters $\theta$, initial condition $x_0$, and future values $x_{n+T}$ are presented.

- For GSBR, parameter updating is simpler due to the single infinite-dimensional parameter space, enabling faster convergence and lower computational cost.

Innovative augmentation strategies and auxiliary variable techniques are used to enable direct sampling for otherwise non-standard or multimodal full conditionals.
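As an illustration of what one such conditional update can look like, assume (this is not stated in the summary) that each precision $\lambda_j$ has a Gamma prior with shape $a$ and rate $b$. With zero-mean Gaussian kernels the full conditional is then conjugate: $\lambda_j \mid \cdot \sim \mathrm{Gamma}\big(a + n_j/2,\; b + \tfrac{1}{2}\sum_{i:\, d_i = j} z_i^2\big)$, where $z_i = x_i - g(\theta, x_{i-1})$ and $n_j$ counts the residuals allocated to component $j$. A minimal sketch with hypothetical names:

```python
import random

def precision_posterior_params(residuals, a, b):
    """Shape and rate of the Gamma full conditional for one component's precision."""
    shape = a + 0.5 * len(residuals)
    rate = b + 0.5 * sum(z * z for z in residuals)
    return shape, rate

def sample_precision(residuals, a, b, rng):
    """Draw one precision from its Gamma full conditional."""
    shape, rate = precision_posterior_params(residuals, a, b)
    # random.gammavariate takes (shape, scale), so pass scale = 1 / rate.
    return rng.gammavariate(shape, 1.0 / rate)

def update_precisions(residuals, allocations, n_components, a, b, rng):
    """One Gibbs sweep over the precisions of components 1..n_components."""
    groups = [[] for _ in range(n_components)]
    for z, d in zip(residuals, allocations):
        groups[d - 1].append(z)  # allocations are 1-based component labels
    # Components with no allocated residuals are drawn from the prior Gamma(a, b).
    return [sample_precision(g, a, b, rng) for g in groups]

rng = random.Random(0)
example_residuals = [0.02, -0.01, 0.30, -0.25, 0.015]  # made-up values
example_allocations = [1, 1, 2, 2, 1]
print(update_precisions(example_residuals, example_allocations, 3, 1.0, 1.0, rng))
```

The updates for $\theta$, $x_0$, and the future values are not conjugate in general and are not sketched here.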

Simulation Studies

Empirical evaluation is conducted on synthetic data from a cubic random map benchmark:

$$g(\vartheta, x) = 0.05 + \vartheta x - 0.99 x^3$$

with both thin- and heavy-tailed (outlier-prone) innovations constructed via finite mixtures of Gaussians with varying tail weights.
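A short sketch of how such synthetic series can be generated is given below. The value of ϑ, the initial condition, and the mixture weights and standard deviations are illustrative assumptions chosen here, not the settings reported in the paper.

```python
import random

def cubic_map(theta, x):
    """Deterministic part of the benchmark: g(theta, x) = 0.05 + theta x - 0.99 x^3."""
    return 0.05 + theta * x - 0.99 * x ** 3

def mixture_noise(rng, weights, sds):
    """One draw from a zero-mean Gaussian scale mixture."""
    sd = rng.choices(sds, weights=weights, k=1)[0]
    return rng.gauss(0.0, sd)

def simulate_orbit(n, theta, x0, noise):
    """Return [x_0, ..., x_n] with x_i = g(theta, x_{i-1}) + z_i, z_i = noise()."""
    xs = [x0]
    for _ in range(n):
        xs.append(cubic_map(theta, xs[-1]) + noise())
    return xs

rng = random.Random(1)
THETA = 2.55  # assumed value, for illustration only

# Thin-tailed case: a single Gaussian component.
thin = simulate_orbit(200, THETA, 0.5, lambda: rng.gauss(0.0, 0.01))

# Heavier-tailed case: a small fraction of draws come from a wider component.
heavy = simulate_orbit(
    200, THETA, 0.5, lambda: mixture_noise(rng, [0.9, 0.1], [0.01, 0.05])
)
print(min(thin), max(thin))
print(min(heavy), max(heavy))
```

Because the cubic term dominates for large |x|, a sufficiently large shock can push the orbit out of the bounded region and make it diverge, so noise scales in experiments of this kind have to be chosen with that in mind.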

Reconstructions and predictions use both informative and noninformative prior regimes, with the following findings:

- Accuracy: Both the rDPR and GSBR models recover the true map parameters and the dynamical noise density accurately, outperforming Gaussian parametric samplers, especially in non-Gaussian noise settings.

- Speed and Implementation: The GSBR sampler executes in less than half the time of rDPR in matched experiments (Table 2), yielding virtually identical marginal posteriors in parameter reconstruction and predictive distributions.

- Robustness to Heavy-Tailed Noise: The GSBR approach maintains high accuracy even as the noise distribution becomes more heavy-tailed and multimodal (Tables 3, 4, and Figure 1), while parametric approaches rapidly degrade.

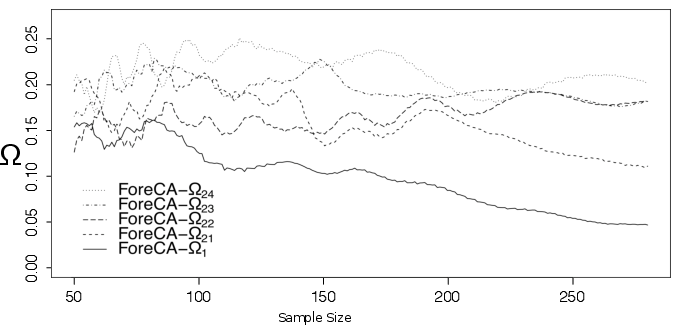

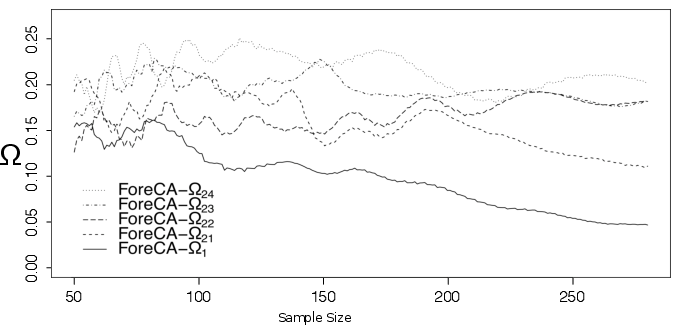

Forecastable Component Analysis (Ω) and Approximate Entropy (ApEn) are employed to quantify the complexity and predictability of realized time series. Higher Ω implies more predictable series and informs prior specification.
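For reference, approximate entropy has a compact textbook definition: $\mathrm{ApEn}(m, r) = \Phi^m(r) - \Phi^{m+1}(r)$, where $\Phi^m(r)$ is the average log-fraction of length-$m$ templates lying within Chebyshev distance $r$ of each template, self-matches included. The sketch below is a direct, unoptimized implementation of that definition. The choices $m = 2$ and $r = 0.2$ times the standard deviation follow common convention and are assumptions here, not settings confirmed by the paper.

```python
import math
import random
import statistics

def approximate_entropy(series, m, r):
    """ApEn(m, r) of a sequence of floats, with self-matches counted."""
    n = len(series)

    def phi(dim):
        count = n - dim + 1
        templates = [series[i:i + dim] for i in range(count)]
        total = 0.0
        for a in templates:
            # Templates within Chebyshev distance r of a (always >= 1: a itself).
            matches = sum(
                1 for b in templates
                if max(abs(u - v) for u, v in zip(a, b)) <= r
            )
            total += math.log(matches / count)
        return total / count

    return phi(m) - phi(m + 1)

rng = random.Random(0)
periodic = [float(i % 2) for i in range(200)]
noisy = [rng.random() for _ in range(200)]

for name, xs in (("periodic", periodic), ("noisy", noisy)):
    tolerance = 0.2 * statistics.pstdev(xs)
    print(name, approximate_entropy(xs, 2, tolerance))
```

Lower ApEn values indicate a more regular series, so ApEn and Ω move in opposite directions as predictability increases.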

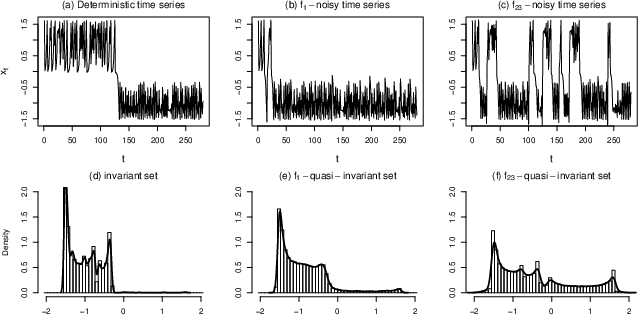

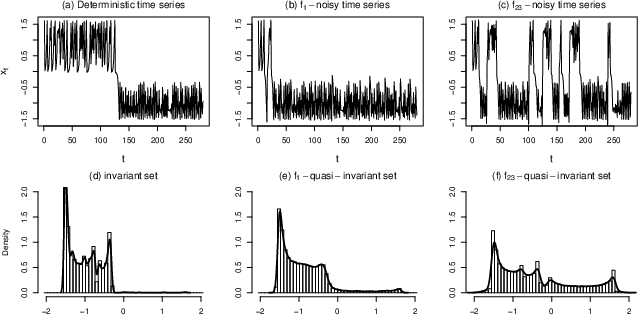

Figure 2: Examples of deterministic and noisy orbits from the cubic map, along with the associated invariant and quasi-invariant measures for deterministic and stochastic cases.

Figure 3: Forecastability curves (Ω) for different dynamical noise models as a function of sample size.

Figure 1: GSBR out-of-sample prediction density estimates for different heavy-tailed noise processes compared with quasi-invariant densities.

Posterior Predictive Analysis and Theoretical Insights

- Prediction Barrier: The posterior predictive distribution for future observations converges to the system's quasi-invariant measure as the forecast step increases, forming an intrinsic "prediction barrier" that reflects model uncertainty under chaos and noise.

- Noise Decomposition: The GSB mixture is capable of isolating multiple concurrent noise sources due to its infinite mixture flexibility, allowing accurate identification of the underlying noise regime irrespective of component count or tail behavior.

- Limitations: When the dataset is large and the true noise is Gaussian, nonparametric models are essentially equivalent to parametric approaches. However, for small sample sizes or strongly non-Gaussian noise, GSBR offers marked improvements in both inference and prediction.
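The prediction barrier can be illustrated with a simple forward simulation. The sketch below propagates an ensemble from a fixed last observation through the cubic map and compares its spread at several horizons with that of a long-run orbit. It uses fixed, assumed values for ϑ and the noise scale and plain Gaussian noise rather than posterior draws, so it shows only the dynamical part of the effect and is not the paper's procedure.

```python
import random
import statistics

def cubic_map(theta, x):
    """g(theta, x) = 0.05 + theta x - 0.99 x^3."""
    return 0.05 + theta * x - 0.99 * x ** 3

def step(theta, x, sd, rng):
    """One noisy iteration of the map with Gaussian dynamic noise."""
    return cubic_map(theta, x) + rng.gauss(0.0, sd)

def predictive_ensemble(x_n, horizon, theta, sd, size, rng):
    """Samples of x_{n+horizon} given x_n, with theta and sd treated as known."""
    ensemble = [x_n] * size
    for _ in range(horizon):
        ensemble = [step(theta, x, sd, rng) for x in ensemble]
    return ensemble

def long_run_sample(x0, n, theta, sd, rng, burn_in=200):
    """Points from one long orbit, used as a stand-in for the quasi-invariant measure."""
    x, out = x0, []
    for i in range(n + burn_in):
        x = step(theta, x, sd, rng)
        if i >= burn_in:
            out.append(x)
    return out

rng = random.Random(0)
THETA, SD = 2.55, 0.02  # assumed values, for illustration only
reference = long_run_sample(0.5, 2000, THETA, SD, rng)
spread = {
    T: statistics.pstdev(predictive_ensemble(0.5, T, THETA, SD, 500, rng))
    for T in (1, 5, 30)
}
print(spread, statistics.pstdev(reference))
```

At horizon 1 the ensemble spread is close to the noise scale. As the horizon grows, the spread increases until it is comparable to that of the long-run orbit, after which conditioning on the last observation adds little information.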

Implications and Future Directions

The presented GSBR mixture methodology offers a principled and tractable approach for system identification in nonlinear dynamics with arbitrary noise, broadening the scope of inference beyond restrictive parametric models. Its efficiency recommends it for real-world physical, biological, and engineering systems where data are limited and noise characteristics are unknown or potentially impulsive.

The authors suggest several avenues for advancement:

- Mixed-Impulsive Noise Handling: Extending GSBR to handle impulsive noise via mixtures including discrete components.

- Persistent Strong Perturbations: Adaptive priors and specialized constrained Gibbs updates for cases where persistent, strong noise perturbs the identifiability of model parameters.

- Latent State/Observation Noise Models: Generalization to hierarchical models with both dynamical and observational noise, using coupled GSB measures for hidden and observed states.

- Improved MCMC Schemes: Application of hybrid or adaptive MCMC to enhance mixing under multimodal, strongly nonidentifiable regimes.

These innovations carry direct implications for uncertainty quantification and probabilistic forecasting in high-complexity, low-observability nonlinear systems.

Conclusion

This paper demonstrates that Bayesian nonparametric mixture models using geometric stick-breaking priors, a notable simplification over Dirichlet process mixtures, provide accurate, efficient, and interpretable inference and prediction in chaotic stochastic dynamical systems, especially under non-Gaussian noise and limited data. The GSBR sampler's computational parsimony and empirical performance establish it as a practical tool for robust dynamical system analysis.