- The paper presents a novel CVNN framework using Wirtinger calculus to enable effective gradient descent with complex-valued activations.

- Experiments with synthesized waveforms show that CVNNs learn filters with harmonic selectivity and achieve performance on par with real-valued networks.

- The study identifies challenges such as sensitivity to hyperparameters and suggests future research to make complex-valued representations more robust in signal processing.

Complex-Valued Neural Networks

Complex-valued neural networks (CVNNs) are an active area of neural network research, motivated by their representational capabilities for real-world signals such as audio, image, and physiological data. Because their weights and activations are complex numbers, CVNNs can represent magnitude and phase together. Such data arise routinely in signal processing through complex basis transformations like the Fourier transform.

Motivation and Challenges

Traditional neural networks operate in the real-valued domain, so signals that are naturally analyzed in a complex basis, for example after a Fourier transform, often have to be moved into the complex domain before they can be processed in their native form. This shift allows CVNNs to learn complex representations of real-valued time-series data. Despite this advantage, there are significant hurdles: CVNNs can be difficult to train because a complex-valued activation function cannot be both bounded and complex-differentiable everywhere. By Liouville's theorem, any function with both properties is constant. Boundedness and differentiability both matter for convergence and stability during training, so one of them has to be given up.
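The trade-off between boundedness and complex differentiability can be checked numerically. The sketch below is illustrative only: `is_holomorphic_at` and `split_tanh` are hypothetical helper names, and the split activation is a common textbook example, not necessarily one used in the paper. It contrasts the complex tanh (holomorphic, but unbounded near its poles) with a split tanh (bounded, but failing the Cauchy-Riemann condition).

```python
import numpy as np

def is_holomorphic_at(f, z, h=1e-6, tol=1e-4):
    """Numerically test the Cauchy-Riemann condition df/dx + i*df/dy == 0 at z."""
    d_dx = (f(z + h) - f(z - h)) / (2 * h)            # derivative along the real axis
    d_dy = (f(z + 1j * h) - f(z - 1j * h)) / (2 * h)  # derivative along the imaginary axis
    return abs(d_dx + 1j * d_dy) < tol

def split_tanh(z):
    """Bounded but non-holomorphic: tanh applied to real and imaginary parts separately."""
    return np.tanh(z.real) + 1j * np.tanh(z.imag)

z0 = 0.3 + 0.2j                          # arbitrary test point
near_pole = 1j * (np.pi / 2 - 1e-3)      # tanh has a pole at i*pi/2

print("tanh holomorphic at z0:      ", is_holomorphic_at(np.tanh, z0))
print("split_tanh holomorphic at z0:", is_holomorphic_at(split_tanh, z0))
print("|tanh| near its pole:        ", abs(np.tanh(near_pole)))
print("|split_tanh| for large input:", abs(split_tanh(50 + 50j)))
```

The split variant never exceeds a magnitude of sqrt(2), while the holomorphic tanh grows without bound as the input approaches the pole.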

Activation Functions and Wirtinger Calculus

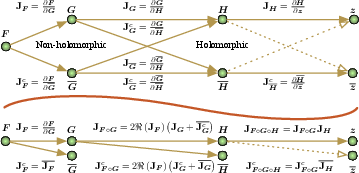

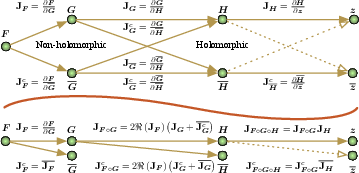

The paper combines holomorphic and non-holomorphic functions by applying the Wirtinger derivative, which makes it possible to differentiate complex-valued functions that are not complex-analytic but are still real-analytic. Using Wirtinger calculus simplifies the gradient computation in back-propagation and sidesteps the requirement that every function in the network be complex-differentiable.
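For reference, the standard definitions of the Wirtinger derivatives are given here. The notation ($z = x + iy$, loss $\mathcal{L}$, step size $\alpha$) is assumed for illustration and may differ from the paper's.

```latex
\frac{\partial f}{\partial z}
  = \frac{1}{2}\left(\frac{\partial f}{\partial x} - i\,\frac{\partial f}{\partial y}\right),
\qquad
\frac{\partial f}{\partial \bar{z}}
  = \frac{1}{2}\left(\frac{\partial f}{\partial x} + i\,\frac{\partial f}{\partial y}\right)

% f is holomorphic exactly when the conjugate derivative vanishes:
\frac{\partial f}{\partial \bar{z}} = 0

% For a real-valued loss, steepest descent follows the conjugate derivative
% (constant factors absorbed into the step size \alpha):
z \leftarrow z - \alpha\,\frac{\partial \mathcal{L}}{\partial \bar{z}}
```

As a small worked case, $\mathcal{L}(z) = |z|^2 = z\bar{z}$ is not holomorphic, yet $\partial \mathcal{L} / \partial \bar{z} = z$ is well defined, so the update $z \leftarrow (1 - \alpha) z$ shrinks $z$ toward the minimum at the origin.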

Figure 1: The complex hyperbolic tangent function magnitude plot, illustrating the behavior of the tanh activation with complex inputs.

Computational Graph

Wirtinger calculus also makes CVNNs more efficient by reducing the computational dependencies within the network graph. For example, the conjugate derivative of a holomorphic function is zero, so the corresponding terms drop out of the chain rule. This matters when optimizing complex models, and it helps keep gradient propagation robust when holomorphic and non-holomorphic activation functions are combined.

Figure 2: Computational dependency graph for a composition of functions in CVNNs.
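The following sketch checks the Wirtinger chain rule numerically for a composition of a holomorphic inner function and a non-holomorphic outer function. It uses finite differences rather than the paper's implementation, and `wirtinger`, `g`, and `f` are hypothetical names chosen for the example. Because the inner function is holomorphic, one of the two chain-rule terms vanishes, which is one way the dependency graph becomes smaller.

```python
import numpy as np

def wirtinger(f, z, h=1e-6):
    """Return (df/dz, df/dz_conj) at z, estimated with central finite differences."""
    d_dx = (f(z + h) - f(z - h)) / (2 * h)
    d_dy = (f(z + 1j * h) - f(z - 1j * h)) / (2 * h)
    return 0.5 * (d_dx - 1j * d_dy), 0.5 * (d_dx + 1j * d_dy)

def g(z):
    """Holomorphic inner function (complex tanh)."""
    return np.tanh(z)

def f(w):
    """Non-holomorphic, real-valued outer function (squared magnitude)."""
    return np.abs(w) ** 2

z0 = 0.3 + 0.2j          # arbitrary evaluation point
w0 = g(z0)

dg_dz, dg_dzc = wirtinger(g, z0)
df_dw, df_dwc = wirtinger(f, w0)

# Full chain rule for the conjugate derivative of h(z) = f(g(z)):
#   dh/dz_conj = df/dw * dg/dz_conj + df/dw_conj * conj(dg/dz)
full = df_dw * dg_dzc + df_dwc * np.conj(dg_dz)

# g is holomorphic, so dg/dz_conj is (numerically) zero and one term suffices.
reduced = df_dwc * np.conj(dg_dz)

# Differentiate the composition directly for comparison.
direct = wirtinger(lambda z: f(g(z)), z0)[1]

print("dg/dz_conj (should be ~0):", abs(dg_dzc))
print("full chain rule:   ", full)
print("reduced chain rule:", reduced)
print("direct derivative: ", direct)
```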

Experiments and Outcomes

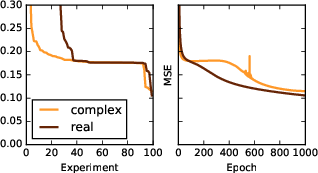

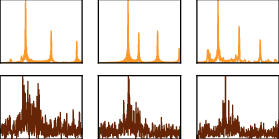

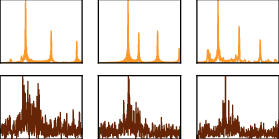

The paper compares CVNNs against real-valued networks on several synthesized datasets that stand in for real-world signals, including sawtooth-like analytic waveforms and inharmonic signals. In these tests CVNNs do not clearly outperform real-valued networks, but they reach comparable performance. Notably, CVNNs learn filters that align closely with the structure of the data, such as its harmonic content, which makes them promising for certain applications.
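To make the kind of input concrete, here is one way a sawtooth-like analytic waveform could be synthesized. This is an assumption for illustration: the paper's exact generation procedure is not described here, and `analytic_sawtooth` and all of its parameters are hypothetical. The signal is a sum of positive-frequency complex exponentials with 1/k amplitudes, so its imaginary part approximates a sawtooth and its spectrum has no negative-frequency content.

```python
import numpy as np

def analytic_sawtooth(f0, n_harmonics, n_samples, sample_rate):
    """Sum of complex exponentials at harmonics k*f0 with amplitude 1/k."""
    t = np.arange(n_samples) / sample_rate
    k = np.arange(1, n_harmonics + 1)[:, None]      # column of harmonic indices
    return np.sum(np.exp(2j * np.pi * k * f0 * t) / k, axis=0)

# Parameters chosen so that each harmonic falls exactly on an FFT bin (10 Hz per bin).
x = analytic_sawtooth(f0=100.0, n_harmonics=8, n_samples=800, sample_rate=8000.0)
spectrum = np.abs(np.fft.fft(x))

print("dtype:", x.dtype)
print("energy at fundamental bin:", spectrum[10])
print("largest negative-frequency magnitude:", spectrum[400:].max())
```

A real-valued network would need the real and imaginary parts as separate channels, whereas a CVNN can consume `x` directly.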

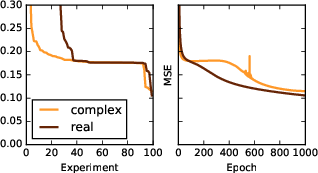

Figure 3: Left: Sorted validation error during hyperparameter optimization, highlighting sensitivity in CVNN training.

Aside from the numerical performance, the evaluations reveal the sensitivity of complex models to hyperparameter settings and initial conditions. This sensitivity suggests a requirement for meticulous optimization processes to achieve reliable results.

Figure 4: Spectral analysis of learned filters showing distinct harmonic selectivity in complex-valued models.
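A spectral analysis of this kind can be sketched by taking the FFT of a filter's weights and locating its peak. The weights below are a synthetic stand-in (a windowed complex exponential), not filters learned in the paper, and `peak_frequency` is a hypothetical helper.

```python
import numpy as np

def peak_frequency(weights, sample_rate, n_fft=1024):
    """Return the frequency (Hz) at which a filter's magnitude response peaks."""
    spectrum = np.abs(np.fft.fft(weights, n=n_fft))        # zero-padded FFT
    freqs = np.fft.fftfreq(n_fft, d=1.0 / sample_rate)     # signed frequency axis
    return freqs[np.argmax(spectrum)]

# Stand-in for a learned complex filter tuned near 300 Hz (assumed values).
sample_rate = 8000.0
n = np.arange(64)
weights = np.hanning(64) * np.exp(2j * np.pi * 300.0 * n / sample_rate)

print("peak frequency (Hz):", peak_frequency(weights, sample_rate))
```

Because the weights are complex, the response is one-sided: the peak appears only at the positive frequency, with no mirrored lobe at the negative one.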

Implications and Future Directions

Because CVNNs can represent data in its native complex form, they point to potential advances in signal processing tasks, with direct implications for fields such as audio synthesis and communications.

Challenges remain, such as handling singularities within the complex domain and tuning hyperparameters, and further research is needed to improve the robustness and flexibility of CVNNs. Future work may include distributed learning frameworks and novel activation functions, which could help bring CVNNs into mainstream applications.

Conclusion

Complex-valued neural networks offer a promising avenue for machine learning by handling magnitude and phase in a single joint representation. Techniques like Wirtinger calculus show one way to address the computational difficulties involved, bringing these models a step closer to broader applicability and acceptance in the AI community. Continued experimentation and optimization are expected to advance the field further.