- The paper presents a modular ASN model that generates Abstract Syntax Trees for code generation and semantic parsing tasks.

- It employs an encoder-decoder architecture with hierarchical and supervised attention to enhance prediction accuracy.

- The model substantially outperforms prior sequence-to-sequence methods on Hearthstone code generation, raising BLEU from 67.1 to 79.2 and exact match accuracy from 6.1% to 22.7%.

Abstract Syntax Networks for Code Generation and Semantic Parsing

Introduction to Abstract Syntax Networks

The paper "Abstract Syntax Networks for Code Generation and Semantic Parsing" introduces a novel modeling framework named Abstract Syntax Networks (ASNs) designed to tackle structured prediction tasks such as code generation and semantic parsing. These tasks require converting unstructured inputs into well-formed outputs represented as Abstract Syntax Trees (ASTs). Unlike traditional sequence-to-sequence models, which handle outputs primarily as unstructured sequences, ASNs leverage a modular approach with a decoder network that dynamically adapts its structure to mirror the output tree's structure.
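To make the notion of an AST concrete, here is a minimal sketch that uses Python's standard `ast` module to parse a one-line statement and list the node types in the resulting tree. The statement and the helper name `node_types` are illustrative assumptions and do not come from the paper.

```python
import ast

# Illustrative one-line program; not taken from the paper.
source = "damage = base + bonus"
tree = ast.parse(source)


def node_types(node):
    """Return the node's type name followed by those of its descendants (depth-first)."""
    names = [type(node).__name__]
    for child in ast.iter_child_nodes(node):
        names.extend(node_types(child))
    return names


types = node_types(tree)
print(types)                        # flat, depth-first list of node type names
print(ast.dump(tree.body[0].value))  # nested structure of the `base + bonus` subtree
```

The flat character sequence of the statement becomes a nested structure (a module containing an assignment whose value is a binary operation), and a tree of this kind is the output space that ASNs predict directly.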

Model Overview and Architecture

The ASN framework is built on an encoder-decoder architecture with hierarchical attention. The encoder uses bidirectional LSTMs to capture the context of the input sequence, and the decoder constructs the output as an AST. Each component of the output is predicted by a specialized module corresponding to a distinct construct in the AST grammar, so generation proceeds recursively and modularly. As the decoder descends the tree, a vertical LSTM propagates information between modules, so that each module's decision is conditioned on what has been generated above it.
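The grammar-driven recursion described above can be sketched without any neural components. In the following toy example, the grammar, the `Node` class, and the two chooser functions are all hypothetical: the choosers are deterministic stand-ins for learned modules, and the `depth` argument stands in for the decoder state that a real model would pass down the tree.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Union

# Hypothetical toy grammar: type -> constructor -> ordered (field name, field type) pairs.
GRAMMAR = {
    "expr": {
        "Num": [("value", "int")],
        "Add": [("left", "expr"), ("right", "expr")],
    },
}
PRIMITIVE_TYPES = {"int"}


@dataclass
class Node:
    constructor: str
    fields: Dict[str, Union["Node", int]] = field(default_factory=dict)


def generate(
    type_name: str,
    choose_constructor: Callable[[str, int], str],
    choose_primitive: Callable[[str, int], int],
    depth: int = 0,
) -> Union[Node, int]:
    """Expand one node, dispatching on its grammar type, then recurse into its fields."""
    if type_name in PRIMITIVE_TYPES:
        return choose_primitive(type_name, depth)
    constructor = choose_constructor(type_name, depth)
    node = Node(constructor)
    for field_name, field_type in GRAMMAR[type_name][constructor]:
        node.fields[field_name] = generate(
            field_type, choose_constructor, choose_primitive, depth + 1
        )
    return node


# Deterministic stand-ins for learned modules (assumptions for illustration only).
def choose_constructor(type_name: str, depth: int) -> str:
    return "Add" if depth == 0 else "Num"


def choose_primitive(type_name: str, depth: int) -> int:
    return depth


tree = generate("expr", choose_constructor, choose_primitive)
print(tree)
```

Because every expansion is driven by the grammar, the shape of the computation mirrors the shape of the output tree, and the result is well-formed by construction regardless of what the choosers decide.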

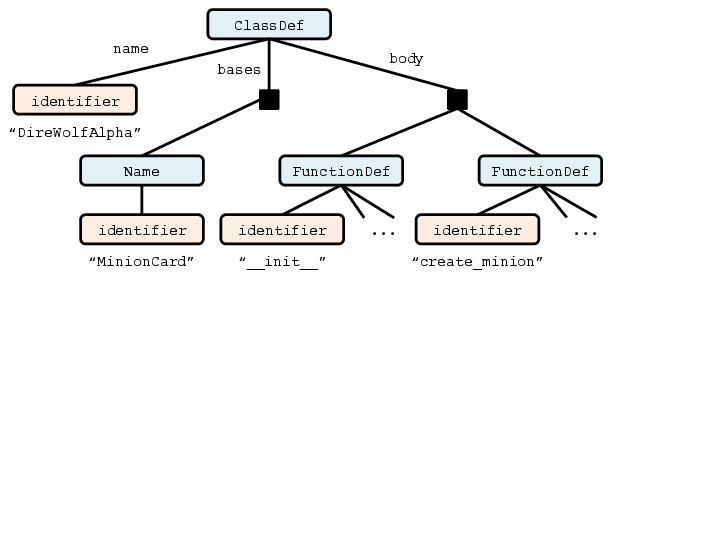

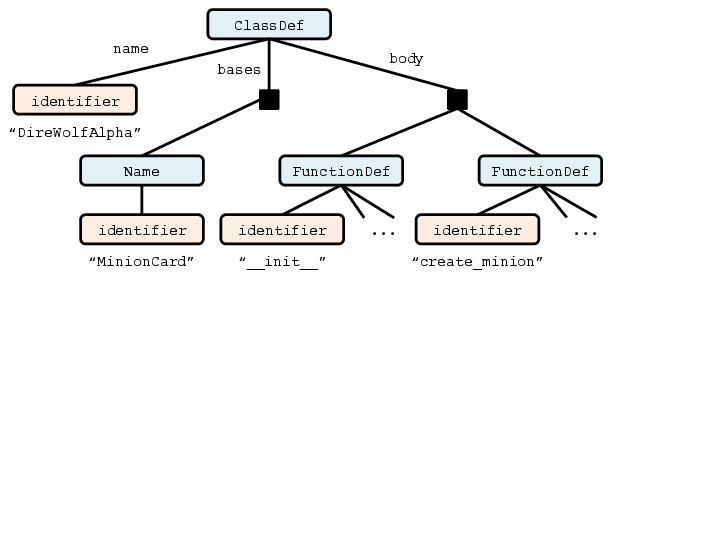

Figure 1: Example code for the "Dire Wolf Alpha" Hearthstone card.

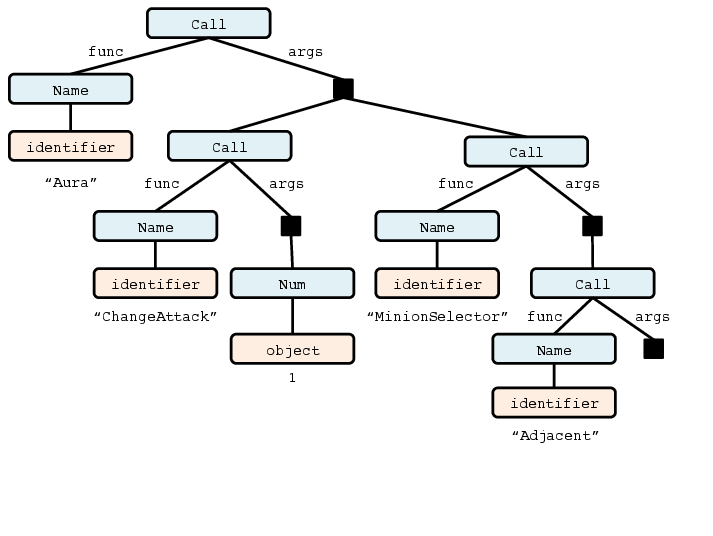

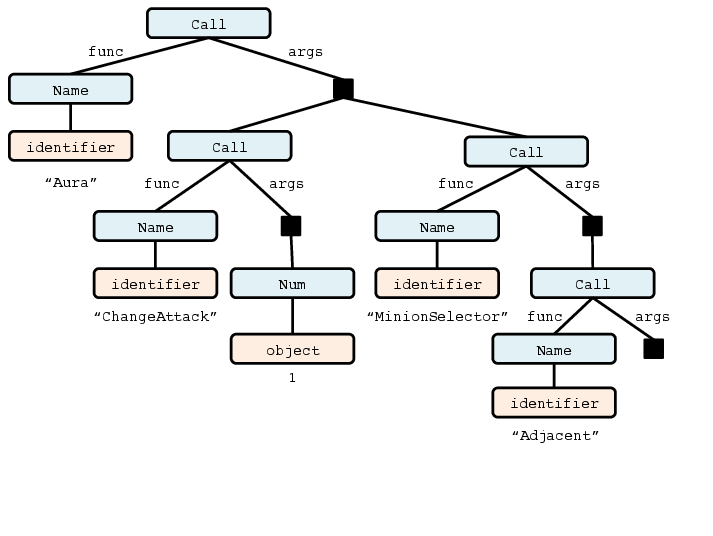

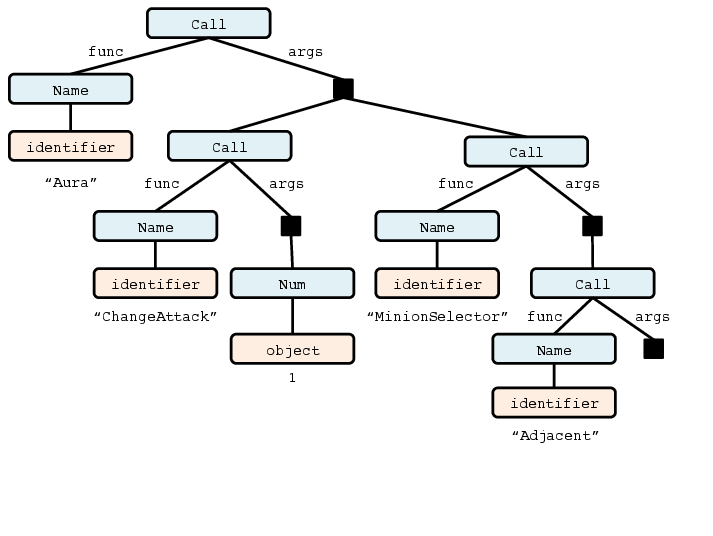

Figure 2: Fragments from the abstract syntax tree corresponding to the example code in Figure 1.

Implementation and Training

ASNs are implemented using the DyNet neural network library and trained with the Adam optimizer. The model generates ASTs recursively, activating the submodule that corresponds to the type of node being expanded. At each step, attention focuses on the relevant parts of the input, which is particularly effective for tasks that involve partially copying input elements. In addition, supervised attention and character-level LSTM language models are used to improve predictions and to handle open-class string-valued types.
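As a rough illustration of how attention lets a decoder step focus on parts of the input, the sketch below computes softmax attention weights and a context vector in plain Python. Dot-product scoring, the function names, and the toy vectors are assumptions made for this example; this summary does not specify the scoring function the paper uses.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(
    query: Sequence[float], encoder_states: Sequence[Sequence[float]]
) -> Tuple[List[float], List[float]]:
    """Return (attention weights, context vector) for one decoder query.

    Scoring is a plain dot product, which is an assumption of this sketch.
    """
    scores = [sum(q * h for q, h in zip(query, state)) for state in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(w * state[i] for w, state in zip(weights, encoder_states))
        for i in range(dim)
    ]
    return weights, context


# Toy encoder states for three input tokens and one decoder query.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 0.0]
weights, context = attend(query, encoder_states)
print(weights, context)
```

Tokens whose encoder states align with the query receive most of the weight. Under supervised attention, weights like these would additionally be trained toward known alignments rather than learned from the output loss alone.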

Experimental Results

On code generation with the Hearthstone dataset, ASNs improve substantially over previous models: the model achieves a BLEU score of 79.2 and an exact match accuracy of 22.7%, compared to 67.1 BLEU and 6.1% exact match for prior sequence-to-sequence models. On semantic parsing, ASNs are competitive, achieving state-of-the-art results on the Jobs dataset with an exact match accuracy of 92.9%.
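Exact match accuracy is the fraction of examples whose prediction is identical to the reference. A minimal sketch follows; stripping surrounding whitespace before comparing is an assumption of this example, not a detail from the paper, and the sample strings are made up.

```python
from typing import Sequence


def exact_match_accuracy(predictions: Sequence[str], references: Sequence[str]) -> float:
    """Fraction of predictions identical to their reference (after stripping whitespace)."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("at least one example is required")
    matches = sum(p.strip() == r.strip() for p, r in zip(predictions, references))
    return matches / len(references)


# Made-up predictions and references for illustration.
predictions = ["x = 1", "y = 2 ", "return a", "pass"]
references = ["x = 1", "y = 2", "return b", "pass"]
accuracy = exact_match_accuracy(predictions, references)
print(accuracy)
```

Exact match is far stricter than BLEU: a prediction that differs from the reference by a single token counts as a complete miss, which helps explain why the exact match figures are much lower than the BLEU scores.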

Error Analysis

Despite their effectiveness, ASNs face challenges when dealing with complex logic expressed in an imperative style. Errors in variable naming, control flow, and domain-specific conventions are the main sources of difficulty. In short, while ASNs excel at handling structure-rich outputs, they sometimes struggle with intricate details that require finer-grained language understanding or broader contextual awareness.

Figure 3: Cards with minimal descriptions exhibit a uniform structure that our system almost always predicts correctly, as in this instance.

Figure 4: For many cards with moderately complex descriptions, the implementation follows a functional style that seems to suit our modeling strategy, usually leading to correct predictions.

Figure 5: Cards with nontrivial logic expressed in an imperative style are the most challenging for our system. In this example, our prediction comes close to the gold code, but misses an important statement in addition to making a few other minor errors. (Left) gold code; (right) predicted code.

Conclusion

ASNs represent a significant step towards more expressive and accurate models for structured prediction tasks. Their modular design aligns naturally with the recursive nature of many structured output spaces, providing robust performance across varied tasks without extensive task-specific engineering. Future directions may include extending ASNs' applicability beyond tree structures to more complex graph-based representations and improving coherence in model outputs to maintain semantic constraints like well-typedness and executability. This approach's modularity and flexibility position it as a valuable tool in advancing structured prediction and understanding tasks across various domains.