- The paper introduces generative synthesis via a generator-inquisitor framework to automatically generate efficient deep neural networks.

- It demonstrates improved performance in image classification, semantic segmentation, and object detection, with fewer multiply-accumulate (MAC) operations and better energy efficiency.

- The methodology offers an iterative, flexible way to optimize model architectures under operational constraints, making it well suited to edge device deployment.

Introduction

The paper "FermiNets: Learning generative machines to generate efficient neural networks via generative synthesis" proposes generative synthesis, a method for automatically generating efficient deep neural networks. It uses a generator-inquisitor pair to produce models with high architectural efficiency and low computational cost, making them suitable for deployment on edge devices. This addresses the challenge of deploying complex deep learning architectures in resource-constrained environments such as mobile phones and other consumer electronics.

Generative Synthesis Methodology

Generative synthesis is built on the collaboration between a generator G(s;θG) and an inquisitor I(G;θI). The generator creates neural network architectures from input seeds, while the inquisitor adaptively tunes the generator by analyzing how efficiently the generated models meet predefined operational requirements.

The process starts from an initial generator G0 and an initial inquisitor I0. Each cycle generates networks, probes them with stimulus signals, and then adapts the generator based on what the inquisitor observes. This iteration aims to solve a constrained optimization problem: find the generator whose generated networks maximize a universal performance function U while satisfying the operational constraints.
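The cycle described above can be illustrated with a deliberately small toy. This is a sketch under stated assumptions, not the paper's implementation: the generator here only emits lists of layer widths, `proxy_accuracy` is a hypothetical stand-in for a measured accuracy, the function `performance` is an invented stand-in for U, and the inquisitor is replaced by a simple accept-if-better random perturbation rather than probing real networks with stimulus signals. All function and parameter names are illustrative.

```python
import random

def generate(seed, theta):
    """Toy generator G(s; theta): map a seed to a list of layer widths."""
    rng = random.Random(seed)
    return [max(1, round(theta["base_width"] * rng.uniform(0.8, 1.2)))
            for _ in range(theta["depth"])]

def mac_count(widths, input_dim=32):
    """MACs of a dense stack: sum of products of consecutive layer sizes."""
    dims = [input_dim] + widths
    return sum(a * b for a, b in zip(dims, dims[1:]))

def proxy_accuracy(widths):
    """Hypothetical stand-in for measured accuracy; saturates with capacity."""
    capacity = sum(widths)
    return capacity / (capacity + 50.0)

def performance(widths):
    """Toy stand-in for U: rewards accuracy, penalizes MAC operations."""
    return proxy_accuracy(widths) ** 2 / mac_count(widths) ** 0.5

def meets_requirements(widths, min_accuracy=0.6):
    """Indicator for the operational requirement (here, an accuracy floor)."""
    return proxy_accuracy(widths) >= min_accuracy

def mean_performance(theta, seeds):
    """Mean U over all seeds, or None if any generated network is infeasible."""
    nets = [generate(s, theta) for s in seeds]
    if not all(meets_requirements(n) for n in nets):
        return None
    return sum(performance(n) for n in nets) / len(nets)

def inquisitor_step(theta, seeds, rng):
    """Toy inquisitor: evaluate generated networks, propose a perturbed theta,
    and keep it only if it stays feasible and improves mean U."""
    current = mean_performance(theta, seeds)
    candidate = dict(theta)
    candidate["base_width"] = max(1.0, theta["base_width"] * rng.uniform(0.8, 1.1))
    proposed = mean_performance(candidate, seeds)
    if proposed is not None and (current is None or proposed > current):
        return candidate
    return theta

def generative_synthesis(theta0, seeds, cycles=50, rng_seed=0):
    """Repeat the generate / evaluate / adapt cycle a fixed number of times."""
    rng = random.Random(rng_seed)
    theta = dict(theta0)
    for _ in range(cycles):
        theta = inquisitor_step(theta, seeds, rng)
    return theta

if __name__ == "__main__":
    seeds = [1, 2, 3]
    theta0 = {"base_width": 64.0, "depth": 3}
    theta = generative_synthesis(theta0, seeds)
    print("initial mean U:", mean_performance(theta0, seeds))
    print("final mean U:  ", mean_performance(theta, seeds))
```

Because an update is accepted only when every generated network still satisfies the requirement and the mean score improves, the toy generator never becomes infeasible and its score never decreases, which mirrors the constrained-optimization framing in miniature.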

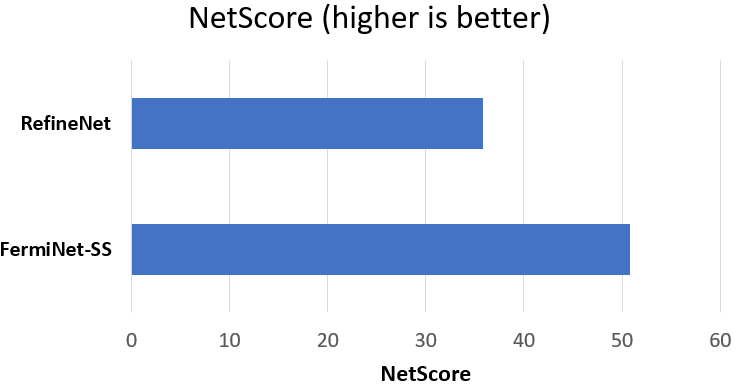

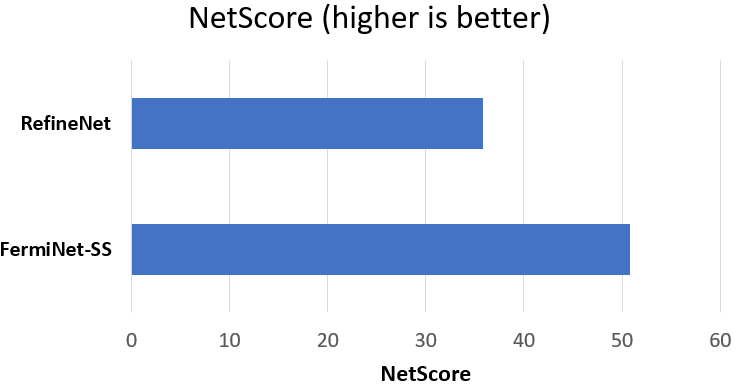

Figure 1: Semantic segmentation: (a) Information density, (b) MAC operations, and (c) NetScore.

Experimental Evaluation

The study ran experiments on three tasks: image classification, semantic segmentation, and object detection. The generated models, termed FermiNets, were compared against state-of-the-art networks to evaluate their gains in model efficiency and energy consumption.

Image Classification

For image classification, FermiNets demonstrated significant efficiency improvements. On the CIFAR-10 benchmark, FermiNets surpassed other models like MobileNet and ShuffleNet in top-1 accuracy and information density, requiring notably fewer MAC operations.
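The efficiency metrics named in these comparisons and in the Figure 1 caption can be written down compactly. The sketch below assumes the commonly cited definitions: information density as accuracy per parameter, and NetScore as 20·log10(a^α / (p^β · m^γ)) with α = 2, β = 0.5, γ = 0.5. The base-10 logarithm, the default exponents, and the units of the inputs are assumptions here, not details confirmed by this summary; what matters for a comparison is that all networks are scored in the same units. Function and parameter names are illustrative.

```python
import math

def information_density(accuracy_pct, params):
    """Accuracy obtained per unit of parameters (e.g. percent per million)."""
    if params <= 0:
        raise ValueError("params must be positive")
    return accuracy_pct / params

def netscore(accuracy_pct, params, macs, alpha=2.0, beta=0.5, gamma=0.5):
    """NetScore-style metric: rewards accuracy, penalizes size and compute.

    Assumed form: 20 * log10(a**alpha / (p**beta * m**gamma)).
    """
    if accuracy_pct <= 0 or params <= 0 or macs <= 0:
        raise ValueError("all inputs must be positive")
    return 20.0 * math.log10(accuracy_pct ** alpha / (params ** beta * macs ** gamma))

if __name__ == "__main__":
    # Hypothetical numbers for illustration only, not results from the paper.
    small = {"accuracy_pct": 90.0, "params": 1.0, "macs": 0.1}
    large = {"accuracy_pct": 92.0, "params": 10.0, "macs": 1.0}
    for name, net in (("small", small), ("large", large)):
        print(name,
              information_density(net["accuracy_pct"], net["params"]),
              netscore(**net))
```

Under these definitions a network that gives up a little accuracy for a large reduction in parameters and MAC operations scores higher on both metrics, which is the kind of trade-off the comparisons in this section reward.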

Semantic Segmentation

In semantic segmentation on the CamVid dataset, the generated FermiNet-SS achieved pixel-wise accuracy competitive with RefineNet. FermiNet-SS also had higher information density, meaning it obtains more accuracy per parameter, and required fewer MAC operations.

Object Detection

In the object detection evaluation, FermiNet-OD exceeded DetectNet in information density and computational efficiency. Additionally, in a mobile-edge scenario on an Nvidia Tegra X2, FermiNet-OD showed marked improvements in energy efficiency, delivering more image inferences per joule.
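Inferences per joule follows directly from throughput and power draw, since one watt is one joule per second. The helper below is a minimal sketch of that arithmetic; the function name and the sample measurements are hypothetical and are not figures from the paper.

```python
def inferences_per_joule(inferences_per_second, average_power_watts):
    """Energy efficiency: inferences completed per joule of energy consumed."""
    if average_power_watts <= 0:
        raise ValueError("average power must be positive")
    # 1 W = 1 J/s, so (inferences/s) / (J/s) = inferences/J.
    return inferences_per_second / average_power_watts

if __name__ == "__main__":
    # Hypothetical measurements for two networks on the same device.
    baseline = inferences_per_joule(20.0, 5.0)
    candidate = inferences_per_joule(45.0, 7.5)
    print("baseline: ", baseline)
    print("candidate:", candidate)
    print("improvement factor:", candidate / baseline)
```

Note that a network can draw more power and still be more energy efficient, as long as its throughput rises faster than its power consumption.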

Implications and Future Directions

The generative synthesis approach presents a robust alternative for generating efficient deep neural networks, particularly suited for edge computing scenarios. Its ability to produce a diverse set of architectures from different seeds establishes its flexibility and potential for widespread application across various tasks. Future work may explore extending this framework to multi-modal learning scenarios or other resource-constrained platforms, further refining model synthesis techniques for enhanced neural architecture search.

Conclusion

This research underlines the effectiveness of generative synthesis in creating resource-efficient neural networks, fulfilling the operational requirements of edge devices. By optimizing model efficiency and reducing computational burdens, FermiNets provide a pathway for advancing AI capabilities on constrained platforms, enhancing accessibility and application scope within mobile and consumer technology sectors.