- The paper introduces a framework integrating RGB-D mapping, stochastic path planning, and trajectory generation for real-time MAV navigation in cluttered environments.

- It employs a modified RRT-Connect algorithm and linear octree mapping to efficiently detect obstacles and dynamically replan safe paths.

- Experimental evaluations in simulation and real-world scenarios validate the framework's robustness and adaptability in dynamic and static conditions.

Autonomous Navigation of MAVs in Unknown Cluttered Environments

Introduction

The paper "Autonomous Navigation of MAVs in Unknown Cluttered Environments" (1906.08839) addresses a fundamental problem in robotics: enabling multirotor Micro Aerial Vehicles (MAVs) to autonomously navigate unknown environments that are cluttered and devoid of GPS signals. This capability is crucial for applications like search and rescue, reconnaissance, and industrial inspection. MAVs are uniquely suited to these tasks due to their size, agility, and onboard processing capabilities. This paper's contribution is a framework that integrates environment mapping, path planning, and trajectory generation, specifically designed for computationally constrained platforms.

Framework Components

Environment Mapping

The first component of the framework utilizes data from an RGB-D camera to construct a map of the environment. This map is generated using a linear octree structure, which offers efficient space representation and collision checking. The disparity measurements from the depth sensor are processed to update the map dynamically, accommodating the robot's odometry and sensor drift errors. The innovative use of linear octrees balances memory efficiency with real-time spatial processing capability.

Figure 1: Flow diagram of the mapping algorithm.

Path Planning

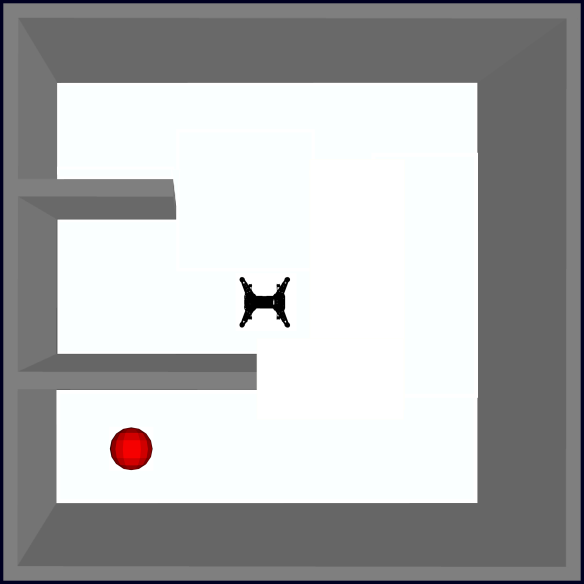

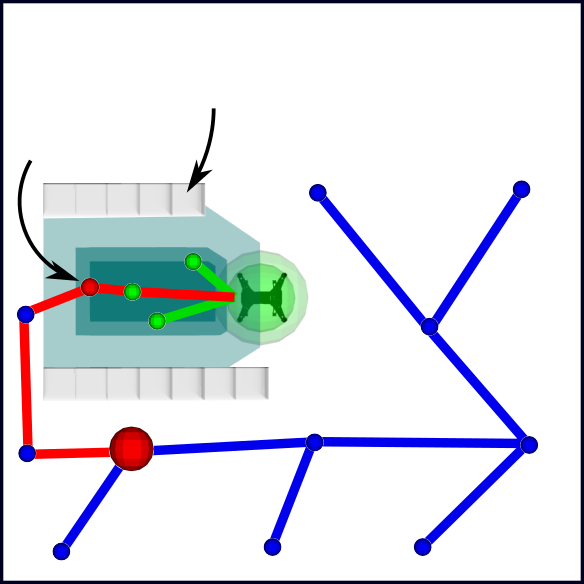

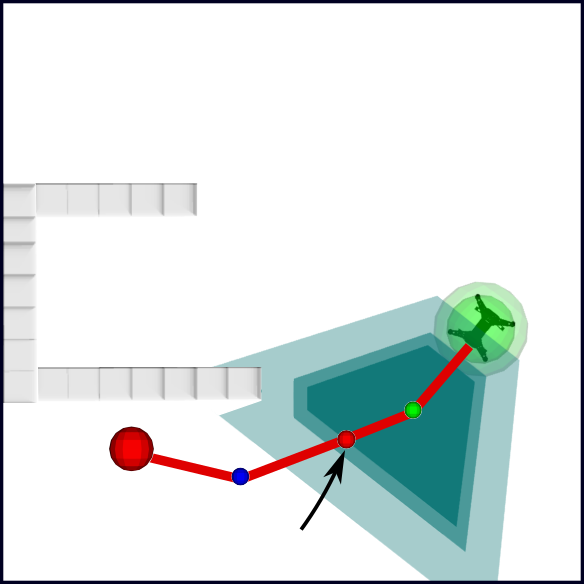

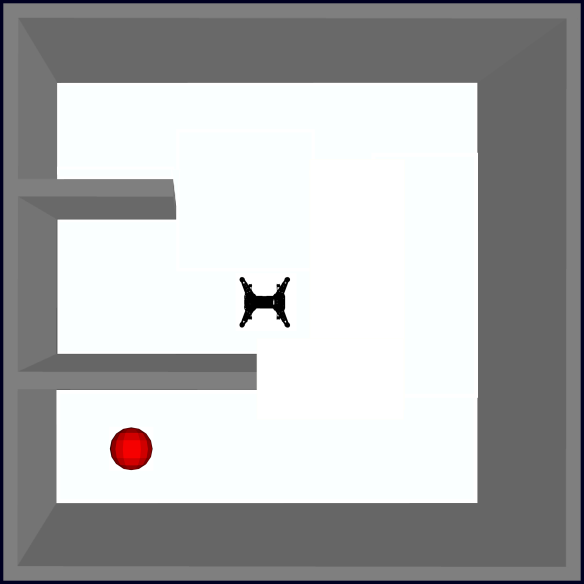

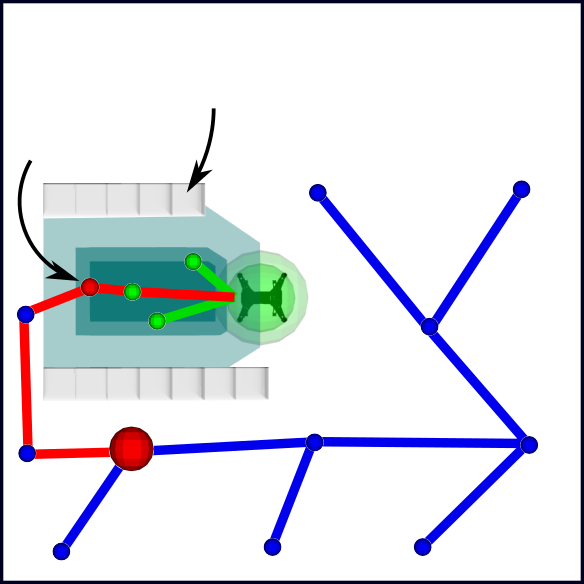

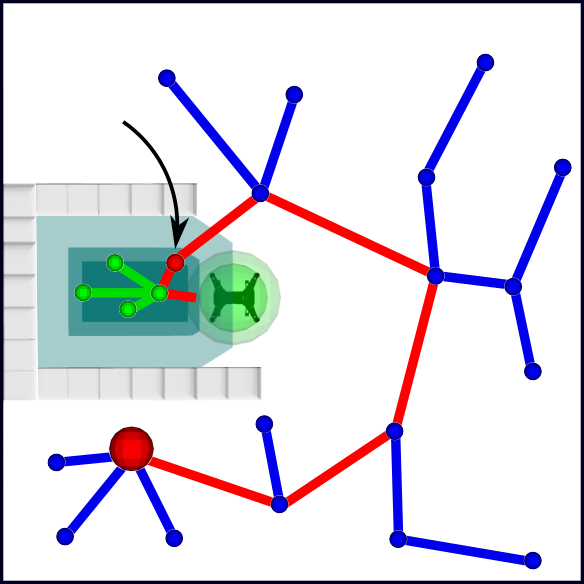

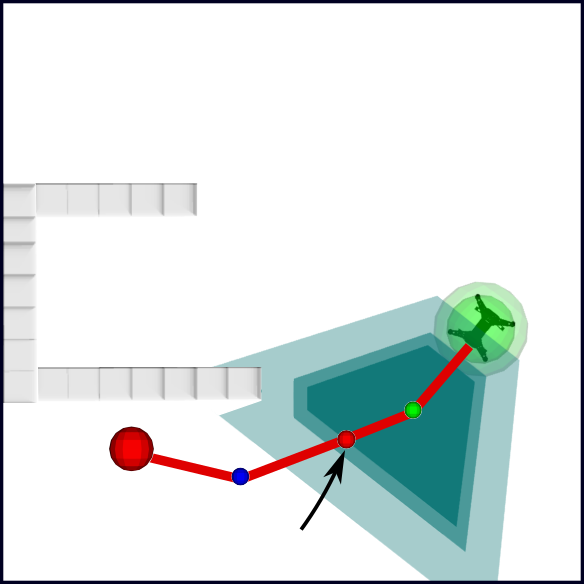

The path planning element of the framework employs a stochastic method to chart a course towards the target. Using a modified RRT-Connect algorithm, the goal is dynamically connected based on sampled safe areas, leveraging insights from fringes of previously mapped regions. This approach ensures that the UAV remains within its field of view, thus maximizing navigation confidence. The algorithm dynamically replans when new obstacles are detected or when the goal's path is compromised.

Figure 2: Illustration of the motion planning method for reaching a goal in an unknown environment.

Trajectory Generation

The final component, trajectory generation, accounts for the MAV's dynamic constraints, environmental uncertainties, and disturbances. Crucially, it does so without relying on computationally intense optimization solvers. This real-time approach enables the MAV to maintain its trajectory efficiently while ensuring the resulting path is safe and feasible given the MAV's dynamics.

Figure 3: Flow diagram of the motion planning algorithm.

Experimental Evaluation

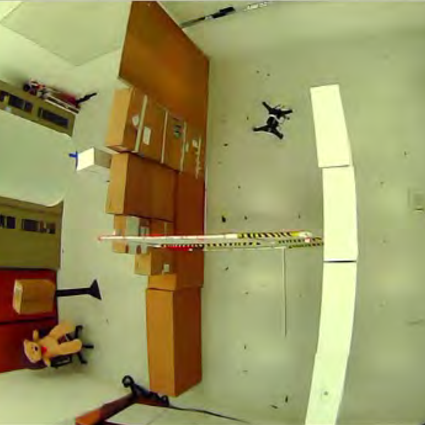

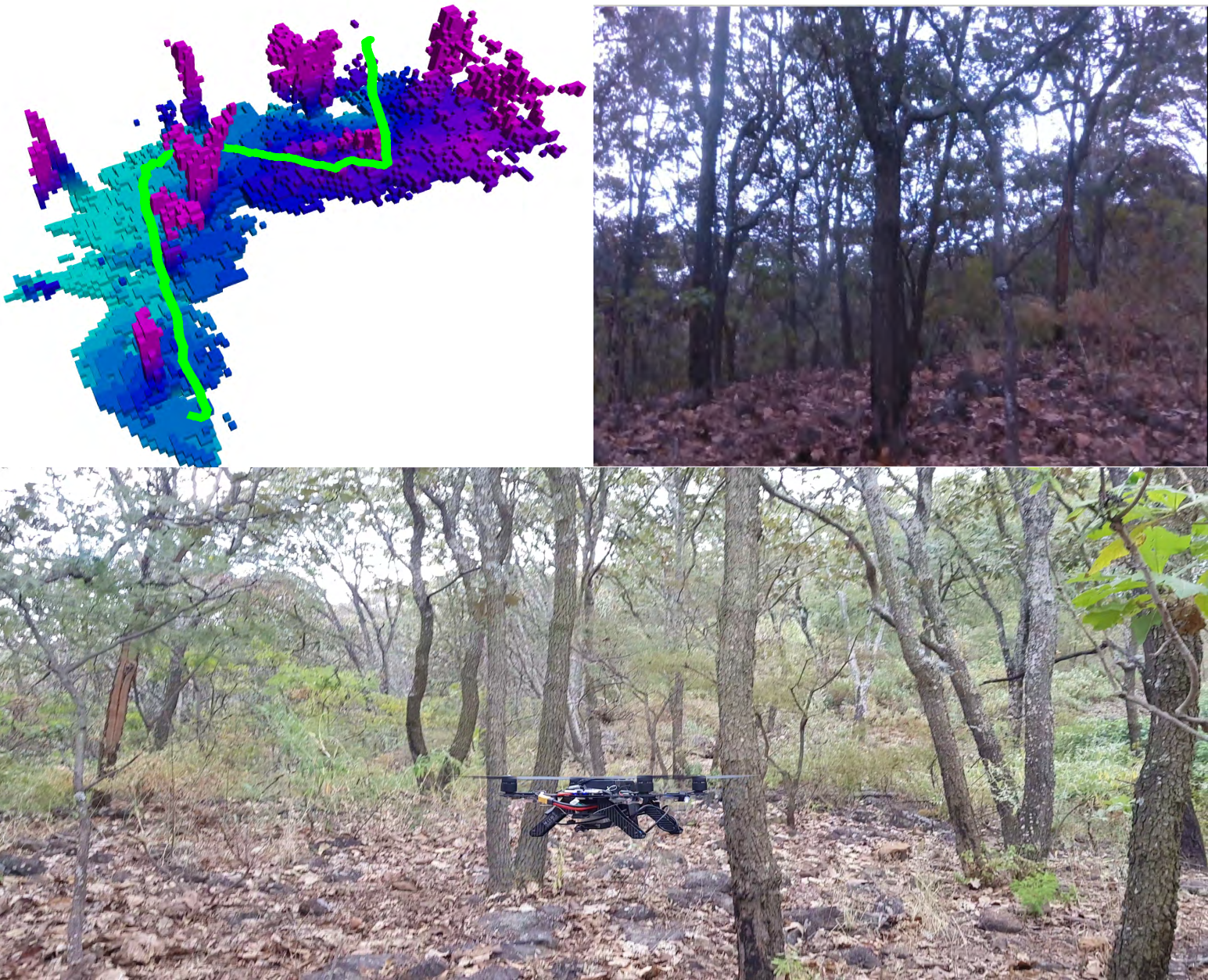

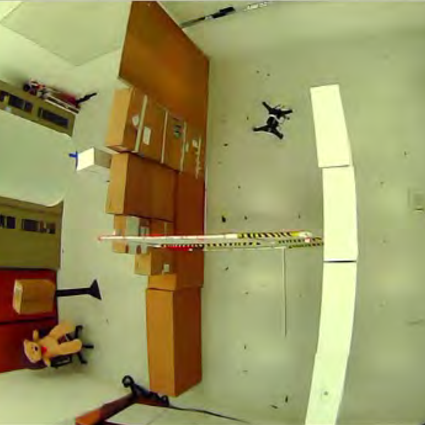

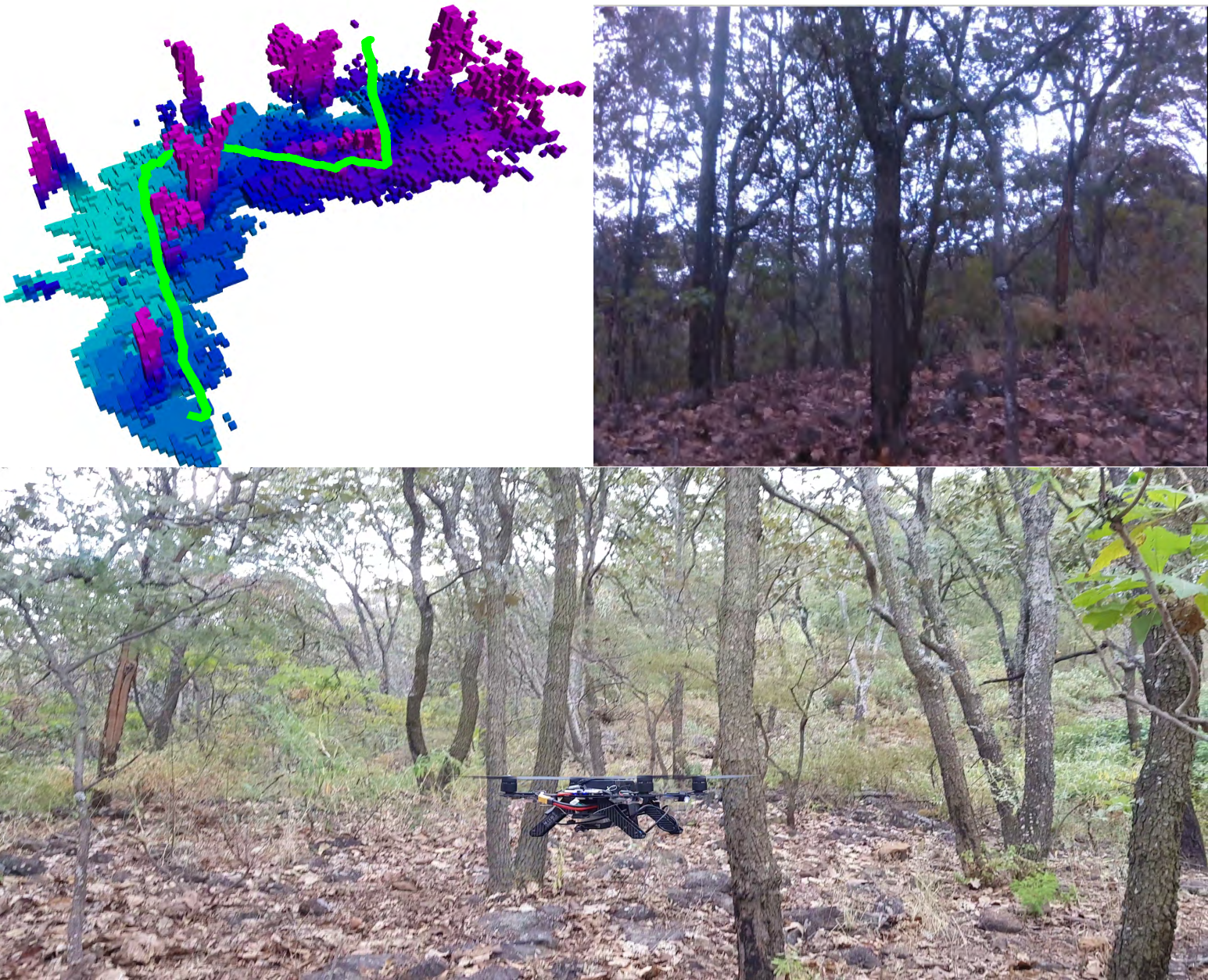

Extensive experiments were conducted in both simulation and real-world environments to validate the framework. The simulated environments included complex 3D mazes and various forest scenarios, challenging the MAV to maneuver around obstacles efficiently. Real-world tests involved dynamic and static cluttered spaces such as warehouses and outdoor forests. These experiments demonstrated the system's capability to navigate autonomously, adaptively construct its environment map, and plan safe paths in real-time using onboard computation exclusively.

Figure 4: Environments for real-world experiments: (a) 3D maze scenario. (b) Warehouse scenario. (c) Dynamic environment. (d) Forest environment.

Implications and Future Directions

The proposed framework provides a robust solution for autonomous MAV navigation in unexplored, cluttered environments. It stands out by enabling real-time operation on resource-limited platforms, thus broadening the application scope of MAVs in real-world scenarios. The paper suggests potential enhancements including dynamic obstacle prediction and the integration of trajectory tracking systems, setting the stage for future innovations in crowded environments where unpredictability is a key challenge.

Figure 5: Reaching a goal in a forest environment: The top left image shows the map representation generated together with the generated trajectory. The top right image shows the camera robot footage. The bottom image shows a picture of the robot in the forest performing the experiment.

Conclusion

This framework exemplifies where computational efficiency and robust navigation converge, showcasing a detailed approach suitable for deployment in a range of challenging scenarios. By maintaining a focus on the low resource requirements and high adaptability of MAVs, it offers a significant advancement in the field of autonomous robotic navigation, opening avenues for future research and development.