- The paper presents an unsupervised adversarial autoencoder (AAE) methodology that improves detection of both global and local accounting anomalies by imposing a probabilistic prior on the latent space.

- It leverages reconstruction loss and adversarial training to enable robust semantic partitioning of journal entries with high precision.

- Experimental results on real-world and synthetic datasets validate the approach’s effectiveness in enhancing interpretability and supporting forensic auditing.

Detection of Accounting Anomalies in the Latent Space using Adversarial Autoencoder Neural Networks

This essay analyzes the methodology, results, and implications of the study "Detection of Accounting Anomalies in the Latent Space using Adversarial Autoencoder Neural Networks" (1908.00734). The study uses Adversarial Autoencoder Neural Networks (AAEs) to identify potentially fraudulent activity within financial records, with an emphasis on interpretable results and adaptive anomaly detection.

Introduction

Fraud detection in accounting data is difficult because fraud scenarios change over time and financial data is complex. Traditional rule-based approaches generalize poorly beyond known fraud scenarios, so fraudsters can often circumvent them. Recent deep learning methods are powerful but often lack interpretability, which is crucial for auditing and forensic accounting. This research proposes employing Adversarial Autoencoder (AAE) networks, which the study shows to be effective at learning semantically meaningful representations of real-world journal entries. The approach improves the interpretability of detected anomalies while providing an unsupervised and adaptive method for anomaly assessment.

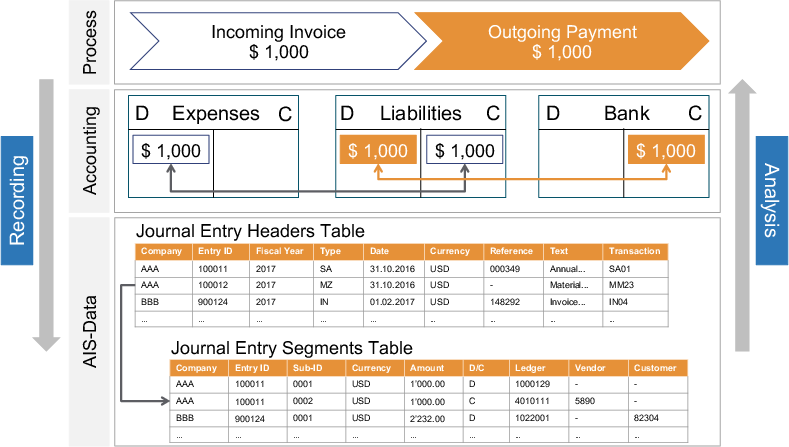

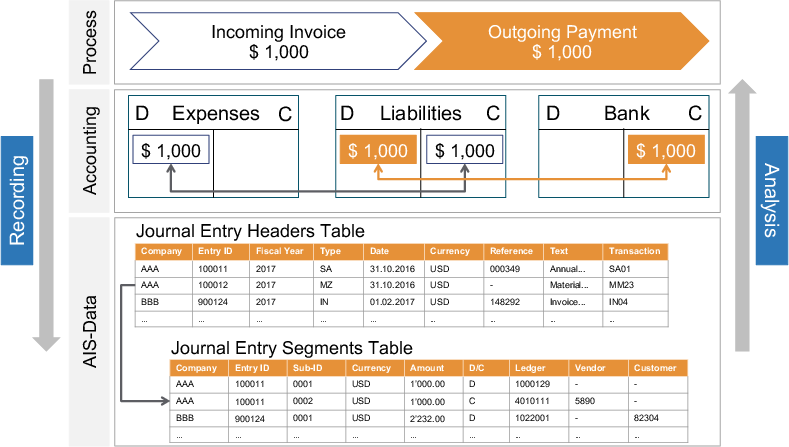

Figure 1: Hierarchical view of an Accounting Information System (AIS) that records distinct layers of abstractions.

Methodology

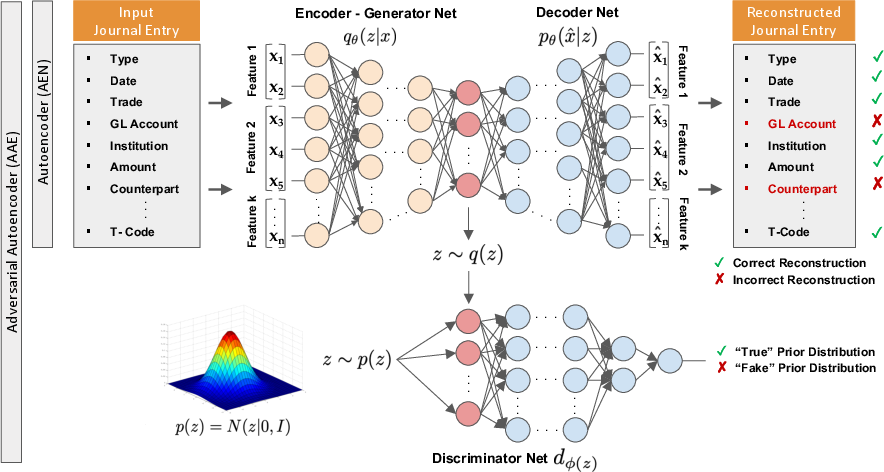

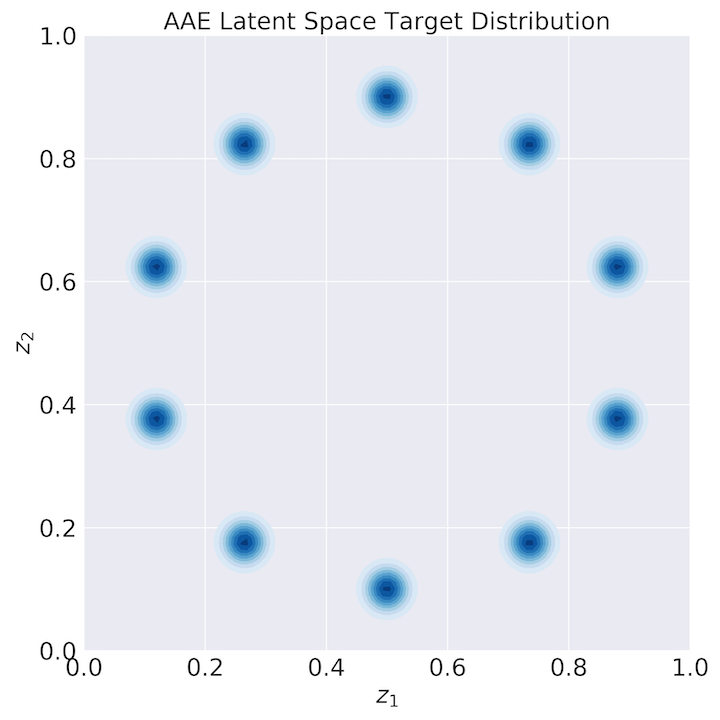

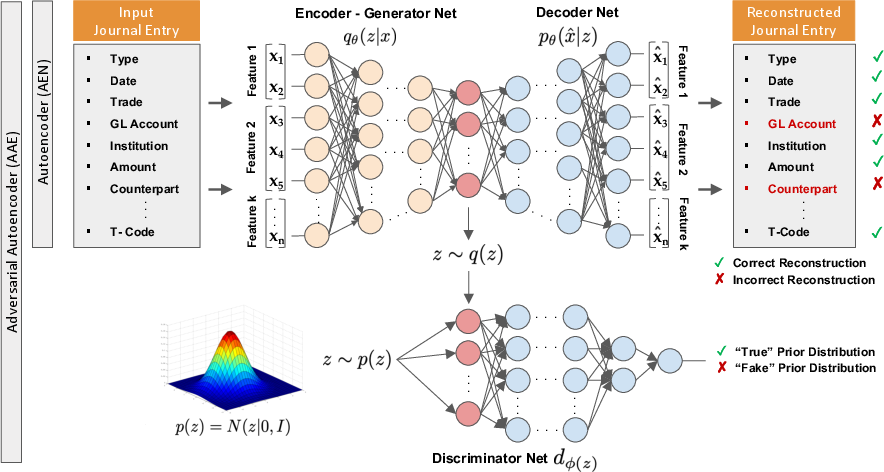

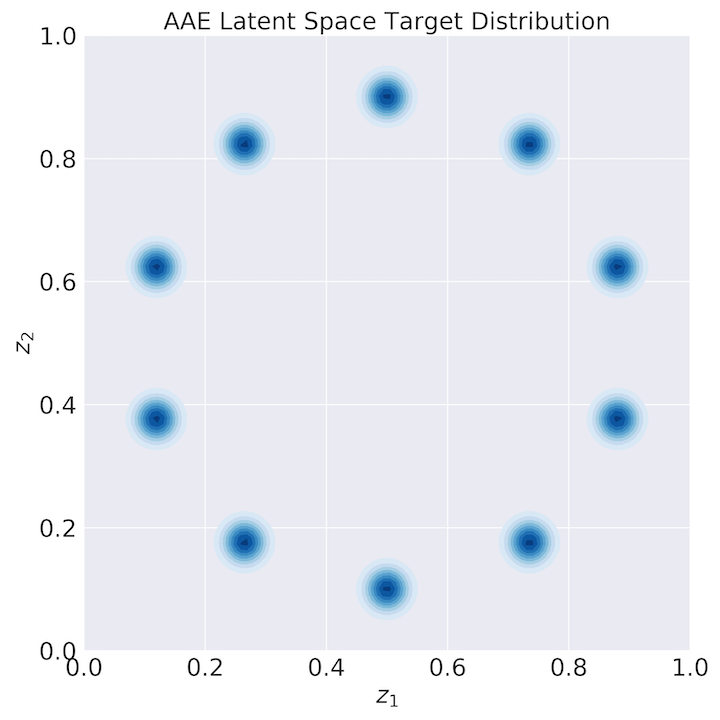

The proposed methodology uses AAEs to detect anomalies in accounting data sourced from Enterprise Resource Planning (ERP) systems. An AAE extends an autoencoder network with adversarial training, which lets it learn latent representations in which both global and local anomalies among journal entries become detectable. The architecture imposes a prior distribution on the latent space, typically a mixture of Gaussians, and combines a reconstruction loss with an adversarial loss to achieve a balanced learning objective.
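To make the idea of a mixture-of-Gaussians prior more concrete, the following minimal sketch draws samples from such a prior in a two-dimensional latent space. It is an illustration under stated assumptions, not the study's implementation: the function names `mode_centers` and `sample_prior`, the circular placement of the modes, the number of modes, and the standard deviation are all hypothetical choices. In an adversarial autoencoder, samples like these would serve as the "real" inputs to the discriminator, while the encoder's latent codes serve as the "fake" inputs.

```python
import math
import random

def mode_centers(num_modes, radius=0.8):
    """Place the mode means evenly on a circle (assumed layout, for illustration)."""
    return [
        (radius * math.cos(2 * math.pi * k / num_modes),
         radius * math.sin(2 * math.pi * k / num_modes))
        for k in range(num_modes)
    ]

def sample_prior(num_samples, centers, std=0.05, rng=None):
    """Draw samples from an equally weighted mixture of isotropic 2-D Gaussians."""
    rng = rng or random.Random()
    samples = []
    for _ in range(num_samples):
        cx, cy = rng.choice(centers)  # pick one mode uniformly at random
        samples.append((rng.gauss(cx, std), rng.gauss(cy, std)))
    return samples

# Hypothetical configuration: 5 modes, 1,000 prior samples, fixed seed.
centers = mode_centers(num_modes=5)
prior_samples = sample_prior(1000, centers, rng=random.Random(0))
```

Each mode of the prior can then be read as a candidate cluster into which the encoder is encouraged to place semantically similar journal entries.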

Figure 2: The adversarial autoencoder architecture, illustrating the components used to learn the characteristics of journal entries.

Global and Local Anomalies

- Global Anomalies: These refer to journal entries exhibiting rare attribute values. They are detected by measuring divergence from high-density regions of the prior distribution in the latent space.

- Local Anomalies: These anomalies manifest as unusual combinations of attribute values, despite individual values being common. The AAE identifies these through elevated reconstruction errors.
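The two signals described above can be sketched in a few lines of Python. This is a simplified illustration rather than the study's exact formulation: it assumes that divergence from the prior is measured as the Euclidean distance to the closest mode mean, and that reconstruction error is a mean binary cross-entropy over a binary-encoded entry. The function names and example values are hypothetical.

```python
import math

def mode_divergence(z, centers):
    """Euclidean distance from latent point z to the closest prior mode mean."""
    return min(math.dist(z, c) for c in centers)

def reconstruction_error(x, x_hat, eps=1e-12):
    """Mean binary cross-entropy between a binary entry x and its reconstruction."""
    total = 0.0
    for xi, pi in zip(x, x_hat):
        pi = min(max(pi, eps), 1.0 - eps)  # clamp to avoid log(0)
        total -= xi * math.log(pi) + (1 - xi) * math.log(1.0 - pi)
    return total / len(x)

# Hypothetical prior modes and latent codes.
centers = [(0.0, 0.8), (0.8, 0.0)]
z_regular = (0.02, 0.79)   # close to a mode: low divergence
z_global = (-0.5, -0.5)    # far from every mode: candidate global anomaly

# Hypothetical binary-encoded entry with a good and a poor reconstruction.
x = [1, 0, 0, 1]
x_hat_good = [0.95, 0.02, 0.03, 0.90]
x_hat_poor = [0.40, 0.50, 0.10, 0.30]  # candidate local anomaly

md_regular = mode_divergence(z_regular, centers)
md_global = mode_divergence(z_global, centers)
re_good = reconstruction_error(x, x_hat_good)
re_poor = reconstruction_error(x, x_hat_poor)
```

In this toy setup the entry far from all modes receives the larger divergence, and the poorly reconstructed entry receives the larger reconstruction error, mirroring the global and local cases respectively.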

Experimental Setup

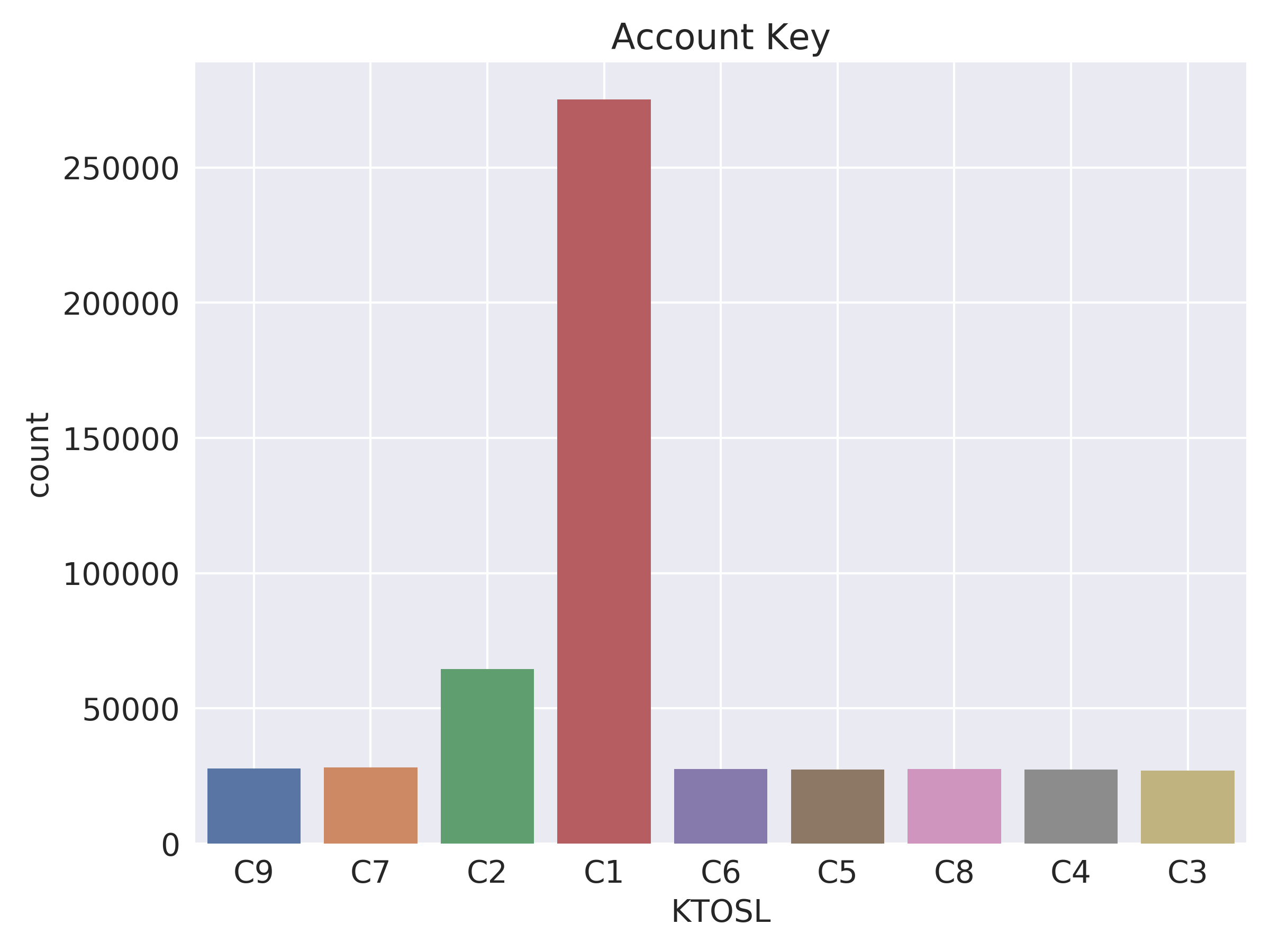

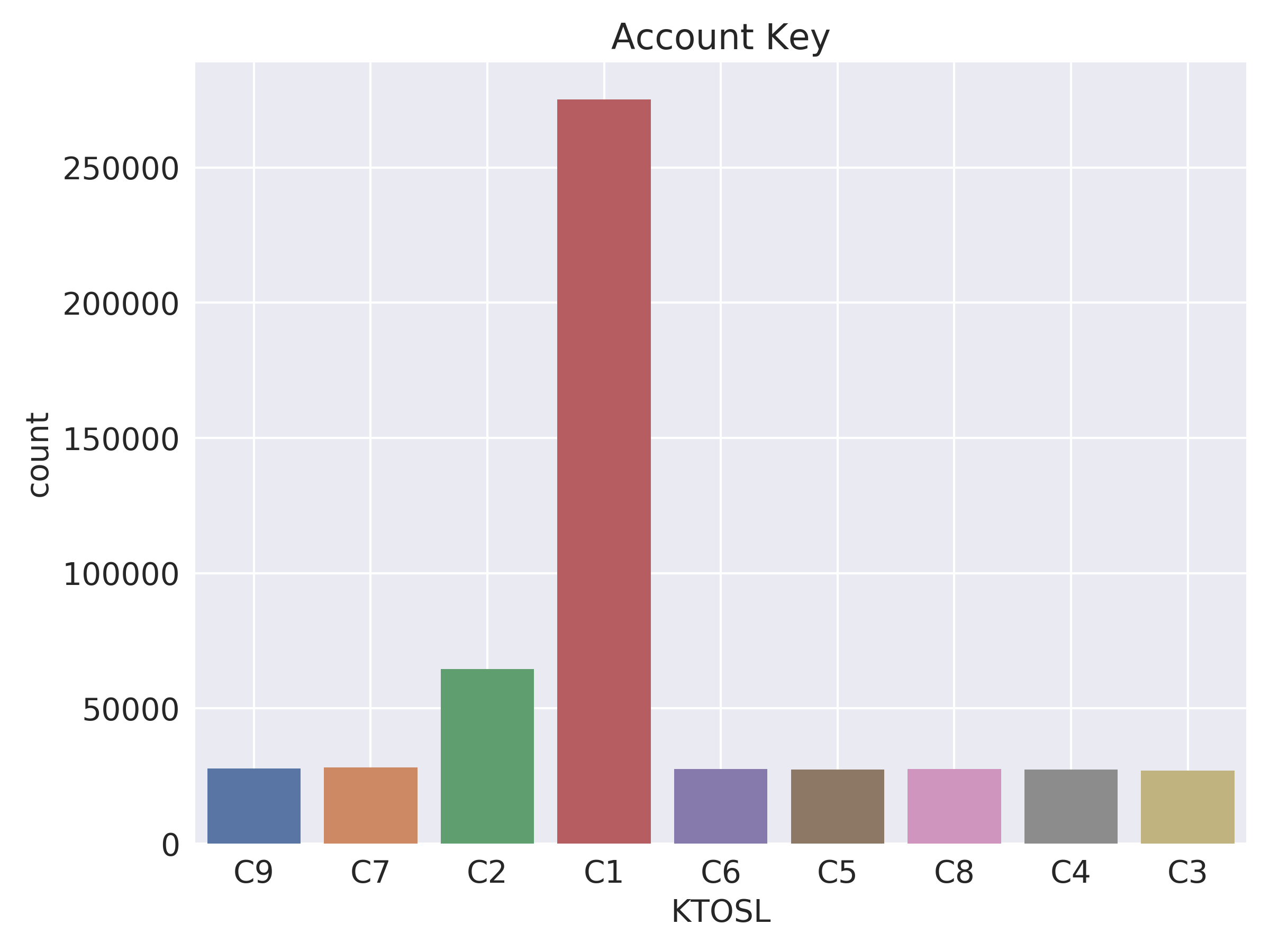

The research evaluates anomaly detection performance on two datasets of journal entries: one real-world and one synthetic. Key components of the experimental setup include pre-processing categorical attributes into a binary (one-hot) representation and injecting synthetic anomalies to test detection capabilities. The AAE model is trained for a maximum of 10,000 epochs, with early stopping applied once the reconstruction loss converges.
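The pre-processing and stopping criterion described above might look roughly as follows. This is a sketch under assumptions: the attribute names (`account`, `doc_type`) and their values are invented for illustration, and the patience-based convergence test (`patience`, `min_delta`) is one common way to implement early stopping, not necessarily the rule used in the study.

```python
def one_hot_encode(entries, attributes):
    """Encode categorical attributes as one flat 0/1 vector per journal entry."""
    # Fix the column order using the sorted set of observed values per attribute.
    vocab = {a: sorted({e[a] for e in entries}) for a in attributes}
    encoded = []
    for e in entries:
        row = []
        for a in attributes:
            row.extend(1 if e[a] == v else 0 for v in vocab[a])
        encoded.append(row)
    return encoded, vocab

def should_stop(loss_history, patience=3, min_delta=1e-4):
    """True once the loss has not improved by min_delta over the last `patience` epochs."""
    if len(loss_history) <= patience:
        return False
    best_before = min(loss_history[:-patience])
    return min(loss_history[-patience:]) > best_before - min_delta

# Hypothetical journal entries with two categorical attributes.
entries = [
    {"account": "1000", "doc_type": "SA"},
    {"account": "2000", "doc_type": "SA"},
    {"account": "1000", "doc_type": "KR"},
]
encoded, vocab = one_hot_encode(entries, ["account", "doc_type"])
```

A training loop would append the reconstruction loss of each epoch to a list and break out as soon as `should_stop` returns `True` or the epoch limit is reached.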

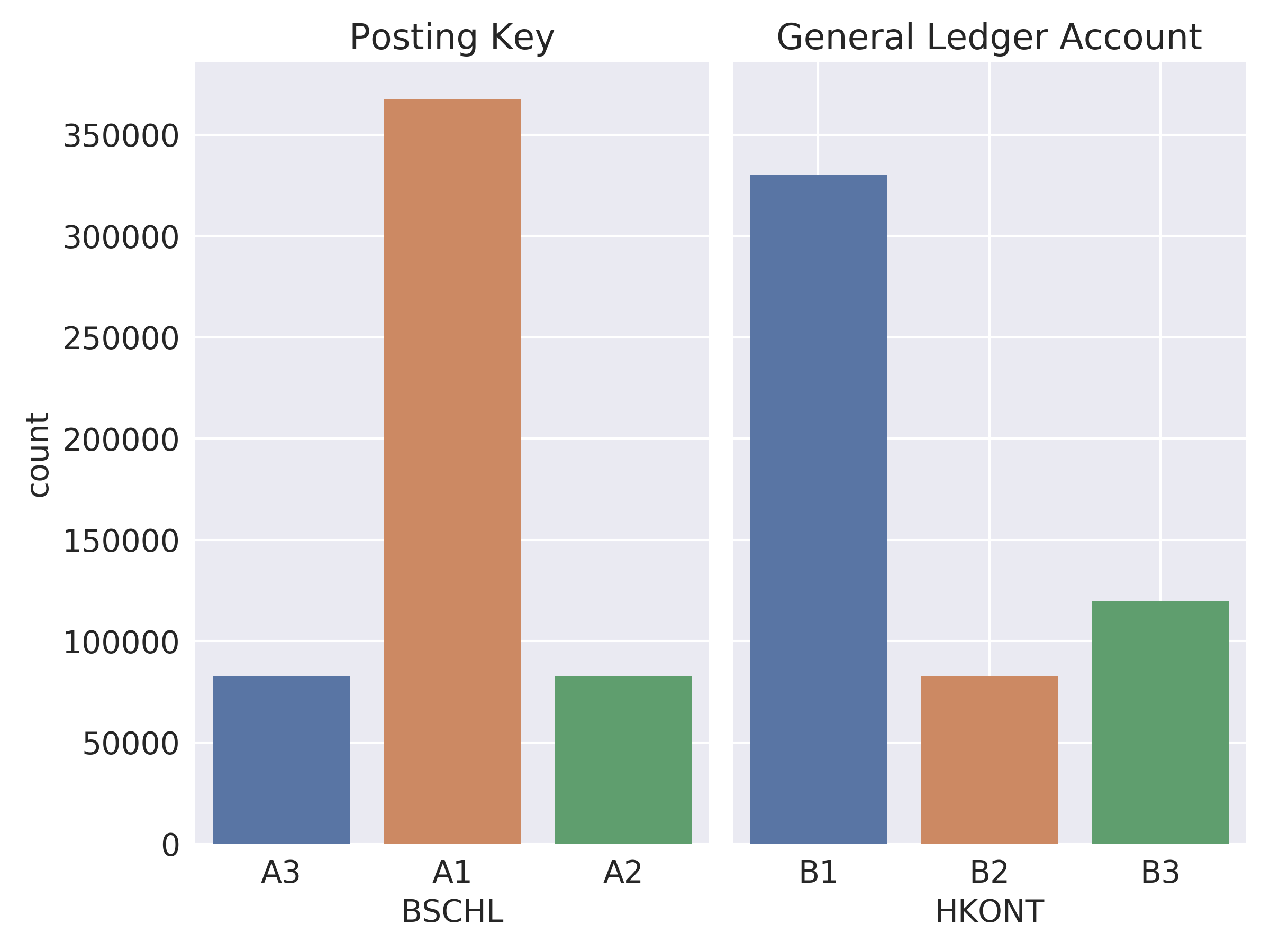

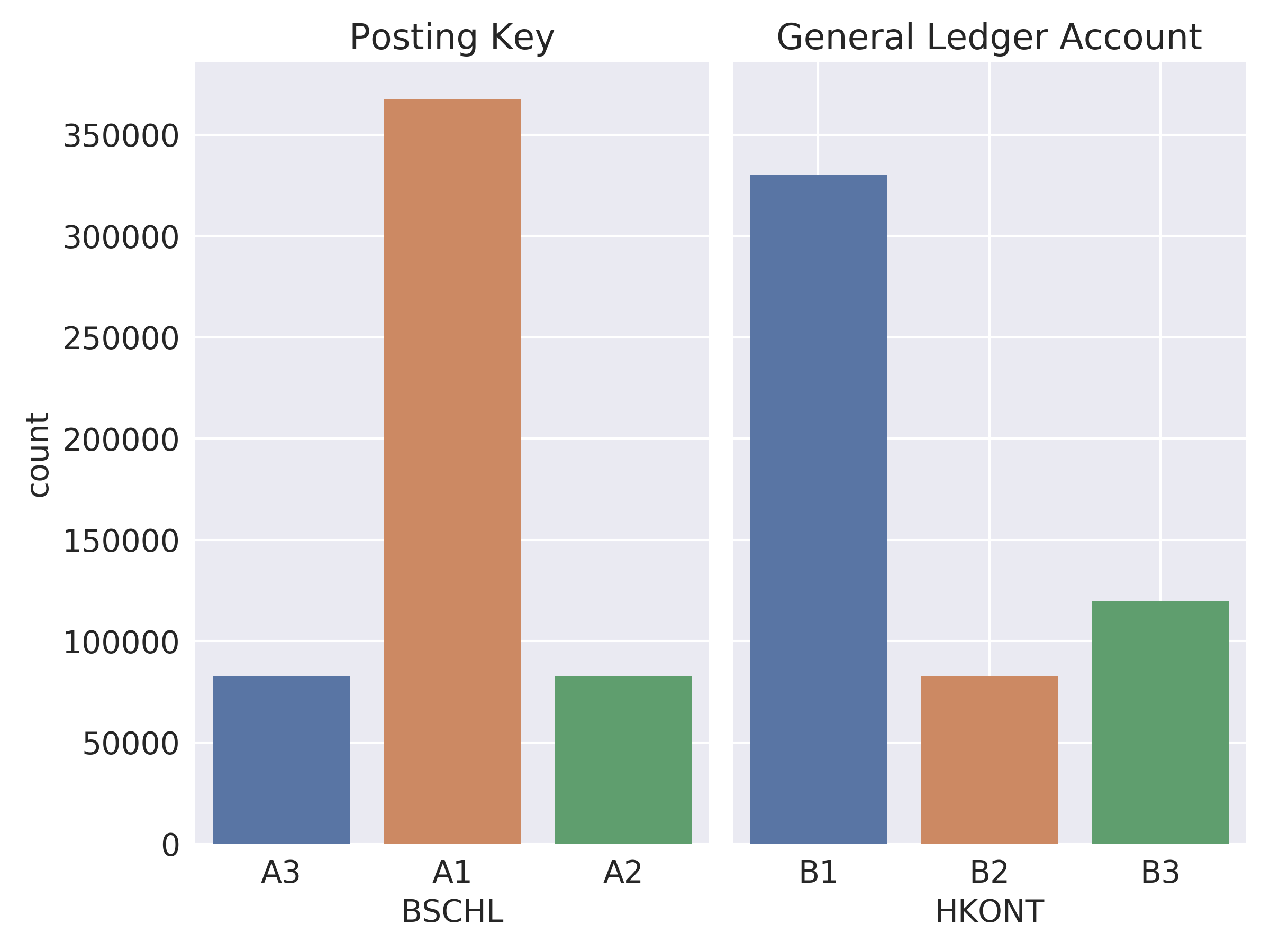

Figure 3: Exemplary distribution of crucial journal entry attributes within Dataset B.

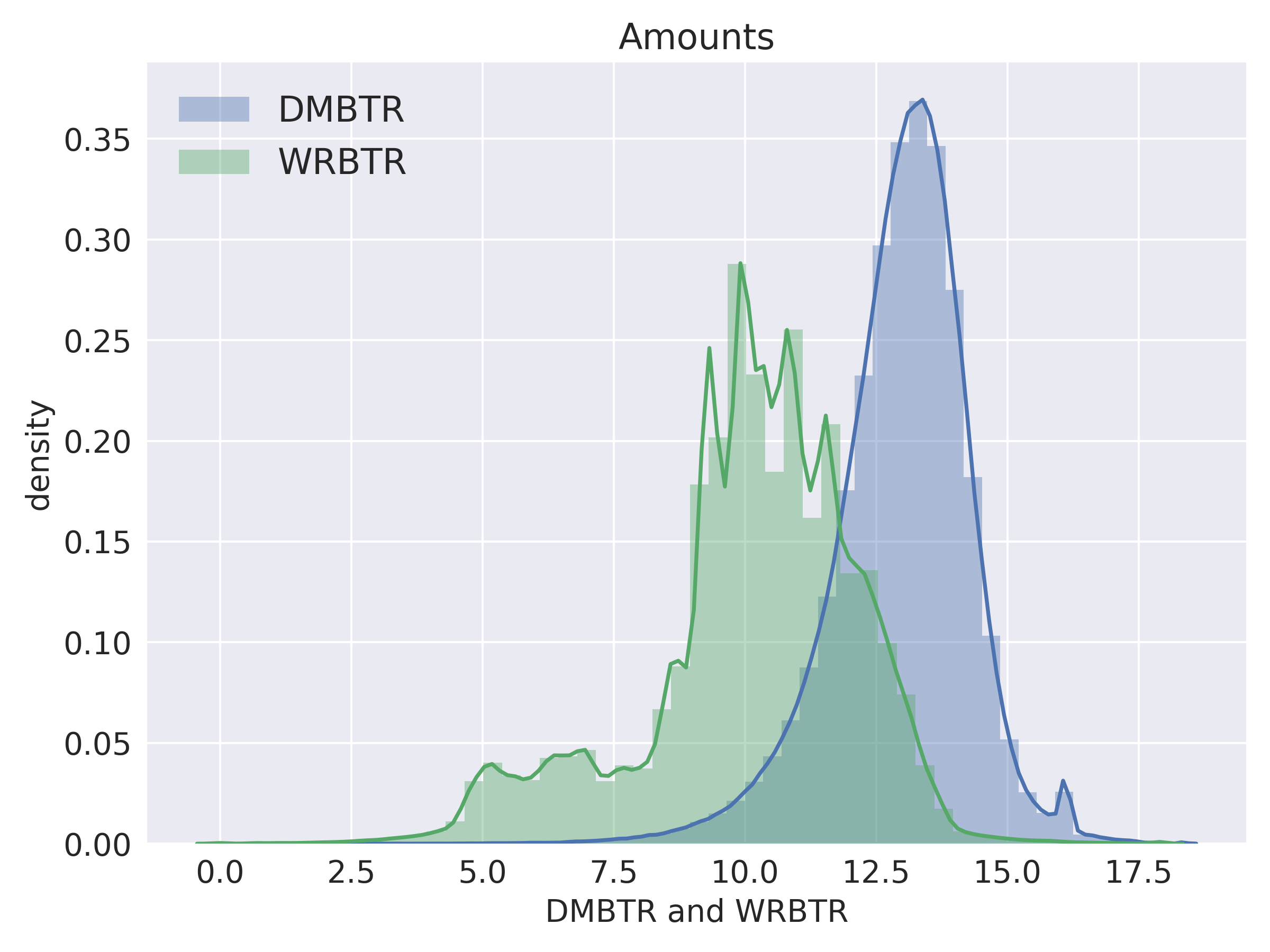

Results and Analysis

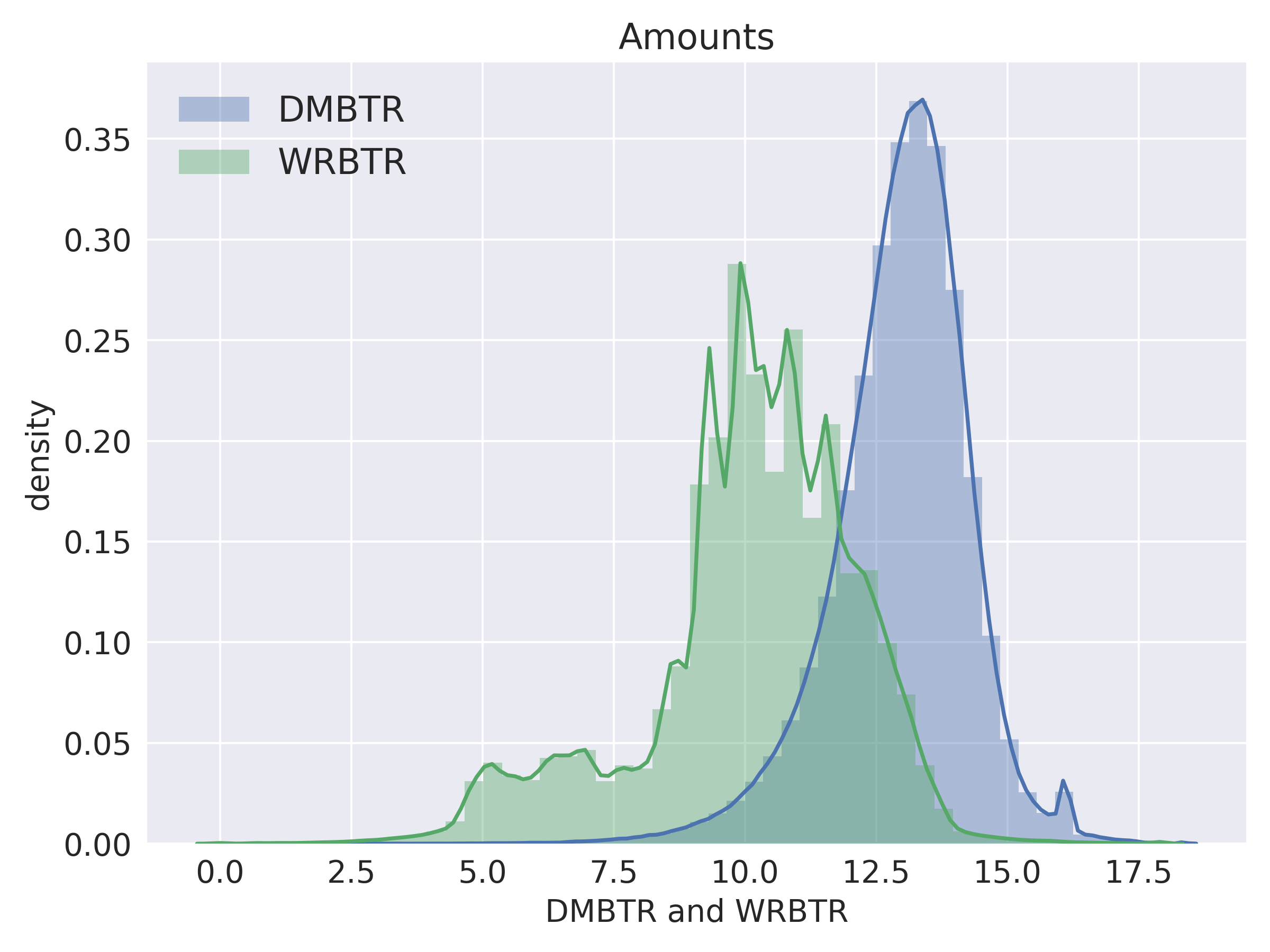

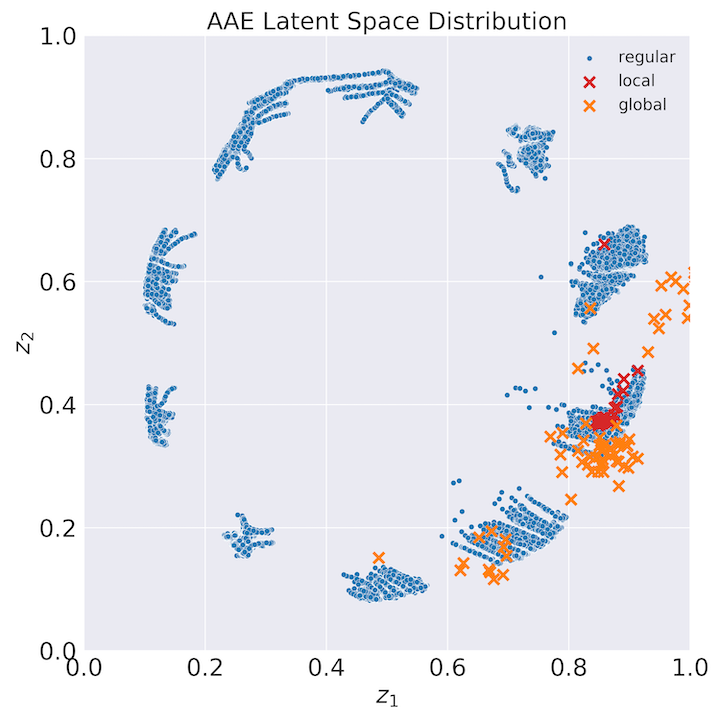

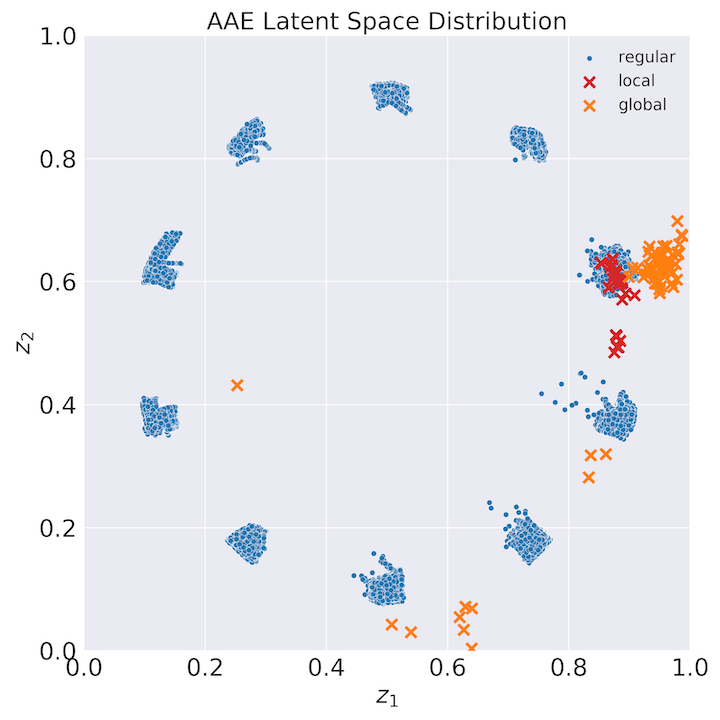

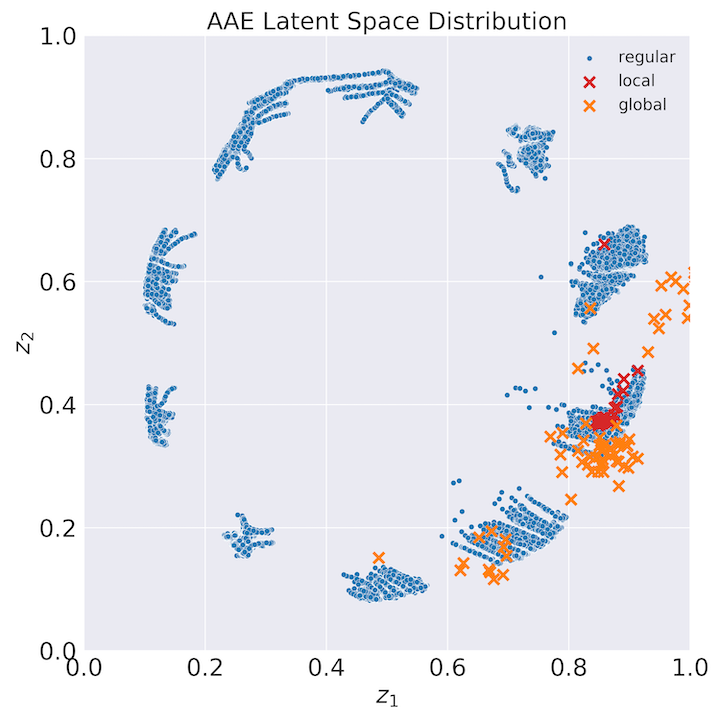

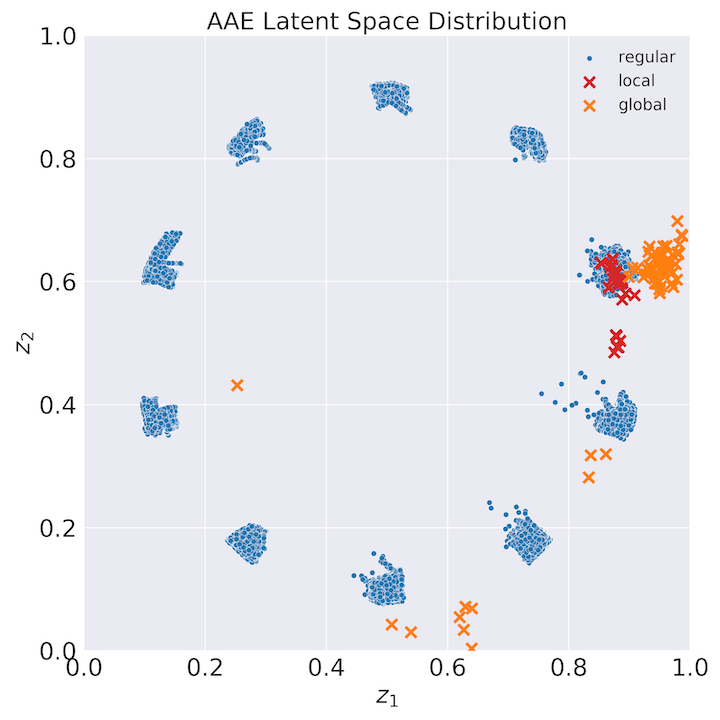

The trained AAE models demonstrated the capability to effectively partition journal entries into semantically distinct groups, reflecting underlying accounting processes. Evaluation metrics indicate that the proposed anomaly score successfully distinguishes between regular entries and both types of anomalies with notable accuracy.

Figure 4: AAE latent space distribution showing the progression from prior distribution definition to a trained posterior distribution.

- Semantic Partitioning: Detailed examination of latent space representations revealed high semantic similarity within partitions, confirming meaningful differentiation consistent with real-world accounting practices.

- Detection Efficacy: Anomaly scores, leveraging both reconstruction and mode divergence measurements, effectively categorized and identified most injected anomalies, highlighting the model's sensitivity to unusual data patterns.
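One way to combine the two measurements into a single anomaly score is a weighted sum of normalized values. The sketch below assumes min-max normalization over the whole population and a hypothetical weighting parameter `alpha`; the study's exact normalization and weighting may differ, and the input values are invented for illustration.

```python
def min_max(values):
    """Scale values linearly to the range [0, 1]; constant input maps to zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def anomaly_scores(recon_errors, mode_divs, alpha=0.5):
    """Weighted sum of normalized reconstruction error and mode divergence."""
    re_n = min_max(recon_errors)
    md_n = min_max(mode_divs)
    return [alpha * r + (1 - alpha) * m for r, m in zip(re_n, md_n)]

# Hypothetical measurements for four journal entries:
# index 2 has a high reconstruction error (local anomaly candidate),
# index 3 has a high mode divergence (global anomaly candidate).
recon_errors = [0.02, 0.03, 0.90, 0.04]
mode_divs = [0.05, 0.04, 0.06, 0.70]

scores = anomaly_scores(recon_errors, mode_divs, alpha=0.5)
ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
```

With these inputs, the two entries that stand out on either measurement rank above the two regular entries. Shifting `alpha` toward 1 emphasizes local anomalies, and shifting it toward 0 emphasizes global ones.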

Implications and Future Work

The implications of this study are significant for the domain of forensic accounting and auditing. The unsupervised nature of the proposed approach enables the detection of previously unknown fraud patterns without reliance on labeled data. Furthermore, the improved interpretability of results empowers auditors and forensic accountants to make informed decisions, thus enhancing report defensibility.

The research opens avenues for further exploration in automating semantic disentanglement, which could save considerable time and effort in large-scale financial auditing. Future work could also investigate the applicability of AAEs to other datasets and explore parameter optimization to refine anomaly detection capabilities.

Conclusion

In conclusion, the application of Adversarial Autoencoders to detect accounting anomalies presents a promising advancement for the field of accounting fraud detection. By achieving a balance between anomaly detection capability and result interpretability, this strategy not only addresses technical limitations in current methodologies but also enhances practical auditing frameworks. Additionally, the study suggests continued research into deep learning methodologies for forensic accounting, targeting improved accuracy and efficiency in anomaly detection.