- The paper introduces DGCM-Net, a dense geometrical correspondence matching network that leverages incremental experience for robotic grasping.

- It employs metric learning and 3D-3D correspondences to accurately transfer grasp configurations between similar object geometries.

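- Experiments show that grasp pose accuracy improves as experience accumulates in offline tests, and that on a real robot DGCM-Net reaches success rates comparable to the GPD and HAF baselines and improves on them when combined with GPD predictions.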

DGCM-Net: Dense Geometrical Correspondence Matching Network for Incremental Experience-based Robotic Grasping

Introduction

This paper introduces DGCM-Net, a novel method for improving robotic grasping through incremental learning from past experience. The system stores successful grasp attempts and uses them to guide future grasps, so that the robot's grasp success rate increases as experience accumulates. Its core component is the dense geometrical correspondence matching network (DGCM-Net), which uses metric learning to place similar geometries close together in feature space. This makes it possible to retrieve relevant experiences when the robot encounters a novel object.

Methodology

Incremental Grasp Learning Framework

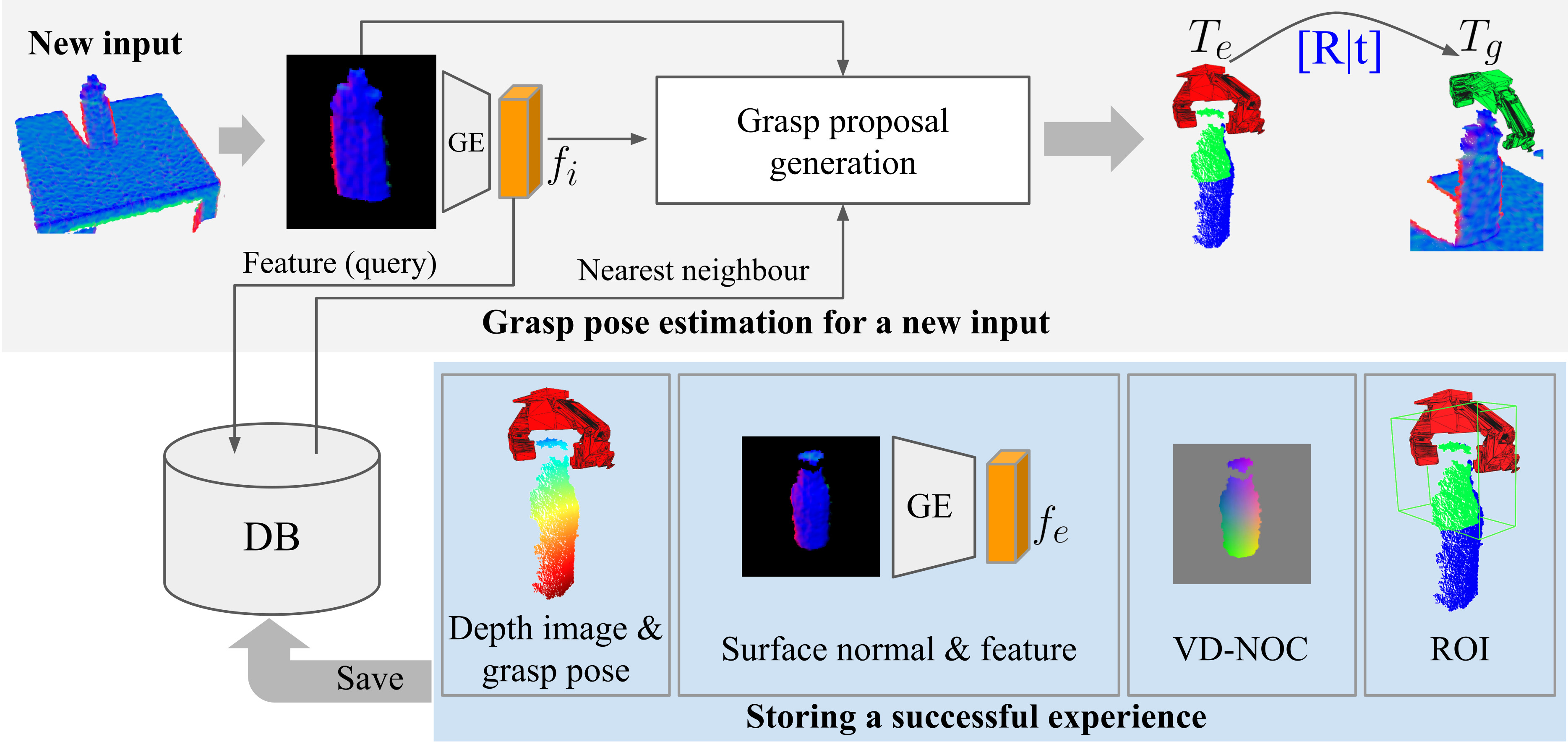

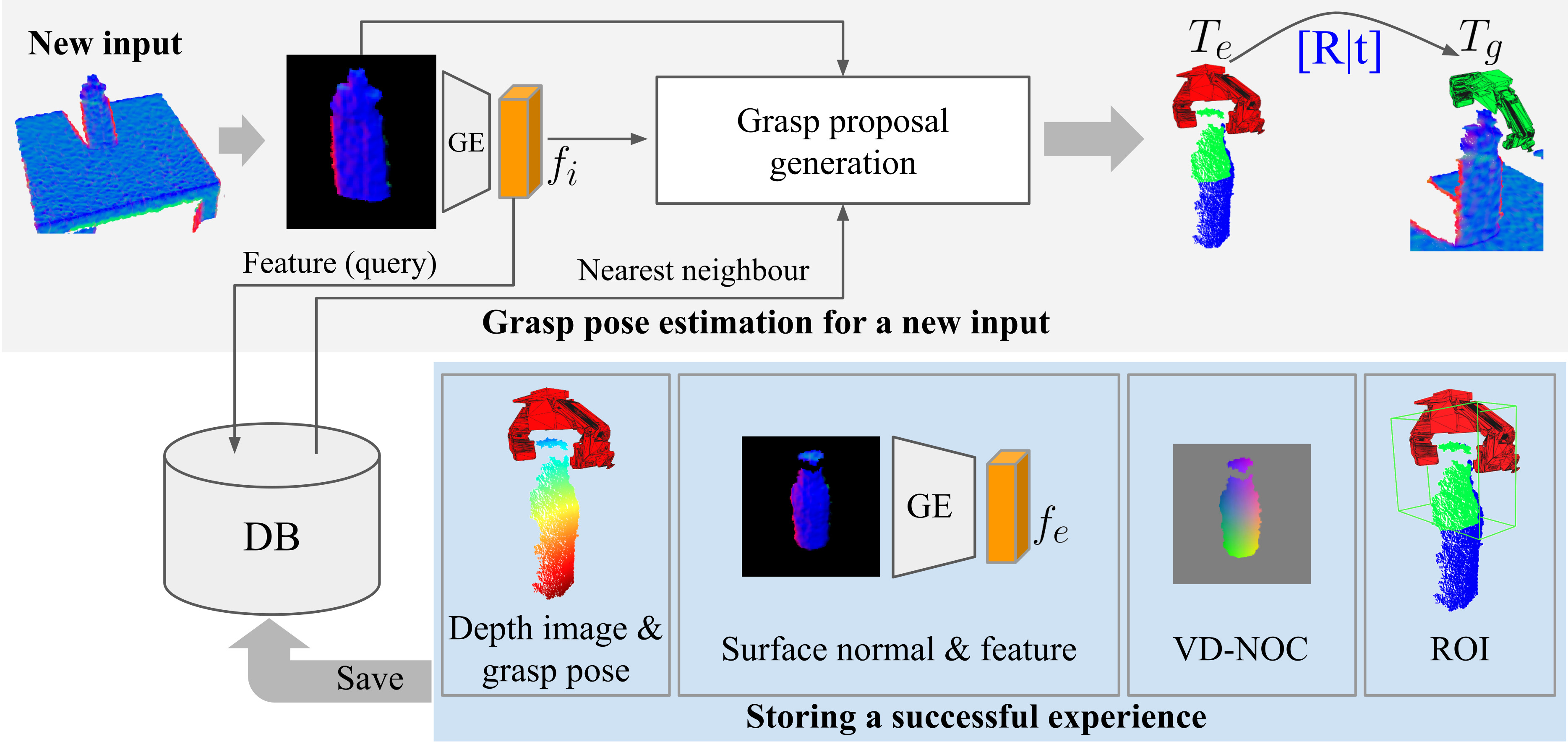

The framework centers on DGCM-Net, which processes depth images to find successful grasps among prior experiences stored in a database and transfer them to the current object. It combines geometry encoding, VD-NOC (view-dependent normalized object coordinate) encoding, and VD-NOC decoding to propose grasp configurations for unseen objects based on previously successful grasps. Grasping proceeds in two steps: a nearest-neighbor search retrieves the most similar stored experience, and the stored grasp pose is then transformed onto the new object through 3D-3D correspondences reconstructed from the predicted VD-NOC values.

Figure 1: Overview of storing and retrieving experience with the incremental grasp learning framework.
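
To make the retrieve-then-transfer loop concrete, here is a minimal pure-Python sketch. It assumes each stored experience holds a global geometry embedding, per-point VD-NOC values with their 3D points, and a grasp position; the names `Experience`, `ExperienceDatabase`, `match_by_vdnoc` and `transfer_grasp` are hypothetical and not taken from DGCM-Net's code. Correspondences are formed by pairing each query point with the stored point that has the closest VD-NOC value. For brevity the grasp is moved by the mean displacement only; transferring a full grasp pose would instead require fitting a rigid rotation and translation to the correspondences (for example with Kabsch/Umeyama, possibly inside RANSAC).

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float, float]


@dataclass
class Experience:
    """One stored successful grasp (hypothetical record layout)."""
    embedding: Tuple[float, ...]  # global geometry feature of the stored depth view
    vdnoc: List[Point]            # VD-NOC value predicted for each stored point
    points: List[Point]           # 3D point (camera frame) belonging to each VD-NOC value
    grasp_point: Point            # grasp position recorded with this view


class ExperienceDatabase:
    """Hypothetical store that grows incrementally: only successful grasps are added."""

    def __init__(self) -> None:
        self.entries: List[Experience] = []

    def add(self, experience: Experience) -> None:
        self.entries.append(experience)

    def nearest(self, query_embedding: Tuple[float, ...]) -> Experience:
        # Nearest neighbour in feature space using Euclidean distance (linear scan).
        return min(self.entries,
                   key=lambda e: math.dist(e.embedding, query_embedding))


def match_by_vdnoc(query_vdnoc: List[Point], query_points: List[Point],
                   stored: Experience) -> List[Tuple[Point, Point]]:
    """Pair each query point with the stored point whose VD-NOC value is closest."""
    pairs = []
    for code, point in zip(query_vdnoc, query_points):
        j = min(range(len(stored.vdnoc)),
                key=lambda i: math.dist(stored.vdnoc[i], code))
        pairs.append((stored.points[j], point))  # (stored point, query point)
    return pairs


def transfer_grasp(pairs: List[Tuple[Point, Point]], grasp: Point) -> Point:
    """Translation-only simplification: shift the stored grasp by the mean
    displacement between corresponding points (no rotation is estimated)."""
    n = len(pairs)
    shift = [sum(dst[k] - src[k] for src, dst in pairs) / n for k in range(3)]
    return tuple(grasp[k] + shift[k] for k in range(3))


db = ExperienceDatabase()
db.add(Experience(embedding=(0.0, 0.0),
                  vdnoc=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
                  points=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
                  grasp_point=(0.5, 0.0, 0.0)))
db.add(Experience(embedding=(5.0, 5.0), vdnoc=[(0.5, 0.5, 0.5)],
                  points=[(3.0, 3.0, 3.0)], grasp_point=(3.0, 3.0, 3.0)))
best = db.nearest((0.1, -0.1))
pairs = match_by_vdnoc([(0.9, 0.0, 0.0), (0.1, 0.0, 0.0)],
                       [(2.0, 1.0, 0.0), (1.0, 1.0, 0.0)], best)
print(transfer_grasp(pairs, best.grasp_point))  # (1.5, 1.0, 0.0)
```

In a real system the embeddings and VD-NOC values would come from the trained network, and a spatial index such as a k-d tree would replace the linear scans.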

Dense Geometrical Correspondence Matching in DGCM-Net

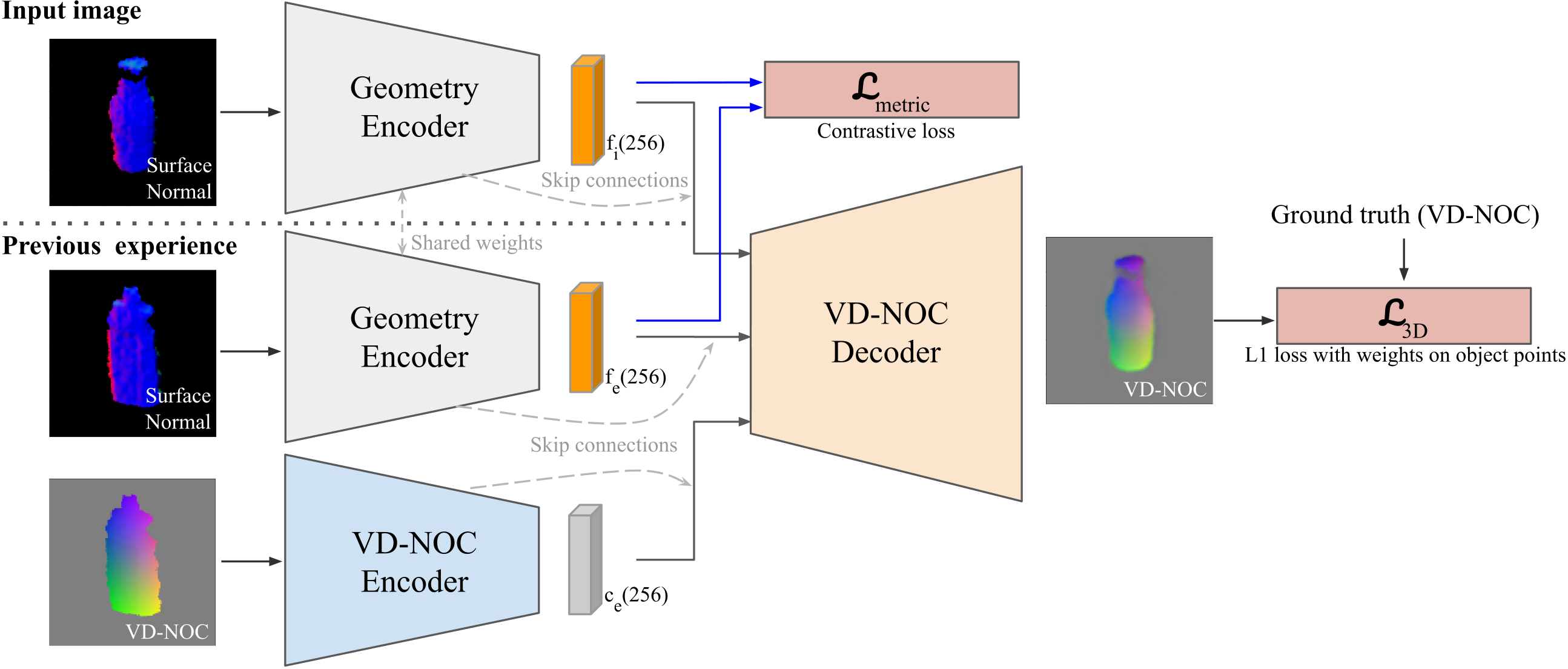

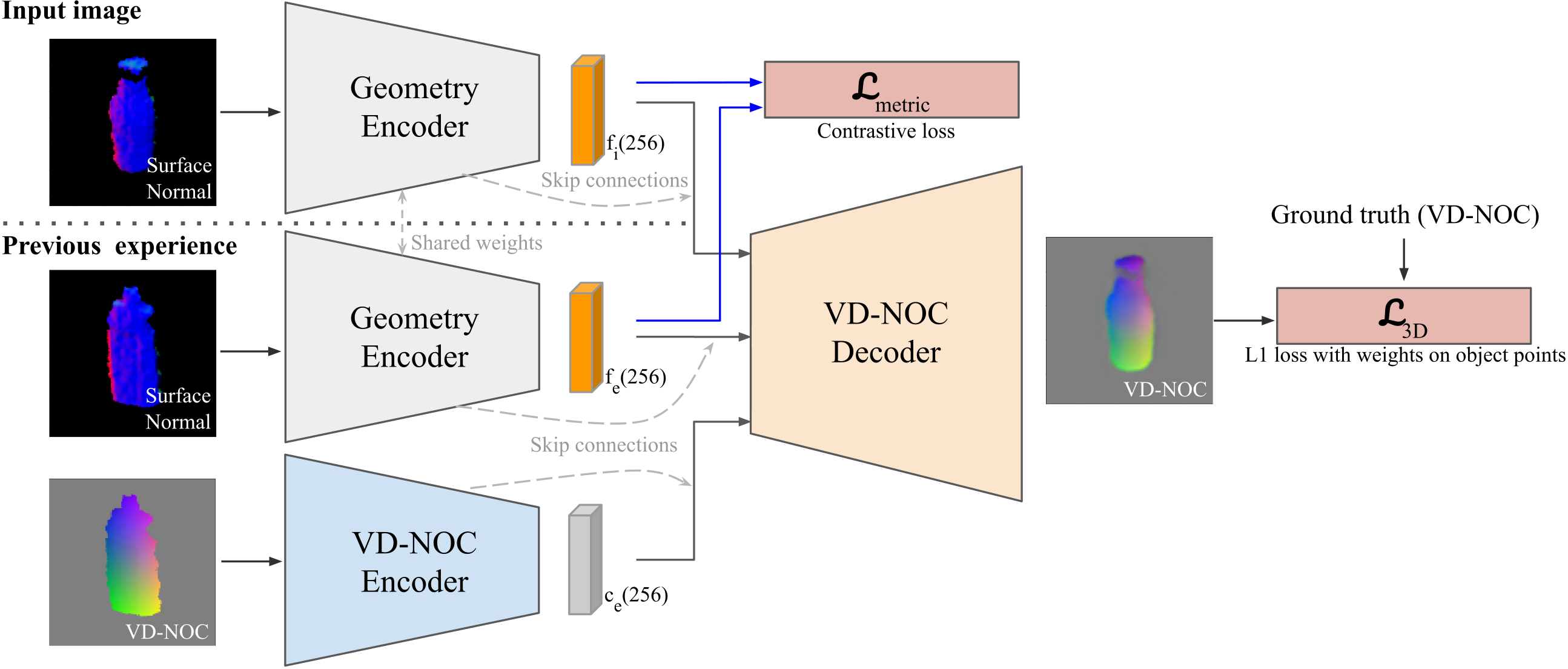

DGCM-Net consists of a geometry encoder, a VD-NOC encoder, and a decoder that reconstructs dense geometrical correspondences from depth images. The geometry encoder is trained with metric learning so that distances between its features reflect geometric similarity, which makes experience retrieval robust. The predicted VD-NOC values assign similar coordinates to corresponding points on similar geometries, so matching them yields 3D-3D correspondences between different objects, through which a stored grasp can be transformed precisely.

Figure 2: Overview of the DGCM-Net architecture and training objectives.
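
The summary does not spell out the metric-learning objective. As an illustration only, the sketch below uses a standard triplet margin loss, which is an assumption rather than the paper's stated loss: an anchor embedding is pulled toward a positive (similar geometry) and pushed at least `margin` farther away from a negative (dissimilar geometry). The function names and the default margin are hypothetical, and a real training setup would compute this on network outputs inside an autodiff framework.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def triplet_margin_loss(anchor: Vector, positive: Vector, negative: Vector,
                        margin: float = 1.0) -> float:
    """Assumed triplet hinge loss: zero once the negative is at least `margin`
    farther from the anchor than the positive is."""
    d_pos = math.dist(anchor, positive)  # distance to a similar geometry
    d_neg = math.dist(anchor, negative)  # distance to a dissimilar geometry
    return max(0.0, d_pos - d_neg + margin)


def batch_loss(triplets, margin: float = 1.0) -> float:
    """Mean triplet loss over (anchor, positive, negative) embedding triplets."""
    losses = [triplet_margin_loss(a, p, n, margin) for a, p, n in triplets]
    return sum(losses) / len(losses)


print(triplet_margin_loss((0.0, 0.0), (1.0, 0.0), (3.0, 0.0)))  # 0.0
print(triplet_margin_loss((0.0, 0.0), (1.0, 0.0), (1.5, 0.0)))  # 0.5
```

The first triplet already satisfies the margin, so it contributes no gradient; the second does not, so training would push the negative farther away.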

Experimental Evaluation

Offline Experiments

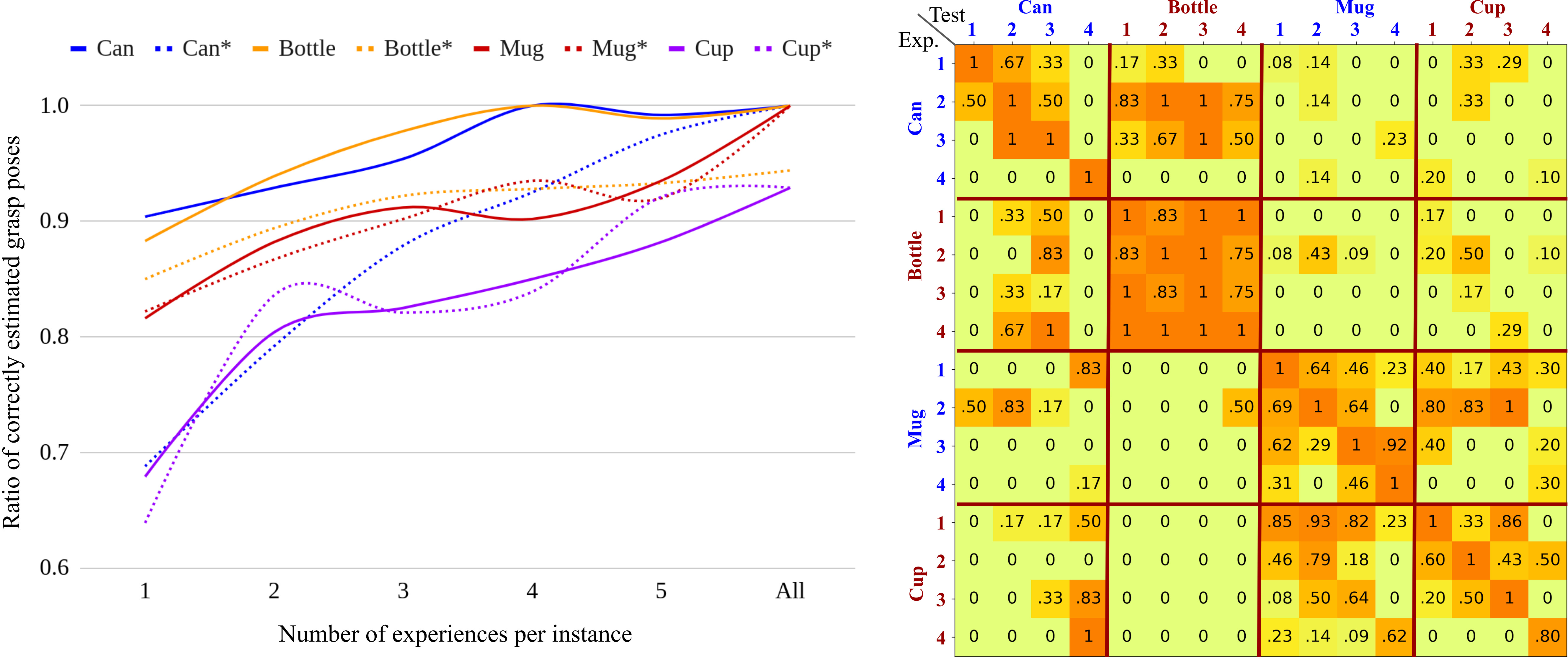

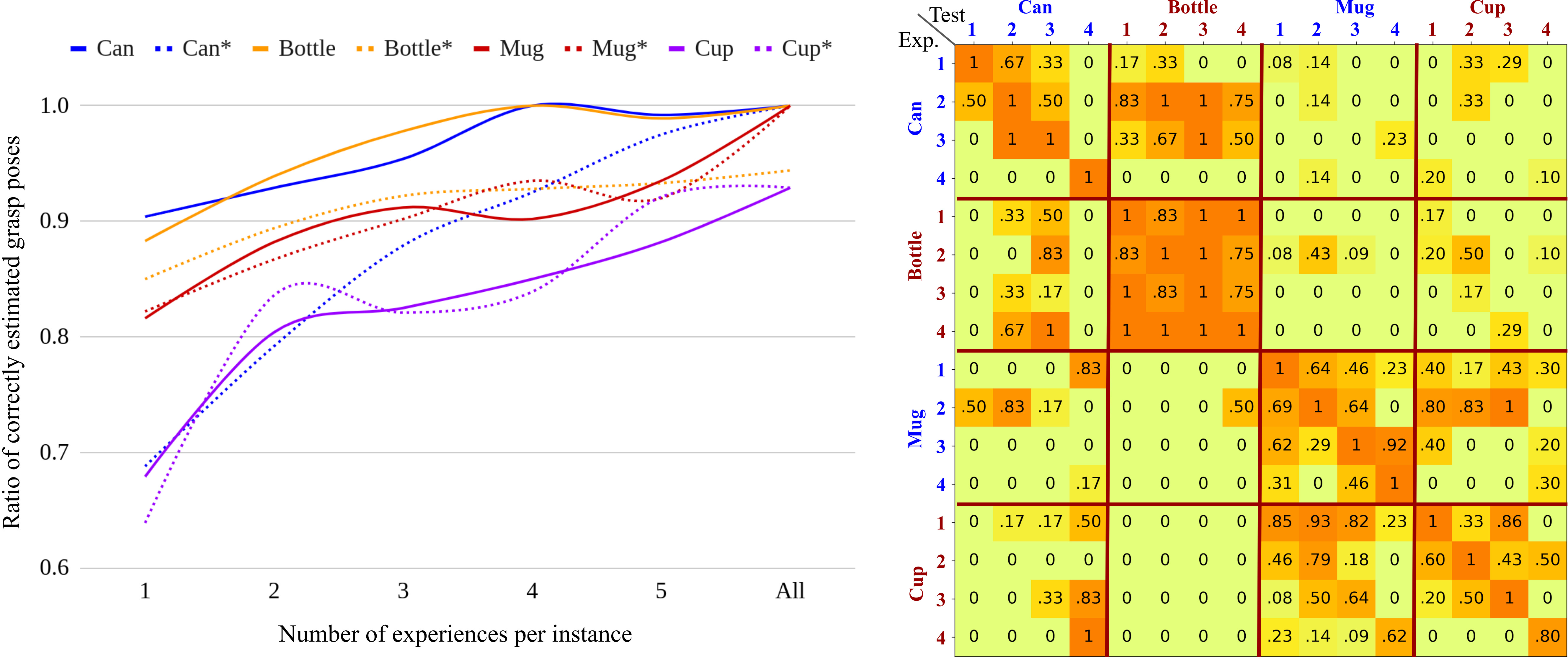

Offline evaluations show that DGCM-Net transfers grasps reliably both within and across object classes, generalizing from limited experience. The ratio of accurately estimated grasp poses increases substantially as more experiences are stored per instance (Figure 3, left). Grasps also transfer between classes that share similar geometric features (Figure 3, right), which suggests the approach can scale to real-world settings with many object types.

Figure 3: Results of the offline experiments. Left: Ratio of accurately estimated grasp poses with increasing number of experiences per instance. Right: Ratio of accurately estimated grasp poses using experience from each instance in all classes.
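
Figure 3 reports a ratio of accurately estimated grasp poses, but the accuracy criterion is not given here. A common choice, assumed in this sketch, counts a predicted grasp as accurate when both its position error and its rotation error fall below thresholds. The 2 cm and 15° defaults and the function names are hypothetical; orientations are unit quaternions in (w, x, y, z) order.

```python
import math
from typing import List, Sequence, Tuple

Pose = Tuple[Sequence[float], Sequence[float]]  # (position xyz, unit quaternion wxyz)


def rotation_error_deg(q1: Sequence[float], q2: Sequence[float]) -> float:
    """Angle of the relative rotation between two unit quaternions, in degrees."""
    dot = abs(sum(a * b for a, b in zip(q1, q2)))  # abs(): q and -q encode the same rotation
    return math.degrees(2.0 * math.acos(min(1.0, dot)))  # clamp rounding error above 1


def accurate_ratio(predicted: List[Pose], ground_truth: List[Pose],
                   max_trans: float = 0.02, max_rot_deg: float = 15.0) -> float:
    """Fraction of predicted grasps within both (hypothetical) error thresholds."""
    hits = 0
    for (p_pos, p_rot), (g_pos, g_rot) in zip(predicted, ground_truth):
        if (math.dist(p_pos, g_pos) <= max_trans
                and rotation_error_deg(p_rot, g_rot) <= max_rot_deg):
            hits += 1
    return hits / len(ground_truth)


identity = (1.0, 0.0, 0.0, 0.0)
quarter_turn_z = (math.sqrt(0.5), 0.0, 0.0, math.sqrt(0.5))  # 90 degrees about z
preds = [((0.0, 0.0, 0.01), identity), ((0.0, 0.0, 0.0), quarter_turn_z)]
truth = [((0.0, 0.0, 0.0), identity), ((0.0, 0.0, 0.0), identity)]
print(accurate_ratio(preds, truth))  # 0.5
```

The first prediction is 1 cm off with the correct orientation and counts as accurate; the second has the right position but a 90° orientation error and does not.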

Robotic Grasp Experiments

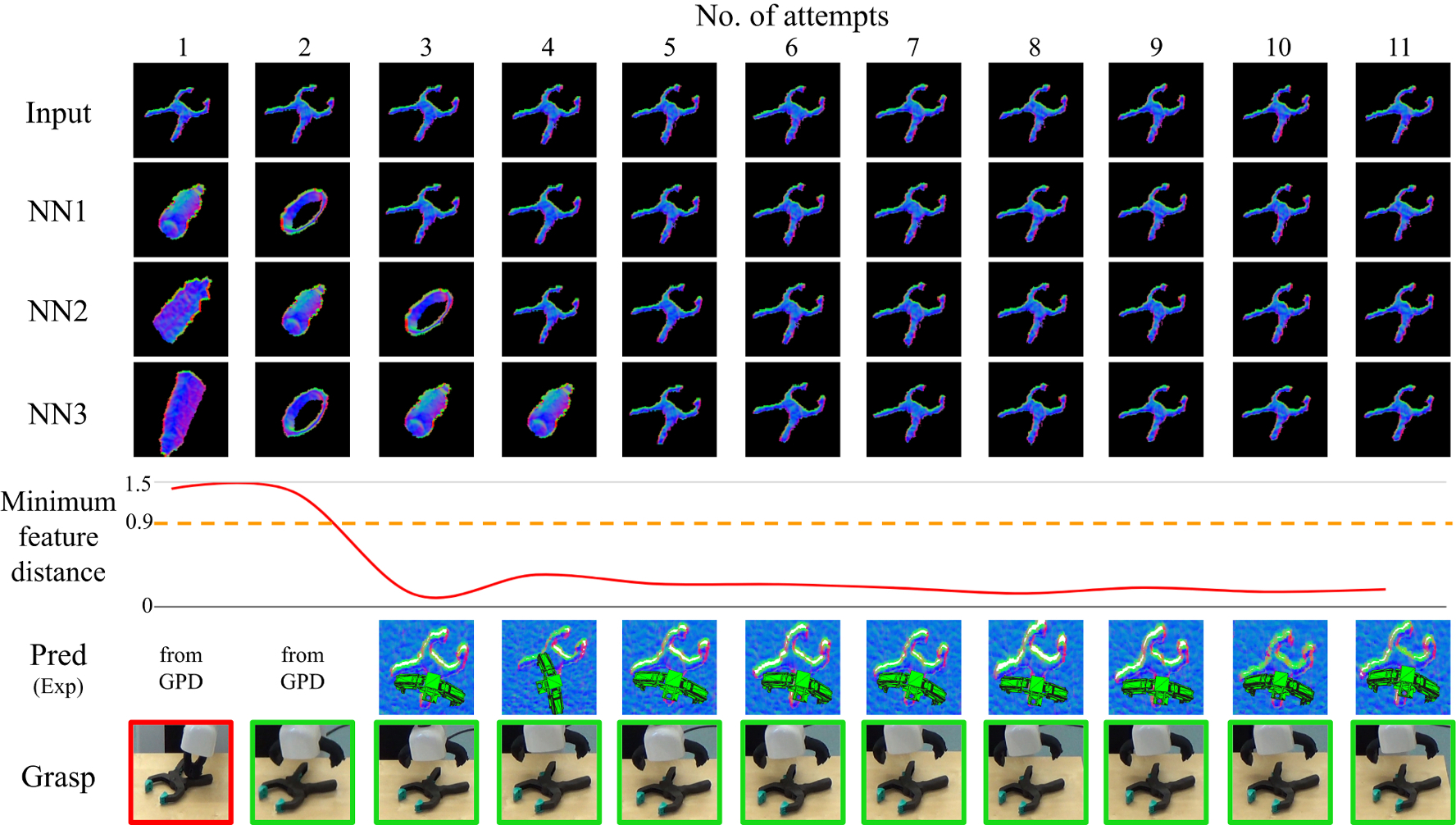

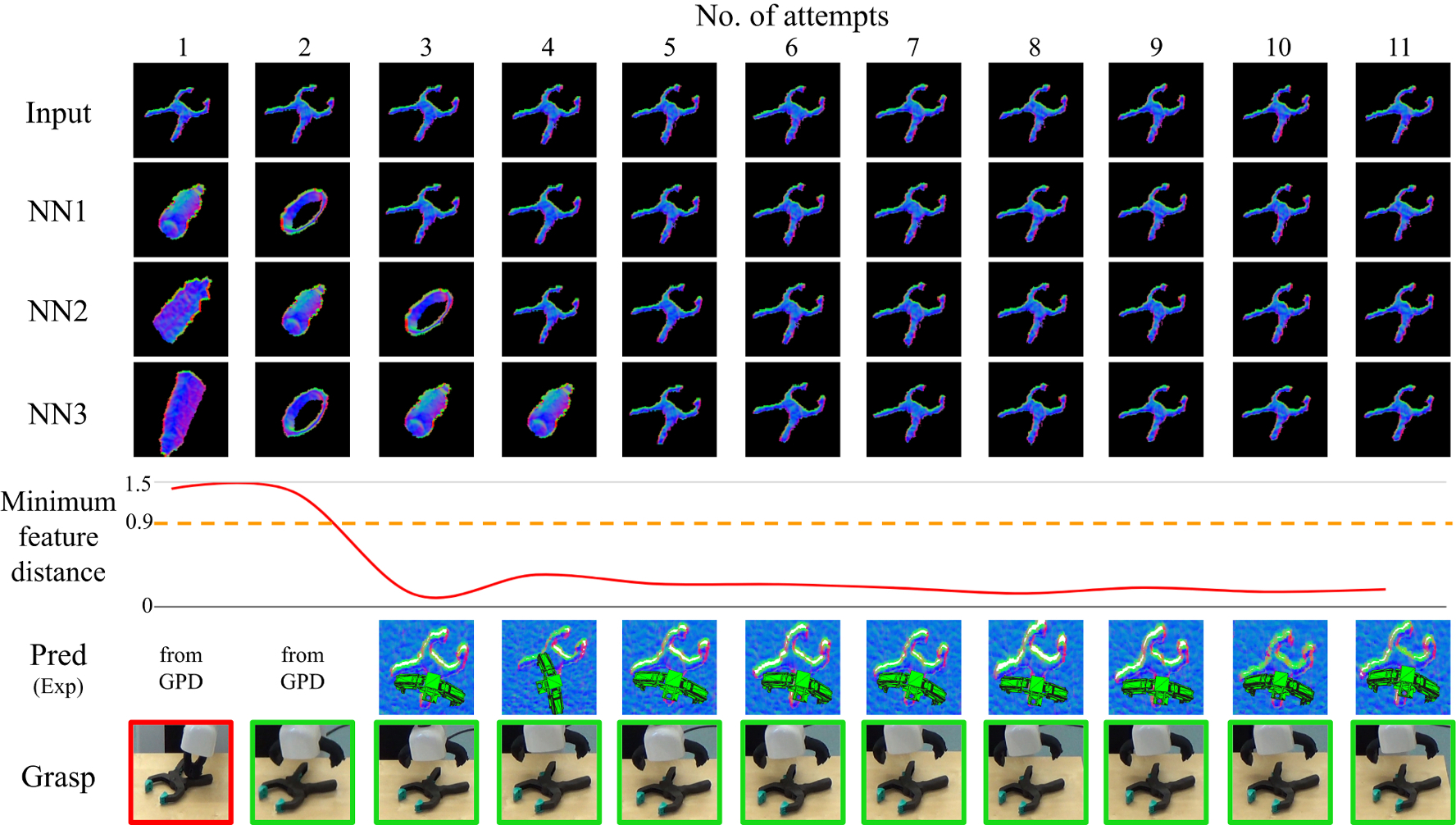

Real-world grasping experiments with household objects show that DGCM-Net performs competitively. It achieves success rates comparable to those of baseline methods such as GPD and HAF, and combining it with GPD predictions notably improves on the baseline performance. In incremental learning trials with novel objects, DGCM-Net needs only a few successful grasps before it reliably generalizes the learned grasp configurations.

Figure 4: Summary of results for the incremental learning experiment with the YCB plastic drill.

Conclusion

DGCM-Net presents a significant advancement in experience-based robotic grasping by effectively encoding and utilizing geometric features for reliable grasp transfer. The model's robust performance in both offline and real-world scenarios suggests its potential for broad applicability across diverse robotic manipulation tasks. Future directions may involve expanding the feature learning to encompass more complex geometries and augmenting the system with adaptive experience management to further optimize its learning efficiency.