- The paper introduces a diffusion-based framework that employs DDPM and on-generator training to generate and score 6-DOF grasp configurations.

- It utilizes a comprehensive dataset of over 53 million grasps and a Diffusion-Transformer architecture to bridge the gap between simulation and real-world application.

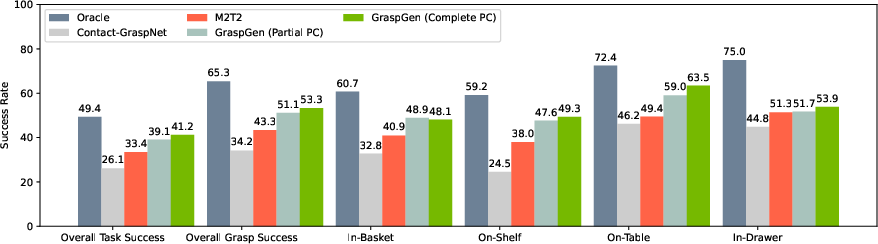

- Experimental evaluations show that GraspGen outperforms baseline methods in both cluttered simulation benchmarks and real-robot scenarios.

"GraspGen: A Diffusion-based Framework for 6-DOF Grasping with On-Generator Training" (2507.13097)

Introduction

"GraspGen: A Diffusion-based Framework for 6-DOF Grasping with On-Generator Training" presents a novel approach to robotic grasping, a complex task that has traditionally involved significant challenges in terms of generalization across different gripper types and real-world environments. The authors introduce a new framework leveraging diffusion models to enhance grasp generation and evaluation. GraspGen systematically integrates a Diffusion-Transformer architecture for grasp generation with an efficient discriminator, implemented through on-generator training, to effectively score and filter grasp samples. The study claims significant improvements over existing methodologies in simulation environments and real-world scenarios.

Methodology

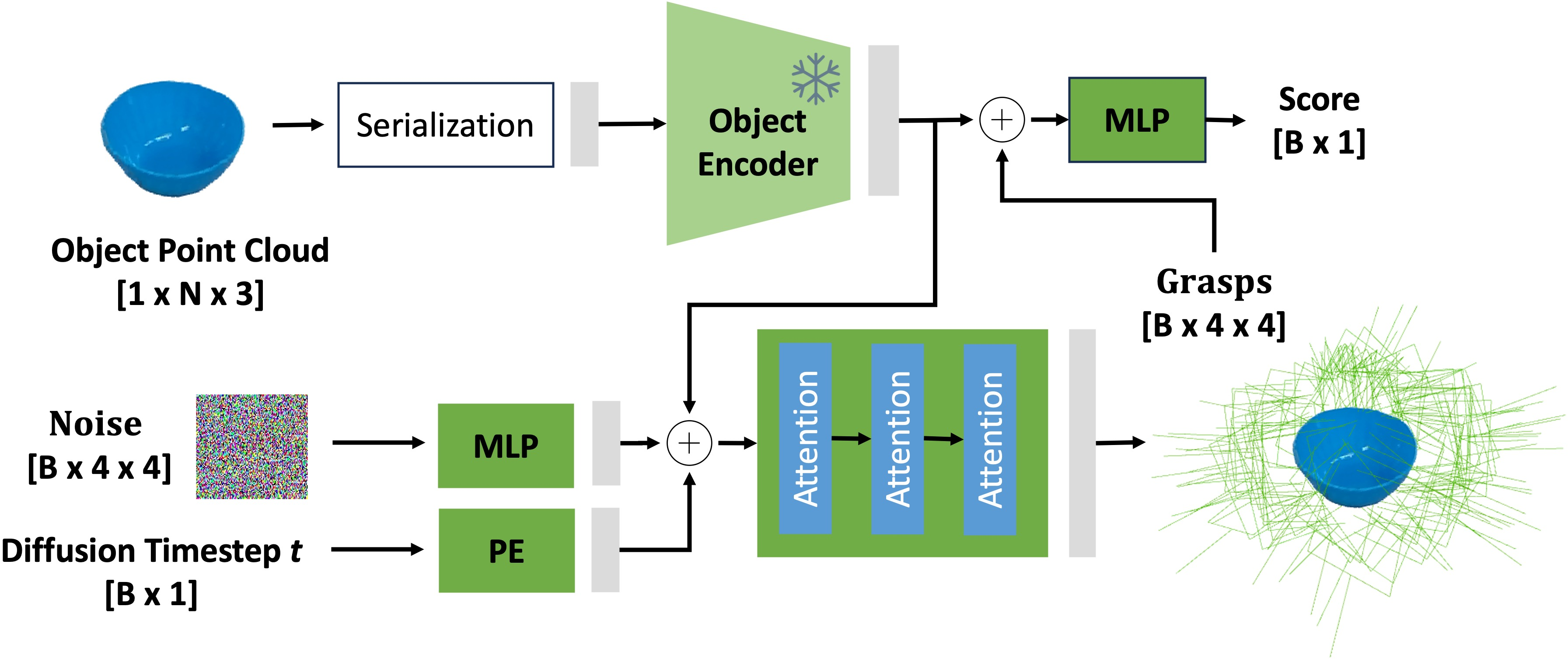

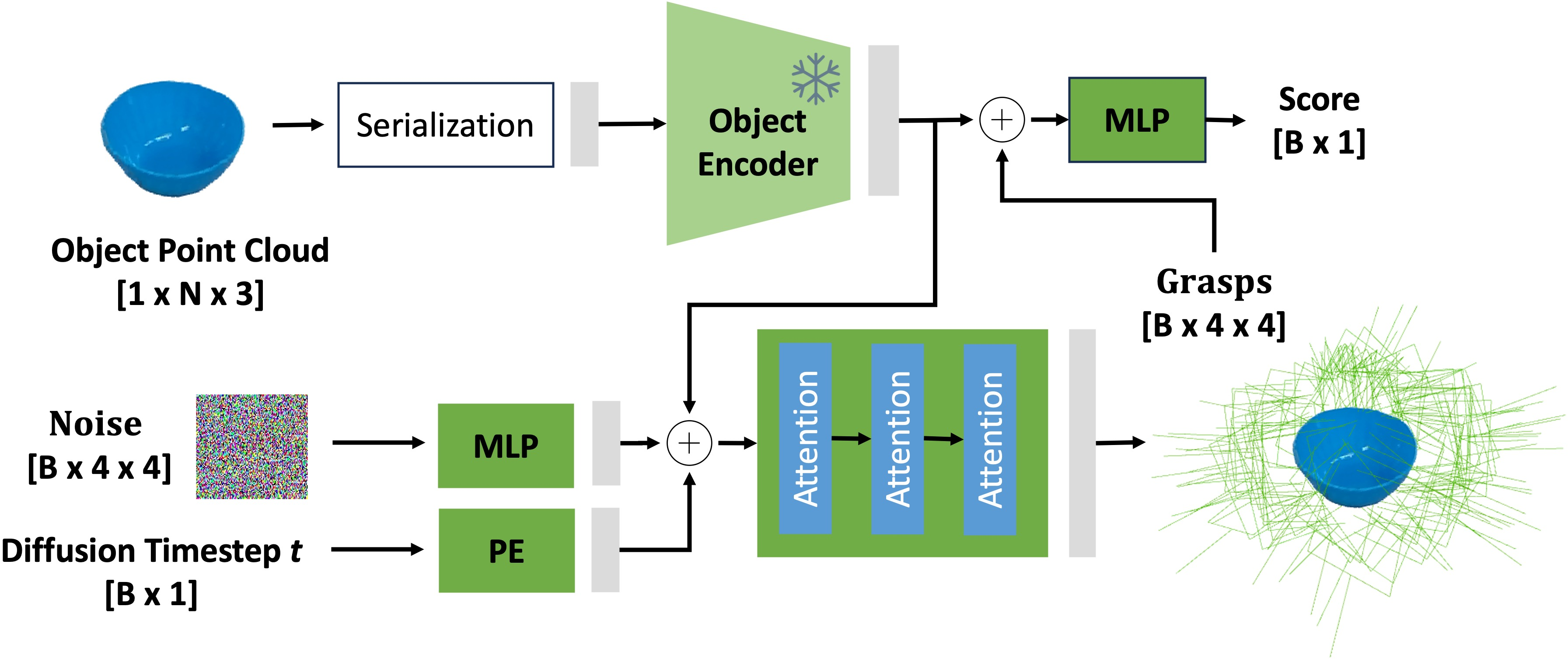

Diffusion Model Architecture

At the core of GraspGen is a diffusion-based grasp generation model adapted to SE(3), the space of rigid-body poses. The model is a Denoising Diffusion Probabilistic Model (DDPM): grasp poses are sampled by starting from noise and iteratively denoising toward high-probability grasp configurations. Unlike earlier generative methods that rely on computationally expensive score-matching Langevin dynamics, DDPM sampling offers faster inference.
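The iterative denoising described above can be sketched with the standard DDPM ancestral sampling update. This is a toy illustration under several assumptions that do not come from the paper: a grasp is a flat 6-vector (translation plus a rotation-vector parameterization), the noise schedule is linear, and the learned Diffusion-Transformer is replaced by a hypothetical closed-form "oracle" noise predictor for a single known target grasp, so the loop can be checked without training anything.

```python
import numpy as np

def make_schedule(num_steps=100, beta_start=1e-4, beta_end=2e-2):
    """Linear beta schedule (an assumed choice, not the paper's)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    return betas, alphas, alpha_bars

def ddpm_sample(predict_noise, num_grasps, dim=6, num_steps=100, seed=0):
    """Standard DDPM reverse process: start from noise, denoise step by step."""
    rng = np.random.default_rng(seed)
    betas, alphas, alpha_bars = make_schedule(num_steps)
    x = rng.standard_normal((num_grasps, dim))        # pure Gaussian noise
    for t in reversed(range(num_steps)):
        eps_hat = predict_noise(x, t)                 # predicted noise
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_hat) / np.sqrt(alphas[t])
        # No noise is added on the final step.
        noise = rng.standard_normal(x.shape) if t > 0 else 0.0
        x = mean + np.sqrt(betas[t]) * noise
    return x

def oracle_noise_predictor(target, num_steps=100):
    """Hypothetical stand-in for the trained network: the exact noise
    when the data distribution is a single known target grasp."""
    _, _, alpha_bars = make_schedule(num_steps)
    def predict_noise(x, t):
        return (x - np.sqrt(alpha_bars[t]) * target) / np.sqrt(1.0 - alpha_bars[t])
    return predict_noise

target_grasp = np.array([0.1, -0.2, 0.3, 0.0, 0.5, -0.5])
grasps = ddpm_sample(oracle_noise_predictor(target_grasp), num_grasps=4)
```

With the oracle predictor, every sample collapses onto `target_grasp`. A trained network conditioned on the object point cloud would instead produce a diverse set of grasps.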

Figure 1: Architecture for the diffusion noise prediction network.

On-Generator Training

A significant innovation in GraspGen is the on-generator training strategy for the discriminator. Instead of relying solely on pre-collected datasets of successful and unsuccessful grasps, GraspGen samples grasps from its own diffusion model and annotates them in simulation as part of the training process. This closes the gap between the offline dataset distribution and the distribution the generative model actually produces, so the discriminator is trained on the kind of grasps it will score at inference time.
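The data flow of on-generator training can be sketched as follows. Everything here is a hypothetical stand-in chosen for illustration: `sample_from_generator` mimics the trained diffusion model with a Gaussian, `label_in_simulation` mimics a physics rollout with a simple distance test, and the discriminator is a one-feature logistic regression rather than the paper's network. Only the ordering of the steps (sample from the generator, label in simulation, train the discriminator on those labels) reflects the text above.

```python
import numpy as np

OBJECT_CENTER = np.zeros(3)   # hypothetical object location
SUCCESS_RADIUS = 0.08         # hypothetical success criterion, in meters

def sample_from_generator(rng, num_grasps):
    """Stand-in for the trained diffusion generator: returns (N, 6) grasps."""
    translation = OBJECT_CENTER + 0.05 * rng.standard_normal((num_grasps, 3))
    rotation = 0.1 * rng.standard_normal((num_grasps, 3))
    return np.hstack([translation, rotation])

def label_in_simulation(grasps):
    """Stand-in for a simulated grasp trial: 1.0 = success, 0.0 = failure."""
    dist = np.linalg.norm(grasps[:, :3] - OBJECT_CENTER, axis=1)
    return (dist < SUCCESS_RADIUS).astype(np.float64)

def build_on_generator_dataset(num_grasps=512, seed=0):
    """Sample from the generator itself, then annotate those samples."""
    rng = np.random.default_rng(seed)
    grasps = sample_from_generator(rng, num_grasps)
    return grasps, label_in_simulation(grasps)

def train_discriminator(grasps, labels, lr=0.5, steps=200):
    """Toy logistic-regression discriminator on one hand-made feature."""
    dist = np.linalg.norm(grasps[:, :3] - OBJECT_CENTER, axis=1)
    feat = (dist - dist.mean()) / dist.std()          # standardized distance
    X = np.stack([feat, np.ones_like(feat)], axis=1)  # feature + bias
    w = np.zeros(2)
    losses = []
    for _ in range(steps):
        p = np.clip(1.0 / (1.0 + np.exp(-X @ w)), 1e-12, 1.0 - 1e-12)
        losses.append(float(-np.mean(labels * np.log(p) + (1 - labels) * np.log(1 - p))))
        w -= lr * X.T @ (p - labels) / len(labels)    # gradient step
    return w, losses

grasps, labels = build_on_generator_dataset()
weights, losses = train_discriminator(grasps, labels)
```

The point of the sketch is that the discriminator never sees an offline grasp set: its positives and negatives are whatever the generator produces, labeled after the fact.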

Dataset

GraspGen introduces a dataset of more than 53 million grasps, spanning diverse objects and gripper types, to support its training regimen. The dataset is generated in simulation using Isaac Sim, with the aim of covering realistic and diverse grasp scenarios.

Experimental Evaluation

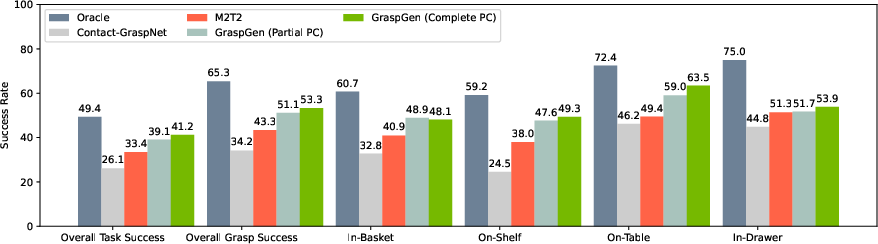

GraspGen outperforms prior methods on benchmarks such as FetchBench. In simulation, its grasp success rates exceed those of baselines including SE3-Diffusion Fields and Contact-GraspNet, particularly in cluttered scenes.

Figure 2: Large-scale evaluation on FetchBench, showcasing GraspGen's superior performance compared to previous methods.

Real Robot Tests

When deployed on a physical UR10 arm, GraspGen retains high grasp success rates, even in settings with occlusion and clutter. Unlike the baseline models, it keeps both precision and coverage high while tolerating the segmentation errors and depth-estimation inaccuracies common in real-world conditions.

Ablation Studies

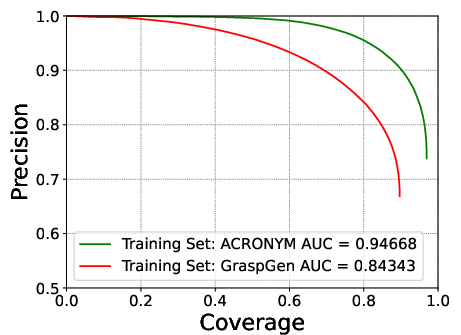

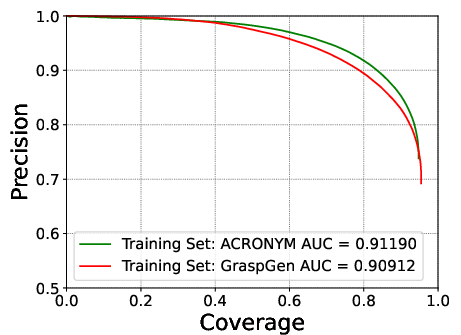

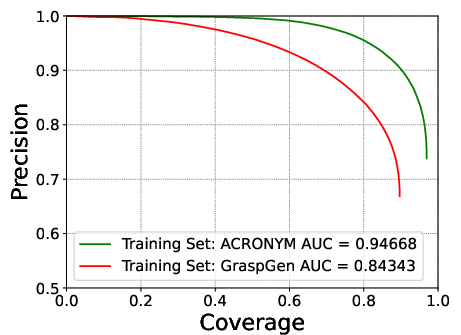

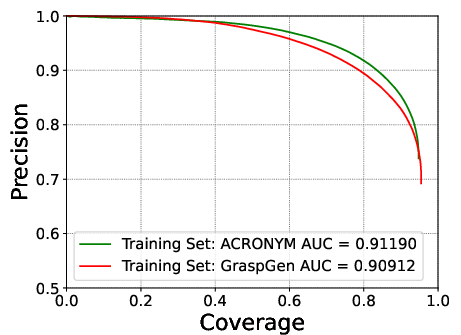

The paper includes extensive ablation studies to validate design choices such as translation normalization, rotation representation, and discriminator architecture. For instance, GraspGen employs PointTransformerV3 as an object encoder, leading to notable improvements over traditional PointNet++ implementations. Such decisions are corroborated through detailed empirical analysis.

Figure 3: ACRONYM Franka test set comparisons, reinforcing the effectiveness of GraspGen across different datasets.

Conclusion

GraspGen is a framework for 6-DOF grasp generation with diffusion models, complemented by a discriminator trained with the on-generator strategy. It reports state-of-the-art results in both simulated environments and real-robot tasks, but its computational demands remain a challenge. Future work may focus on making the architecture more efficient and extending it to more diverse robotic applications. The approach is a step toward general-purpose robotic grasping systems that scale across embodiments and environments.